Abstract

Crowd counting on the drone platform is an interesting topic in computer vision that brings new challenges such as small object inference, background clutter, and wide viewpoint. However, few algorithms focus on crowd counting on drone-captured data because comprehensive datasets are lacking. To this end, we collect a large-scale dataset and organize the Vision Meets Drone Crowd Counting Challenge (VisDrone-CC2020) in conjunction with the 16th European Conference on Computer Vision (ECCV 2020) to promote developments in the related fields. The collected dataset consists of 3,360 images, including 2,460 images for training and 900 images for testing. Specifically, we manually annotate persons with points in each video frame. Fourteen algorithms from 15 institutes were submitted to the VisDrone-CC2020 Challenge. We provide a detailed analysis of the evaluation results and conclude the challenge. More information can be found at the website: http://www.aiskyeye.com/.

References

Arteta, C., Lempitsky, V., Zisserman, A.: Counting in the wild. In: Leibe, B., Matas, J., Sebe, N., Welling, M. (eds.) ECCV 2016. LNCS, vol. 9911, pp. 483–498. Springer, Cham (2016). https://doi.org/10.1007/978-3-319-46478-7_30

Bahmanyar, R., Vig, E., Reinartz, P.: MRCNet: crowd counting and density map estimation in aerial and ground imagery. CoRR abs/1909.12743 (2019)

Cai, Y., Wen, L., Zhang, L., Du, D., Wang, W., Zhu, P.: Rethinking object detection in retail stores. CoRR abs/2003.08230 (2020)

Cao, X., Wang, Z., Zhao, Y., Su, F.: Scale aggregation network for accurate and efficient crowd counting. In: Ferrari, V., Hebert, M., Sminchisescu, C., Weiss, Y. (eds.) ECCV 2018. LNCS, vol. 11209, pp. 757–773. Springer, Cham (2018). https://doi.org/10.1007/978-3-030-01228-1_45

Chan, A.B., Liang, Z.J., Vasconcelos, N.: Privacy preserving crowd monitoring: counting people without people models or tracking. In: CVPR (2008)

Chen, X., Bin, Y., Sang, N., Gao, C.: Scale pyramid network for crowd counting. In: WACV, pp. 1941–1950 (2019)

Chen, Yu., Wang, Y., Lu, P., Chen, Y., Wang, G.: Large-scale structure from motion with semantic constraints of aerial images. In: Lai, J.-H., et al. (eds.) PRCV 2018. LNCS, vol. 11256, pp. 347–359. Springer, Cham (2018). https://doi.org/10.1007/978-3-030-03398-9_30

Cordts, M., et al.: The cityscapes dataset for semantic urban scene understanding. In: CVPR, pp. 3213–3223 (2016)

Dang-Nguyen, D., Pasquini, C., Conotter, V., Boato, G.: RAISE: a raw images dataset for digital image forensics. In: Ooi, W.T., Feng, W., Liu, F. (eds.) MMSys, pp. 219–224 (2015)

Fang, Y., Zhan, B., Cai, W., Gao, S., Hu, B.: Locality-constrained spatial transformer network for video crowd counting. In: ICME, pp. 814–819 (2019)

Farnebäck, G.: Two-frame motion estimation based on polynomial expansion. In: Bigun, J., Gustavsson, T. (eds.) SCIA 2003. LNCS, vol. 2749, pp. 363–370. Springer, Heidelberg (2003). https://doi.org/10.1007/3-540-45103-X_50

Gao, G., Gao, J., Liu, Q., Wang, Q., Wang, Y.: CNN-based density estimation and crowd counting: a survey. CoRR abs/2003.12783 (2020)

He, J., Zhang, S., Yang, M., Shan, Y., Huang, T.: Bi-directional cascade network for perceptual edge detection. In: CVPR, pp. 3828–3837 (2019)

Hsieh, M., Lin, Y., Hsu, W.H.: Drone-based object counting by spatially regularized regional proposal network. In: ICCV (2017)

Hu, Y., Chang, H., Nian, F., Wang, Y., Li, T.: Dense crowd counting from still images with convolutional neural networks. J. Vis. Commun. Image Represent. 38, 530–539 (2016)

Idrees, H., Saleemi, I., Seibert, C., Shah, M.: Multi-source multi-scale counting in extremely dense crowd images. In: CVPR, pp. 2547–2554 (2013)

Idrees, H., et al.: Composition loss for counting, density map estimation and localization in dense crowds. In: Ferrari, V., Hebert, M., Sminchisescu, C., Weiss, Y. (eds.) ECCV 2018. LNCS, vol. 11206, pp. 544–559. Springer, Cham (2018). https://doi.org/10.1007/978-3-030-01216-8_33

Jiang, X., et al.: Crowd counting and density estimation by trellis encoder-decoder networks. In: CVPR, pp. 6133–6142 (2019)

Leibe, B., Seemann, E., Schiele, B.: Pedestrian detection in crowded scenes. In: CVPR, pp. 878–885 (2005)

Lempitsky, V.S., Zisserman, A.: Learning to count objects in images. In: Lafferty, J.D., Williams, C.K.I., Shawe-Taylor, J., Zemel, R.S., Culotta, A. (eds.) NeurIPS, pp. 1324–1332 (2010)

Li, Y., Zhang, X., Chen, D.: CSRNet: dilated convolutional neural networks for understanding the highly congested scenes. In: CVPR, pp. 1091–1100 (2018)

Liu, N., Long, Y., Zou, C., Niu, Q., Pan, L., Wu, H.: ADCrowdNet: an attention-injective deformable convolutional network for crowd understanding. In: CVPR, pp. 3225–3234 (2019)

Liu, W., Salzmann, M., Fua, P.: Context-aware crowd counting. In: CVPR, pp. 5099–5108 (2019)

Liu, X., van de Weijer, J., Bagdanov, A.D.: Exploiting unlabeled data in CNNs by self-supervised learning to rank. TPAMI 41(8), 1862–1878 (2019)

Loy, C.C., Gong, S., Xiang, T.: From semi-supervised to transfer counting of crowds. In: ICCV, pp. 2256–2263 (2013)

Ma, Z., Yu, L., Chan, A.B.: Small instance detection by integer programming on object density maps. In: CVPR, pp. 3689–3697 (2015)

Pan, X., Mo, H., Zhou, Z., Wu, W.: Attention guided region division for crowd counting. In: ICASSP, pp. 2568–2572 (2020)

Sam, D.B., Sajjan, N.N., Maurya, H., Babu, R.V.: Almost unsupervised learning for dense crowd counting. In: AAAI, pp. 8868–8875 (2019)

Shi, Z., Mettes, P., Snoek, C.: Counting with focus for free. In: ICCV, pp. 4199–4208 (2019)

Sun, K., et al.: High-resolution representations for labeling pixels and regions. CoRR abs/1904.04514 (2019)

Suzuki, S., Abe, K.: Topological structural analysis of digitized binary images by border following. CVGIP 30(1), 32–46 (1985)

Wang, M., Wang, X.: Automatic adaptation of a generic pedestrian detector to a specific traffic scene. In: CVPR, pp. 3401–3408 (2011)

Wang, Q., Gao, J., Lin, W., Yuan, Y.: Learning from synthetic data for crowd counting in the wild. In: CVPR, pp. 8198–8207 (2019)

Wei, C., Wang, W., Yang, W., Liu, J.: Deep retinex decomposition for low-light enhancement. In: BMVC, p. 155 (2018)

Wen, L., et al.: Drone-based joint density map estimation, localization and tracking with space-time multi-scale attention network. CoRR abs/1912.01811 (2019)

Wu, B., Nevatia, R.: Detection of multiple, partially occluded humans in a single image by Bayesian combination of edgelet part detectors. In: ICCV, pp. 90–97 (2005)

Xu, C., et al.: Autoscale: learning to scale for crowd counting. CoRR abs/1912.09632 (2019)

Xu, C., Qiu, K., Fu, J., Bai, S., Xu, Y., Bai, X.: Learn to scale: generating multipolar normalized density maps for crowd counting. In: ICCV, pp. 8381–8389 (2019)

Yan, Z., et al.: Perspective-guided convolution networks for crowd counting. In: ICCV, pp. 952–961 (2019)

Zhang, A., et al.: Relational attention network for crowd counting. In: ICCV, pp. 6787–6796 (2019)

Zhang, C., Li, H., Wang, X., Yang, X.: Cross-scene crowd counting via deep convolutional neural networks. In: CVPR, pp. 833–841 (2015)

Zhang, L., Shi, M., Chen, Q.: Crowd counting via scale-adaptive convolutional neural network. In: WACV, pp. 1113–1121 (2018)

Zhang, Y., Zhou, D., Chen, S., Gao, S., Ma, Y.: Single-image crowd counting via multi-column convolutional neural network. In: CVPR, pp. 589–597 (2016)

Zhu, L., Zhao, Z., Lu, C., Lin, Y., Peng, Y., Yao, T.: Dual path multi-scale fusion networks with attention for crowd counting. CoRR abs/1902.01115 (2019)

Acknowledgements

This work was supported in part by the National Natural Science Foundation of China under Grant 61876127 and Grant 61732011, and in part by the Natural Science Foundation of Tianjin under Grant 17JCZDJC30800.

A Submitted Crowd Counting Algorithms

In this appendix, we provide a short summary of all crowd counting algorithms that were considered in the VisDrone-CC2020 Challenge.

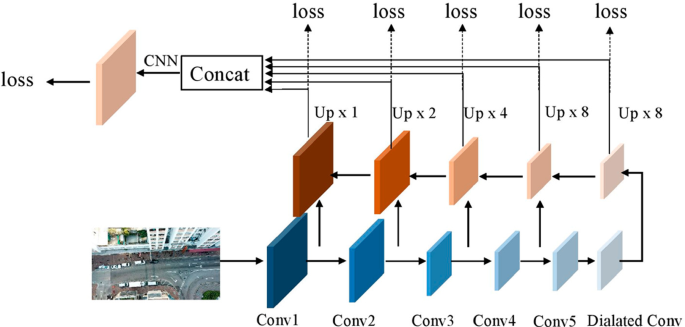

A.1 Feature Pyramid Network for Crowd Counting (FPNCC)

Dingkang Liang, Chenfeng Xu, Yongchao Xu, Xiang Bai

dkliang@hust.edu.cn

FPNCC is based on AutoScale [37] (an extension of L2S [38]) with a VGG16-based FPN backbone, which automatically scales dense regions into similar and appropriate density levels (see Fig. 3). Meanwhile, we separate the overlapping blobs and decompose the original accumulated density values in the density map. To preserve sufficient spatial information for accurate counting, we discard the last pooling layer and all following fully connected layers, as well as the pooling layer between stage4 and stage5. Note that we use a model pre-trained on the ImageNet database. Besides the traditional MSE loss, we also use an SSIM loss to improve the quality of the density map and thereby aid the final counting performance.
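The combination of an MSE term and an SSIM term can be illustrated with a minimal NumPy sketch. It is not the FPNCC implementation: SSIM is computed here over the whole map as a single window rather than over local windows, and the function names, the constants `c1` and `c2`, and the weight `ssim_weight` are assumptions chosen for illustration.

```python
import numpy as np


def global_ssim(pred, target, c1=1e-4, c2=9e-4):
    """SSIM computed over the whole map as one window (a simplification)."""
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    mu_p, mu_t = pred.mean(), target.mean()
    dp, dt = pred - mu_p, target - mu_t
    var_p, var_t = (dp * dp).mean(), (dt * dt).mean()
    cov = (dp * dt).mean()
    num = (2 * mu_p * mu_t + c1) * (2 * cov + c2)
    den = (mu_p ** 2 + mu_t ** 2 + c1) * (var_p + var_t + c2)
    return num / den


def density_loss(pred, target, ssim_weight=0.1):
    """MSE plus a weighted (1 - SSIM) term; ssim_weight is a hypothetical value."""
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    mse = ((pred - target) ** 2).mean()
    return mse + ssim_weight * (1.0 - global_ssim(pred, target))
```

The MSE term penalises per-pixel errors, while the SSIM term rewards predicted maps whose overall structure matches the ground truth.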

A.2 Bi-path Video Crowd Counting (BVCC)

Zhiyuan Zhao, Tao Han, Junyu Gao, Qi Wang, Xuelong Li

tuzixini@163.com, hantao10200@mail.nwpu.edu.cn, {gjy3035, crabwq}@gmail.com, li@nwpu.edu.cn

To deal with challenges such as varying scale, unstable exposure, and scene migration, BVCC is proposed to automatically understand the crowd from visual data collected by drones. First, to alleviate the background noise generated in cross-scene testing, a double-stream crowd counting model is proposed, which extracts optical flow and frame difference information as an additional branch. Second, to improve the generalization ability of the model across different scales and times, we randomly combine a variety of data transformation methods to simulate unseen environments. Finally, to tackle crowd density estimation in extremely dark environments, we introduce synthetic data generated by GTAV [33].
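As a rough illustration of the frame difference signal mentioned above, the following NumPy sketch computes the absolute per-pixel difference between two consecutive grayscale frames. The function name is hypothetical, and how BVCC feeds this signal (together with optical flow) into its second branch is not specified here.

```python
import numpy as np


def frame_difference(prev_frame, curr_frame):
    """Absolute per-pixel difference of two grayscale uint8 frames."""
    # Widen to int16 first so the subtraction cannot wrap around in uint8.
    prev = np.asarray(prev_frame, dtype=np.int16)
    curr = np.asarray(curr_frame, dtype=np.int16)
    return np.abs(curr - prev).astype(np.uint8)
```

Static background pixels yield values near zero, so the difference map mainly highlights moving regions.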

A.3 Counting with Focus for Free (CFF)

Wei Xu

wxu.bupt@gmail.com

CFF [29] proposes two kinds of free supervision including segmentation maps and global density. Besides, an improved kernel size estimator is proposed to facilitate density estimation and the focus from segmentation. During training, we augment the images by randomly cropping \(128\times 128\) patches. Code is available at https://github.com/shizenglin/Counting-with-Focus-for-Free.
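The random cropping step can be sketched as follows. This is an illustrative NumPy version, not the code from the linked repository; the function name and the handling of point annotations (keeping only the heads that fall inside the patch and shifting them to patch coordinates) are assumptions.

```python
import numpy as np


def random_crop(image, points, size=128, rng=None):
    """Crop a size x size patch and keep the point annotations inside it.

    image  : H x W (x C) array with H, W >= size
    points : iterable of (x, y) head positions in pixel coordinates
    """
    rng = np.random.default_rng() if rng is None else rng
    h, w = image.shape[:2]
    top = int(rng.integers(0, h - size + 1))   # upper bound is exclusive
    left = int(rng.integers(0, w - size + 1))
    patch = image[top:top + size, left:left + size]
    kept = [(x - left, y - top) for x, y in points
            if left <= x < left + size and top <= y < top + size]
    return patch, kept
```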

A.4 Parallel Dilated Convolution Neural Network (PDCNN)

Pei Lyu, Lei Zhao, Jieru Wang, Yingnan Lin

{pei.lv, michael.zhao, jieru.wang, lynn.lin}@unisoc.com

PDCNN is an illumination-aware counting model based on CSRNet [21]. Since there are three categories of illumination conditions in the dataset (cloudy, sunny, and night), we first distinguish between day and night illumination and then use different networks to handle the different scenarios. We use geometry-adaptive kernels to handle the differently congested scenes and blur each head annotation with a Gaussian kernel. For data augmentation, we crop patches from each image at different locations.
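Blurring point annotations with a Gaussian kernel turns them into a density map whose integral equals the number of people. The sketch below uses a single fixed `sigma` for simplicity, which is an assumption; a geometry-adaptive variant would instead choose `sigma` per head from the distances to neighbouring annotations. It also assumes every point lies inside the image.

```python
import numpy as np


def density_map(points, height, width, sigma=4.0):
    """Sum of unit-mass Gaussians, one per annotated head at (x, y)."""
    ys, xs = np.mgrid[0:height, 0:width]
    density = np.zeros((height, width), dtype=np.float64)
    for px, py in points:
        g = np.exp(-((xs - px) ** 2 + (ys - py) ** 2) / (2.0 * sigma ** 2))
        density += g / g.sum()  # renormalise so heads near the border still count as 1
    return density
```

Because each Gaussian is normalised to unit mass, summing the map recovers the annotated count, which is the property that density-based counters rely on.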

A.5 Crowd Counting with Attentional Mechanism and Feature Pyramids (DevaNetv2)

Ye Tian, Chenzhen Duan, Xiaoqing Xu, Zhiwei Wei

{19s151092, 18s151541, 19s051052, 19s051024}@stu.hit.edu.cn

In aerial image crowd counting, people usually use grid density maps as labels with an “unprejudiced” neural network block, but this leads to a series of problems. On the one hand, the scale and shape of people vary greatly with the shooting angle and flight height of the UAV. On the other hand, the luminance of the image changes with the shooting time. To solve these problems, we use Gaussian density maps and data augmentation methods to improve the robustness of our model. We use an attention mechanism and feature pyramids so that the model adjusts its predictions based on global information. We also design an auxiliary loss to ease model optimization. The network is implemented with the \(C^3\) framework.

A.6 CSRNet on Drone-Based Scenarios (CSRNet+)

Florian Krüger, Thomas Golda

{florian.krueger, thomas.golda}@iosb.fraunhofer.de

CSRNet+ is modified from CSRNet [21] to perform crowd counting in drone-based scenarios. We pretrain our model using only the training set of the DLR-Aerial Crowd Dataset (DLRACD) [2] and then finetune it on the VisDrone2020-CC training set. For this finetuning, we annotate the ground sampling distances (GSD) of the training set by hand and then generate density maps from them as in [2]. Furthermore, we upsample the training sequences to equalize the illumination distribution. Night sequences are only doubled, since there are only 3 of them in the training set.
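One way to picture the illumination balancing is to repeat the sequences of under-represented illumination classes. The sketch below is an assumption about how such balancing could be done, not the authors' procedure: the function names, the rounding rule, and the `caps` argument (used here to limit night sequences to a factor of 2) are all hypothetical.

```python
from collections import Counter


def repeat_factors(labels, caps=None):
    """Per-class repeat factor that brings each class near the largest one."""
    counts = Counter(labels)
    largest = max(counts.values())
    caps = caps or {}
    factors = {}
    for label, n in counts.items():
        factor = max(1, round(largest / n))
        factors[label] = min(factor, caps.get(label, factor))
    return factors


def oversample(sequences, caps=None):
    """sequences: list of (sequence_id, illumination_label) pairs."""
    factors = repeat_factors([label for _, label in sequences], caps)
    return [seq for seq in sequences for _ in range(factors[seq[1]])]
```

With a toy set of 8 cloudy, 4 sunny, and 3 night sequences and `caps={"night": 2}`, the sunny and night sequences are each repeated twice while the cloudy ones are left unchanged.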

A.7 Multi-scale Aware based SFANet (M-SFANet)

Zhipeng Luo, Bin Dong, Yuehan Yao, Zhenyu Xu

{luozp, dongb, yaoyh, xuzy}@blueai.com

M-SFANet modifies the SFANet [44] architecture for accurate and efficient crowd counting. Inspired by SFANet, the M-SFANet model is equipped with two novel multi-scale-aware modules, called ASPP and CAN. The encoder of M-SFANet is enhanced with ASPP, which contains parallel atrous convolutional layers with different sampling rates and is hence able to extract multi-scale features of the target object and incorporate larger context. To further deal with scale variation throughout an input image, we leverage a contextual module called CAN, which adaptively encodes the scales of the contextual information. The combination yields an effective model for counting in both dense and sparse crowd scenes. Following the SFANet decoder structure, M-SFANet’s decoder has dual paths, one for density map generation and one for attention map generation. During training, we use an MSE loss for the density map and a cross-entropy loss for the attention map. The whole dataset is split into 8 folds, which means that 8 independent models are trained. During inference, we use a separate min clip for night scene videos.
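The 8-fold split can be sketched in plain Python. The sketch assumes the usual cross-validation scheme in which each of the 8 models holds out a different fold for validation; the function name and the round-robin assignment of items to folds are illustrative choices, not details given by the authors.

```python
def kfold_splits(items, k=8):
    """Yield (train, val) lists; every item lands in exactly one val fold."""
    items = list(items)
    folds = [items[i::k] for i in range(k)]  # round-robin assignment
    for i in range(k):
        val = folds[i]
        train = [x for j, fold in enumerate(folds) if j != i for x in fold]
        yield train, val
```

Each `(train, val)` pair would then be used to train one independent model.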

A.8 Scaled Cascade Network for Crowd Counting on Drone data (SCNet)

Omar Elharrouss, Noor Almaadeed, Khalid Abualsaud, Amr Mohamed, Tamer Khattab, Ali Al-Ali, Somaya Al-Maadeed

elharrouss.omar@gmail.com

We propose a crowd counting method based on deep convolutional neural networks (CNNs) that extracts high-level features to generate density maps, which represent an estimate of the crowd count with respect to the scale variations in the scene. Specifically, SCNet is a CNN-based model that adds a cascade network after a frontend block inspired by those used in SPN [6], CSRNet [21], and AGRD [27]. The cascade block is inspired by the bi-directional cascade network in [13] used for edge detection. To handle scale variations, a Scale Enhancement Module (SEM) is introduced. The architecture employs sequential dilated convolution blocks with different kernels.

A.9 CSRNet on Segmented Scenes with Optical Flow (CSR-SSOF)

Siyang Pan, Shidong Liu, Binyu Zhang, Yanyun Zhao

{pansiyang, lsd215, jl-lagrange, zyy}@bupt.edu.cn

CSR-SSOF is derived from CSRNet [21] with several additional modules that offer improvements. First, optical flow is computed with the Gunnar Farnebäck algorithm [11] to extract temporal information. RGB images concatenated with the corresponding optical flow are fed into the modified CSRNet, which takes 5-channel data as input. Furthermore, we replace the last layer with the density map estimator (DME) of SANet [4] in order to generate high-resolution density maps. The patch-based test scheme of SANet is also applied in our method. Considering the interference of irrelevant regions in which crowds rarely appear, an improved semantic segmentation model, HRNetV2 [30], is used to block out building areas. We extract the contours of the segmentation maps following [31] and fill the small holes. Morphological erosion and dilation are used to smooth and narrow down the boundaries of building areas. In addition, the V channel of the HSV model is used to separate day and night sequences, and we take a series of measures to cope with the night ones. Night images are first enhanced by RetinexNet [34] to make the buried details visible for counting. We also assume that buildings in night sequences occupy pixels with below-average V values, so we directly binarize the original night images accordingly to perform segmentation. All final counting results are obtained by integrating the density maps within non-building areas. With part of its parameters pre-trained on ImageNet, the modified CSRNet is finetuned successively on the ShanghaiTech Part B [43] dataset and the VisDrone2020 train set. For segmentation, HRNetV2 is first trained on the UDD5 [7] dataset using Cityscapes [8] pre-trained weights. Then we manually annotate building areas in some images of the VisDrone2020 train set and use these annotations to finetune our model. For night image enhancement, we use a RetinexNet model pre-trained on the LOL [34] and RAISE [9] datasets.
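Three of the steps described above (day/night division by the V channel, the below-average-V building assumption for night frames, and counting only within non-building areas) can be sketched with NumPy. This is a simplified illustration under stated assumptions: the function names are hypothetical, the day/night `threshold` is an invented value, and the segmentation, morphology, and enhancement stages are omitted.

```python
import numpy as np


def value_channel(rgb):
    """V of HSV for an H x W x 3 uint8 image, scaled to [0, 1]."""
    return np.asarray(rgb, dtype=np.float64).max(axis=-1) / 255.0


def is_night(rgb, threshold=0.3):
    """Label a frame as night when its mean V is low (threshold is hypothetical)."""
    return bool(value_channel(rgb).mean() < threshold)


def night_building_mask(rgb):
    """Mark pixels with below-average V as building, as assumed for night frames."""
    v = value_channel(rgb)
    return v < v.mean()


def masked_count(density, building_mask):
    """Integrate the density map over non-building pixels only."""
    keep = ~np.asarray(building_mask, dtype=bool)
    return float(np.asarray(density, dtype=np.float64)[keep].sum())
```

Masking before integration means that spurious density predicted on rooftops or facades does not contribute to the final count.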

A.10 Soft Dilated Convolutional Neural Networks for Understanding the Highly Congested Scenes (Soft-CSRNET)

Bakour Imene, Bouchali Hadia Nesma

{imene.bakour, hadianesma.bouchali}@etu.usthb.dz

Soft-CSRNET is a modification of the Congested Scene Recognition network, CSRNet [21], that provides a data-driven deep learning method able to understand highly congested scenes, perform accurate count estimation, and produce high-quality density maps. We try several configurations of the network in order to optimize it and run it in real time; to that end, we focus on counting accuracy while giving up as little resolution of the estimated density maps as possible. The proposed network is composed of two major components: a convolutional neural network (CNN) as the front-end for 2D feature extraction and a dilated CNN as the back-end, which uses dilated kernels to deliver larger receptive fields and to replace pooling operations. Our Soft-CSRNET contains fewer parameters and convolutional layers in the back-end.

A.11 Context-Aware Crowd Counting (CANet)

Laihui Ding

xmyyzy123@qq.com

CANet [23] is an end-to-end network that leverages multiple receptive field scales to learn and combine features at each image location. The contextual information is thus encoded to predict accurate crowd density.

A.12 MultI-view fuLly convoLutional nEural Network for crowd couNting In drone-captUred iMages (MILLENNIUM)

Giovanna Castellano, Ciro Castiello, Marco Cianciotta, Corrado Mencar, Gennaro Vessio

{giovanna.castellano, ciro.castiello, marco.cianciotta, corrado.mencar, gennaro.vessio}@uniba.it

We couple multi-view data and multi-functional deep learning for efficient crowd counting in aerial images. Specifically, we exploit the real-world RGB image and the corresponding crowd heatmap as multiple views of the same crowded scene to create a powerful regression model for crowd counting. Two deep neural networks, one for each view, are jointly trained so that their weights are updated at the same time. After training, only the network that processes the real-world images is retained as the final model for crowd counting. This provides an accurate, lightweight model that suits the limited computational resources of a UAV. The final model provides the crowd count at an average processing speed of about 125 frames per second.

A.13 Scale Aggregation Network (SANet)

Shuang Qiu, Zhijian Zhao

{qiushuang, zhaozhijian}@supremind.com

SANet is the encoder-decoder based Scale Aggregation Network [4]. Specifically, the encoder exploits multi-scale features by scale aggregation, while the decoder generates high-resolution density maps by transposed convolutions. To account for local correlation in density maps, both a Euclidean loss and a local pattern consistency loss are used to train the network for better performance. We finetune the network on additional datasets such as ShanghaiTech Part B [43] and Crowd Surveillance [39].

A.14 Residual Network Feature Pyramid Network_101 (ResNet-FPN101)

Muhammad Saqib, Sultan Daud Khan

muhammad.saqib@uts.edu.au, sultandaud@gmail.com

ResNet-FPN101 is the baseline crowd counting method. Specifically, we convert the dot-level annotation to bounding box-level annotation. We train and evaluate the network with ResNet-FPN-101 as a backbone architecture.
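Converting dot-level annotations to bounding boxes can be sketched as follows. The authors do not state how box sizes are chosen, so the fixed square `box_size` centred on each dot is purely an assumption for illustration, as is the function name.

```python
def dots_to_boxes(points, width, height, box_size=16):
    """Turn (x, y) dots into (x_min, y_min, x_max, y_max) boxes clipped to the image."""
    half = box_size / 2.0
    boxes = []
    for x, y in points:
        boxes.append((
            max(0.0, x - half),
            max(0.0, y - half),
            min(float(width), x + half),
            min(float(height), y + half),
        ))
    return boxes
```

The resulting boxes can be fed to a standard detector, and the crowd count is then the number of detections.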

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Du, D. et al. (2020). VisDrone-CC2020: The Vision Meets Drone Crowd Counting Challenge Results. In: Bartoli, A., Fusiello, A. (eds) Computer Vision – ECCV 2020 Workshops. ECCV 2020. Lecture Notes in Computer Science(), vol 12538. Springer, Cham. https://doi.org/10.1007/978-3-030-66823-5_41

DOI: https://doi.org/10.1007/978-3-030-66823-5_41

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-66822-8

Online ISBN: 978-3-030-66823-5

eBook Packages: Computer Science (R0)