Abstract

Penetration testing (PT) is a crucial way to ensure the security of computer systems. However, it has a high barrier to entry and can only be carried out by trained experts. Automated tools can ease the shortage of skilled testers, and reinforcement learning (RL) is a promising approach to automated PT. However, because the PT process is characterized unreasonably and RL is data-inefficient, such models have limited applicability and are difficult to reuse, which hinders their practical application. In this paper, we propose INNES (INtelligent peNEtration teSting), a model based on deep reinforcement learning (DRL). First, the model characterizes the key elements of PT more reasonably as a Markov decision process (MDP), fully considering what the PT process has in common across different scenarios in order to improve applicability. Second, we design the DQN_valid algorithm, which exploits the fact that the effective action space grows gradually during the PT process to constrain the agent's action space, improving the agent's decision-making accuracy and avoiding invalid exploration. The experimental results show that our model is not only effective for automated PT in network environments but also portable, which suggests a possible direction for the practical application of RL-based intelligent PT.

Availability of data and materials

The data that support the findings of this study are available from the corresponding author, Min, upon reasonable request.

Notes

The scenarios and the model code are available at https://github.com/liqianyu513/INNES-model.

Acknowledgements

This work was supported by the National Natural Science Foundation of China under Grant No.61971413.

Ethics declarations

Competing Interests

We declare that we have no conflict of interest.

Appendix A Network scenario details

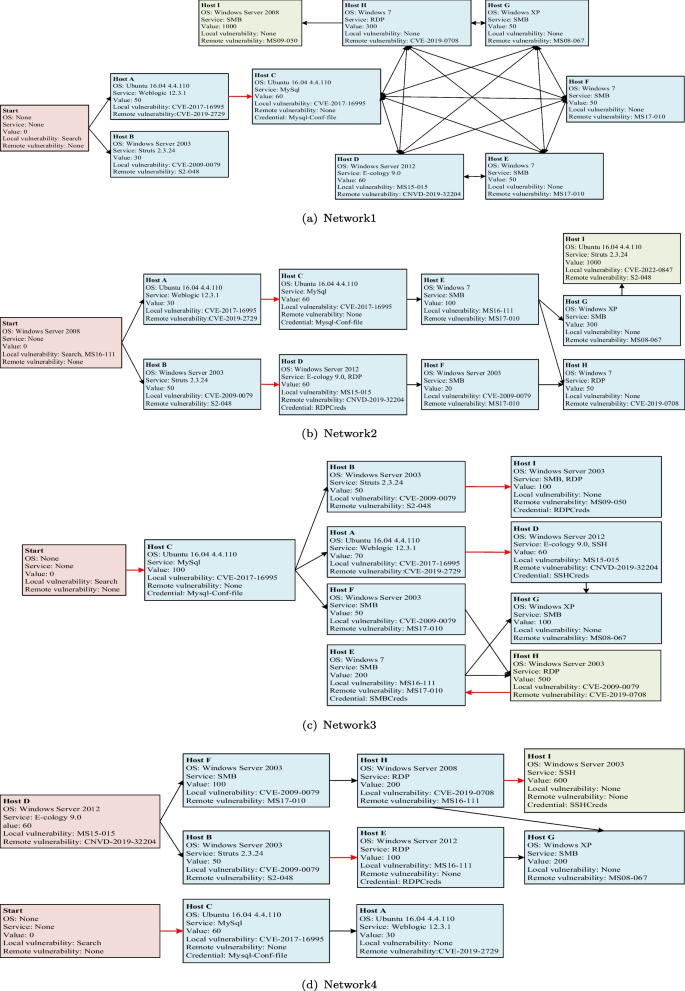

To verify the validity of our model, we conducted experiments on two groups of scenarios. The first group consists of scenarios included in CyberBattleSim, the open-source platform developed by Microsoft. Sample1 and Sample2 are randomly generated by CyberBattleSim's automatic scenario generation module. The second group consists of scenarios that we abstracted from real network environments. In RL, the agent's input and output, namely the sizes of the state space and the action space, must be defined before the neural network structure is designed. However, when RL is applied to PT problems, it is nearly impossible to describe every element of a network scenario. We therefore bound the size of the problem so that an agent can be applied to any scenario within those bounds. Table 8 lists the limits on the network scenarios in which the agents we build can perform PT tasks, and we design the network scenarios within the scope given in that table.
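The idea of fixing the problem size before building the network can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the class `ScenarioLimits`, its field names, the three-part action layout, and the helper `pad_features` are hypothetical, and the actual bounds are the ones given in Table 8, which are not reproduced here.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ScenarioLimits:
    """Hypothetical upper bounds on a scenario (real values: see Table 8)."""
    max_nodes: int
    max_local_vulns: int
    max_remote_vulns: int
    max_credentials: int

    def action_space_size(self) -> int:
        # Assumed action layout, chosen only for illustration:
        #   local exploit  -> (node, local vulnerability)
        #   remote exploit -> (source node, target node, remote vulnerability)
        #   connect        -> (source node, target node, credential)
        local = self.max_nodes * self.max_local_vulns
        remote = self.max_nodes * self.max_nodes * self.max_remote_vulns
        connect = self.max_nodes * self.max_nodes * self.max_credentials
        return local + remote + connect


def pad_features(features: List[float], size: int) -> List[float]:
    """Zero-pad a scenario's feature vector to the fixed network input size."""
    if len(features) > size:
        raise ValueError("scenario exceeds the configured limits")
    return features + [0.0] * (size - len(features))
```

With bounds fixed this way, any scenario that fits inside them maps to the same input and output dimensions, so a single trained network can in principle be reused across scenarios; a scenario that exceeds the bounds is rejected rather than silently truncated.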

Figure 8 shows the structure of each scenario built in this paper. In the figure, the Start node is the agent's starting point. It is not counted as part of the network scenario, so each scenario contains 9 nodes. The lines between nodes represent the reachability between them. A black line \(A \rightarrow B\) indicates that the agent can discover node B after exploiting a vulnerability at node A, and a red line \(A \rightarrow B\) indicates that the agent can discover the credential of node B after exploiting a vulnerability at node A. Red nodes indicate the starting point of the penetration test or nodes that the agent already owned before testing began, and green nodes indicate the penetration testing target. In the node information, None means that the element does not exist on the node. None on the Start node means that this information does not participate in the agent's decision. We set up a search operation on the Start node to discover other nodes.
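The two kinds of edges described above can be sketched as a small Python data structure. This is a hedged illustration, not the representation used in the released code: the names `Scenario`, `AgentKnowledge`, and `exploit` are hypothetical, and the search operation on the Start node is modeled here, as a simplifying assumption, in the same way as an exploitation step.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class Scenario:
    # Black edges: acting on the key node reveals the listed nodes.
    discovers: Dict[str, Set[str]]
    # Red edges: acting on the key node reveals credentials of the listed nodes.
    leaks_credential: Dict[str, Set[str]]


@dataclass
class AgentKnowledge:
    known_nodes: Set[str] = field(default_factory=set)
    # Names of nodes whose credential the agent has obtained.
    known_credentials: Set[str] = field(default_factory=set)


def exploit(scenario: Scenario, knowledge: AgentKnowledge, node: str) -> None:
    """Apply one successful step at `node` and record what it reveals."""
    if node not in knowledge.known_nodes:
        raise ValueError(f"node {node!r} has not been discovered yet")
    knowledge.known_nodes |= scenario.discovers.get(node, set())
    knowledge.known_credentials |= scenario.leaks_credential.get(node, set())
```

In this sketch the set of known nodes and credentials only ever grows, so the set of actions that can meaningfully be attempted grows with it as the test proceeds.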

About this article

Cite this article

Li, Q., Hu, M., Hao, H. et al. INNES: An intelligent network penetration testing model based on deep reinforcement learning. Appl Intell 53, 27110–27127 (2023). https://doi.org/10.1007/s10489-023-04946-1

DOI: https://doi.org/10.1007/s10489-023-04946-1