Abstract

Manufacturing is regarded as one of the most important engineering areas. Smart manufacturing combines sensors, intelligent controls, and software to manage each stage of the manufacturing lifecycle. In addition, the increasing use of point clouds to model real products and machining tools in a virtual space enables more accurate monitoring of the end-to-end production lifecycle. Together, intelligent methods and more accurate 3D models allow uncertainties and anomalies in the manufacturing process to be predicted and reduce final production costs. However, the high complexity of the geometrical structures defined by point clouds and the high accuracy required by the Quality Assurance/Quality Control parameters during the process leave room for continuous improvement in smart manufacturing methods. This paper presents a comprehensive analysis of machining tool identification from temporal 3D point cloud data. To that end, we propose a process to construct and train intelligent models on such data. Moreover, in our case study, we provide the research community with two labeled temporal 3D point cloud datasets, and we experiment with the pioneering PointNet neural network and three of its variants, demonstrating an accuracy of 95% in identifying the machining tools used in a machining process. Finally, we provide a prototype end-to-end intelligent system for machining tool identification.


Introduction

Manufacturing is considered one of the most important engineering domains and one of the pillars of industry (Lasi et al., 2014). Manufacturing intelligence is a key concept in the modernization of industry and plays a significant role in Industry 4.0 (Zhong et al., 2017). In the context of Industry 4.0, innovative, pioneering companies should incorporate autonomous, smart, sustainable, and resource-efficient manufacturing operations (Ghobakhloo, 2020), leading to reduced operational costs and improved machining efficiency.

Specifically, the application of machine learning and deep learning algorithms in manufacturing supports complex manufacturing tasks (Harding et al., 2006). However, the geometrical complexity of the data to be analyzed, combined with the demanding Quality Assurance/Quality Control (QA/QC) parameters, remains a difficult challenge (Bia and Wang, 2010; Altıparmak et al., 2021).

In this regard, point clouds have recently become one of the most widely used data types in 3D data analysis. A point cloud is a collection of data that consists of a large number of points and represents a 3D object. Point clouds are normally represented with the x, y, and z coordinates of each point, although more information may be included, such as color intensity or the surface normals of the geometric surface (Wang et al., 2020). Point cloud usage is growing in numerous disciplines, including manufacturing, as acquisition technologies such as LIDARs or 3D scanners become accessible (Liang et al., 2021). Acquiring 3D point cloud data with these sensing technologies has great advantages over conventional measurement methods, such as a considerably greater measurement rate and better measurement accuracy (Wang et al., 2020).

However, the lack of specific structure and order in point cloud data, as well as significantly redundant and inconsistent sample densities, makes such data challenging to analyze (Nguyen and Le, 2013). Point clouds are characterized by their unstructured and irregular nature; thus, computational problems are frequently encountered (Guo et al., 2021). Moreover, in the manufacturing process, point clouds with high point density are crucial to guarantee the QA/QC parameters. Besides, manufacturing is a dynamic process that produces data at different times, generating temporal 3D point clouds (Hou et al., 2016). Therefore, in addition to the spatial dimension, there is also a temporal one. Depending on the time step, a workpiece in the manufacturing process will be geometrically closer to or further from the final product shape. In similar spatio-temporal intelligent processes, such as autonomous driving, machine and deep learning algorithms use a wide assortment of data as input to achieve a form of all-around intelligence (Zhang et al., 2022). Given the success of such methods in autonomous driving, it is important to establish whether some of them can also be applied to specific manufacturing tasks, such as the automatic identification of properties in machining processes, using this challenging data type, i.e. 3D point clouds (Ma et al., 2019).

To address these issues, in this paper, we study the application of intelligent techniques in manufacturing utilizing temporal 3D point clouds. Specifically, our contribution is three-fold. First, we propose a novel process and develop guidelines for the task of identifying machining tools given temporal 3D point cloud data of a workpiece in progress. Second, we provide the research community with two novel 3D point cloud datasets. Finally, we propose an end-to-end intelligent system for machining tool identification using the PointNet (Qi et al., 2017a) neural network. For clarification, in this initial study, even though our data consist of sequences of 3D point clouds, our modeling is based on single instance identification. Thus, we do not fully exploit the temporality of the provided datasets.

The paper is organized as follows. Section 2 gives an overview of relevant studies and identifies open challenges in 3D point cloud manufacturing in both industry and academic research. Section 3 presents the research and development of an intelligent machining tool identification framework, and Sect. 4 describes our experiments and results. Section 5 discusses the limitations of the study. Finally, Sect. 6 summarizes our concluding remarks and outlines future research lines.

Related work

Deep learning enables sophisticated analytics capabilities for analyzing large amounts of manufacturing data. A detailed assessment of frequently used deep learning algorithms is presented in Wang et al. (2018). Deep learning is also used to provide intelligence in specialized manufacturing processes, such as defect detection in products (Yang et al., 2020), fault diagnosis in motors (Shao et al., 2017), manufacturing cost estimation, or part machining feature recognition (Ning et al., 2020).

Furthermore, nowadays, specialized research exists on tool monitoring (Nasir and Sassani, 2021). Specifically, researchers apply deep learning techniques to analyze the wear of a tool in high-speed (Zheng and Lin, 2019) while others apply more sophisticated physics-guided intelligent techniques to model the tool’s life (Karandikar et al., 2021). Also, Liu et al. (2020) develop an intelligent prognostic framework for remaining useful life prediction of machining tools under variable conditions improving the health assessment of machining tools by utilizing not only data from multiple sensors but also the spindle load.

Besides, in recent times, the conjunction of deep learning and computer vision methods draws a lot of attention. For instance, researchers focus on downstream tasks utilizing image data, such as feature points recognition of the machining tool path (Hu et al., 2022). Sequential pattern analysis and image processing are also employed. Others use time series of image data (frames) paired with Recurrent Neural Networks (RNNs) and Convolutional Neural Networks (CNNs) in a welding case study (Wang et al., 2021). Finally, a 3D vision system based on image data is developed to identify tool insert specifications, such as insert angles and cutting edge lengths (Ping et al., 2018). All these processes described above produce enormous amounts of data, ranging from time series to 3D shapes (Wang et al., 2018, 2021).

The analysis of 3D shapes in the manufacturing domain is mostly focused on using 3D CAD models instead of images because this type of data carries implicit accurate geometrical information. However, format translation from CAD files is essential because they cannot directly deliver volumetric information to a deep learning model (Peddireddy et al., 2020). Also, there are fewer studies, such as (Feng et al., 2019), that use mesh processing directly. However, meshes contain certain complexity and irregularity, and, thus, the majority of the current research on manufacturing uses mainly 3D point clouds and voxel-based data as inputs. It is worth mentioning that Cao et al. (2020) proposed a graph representation of 3D CAD models, which is more efficient than voxels for deep learning algorithms.

Point cloud data appear in today’s literature in studies that deal with additive manufacturing, i.e. 3D printing guidance (Ye et al., 2020), or automated bridge component recognition, i.e. damage observation in the bridges’ maintenance (Kim et al., 2020), among others. Also, Ma et al. (2019) use 3D point cloud data of part models with point-based neural networks and CNNs to provide automatic recognition of machining features, such as ring slot, triangular passage, etc. Recently, 3D point clouds are also utilized as input to develop efficient and effective detection of surface defects for remanufacturing (He et al., 2022).

However, complex analysis of manufacturing operations using point clouds poses certain challenges, which arise mainly in heavy industry (Kumar et al., 2018; Moon et al., 2019). Machining requires complex modeling of its operations, and the associated high-performance computations are resource-hungry, so their efficiency is essential (He et al., 2012). Machining is the removal of material from a workpiece in order to bring it into the desired shape. To perform machining operations on the workpiece, a variety of specialized tools are used, such as drilling, milling, and turning tools. Initially, the workpiece is usually a piece of raw material or an existing product, and the machine setup is dictated by the type of material and the amount of material to be removed. During this process, machine tool accessories, precision measurement equipment, and advanced mathematical methods are utilized to ensure optimal machining results (Ehmann et al., 1997).

Monitoring a workpiece in progress during a machining task is not a new concept; it aims to enhance agility in manufacturing (Sun and Jiang, 2008). In complex machining tasks, such as turn-milling, classifying the machining tools and their properties by understanding the impact of cutting angles and forces is critical. In such machining scenarios, identifying the optimal tool and its posture could lead to high-efficiency machining and extend the life of the tools. Utsumi et al. (2020) model time series of cutting forces during a machining process to produce a final point cloud of a workpiece. They represent a workpiece with a point cloud, and any points that interfere with the tool volume, i.e. the swept volume, are eliminated when the tool edge passes over the workpiece's surface. Besides, forecasting the swept volume of a workpiece during a machining process can be a key aid in reducing tool wear and in scheduling the necessary tool changes. Wiederkehr et al. (2018) developed stochastic modeling of tool wear in a grinding simulation utilizing point clouds of the swept volume. Finally, the analysis and prediction of the 3D surface topography of a workpiece in progress and of its 3D shape evolution are considered a need in the automotive, aviation, and other industries that have high-performance requirements. However, in prediction tasks that involve five-axis machine tool processing, machining becomes considerably more challenging due to the time-varying tool locations and orientations (Wang et al., 2020).

The main source of challenges in point cloud research is the dimensionality of the data, especially the number of points contained in a point cloud. Analyzing and pre-processing such data requires considerable computational power and expertise. The majority of point-based neural network architectures, such as PointNet (Qi et al., 2017a), PointNet++ (Qi et al., 2017b), RSConv (Liu et al., 2019), and PointMLP (Ma et al., 2022), operate in most cases on small point clouds, i.e. the number of points in each point cloud is normally 1024, 2048, or 4096. However, in real machining scenarios and even machining simulations, the extracted point clouds contain thousands or millions of points. Therefore, there are two ways of analyzing such data: (i) suitable oversampling or undersampling techniques should be considered depending on the size of the extracted simulated data, and/or (ii) the input size scalability of the applied neural networks should be enhanced. Indeed, sampling and representation learning on 3D point clouds are challenging because of the irregular and unstructured nature of such data.
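Option (i) can be illustrated with a minimal sketch that brings a point cloud of arbitrary size to a fixed number of points. Uniform random sampling is used here purely for illustration; it is an assumption of this sketch and not a technique prescribed by the paper, and the function name `resample_to_fixed_size` is hypothetical.

```python
import random


def resample_to_fixed_size(points, n_points=1024, seed=None):
    """Return exactly n_points points drawn from `points` (a list of (x, y, z) tuples)."""
    if not points:
        raise ValueError("cannot resample an empty point cloud")
    rng = random.Random(seed)
    if len(points) >= n_points:
        # Undersampling: draw n_points distinct positions without replacement.
        return rng.sample(points, n_points)
    # Oversampling: keep every original point and pad with random duplicates.
    padding = [rng.choice(points) for _ in range(n_points - len(points))]
    return list(points) + padding
```

Uniform sampling ignores local geometry, so density-aware or context-aware schemes may be preferable when fine surface detail matters.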

In summary, our analysis of the existing literature has identified certain needs and challenges at the intersection of temporal 3D point clouds extracted from machining processes and their modeling with deep learning algorithms. This leads us to perform a series of analysis tasks, provide meaningful insights, and develop an end-to-end intelligent system in this field. There is a gap in tool identification methods, especially when working with temporal point clouds, i.e. point clouds extracted at time intervals with uniform or non-uniform sampling, forming a sequence of temporal 3D point clouds. While the majority of studies in the domain focus on side data of the workpiece or the machining tool, such as cutting forces or other properties, to later form a final point cloud through modeling (Utsumi et al., 2020), we focus directly on the point clouds of the workpiece and tools. We aim to provide end-to-end intelligence in such machining scenarios, emphasizing the point clouds sampled at different time steps throughout the whole machining process.

The study of Ping et al. (2018) is related to ours. However, they address the problem of misplacement of tool inserts in machining tools with turning capability. Their proposed framework identifies insert angles, cutting edge lengths, and nose radii from image data rather than from 3D point clouds. Instead, we utilize 3D point clouds of the surface of a workpiece during machining and identify the tool that was used to machine its surface. Our work provides novelty in the analysis of manufacturing-related 3D point clouds. Machining tool identification may lead to the reverse engineering of workpieces. Additionally, incorporating a tool identification model into a machining decision support system may provide extra insights to avoid defects or resource waste due to non-optimal tool selection.

At the time of writing, there are no directly comparable studies or well-known frameworks that provide concrete end-to-end results and insights for the task of tool identification based on temporal 3D point cloud data of a workpiece in progress. Finally, forecasting the shape of the workpiece in progress and the swept volume during the machining process are two open issues of utmost importance for the manufacturing industry.

Case study: machining tool identification

This section details the designed machining tool identification case study. First, Sect. 3.1 defines the problem. Second, Sect. 3.2 details the overall process of machining tool identification. Third, Sect. 3.3 describes the complete data preparation and pre-processing steps to construct temporal 3D point clouds from a workpiece in progress. Fourth, Sect. 3.4 defines the modeling process, where the goal is to effectively classify the input 3D point clouds to identify the most utilized machining tool that shaped the workpiece. Finally, Sect. 3.5 shows the final intelligent system to identify machining tools.

Problem statement

Normally, the machining process starts with a workpiece of raw material on which different tools can be used to achieve the final product shape. In Fig. 1, we illustrate examples of the machining process of a raw material utilizing two different tools. In our case, we assume that all the elements present in a machining process, i.e. workpiece and machining tools, are modeled through a sampling process with 3D point clouds at different steps in time, resulting in temporal point clouds. In Eqs. 1 and 2, we formulate a point cloud and a series of temporal point clouds per machining tool, respectively. The complete set of temporal point clouds over all machining tools is formulated in Eq. 3.

\[pc^{tool_i} = \{k_1, \dots , k_{npoints}\} \tag{1}\]

where \(pc^{tool_i}\) denotes a point cloud of a workpiece shaped by a machining tool, \(tool_i\), and \(k_1, \dots , k_{npoints}\) represent the points contained in it, with npoints being the maximum number of points.

\[p^{tool_i} = \bigcup _{t=0}^{T} pc_t^{tool_i} \tag{2}\]

where \(p^{tool_i}\) denotes a series of temporal point clouds (i.e. multiple temporal \(pc^{tool_i}\)) of a workpiece shaped with the machining tool \(tool_i\). The union \(\bigcup _{t=0}^{T}\) includes all the point clouds from the time step \(t=0\) to \(t=T\), where T denotes the final time step of sampling.

\[P = \bigcup _{i=1}^{n} p^{tool_i} \tag{3}\]

where P denotes the complete dataset, i.e. all the temporal point clouds obtained with the n available machining tools. The union \(\bigcup _{i=1}^{n}\) includes all the temporal point clouds that belong to each tool, ranging from \(tool_1\) to \(tool_n\).
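The nesting described by Eqs. 1 to 3 can be mirrored in a simple data structure. The sketch below is only an illustration under stated assumptions: the function name `build_dataset`, the record layout, and the placeholder geometry are hypothetical and do not describe the format of the published datasets.

```python
def build_dataset(n_tools, final_step, n_points):
    """Build P as a flat list of labeled point clouds.

    Each record holds one pc_t^{tool_i}: the tool index i, the time step t,
    and a list of n_points (x, y, z) tuples (placeholder geometry here).
    """
    dataset = []
    for tool in range(1, n_tools + 1):            # union over i = 1..n (Eq. 3)
        for t in range(final_step + 1):           # union over t = 0..T (Eq. 2)
            cloud = [(0.0, 0.0, 0.0)] * n_points  # points k_1..k_npoints (Eq. 1)
            dataset.append({"tool": tool, "t": t, "points": cloud})
    return dataset


P = build_dataset(n_tools=3, final_step=4, n_points=8)
print(len(P))  # 3 tools x 5 time steps = 15 point clouds
```

Keeping the tool label and the time step next to each point cloud allows both single-instance classification and later sequence-based modeling on the same records.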

A machining process of a workpiece using two different machining tools (The figures were downloaded from https://www.grainger.com/category/machining. No copyright infringement intended)

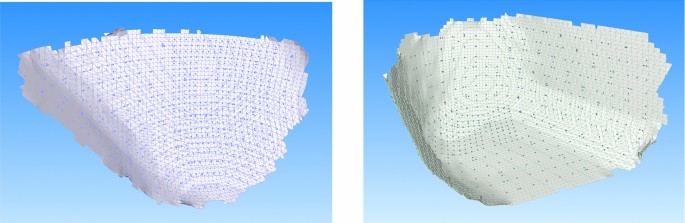

For completeness, we also show three examples of temporal point clouds of a workpiece in progress in Fig. 2. Then, given a product (workpiece), the aim is to identify the machining tool with which it has been created. For this purpose, we use these temporal point clouds of the workpiece as input to our intelligent process, capturing the whole evolution of the machining process, from the first step to the final product workpiece.

Temporal point clouds of the workpiece in progress. In this example, we show the application of tool 4 in three different time steps, \(t=0\), \(t=150\), \(t=300\) (from left to right) illustrating the \(pc_0^{tool_4}\), \(pc_{150}^{tool_4}\), \(pc_{300}^{tool_4}\) respectively. Please note that each point cloud has 1024 points, i.e. \(pc^{tool_4} = \{k_1,\dots ,k_{1024}\}\)

Overall process

In this section, we describe the overall process of machining tool identification utilizing point clouds. An abstract overview of the development of an intelligent framework to identify machining tools is portrayed in Fig. 3. The knowledge in the intelligent system depicted in Fig. 3 is provided by a model that is trained on a set of temporal workpieces resulting from different machining tools. In the first stage, namely data preparation and pre-processing, we extract temporal 3D point clouds during the machining of a workpiece with several tools and build a set of temporal point clouds of the workpiece shaped with different tools. These temporal workpieces are categorized and labeled according to the machining tool that is used to remove raw material while shaping the workpiece into the desired form. In the learning process, a deep learning model is trained to classify a temporal workpiece according to n different tool labels, where n denotes the number of available tools. Afterward, this trained model is used by the intelligent system to classify unknown temporal workpieces, predicting with a certain accuracy the machining tool that shaped the workpiece.

Data preparation and pre-processing

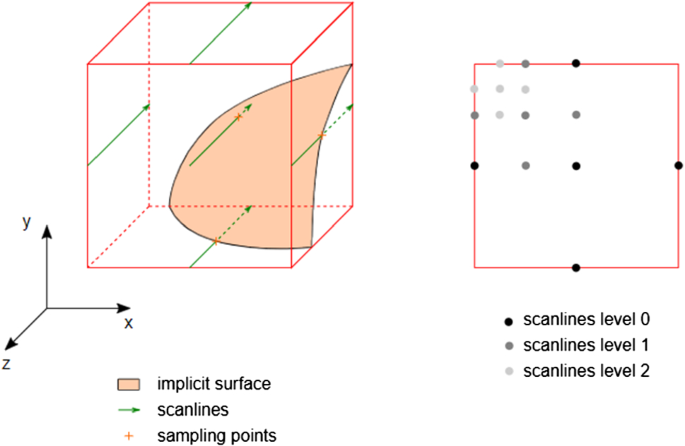

In our study, we acquire data through machining simulation software, which uses an implicit surface representation during geometric modeling. Then, in order to extract temporal 3D point clouds of the in-process workpiece geometry, a scanline-based approach is used for point sampling of the implicit surface representation, as displayed in Figs. 4 and 5.

The machining process is illustrated in four steps in Fig. 4. Steps (a), (b), and (c) refer to the machining simulation and the actions that take place before the process of data preparation. In the first step (a) we have the initial 3D mesh workpiece. Then we apply a machining tool, where the swept volume of a moving machining tool is depicted in yellow in (b) and we observe the material removal on the resulting workpiece in step (c). Finally, in step (d) we show the current tool engagement, where we apply a sampling process in the exact region in which the machining tool removed the material of the workpiece. Indeed, the data preparation of our proposal takes place in step (d).

Once we obtain the region to be rasterized (Fig. 4 (d)), we proceed with the next process. Initially, three scanline directions in a geometrical box are used to scan the x, y, and z coordinate space. The scanlines are used to sample the implicit surface representation of the current workpiece geometry. Figure 5 details the sampling process with which we acquired the 3D point clouds. To achieve a fixed and predefined number of sampled points, a recursive subdivision of scanlines using a breadth-first strategy is utilized. The levels of scanlines denote the recursive subdivision. The scanning process stops when the defined number of sampling points is acquired. The scanning takes place at different time steps during the machining process. The sampled points acquired along each scanning direction are merged to form the final point cloud. Please note that, during the whole process, we sample the entry points of the implicit surface, i.e. the first contact points at which a scanline passes through it.
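The sampling idea can be sketched as follows. This is a simplified illustration and not the simulation software's implementation: it assumes a unit-cube sampling box, an implicit function `f` that is non-positive inside the material, fixed-step marching to find the entry point, and a quadtree-style breadth-first refinement of scanline positions. All function names and parameters are hypothetical.

```python
from collections import deque


def first_entry(f, origin, axis, length=1.0, steps=200):
    """March along one scanline; return the first sample with f <= 0, else None."""
    for s in range(steps + 1):
        p = list(origin)
        p[axis] += length * s / steps
        if f(p) <= 0.0:
            return tuple(p)
    return None


def scanline_offsets():
    """Yield scanline positions in the unit square, coarse levels before fine ones."""
    queue = deque([(0.0, 0.0, 1.0)])  # (u_min, v_min, cell size)
    while True:
        u, v, size = queue.popleft()
        yield (u + size / 2, v + size / 2)  # centre of the current cell
        half = size / 2
        for du in (0.0, half):              # enqueue the four child cells,
            for dv in (0.0, half):          # which form the next level
                queue.append((u + du, v + dv, half))


def sample_surface(f, n_points, max_scanlines=100000):
    """Collect up to n_points entry points, merging the x, y and z scan directions."""
    points = []
    for count, (u, v) in enumerate(scanline_offsets()):
        if count >= max_scanlines:
            break  # guard against surfaces that are never hit
        for axis in range(3):
            others = [a for a in range(3) if a != axis]
            origin = [0.0, 0.0, 0.0]
            origin[others[0]], origin[others[1]] = u, v
            hit = first_entry(f, origin, axis)
            if hit is not None:
                points.append(hit)
                if len(points) == n_points:
                    return points
    return points
```

Because the queue is processed first-in, first-out, every scanline of one subdivision level is cast before any scanline of the next level, so the sampled points stay evenly spread over the box whenever the target count is reached.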

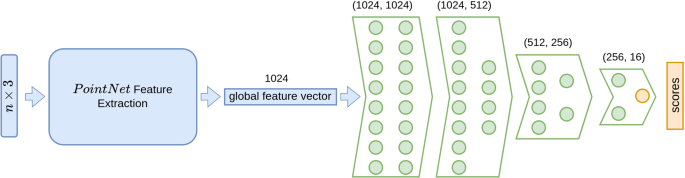

\(PointNet_{+1L}\) Architecture. We have added a layer of (1024, 1024) on the PointNet’s classification head (Qi et al., 2017a)

\(PointNet_{l}^{v1}\) Architecture. We have decreased the number of neurons per layer on PointNet’s (Qi et al., 2017a) architecture to 1/4, both in the back-end feature extraction layers and classification head

Therefore, by following the above-mentioned sampling process, we acquire two 3D point cloud datasets, taking into account different scanning resolutions. The first one, called 16 Tools Small Dataset, consists of point cloud objects with a fixed number of sampled points (1024) in each point cloud. It contains 324 sequential (in time) temporal point cloud objects of the workpiece for each tool label, with 16 different tools in total (\(n=16\)). The total number of point clouds in 16 Tools Small Dataset is 5184. The second dataset, called 16 Tools Large Dataset, is obtained by adjusting the sampling density of the sampling process; like the 16 Tools Small Dataset, it consists of point cloud objects with a fixed number of sampled points (1024) in each point cloud. However, in this case, the sampling is done in a smaller region of the workpiece, which corresponds to a more compact geometrical sampling box focused on the exact contact area between the tool and the workpiece in progress. This dataset contains 810 temporal point cloud objects for each tool label, again with 16 different tools in total. The total number of point clouds in 16 Tools Large Dataset is 12960. In practice, the geometrical sampling box used to create 16 Tools Small Dataset covers a larger area of the implicit surface of the workpiece than the one used for 16 Tools Large Dataset. We summarize the details of the two datasets in Table 1. Also, we portray example point clouds obtained by applying different machining tools on the workpiece in progress in Fig. 6.

For clarification purposes, we also calculate, for each dataset, the maximum Euclidean distance in 3D space between a point and the other points of the same point cloud. In 16 Tools Large Dataset this maximum distance is approximately 1.77, while in 16 Tools Small Dataset it is approximately 1.86. This shows that the point clouds contained in 16 Tools Small Dataset capture a larger area of the current tool engagement with 1024 points, while the ones contained in 16 Tools Large Dataset capture a smaller one with the same number of points (1024).
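One way to compute such an extent measure is sketched below. It assumes that the reported quantity is the largest pairwise Euclidean distance within a point cloud, which is an interpretation of the description above rather than a confirmed detail; the function name is hypothetical.

```python
import math
from itertools import combinations


def max_pairwise_distance(points):
    """Largest Euclidean distance between any two points of one point cloud."""
    return max(math.dist(p, q) for p, q in combinations(points, 2))


# Corners of the unit cube: the largest distance is the space diagonal, sqrt(3).
cube = [(x, y, z) for x in (0.0, 1.0) for y in (0.0, 1.0) for z in (0.0, 1.0)]
print(max_pairwise_distance(cube))
```

For 1024 points this brute-force version evaluates about half a million pairs, which is still practical for a one-off dataset statistic.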

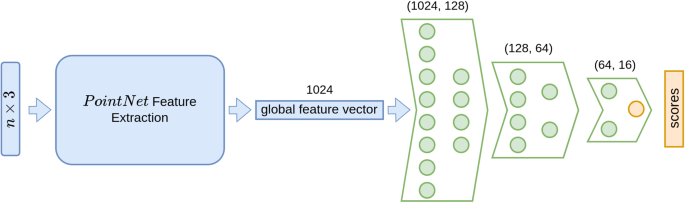

\(PointNet_{l}^{v2}\) Architecture. We have decreased the number of neurons per layer on PointNet’s (Qi et al., 2017a) architecture to 1/4 in the classification head while keeping the default backend feature extraction layers

Modelling

During the learning process, depicted in Fig. 3, we train intelligent models to identify the tools that have been used in the machining process, utilizing the temporal point clouds that we previously extracted from a workpiece, as explained in Sect. 3.3. We analyze the task of tool identification with four point-based deep learning models: PointNet (Qi et al., 2017a) and three variants of it. PointNet is a pioneering, fundamental deep learning architecture that enables the direct analysis of point cloud instances. We construct three variants of the PointNet architecture in order to exploit its full capabilities in identifying underlying relations in point cloud data and to create a baseline method for machining tool identification using 3D point clouds.

We decided to fully exploit the capabilities of the well-known point-based neural network architecture, PointNet, in order to create a baseline for future analyses, by modifying it in three ways: (i) extending its classification head by adding a linear layer, (ii) simplifying it by reducing the number of neurons in each layer in both backend feature extraction layers and classification head layers, (iii) simplifying it by reducing the number of neurons in each layer of the classification head but keeping the default backend feature extraction layers.

Firstly, we increase the complexity and the number of parameters of the default PointNet architecture in order to identify highly complex relations in the point cloud data. Thus, we experiment with the addition of a linear layer whose input and output size equal the length of the global feature vector in the PointNet architecture, i.e. 1024. We name the neural network that includes this additional layer on top of PointNet \(PointNet_{+1L}\); it is depicted in Fig. 7. By increasing the network's complexity, we expect to observe higher classification accuracy than with the default architecture in the presence of highly complex underlying relations in the input point clouds.

Secondly, to reveal the effectiveness of a simpler neural network, we experiment with less complex PointNet architectures having fewer parameters than the default one, namely \(PointNet_{l}^{v1}\) and \(PointNet_{l}^{v2}\). In \(PointNet_{l}^{v1}\), we decrease the neurons in each layer (both backend and classification head) to 1/4 compared to the default PointNet architecture. In \(PointNet_{l}^{v2}\), we decrease the neurons in each layer of the classification head to 1/4 compared to the default PointNet architecture and keep the default backend feature extraction. Figures 8 and 9 display \(PointNet_{l}^{v1}\) and \(PointNet_{l}^{v2}\) respectively.
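To make the three modifications concrete, the sketch below lists the layer widths of each variant and counts the weights and biases of its fully connected layers. It starts from the standard PointNet classification widths of Qi et al. (2017a), i.e. a shared per-point MLP of (64, 64, 64, 128, 1024) and a head of (512, 256, classes). It is a simplification under stated assumptions: the T-Net transform sub-networks and batch normalization are omitted, so the totals are lower than the parameter counts reported for the full models, and placing the extra layer at the start of the head is an assumption of this sketch.

```python
def count_params(widths):
    """Weights plus biases of a chain of fully connected layers."""
    return sum(i * o + o for i, o in zip(widths, widths[1:]))


def quarter(widths):
    """Reduce every layer width to 1/4 of its default value."""
    return [w // 4 for w in widths]


def pointnet_variants(n_classes=16):
    """Return {name: (backbone widths, head widths)} for the four models."""
    backbone = [64, 64, 64, 128, 1024]  # shared per-point MLP, ends in global feature
    head = [512, 256]                   # hidden layers of the classification head
    small_backbone = quarter(backbone)
    return {
        "PointNet": ([3] + backbone, [1024] + head + [n_classes]),
        # Extra (1024, 1024) linear layer placed before the default head.
        "PointNet_+1L": ([3] + backbone, [1024, 1024] + head + [n_classes]),
        # All widths reduced to 1/4, so the global feature has 256 entries.
        "PointNet_l_v1": ([3] + small_backbone,
                          [small_backbone[-1]] + quarter(head) + [n_classes]),
        # Default backbone, head widths reduced to 1/4.
        "PointNet_l_v2": ([3] + backbone, [1024] + quarter(head) + [n_classes]),
    }


for name, (backbone, head) in pointnet_variants().items():
    print(name, count_params(backbone) + count_params(head))
```

Even in this reduced form the ordering of model sizes is visible: the fully quartered variant is the smallest, the variant with the reduced head sits between it and the default, and the variant with the extra layer is the largest.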

Final system

Finally, after the learning process, as shown in Fig. 3, an intelligent system is constructed by selecting the best-trained model. Figure 10 shows an example run of the intelligent system, where we have the input of a point cloud into the system. Then, the system utilizes a pre-trained neural network model to identify the machining tool that was used to shape the input workpiece.
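The inference step of such a system can be sketched as below. The function `identify_tool`, the `model` callable returning one raw score per tool, and the tool names are hypothetical placeholders; in practice the callable would wrap the selected trained network.

```python
import math


def identify_tool(model, point_cloud, tool_names, n_points=1024):
    """Return (predicted tool name, softmax confidence) for one point cloud."""
    if len(point_cloud) != n_points or any(len(p) != 3 for p in point_cloud):
        raise ValueError("expected a point cloud of shape [n_points, 3]")
    scores = model(point_cloud)  # one raw score per tool label
    if len(scores) != len(tool_names):
        raise ValueError("model output size does not match the number of tools")
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]  # numerically stable softmax
    best = max(range(len(scores)), key=scores.__getitem__)
    return tool_names[best], exps[best] / sum(exps)
```

Returning a confidence alongside the label lets an expert decide whether a prediction is reliable enough to act on.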

Experiments

We detail our experimental setup in Sect. 4.1, and discuss our results in Sect. 4.2.

Experimental setup

Here, we describe our experimental investigation on the development of an intelligent system capable of identifying machining tools with the extracted temporal 3D point clouds of a workpiece in progress.

Technically, we use Python 3.8.10, CUDA 10.2, and PyTorch 1.8.1. Our system configuration includes an Intel Core i9-10900 paired with 32 GB of RAM and a Quadro RTX 5000 with 16 GB of memory, and the operating system is Ubuntu 20.04.

We utilize a standard protocol for training and testing the neural network models. For the 16 Tools Small Dataset, we utilize 4672 temporal point clouds of a workpiece for training and 512 for testing. For the 16 Tools Large Dataset, we utilize 11661 temporal point clouds for training and 1295 for testing. In both cases, approximately 90% of the point clouds are used for training and 10% for testing. Also, to assess the variability of our models, we run the training of each model 4 times and report the standard deviation of the results.
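A split of this kind can be sketched as follows. A seeded random shuffle is assumed here; the paper does not state how the test instances were selected, and the function name `split_indices` is hypothetical.

```python
import random


def split_indices(n_total, n_test, seed=0):
    """Shuffle the indices 0..n_total-1 and hold out n_test of them for testing."""
    if not 0 < n_test < n_total:
        raise ValueError("n_test must lie strictly between 0 and n_total")
    indices = list(range(n_total))
    random.Random(seed).shuffle(indices)
    return indices[n_test:], indices[:n_test]


train_idx, test_idx = split_indices(5184, 512)
print(len(train_idx), len(test_idx))  # 4672 512
```

Fixing the seed keeps the held-out set identical across the repeated training runs, so that differences between runs reflect training variability only.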

Moreover, although our data collection consists of synthetic data, we train the selected neural networks with data augmentation in order to make their performance more robust. Specifically, during training, we apply input noise to each point cloud, scaling, and random point dropout. Additionally, we train all the networks for 200 epochs utilizing the Adam optimizer with exponential learning rate decay and an initial learning rate of 0.001. Finally, we experiment with different batch size values (8, 16, 32, and 64).
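The three augmentations can be sketched as below. The parameter values are illustrative defaults and are not reported in the paper. Replacing dropped points with the first point, so that the cloud keeps its fixed size, is a convention assumed here; the function name `augment` is hypothetical.

```python
import random


def augment(points, rng, sigma=0.01, clip=0.05,
            scale_range=(0.8, 1.25), max_dropout=0.875):
    """Apply random point dropout, uniform scaling and clipped Gaussian noise."""
    # Random point dropout: dropped points are overwritten by the first point,
    # so the output still contains len(points) entries.
    ratio = rng.random() * max_dropout
    first = points[0]
    kept = [first if rng.random() < ratio else p for p in points]

    # Scaling: one random factor shared by all points and all three axes.
    scale = rng.uniform(*scale_range)

    # Input noise: independent Gaussian jitter per coordinate, clipped to +/- clip.
    augmented = []
    for point in kept:
        augmented.append(tuple(
            scale * c + max(-clip, min(clip, rng.gauss(0.0, sigma)))
            for c in point
        ))
    return augmented
```

A call such as `augment(cloud, random.Random(0))` would typically be applied to each training sample anew at every epoch, while test samples are left unchanged.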

Results

In this section, we show the results of our experiments in Table 2 and discuss them below. As detailed in Sect. 4.1, we investigate different batch sizes for training the four neural network models, PointNet, \(PointNet_{+1L}\), \(PointNet_{l}^{v1}\) and \(PointNet_{l}^{v2}\). Besides, we run the training process for each model 4 times to estimate the models' variability, and we include the mean learning curves, i.e. training and testing loss and accuracy curves, in the Supplementary material. Please recall that the models are trained with input noise in each point cloud, scaling, and random point dropout, which is intended to improve the generalizability of the models.

Also, as efficiency is a need in machining manufacturing systems (He et al., 2012), we report the time for a single instance inference (i.e. \(1 \times [1024, 3]\)) and the number of parameters of each model in Table 3. Please note that measuring inference time is closely related to the system setup, which is described previously in Sect. 4.1.
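Single-instance inference time can be measured along the following lines. This is a generic timing sketch, not the measurement procedure used in the paper; `model` stands for any callable and the function name is hypothetical. On a GPU, where kernels run asynchronously, the device would additionally need to be synchronized before each clock reading.

```python
import time


def mean_inference_time(model, sample, warmup=10, repeats=100):
    """Average wall-clock seconds per call of model(sample)."""
    for _ in range(warmup):
        model(sample)  # warm-up calls are excluded from the measurement
    start = time.perf_counter()
    for _ in range(repeats):
        model(sample)
    return (time.perf_counter() - start) / repeats
```

Averaging over many repetitions after a warm-up phase reduces the influence of one-off costs such as memory allocation or cache misses.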

It can be observed that in the 16 Tools Small Dataset, the two best models are the ones that use fewer parameters, i.e. the most efficient ones (Table 3). \(PointNet_{l}^{v2}\) achieves a high classification accuracy of 0.77 with a batch size of 8. Also, both \(PointNet_{l}^{v2}\) and the most efficient model \(PointNet_{l}^{v1}\) achieve the second-best score of 0.68 with batch sizes equal to 32 and 16 respectively. However, \(PointNet_{l}^{v2}\) has a lower standard deviation in its best results, meaning that it has a lower variability in its predictions.

On the other hand, in 16 Tools Large Dataset, we observe that \(PointNet_{l}^{v2}\) using a batch size of 16 achieves the best accuracy of 0.95, with a very low standard deviation of 0.01, denoting that it has consistency in its best accuracy results in the 4 training sessions. PointNet achieves the second-best accuracy with a score of 0.84 with a batch size of 32. In fact, in both datasets, large batch sizes do not achieve high accuracy.

The accuracy scores of \(PointNet_{l}^{v1}\) and \(PointNet_{l}^{v2}\) in both datasets mark them as two promising models for machining tool identification, since the model selected for a tool identification system should also use its resources efficiently. \(PointNet_{l}^{v1}\) has 0.17 M parameters, while \(PointNet_{l}^{v2}\) has 2.94 M. Their inference times are also lower than those of the other models.

Consequently, owing to its high overall accuracy in both datasets and its efficiency, \(PointNet_{l}^{v2}\) serves as the tool classifier in our intelligent system for machining tool identification, presented in Fig. 3. However, the model selection could be changed according to the expert’s needs.

Limitations and discussion

Machining tool identification based on 3D point clouds of an in-process workpiece could enable reverse engineering of a final product shape and reduce material waste caused by non-optimal tool selection. The purpose of this research is to provide a baseline framework and a prototype end-to-end intelligent system for machining tool identification based on 3D point cloud representation learning. Point-based neural networks have shown great potential on a wide variety of artificial and real datasets and have been successfully applied in other domains (Guo et al., 2021).

In this study, we develop our framework with synthetic data because no real data for this task are publicly available. We are aware that, in a real machining environment, the 3D point cloud of the area acquired by a LIDAR scanner will be heavily contaminated by noise, and the trained neural network will probably show degraded accuracy. In this case, data pre-processing is essential and deserves close attention: noise filtering, outlier removal, and context-aware sampling methods should be employed prior to modeling. To mitigate such issues, we apply data augmentation during training. Specifically, as mentioned in Sect. 4.1, we apply input noise in each point cloud, scaling, and random point dropout. Augmenting the input data during training helps a model generalize better and may strengthen its robustness to noise, outlier points, and rigid transformations, so that it achieves similar performance in a real scenario. In the near future, we plan to use point-based neural networks to analyze 3D point clouds captured in the wild by real laser sensors.

Finally, even though our data consist of sequences of 3D point clouds, our modeling is based on single-instance identification, and thus we do not fully exploit the temporality of the provided datasets. A future work direction of utmost importance is therefore the spatio-temporal forecasting of multiple machining tools using sequences of temporal 3D point clouds of a workpiece. As an extension, a further research direction is forecasting the whole shape of a workpiece in progress.
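To make the outlier removal step mentioned in this section concrete, the sketch below shows one common generic technique, statistical outlier removal based on mean k-nearest-neighbor distance. It is not part of the framework evaluated in this paper; the function name `remove_statistical_outliers` and the values of `k` and `std_ratio` are assumptions, and the brute-force distance matrix is only practical for small clouds.

```python
import numpy as np


def remove_statistical_outliers(points, k=8, std_ratio=2.0):
    """Drop points whose mean distance to their k nearest neighbors is unusually large.

    Returns the filtered (M, 3) cloud and the boolean keep-mask of length N.
    """
    points = np.asarray(points, dtype=np.float64)

    # Pairwise Euclidean distances (brute force, O(N^2) memory).
    diffs = points[:, None, :] - points[None, :, :]
    dists = np.linalg.norm(diffs, axis=-1)

    # Sort each row; column 0 is the zero distance of a point to itself.
    knn_dists = np.sort(dists, axis=1)[:, 1:k + 1]
    mean_knn = knn_dists.mean(axis=1)

    # Keep points within std_ratio standard deviations of the global mean.
    threshold = mean_knn.mean() + std_ratio * mean_knn.std()
    keep = mean_knn <= threshold
    return points[keep], keep


rng = np.random.default_rng(seed=0)
dense_cluster = rng.uniform(0.0, 1.0, size=(200, 3))  # synthetic surface points
stray_point = np.array([[50.0, 50.0, 50.0]])          # synthetic far outlier
noisy_cloud = np.vstack([dense_cluster, stray_point])

filtered_cloud, keep_mask = remove_statistical_outliers(noisy_cloud)
print(noisy_cloud.shape, "->", filtered_cloud.shape)
```

For clouds of realistic size, a spatial index such as a k-d tree would replace the dense distance matrix; the filtering rule itself stays the same.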

Conclusion

The analysis of point cloud data originating from manufacturing simulations poses certain challenges and opens research opportunities. In this paper, we propose a novel process and advance guidelines for identifying machining tools from temporal 3D point cloud data of a workpiece in progress. In addition, we provide the research community with two datasets, the 16 Tools Small Dataset and the 16 Tools Large Dataset, both containing sequences of temporal 3D point clouds. The two datasets can be found in this data repository: https://doi.org/10.34810/data205. Finally, we propose a prototype intelligent system for machining tool identification using a PointNet neural network, which analyzes the input 3D point clouds of a workpiece in progress and identifies the utilized machining tool with high accuracy. Our research could be of interest to industrial companies and research institutes. The code associated with this study is available in the following GitHub repository: https://github.com/thzou/machining_tools_identification.

Notes

LIDAR: Light Imaging, Detection, And Ranging.

References

Altıparmak, S. C., Yardley, V. A., Shi, Z., et al. (2021). Challenges in additive manufacturing of high-strength aluminium alloys and current developments in hybrid additive manufacturing. International Journal of Lightweight Materials and Manufacture, 4(2), 246–261. https://doi.org/10.1016/J.IJLMM.2020.12.004

Bi, Z. M., & Wang, L. (2010). Advances in 3D data acquisition and processing for industrial applications. Robotics and Computer-Integrated Manufacturing, 26(5), 403–413. https://doi.org/10.1016/J.RCIM.2010.03.003

Cao, W., Robinson, T., Hua, Y., et al. (2020). Graph representation of 3D CAD models for machining feature recognition with deep learning. In Proceedings of the ASME 2020 international design engineering technical conferences and computers and information in engineering conference. https://doi.org/10.1115/DETC2020-22355

Ehmann, K. F., Kapoor, S. G., DeVor, R. E., et al. (1997). Machining process modeling: A review. Journal of Manufacturing Science and Engineering, 119(4B), 655–663. https://doi.org/10.1115/1.2836805

Feng, Y., Feng, Y., You, H., et al. (2019). Meshnet: Mesh neural network for 3d shape representation. In Proceedings of the 33rd AAAI conference on artificial intelligence, pp. 8279–8286. https://doi.org/10.1609/aaai.v33i01.33018279

Ghobakhloo, M. (2020). Industry 4.0, digitization, and opportunities for sustainability. Journal of Cleaner Production, 252, 119869. https://doi.org/10.1016/J.JCLEPRO.2019.119869

Guo, Y., Wang, H., Hu, Q., et al. (2021). Deep learning for 3D point clouds: A survey. IEEE Transactions on Pattern Analysis and Machine Intelligence, 43(12), 4338–4364. https://doi.org/10.1109/TPAMI.2020.3005434

Harding, J. A., Shahbaz, M., Srinivas, et al. (2006). Data mining in manufacturing: A review. Journal of Manufacturing Science and Engineering, 128(4), 969–976. https://doi.org/10.1115/1.2194554

He, Y., Liu, B., Zhang, X., et al. (2012). A modeling method of task-oriented energy consumption for machining manufacturing system. Journal of Cleaner Production, 23(1), 167–174. https://doi.org/10.1016/J.JCLEPRO.2011.10.033

He, Y., Ma, W., Li, Y., et al. (2022). An octree-based two-step method of surface defects detection for remanufacture. International Journal of Precision Engineering and Manufacturing - Green Technology. https://doi.org/10.1007/s40684-022-00433-z

Hou, M., Li, S., Jiang, L., et al. (2016). A new method of gold foil damage detection in stone carving relics based on multi-temporal 3d lidar point clouds. ISPRS International Journal of Geo-Information, 5(5), 60. https://doi.org/10.3390/ijgi5050060

Hu, P., Song, Y., Zhou, H., et al. (2022). Feature points recognition of computerized numerical control machining tool path based on deep learning. Computer-Aided Design, 149, 103273. https://doi.org/10.1016/J.CAD.2022.103273

Karandikar, J., Schmitz, T., & Smith, S. (2021). Physics-guided logistic classification for tool life modeling and process parameter optimization in machining. Journal of Manufacturing Systems, 59, 522–534. https://doi.org/10.1016/J.JMSY.2021.03.025

Kim, H., Yoon, J., & Sim, S. H. (2020). Automated bridge component recognition from point clouds using deep learning. Structural Control and Health Monitoring, 27(9), e2591. https://doi.org/10.1002/stc.2591

Kumar, G. A., Patil, A. K., & Chai, Y. H. (2018). Alignment of 3d point cloud, cad model, real-time camera view and partial point cloud for pipeline retrofitting application. In 2018 International conference on electronics, information, and communication (ICEIC), pp. 1–4. https://doi.org/10.23919/ELINFOCOM.2018.8330627

Lasi, H., Fettke, P., Kemper, H. G., et al. (2014). Industry 4.0. Business and Information Systems Engineering, 6(4), 239–242. https://doi.org/10.1007/s12599-014-0334-4

Liang, Z., Guo, Y., Feng, Y., et al. (2021). Stereo matching using multi-level cost volume and multi-scale feature constancy. IEEE Transactions on Pattern Analysis and Machine Intelligence, 43(1), 300–315. https://doi.org/10.1109/TPAMI.2019.2928550

Liu, C., Zhang, L., Niu, J., et al. (2020). Intelligent prognostics of machining tools based on adaptive variational mode decomposition and deep learning method with attention mechanism. Neurocomputing, 417, 239–254. https://doi.org/10.1016/J.NEUCOM.2020.06.116

Liu, Y., Fan, B., Xiang, S., et al. (2019). Relation-shape convolutional neural network for point cloud analysis. In 2019 IEEE/CVF conference on computer vision and pattern recognition (CVPR), pp. 8887–8896. https://doi.org/10.1109/CVPR.2019.00910

Ma, X., Qin, C., You, H., et al. (2022). Rethinking network design and local geometry in point cloud: A simple residual MLP framework. In International conference on learning representations (ICLR). https://doi.org/10.48550/ARXIV.2202.07123

Ma, Y., Zhang, Y., & Luo, X. (2019). Automatic recognition of machining features based on point cloud data using convolution neural networks. In Proceedings of the 2019 international conference on artificial intelligence and computer science, AICS 2019, pp. 229–235. https://doi.org/10.1145/3349341.3349407

Moon, D., Chung, S., Kwon, S., et al. (2019). Comparison and utilization of point cloud generated from photogrammetry and laser scanning: 3D world model for smart heavy equipment planning. Automation in Construction, 98, 322–331. https://doi.org/10.1016/J.AUTCON.2018.07.020

Nasir, V., & Sassani, F. (2021). A review on deep learning in machining and tool monitoring: Methods, opportunities, and challenges. The International Journal of Advanced Manufacturing Technology, 115(9), 2683–2709. https://doi.org/10.1007/S00170-021-07325-7

Nguyen, A., & Le, B. (2013). 3D point cloud segmentation: A survey. In 6th IEEE conference on robotics, automation and mechatronics (RAM), pp. 225–230. IEEE. https://doi.org/10.1109/RAM.2013.6758588

Ning, F., Shi, Y., Cai, M., et al. (2020). Manufacturing cost estimation based on a deep-learning method. Journal of Manufacturing Systems, 54, 186–195. https://doi.org/10.1016/J.JMSY.2019.12.005

Peddireddy, D., Fu, X., Wang, H., et al. (2020). Deep learning based approach for identifying conventional machining processes from CAD data. Procedia Manufacturing, 48, 915–925. https://doi.org/10.1016/J.PROMFG.2020.05.130

Ping, G. H., Chien, J. H., & Chiang, P. J. (2018). Verification of turning insert specifications through three-dimensional vision system. The International Journal of Advanced Manufacturing Technology, 96(9), 3391–3401. https://doi.org/10.1007/S00170-018-1805-4

Qi, C. R., Su, H., Mo, K., et al. (2017a). PointNet: Deep learning on point sets for 3D classification and segmentation. In Proceedings of the 30th IEEE conference on computer vision and pattern recognition (CVPR), pp. 77–85. https://doi.org/10.1109/CVPR.2017.16

Qi, C. R., Yi, L., Su, H., et al. (2017b). PointNet++: Deep hierarchical feature learning on point sets in a metric space. In Advances in neural information processing systems (NeurIPS), pp. 5100–5109. https://doi.org/10.5555/3295222

Shao, S. Y., Sun, W. J., Yan, R. Q., et al. (2017). A deep learning approach for fault diagnosis of induction motors in manufacturing. Chinese Journal of Mechanical Engineering, 30(6), 1347–1356. https://doi.org/10.1007/s10033-017-0189-y

Sun, H., & Jiang, P. (2008). Tracking online workpiece machining procedure in a mobile collaborative environment. Concurrent Engineering, 16(4), 291–300. https://doi.org/10.1177/1063293X08100029

Utsumi, K., Shichiri, S., & Sasahara, H. (2020). Determining the effect of tool posture on cutting force in a turn milling process using an analytical prediction model. International Journal of Machine Tools and Manufacture, 150, 103511. https://doi.org/10.1016/J.IJMACHTOOLS.2019.103511

Wang, J., Ma, Y., Zhang, L., et al. (2018). Deep learning for smart manufacturing: Methods and applications. Journal of Manufacturing Systems, 48, 144–156. https://doi.org/10.1016/J.JMSY.2018.01.003

Wang, Q., Tan, Y., & Mei, Z. (2020). Computational methods of acquisition and processing of 3D point cloud data for construction applications. Archives of Computational Methods in Engineering, 27(2), 479–499. https://doi.org/10.1007/s11831-019-09320-4

Wang, Q., Jiao, W., Wang, P., et al. (2021). A tutorial on deep learning-based data analytics in manufacturing through a welding case study. Journal of Manufacturing Processes, 63, 2–13. https://doi.org/10.1016/J.JMAPRO.2020.04.044

Wang, W., Li, Q., & Jiang, Y. (2020). A novel 3D surface topography prediction algorithm for complex ruled surface milling and partition process optimization. International Journal of Advanced Manufacturing Technology, 107(9–10), 3817–3831. https://doi.org/10.1007/s00170-020-05263-4

Wiederkehr, P., Siebrecht, T., & Potthoff, N. (2018). Stochastic modeling of grain wear in geometric physically-based grinding simulations. CIRP Annals, 67(1), 325–328. https://doi.org/10.1016/J.CIRP.2018.04.089

Yang, J., Li, S., Wang, Z., et al. (2020). Using deep learning to detect defects in manufacturing: A comprehensive survey and current challenges. Materials, 13(24), 5755. https://doi.org/10.3390/MA13245755

Ye, Z., Liu, C., Tian, W., et al. (2020). A deep learning approach for the identification of small process shifts in additive manufacturing using 3D point clouds. Procedia Manufacturing, 48, 770–775. https://doi.org/10.1016/J.PROMFG.2020.05.112

Zhang, M., Ma, M., Zhang, J., et al. (2022). A novel spatio-temporal trajectory data-driven development approach for autonomous vehicles. Frontiers of Earth Science, 15(3), 620–630. https://doi.org/10.1007/S11707-021-0938-1

Zheng, H., & Lin, J. (2019). A deep learning approach for high speed machining tool wear monitoring. In Proceedings of the 3rd international conference on robotics and automation sciences (ICRAS), pp. 63–68. https://doi.org/10.1109/ICRAS.2019.8809070

Zhong, R. Y., Xu, X., Klotz, E., et al. (2017). Intelligent manufacturing in the context of industry 4.0: A review. Engineering, 3(5), 616–630. https://doi.org/10.1016/J.ENG.2017.05.015

Funding

Open Access funding provided thanks to the CRUE-CSIC agreement with Springer Nature. This project has received funding from the European Union’s Horizon 2020 research and innovation programme under the Marie Skłodowska-Curie grant agreement No 860843.

Ethics declarations

Competing Interests

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Zoumpekas, T., Leutgeb, A., Puig, A. et al. Machining tool identification utilizing temporal 3D point clouds. J Intell Manuf 35, 1221–1232 (2024). https://doi.org/10.1007/s10845-023-02093-5

DOI: https://doi.org/10.1007/s10845-023-02093-5