Contents

Evaluating the Best Image Annotation Tools for Computer Vision Projects

Most Popular Image Annotation Tools

Encord

Scale

CVAT (Computer Vision Annotation Tool)

Label Studio

Labelbox

Playment

Appen

Dataloop

SuperAnnotate

V7 Labs

Hive

COCO Annotator

Make Sense

VGG Image Annotator

LabelMe

Amazon SageMaker Ground Truth

VOTT

Key Takeaways from Using Image Annotation Tools for Computer Vision Projects

Encord Blog

Best Image Annotation Tools for Computer Vision [Updated 2024]

Power your AI models with the right data

Automate your data curation, annotation and label validation workflows.

4.8/5

Contents

Evaluating the Best Image Annotation Tools for Computer Vision Projects

Most Popular Image Annotation Tools

Encord

Scale

CVAT (Computer Vision Annotation Tool)

Label Studio

Labelbox

Playment

Appen

Dataloop

SuperAnnotate

V7 Labs

Hive

COCO Annotator

Make Sense

VGG Image Annotator

LabelMe

Amazon SageMaker Ground Truth

VOTT

Key Takeaways from Using Image Annotation Tools for Computer Vision Projects

Written by

Nikolaj Buhl

TLDR: We will outline the most popular image annotation tools in 2024. Compare features and pricing to choose the best image annotation tool for your use case.

It’s 2024—annotating images is still one of the most time-consuming steps in bringing a computer vision project to market. To help you out, we put together a list of the most popular image labeling tools out there.

Whether you are:

- A computer vision team building unmanned drones with your own in-house annotation tool.

- A team of data scientists working on an autonomous driving project looking for large-scale labeling services.

- Or a data operations team working in healthcare looking for the right platform for your radiologists to accurately label CT scans.

This guide will help you compare the top AI annotation tools and find the right one for you.

We will compare each based on key factors - including image annotation service, support for different data types and use cases, QA/QC capabilities, security and data privacy, integration with the machine learning pipeline, and customer support.

But first, let's explore the process of selecting an image annotation tool from the available providers.

Choosing the right image annotation tool is a critical decision that can significantly impact the quality and efficiency of the annotation process. To make an informed choice, it's essential to consider several factors and evaluate the suitability of an image annotation tool for specific needs.

Evaluating the Best Image Annotation Tools for Computer Vision Projects

Selecting the perfect image annotation tool is like choosing the perfect brush for your painting.

Different projects require specific annotation needs that dictate how downstream components. When evaluating an annotation tool that fits your project specifications, there are a few key factors you have to consider. In this section, we will explore those key factors and practical considerations to help you navigate the selection process and find the most fitting AI annotation tool for your computer vision applications.

- Annotation Types: An effective labeling tool should support various annotation types, such as bounding boxes (ideal for object localization), polygons (useful for detailed object outlines), keypoints (for pose estimation), and semantic segmentation (for scene understanding). The tool must be adaptable to different annotation requirements, allowing users to annotate images with precision and specificity based on the task at hand.

- User Interface (UI) and User Experience (UX): The user interface plays a crucial role in the efficiency and accuracy of the annotation process. A good annotation tool should have an intuitive interface that is easy to navigate, reducing the learning curve for users. Clear instructions, user-friendly controls, and efficient workflows contribute to a smoother annotation experience.

- Scalability: Consider the tool's ability to scale with the growing volume of data. A tool that efficiently handles large datasets and multiple annotators is crucial for projects with evolving requirements.

- Automation and AI Integration: Look for image labeling tools that offer automation features, such as automatic annotation tools or features, to accelerate the annotation process. Integrating an AI photo editor into the annotation process can significantly refine the accuracy of annotations, especially in complex imaging scenarios, thereby enhancing both the speed and quality of data labeling. Integration with artificial intelligence (AI) algorithms can further enhance efficiency by automating repetitive tasks, reducing manual effort, and improving annotation accuracy.

- Collaboration and Workflow Management: Assess the data annotation tool's collaboration features, including version control, user roles, and workflow management. Collaboration tools are essential for teams working on complex annotation projects.

- Data Security and Privacy: Ensure that the tool adheres to data security and privacy standards like GDPR. Evaluate encryption methods, access controls, and policies regarding the handling of sensitive data.

- Pricing: Consider various pricing models, such as per-user, per-project, or subscription models. Also factor in scalability costs, and potential additional fees, ensuring transparency in the pricing structure.

Once you've identified which factors are most important for you to evaluate image annotating tools, the next step is understanding how to assess their suitability for your specific use case.

Most Popular Image Annotation Tools

Let's compare the features offered by the best image annotation companies such as Encord, Scale AI, Label Studio, SuperAnnotate, CVAT, and Amazon SageMaker Ground Truth, and understand how they assist in annotating images.

This article discusses the top 17 image annotation tools in 2024 to help you choose the right image annotation software for your use case.

- Encord

- Scale

- CVAT

- Label Studio

- Labelbox

- Playment

- Appen

- Dataloop

- SuperAnnotate

- V7 Labs

- Hive

- COCO Annotator

- Make Sense

- VGG Image Annotator

- LabelMe

- Amazon SageMaker Ground Truth

- VOTT

Encord

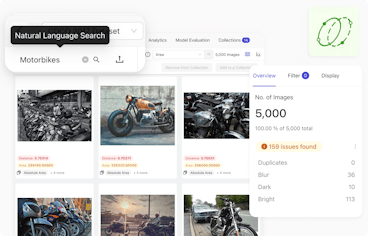

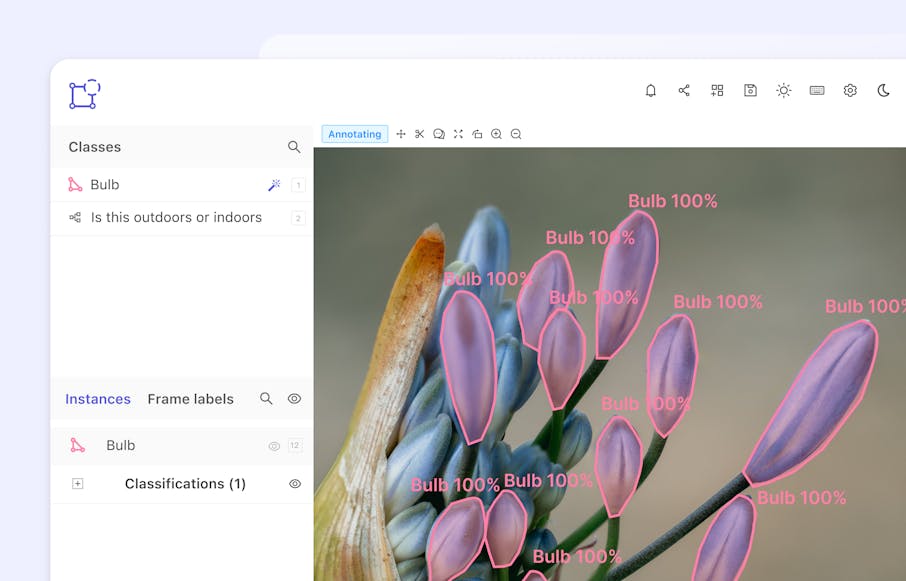

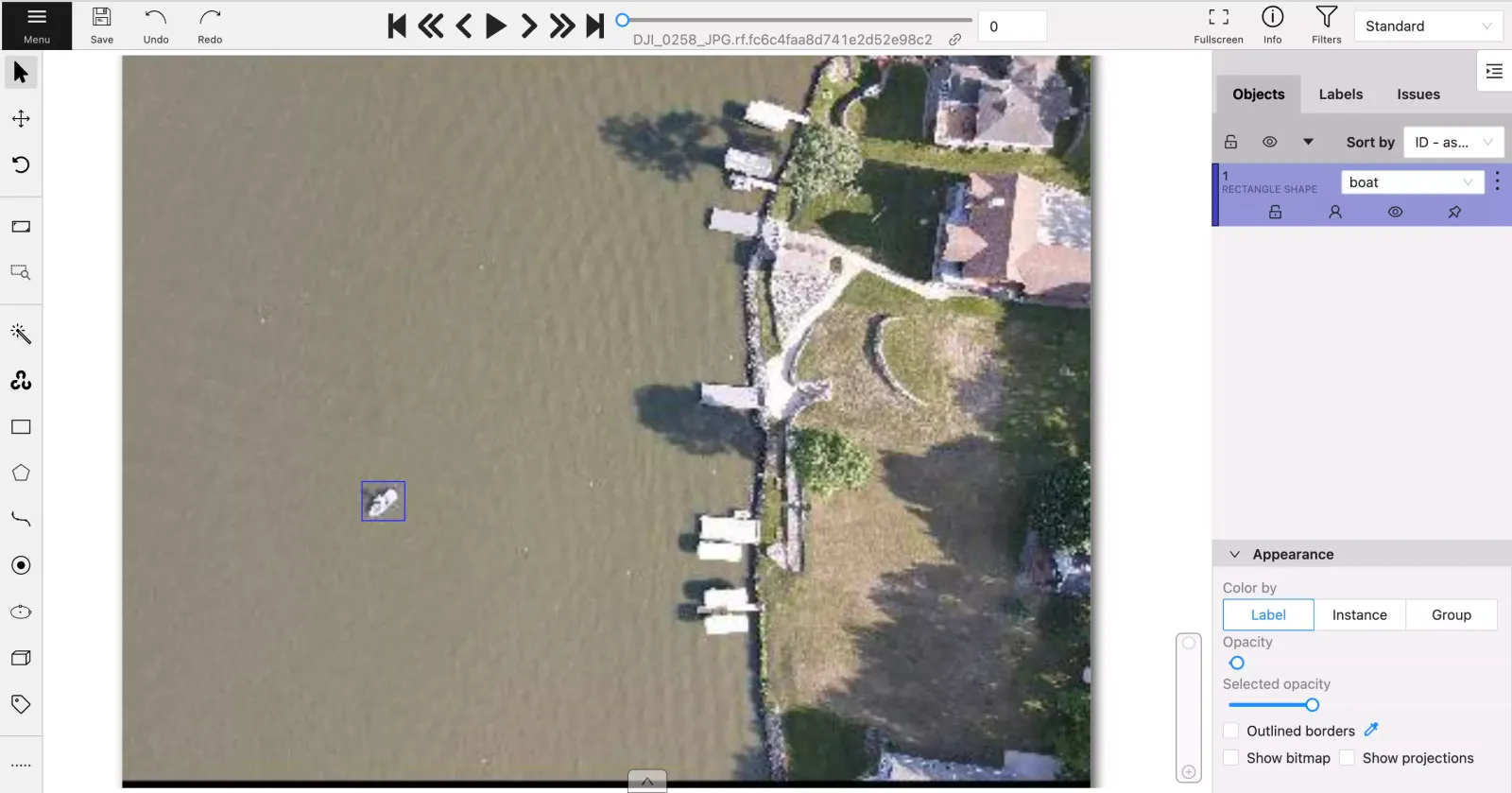

Encord is an automated annotation platform for AI-assisted image annotation, video annotation, and dataset management.

Key Features

- Data Management: Compile your raw data into curated datasets, organize datasets into folders, and send datasets for labeling.

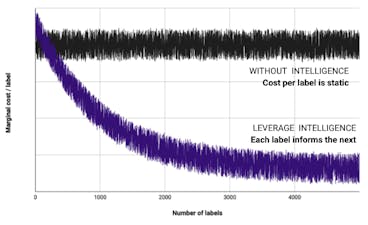

- AI-assisted Labeling: Automate 97% of your annotations with 99% accuracy using auto-annotation features powered by Meta's Segment Anything Model or GPT-4’s LLaVA.

- Collaboration: Integrate human-in-the-loop seamlessly with customized Workflows - create workflows with the no-code drag and drop builder to fit your data ops & ML pipelines.

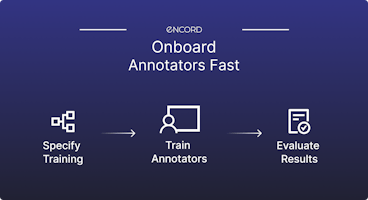

- Quality Assurance: Robust annotator management & QA workflows to track annotator performance and increase label quality.

- Integrated Data Labeling Services for all Industries: outsource your labeling tasks to an expert workforce of vetted, trained and specialized annotators to help you scale.

- Video Labeling Tool: provides the same support for video annotation. One of the leading video annotation tools with positive customer reviews, providing automated video annotations without frame rate errors.

- Robust Security Functionality: label audit trails, encryption, FDA, CE Compliance, and HIPAA compliance.

- Integrations: Advanced Python SDK and API access (+ easy export into JSON and COCO formats).

Best for

- Commercial teams: Teams translating from an in-house solution or open-source tool that require a scalable annotation workflow with a robust, secure, and collaborative enterprise-grade platform.

- Complex or unique use case: For teams that require advanced annotation tool and functionality. It includes, complex nested ontologies or rendering native DICOM formats.

Pricing

- Simple per-user pricing – no need to track annotation hours, label consumption or data usage.

Curious? Try it out

Scale

Scale AI, now Scale, is a data and labeling services platform that supports computer vision use cases but specializes in RLHF, user experience optimization, large language models, and synthetic data.

Scale AI's Image Annotation Tool.

Key Features

- Customizable Workflows: Offers customizable labeling workflows tailored to specific project requirements and use cases.

- Data labeling services: Provides high-quality data labeling services for various data types, including images, text, audio, and video.

- Scalability: Capable of handling large-scale annotation projects and accommodating growing datasets and annotation needs.

Best for

- Teams Looking for a Labeling Tool: Scale is a very popular option for data labeling services.

- Teams Looking for Annotation Tools for Autonomous Vehicle Vision: Scale is one of the earliest platforms on the market to support 3D Sensor Fusion annotation for RADAR and LiDAR use cases.

- Teams Looking for Medical Imaging Annotation Tools: Platforms like Scale will usually not support DICOM or NIfTI data types nor allow companies to work with their data annotators on the platform.

Pricing

- On a per-image basis

CVAT (Computer Vision Annotation Tool)

CVAT is an open source image annotation tool that is a web-based annotation toolkit, built by Intel. For image labeling, CVAT supports four types of annotations: points, polygons, bounding boxes, and polylines, as well as a subset of computer vision tasks: image segmentation, object detection, and image classification. In 2022, CVAT’s data, content, and GitHub repository were migrated over to OpenCV, where CVAT continues to be open-source. Furthermore, CVAT can also be utilized to annotate QR codes within images, facilitating the integration of QR code recognition into computer vision pipelines and applications.

Key Features

- Open-source: Easy and free to get started labeling images.

- Manual Annotation Tools: Supports a wide range of annotation types including bounding boxes, polygons, polylines, points, and cuboids, catering to diverse annotation needs.

- Multi-platform Compatibility: Works on various operating systems such as Windows, Linux, and macOS, providing flexibility for users.

- Export Formats: CVAT offers support for various data formats including JSON, COCO, and XML-based like Pascal VOC, ensuring annotation compatibility with diverse tools and platforms.

Best for

- Students, researchers, and academics testing the waters with image annotation (perhaps with a few images or a small dataset).

- Not preferable for commercial teams as it lacks scalability, collaborative features, and robust security.

Pricing

- Free

💡 More insights on image labeling with CVAT:

For a team looking for free image annotation tools, CVAT is one of the most popular open-source tools in the space, with over 1 million downloads since 2021. Other popular free image annotation alternatives to CVAT are 3D Slicer, Labelimg, VoTT (Visual Object Tagging Tool - developed by Microsoft), VIA (VGG Image Annotator), LabelMe, and Label Studio.

If data security is a requirement for your annotation project… Commercial labeling tools will most likely be a better fit — key security features like audit trails, encryption, SSO, and generally-required vendor certifications (like SOC2, HIPAA, FDA, and GDPR) are usually not available in open-source tools.

Label Studio

Label Studio is another popular open source data labeling platform. It provides a versatile platform for annotating various data types, including images, text, audio, and video. Label Studio supports collaborative labeling, custom labeling interfaces, and integration with machine learning pipelines for data annotation tasks.

Label Studio Image Annotation Tool.

Key Features

- Customizable Labeling Interfaces: Flexible configuration for tailored annotation interfaces to specific tasks.

- Collaboration Tools: Real-time annotation and project sharing capabilities for seamless collaboration among annotators.

- Extensible: Easily connect to cloud object storage and label data there directly

- Export Formats: Label Studio supports multiple data formats including JSON, CSV, TSV, and VOC XML like Pascal VOC, facilitating integration and annotation from diverse sources for machine learning tasks.

Best for

- Data scientists, machine learning engineers, and researchers or teams requiring versatile data labeling for images.

- Not suitable for teams with limited technical expertise or resources for managing an open source tool

Price

- Free with enterprise plan available

Labelbox

Labelbox is a US-based data annotation platform founded in 2017. Like most of the other platforms mentioned in this guide, Labelbox offers both an image labeling platform, as well as labeling services.

Key Features

- Data Management: QA workflows and data annotator performance tracking.

- Customizable Labeling Interface: 3rd party labeling services through Labelbox Boost.

- Automation: Integration with AI models for automatic data labeling to accelerate the annotation process.

- Annotation Type: Support for multiple data types beyond images, especially text.

Best for

- Teams looking for a platform to quickly annotate documents and text.

- Teams carrying out annotation projects that are use-case specific.

As generalist tools, platforms like Labelbox are great at handling a broad variety of data types. If you’re working on a unique use-case-specific annotation project (like scans in DICOM formats or high-resolution images that require pixel-perfect annotations), other commercial AI labeling tools will be a better fit: check out our blog exploring Best DICOM Labeling Tools.

Pricing

- Varies based on the volume of data, percent of the total volume needing to be labeled, number of seats, number of projects, and percent of data used in model training.

- For larger commercial teams, this pricing may get expensive as your project scales.

Playment

Playment is a fully-managed data annotation platform. The workforce labeling company was acquired by Telus in 2021 and provides computer vision teams with training data for various use cases, supported by manual labelers and a machine learning platform.

Playment Image Annotation Tool

Key Features

- Data Labeling Services: Provides high-quality data labeling services for various data types including images, videos, text, and sensor data.

- Support: Global workforces of contractors and data labelers.

- Scalability: Capable of handling large-scale annotation projects and accommodating growing datasets and annotation needs.

- Audio Labeling Tool: Speech recognition training platform (handles all data types across 500+ languages and dialects).

Best for

- Teams looking for a fully managed solution who do not need visibility into the process.

Pricing

- Enterprise plan

Appen

Appen is a data labeling services platform founded in 1996, making it one of the first and oldest solutions in the market. The company offers data labeling services for a wide range of industries and in 2019, acquired Figure Eight to build out its software capabilities and help businesses also train and improve their computer vision models.

Key Features

- Data Labeling Services: Support for multiple annotation types (bounding boxes, polygons, and image segmentation).

- Data Collection: Data sourcing (pre-labeled datasets), data preparation, and real-world model evaluation.

- Natural Language Processing: Supports natural language processing tasks such as sentiment analysis, entity recognition, and text classification.

- Image and Video Analysis: Analyzes images and videos for tasks such as object detection, image classification, and video segmentation.

Best for

- Teams looking for image data sourcing and collection alongside annotation services.

Pricing

- Enterprise plan

Dataloop

Dataloop is an Israel-based data labeling platform that provides a comprehensive solution for data Dataloop is an Israel-based data labeling platform that provides a comprehensive solution for data management and annotation projects. The tool offers data labeling capabilities across images, text, audio, and video annotation, helping businesses train and improve their machine learning models.

Dataloop Image Annotation Tool

Key Features

- Data Annotation: Features for image annotation tasks, including classification, detection, and semantic segmentation.

- Video Annotation Tool: Support for video annotations.

- Collaboration Tool: Features for real-time collaboration among annotators, project sharing, and version control for efficient teamwork.

- Data Management: Offers data management capabilities including data versioning, tracking, and organization for streamlined workflows.

Best for

- Teams looking for a generalist annotation tool for various data annotation needs.

- Teams carrying out specific image and video annotation projects that are use-case specific.

- As generalist tools, platforms like Dataloop are built to support a wide variety of simple use cases, so other commercial platforms are a better fit if you’re trying to label use-case-specific annotation projects (like high-resolution images that require pixel-perfect annotations in satellite imaging or DICOM files for medical teams).

Pricing

- Free trial and an enterprise plan.

SuperAnnotate

SuperAnnotate provides enterprise solutions for image and video annotation, catering primarily to the needs of the computer vision community. It provides powerful annotation tools and features tailored for machine learning and AI applications, offering efficient labeling solutions to enhance model training and accuracy.

SuperAnnotate - Image Annotation Tool

Key Features

- Multi-Data Type Support: Versatile annotation tool for image, video, text, and audio.

- AI Assistance: Integrates AI-assisted annotation to accelerate the annotation process and improve efficiency.

- Customization: Provides customizable annotation interfaces and workflows to tailor annotation tasks according to specific project requirements.

- Integration: Seamlessly integrates with machine learning pipelines and workflows for efficient model training and deployment.

- Scalability: Capable of handling large-scale annotation projects and accommodating growing datasets and annotation needs.

- Export Formats: SuperAnnotate supports multiple data formats, including popular ones like JSON, COCO, and Pascal VOC.

Best for

- Larger teams working on various machine learning solutions looking for a versatile annotation tool.

Pricing

- Free for early stage startups and academics for team size up to 3.

- Enterprise plan

V7 Labs

V7 is a UK-based data annotation platform founded in 2018. The company enables teams to annotate training data, support the human-in-the-loop processes, and also connect with annotation services. V7 offers annotation of a wide range of data types alongside image annotation tooling, including documents and videos.

Key Features

- Collaboration Capabilities: Project management and automation workflow functionality, with real-time collaboration and tagging.

- Data Labeling Services: Provides labeling services for images and videos.

- AI Assistance: Model-assisted annotation of multiple annotation types (segmentation, detection, and more).

Best for

- Students or teams looking for a generalist platform to easily annotate different data types in one place (like documents, images, and short videos).

- Limited functionalities for use-case specific annotations.

Pricing

- Various options, including academic, business, and pro.

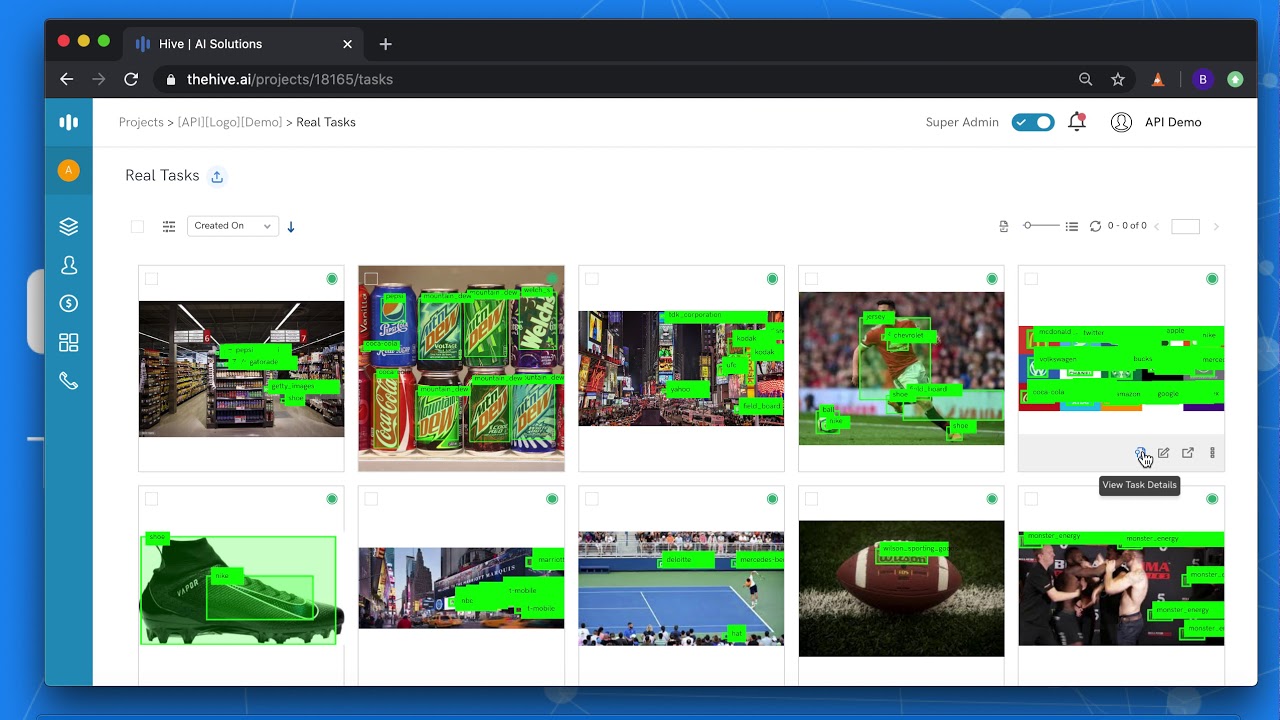

Hive

Hive was founded in 2013 and provides cloud-based AI solutions for companies wanting to label content across a wide range of data types, including images, video, audio, text, and more.

Key Features

- Image Annotation Tool: Offers annotation tools and workflows for labeling images along with support for unique image annotation use cases (ad targeting, semi-automated logo detection).

- Ease of Access: Flexible access to model predictions with a single API call.

- Integration: Seamlessly integrates with machine learning pipelines and workflows for AI model training and deployment.

Best for

- Teams labeling images and other data types for the purpose of content moderation.

Pricing

- Enterprise plan

COCO Annotator

COCO Annotator is a web-based image annotation tool, crafted by Justin Brooks under the MIT license. Specifically designed to streamline the process of labeling images for object detection, localization, and keypoints detection models, this tool offers a range of features that cater to the diverse needs of machine learning practitioners and researchers.

COCO Annotator - Image Annotation Tool

Key Features

- Image Annotation: Supports annotation of images for object detection, instance segmentation, keypoint detection, and captioning tasks.

- Export Formats: To facilitate large-scale object detection, the tool exports and stores annotations in the COCO format.

- Automations: The tool makes annotating an image easier by incorporating semi-trained models. Additionally, it provides access to advanced selection tools, including the MaskRCNN, Magic Wand and DEXTR.

Best For

- ML Research Teams: COCO Annotator is a good choice for ML researchers, preferable for image annotation for tasks like object detection and keypoints detection.

Price

- Free

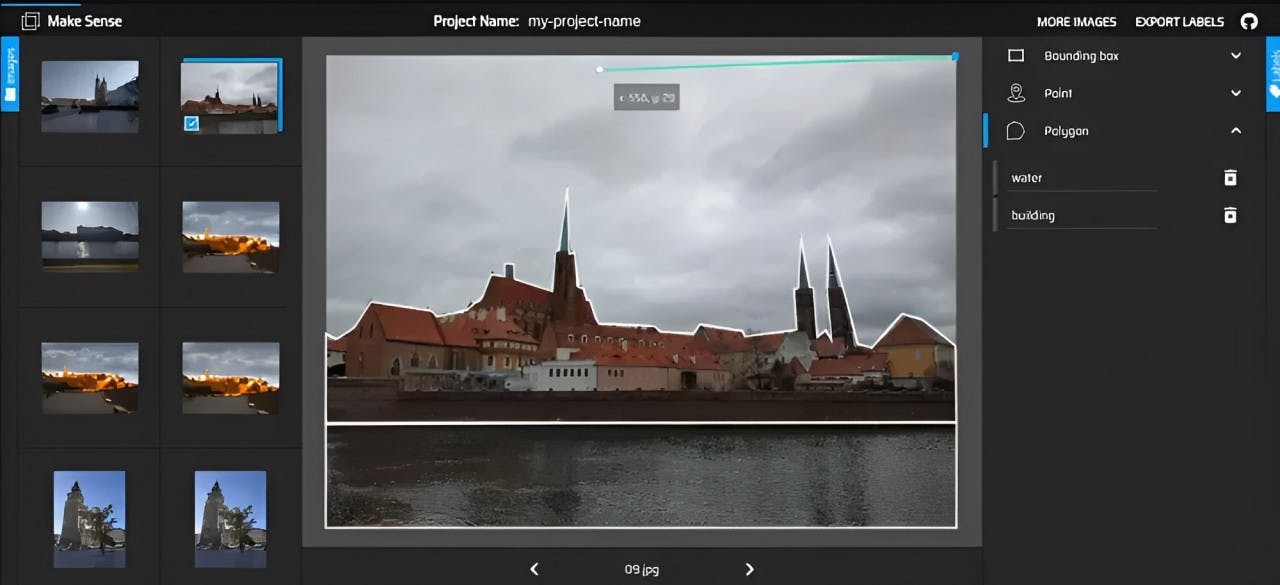

Make Sense

Make Sense AI is a user-friendly and open-source annotation tool, available under the GPLv3 license. Accessible through a web browser without the need for advanced installations, this tool simplifies the annotation process for various image types.

Make Sense - Image Annotation Tool

Key Features

- Open Sourced: Make Sense AI stands out as an open-source tool, freely available under the GPLv3 license, fostering collaboration and community engagement for its ongoing development.

- Accessibility: It ensures web-based accessibility, operating seamlessly in a web browser without complex installations, promoting ease of use across various devices.

- Export Formats: It facilitates exporting annotations in multiple formats (YOLO, VOC XML like Pascal VOC, VGG JSON, and CSV), ensuring compatibility with diverse machine learning algorithms and seamless integration into various workflows.

Best For

- Small teams seeking an efficient solution to annotate an image.

Price

- Free

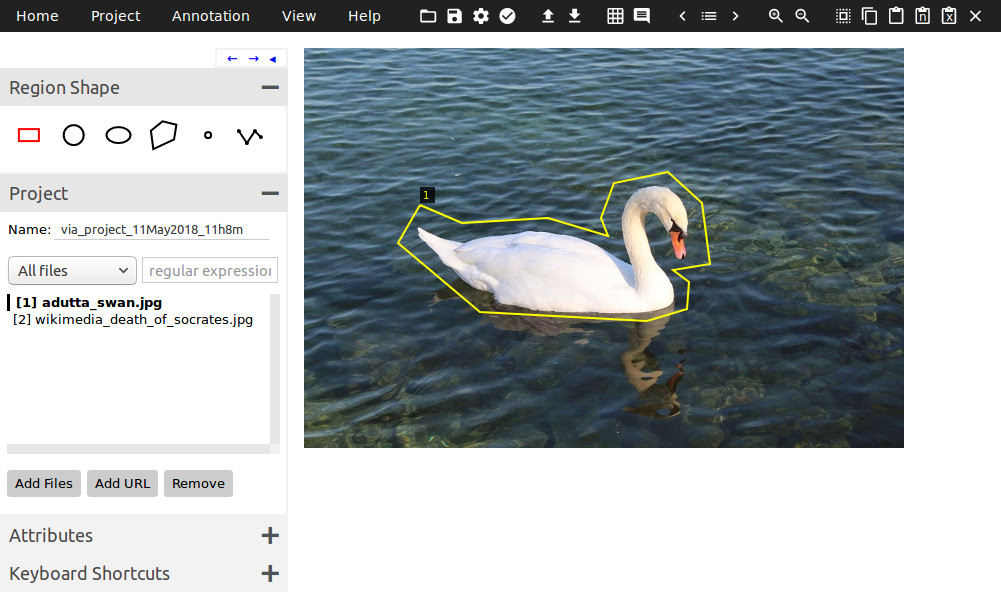

VGG Image Annotator

VGG Image Annotator (VIA) is a versatile open-source tool crafted by the Visual Geometry Group (VGG) for the manual annotation of both image and video data. Released under the permissive BSD-2 clause license, VIA serves the needs of both academic and commercial users, offering a lightweight and accessible solution for annotation tasks.

VGG Image Annotator - Image Annotation Tool

Key Features

- Lightweight and User-Friendly: VIA is a lightweight, self-contained annotation tool, utilizing HTML, Javascript, and CSS without external libraries, enabling offline usage in modern web browsers without setup or installation.

- Offline Capability: The tool is designed to be used offline, providing a full application experience within a single HTML file of size less than 200 KB.

- Multi-User Collaboration: Facilitates collaboration among multiple annotators with features such as project sharing, real-time annotation, and version control.

Best For

- VGG Image Annotator (VIA) is ideal for individuals and small teams involved in projects for academic researchers.

Price

- Free

LabelMe

LabelMe is an open-source web-based tool developed by the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) that allows users to label and annotate images for computer vision research. It provides a user-friendly interface for drawing bounding boxes, polygons, and semantic segmentation masks to label objects within images.

Key Features

- Web-Based: Accessible through a web-based interface, allowing for annotation tasks to be performed in any modern web browser without requiring software installation.

- Customizable Interface: Provides a customizable annotation interface with options to adjust settings, colors, and layout preferences to suit specific project requirements.

Best for

- Academic and research purposes

Pricing

- Free

Amazon SageMaker Ground Truth

Amazon SageMaker Ground Truth is a fully managed data labeling service provided by Amazon Web Services (AWS). It offers a platform for efficiently labeling large datasets to train machine learning models. Ground Truth supports various annotation tasks, including image classification, object detection, semantic segmentation, and more.

Amazon SageMaker Ground Truth - Image Annotation Tool

Key Features

- Managed Service: Fully managed by AWS, eliminating the need for infrastructure setup and management.

- Human-in-the-Loop Labeling: Harnesses the power of human feedback across the ML lifecycle to improve the accuracy and relevancy of models.

- Scalability: Capable of handling large-scale annotation projects and accommodating growing datasets and annotation needs.

- Integration with Amazon SageMaker: Seamlessly integrates with Amazon SageMaker for model training and deployment, providing a streamlined end-to-end machine learning workflow.

Best for

- Teams requiring large-scale data labeling.

Pricing

- Varies based on labeling task and type of data.

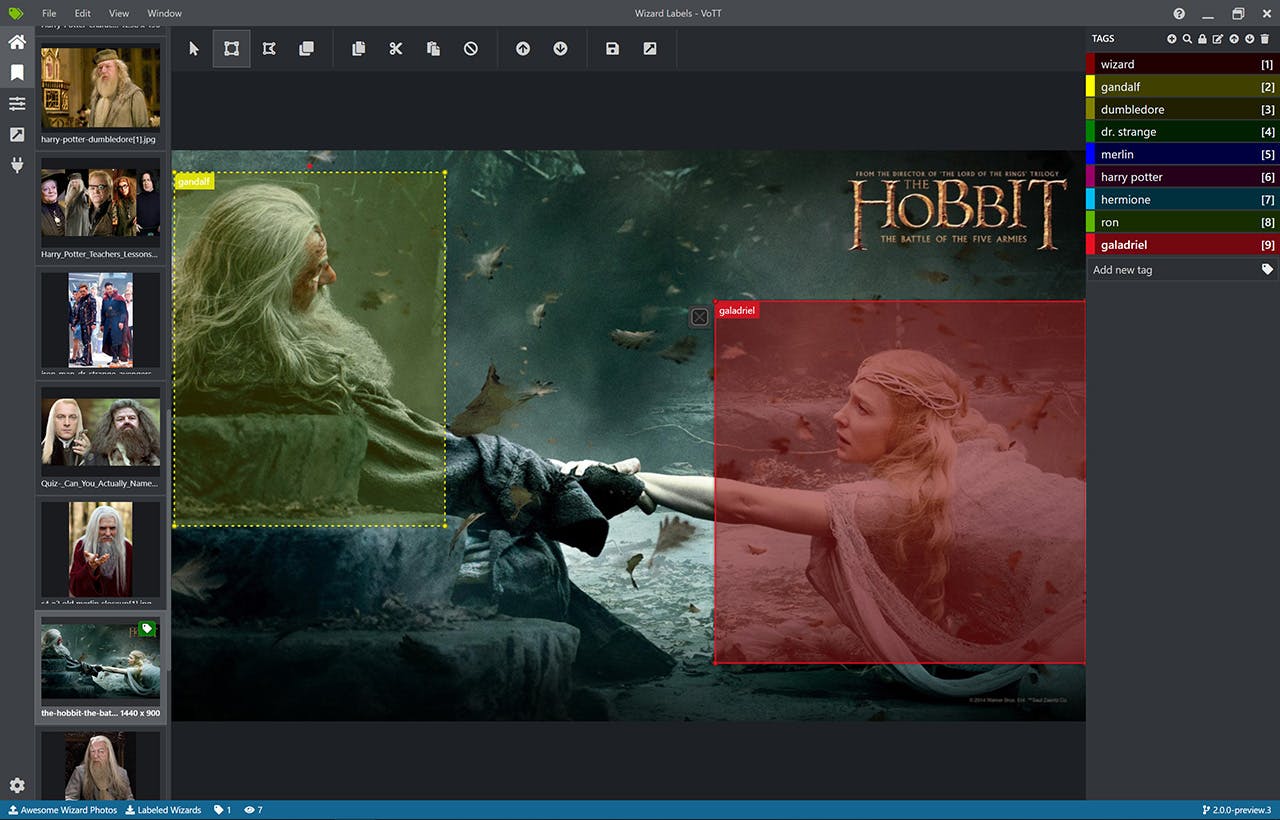

VOTT

VOTT or Visual Object Tagging Tool is an open-source tool developed by Microsoft for annotating images and videos to create training datasets for computer vision models. VOTT provides an intuitive interface for drawing bounding boxes around objects of interest and labeling them with corresponding class names.

Key Features

- Versatile Annotation Tool: Supports a wide range of annotation types including bounding boxes, polygons, polylines, points, and segmentation masks for precise labeling.

- Video Annotation: Enables annotation of videos frame by frame, with support for object tracking and interpolation to streamline the annotation process.

- Multi-Platform Compatibility: Works across various operating systems such as Windows, Linux, and macOS, ensuring flexibility for users.

Best for

- Teams requiring lightweight and customizable annotation tool for object detection.

Pricing

- Free

Key Takeaways from Using Image Annotation Tools for Computer Vision Projects

There you have it!

The 17 Best Image Annotation Tools for computer vision in 2024.

For further reading, you might also want to check out a few 2024 honorable mentions, both paid and free annotation tools:

- Supervisely - commercial data labeling platform praised for its quality control functionality and basic interpolation feature.

- Labelimg - Labelimg is an open source multi-modal data annotation tool now part of Label Studio.

- MarkUp - MarkUp image is a free web annotation tool to annotate an image or a PDF.

Power your AI models with the right data

Automate your data curation, annotation and label validation workflows.

4.8/5

Written by

Nikolaj Buhl

- There are various options, including open-source, low-code or no-code, and active learning annotation solutions like Encord. Encord is the leading annotation tool to build better models, faster. Accelerate the creation of training data with pixel-perfect AI-assisted labeling to develop high quality training data and build product-ready models up to 10x faster.

- Image annotation involves several stages. Image and image-based datasets need to be sourced (either bought or downloaded from open-source databases), cleaned, and uploaded into annotation tools and software.

- Automatically annotate images with active learning annotation platforms, like Encord. Traditional computer vision models require extensive data for robustness and generalizability. You can leverage the power of the Segment Anything Model to complete single one-click annotations and in just minutes, train Encord’s micro-models starting from a small set of labels.

- Automatically annotate images with active learning annotation platforms, like Encord. Traditional computer vision models require extensive data for robustness and generalizability. You can leverage the power of the Segment Anything Model to complete single one-click annotations and in just minutes, train Encord’s micro-models starting from a small set of labels.

- You should look for versatility in annotation types (bounding boxes, polygons), user-friendly interfaces, collaboration support, scalability, automation features, and compatibility with various annotation formats for seamless integration into your workflow.

- Model-assisted labeling involves using pre-trained models to assist in the annotation process, improving efficiency by automating certain tasks and reducing manual effort.

- Encord offers a complimentary trial, followed by straightforward per-user pricing. Consider notable options like CVAT, MakeSense, and VGG Annotator, known for being open-source, web-based, supporting diverse annotation types, and offering export flexibility in various formats.

- Choose your preferred annotator, upload the image, select the annotation type (bounding box, polygon), annotate, and export labels in your chosen format. Tools like Encord offer automated annotation features, streamlining the process for added convenience.

- Auto annotation features, like the one by Encord Annotator, assist in the annotation process by leveraging semi-trained models to automatically suggest annotations, enhancing efficiency in various deep learning tasks like object detection, instance segmentation, object recognition, and localization.

- Image annotation tools facilitate collaboration by enabling multiple users to annotate images simultaneously, fostering real-time communication and feedback. They streamline the process of labeling objects within images, enhancing efficiency and accuracy in object detection and classification tasks.

Related blogs

Automating Foundation Models with Segment Anything Model (SAM) Using Encord Annotate

At Encord, our mission is to accelerate the development and democratization of quality AI and computer vision applications by providing tools which enable actionable insights across your data, labels and models. Today, we’re bringing that one step further announcing our product launch integrating Meta’s Segment Anything Model (SAM) into the Encord Annotate platform. Watch the video below to learn more about SAM and its integration with Encord. SAM, or the Segment Anything Model, is Meta’s new zero-shot foundation model in computer vision, a cornerstone of their Segment Anything project. As a zero-shot foundation model, and as its name suggest, SAM is immediately capable of "segmenting anything" including image data it hasn't seen before, from a simple combination of keypoints and, if you wish, a delimiting bounding box. For all the details of the inner workings and greater significance of SAM, check out our SAM explainer. The release last week set the internet ablaze with possibilities, those both obvious and those yet to come. We’re here to tell you about the possibilities available now. Integrating SAM with Encord Annotate pairs the power of SAM to segment anything with Encord’s powerful ontologies, interactive editor, and comprehensive media support. Encord supports using SAM to annotate images and videos, as well as speciality data types such as satellite and DICOM data. DICOM support includes X-ray, CT, and MRI among others — with no additional effort from you. Our powerful labeling tool gives you an interactive editor experience allowing you to define regions to include and exclude, producing both bounding boxes and segmentations to your exact specification. Of course, integrating with Encord means you can take advantage of our annotation workflows as well — ensuring you get all the benefits of a collaborative annotation and review platform powered by AI-assisted labeling and our annotator training module. We’re very excited to bring SAM to Encord to support your AI initiatives - get started here. You can also check out our tutorial on how to fine-tune Segment Anything here.

Apr 11 2023

8 M

Top 8 Video Annotation Tools for Computer Vision

Are you looking for a video annotation tool for your computer vision project? Look no further! We've compiled a list of the top eight best video annotation tools, complete with their use cases, benefits, key features, and pricing. Deciding on the right video annotation toolkit for your needs depends on several factors, including whether you have vast amounts of unlabeled data and whether manual annotation is too time-consuming and expensive. With a powerful video annotation tool, you can automate and accelerate the process. Our list is designed for data ops teams looking to manage in-house or outsourced annotators, CTOs hoping to reduce the cost of manual annotation, and data scientists and ML engineers in search of a solution to automate annotations and labeling while identifying potential edge cases and outliers. Working with images? Check out our Best Image Annotation Tools blog instead! Top 8 Video Annotation Tools for Computer Vision Encord LabelMe CVAT SuperAnnotate Dataloop Supervisely Scale Img Lab Let’s dive in ... Encord Encord's collaborative video annotation platform helps you label video training data more quickly, build active learning pipelines, create better-quality datasets and accelerate the development of your computer vision models. Encord's suite of features and toolkits includes an automated video annotation platform that will help you 6x the speed and efficiency of model development. Encord is a powerful solution for teams that: Need a native-enabled video annotation platform with features that make it easy to automate the end-to-end management of data labeling, QA workflows, and automated AI-powered annotation Want to accelerate their computer vision model development, making video annotation 6x faster than manual labeling. Benefits & key features: Encord is a state-of-the-art AI-assisted labeling and workflow tooling platform powered by micro-models, ideal for video annotation, labeling, QA workflows, and training computer vision models Built for computer vision, with native support for numerous annotation types, such as bounding box, polygon, polyline, instance segmentation, keypoints, classification, and much more As a computer vision toolkit, it supports a wide-range of native and visual modalities for video annotation and labeling, including native video file format support (e.g., full-length videos, and numerous file formats, including MP4 and WebM) Automated, AI-powered object tracking means your annotation teams can annotate videos 6x faster than manual processes Assess and rank the quality of your video-based datasets and labels against pre-defined or custom metrics, including brightness, annotation duplicates, occlusions in video or image sequences, frame object density, and numerous others Evaluate training datasets more effectively using a trained model and imported model predictions with acquisition functions such as entropy, least confidence, margin, and variance with pre-built implementations Manage annotators collaboratively and at scale with customizable annotator and data management dashboards Best for: ML, data ops, and annotation teams looking for a video annotation tool that will accelerate model development. Data science and operations teams that need a solution for collaborative end-to-end management of outsourced video annotation work. Pricing: Start with a free trial or contact sales for enterprise plans. Further reading: The Complete Guide to Image Annotation for Computer Vision 4 Ways to Debug Computer Vision Models [Step By Step Explainer] Closing the AI Production Gap with Encord Active Active Learning in Machine Learning: A Comprehensive Guide LabelMe LabelMe is an open-source online annotation tool developed by the MIT Computer Science and Artificial Intelligence Laboratory. It includes the downloadable source code, a toolbox, an open-source version for 3D images, and image datasets you can train computer vision models on. LabelMe Benefits & key features: LabelMe includes a dataset you can use to train models on, and you can use the LabelMe Matlab toolbox to annotate and label them (here’s the Github repository for this) It also comes with a 3D database with thousands of images of everyday scenes and object categories You can also outsource annotation using Amazon Mechanical Turk, and LabelMe encourages this here. Best for: ML and annotation teams. Although, given the open-source nature of LabelM and the database, it may be more effective and useful for academic rather than commercial computer vision projects. Pricing: Free, open-source. CVAT CVAT (Computer Vision Annotation Tool) started life as an Intel application that they made open-source, thanks to an MIT license. Now it operates as an independent company and foundation, with Intel’s continued support under the OpenCV umbrella. CVAT.org has moved to its new home, at CVAT.ai. CVAT Benefits & key features: CVAT is now part of an extensive OpenCV ecosystem that includes a feauture-rich open-source annotation tool With CVAT, you can annotate images and videos by creating classifications, segmentations, 3D cuboids, and skeleton templates Over 1 million people have downloaded it since CVAT launched, and under OpenCV, there’s an even larger community of users to ask for guidance and support. Best for: Data ops and annotation teams that need access to an open-source tool and ecosystem of ML engineers and annotators. Pricing: Free, open-source. SuperAnnotate SuperAnnotate is a commercial platform and toolkit for creating annotations and labels, managing automated annotation workflows, and even generating images and datasets for computer vision projects. SuperAnnotate Benefits & key features: SuperAnnotate includes a full-service Data Studio, including access to a marketplace of 400+ outsourced annotation teams and service providers It also comes with an ML Studio to manage computer vision and AI-based workflows, including AI data management and curation, MLOps and automation, and quality assurance (QA) It’s designed for numerous use cases, including healthcare, insurance, sports, autonomous driving, and several others. Best for: ML engineers, data scientists, annotation teams, and MLOps professionals in academia, businesses, and enterprise organizations. Pricing: Free for early-stage startups and academic researchers. You would need a demo or contact sales for the Pro and Enterprise plans. Dataloop Dataloop is a "data engine for AI" that includes automated annotation for video datasets, full lifecycle dataset management, and AI-powered model training tools. Dataloop Benefits & key features: Multiple data types supported, including numerous video file formats Automated and AI-powered data labeling End-to-end annotation and QA workflow managment and dashboards for collaborative working Best for: ML, data ops, enterprise AI teams, and managing video annotation workflows with outsourced teams. Pricing: From $85/mo for 150 annotation tool hours. Supervisely Supervisely is a "Unified OS enterprise-grade platform for computer vision" that includes video annotation tools and features. Supervisely Benefits & key features: Native video file support, so that you don't need to cut them into segments or images Automated multi-track timelines within videos Built-in object tracking and segments tagging tools, and numerous other features for video annotation, QA, collaborative working, and computer vision model development Best for: ML, data ops, and AI teams in Fortune 500 companies and computer vision research teams. Pricing: 30-day free trial, with custom plans after signing-up for a demo. Scale Scale is positioned as the AI data labeling and project/workflow management platform for “generative AI companies, US government agencies, enterprise organizations, and startups.” Building the best AI, ML, and CV models means accessing the “best data,” and for that reason, it comes with tools and solutions such as the Scale Data Engine and Generative AI Platform. Scale, an enterprise-grade data engine and generative AI platform Benefits & key features: A Data Engine to unlock data organizations already have or can tap into vast public and open-source datasets Tools to create synthetic data (e.g., generative AI features) A full-stack Generative AI platform for AI companies and US government agencies An extensive developers platform for Large Language Model (LLM) applications. Best for: Data scientists and ML engineers in generative AI companies, US government agencies, enterprise organizations, and startups. Pricing: There are two core offerings: Label My Data (priced per-label), and an Enterprise plan that requires a demo to secure a price. Img Lab Img Lab is an open-source image annotation tool to “simplify image labeling/ annotation process with multiple supported formats.” Img Lab Benefits & key features: Img Lab isn’t as feature-rich as most of the tools and platforms on this list. It would need to be integrated with other tools and applications to ensure it could be used effectively for large-scale image annotation projects. Best for: Img Lab seems best equipped for annotators and those who need a quick and easy-to-use open-source annotation tool. Pricing: Free, open-source. How To Pick the Best Video Annotation Tool for Computer Vision Projects? And there we go, the best video annotation tools for computer vision! In this post, we covered Encord, LabelMe, CVAT, SuperAnnotate, Dataloop, Supervisely, Scale, and Img Lab. Each tool and suite of features that are included are applicable to a wide-range of use cases, data types, and project scales. Making the right choice depends on what your computer vision project needs, such as supporting various data modalities and annotation types, active learning strategies, and pricing. When you’ve selected the best annotation tool for your project or AI application will accelerate model development, enhance the quality of your training data, and optimize your data labeling and annotation process.

May 11 2023

4 M

Structured Vs. Unstructured Data: What is the Difference?

Data, often called oil for its resource value, is crucial in machine learning (ML). Machine learning has evolved significantly since its inception in the 1940s thanks to contributions from pioneers like Turing and McCarthy and developments in neural networks and algorithms. This evolution underscores the transition of data from mere information to a driver of growth and innovation. Data can be categorized into structured and unstructured types. Structured data is organized in databases, making it easily searchable. It is also ideal for quantitative analysis due to its organization. This type includes data in rows and columns, such as financial records in spreadsheets or customer information in CRM systems. In contrast, unstructured data forms the bulk of today's data generation and is not confined to a specific format. This includes different forms like images, videos, text, and audio files. They provide valuable insights but also pose analytical challenges. Unstructured data is complex with diverse data structures. It requires advanced AI and ML technologies for effective processing. Understanding data types is crucial because it directly impacts the accuracy and effectiveness of machine learning models. Proper selection and processing of data types enable more precise algorithms and inform innovation and decision-making in AI applications. By the end of this article, readers will gain a comprehensive understanding of the differences between structured and unstructured data and how each type impacts the field of machine learning and data-driven decision-making. Structured Data What is Structured Data? Structured data is organized in a specific format, typically rows and columns, to facilitate processing and analysis by computer systems. This data type adheres to a clear structure defined by a schema or data model. Examples include numerical data, dates, and strings in relational databases like SQL. Structured data can be efficiently indexed and queried, making it ideal for various applications, from business intelligence to data analytics. Sources of Structured Data Structured data sources are diverse and include various systems and platforms where data is methodically organized. Key sources include: Relational Databases (RDBMS): Stores data in a structured format using tables. Examples include MySQL, PostgreSQL, and Oracle. They are widely used for managing large volumes of structured data in enterprises. Customer Relationship Management (CRM) Systems: These platforms manage customer data, interactions, and business information in a structured format, enabling businesses to track and analyze customer activities and trends like gym owners managing their customer data through gym CRM software Online Transaction Processing (OLTP) Systems: They manage transaction-oriented applications. OLTP systems are designed to process high volumes of transactions efficiently and typically structure the data to support quick, reliable transaction processing. Enterprise Resource Planning (ERP) Systems: ERP systems integrate various business processes and manage related datasets within an organization. They store and process the data in a structured format for functions like finance, HR, and supply chain management. Spreadsheets and CSV Files: Common in business and data analysis contexts, spreadsheets and CSV files structure data in rows and columns, making it easy to organize, store, and analyze information. Data Warehouses: These systems are used for reporting and analysis, acting as central repositories of integrated data from one or more sources. Data warehouses store structured data extracted from various operational systems and are used for creating analytical reports. APIs and Web Services: Many modern APIs and web services return data in a structured format, like JSON or XML, which can be easily parsed and integrated into various applications. Internet of Things (IoT) Devices: Many IoT devices generate and transmit data in a structured format, which can be used for monitoring, analysis, and decision-making in various applications, including smart homes, healthcare, and industrial automation. Types of Structured Data Structured data sources are vast, ranging from traditional databases to modern IoT devices, each playing a pivotal role in the data ecosystem. Use Cases of Structured Data SEO Tools: Web developers use structured data to enhance SEO. By embedding microdata tags into the HTML of a webpage, they provide search engines with more context, improving the page's visibility in search results. Machine Learning: Structured data is pivotal in training supervised machine learning algorithms. Its well-defined nature facilitates the creation of labeled datasets that guide machines to learn specific tasks. Data Management: In business intelligence, structured data is essential for managing core data like customer information, financial transactions, and login credentials. Tools like SQL databases, OLAP, and PostgreSQL are commonly employed. ETL Processes: In ETL (Extract, Transform, Load) processes, structured data is extracted from various sources, transformed for consistency, and loaded into a data warehouse for analysis. Advantages of Structured Data Accessibility and Manageability: The well-defined organization of structured data makes it easily accessible and manageable. It simplifies data storage, retrieval, and analysis, particularly for users with varying technical expertise. Data Analysis: Structured data allows for stable and reliable analytics workflows due to its standardized nature. This enables businesses to derive insights and make informed decisions more effectively. Support with Mature Tools: A wide array of mature tools and models are available to process structured data, making it easier for organizations to integrate it into their decision-making processes. Facilitates Data Democratization: The simplicity and accessibility of structured data empower an organization's broader range of professionals to leverage data for decision-making, promoting a data-informed culture. Limitations of Structured Data Limited Scope: Structured data accounts for about 20% of enterprise data, providing a narrow view of business functions. Relying solely on structured data means missing out on insights you could derive from unstructured data. Rigidity: Structured data is often rigid in its format, making it less flexible for various data manipulation and analysis techniques. This can be restrictive when diverse data needs arise. Cost Implications: Structured data is typically stored in relational databases or data warehouses, which can be more expensive than data lakes used for unstructured data storage. Disruption in Workflow: Changes in reporting or analytics requirements can disrupt existing ETL and data warehousing workflows due to the structured nature of the data. While structured data remains essential in many business applications due to its organized format and ease of use, it is necessary to consider its limitations and the potential benefits of integrating unstructured data into the data strategy. The balance between structured and unstructured data handling can provide more comprehensive insights for business growth and decision-making. Unstructured Data What is Unstructured Data? Unstructured data refers to information that does not have a predefined data model or schema. This data type is typically qualitative and includes various formats such as text, video, audio, images, and social media posts. Unlike structured data, which is easy to search and analyze in databases or spreadsheets, unstructured data is more challenging to process and research due to its lack of organization. For example, while the structure of web pages is defined in HTML code, the actual content, which can be text, images, or video, remains unstructured. Sources of Unstructured Data Web Pages: The internet is a vast source of unstructured data. Web pages contain diverse content like text, images, and unstructured videos. Open-Ended Survey Responses: Surveys with open-ended questions generate unstructured data through textual responses. This data provides more nuanced insights compared to structured, multiple-choice survey data. Images, Audio, and Video: Multimedia files are considered unstructured data. Technologies like speech-to-text and facial recognition software analyze these data types. Emails: Emails are a form of semi-structured data where the metadata (like sender, recipient, and date) is structured but the email content remains unstructured. An SPF record checker help companies ensure the authenticity of incoming emails, protecting against phishing attacks. Social Media and Customer Feedback: Social media posts, blogs, product reviews, and customer feedback generate a significant amount of unstructured data. This data includes customer preferences, market trends, and brand perception insights. Types of Unstructured Data Use Cases of Unstructured Data Social Media Monitoring: Social media platforms generate vast unstructured data through posts, comments, and interactions. Businesses utilize machine learning tools to analyze this data, gaining insights into brand perception, customer satisfaction, and market trends. Customer Feedback Analysis: Companies collect feedback from online reviews, surveys, and emails. Analyzing this unstructured data helps understand customer needs, preferences, and areas for improvement. Content Analysis of Webpages: The internet, with its myriad of webpages containing text, images, and videos, is a significant source of unstructured data. Businesses use this data for competitive analysis, market research, and understanding public sentiment. Analysis of Open-Ended Survey Responses: Surveys often include open-ended questions where respondents answer in their own words. Analyzing these responses uncovers nuanced insights that can guide business strategies and product development. Multimedia Analysis: The analysis of images, audio, and video files, though challenging, can reveal crucial information. Advancements in speech-to-text and image recognition make extracting and analyzing data from these sources easier. Advantages of Unstructured Data Unstructured data presents a vast and largely untapped resource for engineers seeking to extract valuable insights and drive innovation. Unlike structured data, which adheres to a predefined schema, unstructured data possesses inherent advantages that can unlock new possibilities across various disciplines. Richer Insights: Unstructured data captures the real-world nuance and complexity often missing in structured datasets. This includes text, audio, video, and images, allowing engineers to analyze human sentiment, behavior, and interactions in their natural forms. Increased Flexibility: Unstructured data's lack of rigid schema allows for greater flexibility and adaptability. ML and Data Engineers can explore diverse data sources without being constrained by predefined formats. Enhanced Innovation: Unstructured data fuels the engine of innovation by providing ML models with a broader and deeper understanding of the world around them. Scalability and Cost-Effectiveness: With the increasing affordability of data storage and processing technologies, handling vast amounts of unstructured data becomes more feasible. Competitive Advantage: In today's data-driven world, embracing the power of unstructured data is critical for gaining a competitive advantage. However, it's essential to acknowledge that unstructured data also presents inherent challenges despite its advantages. Limitations of Unstructured Data The inherent lack of structure in unstructured data presents several limitations that you must consider. Difficulty in Processing: Due to their diverse formats and need for standardized schema, analyzing unstructured data requires specialized tools and techniques such as Natural Language Processing (NLP) algorithms, text analytics software, and machine learning models. Data Bias: Unstructured data can be susceptible to biases inherent in its source or collection process. This can lead to accurate or misleading insights if addressed appropriately. Data Privacy and Security: Unstructured data often contains sensitive information that requires robust security measures to protect individual privacy. Data Quality Concerns: Unstructured data can be incomplete, noisy, and inconsistent, demanding significant effort to clean and prepare before you can analyze it effectively. Lack of Standardization: Unstandardized formats and structures in unstructured data present data integration and interoperability challenges. Despite these limitations, the potential benefits of unstructured data outweigh the challenges. By developing the necessary skills and expertise, you can effectively address the limitations and unlock the vast potential of this valuable resource, driving innovation and gaining a competitive edge in the data-driven world. Structured vs Unstructured Data Semi-Structured Data What is Semi-Structured Data? Semi-structured data is rapidly becoming ubiquitous across various industries, posing unique challenges and opportunities for data engineers. This section delves into the technical aspects of semi-structured data, exploring its characteristics, sources, and critical considerations for effective management and utilization. Traditional data storage methods, such as relational databases, rely on rigid schema structures. However, the increasing proliferation of diverse data sources, including sensor readings, social media posts, and weblogs, necessitates flexible approaches. Enter semi-structured data, characterized by its reliance on self-describing formats like JSON, XML, and YAML and lack of a predefined schema. Sources of Semi-Structured Data The requirement for semi-structured data stems from its inherent flexibility, making it ideal for capturing complex and evolving information. Key sources include: Web Applications: User interactions, log files, and API responses often utilize semi-structured formats for easy data exchange and representation. Internet of Things (IoT) Devices: Sensor data, device logs, and operational information are frequently represented in semi-structured formats for efficient transmission and analysis. Social Media Platforms: User posts, comments, and interactions generate vast amounts of semi-structured data valuable for social listening and sentiment analysis. Scientific Research: Experiment results, gene sequencing data, and scientific observations often utilize semi-structured formats for flexible data representation and analysis. Use Cases of Semi-Structured Data Real-time Analytics: Analyze real-time sensor data, social media feeds, and website traffic to make informed decisions and identify problems quickly. Fraud Detection: Spot fraudulent activity in financial transactions and online interactions by looking for patterns in semi-structured data. Customer Personalization: Make product recommendations and content more relevant for each user based on their preferences and behavior data. Log Analysis: Find the root causes of system errors and performance bottlenecks by analyzing log files in their native semi-structured formats. Scientific Research: Manage and analyze complex scientific data, like gene sequences, experimental results, and scientific observations, effectively using the flexibility of semi-structured formats. Advantages of Semi-Structured Data Flexible: Adapt your data model as needed without changing the schema. This lets you add new information and handle changes easily. Scalable: Efficiently store and process large datasets by eliminating unnecessary structure and overhead. Enables Deep Analysis: Capture the relationships and context within your data to gain deeper insights. Cost-Effective: Often cheaper to store and process than structured data. Limitations of Semi-Structured Data Complexity: You'll need specialized tools and techniques to handle and process semi-structured data. It doesn't have a standard format, so finding the right tools can be tricky. Data Quality: Semi-structured data can be inconsistent, missing, or noisy. You'll need to clean and process it before you can use it. Security and Privacy: Ensure you have robust security measures to protect sensitive information in your semi-structured data. Interoperability: Sharing data between different systems can be complex because of the need for standardized formats. Limited Tools and Techniques: There are fewer established tools and techniques for analyzing semi-structured data than structured data. You can unlock its vast potential by learning how to handle semi-structured data effectively and using the right tools. Structured Vs. Unstructured Data vs Semi-Structured Data I have outlined some key differentiating characteristics of the different data sources in the table below. Best Practices in Data Management Effective data management is the cornerstone of data-driven decision-making and AI success. By implementing the following best practices, you can establish a robust and efficient data management system that empowers them to leverage the full potential of their data: Process Mapping and Stakeholder Identification: Clearly define data workflows and identify all stakeholders involved in data creation, storage, and utilization. This transparency facilitates collaboration, ensures accountability, and prevents confusion. Data Ownership and Responsibility: Establish clear ownership for data quality and ensure accountability at every data lifecycle stage. This promotes consistent data management practices, reduces errors, and facilitates data reliability. Efficient Data Capture: Implement reliable mechanisms for capturing relevant data accurately and comprehensively. This might involve utilizing scraping techniques, web scraping APIs, or sensor data collection tools tailored to the specific data source. Standardize Data Naming Conventions: Establish consistent naming conventions for data elements to increase data discoverability, accessibility, and analysis. Standardized names facilitate easier identification, retrieval, and manipulation of specific data points. Centralized Data Storage: Utilize a centralized data storage solution, such as a data lake or data warehouse, to enable efficient access, retrieval, and analysis of data from various sources. This centralized approach promotes data accessibility and allows for data aggregation and integration. Data Quality Management: Prioritize data quality by implementing data quality checks and cleansing processes. This ensures data accuracy, completeness, and consistency, reducing the risk of errors and misinterpretations in data analysis and decision-making. Robust Data Security: Implement robust data security measures to protect sensitive information and comply with regulatory requirements. This might involve data encryption, access controls, intrusion detection systems, and data security protocols tailored to the specific data types and organizational needs. Data-Driven Culture: Foster a data-driven culture within the organization. This involves providing engineers and other stakeholders access to relevant data and encouraging its use in problem-solving, strategic planning, and data-driven decision-making across all levels. Collaboration and Communication: Foster effective collaboration and communication between data engineers and stakeholders, such as business analysts and domain experts. This ensures data is collected, managed, and utilized in a way that aligns with business objectives and drives organizational success. Continuous Monitoring and Improvement: Regularly monitor data management processes and performance metrics. Analyze the collected data to identify areas for improvement and implement changes to optimize data management practices and ensure data accessibility, reliability, and security. By adopting these best practices, organizations can establish a data management system that empowers them to unlock the full potential of data for informed decision-making and innovative solutions, driving success and competitive advantage. Structured Vs. Unstructured Data: Key Takeaways In the ever-evolving data landscape, harnessing the potential of diverse data types necessitates a comprehensive approach to data management. By understanding the unique characteristics of structured, semi-structured, and unstructured data (quantitative, qualitative), organizations can leverage the strengths of each type and overcome inherent challenges. Utilizing APIs and choosing appropriate file formats (XML, CSV, JSON) ensures data accessibility and interoperability across different systems and applications, further enhancing data utilization. Adopting best practices, including utilizing cloud-based storage solutions and implementing efficient data pipelines (ETL), ensures scalability and the ability to handle increasing data volumes. Additionally, addressing data quality concerns through cleansing processes is crucial for reliable data-driven decisions that impact every aspect of an organization's operations (decision-making, scalability). Embracing a data-driven culture fosters collaboration and communication (APIs) across various teams, including data scientists and programmers using diverse programming languages. This collaborative approach unlocks the full potential of data, driving innovation and long-term success. Furthermore, adhering to ethical considerations in data collection and usage protects individual privacy rights, builds trust, and ensures responsible data management practices. Ultimately, organizations can unlock valuable insights, gain a competitive edge, and navigate the ever-changing, data-driven world by effectively managing and utilizing data in all its forms. By embracing the challenges and opportunities presented by different data types, organizations can position themselves for continued growth and success.

Dec 20 2023

8 M

Video Data Curation Guide for Computer Vision Teams