diff --git a/.github/dependabot.yml b/.github/dependabot.yml

new file mode 100644

index 00000000..0b845d3b

--- /dev/null

+++ b/.github/dependabot.yml

@@ -0,0 +1,11 @@

+version: 2

+updates:

+ - package-ecosystem: "github-actions"

+ directory: "/"

+ schedule:

+ interval: "monthly"

+

+ - package-ecosystem: "pip"

+ directory: "/"

+ schedule:

+ interval: "monthly"

diff --git a/.github/workflows/build_executable.yml b/.github/workflows/build_executable.yml

index ed522efd..2807bcf8 100644

--- a/.github/workflows/build_executable.yml

+++ b/.github/workflows/build_executable.yml

@@ -1,16 +1,33 @@

-name: Build executable version of CLI

+name: Build executable version of CLI and optionally upload artifact

on:

+ workflow_dispatch:

+ inputs:

+ publish:

+ description: 'Upload artifacts to release'

+ required: false

+ default: false

+ type: boolean

push:

branches:

- main

+permissions:

+ contents: write

+

jobs:

build:

+ name: Build on ${{ matrix.os }} (${{ matrix.mode }} mode)

strategy:

fail-fast: false

matrix:

- os: [ ubuntu-20.04, macos-11, windows-2019 ]

+ os: [ ubuntu-22.04, macos-15-intel, macos-15, windows-2022 ]

+ mode: [ 'onefile', 'onedir' ]

+ exclude:

+ - os: ubuntu-22.04

+ mode: onedir

+ - os: windows-2022

+ mode: onedir

runs-on: ${{ matrix.os }}

@@ -20,7 +37,7 @@ jobs:

steps:

- name: Run Cimon

- if: matrix.os == 'ubuntu-20.04'

+ if: matrix.os == 'ubuntu-22.04'

uses: cycodelabs/cimon-action@v0

with:

client-id: ${{ secrets.CIMON_CLIENT_ID }}

@@ -30,31 +47,46 @@ jobs:

files.pythonhosted.org

install.python-poetry.org

pypi.org

+ uploads.github.com

- name: Checkout repository

- uses: actions/checkout@v3

+ uses: actions/checkout@v4

with:

fetch-depth: 0

- - name: Set up Python 3.7

- uses: actions/setup-python@v4

+ - name: Checkout latest release tag

+ if: ${{ github.event_name == 'workflow_dispatch' }}

+ run: |

+ LATEST_TAG=$(git describe --tags `git rev-list --tags --max-count=1`)

+ git checkout $LATEST_TAG

+ echo "LATEST_TAG=$LATEST_TAG" >> $GITHUB_ENV

+

+ - name: Set up Python 3.13

+ uses: actions/setup-python@v6

with:

- python-version: '3.7'

+ python-version: '3.13'

+

+ - name: Load cached Poetry setup

+ id: cached-poetry

+ uses: actions/cache@v5

+ with:

+ path: ~/.local

+ key: poetry-${{ matrix.os }}-2 # increment to reset cache

- name: Setup Poetry

+ if: steps.cached-poetry.outputs.cache-hit != 'true'

uses: snok/install-poetry@v1

+ with:

+ version: 2.2.1

- - name: Install dependencies

- run: poetry install

-

- - name: Build executable

- run: poetry run pyinstaller pyinstaller.spec

+ - name: Add Poetry to PATH

+ run: echo "$HOME/.local/bin" >> $GITHUB_PATH

- - name: Test executable

- run: ./dist/cycode --version

+ - name: Install dependencies

+ run: poetry install --without dev,test

- - name: Sign macOS executable

- if: ${{ startsWith(matrix.os, 'macos') }}

+ - name: Import macOS signing certificate

+ if: runner.os == 'macOS'

env:

APPLE_CERT: ${{ secrets.APPLE_CERT }}

APPLE_CERT_PWD: ${{ secrets.APPLE_CERT_PWD }}

@@ -75,11 +107,58 @@ jobs:

security import $CERTIFICATE_PATH -P "$APPLE_CERT_PWD" -A -t cert -f pkcs12 -k $KEYCHAIN_PATH

security list-keychain -d user -s $KEYCHAIN_PATH

- # sign executable

- codesign --deep --force --options=runtime --entitlements entitlements.plist --sign "$APPLE_CERT_NAME" --timestamp dist/cycode

+ - name: Build executable (onefile)

+ if: matrix.mode == 'onefile'

+ env:

+ APPLE_CERT_NAME: ${{ secrets.APPLE_CERT_NAME }}

+ run: |

+ poetry run pyinstaller pyinstaller.spec

+ echo "PATH_TO_CYCODE_CLI_EXECUTABLE=dist/cycode-cli" >> $GITHUB_ENV

+

+ - name: Build executable (onedir)

+ if: matrix.mode == 'onedir'

+ env:

+ CYCODE_ONEDIR_MODE: 1

+ APPLE_CERT_NAME: ${{ secrets.APPLE_CERT_NAME }}

+ run: |

+ poetry run pyinstaller pyinstaller.spec

+ echo "PATH_TO_CYCODE_CLI_EXECUTABLE=dist/cycode-cli/cycode-cli" >> $GITHUB_ENV

+

+ - name: Test executable

+ run: time $PATH_TO_CYCODE_CLI_EXECUTABLE status

+

+ - name: Codesign onedir binaries

+ if: runner.os == 'macOS' && matrix.mode == 'onedir'

+ env:

+ APPLE_CERT_NAME: ${{ secrets.APPLE_CERT_NAME }}

+ run: |

+ # The standalone _internal/Python fails codesign --verify --strict because it was

+ # extracted from Python.framework without Info.plist context.

+ # Fix: remove the bare copy and replace with the framework version's binary,

+ # then delete the framework directory (it's redundant).

+ if [ -d dist/cycode-cli/_internal/Python.framework ]; then

+ FRAMEWORK_PYTHON=$(find dist/cycode-cli/_internal/Python.framework/Versions -name "Python" -type f | head -1)

+ if [ -n "$FRAMEWORK_PYTHON" ]; then

+ echo "Replacing _internal/Python with framework binary"

+ rm dist/cycode-cli/_internal/Python

+ cp "$FRAMEWORK_PYTHON" dist/cycode-cli/_internal/Python

+ fi

+ rm -rf dist/cycode-cli/_internal/Python.framework

+ fi

+

+ # Sign all Mach-O binaries (excluding the main executable)

+ while IFS= read -r file; do

+ if file -b "$file" | grep -q "Mach-O"; then

+ echo "Signing: $file"

+ codesign --force --sign "$APPLE_CERT_NAME" --timestamp --options runtime "$file"

+ fi

+ done < <(find dist/cycode-cli -type f ! -name "cycode-cli")

+

+ # Re-sign the main executable with entitlements (must be last)

+ codesign --force --sign "$APPLE_CERT_NAME" --timestamp --options runtime --entitlements entitlements.plist dist/cycode-cli/cycode-cli

- name: Notarize macOS executable

- if: ${{ startsWith(matrix.os, 'macos') }}

+ if: runner.os == 'macOS'

env:

APPLE_NOTARIZATION_EMAIL: ${{ secrets.APPLE_NOTARIZATION_EMAIL }}

APPLE_NOTARIZATION_PWD: ${{ secrets.APPLE_NOTARIZATION_PWD }}

@@ -88,20 +167,155 @@ jobs:

# create keychain profile

xcrun notarytool store-credentials "notarytool-profile" --apple-id "$APPLE_NOTARIZATION_EMAIL" --team-id "$APPLE_NOTARIZATION_TEAM_ID" --password "$APPLE_NOTARIZATION_PWD"

- # create zip file (notarization does not support binaries)

- ditto -c -k --keepParent dist/cycode notarization.zip

+ # create zip file (notarization does not support bare binaries)

+ ditto -c -k --keepParent dist/cycode-cli notarization.zip

# notarize app (this will take a while)

- xcrun notarytool submit notarization.zip --keychain-profile "notarytool-profile" --wait

+ NOTARIZE_OUTPUT=$(xcrun notarytool submit notarization.zip --keychain-profile "notarytool-profile" --wait 2>&1) || true

+ echo "$NOTARIZE_OUTPUT"

+

+ # extract submission ID for log retrieval

+ SUBMISSION_ID=$(echo "$NOTARIZE_OUTPUT" | grep " id:" | head -1 | awk '{print $2}')

+

+ # check notarization status explicitly

+ if echo "$NOTARIZE_OUTPUT" | grep -q "status: Accepted"; then

+ echo "Notarization succeeded!"

+ else

+ echo "Notarization failed! Fetching log for details..."

+ if [ -n "$SUBMISSION_ID" ]; then

+ xcrun notarytool log "$SUBMISSION_ID" --keychain-profile "notarytool-profile" || true

+ fi

+ exit 1

+ fi

- # we can't staple the app because it's executable. we should only staple app bundles like .dmg

- # xcrun stapler staple dist/cycode

+ # we can't staple the app because it's executable

- - name: Test signed executable

- if: ${{ startsWith(matrix.os, 'macos') }}

- run: ./dist/cycode --version

+ - name: Verify macOS code signatures

+ if: runner.os == 'macOS'

+ run: |

+ FAILED=false

+ while IFS= read -r file; do

+ if file -b "$file" | grep -q "Mach-O"; then

+ if ! codesign --verify "$file" 2>&1; then

+ echo "INVALID: $file"

+ codesign -dv "$file" 2>&1 || true

+ FAILED=true

+ else

+ echo "OK: $file"

+ fi

+ fi

+ done < <(find dist/cycode-cli -type f)

+

+ if [ "$FAILED" = true ]; then

+ echo "Found binaries with invalid signatures!"

+ exit 1

+ fi

+

+ codesign -dv --verbose=4 $PATH_TO_CYCODE_CLI_EXECUTABLE

+

+ - name: Test macOS signed executable

+ if: runner.os == 'macOS'

+ run: |

+ file -b $PATH_TO_CYCODE_CLI_EXECUTABLE

+ time $PATH_TO_CYCODE_CLI_EXECUTABLE status

+

+ - name: Import cert for Windows and setup envs

+ if: runner.os == 'Windows'

+ env:

+ SM_CLIENT_CERT_FILE_B64: ${{ secrets.SM_CLIENT_CERT_FILE_B64 }}

+ run: |

+ # import certificate

+ echo "$SM_CLIENT_CERT_FILE_B64" | base64 --decode > /d/Certificate_pkcs12.p12

+ echo "SM_CLIENT_CERT_FILE=D:\\Certificate_pkcs12.p12" >> "$GITHUB_ENV"

+

+ # add required software to the PATH

+ echo "C:\Program Files (x86)\Windows Kits\10\App Certification Kit" >> $GITHUB_PATH

+ echo "C:\Program Files\DigiCert\DigiCert One Signing Manager Tools" >> $GITHUB_PATH

+

+ - name: Sign Windows executable

+ if: runner.os == 'Windows'

+ shell: cmd

+ env:

+ SM_HOST: ${{ secrets.SM_HOST }}

+ SM_KEYPAIR_ALIAS: ${{ secrets.SM_KEYPAIR_ALIAS }}

+ SM_API_KEY: ${{ secrets.SM_API_KEY }}

+ SM_CLIENT_CERT_PASSWORD: ${{ secrets.SM_CLIENT_CERT_PASSWORD }}

+ SM_CODE_SIGNING_CERT_SHA1_HASH: ${{ secrets.SM_CODE_SIGNING_CERT_SHA1_HASH }}

+ run: |

+ :: setup SSM KSP

+ curl -X GET https://one.digicert.com/signingmanager/api-ui/v1/releases/smtools-windows-x64.msi/download -H "x-api-key:%SM_API_KEY%" -o smtools-windows-x64.msi

+ msiexec /i smtools-windows-x64.msi /quiet /qn

+ C:\Windows\System32\certutil.exe -csp "DigiCert Signing Manager KSP" -key -user

+ smctl windows certsync --keypair-alias=%SM_KEYPAIR_ALIAS%

+

+ :: sign executable

+ signtool.exe sign /sha1 %SM_CODE_SIGNING_CERT_SHA1_HASH% /tr http://timestamp.digicert.com /td SHA256 /fd SHA256 ".\dist\cycode-cli.exe"

- - uses: actions/upload-artifact@v3

+ - name: Test Windows signed executable

+ if: runner.os == 'Windows'

+ shell: cmd

+ run: |

+ :: call executable and expect correct output

+ .\dist\cycode-cli.exe status

+

+ :: verify signature

+ signtool.exe verify /v /pa ".\dist\cycode-cli.exe"

+

+ - name: Prepare files for artifact and release (rename and calculate sha256)

+ run: echo "ARTIFACT_NAME=$(./process_executable_file.py dist/cycode-cli)" >> $GITHUB_ENV

+

+ - name: Upload files as artifact

+ uses: actions/upload-artifact@v4

with:

- name: cycode-cli-${{ matrix.os }}

+ name: ${{ env.ARTIFACT_NAME }}

path: dist

+

+ - name: Verify macOS artifact end-to-end

+ if: runner.os == 'macOS' && matrix.mode == 'onedir'

+ uses: actions/download-artifact@v8

+ with:

+ name: ${{ env.ARTIFACT_NAME }}

+ path: /tmp/artifact-verify

+

+ - name: Verify macOS artifact signatures and run with quarantine

+ if: runner.os == 'macOS' && matrix.mode == 'onedir'

+ run: |

+ # extract the onedir zip exactly as an end user would

+ ARCHIVE=$(find /tmp/artifact-verify -name "*.zip" | head -1)

+ echo "Verifying archive: $ARCHIVE"

+ unzip "$ARCHIVE" -d /tmp/artifact-extracted

+

+ # verify all Mach-O code signatures

+ FAILED=false

+ while IFS= read -r file; do

+ if file -b "$file" | grep -q "Mach-O"; then

+ if ! codesign --verify "$file" 2>&1; then

+ echo "INVALID: $file"

+ codesign -dv "$file" 2>&1 || true

+ FAILED=true

+ else

+ echo "OK: $file"

+ fi

+ fi

+ done < <(find /tmp/artifact-extracted -type f)

+

+ if [ "$FAILED" = true ]; then

+ echo "Artifact contains binaries with invalid signatures!"

+ exit 1

+ fi

+

+ # simulate download quarantine and test execution

+ # this is the definitive test — it triggers the same dlopen checks end users experience

+ find /tmp/artifact-extracted -type f -exec xattr -w com.apple.quarantine "0081;$(printf '%x' $(date +%s));CI;$(uuidgen)" {} \;

+ EXECUTABLE=$(find /tmp/artifact-extracted -name "cycode-cli" -type f | head -1)

+ echo "Testing quarantined executable: $EXECUTABLE"

+ time "$EXECUTABLE" status

+

+ - name: Upload files to release

+ if: ${{ github.event_name == 'workflow_dispatch' && inputs.publish }}

+ uses: svenstaro/upload-release-action@v2

+ with:

+ file: dist/*

+ tag: ${{ env.LATEST_TAG }}

+ overwrite: true

+ file_glob: true

diff --git a/.github/workflows/docker-image-dev.yml b/.github/workflows/docker-image-dev.yml

deleted file mode 100644

index b0743afc..00000000

--- a/.github/workflows/docker-image-dev.yml

+++ /dev/null

@@ -1,38 +0,0 @@

-on:

- push:

- branches: dev

-

-permissions:

- contents: read

-

-jobs:

- main:

- runs-on: ubuntu-latest

- steps:

- -

- name: Checkout

- uses: actions/checkout@v2

- -

- name: Set up QEMU

- uses: docker/setup-qemu-action@v1

- -

- name: Set up Docker Buildx

- uses: docker/setup-buildx-action@v1

- -

- name: Login to DockerHub Registry

- env:

- DOCKERHUB_PASSWORD: ${{ secrets.DOCKERHUB_PASSWORD }}

- DOCKERHUB_USER: ${{ secrets.DOCKERHUB_USER }}

- run: echo "$DOCKERHUB_PASSWORD" | docker login -u "$DOCKERHUB_USER" --password-stdin

- -

- name: Build and push

- id: docker_build

- uses: docker/build-push-action@v3

- with:

- context: .

- file: ./Dockerfile

- push: true

- tags: cycodehq/cycode_cli:dev

- -

- name: Image digest

- run: echo ${{ steps.docker_build.outputs.digest }}

diff --git a/.github/workflows/docker-image.yml b/.github/workflows/docker-image.yml

index 8912d969..4e2d4ee8 100644

--- a/.github/workflows/docker-image.yml

+++ b/.github/workflows/docker-image.yml

@@ -1,46 +1,91 @@

+name: Build Docker Image. On tag creation push to Docker Hub. On dispatch event build the latest tag and push to Docker Hub

+

on:

workflow_dispatch:

-

-permissions:

- # Write permission needed for creating a tag.

- contents: write

+ pull_request:

+ push:

+ tags: [ 'v*.*.*' ]

jobs:

- main:

+ docker:

runs-on: ubuntu-latest

+

steps:

- -

- name: Checkout

- uses: actions/checkout@v2

- -

- name: Set up QEMU

- uses: docker/setup-qemu-action@v1

- -

- name: Set up Docker Buildx

- uses: docker/setup-buildx-action@v1

- -

- name: Login to DockerHub Registry

- env:

- DOCKERHUB_PASSWORD: ${{ secrets.DOCKERHUB_PASSWORD }}

- DOCKERHUB_USER: ${{ secrets.DOCKERHUB_USER }}

- run: echo "$DOCKERHUB_PASSWORD" | docker login -u "$DOCKERHUB_USER" --password-stdin

-

- - name: Bump version

- id: bump_version

- uses: anothrNick/github-tag-action@1.36.0

- env:

- GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

- DEFAULT_BUMP: minor

-

- -

- name: Build and push

+ - name: Checkout repository

+ uses: actions/checkout@v4

+ with:

+ fetch-depth: 0

+

+ - name: Get latest release tag

+ id: latest_tag

+ run: |

+ LATEST_TAG=$(git describe --tags `git rev-list --tags --max-count=1`)

+ echo "LATEST_TAG=$LATEST_TAG" >> $GITHUB_OUTPUT

+

+ - name: Check out latest release tag

+ if: ${{ github.event_name == 'workflow_dispatch' }}

+ run: |

+ git checkout ${{ steps.latest_tag.outputs.LATEST_TAG }}

+

+ - name: Set up Python

+ uses: actions/setup-python@v6

+ with:

+ python-version: '3.9'

+

+ - name: Load cached Poetry setup

+ id: cached_poetry

+ uses: actions/cache@v5

+ with:

+ path: ~/.local

+ key: poetry-ubuntu-1 # increment to reset cache

+

+ - name: Setup Poetry

+ if: steps.cached_poetry.outputs.cache-hit != 'true'

+ uses: snok/install-poetry@v1

+ with:

+ version: 2.2.1

+

+ - name: Add Poetry to PATH

+ run: echo "$HOME/.local/bin" >> $GITHUB_PATH

+

+ - name: Install Poetry Plugin

+ run: poetry self add "poetry-dynamic-versioning[plugin]"

+

+ - name: Get CLI Version

+ id: cli_version

+ run: |

+ echo "::debug::Package version: $(poetry version --short)"

+ echo "CLI_VERSION=$(poetry version --short)" >> $GITHUB_OUTPUT

+

+ - name: Set up QEMU

+ uses: docker/setup-qemu-action@v3

+

+ - name: Set up Docker Buildx

+ uses: docker/setup-buildx-action@v4

+

+ - name: Login to Docker Hub

+ if: ${{ github.event_name == 'workflow_dispatch' || startsWith(github.ref, 'refs/tags/v') }}

+ uses: docker/login-action@v3

+ with:

+ username: ${{ secrets.DOCKERHUB_USER }}

+ password: ${{ secrets.DOCKERHUB_PASSWORD }}

+

+ - name: Build and push

id: docker_build

- uses: docker/build-push-action@v3

+ if: ${{ github.event_name == 'workflow_dispatch' || startsWith(github.ref, 'refs/tags/v') }}

+ uses: docker/build-push-action@v7

with:

context: .

- file: ./Dockerfile

+ platforms: linux/amd64,linux/arm64

push: true

- tags: cycodehq/cycode_cli:${{ steps.bump_version.outputs.new_tag }}

- -

- name: Image digest

- run: echo ${{ steps.docker_build.outputs.digest }}

+ tags: cycodehq/cycode_cli:${{ steps.latest_tag.outputs.LATEST_TAG }},cycodehq/cycode_cli:latest

+

+ - name: Verify build

+ id: docker_verify_build

+ if: ${{ github.event_name != 'workflow_dispatch' && !startsWith(github.ref, 'refs/tags/v') }}

+ uses: docker/build-push-action@v7

+ with:

+ context: .

+ platforms: linux/amd64,linux/arm64

+ push: false

+ tags: cycodehq/cycode_cli:${{ steps.cli_version.outputs.CLI_VERSION }}

diff --git a/.github/workflows/pre_release.yml b/.github/workflows/pre_release.yml

index 13ae474b..802f4e27 100644

--- a/.github/workflows/pre_release.yml

+++ b/.github/workflows/pre_release.yml

@@ -25,19 +25,33 @@ jobs:

install.python-poetry.org

pypi.org

upload.pypi.org

+ *.sigstore.dev

- name: Checkout repository

uses: actions/checkout@v3

with:

fetch-depth: 0

- - name: Set up Python 3.7

- uses: actions/setup-python@v4

+ - name: Set up Python

+ uses: actions/setup-python@v6

with:

- python-version: '3.7'

+ python-version: '3.9'

+

+ - name: Load cached Poetry setup

+ id: cached-poetry

+ uses: actions/cache@v5

+ with:

+ path: ~/.local

+ key: poetry-ubuntu-1 # increment to reset cache

- name: Setup Poetry

+ if: steps.cached-poetry.outputs.cache-hit != 'true'

uses: snok/install-poetry@v1

+ with:

+ version: 2.2.1

+

+ - name: Add Poetry to PATH

+ run: echo "$HOME/.local/bin" >> $GITHUB_PATH

- name: Install Poetry Plugin

run: poetry self add "poetry-dynamic-versioning[plugin]"

diff --git a/.github/workflows/release.yml b/.github/workflows/release.yml

index 70a60c33..88f86ef7 100644

--- a/.github/workflows/release.yml

+++ b/.github/workflows/release.yml

@@ -24,19 +24,33 @@ jobs:

install.python-poetry.org

pypi.org

upload.pypi.org

+ *.sigstore.dev

- name: Checkout repository

uses: actions/checkout@v3

with:

fetch-depth: 0

- - name: Set up Python 3.7

- uses: actions/setup-python@v4

+ - name: Set up Python

+ uses: actions/setup-python@v6

with:

- python-version: '3.7'

+ python-version: '3.9'

+

+ - name: Load cached Poetry setup

+ id: cached-poetry

+ uses: actions/cache@v5

+ with:

+ path: ~/.local

+ key: poetry-ubuntu-1 # increment to reset cache

- name: Setup Poetry

+ if: steps.cached-poetry.outputs.cache-hit != 'true'

uses: snok/install-poetry@v1

+ with:

+ version: 2.2.1

+

+ - name: Add Poetry to PATH

+ run: echo "$HOME/.local/bin" >> $GITHUB_PATH

- name: Install Poetry Plugin

run: poetry self add "poetry-dynamic-versioning[plugin]"

diff --git a/.github/workflows/ruff.yml b/.github/workflows/ruff.yml

new file mode 100644

index 00000000..ae6c7913

--- /dev/null

+++ b/.github/workflows/ruff.yml

@@ -0,0 +1,51 @@

+name: Ruff (linter and code formatter)

+

+on: [ pull_request, push ]

+

+jobs:

+ ruff:

+ runs-on: ubuntu-latest

+ steps:

+ - name: Run Cimon

+ uses: cycodelabs/cimon-action@v0

+ with:

+ client-id: ${{ secrets.CIMON_CLIENT_ID }}

+ secret: ${{ secrets.CIMON_SECRET }}

+ prevent: true

+ allowed-hosts: >

+ files.pythonhosted.org

+ install.python-poetry.org

+ pypi.org

+

+ - name: Checkout repository

+ uses: actions/checkout@v3

+

+ - name: Setup Python

+ uses: actions/setup-python@v6

+ with:

+ python-version: 3.9

+

+ - name: Load cached Poetry setup

+ id: cached-poetry

+ uses: actions/cache@v5

+ with:

+ path: ~/.local

+ key: poetry-ubuntu-1 # increment to reset cache

+

+ - name: Setup Poetry

+ if: steps.cached-poetry.outputs.cache-hit != 'true'

+ uses: snok/install-poetry@v1

+ with:

+ version: 2.2.1

+

+ - name: Add Poetry to PATH

+ run: echo "$HOME/.local/bin" >> $GITHUB_PATH

+

+ - name: Install dependencies

+ run: poetry install

+

+ - name: Run linter check

+ run: poetry run ruff check --output-format=github .

+

+ - name: Run code style check

+ run: poetry run ruff format --check .

diff --git a/.github/workflows/tests.yml b/.github/workflows/tests.yml

index 27450b71..c69fe4ac 100644

--- a/.github/workflows/tests.yml

+++ b/.github/workflows/tests.yml

@@ -20,20 +20,34 @@ jobs:

files.pythonhosted.org

install.python-poetry.org

pypi.org

+ *.ingest.us.sentry.io

- name: Checkout repository

- uses: actions/checkout@v3

+ uses: actions/checkout@v4

- name: Set up Python

- uses: actions/setup-python@v4

+ uses: actions/setup-python@v6

with:

- python-version: '3.7'

+ python-version: '3.9'

+

+ - name: Load cached Poetry setup

+ id: cached-poetry

+ uses: actions/cache@v5

+ with:

+ path: ~/.local

+ key: poetry-ubuntu-1 # increment to reset cache

- name: Setup Poetry

+ if: steps.cached-poetry.outputs.cache-hit != 'true'

uses: snok/install-poetry@v1

+ with:

+ version: 2.2.1

+

+ - name: Add Poetry to PATH

+ run: echo "$HOME/.local/bin" >> $GITHUB_PATH

- name: Install dependencies

run: poetry install

- name: Run Tests

- run: poetry run pytest

+ run: poetry run python -m pytest

diff --git a/.github/workflows/tests_full.yml b/.github/workflows/tests_full.yml

index bdcc49d4..65426b13 100644

--- a/.github/workflows/tests_full.yml

+++ b/.github/workflows/tests_full.yml

@@ -13,7 +13,7 @@ jobs:

strategy:

matrix:

os: [ macos-latest, ubuntu-latest, windows-latest ]

- python-version: [ "3.7", "3.8", "3.9", "3.10", "3.11" ]

+ python-version: [ "3.9", "3.10", "3.11", "3.12", "3.13", "3.14" ]

runs-on: ${{matrix.os}}

@@ -33,20 +33,43 @@ jobs:

files.pythonhosted.org

install.python-poetry.org

pypi.org

+ *.ingest.us.sentry.io

- name: Checkout repository

- uses: actions/checkout@v3

+ uses: actions/checkout@v4

+ with:

+ fetch-depth: 0

- name: Set up Python

- uses: actions/setup-python@v4

+ uses: actions/setup-python@v6

with:

python-version: ${{ matrix.python-version }}

+ - name: Load cached Poetry setup

+ id: cached-poetry

+ uses: actions/cache@v5

+ with:

+ path: ~/.local

+ key: poetry-${{ matrix.os }}-${{ matrix.python-version }}-3 # increment to reset cache

+

- name: Setup Poetry

+ if: steps.cached-poetry.outputs.cache-hit != 'true'

uses: snok/install-poetry@v1

+ with:

+ version: 2.2.1

+

+ - name: Add Poetry to PATH

+ run: echo "$HOME/.local/bin" >> $GITHUB_PATH

- name: Install dependencies

run: poetry install

- - name: Run Tests

- run: poetry run pytest

+ - name: Run executable test

+ # we care about the one Python version that will be used to build the executable

+ if: matrix.python-version == '3.13'

+ run: |

+ poetry run pyinstaller pyinstaller.spec

+ ./dist/cycode-cli version

+

+ - name: Run pytest

+ run: poetry run python -m pytest

diff --git a/.gitignore b/.gitignore

index 1de92d03..7f27180f 100644

--- a/.gitignore

+++ b/.gitignore

@@ -1,6 +1,8 @@

+.DS_Store

.idea

*.iml

.env

+.ruff_cache/

# Byte-compiled / optimized / DLL files

__pycache__/

diff --git a/.pre-commit-hooks.yaml b/.pre-commit-hooks.yaml

index d3b55ce6..fd2bfbed 100644

--- a/.pre-commit-hooks.yaml

+++ b/.pre-commit-hooks.yaml

@@ -1,10 +1,39 @@

- id: cycode

- name: Cycode pre commit defender

+ name: Cycode Secrets pre-commit defender

language: python

+ language_version: python3

entry: cycode

- args: [ 'scan', 'pre_commit' ]

+ args: [ '-o', 'text', '--no-progress-meter', 'scan', '-t', 'secret', 'pre-commit' ]

- id: cycode-sca

- name: Cycode SCA pre commit defender

+ name: Cycode SCA pre-commit defender

language: python

+ language_version: python3

entry: cycode

- args: [ 'scan', '--scan-type', 'sca', 'pre_commit' ]

\ No newline at end of file

+ args: [ '-o', 'text', '--no-progress-meter', 'scan', '-t', 'sca', 'pre-commit' ]

+- id: cycode-sast

+ name: Cycode SAST pre-commit defender

+ language: python

+ language_version: python3

+ entry: cycode

+ args: [ '-o', 'text', '--no-progress-meter', 'scan', '-t', 'sast', 'pre-commit' ]

+- id: cycode-pre-push

+ name: Cycode Secrets pre-push defender

+ language: python

+ language_version: python3

+ entry: cycode

+ args: [ '-o', 'text', '--no-progress-meter', 'scan', '-t', 'secret', 'pre-push' ]

+ stages: [pre-push]

+- id: cycode-sca-pre-push

+ name: Cycode SCA pre-push defender

+ language: python

+ language_version: python3

+ entry: cycode

+ args: [ '-o', 'text', '--no-progress-meter', 'scan', '-t', 'sca', 'pre-push' ]

+ stages: [pre-push]

+- id: cycode-sast-pre-push

+ name: Cycode SAST pre-push defender

+ language: python

+ language_version: python3

+ entry: cycode

+ args: [ '-o', 'text', '--no-progress-meter', 'scan', '-t', 'sast', 'pre-push' ]

+ stages: [pre-push]

diff --git a/CODEOWNERS b/CODEOWNERS

index 874040fd..f05ffdb9 100644

--- a/CODEOWNERS

+++ b/CODEOWNERS

@@ -1 +1 @@

-* @MarshalX @MichalBor @MaorDavidzon @artem-fedorov

+* @elsapet @gotbadger @mateusz-sterczewski

diff --git a/CONTRIBUTING.md b/CONTRIBUTING.md

new file mode 100644

index 00000000..857a27cd

--- /dev/null

+++ b/CONTRIBUTING.md

@@ -0,0 +1,165 @@

+

+

+

+

+## How to contribute to Cycode CLI

+

+The minimum version of Python that we support is 3.9.

+We recommend using this version for local development.

+A higher version is fine, as long as you avoid features introduced after 3.9.

+

+The project is managed with Poetry.

+To work with it, install Poetry on your system first.

+

+Install Poetry (feel free to use Brew, etc.):

+

+```shell

+curl -sSL https://install.python-poetry.org | python - -y

+```

+

+Add Poetry to PATH if required.

+

+Add a plugin to support dynamic versioning from Git Tags:

+

+```shell

+poetry self add "poetry-dynamic-versioning[plugin]"

+```

+

+Install dependencies of the project:

+

+```shell

+poetry install

+```

+

+Check that the version is valid (not 0.0.0):

+

+```shell

+poetry version

+```

+

+You are ready to write code!

+

+To run the project use:

+

+```shell

+poetry run cycode

+```

+

+or run the main entry point in an activated virtual environment:

+

+```shell

+python cycode/cli/main.py

+```

+

+### Code linting and formatting

+

+We use `ruff`.

+It is already configured, so no additional setup is needed.

+You can see all enabled rules in the `pyproject.toml` file.

+Both tests and the main codebase are checked.

+Try to avoid type annotations like `Any`, etc.

+

+GitHub Actions will check that your code is formatted well. You can run it locally:

+

+```shell

+# lint

+poetry run ruff check .

+# format

+poetry run ruff format .

+```

+

+Many rules support auto-fixing. You can run it with the `--fix` flag.

+

+A plugin for JetBrains IDEs with auto-formatting on save is available [here](https://plugins.jetbrains.com/plugin/20574-ruff).

+

+### Branching and versioning

+

+The `main` branch is the primary branch.

+All development should be done in feature branches.

+When you are ready, create a Pull Request to the `main` branch.

+

+Each commit to the `main` branch is built and published to PyPI as a pre-release!

+Such builds can be installed with the `--pre` flag. For example:

+

+```shell

+pip install --pre cycode

+```

+

+Also, you can select a specific version of the pre-release:

+

+```shell

+pip install cycode==1.7.2.dev6

+```

+

+We are using [Semantic Versioning](https://semver.org/) and the version is generated automatically from Git Tags. So,

+when you are ready to release a new version, you should create a new Git Tag. The version will be generated from it.

+

+Pre-release versions are generated from the distance to the latest Git Tag. For example, if the latest Git Tag is `1.7.2`,

+then the next pre-release version will be `1.7.2.dev1`.

+

+We are using GitHub Releases to create Git Tags with changelogs.

+For changelogs, we are using a standard template

+of [Automatically generated release notes](https://docs.github.com/en/repositories/releasing-projects-on-github/automatically-generated-release-notes).

+

+### Testing

+

+We are using `pytest` for testing. You can run tests with:

+

+```shell

+poetry run pytest

+```

+

+The library used for sending requests is [requests](https://github.com/psf/requests).

+To mock requests, we are using the [responses](https://github.com/getsentry/responses) library.

+All requests must be mocked.

+

+To see the code coverage of the project, you can run:

+

+```shell

+poetry run coverage run -m pytest .

+```

+

+To generate the HTML report, you can run:

+

+```shell

+poetry run coverage html

+```

+

+The report will be generated in the `htmlcov` folder.

+

+### Documentation

+

+Keep [README.md](README.md) up to date.

+All CLI commands are documented automatically if you add a docstring to the command.

+Clean up the changelog before release.

+

+### Publishing

+

+New versions are published automatically when a new GitHub Release is created.

+Publishing uses the OpenID Connect mechanism to upload to PyPI.

+

+The [Homebrew formula](https://formulae.brew.sh/formula/cycode) is updated automatically after each new PyPI release.

+

+The CLI is also distributed as executable files for Linux, macOS, and Windows.

+It is powered by [PyInstaller](https://pyinstaller.org/) and the process is automated by GitHub Actions.

+These executables are attached to GitHub Releases as assets.

+

+To package the project locally, run:

+

+```shell

+poetry build

+```

+

+It will create a `dist` folder with the package (sdist and wheel). You can install it locally:

+

+```shell

+pip install dist/cycode-{version}-py3-none-any.whl

+```

+

+To create an executable file locally, you should run:

+

+```shell

+poetry run pyinstaller pyinstaller.spec

+```

+

+It will create an executable file for **the current platform** in the `dist` folder.

diff --git a/Dockerfile b/Dockerfile

index a2197817..40d6fad3 100644

--- a/Dockerfile

+++ b/Dockerfile

@@ -1,12 +1,12 @@

-FROM python:3.8.16-alpine3.17 as base

+FROM python:3.12.9-alpine3.21 AS base

WORKDIR /usr/cycode/app

-RUN apk add git=2.38.5-r0

+RUN apk add git=2.47.3-r0

-FROM base as builder

-ENV POETRY_VERSION=1.4.2

+FROM base AS builder

+ENV POETRY_VERSION=2.2.1

# deps are required to build cffi

-RUN apk add --no-cache --virtual .build-deps gcc=12.2.1_git20220924-r4 libffi-dev=3.4.4-r0 musl-dev=1.2.3-r4 && \

+RUN apk add --no-cache --virtual .build-deps gcc=14.2.0-r4 libffi-dev=3.4.7-r0 musl-dev=1.2.5-r9 && \

pip install --no-cache-dir "poetry==$POETRY_VERSION" "poetry-dynamic-versioning[plugin]" && \

apk del .build-deps gcc libffi-dev musl-dev

@@ -19,7 +19,7 @@ RUN poetry config virtualenvs.in-project true && \

poetry --no-cache install --only=main --no-root && \

poetry build

-FROM base as final

+FROM base AS final

COPY --from=builder /usr/cycode/app/dist ./

RUN pip install --no-cache-dir cycode*.whl

diff --git a/LICENCE b/LICENCE

index 404e3d08..8d72be9a 100644

--- a/LICENCE

+++ b/LICENCE

@@ -1,6 +1,6 @@

MIT License

-Copyright (c) 2022 cycodehq-public

+Copyright (c) 2022 Cycode Ltd.

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

diff --git a/README.md b/README.md

index 370001e7..b512c813 100644

--- a/README.md

+++ b/README.md

@@ -1,61 +1,77 @@

# Cycode CLI User Guide

-The Cycode Command Line Interface (CLI) is an application you can install on your local machine which can scan your locally stored repositories for any secrets or infrastructure as code misconfigurations.

+The Cycode Command Line Interface (CLI) is an application you can install locally to scan your repositories for secrets, infrastructure as code misconfigurations, software composition analysis vulnerabilities, and static application security testing issues.

-This guide will guide you through both installation and usage.

+This guide walks you through both installation and usage.

# Table of Contents

1. [Prerequisites](#prerequisites)

2. [Installation](#installation)

1. [Install Cycode CLI](#install-cycode-cli)

- 1. [Use `auth` command](#use-auth-command)

- 2. [Use `configure` command](#use-configure-command)

+ 1. [Using the Auth Command](#using-the-auth-command)

+ 2. [Using the Configure Command](#using-the-configure-command)

3. [Add to Environment Variables](#add-to-environment-variables)

1. [On Unix/Linux](#on-unixlinux)

2. [On Windows](#on-windows)

2. [Install Pre-Commit Hook](#install-pre-commit-hook)

-3. [Cycode Command](#cycode-command)

-4. [Running a Scan](#running-a-scan)

- 1. [Repository Scan](#repository-scan)

- 1. [Branch Option](#branch-option)

- 2. [Monitor Option](#monitor-option)

- 3. [Report Option](#report-option)

- 4. [Package Vulnerabilities Scan](#package-vulnerabilities-option)

- 1. [License Compliance Option](#license-compliance-option)

- 2. [Severity Threshold](#severity-threshold)

- 5. [Path Scan](#path-scan)

- 6. [Commit History Scan](#commit-history-scan)

- 1. [Commit Range Option](#commit-range-option)

- 7. [Pre-Commit Scan](#pre-commit-scan)

-5. [Scan Results](#scan-results)

- 1. [Show/Hide Secrets](#showhide-secrets)

- 2. [Soft Fail](#soft-fail)

- 3. [Example Scan Results](#example-scan-results)

- 1. [Secrets Result Example](#secrets-result-example)

- 2. [IaC Result Example](#iac-result-example)

- 3. [SCA Result Example](#sca-result-example)

- 4. [SAST Result Example](#sast-result-example)

-6. [Ignoring Scan Results](#ignoring-scan-results)

- 1. [Ignoring a Secret Value](#ignoring-a-secret-value)

- 2. [Ignoring a Secret SHA Value](#ignoring-a-secret-sha-value)

- 3. [Ignoring a Path](#ignoring-a-path)

- 4. [Ignoring a Secret, IaC, or SCA Rule](#ignoring-a-secret-iac-sca-or-sast-rule)

- 5. [Ignoring a Package](#ignoring-a-package)

-7. [Syntax Help](#syntax-help)

+3. [Cycode CLI Commands](#cycode-cli-commands)

+4. [MCP Command](#mcp-command-experiment)

+ 1. [Starting the MCP Server](#starting-the-mcp-server)

+ 2. [Available Options](#available-options)

+ 3. [MCP Tools](#mcp-tools)

+ 4. [Usage Examples](#usage-examples)

+5. [Scan Command](#scan-command)

+ 1. [Running a Scan](#running-a-scan)

+ 1. [Options](#options)

+ 1. [Severity Threshold](#severity-option)

+ 2. [Monitor](#monitor-option)

+ 3. [Cycode Report](#cycode-report-option)

+ 4. [Package Vulnerabilities](#package-vulnerabilities-option)

+ 5. [License Compliance](#license-compliance-option)

+ 6. [Lock Restore](#lock-restore-option)

+ 2. [Repository Scan](#repository-scan)

+ 1. [Branch Option](#branch-option)

+ 3. [Path Scan](#path-scan)

+ 1. [Terraform Plan Scan](#terraform-plan-scan)

+ 4. [Commit History Scan](#commit-history-scan)

+ 1. [Commit Range Option (Diff Scanning)](#commit-range-option-diff-scanning)

+ 5. [Pre-Commit Scan](#pre-commit-scan)

+ 6. [Pre-Push Scan](#pre-push-scan)

+ 2. [Scan Results](#scan-results)

+ 1. [Show/Hide Secrets](#showhide-secrets)

+ 2. [Soft Fail](#soft-fail)

+ 3. [Example Scan Results](#example-scan-results)

+ 1. [Secrets Result Example](#secrets-result-example)

+ 2. [IaC Result Example](#iac-result-example)

+ 3. [SCA Result Example](#sca-result-example)

+ 4. [SAST Result Example](#sast-result-example)

+ 4. [Company Custom Remediation Guidelines](#company-custom-remediation-guidelines)

+ 3. [Ignoring Scan Results](#ignoring-scan-results)

+ 1. [Ignoring a Secret Value](#ignoring-a-secret-value)

+ 2. [Ignoring a Secret SHA Value](#ignoring-a-secret-sha-value)

+ 3. [Ignoring a Path](#ignoring-a-path)

+ 4. [Ignoring a Secret, IaC, SCA, or SAST Rule](#ignoring-a-secret-iac-sca-or-sast-rule)

+ 5. [Ignoring a Package](#ignoring-a-package)

+ 6. [Ignoring via a config file](#ignoring-via-a-config-file)

+6. [Report command](#report-command)

+ 1. [Generating SBOM Report](#generating-sbom-report)

+7. [Import command](#import-command)

+8. [Scan logs](#scan-logs)

+9. [Syntax Help](#syntax-help)

# Prerequisites

-- The Cycode CLI application requires Python version 3.7 or later.

-- Use the [`cycode auth` command](#use-auth-command) to authenticate to Cycode with the CLI

- - Alternatively, a Cycode Client ID and Client Secret Key can be acquired using the steps from the [Service Account Token](https://docs.cycode.com/reference/creating-a-service-account-access-token) and [Personal Access Token](https://docs.cycode.com/reference/creating-a-personal-access-token-1) pages for details on obtaining these values.

+- The Cycode CLI application requires Python version 3.9 or later. The MCP command is available only for Python 3.10 and above. If you're using an earlier Python version, this command will not be available.

+- Use the [`cycode auth` command](#using-the-auth-command) to authenticate to Cycode with the CLI

+ - Alternatively, you can get a Cycode Client ID and Client Secret Key by following the steps in the [Service Account Token](https://docs.cycode.com/docs/en/service-accounts) and [Personal Access Token](https://docs.cycode.com/v1/docs/managing-personal-access-tokens) pages.

# Installation

-The following installation steps are applicable on both Windows and UNIX / Linux operating systems.

+The following installation steps are applicable to both Windows and UNIX / Linux operating systems.

-> :memo: **Note**

-> The following steps assume the use of `python3` and `pip3` for Python-related commands, but some systems may instead use the `python` and `pip` commands, depending on your Python environment’s configuration.

+> [!NOTE]

+> The following steps assume the use of `python3` and `pip3` for Python-related commands; however, some systems may instead use the `python` and `pip` commands, depending on your Python environment’s configuration.

## Install Cycode CLI

@@ -63,21 +79,37 @@ To install the Cycode CLI application on your local machine, perform the followi

1. Open your command line or terminal application.

-2. Execute the following command:

+2. Execute one of the following commands:

- `pip3 install cycode`

+ - To install from [PyPI](https://pypi.org/project/cycode/):

-3. Navigate to the top directory of the local repository you wish to scan.

+ ```bash

+ pip3 install cycode

+ ```

-4. There are three methods to set the Cycode client ID and client secret:

+ - To install from [Homebrew](https://formulae.brew.sh/formula/cycode):

- - [cycode auth](#use-auth-command) (**Recommended**)

- - [cycode configure](#use-configure-command)

+ ```bash

+ brew install cycode

+ ```

+

+ - To install from [GitHub Releases](https://github.com/cycodehq/cycode-cli/releases), download the executable for your operating system and architecture, then run the following commands:

+

+ ```bash

+ cd /path/to/downloaded/cycode-cli

+ chmod +x cycode

+ ./cycode

+ ```

+

+3. Finally, authenticate the CLI. There are three methods to set the Cycode client ID and credentials (client secret or OIDC ID token):

+

+ - [cycode auth](#using-the-auth-command) (**Recommended**)

+ - [cycode configure](#using-the-configure-command)

- Add them to your [environment variables](#add-to-environment-variables)

-### Use auth Command

+### Using the Auth Command

-> :memo: **Note**

+> [!NOTE]

> This is the **recommended** method for setting up your local machine to authenticate with Cycode CLI.

1. Type the following command into your terminal/command line window:

@@ -86,66 +118,70 @@ To install the Cycode CLI application on your local machine, perform the followi

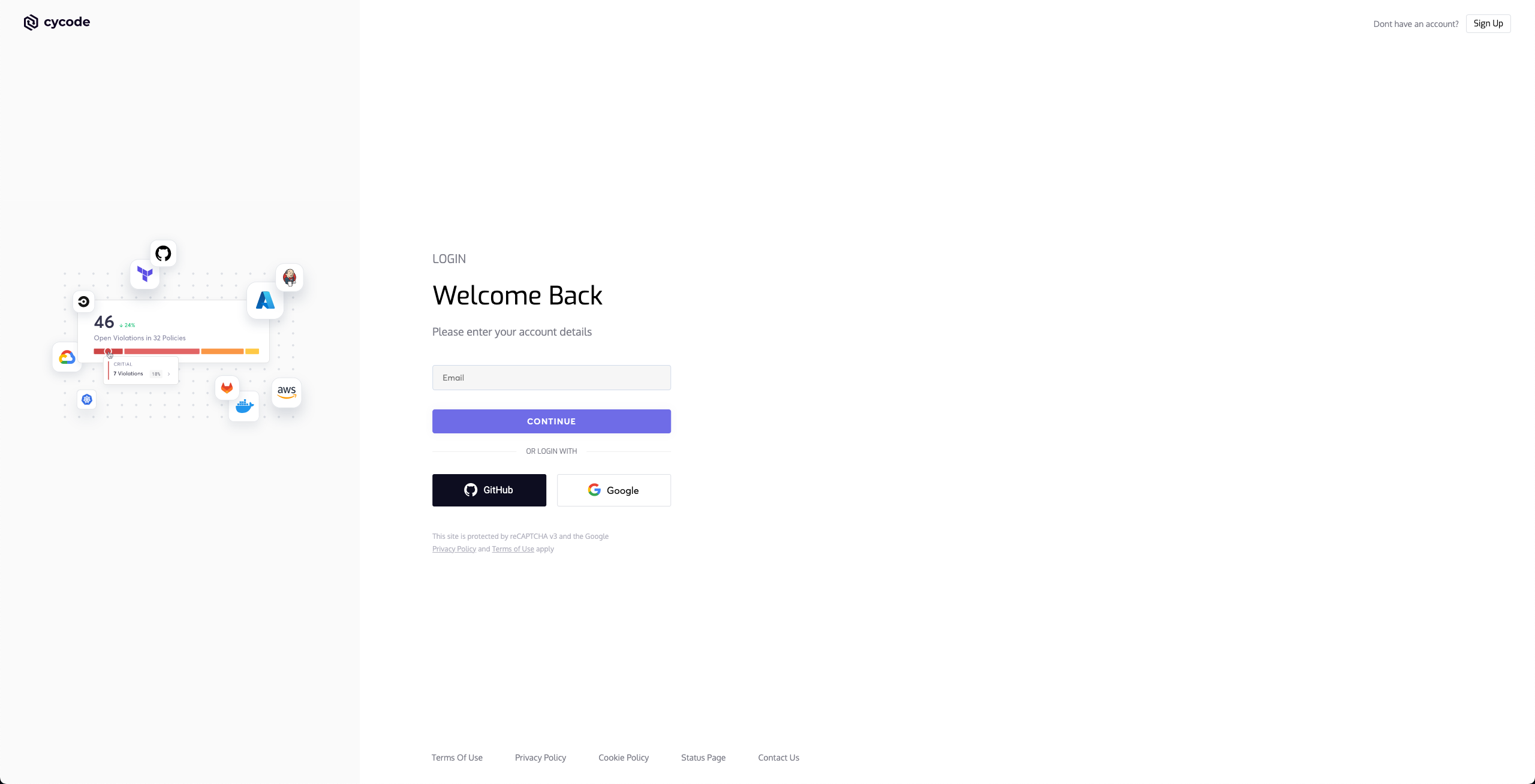

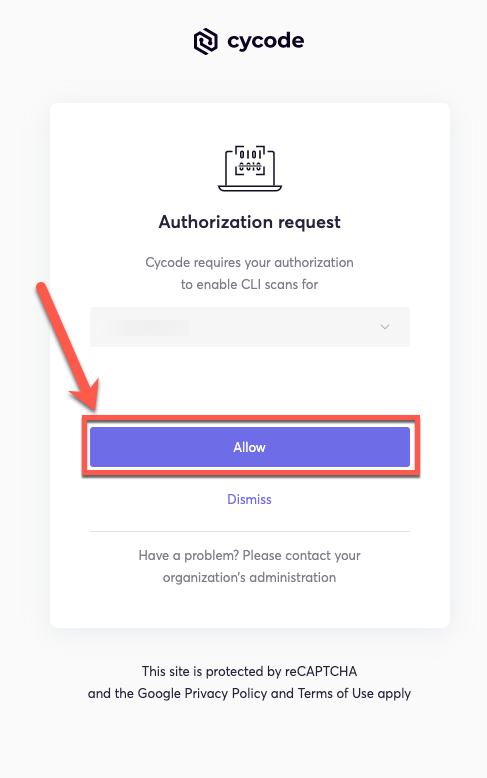

2. A browser window will appear, asking you to log into Cycode (as seen below):

-

+

-3. Enter you login credentials on this page and log in.

+3. Enter your login credentials on this page and log in.

-4. You will eventually be taken to this page, where you will be asked to choose the business group you want to authorize Cycode with (if applicable):

+4. You will eventually be taken to the page below, where you'll be asked to choose the business group you want to authorize Cycode with (if applicable):

-

+

-> :memo: **Note**

-> This will be the default method for authenticating with the Cycode CLI.

+ > [!NOTE]

+ > This will be the default method for authenticating with the Cycode CLI.

-5. Click the **Allow** button to authorize the Cycode CLI on the chosen business group.

+5. Click the **Allow** button to authorize the Cycode CLI on the selected business group.

-

+

-6. Once done, you will see the following screen, if it was successfully selected:

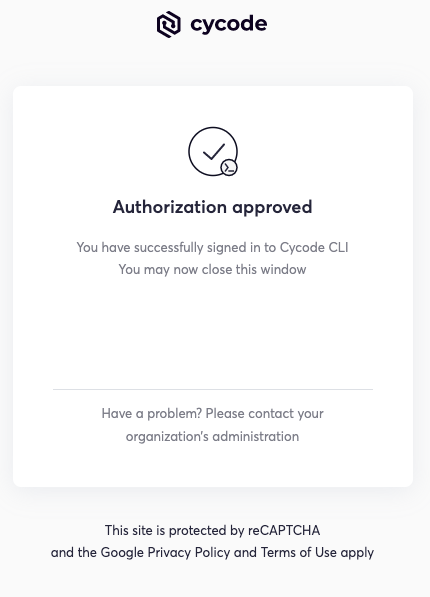

+6. Once completed, you'll see the following screen if it was selected successfully:

-

+

7. In the terminal/command line screen, you will see the following when exiting the browser window:

- ```bash

- Successfully logged into cycode

- ```

+ `Successfully logged into cycode`

-### Use configure Command

+### Using the Configure Command

-> :memo: **Note**

-> If you already setup your Cycode client ID and client secret through the Linux or Windows environment variables, those credentials will take precedent over this method

+> [!NOTE]

+> If you already set up your Cycode Client ID and Client Secret through the Linux or Windows environment variables, those credentials will take precedence over this method.

1. Type the following command into your terminal/command line window:

- `cycode configure`

+ ```bash

+ cycode configure

+ ```

- You will see the following appear:

+2. Enter your Cycode API URL value (leave blank to use the default value).

- ```bash

- Update credentials in file (/Users/travislloyd/.cycode/credentials.yaml)

- cycode client id []:

- ```

+ `Cycode API URL [https://api.cycode.com]: https://api.onpremise.com`

-2. Enter your Cycode client ID value.

+3. Enter your Cycode APP URL value (leave blank to use the default value).

- ```bash

- cycode client id []: 7fe5346b-xxxx-xxxx-xxxx-55157625c72d

- ```

+ `Cycode APP URL [https://app.cycode.com]: https://app.onpremise.com`

-3. Enter your Cycode client secret value.

+4. Enter your Cycode Client ID value.

- ```bash

- cycode client secret []: c1e24929-xxxx-xxxx-xxxx-8b08c1839a2e

- ```

+ `Cycode Client ID []: 7fe5346b-xxxx-xxxx-xxxx-55157625c72d`

-4. If the values were entered successfully, you will see the following message:

+5. Enter your Cycode Client Secret value (skip if you plan to use an OIDC ID token).

- ```bash

- Successfully configured CLI credentials!

- ```

+ `Cycode Client Secret []: c1e24929-xxxx-xxxx-xxxx-8b08c1839a2e`

+

+6. Enter your Cycode OIDC ID Token value (optional).

-If you go into the `.cycode` folder under you user folder, you will find these credentials were created and placed in the `credentials.yaml` file in that folder.

+ `Cycode ID Token []: eyJhbGciOiJSUzI1NiIsInR5cCI6IkpXVCJ9...`

+

+7. If the values were entered successfully, you'll see the following message:

+

+ `Successfully configured CLI credentials!`

+

+ and/or

+

+ `Successfully configured Cycode URLs!`

+

+If you go into the `.cycode` folder under your user folder, you'll find these credentials were created and placed in the `credentials.yaml` file in that folder.

+The URLs were placed in the `config.yaml` file in that folder.

### Add to Environment Variables

@@ -153,261 +189,540 @@ If you go into the `.cycode` folder under you user folder, you will find these c

```bash

export CYCODE_CLIENT_ID={your Cycode ID}

+```

+

+and

+

+```bash

export CYCODE_CLIENT_SECRET={your Cycode Secret Key}

```

+If your organization uses OIDC authentication, you can provide the ID token instead (or in addition):

+

+```bash

+export CYCODE_ID_TOKEN={your Cycode OIDC ID token}

+```

+
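As a rough illustration of the variables above, the following sketch reports which credentials are present in the environment before you run a scan. The helper name `describe_cycode_credentials` is hypothetical, and the rule it applies (a client ID plus either a client secret or an OIDC ID token) is an assumption drawn from the wording of this guide, not a description of how the CLI itself resolves credentials.

```python
import os
from typing import Mapping, Optional


def describe_cycode_credentials(env: Optional[Mapping[str, str]] = None) -> str:
    """Summarize which Cycode credential variables are set (hypothetical helper)."""
    env = os.environ if env is None else env
    has_id = bool(env.get("CYCODE_CLIENT_ID"))
    has_secret = bool(env.get("CYCODE_CLIENT_SECRET"))
    has_token = bool(env.get("CYCODE_ID_TOKEN"))

    # Assumption: the client ID is needed with either credential type.
    if not has_id:
        return "missing: CYCODE_CLIENT_ID"
    if has_secret and has_token:
        return "client secret and OIDC ID token"
    if has_secret:
        return "client secret"
    if has_token:
        return "OIDC ID token"
    return "missing: CYCODE_CLIENT_SECRET or CYCODE_ID_TOKEN"


if __name__ == "__main__":
    print(describe_cycode_credentials())
```

Passing a plain dictionary instead of `os.environ` makes the check easy to try without changing your shell.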

#### On Windows

1. From the Control Panel, navigate to the System menu:

-

+

2. Next, click Advanced system settings:

-

+

3. In the System Properties window that opens, click the Environment Variables button:

-

+

+

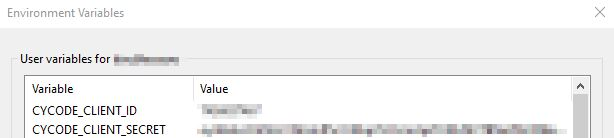

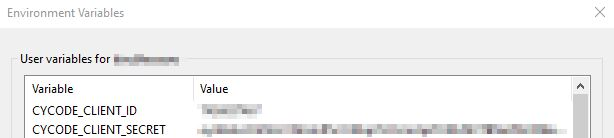

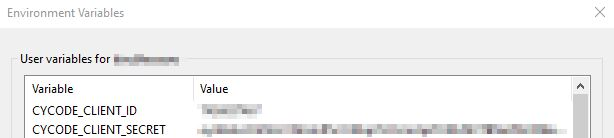

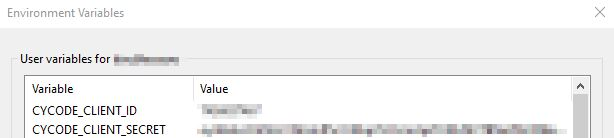

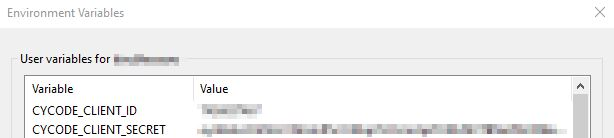

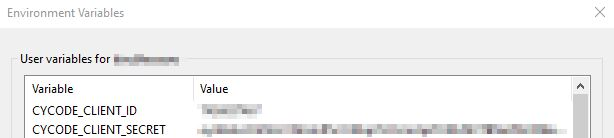

+4. Create `CYCODE_CLIENT_ID` and `CYCODE_CLIENT_SECRET` variables with values matching your ID and Secret Key, respectively. If you authenticate via OIDC, add `CYCODE_ID_TOKEN` with your OIDC ID token value as well:

-4. Create `CYCODE_CLIENT_ID` and `CYCODE_CLIENT_SECRET` variables with values matching your ID and Secret Key, respectively:

+

-

+5. Insert the `cycode.exe` into the path to complete the installation.

## Install Pre-Commit Hook

-Cycode’s pre-commit hook can be set up within your local repository so that the Cycode CLI application will automatically identify any issues with your code before you commit it to your codebase.

+Cycode's pre-commit and pre-push hooks can be set up within your local repository so that the Cycode CLI application will identify any issues with your code automatically before you commit or push it to your codebase.

+

+> [!NOTE]

+> pre-commit and pre-push hooks are not available for IaC scans.

Perform the following steps to install the pre-commit hook:

-1. Install the pre-commit framework:

+### Installing Pre-Commit Hook

+

+1. Install the pre-commit framework (Python 3.9 or higher must be installed):

- `pip3 install pre-commit`

+ ```bash

+ pip3 install pre-commit

+ ```

-2. Navigate to the top directory of the local repository you wish to scan.

+2. Navigate to the top directory of the local Git repository you wish to configure.

3. Create a new YAML file named `.pre-commit-config.yaml` (include the beginning `.`) in the repository’s top directory that contains the following:

-```yaml

-repos:

- - repo: https://github.com/cycodehq-public/cycode-cli

- rev: stable

- hooks:

- - id: cycode

- language_version: python3

- stages:

- - commit

-```

+ ```yaml

+ repos:

+ - repo: https://github.com/cycodehq/cycode-cli

+ rev: v3.5.0

+ hooks:

+ - id: cycode

+ stages: [pre-commit]

+ ```

-4. Install Cycode’s hook:

+4. Modify the created file for your specific needs. Use hook ID `cycode` to enable scan for Secrets. Use hook ID `cycode-sca` to enable SCA scan. Use hook ID `cycode-sast` to enable SAST scan. If you want to enable all scanning types, use this configuration:

+

+ ```yaml

+ repos:

+ - repo: https://github.com/cycodehq/cycode-cli

+ rev: v3.5.0

+ hooks:

+ - id: cycode

+ stages: [pre-commit]

+ - id: cycode-sca

+ stages: [pre-commit]

+ - id: cycode-sast

+ stages: [pre-commit]

+ ```

- `pre-commit install`

+5. Install Cycode’s hook:

-> :memo: **Note**

-> Successful hook installation will result in the message:

-`Pre-commit installed at .git/hooks/pre-commit`

+ ```bash

+ pre-commit install

+ ```

-# Cycode Command

+ A successful hook installation will result in the message: `Pre-commit installed at .git/hooks/pre-commit`.

-The following are the options and commands available with the Cycode CLI application:

+6. Keep the pre-commit hook up to date:

-| Option | Description |

-|--------------------------------|-------------------------------------------------------------------|

-| `--output [text\|json\|table]` | Specify the output (`text`/`json`/`table`). The default is `text` |

-| `-v`, `--verbose` | Show detailed logs |

-| `--version` | Show the version and exit. |

-| `--help` | Show options for given command. |

+ ```bash

+ pre-commit autoupdate

+ ```

-| Command | Description |

-|-------------------------------------|-------------|

-| [auth](#use-auth-command) | Authenticates your machine to associate CLI with your Cycode account. |

-| [configure](#use-configure-command) | Initial command to authenticate your CLI client with Cycode using client ID and client secret. |

-| [ignore](#ingoring-scan-results) | Ignore a specific value, path or rule ID |

-| [scan](#running-a-scan) | Scan content for secrets/IaC/SCA/SAST violations. You need to specify which scan type: `ci`/`commit_history`/`path`/`repository`/etc |

+ It will automatically bump `rev` in `.pre-commit-config.yaml` to the latest available version of Cycode CLI.

-# Running a Scan

+> [!NOTE]

+> The hook triggers on the `git commit` command.

+> It scans only the files that are staged for commit.
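
The hook configuration described in the steps above can also be generated rather than written by hand. The sketch below is a minimal illustration, assuming only the repository URL, `rev` value, hook IDs, and stages shown in this guide; the function name `render_pre_commit_config` is hypothetical, and it builds the YAML as plain text so that no third-party library is needed.

```python
from typing import Dict, Iterable

CYCODE_REPO = "https://github.com/cycodehq/cycode-cli"

# Hook IDs and their stages, as listed in this guide.
HOOK_STAGES: Dict[str, str] = {
    "cycode": "pre-commit",
    "cycode-sca": "pre-commit",
    "cycode-sast": "pre-commit",
    "cycode-pre-push": "pre-push",
}


def render_pre_commit_config(hook_ids: Iterable[str], rev: str = "v3.5.0") -> str:
    """Return the text of a .pre-commit-config.yaml for the chosen hooks."""
    hook_ids = list(hook_ids)
    if not hook_ids:
        raise ValueError("at least one hook ID is required")

    lines = ["repos:", f"  - repo: {CYCODE_REPO}", f"    rev: {rev}", "    hooks:"]
    for hook_id in hook_ids:
        if hook_id not in HOOK_STAGES:
            raise ValueError(f"unknown hook ID: {hook_id}")
        lines.append(f"      - id: {hook_id}")
        lines.append(f"        stages: [{HOOK_STAGES[hook_id]}]")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Print a configuration that enables Secrets and SCA scanning on commit.
    print(render_pre_commit_config(["cycode", "cycode-sca"]), end="")
```

Redirect the output to `.pre-commit-config.yaml` in the repository's top directory, then run `pre-commit install` as described above.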

-The Cycode CLI application offers several types of scans so that you can choose the option that best fits your case. The following are the current options and commands available:

+### Installing Pre-Push Hook

-| Option | Description |

-|--------------------------------------|----------------------------------------------------------------------------|

-| `-t, --scan-type [secret\|iac\|sca\|sast]` | Specify the scan you wish to execute (`secret`/`iac`/`sca`/`sast`), the default is `secret` |

-| `--secret TEXT` | Specify a Cycode client secret for this specific scan execution |

-| `--client-id TEXT` | Specify a Cycode client ID for this specific scan execution |

-| `--show-secret BOOLEAN` | Show secrets in plain text. See [Show/Hide Secrets](#showhide-secrets) section for more details. |

-| `--soft-fail BOOLEAN` | Run scan without failing, always return a non-error status code. See [Soft Fail](#soft-fail) section for more details. |

-| `--severity-threshold [INFO\|LOW\|MEDIUM\|HIGH\|CRITICAL]` | Show only violations at the specified level or higher (supported for the SCA scan type only). |

-| `--sca-scan` | Specify the SCA scan you wish to execute (`package-vulnerabilities`/`license-compliance`). The default is both |

-| `--monitor` | When specified, the scan results will be recorded in the knowledge graph. Please note that when working in `monitor` mode, the knowledge graph will not be updated as a result of SCM events (Push, Repo creation). (Supported for SCA scan type only). |

-| `--report` | When specified, a violations report will be generated. A URL link to the report will be printed as an output to the command execution |

-| `--help` | Show options for given command. |

-

-| Command | Description |

-|----------------------------------------|-----------------------------------------------------------------|

-| [commit_history](#commit-history-scan) | Scan all the commits history in this git repository |

-| [path](#path-scan) | Scan the files in the path supplied in the command |

-| [pre_commit](#pre-commit-scan) | Use this command to scan the content that was not committed yet |

-| [repository](#repository-scan) | Scan git repository including its history |

-

-## Repository Scan

-

-A repository scan examines an entire local repository for any exposed secrets or insecure misconfigurations. This more holistic scan type looks at everything: the current state of your repository and its commit history. It will look not only for currently exposed secrets within the repository but previously deleted secrets as well.

+To install the pre-push hook in addition to or instead of the pre-commit hook:

-To execute a full repository scan, execute the following:

+1. Add the pre-push hooks to your `.pre-commit-config.yaml` file:

-`cycode scan repository {{path}}`

+ ```yaml

+ repos:

+ - repo: https://github.com/cycodehq/cycode-cli

+ rev: v3.5.0

+ hooks:

+ - id: cycode-pre-push

+ stages: [pre-push]

+ ```

-For example, consider a scenario in which you want to scan your repository stored in `~/home/git/codebase`. You could then execute the following:

+2. Install the pre-push hook:

-`cycode scan repository ~/home/git/codebase`

+ ```bash

+ pre-commit install --hook-type pre-push

+ ```

-The following option is available for use with this command:

+3. For both pre-commit and pre-push hooks, use:

-| Option | Description |

-|---------------------|-------------|

-| `-b, --branch TEXT` | Branch to scan, if not set scanning the default branch |

+ ```bash

+ pre-commit install

+ pre-commit install --hook-type pre-push

+ ```

-### Branch Option

+> [!NOTE]

+> Pre-push hooks trigger on the `git push` command and scan only the commits about to be pushed.

-To scan a specific branch of your local repository, add the argument `-b` (alternatively, `--branch`) followed by the name of the branch you wish to scan.

+# Cycode CLI Commands

-Consider the previous example. If you wanted to only scan a branch named `dev`, you could execute the following:

+The following are the options and commands available with the Cycode CLI application:

-`cycode scan repository ~/home/git/codebase -b dev`

+| Option | Description |

+|-------------------------------------------------------------------|------------------------------------------------------------------------------------|

+| `-v`, `--verbose` | Show detailed logs. |

+| `--no-progress-meter` | Do not show the progress meter. |

+| `--no-update-notifier` | Do not check CLI for updates. |

+| `-o`, `--output [rich\|text\|json\|table]` | Specify the output type. The default is `rich`. |

+| `--client-id TEXT` | Specify a Cycode client ID for this specific scan execution. |

+| `--client-secret TEXT` | Specify a Cycode client secret for this specific scan execution. |

+| `--id-token TEXT` | Specify a Cycode OIDC ID token for this specific scan execution. |

+| `--install-completion` | Install completion for the current shell. |

+| `--show-completion [bash\|zsh\|fish\|powershell\|pwsh]` | Show completion for the specified shell, to copy it or customize the installation. |

+| `-h`, `--help` | Show options for given command. |

-or:

+| Command | Description |

+|-------------------------------------------|----------------------------------------------------------------------------------------------------------------------------------------------|

+| [auth](#using-the-auth-command) | Authenticate your machine to associate the CLI with your Cycode account. |

+| [configure](#using-the-configure-command) | Initial command to configure your CLI client authentication. |

+| [ignore](#ignoring-scan-results) | Ignore a specific value, path or rule ID. |

+| [mcp](#mcp-command-experiment) | Start the Model Context Protocol (MCP) server to enable AI integration with Cycode scanning capabilities. |

+| [scan](#running-a-scan) | Scan the content for Secrets/IaC/SCA/SAST violations. You'll need to specify which scan type to perform: commit-history/path/repository/etc. |

+| [report](#report-command) | Generate a report. You'll need to specify which report type to generate, such as SBOM. |

+| status | Show the CLI status and exit. |

-`cycode scan repository ~/home/git/codebase --branch dev`

+# MCP Command \[EXPERIMENT\]

-## Monitor Option

+> [!WARNING]

+> The MCP command is available only for Python 3.10 and above. If you're using an earlier Python version, this command will not be available.

-> :memo: **Note**

-> This option is only available to SCA scans.

+The Model Context Protocol (MCP) command allows you to start an MCP server that exposes Cycode's scanning capabilities to AI systems and applications. This enables AI models to interact with Cycode CLI tools via a standardized protocol.

-To push scan results tied to the [SCA policies](https://docs.cycode.com/docs/sca-policies) found in an SCA type scan to Cycode's knowledge graph, add the argument `--monitor` to the scan command.

+> [!TIP]

+> For the best experience, install Cycode CLI globally on your system using `pip install cycode` or `brew install cycode`, then authenticate once with `cycode auth`. After global installation and authentication, you won't need to configure `CYCODE_CLIENT_ID` and `CYCODE_CLIENT_SECRET` environment variables in your MCP configuration files.

-Consider the following example. The following command will scan the repository for SCA policy violations and push them to Cycode:

+[](https://cursor.com/en/install-mcp?name=cycode&config=eyJjb21tYW5kIjoidXZ4IGN5Y29kZSBtY3AiLCJlbnYiOnsiQ1lDT0RFX0NMSUVOVF9JRCI6InlvdXItY3ljb2RlLWlkIiwiQ1lDT0RFX0NMSUVOVF9TRUNSRVQiOiJ5b3VyLWN5Y29kZS1zZWNyZXQta2V5IiwiQ1lDT0RFX0FQSV9VUkwiOiJodHRwczovL2FwaS5jeWNvZGUuY29tIiwiQ1lDT0RFX0FQUF9VUkwiOiJodHRwczovL2FwcC5jeWNvZGUuY29tIn19)

-`cycode scan -t sca --monitor repository ~/home/git/codebase`

-or:

+## Starting the MCP Server

-`cycode scan --scan-type sca --monitor repository ~/home/git/codebase`

+To start the MCP server, use the following command:

-When using this option, the scan results from this scan will appear in the knowledge graph, which can be found [here](https://app.cycode.com/query-builder).

+```bash

+cycode mcp

+```

-> :warning: **NOTE**

-> You must be an `owner` or an `admin` in Cycode to view the knowledge graph page.

+By default, this starts the server using the `stdio` transport, which is suitable for local integrations and AI applications that can spawn subprocesses.

-## Report Option

+### Available Options

-> :memo: **Note**

-> This option is only available to SCA scans.

+| Option | Description |

+|-------------------|--------------------------------------------------------------------------------------------|

+| `-t, --transport` | Transport type for the MCP server: `stdio`, `sse`, or `streamable-http` (default: `stdio`) |

+| `-H, --host` | Host address to bind the server (used only for non-stdio transports) (default: `127.0.0.1`) |

+| `-p, --port` | Port number to bind the server (used only for non-stdio transports) (default: `8000`) |

+| `--help` | Show help message and available options |

-To push scan results tied to the [SCA policies](https://docs.cycode.com/docs/sca-policies) found in the Repository scan to Cycode, add the argument `--report` to the scan command.

+### MCP Tools

-`cycode scan -t sca --report repository ~/home/git/codebase`

+The MCP server provides the following tools that AI systems can use:

-or:

+| Tool Name | Description |

+|----------------------|---------------------------------------------------------------------------------------------|

+| `cycode_secret_scan` | Scan files for hardcoded secrets |

+| `cycode_sca_scan` | Scan files for Software Composition Analysis (SCA) - vulnerabilities and license issues |

+| `cycode_iac_scan` | Scan files for Infrastructure as Code (IaC) misconfigurations |

+| `cycode_sast_scan` | Scan files for Static Application Security Testing (SAST) - code quality and security flaws |

+| `cycode_status` | Get Cycode CLI version, authentication status, and configuration information |

-`cycode scan --scan-type sca --report repository ~/home/git/codebase`

+### Usage Examples

-When using this option, the scan results from this scan will appear in the On-Demand Scans section of Cycode. To get to this page, click the link that appears after the printed results:

+#### Basic Command Examples

-> :warning: **NOTE**

-> You must be an `owner` or an `admin` in Cycode to view this page.

+Start the MCP server with default settings (stdio transport):

+```bash

+cycode mcp

+```

+Start the MCP server with explicit stdio transport:

```bash

-Scan Results: (scan_id: e04e06e5-6dd8-474f-b409-33bbee67270b)

-⛔ Found issue of type: Security vulnerability in package 'vyper' referenced in project '': Multiple evaluation of contract address in call in vyper (rule ID: d003b23a-a2eb-42f3-83c9-7a84505603e5) in file: ./requirements.txt ⛔

+cycode mcp -t stdio

+```

-1 | PyYAML~=5.3.1

-2 | vyper==0.3.1

-3 | cleo==1.0.0a5

+Start the MCP server with Server-Sent Events (SSE) transport:

+```bash

+cycode mcp -t sse -p 8080

+```

-⛔ Found issue of type: Security vulnerability in package 'vyper' referenced in project '': Integer bounds error in Vyper (rule ID: d003b23a-a2eb-42f3-83c9-7a84505603e5) in file: ./requirements.txt ⛔

+Start the MCP server with streamable HTTP transport on custom host and port:

+```bash

+cycode mcp -t streamable-http -H 0.0.0.0 -p 9000

+```

+

+Learn more about MCP Transport types in the [MCP Protocol Specification – Transports](https://modelcontextprotocol.io/specification/2025-03-26/basic/transports).
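
To connect a client to a server started with one of the network transports, you need the matching URL. The sketch below is illustrative only: the helper name `mcp_client_url` is hypothetical, and the `/sse` and `/mcp` paths are taken from the client configuration examples in this guide rather than from the CLI's source.

```python
def mcp_client_url(transport: str, host: str = "127.0.0.1", port: int = 8000) -> str:
    """Build the URL a client would use for a non-stdio MCP transport."""
    # Paths as they appear in this guide's client configuration examples.
    paths = {"sse": "/sse", "streamable-http": "/mcp"}

    if transport == "stdio":
        # With stdio, the client spawns `cycode mcp` itself, so there is no URL.
        raise ValueError("the stdio transport has no URL")
    if transport not in paths:
        raise ValueError(f"unknown transport: {transport}")
    return f"http://{host}:{port}{paths[transport]}"


if __name__ == "__main__":
    print(mcp_client_url("sse", port=8080))
    print(mcp_client_url("streamable-http"))
```

If the server was bound to `0.0.0.0`, pass an address the client can actually reach (for example `127.0.0.1` on the same machine) rather than `0.0.0.0`.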

+

+#### Configuration Examples

+

+##### Using MCP with Cursor/VS Code/Claude Desktop/etc (mcp.json)

+

+> [!NOTE]

+> For EU Cycode environments, make sure to set the appropriate `CYCODE_API_URL` and `CYCODE_APP_URL` values in the environment variables (e.g., `https://api.eu.cycode.com` and `https://app.eu.cycode.com`).

+

+Follow [this guide](https://code.visualstudio.com/docs/copilot/chat/mcp-servers) to configure the MCP server in your **VS Code/GitHub Copilot**. Keep in mind that in `settings.json`, there is an `mcp` object containing a nested `servers` sub-object, rather than a standalone `mcpServers` object.

+

+For **stdio transport** (direct execution):

+```json

+{

+ "mcpServers": {

+ "cycode": {

+ "command": "cycode",

+ "args": ["mcp"],

+ "env": {

+ "CYCODE_CLIENT_ID": "your-cycode-id",

+ "CYCODE_CLIENT_SECRET": "your-cycode-secret-key",

+ "CYCODE_API_URL": "https://api.cycode.com",

+ "CYCODE_APP_URL": "https://app.cycode.com"

+ }

+ }

+ }

+}

+```

+

+For **stdio transport** with `pipx` installation:

+```json

+{

+ "mcpServers": {

+ "cycode": {

+ "command": "pipx",

+ "args": ["run", "cycode", "mcp"],

+ "env": {

+ "CYCODE_CLIENT_ID": "your-cycode-id",

+ "CYCODE_CLIENT_SECRET": "your-cycode-secret-key",

+ "CYCODE_API_URL": "https://api.cycode.com",

+ "CYCODE_APP_URL": "https://app.cycode.com"

+ }

+ }

+ }

+}

+```

+

+For **stdio transport** with `uvx` installation:

+```json

+{

+ "mcpServers": {

+ "cycode": {

+ "command": "uvx",

+ "args": ["cycode", "mcp"],

+ "env": {

+ "CYCODE_CLIENT_ID": "your-cycode-id",

+ "CYCODE_CLIENT_SECRET": "your-cycode-secret-key",

+ "CYCODE_API_URL": "https://api.cycode.com",

+ "CYCODE_APP_URL": "https://app.cycode.com"

+ }

+ }

+ }

+}

+```

-1 | PyYAML~=5.3.1

-2 | vyper==0.3.1

-3 | cleo==1.0.0a5

+For **SSE transport** (Server-Sent Events):

+```json

+{

+ "mcpServers": {

+ "cycode": {

+ "url": "http://127.0.0.1:8000/sse"

+ }

+ }

+}

+```

-⛔ Found issue of type: Security vulnerability in package 'pyyaml' referenced in project '': Improper Input Validation in PyYAML (rule ID: d003b23a-a2eb-42f3-83c9-7a84505603e5) in file: ./requirements.txt ⛔

+For **SSE transport** on custom port:

+```json

+{

+ "mcpServers": {

+ "cycode": {

+ "url": "http://127.0.0.1:8080/sse"

+ }

+ }

+}

+```

-1 | PyYAML~=5.3.1

-2 | vyper==0.3.1

-3 | cleo==1.0.0a5

+For **streamable HTTP transport**:

+```json

+{

+ "mcpServers": {

+ "cycode": {

+ "url": "http://127.0.0.1:8000/mcp"

+ }

+ }

+}

+```
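
The configurations above differ only in a few fields, so they can be generated rather than copied by hand. The following is a minimal Python sketch, not part of the Cycode CLI: `build_mcp_config` is a hypothetical helper, and the `/sse` and `/mcp` URL paths and the `127.0.0.1:8000` defaults are taken from the examples above rather than from any documented guarantee.

```python
import json

# URL paths as they appear in the examples above (assumed, not queried from the CLI).
URL_PATHS = {"sse": "/sse", "streamable-http": "/mcp"}


def build_mcp_config(transport="stdio", host="127.0.0.1", port=8000, env=None):
    """Return an mcp.json-style dict for one transport (hypothetical helper)."""
    if transport == "stdio":
        # stdio: the client launches the CLI itself and passes credentials via env.
        server = {"command": "cycode", "args": ["mcp"]}
        if env:
            server["env"] = dict(env)
    elif transport in URL_PATHS:
        # sse / streamable-http: the client connects to an already running server.
        server = {"url": f"http://{host}:{port}{URL_PATHS[transport]}"}
    else:
        raise ValueError(f"unsupported transport: {transport}")
    return {"mcpServers": {"cycode": server}}


if __name__ == "__main__":
    # Print the SSE configuration for a server started with `-p 8080`.
    print(json.dumps(build_mcp_config("sse", port=8080), indent=2))
```

For VS Code, the same `cycode` entry would need to be placed under `mcp` → `servers` instead of `mcpServers`, as noted earlier.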

-⛔ Found issue of type: Security vulnerability in package 'cleo' referenced in project '': cleo is vulnerable to Regular Expression Denial of Service (ReDoS) (rule ID: d003b23a-a2eb-42f3-83c9-7a84505603e5) in file: ./requirements.txt ⛔

+##### Running MCP Server in Background

-2 | vyper==0.3.1

-3 | cleo==1.0.0a5

-4 |

+For **SSE transport** (start server first, then configure client):

+```bash

+# Start the MCP server in the background

+cycode mcp -t sse -p 8000 &

+

+# Configure in mcp.json

+{

+ "mcpServers": {

+ "cycode": {

+ "url": "http://127.0.0.1:8000/sse"

+ }

+ }

+}

+```

-⛔ Found issue of type: Security vulnerability in package 'vyper' referenced in project '': Incorrect Comparison in Vyper (rule ID: d003b23a-a2eb-42f3-83c9-7a84505603e5) in file: ./requirements.txt ⛔

+For **streamable HTTP transport**:

+```bash

+# Start the MCP server in the background

+cycode mcp -t streamable-http -H 127.0.0.2 -p 9000 &

+

+# Configure in mcp.json

+{

+ "mcpServers": {

+ "cycode": {

+ "url": "http://127.0.0.2:9000/mcp"

+ }

+ }

+}

+```

-1 | PyYAML~=5.3.1

-2 | vyper==0.3.1

-3 | cleo==1.0.0a5

+> [!NOTE]

+> The MCP server requires proper Cycode CLI authentication to function. Make sure you have authenticated using `cycode auth` or configured your credentials before starting the MCP server.

-⛔ Found issue of type: Security vulnerability in package 'vyper' referenced in project '': Buffer Overflow in vyper (rule ID: d003b23a-a2eb-42f3-83c9-7a84505603e5) in file: ./requirements.txt ⛔

+### Troubleshooting MCP

-1 | PyYAML~=5.3.1

-2 | vyper==0.3.1

-3 | cleo==1.0.0a5

+If you encounter issues with the MCP server, enable debug logging to get more detailed information about what is happening. There are two ways to do so:

-Report URL: https://app.cycode.com/on-demand-scans/617ecc3d-9ff2-493e-8be8-2c1fecaf6939

+1. Using the `-v` or `--verbose` flag:

+```bash

+cycode -v mcp

```

+2. Using the `CYCODE_CLI_VERBOSE` environment variable:

+```bash

+CYCODE_CLI_VERBOSE=1 cycode mcp

+```

+

+The debug logs will show detailed information about:

+- Server startup and configuration

+- Connection attempts and status

+- Tool execution and results

+- Any errors or warnings that occur

+

+This information can be helpful when:

+- Diagnosing connection issues

+- Understanding why certain tools aren't working

+- Identifying authentication problems

+- Debugging transport-specific issues
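
When diagnosing connection issues with the SSE or streamable HTTP transports, it can help to first confirm that something is listening on the expected host and port. The sketch below is a generic TCP check, not a Cycode feature: `is_port_open` is a hypothetical helper, and the `127.0.0.1:8000` defaults are taken from the configuration examples above. A successful connection only shows that a process accepts connections there, not that it is the MCP server.

```python
import socket


def is_port_open(host="127.0.0.1", port=8000, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    print("reachable" if is_port_open() else "not reachable")
```

If the port is not reachable, check that the server was started with the same `-H` and `-p` values used in the client URL.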

+

+

+# Scan Command

+

+## Running a Scan

+

+The Cycode CLI application offers several types of scans so that you can choose the option that best fits your case. The following are the current options and commands available:

+

+| Option | Description |

+|------------------------------------------------------------|----------------------------------------------------------------------------------------------------------------------------------|

+| `-t, --scan-type [secret\|iac\|sca\|sast]` | Specify the scan you wish to execute (`secret`/`iac`/`sca`/`sast`). The default is `secret`. |

+| `--show-secret BOOLEAN` | Show secrets in plain text. See [Show/Hide Secrets](#showhide-secrets) section for more details. |

+| `--soft-fail BOOLEAN` | Run the scan without failing and always return a non-error status code. See [Soft Fail](#soft-fail) section for more details. |

+| `--severity-threshold [INFO\|LOW\|MEDIUM\|HIGH\|CRITICAL]` | Show only violations at the specified level or higher. |

+| `--sca-scan` | Specify the SCA scan you wish to execute (`package-vulnerabilities`/`license-compliance`). The default is both. |

+| `--monitor` | When specified, the scan results will be recorded in Cycode. |

+| `--cycode-report` | Display a link to the scan report in the Cycode platform in the console output. |

+| `--no-restore` | When specified, Cycode will not run the restore command. This will scan direct dependencies ONLY! |

+| `--gradle-all-sub-projects` | Run gradle restore command for all sub projects. This should be run from |

+| `--maven-settings-file` | For Maven only, allows using a custom [settings.xml](https://maven.apache.org/settings.html) file when scanning for dependencies |

+| `--help` | Show options for given command. |

+

+| Command | Description |

+|----------------------------------------|-----------------------------------------------------------------------|

+| [commit-history](#commit-history-scan) | Scan commit history or perform diff scanning between specific commits |

+| [path](#path-scan) | Scan the files in the path supplied in the command |

+| [pre-commit](#pre-commit-scan) | Scan content that has not been committed yet |

+| [repository](#repository-scan) | Scan git repository including its history |

+

+### Options

+

+#### Severity Option

+

+To limit the results of the scan to a specific severity threshold, the argument `--severity-threshold` can be added to the scan command.

+