Contents

What is Contrastive Learning?

How does Contrastive Learning Work?

Loss Functions in Contrastive Learning

Contrastive Learning: Use Cases

Popular Contrastive Learning Frameworks

Contrastive Learning: Key Takeaways

Encord Blog

Full Guide to Contrastive Learning

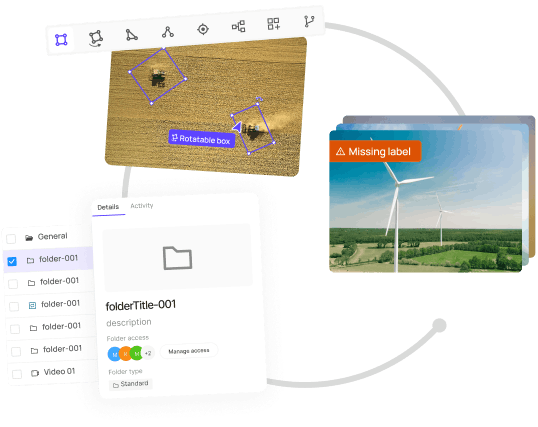


Written by

Nikolaj Buhl

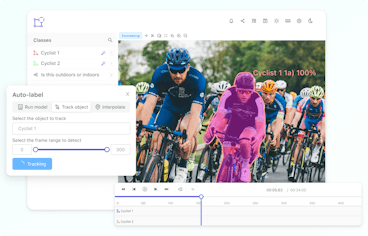

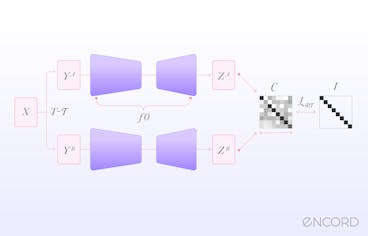

Contrastive learning allows models to extract meaningful representations from unlabeled data.

By leveraging similarity and dissimilarity, contrastive learning enables models to map similar instances close together in a latent space while pushing apart those that are dissimilar.

This approach has proven to be effective across diverse domains, spanning computer vision, natural language processing (NLP), and reinforcement learning.

What is Contrastive Learning?

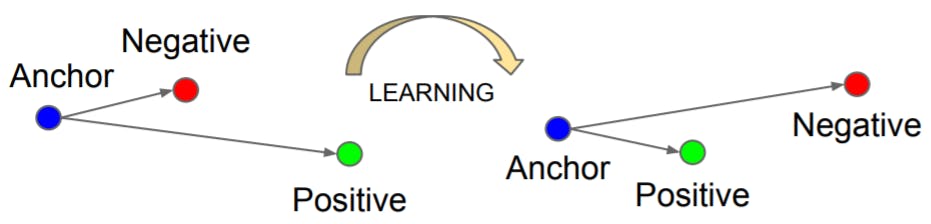

Contrastive learning is an approach that focuses on extracting meaningful representations by contrasting positive and negative pairs of instances. It leverages the assumption that similar instances should be closer in a learned embedding space while dissimilar instances should be farther apart. By framing learning as a discrimination task, contrastive learning allows models to capture relevant features and similarities in the data.

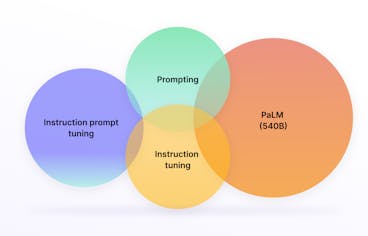

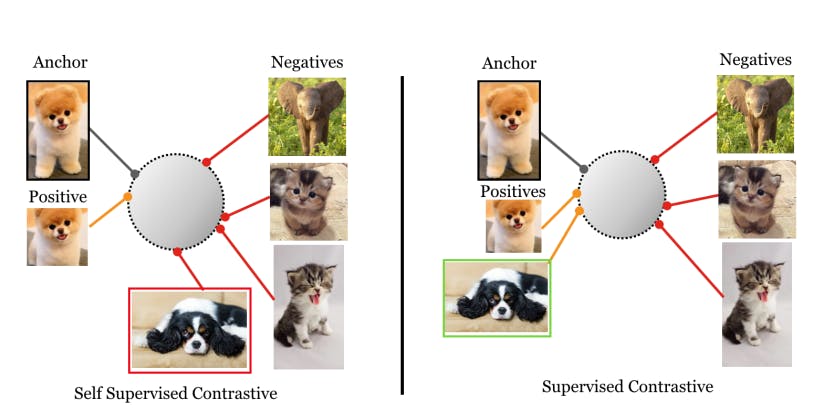

Supervised Contrastive Learning (SCL)

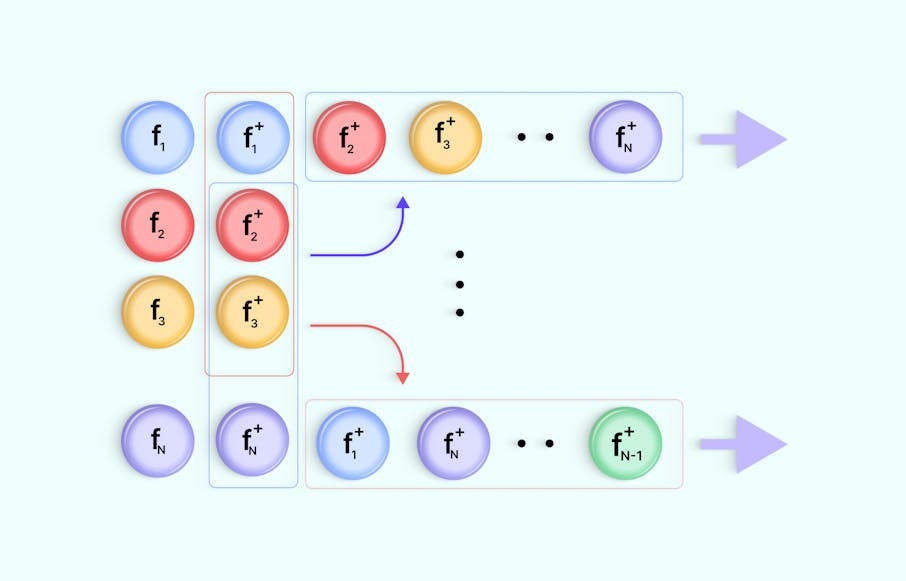

Supervised contrastive learning (SCL) is a variant of contrastive learning that uses labeled data to train models explicitly to differentiate between similar and dissimilar instances. In SCL, labels indicate which data points are similar (for example, samples from the same class) and which are dissimilar. The objective is to learn a representation space where similar instances are clustered closer together, while dissimilar instances are pushed apart.

A popular choice of objective is the Information Noise Contrastive Estimation (InfoNCE) loss. InfoNCE maximizes the agreement between positive pairs and minimizes the agreement between negative pairs in the learned representation space. By optimizing this objective, the model learns to discriminate between similar and dissimilar instances, which can improve performance on downstream tasks.

We will discuss the InfoNCE loss and other losses later in the article.
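To make the label-driven pairing concrete, here is a minimal NumPy sketch of a supervised contrastive loss in the spirit of the formulation from the "Supervised Contrastive Learning" paper (Khosla et al.): every other sample that shares the anchor's label is a positive, and all remaining samples act as negatives. The function name `supcon_loss`, the temperature of 0.1, and averaging the loss over anchors are illustrative assumptions, not a reference implementation.

```python
import numpy as np

def supcon_loss(z, labels, temperature=0.1):
    """Supervised contrastive loss (positives averaged outside the log).

    z:      (N, D) embeddings, one row per sample or augmented view
    labels: (N,) integer labels; rows sharing a label are positives for each other
    """
    z = z / np.linalg.norm(z, axis=1, keepdims=True)       # L2-normalize: dot product = cosine
    logits = z @ z.T / temperature                           # (N, N) scaled similarities
    n = len(labels)
    not_self = ~np.eye(n, dtype=bool)                        # candidates: everything but the anchor
    pos = (labels[:, None] == labels[None, :]) & not_self    # positives: same label, not the anchor

    # Stable log-softmax of each anchor row over all non-anchor candidates.
    logits = np.where(not_self, logits, -np.inf)
    row_max = logits.max(axis=1, keepdims=True)
    log_denom = row_max + np.log(np.exp(logits - row_max).sum(axis=1, keepdims=True))
    log_prob = logits - log_denom

    # Mean log-probability of the positives, for anchors that have at least one.
    n_pos = pos.sum(axis=1)
    valid = n_pos > 0
    mean_log_prob_pos = np.where(pos, log_prob, 0.0).sum(axis=1)[valid] / n_pos[valid]
    return -mean_log_prob_pos.mean()

z = np.array([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]])
print(supcon_loss(z, np.array([0, 0, 1, 1])))  # small: same-label rows already coincide
print(supcon_loss(z, np.array([0, 1, 0, 1])))  # large: labels disagree with the geometry
```

Because positives come from the labels rather than from augmentation alone, several samples can pull on the same anchor at once.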

Self-Supervised Contrastive Learning (SSCL)

Self-supervised contrastive learning (SSCL) takes a different approach by learning representations from unlabeled data without relying on explicit labels. SSCL leverages the design of pretext tasks, which create positive and negative pairs from the unlabeled data. These pretext tasks are carefully designed to encourage the model to capture meaningful features and similarities in the data.

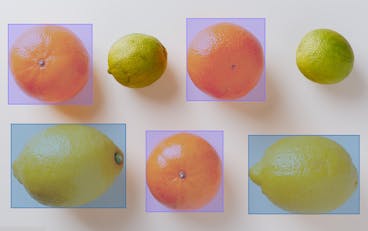

One commonly used pretext task in SSCL is the generation of augmented views. This involves creating multiple augmented versions of the same instance and treating them as positive pairs, while views from different samples are treated as negative pairs. By learning to differentiate between these pairs, the model captures higher-level semantic information that generalizes well to downstream tasks.
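In a batch of N images where each image is augmented twice, one common convention is to stack all first views followed by all second views, so that view i and view i + N form the positive pair and every other view is a negative. The NumPy sketch below only builds that bookkeeping; the helper names `positive_index` and `negative_mask` are hypothetical.

```python
import numpy as np

def positive_index(batch_size):
    """Index of each view's positive partner, for 2N views stacked as
    [view 1 of x_0 ... x_{N-1}, view 2 of x_0 ... x_{N-1}]."""
    n = batch_size
    return np.concatenate([np.arange(n, 2 * n), np.arange(0, n)])

def negative_mask(batch_size):
    """(2N, 2N) boolean mask, True where view j is a negative for view i:
    every view except i itself and i's positive partner."""
    two_n = 2 * batch_size
    mask = ~np.eye(two_n, dtype=bool)
    mask[np.arange(two_n), positive_index(batch_size)] = False
    return mask

pos = positive_index(3)
neg = negative_mask(3)
print(pos)              # [3 4 5 0 1 2]
print(neg.sum(axis=1))  # [4 4 4 4 4 4], i.e. 2N - 2 negatives per view
```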

SSCL has shown impressive results in various domains, including computer vision and natural language processing. In computer vision, SSCL has been successful in tasks such as image classification, object detection, and image generation. In natural language processing, it has been applied to tasks like sentence representation learning, text classification, and machine translation.

Supervised Contrastive Learning

How does Contrastive Learning Work?

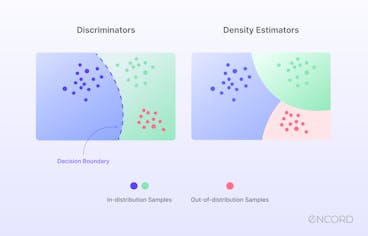

Contrastive learning has proven to be a powerful technique, allowing models to leverage large amounts of unlabeled data and improve performance even with limited labeled data. The fundamental idea behind contrastive learning is to encourage similar instances to be mapped closer together in a learned embedding space while pushing dissimilar instances farther apart.

Now, let's dive into each step of the contrastive learning method to gain a deeper understanding of how it works.

Data Augmentation

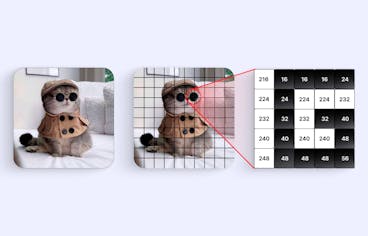

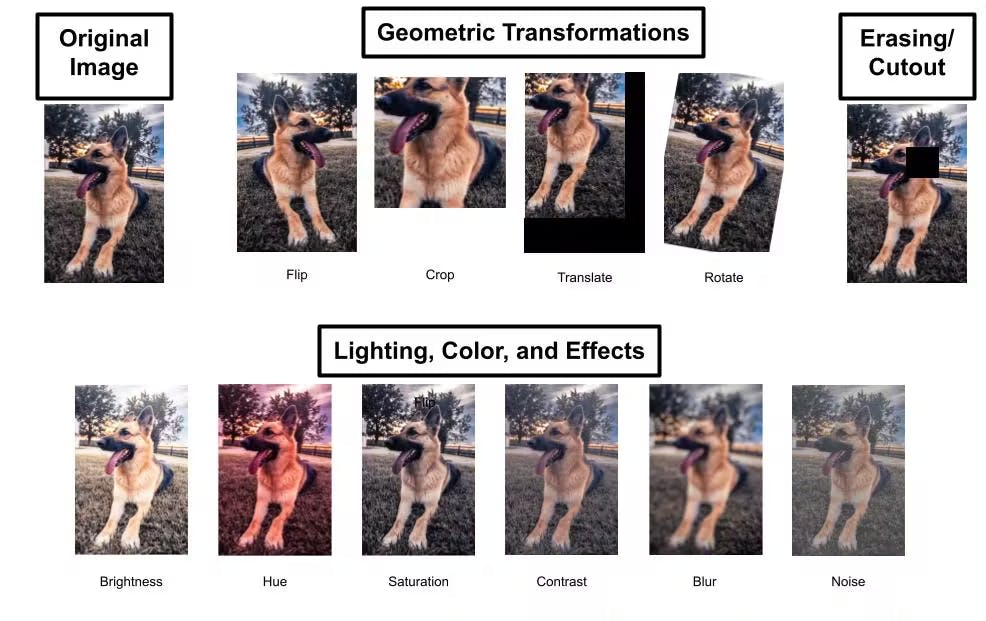

Contrastive learning often begins with data augmentation, which involves applying various transformations or perturbations to unlabeled data to create diverse instances or augmented views.

Data augmentation aims to increase the data's variability and expose the model to different perspectives of the same instance. Common data augmentation techniques include random cropping, flipping, rotation, and color transformations. By generating diverse views, contrastive learning encourages the model to capture relevant information regardless of variations in the input data.
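A minimal NumPy sketch of such an augmentation step, assuming images are (H, W, C) float arrays with values in [0, 1]. It covers only random cropping, horizontal flipping, and a simple brightness/contrast jitter. The function names, crop size, and jitter ranges are illustrative choices; practical pipelines usually rely on a library such as torchvision, resize crops back to a fixed size, and apply stronger color distortions.

```python
import numpy as np

def augment(image, rng, crop=24):
    """Return one randomly augmented view of an (H, W, C) image with values in [0, 1]."""
    h, w, _ = image.shape
    top = rng.integers(0, h - crop + 1)
    left = rng.integers(0, w - crop + 1)
    view = image[top:top + crop, left:left + crop]        # random crop
    if rng.random() < 0.5:
        view = view[:, ::-1]                               # horizontal flip
    contrast = rng.uniform(0.8, 1.2)
    brightness = rng.uniform(-0.2, 0.2)
    mean = view.mean()
    view = (view - mean) * contrast + mean + brightness    # contrast, then brightness jitter
    return np.clip(view, 0.0, 1.0)

def two_views(image, rng):
    """Two independent augmentations of one image form a positive pair."""
    return augment(image, rng), augment(image, rng)

rng = np.random.default_rng(0)
image = rng.random((32, 32, 3))
v1, v2 = two_views(image, rng)
print(v1.shape, v2.shape)  # (24, 24, 3) (24, 24, 3)
```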


Encoder Network

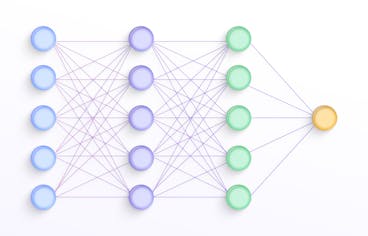

The next step in contrastive learning is training an encoder network. The encoder network takes the augmented instances as input and maps them to a latent representation space, where meaningful features and similarities are captured.

The encoder network is typically a deep neural network architecture, such as a convolutional neural network (CNN) for image data or a recurrent neural network (RNN) for sequential data. The network learns to extract and encode high-level representations from the augmented instances, facilitating the discrimination between similar and dissimilar instances in the subsequent steps.

Projection Network

A projection network is employed to refine the learned representations further. The projection network takes the output of the encoder network and projects it onto a lower-dimensional space, often referred to as the projection or embedding space.

This additional projection step helps enhance the discriminative power of the learned representations. By mapping the representations to a lower-dimensional space, the projection network reduces the complexity and redundancy in the data, facilitating better separation between similar and dissimilar instances.
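The encoder-plus-projection-head layout can be sketched with random NumPy weights. Here a single dense layer stands in for the CNN or RNN encoder and a two-layer MLP plays the projection head; all layer sizes and names are hypothetical, and no training happens in this snippet.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: flattened 32x32x3 input, 512-d representation, 128-d projection.
IN_DIM, REP_DIM, PROJ_DIM = 32 * 32 * 3, 512, 128
W_enc = rng.normal(0.0, 0.02, (IN_DIM, REP_DIM))    # stand-in for a CNN or RNN encoder
W_p1 = rng.normal(0.0, 0.02, (REP_DIM, REP_DIM))    # projection head, layer 1
W_p2 = rng.normal(0.0, 0.02, (REP_DIM, PROJ_DIM))   # projection head, layer 2

def relu(x):
    return np.maximum(x, 0.0)

def encoder(x):
    """Map a batch of flattened inputs (N, IN_DIM) to representations h (N, REP_DIM)."""
    return relu(x @ W_enc)

def projection_head(h):
    """Map representations h to lower-dimensional, L2-normalized embeddings z (N, PROJ_DIM)."""
    z = relu(h @ W_p1) @ W_p2
    return z / np.linalg.norm(z, axis=1, keepdims=True)

x = rng.random((8, IN_DIM))
h = encoder(x)
z = projection_head(h)
print(h.shape, z.shape)  # (8, 512) (8, 128)
```

The contrastive loss is computed on `z`. In SimCLR, for example, the projection head is used only during training, and the encoder output `h` is the representation reused for downstream tasks.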

Contrastive Learning

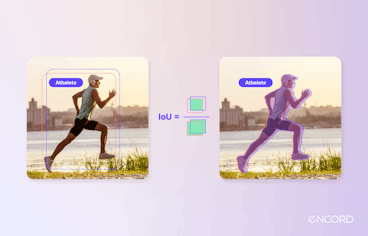

Once the augmented instances are encoded and projected into the embedding space, the contrastive learning objective is applied. The objective is to maximize the agreement between positive pairs (instances from the same sample) and minimize the agreement between negative pairs (instances from different samples).

This encourages the model to pull similar instances closer together while pushing dissimilar instances apart. The similarity between instances is typically measured with a similarity or distance function, such as cosine similarity or Euclidean distance. The model is trained to minimize the distance between positive pairs and maximize the distance between negative pairs in the embedding space.
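Both measures are easy to compute for whole batches at once. A short NumPy sketch (function names are illustrative):

```python
import numpy as np

def cosine_similarity_matrix(a, b):
    """Pairwise cosine similarity between the rows of a (N, D) and b (M, D)."""
    a = a / np.linalg.norm(a, axis=1, keepdims=True)
    b = b / np.linalg.norm(b, axis=1, keepdims=True)
    return a @ b.T

def euclidean_distance_matrix(a, b):
    """Pairwise Euclidean distance between the rows of a (N, D) and b (M, D)."""
    diff = a[:, None, :] - b[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))

anchor = np.array([[1.0, 0.0]])
candidates = np.array([[2.0, 0.0], [0.0, 3.0], [-1.0, 0.0]])
print(cosine_similarity_matrix(anchor, candidates))
print(euclidean_distance_matrix(anchor, candidates))
```

For this anchor, cosine similarity gives 1, 0 and -1, ignoring vector length, while Euclidean distance gives 1, √10 ≈ 3.16 and 2. On L2-normalized embeddings the two are directly linked: ‖u − v‖² = 2 − 2·cos(u, v), which is why many methods normalize embeddings and work with cosine similarity.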

Loss Function

Contrastive learning utilizes a variety of loss functions to establish the objectives of the learning process. These loss functions are crucial in guiding the model to capture significant representations and differentiate between similar and dissimilar instances.

The selection of the appropriate loss function depends on the specific task requirements and data characteristics. Each loss function aims to facilitate learning representations that effectively capture meaningful similarities and differences within the data. In a later section, we will detail these loss functions.

Training and Optimization

Once the loss function is defined, the model is trained using a large unlabeled dataset. The training process involves iteratively updating the model's parameters to minimize the loss function.

Optimization algorithms such as stochastic gradient descent (SGD) or its variants are commonly used to update the model's parameters (its weights). The training process typically involves batch-wise updates, where a mini-batch of augmented instances is processed simultaneously.

During training, the model learns to capture the relevant features and similarities in the data. The iterative optimization process gradually refines the learned representations, leading to better discrimination and separation between similar and dissimilar instances.

Evaluation and Generalization

Evaluation and generalization are crucial steps to assess the quality of the learned representations and their effectiveness in practical applications.

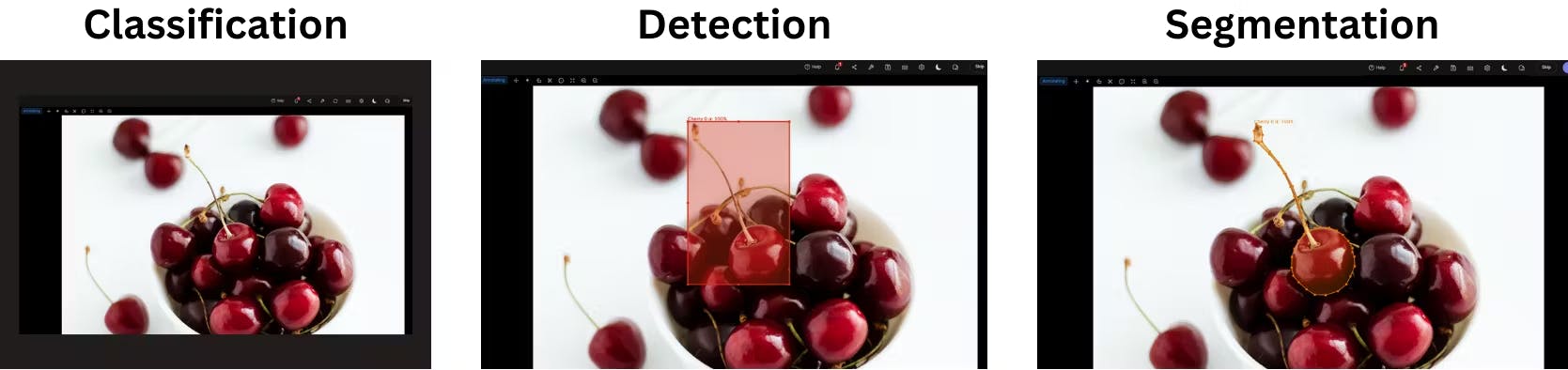

Downstream Task Evaluation

Image Classification vs Object Detection vs Image Segmentation

The ultimate measure of success for contrastive learning is its performance on downstream tasks. The learned representations serve as input features for tasks such as image classification, object detection, sentiment analysis, or language translation.

The model's performance on these tasks is evaluated using appropriate metrics, including accuracy, precision, recall, F1 score, or task-specific evaluation criteria. Higher performance on the downstream tasks indicates better generalization and usefulness of the learned representations.
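Besides training a classifier on top of frozen features (a "linear probe"), one lightweight protocol used in self-supervised learning work is k-nearest-neighbor classification in the embedding space. The sketch below assumes embeddings have already been computed with the trained encoder and that labels are non-negative integers; `knn_accuracy` and the choice of k are illustrative.

```python
import numpy as np

def knn_accuracy(train_emb, train_labels, test_emb, test_labels, k=5):
    """Classify each test embedding by majority vote among its k most
    cosine-similar training embeddings, and return the accuracy."""
    train = train_emb / np.linalg.norm(train_emb, axis=1, keepdims=True)
    test = test_emb / np.linalg.norm(test_emb, axis=1, keepdims=True)
    sims = test @ train.T                                 # (n_test, n_train)
    nearest = np.argsort(-sims, axis=1)[:, :k]            # indices of the k nearest neighbors
    votes = train_labels[nearest]                         # (n_test, k) neighbor labels
    preds = np.array([np.bincount(v).argmax() for v in votes])
    return (preds == test_labels).mean()

train_emb = np.array([[1.0, 0.1], [1.0, -0.1], [1.0, 0.2],
                      [0.1, 1.0], [-0.1, 1.0], [0.2, 1.0]])
train_labels = np.array([0, 0, 0, 1, 1, 1])
test_emb = np.array([[1.0, 0.05], [0.05, 1.0]])
test_labels = np.array([0, 1])
print(knn_accuracy(train_emb, train_labels, test_emb, test_labels, k=3))  # 1.0
```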

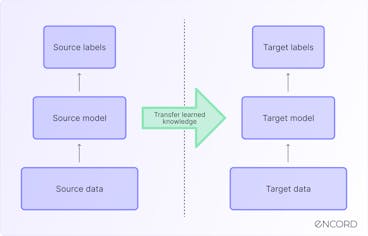

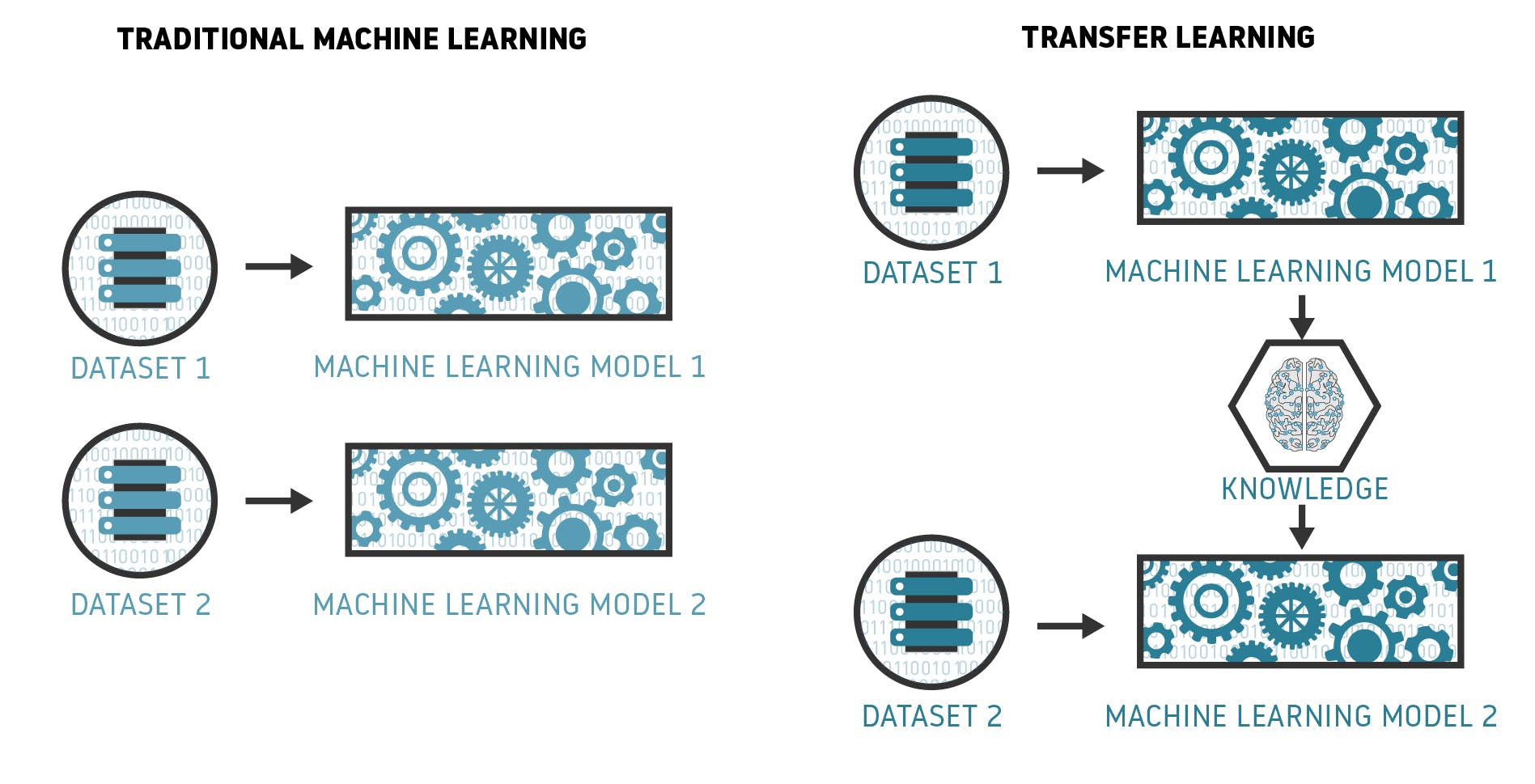

Transfer Learning

Contrastive learning enables transfer learning, where representations learned on one task can be applied to related tasks. Generalization is evaluated by assessing how well the representations transfer to new tasks or datasets. If the learned representations generalize well to unseen data and improve performance on new tasks, it indicates the effectiveness of contrastive learning in capturing meaningful features and similarities.


Comparison with Baselines

To understand the effectiveness of contrastive learning, comparing the learned representations with baseline models or other state-of-the-art approaches is essential. Comparisons can be made regarding performance metrics, robustness, transfer learning capabilities, or computational efficiency. Such comparisons provide insights into the added value of contrastive learning and its potential advantages over alternative methods.

After understanding how contrastive learning works, let's explore an essential aspect of this learning technique: the choice and role of loss functions.

Loss Functions in Contrastive Learning

In contrastive learning, various loss functions are employed to define the objectives of the learning process. These loss functions guide the model in capturing meaningful representations and discriminating between similar and dissimilar instances. By understanding the different loss functions used in contrastive learning, we can gain insights into how they contribute to the learning process and enhance the model's ability to capture relevant features and similarities within the data.

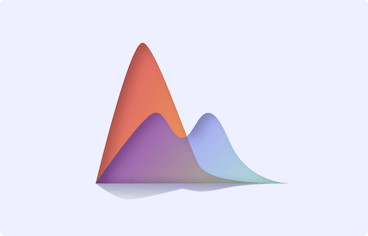

Contrastive Loss

Contrastive loss is a fundamental loss function in contrastive learning. It aims to maximize the agreement between positive pairs (instances from the same sample) and minimize the agreement between negative pairs (instances from different samples) in the learned embedding space. The goal is to pull similar instances closer together while pushing dissimilar instances apart.

The contrastive loss function is typically defined as a margin-based loss, where the relationship between instances is measured with a distance or similarity function, such as Euclidean distance or cosine similarity. The loss penalizes positive pairs for being far apart and negative pairs for being closer than the margin in the embedding space.
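One common way to write the margin-based loss for a single pair (popularized by Hadsell, Chopra and LeCun) is L = ½·[y·d² + (1 − y)·max(0, m − d)²], where d is the Euclidean distance between the two embeddings, y = 1 for a positive pair and 0 for a negative pair, and m is the margin. The NumPy sketch below follows that form; the function name and the default margin of 1.0 are assumptions.

```python
import numpy as np

def contrastive_loss(x1, x2, is_similar, margin=1.0):
    """Margin-based contrastive loss, averaged over pairs.

    x1, x2:     (N, D) embeddings of the two members of each pair
    is_similar: (N,) array, 1 for positive pairs and 0 for negative pairs
    """
    d = np.linalg.norm(x1 - x2, axis=1)                              # Euclidean distance per pair
    pos_term = is_similar * d ** 2                                   # pull positive pairs together
    neg_term = (1 - is_similar) * np.maximum(0.0, margin - d) ** 2   # push negatives past the margin
    return 0.5 * np.mean(pos_term + neg_term)

a = np.array([[0.0, 0.0], [0.0, 0.0]])
b = np.array([[0.0, 0.0], [3.0, 0.0]])
print(contrastive_loss(a, b, np.array([1, 0])))  # 0.0: positive coincides, negative is beyond the margin
```

Negative pairs that are already farther apart than the margin contribute nothing, so training effort concentrates on the negatives that are still too close.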

One widely used variant of contrastive loss is the InfoNCE loss, which we will discuss in more detail shortly. Contrastive loss has shown effectiveness in various domains, including computer vision and natural language processing, as it encourages the model to learn discriminative representations that capture meaningful similarities and differences.

Triplet Loss

Triplet loss is another popular loss function employed in contrastive learning. It aims to preserve the relative distances between instances in the learned representation space. Triplet loss involves forming triplets of instances: an anchor instance, a positive sample (similar to the anchor), and a negative sample (dissimilar to the anchor). The objective is to ensure that the distance between the anchor and the positive sample is smaller than the distance between the anchor and the negative sample by a specified margin.

FaceNet: A Unified Embedding for Face Recognition and Clustering

The intuition behind triplet loss is to create a "triplet constraint" where the anchor instance is pulled closer to the positive sample while being pushed away from the negative sample. The model can better discriminate between similar and dissimilar instances by learning representations that satisfy the triplet constraint.

Triplet loss has been widely applied in computer vision tasks, such as face recognition and image retrieval, where capturing fine-grained similarities is crucial. However, triplet loss can be sensitive to the selection of triplets, as choosing informative triplets from a large dataset can be challenging and computationally expensive.
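A minimal NumPy sketch of the triplet loss with squared Euclidean distances, as in FaceNet: L = max(0, ‖a − p‖² − ‖a − n‖² + α). The default margin α = 0.2 and the helper names are assumptions. `hardest_negative` shows the simplest mining strategy (pick the closest negative); FaceNet itself favored "semi-hard" negatives, which is not shown here.

```python
import numpy as np

def triplet_loss(anchor, positive, negative, margin=0.2):
    """Mean triplet loss over (N, D) anchors, positives and negatives.

    Zero for a triplet once ||a - p||^2 + margin <= ||a - n||^2.
    """
    d_pos = np.sum((anchor - positive) ** 2, axis=1)   # squared L2 distance to the positive
    d_neg = np.sum((anchor - negative) ** 2, axis=1)   # squared L2 distance to the negative
    return np.mean(np.maximum(0.0, d_pos - d_neg + margin))

def hardest_negative(anchor, candidates):
    """Return the candidate negative closest to a single (D,) anchor."""
    d = np.sum((candidates - anchor) ** 2, axis=1)
    return candidates[np.argmin(d)]

anchor = np.array([[0.0, 0.0]])
positive = np.array([[0.1, 0.0]])
negatives = np.array([[1.0, 0.0], [0.3, 0.0], [2.0, 0.0]])
hard = hardest_negative(anchor[0], negatives)[None, :]   # picks [0.3, 0.0]
print(triplet_loss(anchor, positive, hard))              # about 0.12
```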

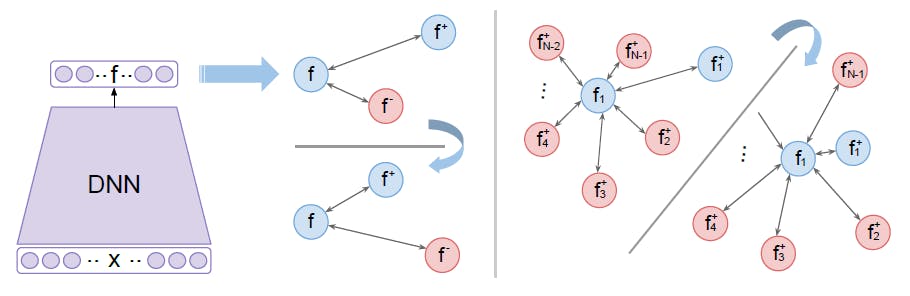

N-pair Loss

N-pair loss is an extension of triplet loss that considers several negative samples for a given anchor instance at once. Rather than comparing an anchor instance to a single positive and a single negative sample, N-pair loss compares the anchor's positive against N-1 negative samples, maximizing the similarity between the anchor and its positive while minimizing the similarity between the anchor and all of the negatives.

Improved Deep Metric Learning with Multi-class N-pair Loss Objective

The N-pair loss encourages the model to capture nuanced relationships among multiple instances, providing more robust supervision during learning. By considering multiple instances simultaneously, N-pair loss can capture more complex patterns and improve the discriminative power of the learned representations.

N-pair loss has been successfully employed in various tasks, such as fine-grained image recognition, where capturing subtle differences among similar instances is essential. It alleviates some of the challenges associated with triplet loss by using multiple negative instances for each anchor, but it can still be computationally demanding when dealing with large datasets.
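A NumPy sketch of the multi-class N-pair loss, assuming a batch of N (anchor, positive) pairs drawn from N different classes, so that the other pairs' positives serve as each anchor's N-1 negatives. Per anchor, the loss is log(1 + Σ_{j≠i} exp(aᵢ·pⱼ − aᵢ·pᵢ)). The function name is illustrative, and any regularization terms used in practice are omitted.

```python
import numpy as np

def n_pair_loss(anchors, positives):
    """Multi-class N-pair loss for N (anchor, positive) pairs from N different classes.

    positives[i] is the positive for anchors[i]; positives[j] (j != i) are its negatives.
    """
    logits = anchors @ positives.T                    # (N, N) inner products a_i . p_j
    diff = logits - np.diag(logits)[:, None]          # a_i . p_j - a_i . p_i
    np.fill_diagonal(diff, -np.inf)                   # drop the j == i term from the sum
    # log(1 + sum_j exp(diff_ij)) computed stably as a log-sum-exp over [0, diff_i...]
    padded = np.concatenate([np.zeros((len(diff), 1)), diff], axis=1)
    per_anchor = np.logaddexp.reduce(padded, axis=1)
    return per_anchor.mean()

e = np.eye(3) * 5.0
print(n_pair_loss(e, e))                        # near 0: each anchor matches its own positive
print(n_pair_loss(e, np.roll(e, 1, axis=0)))    # about 25: positives are mismatched
```

Algebraically, this is the same as a softmax cross-entropy over the logits aᵢ·pⱼ with the matching positive as the target class, which is why N-pair loss is closely related to InfoNCE.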

InfoNCE

Information Noise Contrastive Estimation (InfoNCE) loss is derived from the framework of noise contrastive estimation. It scores the similarity of a positive pair relative to a set of negative pairs in the learned embedding space, maximizing the agreement between positive pairs and minimizing the agreement between negative pairs.

The key idea behind InfoNCE loss is to treat contrastive learning as a classification problem. Given an anchor, its positive sample, and a set of negative samples, the model is trained to identify the positive among all candidates. The similarity scores (for example, dot products or cosine similarities) are converted into probabilities with the softmax function, and the loss is the cross-entropy of selecting the positive.

InfoNCE loss is commonly used in self-supervised contrastive learning, where positive pairs are created from augmented views of the same instance, and negative pairs are formed using instances from different samples. By optimizing InfoNCE loss, the model learns to capture meaningful similarities and differences in the data points, acquiring powerful representations.
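Written out for a query q with positive key k⁺ among candidate keys k₁ … k_N, InfoNCE is L = −log( exp(sim(q, k⁺)/τ) / Σⱼ exp(sim(q, kⱼ)/τ) ), the cross-entropy of an N-way classification. The NumPy sketch below assumes in-batch negatives (keys[i] is the positive for queries[i] and the other keys are its negatives), cosine similarity, and a temperature τ = 0.1; the function name is illustrative.

```python
import numpy as np

def info_nce(queries, keys, temperature=0.1):
    """InfoNCE with in-batch negatives.

    queries, keys: (N, D); keys[i] is the positive for queries[i],
    and the other N - 1 keys act as its negatives.
    """
    q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
    k = keys / np.linalg.norm(keys, axis=1, keepdims=True)
    logits = q @ k.T / temperature                        # (N, N); the diagonal holds positives
    logits = logits - logits.max(axis=1, keepdims=True)   # numerical stability
    log_softmax = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -np.mean(np.diag(log_softmax))                 # cross-entropy with target i for row i

q = np.ones((4, 3))
print(info_nce(q, q))  # equals log(4): all four candidates look identical
```

When every candidate is equally similar, the model can do no better than guessing, which gives log N; well-separated positives drive the loss toward zero.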

Logistic Loss

Logistic loss, also known as logistic regression loss or binary cross-entropy loss, is a widely used loss function in machine learning. It has been adapted for contrastive learning as a probabilistic loss function. Logistic loss models the likelihood of two instances being similar or dissimilar based on their respective representations in the embedding space.

Logistic loss is commonly employed in contrastive learning to estimate the probability that two instances belong to the same class (similar) or different classes (dissimilar). By maximizing the likelihood of positive pairs being similar and minimizing the likelihood of negative pairs being similar, logistic loss guides the model toward effective discrimination.

The probabilistic nature of logistic loss makes it suitable for modeling complex relationships and capturing fine-grained differences between instances. Logistic loss has been successfully applied in various domains, including image recognition, text classification, and recommendation systems.
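A minimal NumPy sketch of a pairwise logistic loss, assuming the probability that a pair is similar is modeled as sigmoid(scale · cos(x₁, x₂) + bias). The `scale` and `bias` values are illustrative hyperparameters (they can also be learned), and the function name is hypothetical.

```python
import numpy as np

def pairwise_logistic_loss(x1, x2, is_similar, scale=5.0, bias=0.0):
    """Binary cross-entropy on pairs with p(similar) = sigmoid(scale * cos(x1, x2) + bias).

    x1, x2:     (N, D) embeddings of the two members of each pair
    is_similar: (N,) array, 1 for positive pairs and 0 for negative pairs
    """
    cos = np.sum(x1 * x2, axis=1) / (np.linalg.norm(x1, axis=1) * np.linalg.norm(x2, axis=1))
    logit = scale * cos + bias
    # -[y log sigmoid(s) + (1 - y) log(1 - sigmoid(s))], written stably with logaddexp
    loss = is_similar * np.logaddexp(0.0, -logit) + (1 - is_similar) * np.logaddexp(0.0, logit)
    return loss.mean()

x = np.array([[1.0, 0.0]])
print(pairwise_logistic_loss(x, x, np.array([1])))  # small: aligned pair labeled similar
print(pairwise_logistic_loss(x, x, np.array([0])))  # large: aligned pair labeled dissimilar
```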

These different loss functions provide diverse ways to optimize the contrastive learning objective and encourage the model to learn representations that capture meaningful similarities and differences in the data points. The choice of loss function depends on the specific requirements of the task and the characteristics of the data.

By leveraging these objectives, contrastive learning enables models to learn powerful representations that facilitate better discrimination and generalization in various machine learning applications.

Contrastive Learning: Use Cases

Let's explore some prominent use cases where contrastive learning has proven to be effective:

Semi-Supervised Learning

Semi-supervised learning is a scenario where models are trained using both labeled and unlabeled data. Obtaining labeled data can be costly and time-consuming in many real-world situations, while unlabeled data is abundant. Contrastive learning is particularly beneficial in semi-supervised learning as it allows models to leverage unlabeled data to learn meaningful representations.

By training on unlabeled data using contrastive learning, models can capture useful patterns and improve performance when labeled data is limited. Contrastive learning enables the model to learn discriminative representations that capture relevant features and similarities in the data. These learned representations can then enhance performance on downstream tasks, such as image classification, object recognition, speech recognition, and more.

Supervised Learning

Contrastive learning also has applications in traditional supervised learning scenarios with plentiful labeled data. In supervised learning, models are trained on labeled data to predict or classify new instances. However, labeled data may not always capture the full diversity and complexity of real-world instances, leading to challenges in generalization.

By leveraging unlabeled data alongside labeled data, contrastive learning can augment the learning process and improve the discriminative power of the model. The unlabeled data provides additional information and allows the model to capture more robust representations. This improves performance on various supervised learning tasks, such as image classification, sentiment analysis, recommendation systems, and more.

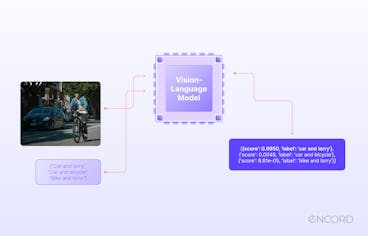

NLP

Contrastive learning has shown promising results in natural language processing (NLP). NLP deals with the processing and understanding of human language, and contrastive learning has been applied to enhance various NLP tasks.

Contrastive learning enables models to capture semantic information and contextual relationships by learning representations from large amounts of unlabeled text data. This has been applied to tasks such as sentence similarity, text classification, language modeling, sentiment analysis, machine translation, and more.

For example, in sentence similarity tasks, contrastive learning allows models to learn representations that capture the semantic similarity between pairs of sentences. By leveraging the power of contrastive learning, models can better understand the meaning and context of sentences, facilitating more accurate and meaningful comparisons.

Data Augmentation

Augmentation involves applying various transformations or perturbations to unlabeled data to create diverse instances or augmented views. These augmented views serve as positive pairs during contrastive learning, allowing the model to learn robust representations that capture relevant features.

Data augmentation techniques used in contrastive learning include cropping, flipping, rotation, color transformations, and more.

Data augmentation plays a crucial role in combating data scarcity and addressing the limitations of labeled data. By effectively utilizing unlabeled data through contrastive learning and data augmentation, models can learn more generalized and robust representations, improving performance on various tasks, especially in computer vision domains.

Popular Contrastive Learning Frameworks

In recent years, several contrastive learning frameworks have gained prominence in deep learning due to their effectiveness in learning powerful representations. Let’s explore some popular contrastive learning frameworks that have garnered attention:

SimCLR

SimCLR (a Simple framework for Contrastive Learning of visual Representations) is a self-supervised contrastive learning framework that has garnered widespread recognition for its effectiveness in learning powerful representations. It builds upon the principles of contrastive learning by combining data augmentation, a carefully designed contrastive objective, and a symmetric architecture in which the same encoder and projection head process both augmented views.

Advancing Self-Supervised and Semi-Supervised Learning with SimCLR

The core idea of SimCLR is to maximize the agreement between augmented views of the same instance while minimizing the agreement between views from different instances. By doing so, SimCLR encourages the model to learn representations that capture meaningful similarities and differences in the data. The framework employs a large-batch training scheme to facilitate efficient and effective contrastive learning.

Additionally, SimCLR uses the normalized temperature-scaled cross-entropy (NT-Xent) loss, which compares L2-normalized embeddings with cosine similarity and divides the similarities by a temperature parameter before applying a softmax cross-entropy. This choice improves training stability and the quality of the learned representations.
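A NumPy sketch of NT-Xent as described for SimCLR, assuming `z1` and `z2` hold projection-head outputs for two augmented views of the same N images. Each of the 2N views is classified against the other 2N − 1 views, with its partner view as the correct answer. The temperature of 0.5 is a placeholder, since the best value depends on the setup, and the function name is illustrative.

```python
import numpy as np

def nt_xent(z1, z2, temperature=0.5):
    """NT-Xent loss over a batch of N positive pairs (z1[i], z2[i]), each (N, D)."""
    n = z1.shape[0]
    z = np.concatenate([z1, z2], axis=0)                    # (2N, D): all views
    z = z / np.linalg.norm(z, axis=1, keepdims=True)        # normalize: dot product = cosine
    logits = z @ z.T / temperature                          # (2N, 2N) scaled similarities
    np.fill_diagonal(logits, -np.inf)                       # a view is never compared with itself
    pos_idx = np.concatenate([np.arange(n, 2 * n), np.arange(0, n)])  # partner of each view
    row_max = logits.max(axis=1, keepdims=True)
    log_denom = row_max[:, 0] + np.log(np.exp(logits - row_max).sum(axis=1))
    log_prob_pos = logits[np.arange(2 * n), pos_idx] - log_denom
    return -log_prob_pos.mean()

z = np.ones((4, 3))
print(nt_xent(z, z))  # equals log(7): the partner is 1 of 7 indistinguishable candidates
```

Every other image in the batch supplies negatives, which is one reason SimCLR benefits from large batches.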

SimCLR was developed for computer vision, where it outperformed prior methods on several benchmark datasets and tasks, showcasing its effectiveness in learning powerful representations without relying on explicit labels.

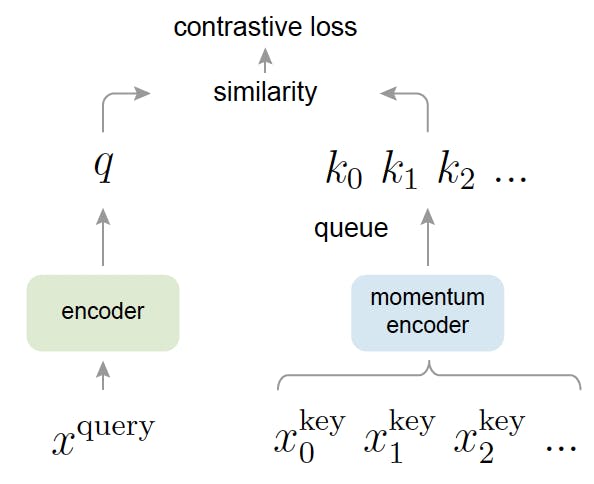

MoCo

Momentum Contrast (MoCo) is another prominent self-supervised contrastive learning framework that has garnered significant attention. It frames contrastive learning as a dictionary look-up and maintains a dynamic dictionary of negative instances as a queue: the keys encoded for the current mini-batch are added, and the oldest keys are removed. The keys are produced by a momentum encoder, a copy of the query encoder whose weights are updated as a slowly moving average of the query encoder's weights, which keeps the keys in the queue consistent with one another.

Momentum Contrast for Unsupervised Visual Representation Learning

The framework maximizes agreement between positive pairs while minimizing agreement between negative pairs. Because the queue is decoupled from the mini-batch size, MoCo can contrast each query against a large and consistent set of negatives. It has shown strong performance in computer vision and has achieved state-of-the-art results on multiple benchmark datasets.
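MoCo's two mechanisms can be sketched in NumPy: the momentum update θ_k ← m·θ_k + (1 − m)·θ_q of the key encoder from the query encoder, and a fixed-size FIFO queue of keys that acts as the dictionary of negatives. Parameters are represented as plain lists of arrays, and the class and function names are hypothetical; m = 0.999 and τ = 0.07 follow defaults reported in the MoCo paper. The loss on these logits would be a cross-entropy with index 0 (the positive key) as the target.

```python
import numpy as np

def momentum_update(key_params, query_params, m=0.999):
    """theta_k <- m * theta_k + (1 - m) * theta_q for every parameter array."""
    return [m * k + (1.0 - m) * q for k, q in zip(key_params, query_params)]

class KeyQueue:
    """Fixed-size FIFO dictionary of keys used as negatives."""

    def __init__(self, size, dim, rng):
        keys = rng.normal(size=(size, dim))                 # random, L2-normalized initial keys
        self.keys = keys / np.linalg.norm(keys, axis=1, keepdims=True)
        self.ptr = 0                                        # position of the oldest entry

    def enqueue(self, new_keys):
        """Overwrite the oldest entries with the newest mini-batch of keys."""
        n = len(new_keys)
        idx = (self.ptr + np.arange(n)) % len(self.keys)    # wrap around the end of the buffer
        self.keys[idx] = new_keys
        self.ptr = (self.ptr + n) % len(self.keys)

def moco_logits(q, k_pos, queue_keys, temperature=0.07):
    """(N, 1 + K) logits: column 0 is the positive key, the rest are queue negatives."""
    l_pos = np.sum(q * k_pos, axis=1, keepdims=True)       # (N, 1)
    l_neg = q @ queue_keys.T                                # (N, K)
    return np.concatenate([l_pos, l_neg], axis=1) / temperature

rng = np.random.default_rng(0)
queue = KeyQueue(size=5, dim=3, rng=rng)
queue.enqueue(np.full((3, 3), 1.0))
queue.enqueue(np.full((3, 3), 2.0))   # wraps around and overwrites the oldest slot
print(queue.ptr)  # 1
```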

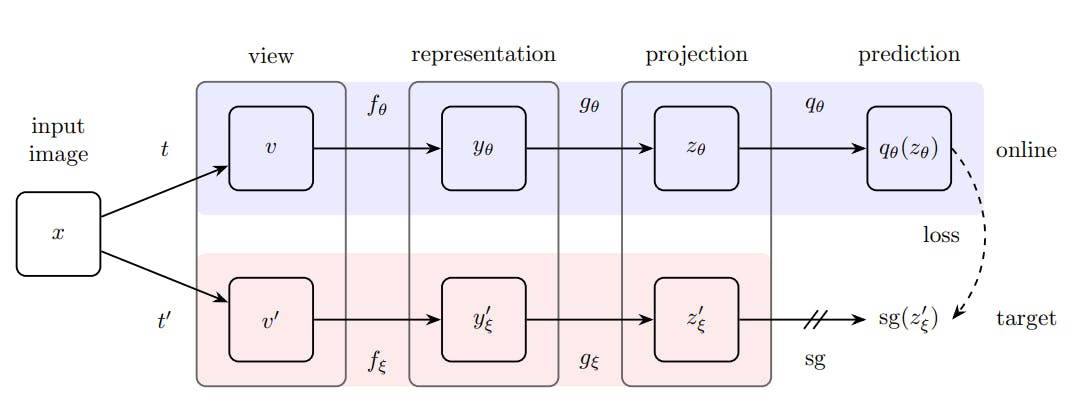

BYOL

Bootstrap Your Own Latent (BYOL) is a self-supervised learning framework that learns representations without the need for negative examples. It employs a pair of networks, an online network and a target network: the online network is trained to predict the target network's representation of another augmented view of the same image, and the target network's weights are updated as an exponential moving average of the online network's weights.

Bootstrap your own latent: A new approach to self-supervised Learning

The framework maximizes agreement between augmented views of the same instance without using negative examples in its loss. BYOL has demonstrated impressive performance in computer vision, with significant gains in representation quality and state-of-the-art results on multiple benchmark datasets.
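BYOL's objective can be sketched as a normalized mean squared error between the online network's prediction for one view and the target network's projection of the other view, which equals 2 − 2·cos(p, z), symmetrized over the two views. No negatives appear anywhere in the loss. This NumPy sketch only computes the loss value; in a real implementation, gradients flow only through the online network, and the target weights follow the moving-average update described above. Function names are illustrative.

```python
import numpy as np

def normalized_mse(p, z):
    """Per-row ||p/|p| - z/|z| ||^2, which equals 2 - 2 * cos(p, z)."""
    p = p / np.linalg.norm(p, axis=1, keepdims=True)
    z = z / np.linalg.norm(z, axis=1, keepdims=True)
    return 2.0 - 2.0 * np.sum(p * z, axis=1)

def byol_loss(p1, p2, z1_target, z2_target):
    """Symmetrized BYOL loss.

    p1, p2:               online predictions for view 1 and view 2, shape (N, D)
    z1_target, z2_target: target-network projections for view 1 and view 2, shape (N, D)
    Each online prediction regresses the target projection of the *other* view.
    """
    return np.mean(normalized_mse(p1, z2_target) + normalized_mse(p2, z1_target))

x = np.array([[1.0, 0.0]])
print(byol_loss(x, x, x, x))    # 0.0: predictions match the targets
print(byol_loss(x, x, -x, -x))  # 8.0: predictions point opposite to the targets
```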

SwAV

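SwAV (Swapping Assignments between multiple Views) is a self-supervised learning framework that introduces clustering-based objectives to learn representations. Instead of comparing the features of two views directly, it assigns each view's embedding to a set of learnable prototypes (cluster centers) and trains the model to predict the cluster assignment of one augmented view from the embedding of another view of the same image. It also uses a multi-crop augmentation strategy that mixes a few standard-resolution crops with several low-resolution crops of the same image.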

Using clustering objectives, SwAV aims to identify clusters of similar representations without the need for explicit class labels. It has shown promising results in various computer vision tasks, including image classification and object detection, and has achieved competitive performance on several benchmark datasets.
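A compact NumPy sketch of SwAV's two ingredients, under simplifying assumptions: a Sinkhorn-Knopp step that turns prototype scores into soft cluster assignments ("codes") while spreading the batch roughly evenly across prototypes, and the swapped-prediction loss, in which each view's softmax over prototypes is trained to match the code of the other view. The values ε = 0.05, three Sinkhorn iterations, and τ = 0.1 mirror defaults reported for SwAV; the prototypes would be learnable parameters in practice, multi-crop is omitted, and all function names are illustrative.

```python
import numpy as np

def l2_normalize(x):
    return x / np.linalg.norm(x, axis=1, keepdims=True)

def sinkhorn(scores, epsilon=0.05, n_iters=3):
    """Turn (B, K) prototype scores into (B, K) codes whose rows sum to 1,
    with the K prototypes used roughly equally across the batch."""
    q = np.exp((scores - scores.max()) / epsilon).T   # (K, B); global shift for stability
    q /= q.sum()
    k, b = q.shape
    for _ in range(n_iters):
        q /= q.sum(axis=1, keepdims=True) * k         # each prototype receives mass 1/K
        q /= q.sum(axis=0, keepdims=True) * b         # each sample receives mass 1/B
    return (q * b).T

def log_softmax(x):
    x = x - x.max(axis=1, keepdims=True)
    return x - np.log(np.exp(x).sum(axis=1, keepdims=True))

def swav_loss(z1, z2, prototypes, temperature=0.1):
    """Swapped prediction between two views.

    z1, z2: (B, D) L2-normalized embeddings; prototypes: (K, D) L2-normalized.
    """
    s1, s2 = z1 @ prototypes.T, z2 @ prototypes.T                  # (B, K) prototype scores
    q1, q2 = sinkhorn(s1), sinkhorn(s2)                            # codes, used as fixed targets
    loss_12 = -np.sum(q2 * log_softmax(s1 / temperature), axis=1)  # view 1 predicts view 2's code
    loss_21 = -np.sum(q1 * log_softmax(s2 / temperature), axis=1)  # view 2 predicts view 1's code
    return np.mean(loss_12 + loss_21)

rng = np.random.default_rng(0)
z1 = l2_normalize(rng.normal(size=(8, 16)))
z2 = l2_normalize(z1 + 0.1 * rng.normal(size=(8, 16)))   # a slightly perturbed "second view"
prototypes = l2_normalize(rng.normal(size=(4, 16)))
print(swav_loss(z1, z2, prototypes))
```

The equal-partition constraint in the Sinkhorn step is what prevents the trivial solution in which every sample is assigned to the same prototype.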

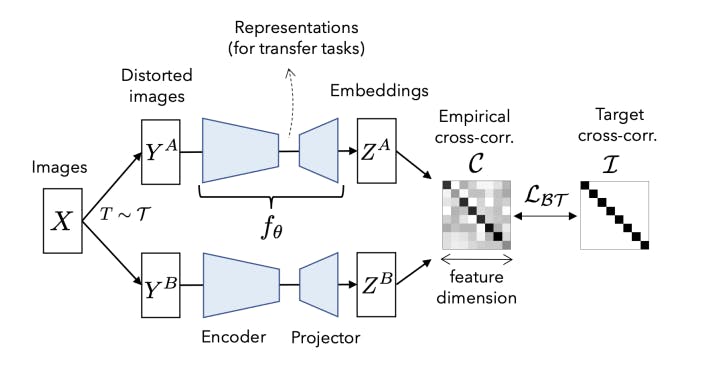

Barlow Twins

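Barlow Twins is a self-supervised learning framework that reduces redundancy between the dimensions of the learned representations. It computes the cross-correlation matrix between the embeddings of two augmented views of the same batch and pushes this matrix toward the identity: the diagonal terms are driven to 1, making the representation invariant to the augmentations, while the off-diagonal terms are driven to 0, decorrelating the individual embedding dimensions.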

Barlow Twins: Self-Supervised Learning via Redundancy Reduction

By reducing redundancy between embedding dimensions, Barlow Twins aims to capture more unique and informative features in the learned representations. The framework has demonstrated impressive performance in computer vision, with results on par with the state of the art on multiple benchmark datasets.
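A NumPy sketch of the Barlow Twins objective: standardize each embedding dimension over the batch, form the D × D cross-correlation matrix C between the two views, then penalize (1 − C_ii)² on the diagonal and, weighted by λ, C_ij² off the diagonal. λ = 5e-3 follows the value reported in the Barlow Twins paper; the function name is illustrative.

```python
import numpy as np

def barlow_twins_loss(z1, z2, lambd=5e-3):
    """Barlow Twins loss on (N, D) embeddings of two augmented views of the same batch."""
    n = z1.shape[0]
    z1 = (z1 - z1.mean(axis=0)) / z1.std(axis=0)      # standardize each dimension over the batch
    z2 = (z2 - z2.mean(axis=0)) / z2.std(axis=0)
    c = z1.T @ z2 / n                                 # (D, D) cross-correlation matrix
    on_diag = np.sum((np.diagonal(c) - 1.0) ** 2)     # invariance: diagonal toward 1
    off_diag = np.sum(c ** 2) - np.sum(np.diagonal(c) ** 2)  # redundancy reduction: off-diagonal toward 0
    return on_diag + lambd * off_diag

rng = np.random.default_rng(0)
z = rng.normal(size=(1000, 4))
print(barlow_twins_loss(z, z))                          # near 0: identical, decorrelated views
print(barlow_twins_loss(z, rng.normal(size=(1000, 4))))  # near 4: views share no information
```

Unlike the other contrastive losses, no pair of samples is ever compared directly; the objective operates on statistics of embedding dimensions across the batch.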

By leveraging the principles of contrastive learning and incorporating innovative methodologies, these frameworks have paved the way for advancements in various domains, including computer vision, natural language processing, and reinforcement learning.

Contrastive Learning: Key Takeaways

- Contrastive learning is a powerful technique for learning meaningful representations from unlabeled data, leveraging similarity and dissimilarity to map instances in a latent space.

- It encompasses supervised contrastive learning (SCL) with labeled data and self-supervised contrastive learning (SSCL) with pretext tasks for unlabeled data.

- Important components include data augmentation, an encoder network, and a projection network, which together capture relevant features and similarities.

- Common loss functions used in contrastive learning are contrastive loss, triplet loss, N-pair loss, InfoNCE loss, and logistic loss.

- Contrastive learning has diverse applications, such as semi-supervised learning, supervised learning, NLP, and data augmentation, and it improves model performance and generalization.


- Contrastive learning is a machine learning technique that aims to learn meaningful representations by contrasting positive and negative pairs of instances. It leverages the assumption that similar instances should be closer together in a learned embedding space, while dissimilar instances should be farther apart.

- Self-supervised contrastive learning differs from traditional supervised learning in that it doesn't rely on explicit labels for training. Instead, it learns representations by contrasting positive and negative pairs of data points. This makes contrastive learning suitable for scenarios where labeled data is limited or unavailable.

- Contrastive learning can be applied to various types of data, including images, text, audio, and other types of structured and unstructured data. As long as there are meaningful similarities and dissimilarities between instances, contrastive learning can be used to learn representations from the data.

- Contrastive learning can be beneficial for small datasets because it leverages unlabeled data to enhance representation learning. By learning from a combination of labeled and unlabeled data, contrastive learning can help improve the model's generalization and performance on small datasets.

- Contrastive learning can be combined with other learning techniques to further enhance representation learning. For example, contrastive learning can be used in conjunction with transfer learning, where pre-trained representations from one task or domain are fine-tuned on a target task. This combination allows leveraging the benefits of both techniques for improved performance.

Related blogs

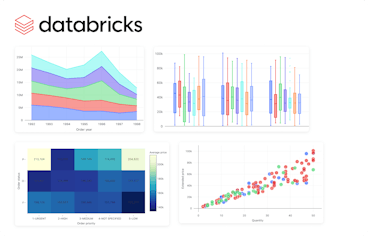

Visualizations in Databricks

With data becoming a pillar stone of a company’s growth strategy, the market for visualization tools is growing rapidly, with a projected compound annual growth rate (CAGR) of 10.07% between 2023 and 2028. The primary driver of these trends is the need for data-driven decision-making, which involves understanding complex data patterns and extracting actionable insights to improve operational efficiency. PowerBI and Tableau are traditional tools with interactive workspaces for creating intuitive dashboards and exploring large datasets. However, other platforms are emerging to address the ever-changing nature of the modern data ecosystem. In this article, we will discuss the visualizations offered by Databricks - a modern enterprise-scale platform for building data, analytics, and artificial intelligence (AI) solutions. Databricks Databricks is an end-to-end data management and model development solution built on Apache Spark. It lets you create and deploy the latest generative AI (Gen AI) and large language models (LLMs). The platform uses a proprietary Mosaic AI framework to streamline the model development process. It provides tools to fine-tune LLMs seamlessly through enterprise data and offers a unified service for experimentation through foundation models. In addition, it features Databricks SQL, a state-of-the-art lakehouse for cost-effective data storage and retrieval. It lets you centrally store all your data assets in an open format, Delta Lake, for effective governance and discoverability. Further, Databricks SQL has built-in support for data visualization, which lets you extract insights from datasets directly from query results in the SQL editor. Users also benefit from the visualization tools featured in Databricks Notebooks, which help you build interactive charts by using the Plotly library in Python. Through these visualizations, Databricks offers robust data analysis for monitoring data assets critical to your AI models. 
So, let’s discuss in more detail the types of chart visualizations, graphs, diagrams, and maps available on Databricks to help you choose the most suitable visualization type for your use case. Effective visualization can help with effortless data curation. Learn more about how you can use data curation for computer vision Visualizations in Databricks As mentioned earlier, Databricks provides visualizations through Databricks SQL and Databricks Notebooks. The platform lets you run multiple SQL queries to perform relevant aggregations and apply filters to visualize datasets according to your needs. Databricks also allows you to configure settings related to the X and Y axes, legends, missing values, colors, and labels. Users can also download visualizations in PNG format for documentation purposes. The following sections provide an overview of the various visualization types available in these two frameworks, helping you select the most suitable option for your project. Bar Chart Bar charts are helpful when you want to compare the frequency of occurrence of different categories in your dataset. For instance, you can draw a bar chart to compare the frequency of various age groups, genders, ethnicities, etc. Additionally, bar charts can be used to view the sum of the prices of all orders placed in a particular month and group them by priority. Bar chart The result will show the months on the X-axis and the sum of all the orders categorized by priority on the Y-axis. Line Line charts connect different data points through straight lines. They are helpful when users want to analyze trends over some time. The charts usually show time on the X-axis and some metrics whose trajectory you want to explore on the Y-axis. Line chart For instance, you can view changes in the average price of orders over the years grouped by priority. The trends can help you predict the most likely future values, which can help you with financial projections and budget planning. 

Pie Chart

Pie charts display the proportion of different categories in a dataset. They divide a circle into multiple segments, each showing the proportion of a particular category, with the segment size proportional to the category's percentage of the total.

For instance, you can visualize the proportion of orders for each priority. The visualization is helpful when you want a quick overview of data distribution across different segments. It can help you analyze demographic patterns, the market share of different products, budget allocation, etc.

Scatter Plot

A scatter plot displays each data point as a dot, showing the relationship between two variables. Users can also color the dots by group to compare that relationship across different groups.

For instance, you can plot the relationship between quantity and price for different color-coded item categories. The visualization helps in understanding the correlation between two variables. However, users must interpret the relationship cautiously, as correlation does not imply causation. Deeper statistical analysis is necessary to uncover causal factors.

Area Charts

Area charts build on line charts by filling the area underneath each line with a color representing a particular category. They show how the contribution of a specific category changes relative to others over time.

For instance, you can visualize which order priority contributed the most to revenue by plotting the total price of different order priorities across time. The visualization helps you analyze the composition of a specific metric and how that composition varies over time. It is particularly beneficial in analyzing sales growth patterns for different products, as you can see which product contributed the most to growth over time.

Box Chart

Box charts concisely represent data distributions of numerical values for different categories.
They show the distribution's median, skewness, interquartile range, and value range.

For instance, the box can display the median price through a line inside the box and the interquartile range through the top and bottom edges of the box. The extended lines (whiskers) mark the minimum and maximum price values, showing the price range. The chart helps you compare distributions across multiple categories and detect outliers. You can also see the variability of values across categories and examine which category was the most stable.

Bubble Chart

Bubble charts extend scatter plots by letting you visualize the relationship between three variables on a two-dimensional grid. The bubble position represents how the variable on the X-axis relates to the variable on the Y-axis. The bubble size represents the magnitude of a third variable, showing how it changes as the values of the first two variables change.

The visualization is helpful for multi-dimensional datasets and provides greater insight when analyzing demographic data. However, as with scatter plots, users must not mistake correlation for causation.

Combo Chart

Combo charts combine line and bar charts to represent key trends in continuous and categorical variables. The categorical variable is on the X-axis, while the continuous variables are on the Y-axis.

For instance, you can analyze how the average price varies with the average quantity according to shipping date. The visualization helps summarize complex relationships between three variables on a two-dimensional graph. However, unambiguous interpretation requires careful configuration of labels, colors, and legends.

Heatmap Chart

Heatmap charts represent data in a matrix format, with each cell colored according to the numerical value of a specific variable.
The colors change according to value intensity, typically with darker colors for lower values and lighter colors for higher values.

For instance, you can visualize how the average price varies according to order priority and order status. Heatmaps are particularly useful for analyzing the strength of the correlation between two variables. They also help detect outliers by representing unusual values through distinct colors. However, interpreting the chart requires proper scaling to ensure the colors do not misrepresent intensities.

Histogram

Histograms display the frequency of particular value ranges to show data distribution patterns. The X-axis contains the value ranges organized as bins, and the Y-axis shows the frequency of each bin.

For instance, you can visualize the frequency of different price ranges to understand the price distribution of your orders. The visualization lets you analyze data spread and skewness. It is beneficial in deeper statistical analysis, where you want to derive probabilities and build predictive models.

Pivot Tables

Pivot tables let you rearrange tabular displays through drag-and-drop options and change how records are aggregated. They are an alternative to SQL filters for viewing aggregate values under different conditions.

For instance, you can group total orders by shipping mode and order category. The visualization helps prepare ad-hoc reports and provides important summary information for decision-making. Interactive pivot tables also let users try different arrangements to reveal new insights.

Choropleth Map Visualization

Choropleth map visualization represents color-coded aggregations by geographic location. Regions with higher value intensities have darker colors, while those with lower intensities have lighter shades.

For instance, you can visualize the total revenue coming from different countries.
This visualization helps determine global presence and highlight disparities across borders. The insights allow you to develop marketing strategies tailored to regional tastes and behavior.

Funnel Visualization

Funnel visualization depicts data aggregations categorized according to specific steps in a pipeline. It represents each step, from top to bottom, with a bar and overlays the associated value as a label on each bar. It also displays cumulative percentage values showing the proportion of the aggregated value at each stage.

For instance, you can determine the incoming revenue at each stage of the ordering process. This visualization is particularly helpful in analyzing marketing pipelines for e-commerce sites, where it shows the proportion of customers who view a product ad, click on it, add the product to the cart, and proceed to checkout.

Cohort Analysis

Cohort analysis offers an intuitive visualization to track the trajectory of a particular metric across different categories, or cohorts.

For instance, you can analyze the number of active users on an app who signed up in different months of the year. The rows depict the sign-up months, and the columns represent the proportion of each cohort's users who remain active in each subsequent month. The visualization helps in retention analysis, as you can determine the proportion of retained customers across the user lifecycle.

Counter Display

Databricks allows you to configure a counter display that explicitly shows how the current value of a particular metric compares with the metric's target value.

For instance, you can check how the average total revenue compares against the target value. In Databricks, the first row represents the current value, and the second is the target. The visualization gives a quick snapshot of performance trends and allows you to quantify goals for better strategizing.
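The cohort table described above is essentially a retention calculation. Here is a minimal standard-library sketch, assuming a hypothetical activity log that records each user's sign-up month and the months in which they were active. Each row is a sign-up cohort, and column k is the share of that cohort still active k months after signing up.

```python
from collections import defaultdict

# Hypothetical activity log: user -> (sign-up month, set of months the user was active).
# Months are plain integers (0 = January, 1 = February, ...) to keep the arithmetic simple.
USERS = {
    "u1": (0, {0, 1, 2}),
    "u2": (0, {0, 2}),
    "u3": (1, {1, 2}),
    "u4": (1, {1}),
}


def retention_matrix(users, horizon):
    """Return {cohort: [share of cohort active k months after sign-up, for k in range(horizon)]}."""
    cohorts = defaultdict(list)
    for signup_month, active_months in users.values():
        cohorts[signup_month].append(active_months)

    matrix = {}
    for cohort, members in sorted(cohorts.items()):
        row = []
        for offset in range(horizon):
            # A member counts as retained if they were active `offset` months after sign-up.
            retained = sum(1 for active in members if cohort + offset in active)
            row.append(retained / len(members))
        matrix[cohort] = row
    return matrix


if __name__ == "__main__":
    for cohort, row in retention_matrix(USERS, horizon=3).items():
        print(cohort, [f"{share:.0%}" for share in row])
```

With this sample data, the January cohort (0) comes out as [1.0, 0.5, 1.0]. Later cohorts have fewer observed months, so a trailing 0.0 can mean "not observed yet" rather than "churned"; a real query would cap each row at the end of the observation window.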

Sankey Diagrams

Sankey diagrams show how data flows between different entities or categories. Entities are displayed as nodes on either side of a two-dimensional grid, and connecting links show the direction of each flow. The width of a link represents the magnitude of the value flowing from one entity to the other.

For instance, you can analyze traffic flows from one location to another. Sankey diagrams can help data engineering teams analyze data flows across different platforms or servers. The analysis can help identify bottlenecks, redundancies, and resource constraints for optimization planning.

Sunburst Sequence

The sunburst sequence visualizes hierarchical data through concentric circles. Each circle represents a level in the hierarchy and has multiple segments, with each segment representing a proportion of the data at that level. Segments are also color-coded to distinguish between categories within a particular level.

For instance, you can visualize the population of different world regions through a sunburst sequence. The innermost circle represents continents, the middle one shows regions, and the outermost circle displays the countries within each region. The visualization helps data science teams analyze relationships between nested data structures. This information helps you define the clear data labels needed for model training.

Table

A table represents data in a structured format with rows and columns. Databricks offers additional functionality to hide, reformat, and reorder data. Tables help summarize information in structured datasets, and you can use them for further analysis through SQL queries.

Word Cloud

Word cloud visualizations display words in different sizes according to their frequency in textual data. For instance, you can analyze customer comments or feedback and gauge overall sentiment based on the most frequent words.
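A word cloud is driven by word frequencies. The sketch below counts words across hypothetical customer comments and maps the counts linearly onto font sizes. The stop-word list, the tokenizing regular expression, and the linear size scaling are simplifying assumptions, not a description of how Databricks renders its word clouds.

```python
import re
from collections import Counter

# Illustrative stop-word list (an assumption); real pipelines use a much fuller one.
STOP_WORDS = {"a", "an", "and", "but", "is", "it", "of", "the", "to", "was"}


def word_frequencies(comments):
    """Count lowercase words across all comments, skipping stop words."""
    counts = Counter()
    for comment in comments:
        for word in re.findall(r"[a-z']+", comment.lower()):
            if word not in STOP_WORDS:
                counts[word] += 1
    return counts


def font_sizes(counts, min_size=10.0, max_size=48.0):
    """Scale counts linearly onto font sizes: rarest word -> min_size, most frequent -> max_size."""
    if not counts:
        return {}
    low, high = min(counts.values()), max(counts.values())
    span = (high - low) or 1  # avoid dividing by zero when every word has the same count
    return {
        word: min_size + (count - low) * (max_size - min_size) / span
        for word, count in counts.items()
    }


if __name__ == "__main__":
    comments = ["Delivery was slow", "Slow delivery and rude support", "Great support"]
    print(font_sizes(word_frequencies(comments)))
```

Because the sizing only reflects raw counts, it illustrates the oversimplification risk: "slow" and "great" are weighted purely by frequency, with no notion of sentiment.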

While word clouds help identify key themes in unstructured textual datasets, they can oversimplify. Users should treat word clouds only as a quick overview and augment textual analysis with more advanced natural language processing techniques.

Visualization is critical to efficient data management. Find out the top tools for data management for computer vision.

Visualizations in Databricks: Key Takeaways

With ever-increasing data volume and variety, visualization is becoming critical for communicating data-based insights quickly and simply. Databricks is a powerful tool with robust visualization types for analyzing complex datasets. Below are a few key points to remember about visualization in Databricks.

Databricks SQL and Databricks Notebooks: Databricks offers advanced visualizations through Databricks SQL and Databricks Notebooks as built-in functionality.

Visualization configurations: Users can configure multiple visualization settings to produce charts, graphs, maps, and diagrams per their requirements.

Visualization types: Databricks offers multiple visualizations, including bar charts, line graphs, pie charts, scatter plots, area graphs, box plots, bubble charts, combo charts, heatmaps, histograms, pivot tables, choropleth maps, funnels, cohort tables, counter displays, Sankey diagrams, sunburst sequences, tables, and word clouds.

Mar 28 2024


Microsoft MORA: Multi-Agent Video Generation Framework

What is Mora?

Mora is a multi-agent framework designed for generalist video generation. Inspired by OpenAI's Sora, it aims to replicate and expand the range of generalist video generation tasks. Sora, famous for producing highly realistic and creative scenes from written instructions, set a new standard by generating videos up to a minute long that closely match the given text descriptions.

Mora distinguishes itself by incorporating several advanced visual AI agents into a cohesive system. This lets it undertake various video generation tasks, including text-to-video generation, text-conditional image-to-video generation, extending generated videos, video-to-video editing, connecting videos, and simulating digital worlds. By coordinating multiple visual agents, Mora can mimic many of Sora's capabilities, a significant contribution to open video generation research.

In this article, you will learn about:

Mora's innovative multi-agent framework for video generation.

The importance of the open-source collaboration that Mora enables.

Mora's approach to complex video generation tasks and instruction fidelity.

The challenges in video dataset curation and quality enhancement.

TL;DR

Mora's novel approach uses multiple specialized AI agents, each handling a different aspect of the video generation process. This design supports a variety of video generation tasks, showing adaptability in creating detailed and dynamic video content from textual descriptions.

Mora aims to address a key problem with current models like Sora: despite its impressive text-to-video abilities 📝🎬, Sora is closed-source, which prevents others from using, studying, or building on it.

However, Mora still faces challenges with dataset quality, video fidelity, and ensuring that outputs align with complex instructions and human preferences. These problems show where more work is needed in the future.

OpenAI Sora's Closed-Source Nature

The closed-source nature of OpenAI's Sora presents a significant challenge to the academic and research communities interested in video generation technologies. Sora's impressive capabilities in generating realistic and detailed videos from text descriptions have set a new standard in the field.

Related: New to Sora? Check out our detailed explainer on the architecture, relevance, limitations, and applications of Sora.

However, without access to its source code or detailed architecture, outside researchers cannot fully understand, replicate, or extend its state-of-the-art performance in video generation. Here are the key challenges arising from Sora's closed-source nature:

Inability to Reverse-Engineer

Without access to Sora's source code, algorithms, and detailed methodology, the research community faces substantial obstacles in dissecting and understanding the mechanisms behind its exceptional performance. This lack of transparency makes it difficult for other researchers to learn from and build upon Sora's advancements, potentially slowing the pace of innovation in video generation.

Extensive Training Datasets

Sora's performance is not just the result of sophisticated modeling and algorithms; it also benefits from training on extensive and diverse datasets. Because researchers cannot access comparable datasets, copying or improving on Sora's work is very hard.

High-quality, large-scale video datasets are crucial for training generative models, especially those capable of creating detailed, realistic videos from text descriptions. However, these datasets are often difficult to compile due to copyright issues, the sheer volume of data required, and the need for diverse, representative samples of the real world.
Creating, curating, and maintaining high-quality video datasets requires significant resources, including copyright permissions, data storage, and management capabilities. Sora's closed nature worsens these challenges by offering no insight into how its datasets were compiled, leaving researchers to navigate these obstacles independently.

Computational Power

Creating and training models like Sora requires significant computational resources, often involving large clusters of high-end GPUs or TPUs running for extended periods. Many researchers and institutions cannot afford this much computing power, which widens the gap between open-source projects like Mora and proprietary models like Sora. Without comparable resources, it is hard to run the experiments (with different architectures, hyperparameters, and training regimes) needed to achieve similar breakthroughs in video generation.

Learn more about these limitations in the technical paper.

Evolution: Text-to-Video Generation

Text-to-video generation has advanced significantly over the years, with each approach and architecture contributing uniquely to the field's growth. Here's a summary of these evolutionary stages, as highlighted in the Mora paper's discussion of text-to-video generation:

GANs (Generative Adversarial Networks)

Early attempts at video generation leveraged GANs, which consist of two competing networks: a generator that creates images or videos intended to be indistinguishable from real ones, and a discriminator that tries to tell real and generated outputs apart. Despite their success in image generation, GANs faced challenges in video generation due to the added complexity of temporal coherence and higher-dimensional data.

Generative Video Models

Moving beyond GANs, the field saw the development of generative video models designed to produce dynamic sequences.
Unlike static image generation, these models must produce realistic frames one after another while keeping them temporally consistent, which remains a challenge.

Auto-Regressive Transformers

Auto-regressive transformers were a big step forward because they generate video sequences frame by frame. These models predict each new frame from the previously generated frames, introducing a sequential element that mirrors the temporal progression of videos. However, this approach often struggled to stay coherent over longer sequences.

Large-Scale Diffusion Models

Diffusion models, known for their capacity to generate high-quality images, were extended to video generation. These models gradually refine random noise into a coherent output, and video diffusion models apply this iterative denoising process across the temporal dimension as well.

Related: Read our guide on HuggingFace's Dual-Stream Diffusion Net for Text-to-Video Generation.

Image Diffusion U-Net

Adapting the U-Net architecture from image diffusion models to video content was critical. This approach extended the principles of image generation to videos, using a U-Net that operates over sequences of frames to maintain spatial and temporal coherence.

3D U-Net Structure

The move to a 3D U-Net structure allowed more nuanced handling of video data by accounting for the extra temporal dimension. It also made time-dependent changes easier to model, improving the generation of coherent and dynamic video content.

Latent Diffusion Models (LDMs)

LDMs generate content in a latent space rather than directly in pixel space. This approach reduces computational costs and allows more efficient handling of high-dimensional video data. LDMs have been shown to better capture the complex dynamics of video content.

Diffusion Transformers

Diffusion transformers (DiT) combine the strengths of transformers in handling sequential data with the generative capabilities of diffusion models.
This results in high-quality video outputs that are visually compelling and temporally consistent.

Useful: Stable Diffusion 3 is an example of a multimodal diffusion transformer model that generates high-quality images from text. Check out our explainer on how it works.

AI Agents: Advanced Collaborative Multi-agent Structures

The paper highlights the critical role of collaborative, multi-agent structures in developing Mora, emphasizing their efficacy in handling multimodal tasks and improving video generation capabilities. Here's a concise overview based on the paper's discussion of AI agents and their collaborative frameworks:

Multimodal Tasks

Advanced collaborative multi-agent structures address multimodal tasks, which involve processing and generating complex data across different modes such as text, images, and videos. These structures help integrate various AI agents, each specialized in a specific aspect of the video generation process, from understanding textual prompts to creating visually coherent sequences.

Cooperative Agent Framework (Role-Playing)

The cooperative agent framework, characterized by role-playing, is central to the operation of these multi-agent structures. In this framework, each agent is assigned a unique role or function, such as prompt enhancement, image generation, or video editing. By defining these roles, the framework ensures that the agent best suited to each task handles that step of the video generation process, increasing overall efficiency and output quality.

Multi-Agent Collaboration Strategy

The multi-agent collaboration strategy emphasizes orchestrated interaction between agents to achieve a common goal. In Mora, this strategy involves sequential and sometimes parallel processing of tasks by various agents. For instance, one agent might enhance an initial text prompt, another might convert it into an image, and yet another might transform that image into a video sequence.
This collaborative approach allows for flexible and dynamic generation of video content that aligns with user prompts.

AutoGen (Generic Programming Framework)

A notable example of multi-agent collaboration in practice is AutoGen. This generic programming framework is designed to automate the assembly and coordination of multiple AI agents for a wide range of applications. In the context of video generation, AutoGen can streamline the configuration of agents to match the requirements of each task, so that complex video content can be generated from textual or image-based prompts.

Mora drone to butterfly flythrough shot.

Role of an AI Agent

The paper outlines an architecture involving multiple AI agents, each serving a specific role in the video generation process. Here's a closer look at the role of each AI agent within the framework:

Illustration of how to use Mora to conduct video-related tasks.

Prompt Selection and Generation Agent

This agent processes and optimizes textual prompts so that other agents can work with them further. Here are the key techniques used in Mora:

GPT-4: This agent uses the generative capabilities of GPT-4 to produce high-quality prompts that are detailed and rich in context.

Prompt Selection: This involves selecting or enhancing textual prompts to ensure they are optimally prepared for the subsequent video generation process. This step is crucial for generating images and videos that closely align with the user's intent.

Good Read: Interested in GPT-4 Vision alternatives? Check out our blog post.

Text-to-Image Generation Agent

This agent uses a pre-trained large text-to-image model to convert the prompts into initial images. Starting from a strong pre-trained model helps produce high-quality images, laying a solid foundation for the video generation process.
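To make the role-playing hand-off concrete, here is a minimal Python sketch in which each role name maps to one callable agent and each task is a fixed recipe of agent calls: prompt enhancement, then text-to-image, then image-to-video for text-to-video; seeding from the final frame to extend a clip; editing the first frame to edit a clip; and bridging the last and first frames to connect two clips. `AgentTeam`, `make_stub_team`, and the role names are hypothetical and not taken from Mora's code, and the string "frames" stand in for real model outputs. It also assumes the image-to-video agent repeats its seed frame as the first frame of its output.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Frame = str          # stand-in for an image; a real system would pass tensors
Video = List[Frame]  # stand-in for a sequence of frames


@dataclass
class AgentTeam:
    """Hypothetical role-playing team: each role name maps to one callable agent."""

    roles: Dict[str, Callable]
    trace: List[str] = field(default_factory=list)

    def call(self, role: str, *args):
        self.trace.append(role)  # record which agent handled each step
        return self.roles[role](*args)

    def text_to_video(self, prompt: str) -> Video:
        enhanced = self.call("prompt", prompt)              # prompt selection/generation
        first_frame = self.call("text_to_image", enhanced)  # initial still image
        return self.call("image_to_video", first_frame, enhanced)

    def extend_video(self, video: Video, prompt: str) -> Video:
        # Seed from the final frame; assumes the new clip starts with a copy of its seed.
        continuation = self.call("image_to_video", video[-1], prompt)
        return video + continuation[1:]

    def edit_video(self, video: Video, instruction: str) -> Video:
        # Edit the first frame, then regenerate the clip from the edited frame.
        edited_first = self.call("image_to_image", video[0], instruction)
        return self.call("image_to_video", edited_first, instruction)

    def connect_videos(self, first: Video, second: Video) -> Video:
        # Bridge the last frame of the first clip and the first frame of the second.
        bridge = self.call("connect", first[-1], second[0])
        return first + bridge + second


def make_stub_team(frames_per_clip: int = 3) -> AgentTeam:
    """String-based stand-ins for the real models, so the control flow can be run."""
    return AgentTeam(roles={
        "prompt": lambda p: f"{p}, detailed",
        "text_to_image": lambda p: f"img[{p}]",
        "image_to_image": lambda img, instr: f"edit[{img} | {instr}]",
        "image_to_video": lambda img, p: [img] + [f"{img}~{i}" for i in range(1, frames_per_clip)],
        "connect": lambda last, first: [f"bridge[{last} -> {first}]"],
    })


if __name__ == "__main__":
    team = make_stub_team()
    clip = team.text_to_video("a drone over a field")
    print(team.extend_video(clip, "keep flying"))
    print(team.trace)
```

Keeping each role behind a plain callable is what makes the framework modular in this sketch: swapping the stub for a real model changes one dictionary entry, not the task recipes.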

Image-to-Image Generation Agent

This agent specializes in image-to-image generation, taking initial images and editing them based on new prompts or instructions. This ability allows for a high degree of customization and refinement in video creation. Here are the core techniques and models it relies on:

Core Components: It builds on two pre-trained models: GPT-3 for understanding and generating text-based instructions, and Stable Diffusion for translating these instructions into visual content.

Prompt-to-Prompt Technique: The prompt-to-prompt technique guides the transformation from an initial image to an edited image that follows the instruction.

Classifier-Free Guidance: Classifier-free guidance improves the fidelity of the generated images to the textual instructions so that the results remain true to the user's vision.

Image-to-Video Generation Agent

This agent transforms static images into dynamic video sequences, extending the visual narrative by generating coherent frames. It is pivotal in producing videos that capture the essence of the provided descriptions.

Stable Video Diffusion (SVD) and Hierarchical Training Strategy: A model specifically trained to understand and generate video content, using a hierarchical training strategy to improve the quality and coherence of the generated videos.

Video Connection Agent

This agent creates seamless transitions between two distinct video sequences for a coherent narrative flow. Here are the key techniques used:

Pre-Trained Diffusion-Based T2V Model: This agent uses a pre-trained diffusion-based model specialized in text-to-video (T2V) tasks to connect separate video clips into a cohesive narrative.

Text-Based Control: This method uses textual descriptions to guide the generation of transition videos that seamlessly connect disparate video clips, ensuring logical progression and thematic consistency.

Image-to-Video Animation and Autoregressive Video Prediction: These capabilities allow the agent to animate still images into video sequences, predict and generate future video frames based on previous sequences, and create extended, coherent video narratives.

Mora's Video Generation Process

Mora's video generation method is a complex, multi-step process that draws on the capabilities of the specialized AI agents in its framework. This process allows Mora to tackle various video generation tasks, from creating videos from text descriptions to editing and connecting existing videos. Here's an overview of how Mora handles each task:

Mora's video generation process.

Text-to-Video Generation

This task begins with a detailed textual prompt from the user. The Text-to-Image Generation Agent converts the prompt into an initial static image. This image serves as the basis for the Image-to-Video Generation Agent, which creates a dynamic sequence that captures the essence of the original text and produces a coherent video narrative.

Text-Conditional Image-to-Video Generation

This task combines a textual prompt with a specific starting image. Mora first refines the input with the Prompt Selection and Generation Agent so that the text and image are optimally prepared for video generation. The Image-to-Video Generation Agent then takes over, generating a video that evolves from the initial image and aligns with the textual description.

Extend Generated Videos

To extend an existing video, Mora uses the final frame of the input video as a launchpad. The Image-to-Video Generation Agent crafts additional sequences that logically continue from the last frame, extending the video while maintaining narrative and visual continuity.

Video-to-Video Editing

In this task, Mora edits existing videos based on new textual prompts. The Image-to-Image Generation Agent first edits the video's initial frame according to the new instructions.
The Image-to-Video Generation Agent then generates a new video sequence from the edited frame, incorporating the desired changes into the video content.

Connect Videos

Connecting two videos involves creating a transition between them. Mora uses the Video Connection Agent, which analyzes the first video's final frame and the second video's initial frame. It then generates a transition video that smoothly links the two segments into a cohesive narrative.

Simulating Digital Worlds

In this task, Mora generates video sequences that simulate digital or virtual environments. The process involves appending specific style cues (e.g., "in digital world style") to the textual prompt, guiding the Image-to-Video Generation Agent to create a sequence that reflects the aesthetics of a digital realm. This can involve stylistically transforming real-world images into digital representations or generating new content in the specified digital style.

See Also: Read our explainer on Google's Video Gaming Companion: Scalable Instructable Multiworld Agent [SIMA].

Mora: Experimental Setup

As detailed in the paper, the experimental setup for evaluating Mora is comprehensive and methodically designed to assess the framework's performance across various dimensions of video generation. Here's a breakdown of the setup:

Baseline

The baseline for comparison includes existing video generation models that show competitive performance: Videocrafter, Show-1, Pika, Gen-2, ModelScope, LaVie-Interpolation, LaVie, and CogVideo. These models serve as a reference point for evaluating Mora's advancements and its position relative to the current state of the art.

Basic Metrics

The evaluation framework comprises several metrics that quantify Mora's performance across different dimensions of video quality and condition consistency:

Video Quality Measurement

Object Consistency: Measures the stability of object appearances across video frames.

Background Consistency: Assesses the uniformity of the background throughout the video.

Motion Smoothness: Evaluates the fluidity of motion within the video.

Aesthetic Score: Gauges the artistic and visual appeal of the video.

Dynamic Degree: Quantifies the level of dynamic action or movement in the video.

Imaging Quality: Assesses the overall visual quality of the video, including clarity and resolution.

Video Condition Consistency Metric

Temporal Style: Measures how consistently the video reflects the temporal aspects (e.g., pacing, progression) described in the textual prompt.

Appearance Style: Evaluates how closely the video's visual style follows the descriptions provided in the prompt, ensuring that the generated content matches the intended appearance.

Self-Defined Metrics

Video-Text Integration (VideoTI): Measures the model's fidelity to textual instructions by comparing text representations of input images and generated videos.

Temporal Consistency (TCON): Evaluates the coherence between an original video and its extended version, providing a metric for assessing the integrity of extended video content.

Temporal Coherence (Tmean): Quantifies the correlation between the intermediate generated videos and the input videos, measuring overall temporal coherence.

Video Length: Quantifies the duration of the generated video content, indicating the model's capacity to produce videos of varying lengths.

Implementation Details

The experiments use high-performance hardware, specifically TESLA A100 GPUs with substantial VRAM. This setup ensures that Mora and the baseline models are evaluated under conditions that allow them to fully express their video generation capabilities. The choice of hardware reflects the computational intensity of training and evaluating state-of-the-art video generation models.

Mora video generation - Fish underwater flythrough.

Limitations of Mora

The paper outlines several limitations of the Mora framework.
Here's a summary of these key points:

Curating High-Quality Video Datasets

Access to high-quality video datasets is a major challenge for training advanced video generation models like Mora. Copyright restrictions and the sheer volume of data required make it difficult to curate diverse and representative datasets that can train models capable of generating realistic and varied video content.

Read Also: The Full Guide to Video Annotation for Computer Vision.

Quality and Length Gaps

While Mora demonstrates impressive capabilities, it lags noticeably behind state-of-the-art models like Sora in quality and maximum video length. This limitation is particularly evident in tasks requiring longer videos, where maintaining visual quality and coherence becomes increasingly challenging.

Simulating videos in Mora vs in Sora.

Instruction Following Capability

Mora sometimes struggles to follow complex or detailed instructions precisely, especially when generating videos that require specific actions, movements, or directionality. This limitation suggests that its understanding and interpretation of textual prompts need further improvement.

Human Visual Preference Alignment

The experimental results may not always align with human visual preferences, particularly in scenarios requiring realistic human movements or the seamless connection of video segments. This misalignment highlights the need to incorporate a more nuanced understanding of physical laws and human dynamics into the video generation process.

Mora Vs. Sora: Feature Comparisons

The paper compares the features of Mora and OpenAI's Sora across various video generation tasks.

Check out the project repository on GitHub.
Mora Multi-Agent Framework: Key Takeaways

The paper "Mora: Enabling Generalist Video Generation via a Multi-Agent Framework" describes Mora, a new framework that advances video generation technology. Thanks to its multi-agent approach, Mora is flexible and adaptable across a wide range of video generation tasks, from creating detailed scenes to simulating complex digital worlds. Because it is open source, it encourages collaboration, fosters new ideas, and lets the wider research community extend and improve its features.

Despite these strengths, Mora faces several challenges: curating high-quality video datasets, gaps in video quality and length compared with Sora, difficulty following complex instructions precisely, and outputs that do not always match human visual preferences. Addressing these problems is necessary for Mora to perform better and to be applied in more situations. With continued development, Mora could change how video content is made, making it more accessible and impactful for both creators and viewers.

Mar 26 2024


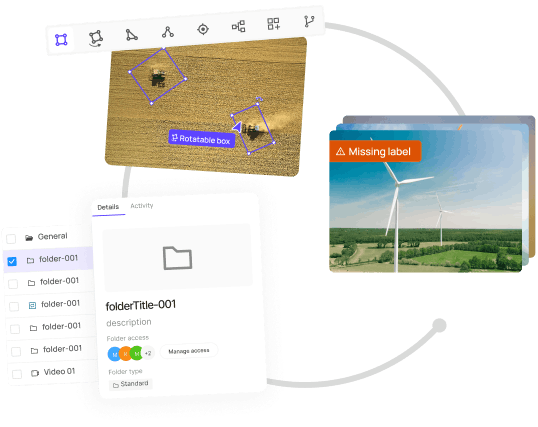

Panoptic Segmentation Updates in Encord

Over the past 6 months, we have updated and built new features within Encord with a strong focus on improving your panoptic segmentation workflows across data, labeling, and model evaluation. Here are the updates we'll cover in this article:

Bitmask lock.
SAM + Bitmask lock + Brush for AI-assisted precision labeling.
Fast and performant rendering of fully bitmask-segmented images and videos.
Panoptic Quality model evaluation metrics.

Bitmask Lock within Encord Annotate to Manage Segmentation Overlap

Our Bitmask Lock feature prevents segmentation masks from overlapping, providing pixel-perfect accuracy for your object segmentation tasks. By toggling the “Bitmask cannot be drawn over” button, you can prevent any part of a bitmask label from being included in another label. This is crucial for applications that require precise object boundaries and pixel-perfect annotations, because it eliminates the risk of overlapping segmentations.

Let's see how to do this within Encord Annotate:

Step 1: Create your first Bitmask

Starting your labeling process with a Bitmask is essential for creating precise object boundaries. If you are new to the Bitmask option, check out our quickstart video walkthrough on creating your first Bitmask using brush tools for labeling.

Step 2: Set Bitmask Overlapping Behavior

Managing how bitmasks overlap is vital for accurate segmentation, especially when multiple objects are close to each other or overlapping. After creating your first bitmask, adjust the overlapping behavior settings to control how subsequent bitmasks interact with existing ones. This lets you delineate separate objects without merging their labels, which is exactly what panoptic segmentation requires. Because no part of a locked bitmask label can be included in another label, this setting is invaluable for creating high-quality datasets for training panoptic segmentation models.
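The lock behavior in Step 2 can be pictured as a simple pixel rule: a new bitmask may only claim pixels that no locked mask already owns. The snippet below is a hypothetical NumPy illustration of that rule, not Encord's implementation or API; `apply_bitmask_lock` is a made-up name, and every mask is assumed to be a boolean array with the image's shape.

```python
import numpy as np


def apply_bitmask_lock(new_stroke, locked_masks):
    """Hypothetical illustration (not Encord's API): keep only the pixels of
    `new_stroke` that are not already owned by any locked mask."""
    if not locked_masks:
        return new_stroke.copy()
    # Union of all locked masks: True wherever some label already owns the pixel.
    occupied = np.logical_or.reduce(locked_masks)
    # The new label may only take pixels outside that union, so labels never overlap.
    return np.logical_and(new_stroke, np.logical_not(occupied))


# Usage: the top row is already labeled "sky" and locked; a brush stroke over
# the whole 3x3 image then only claims the remaining 6 pixels.
sky = np.zeros((3, 3), dtype=bool)
sky[0, :] = True
stroke = np.ones((3, 3), dtype=bool)
road = apply_bitmask_lock(stroke, [sky])
print(int(road.sum()))  # 6
```

Subtracting the union of locked masks, rather than rejecting the whole stroke, mirrors the described behavior of letting you paint freely while keeping every pixel assigned to at most one label.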
Step 3: Lock Bitmasks When Labeling Multiple Instances

Different images require different approaches. Beyond HSV, you can use intensity values for grayscale images (like DICOM) or RGB for color-specific labeling. This flexibility lets you tailor your labeling strategy to the unique attributes of your dataset. Experiment with the different settings (HSV, intensity, and RGB) to select the best approach for your specific labeling task, and adjust the criteria to capture exactly the elements you need.

Step 4: Using the Eraser Tool

Even with careful labeling, adjustments may be necessary. The eraser tool removes unwanted parts of a bitmask label before you finalize it, providing an extra layer of precision. If you've applied a label inaccurately, use the eraser tool to correct errors by removing the unwanted areas of the bitmask. See our documentation to learn more.

Bitmask-Segmented Images and Videos Got a Serious Performance Lift (At Least 5x)

Encord's commitment to user experience and efficiency shows in the significant performance improvements to Bitmask-segmented annotation within the Label Editor. By directly addressing user feedback and pinpointing critical bottlenecks, our Engineering team achieved a performance lift of at least 5x, which speeds up how fast the editor loads your panoptic segmentation labeling instances. Here's a closer look at the differences between the "before" and "after" scenarios:

Before the Performance Improvements:

Performance Lag on Zoom: Users experienced small delays when zooming in on images with many instances (over 100), which hurt the precision and speed of their labeling.

Slow Response to Commands: Basic functions like deselecting tools or simply navigating the label editor responded sluggishly.
Operational Delays: Every action, from image loading to applying labels, was hindered by "a few milliseconds" of delay, which added up to significant time overheads across projects.

After the Performance Enhancements:

Quicker Image Load Time: Image loading, the first step of every task, is noticeably faster, which sets a good pace for the entire labeling task.

Responsiveness: The entire label editor interface, from navigating between tasks to adjusting image views, is now markedly more responsive. This removes the earlier lag-related frustrations and makes for a smoother user experience.

Improved Zoom Functionality: Zooming in and out is significantly more fluid and precise, which is especially valuable for detailed labeling work where accuracy is paramount.

These changes are a direct result of the Engineering team's responsiveness to user feedback, and they give our users renewed confidence in handling future projects with the Label Editor. We are dedicated to improving Encord based on actual user experiences.

Use Segment Anything Model (SAM) and Bitmask Lock for High Annotation Precision

Starting your annotation process can be time-consuming, especially for complex images. Our Segment Anything Model (SAM) integration offers a one-click way to create initial annotations: SAM identifies and segments objects in your image, significantly speeding up annotation while maintaining high accuracy.

Step 1: Select the SAM tool from the toolbar with Bitmask Lock enabled.

Step 2: Click the object you want to segment in your image. SAM automatically generates a precise bitmask for the object.

Step 3: If needed, use the bitmask brush to refine the edges for pixel-perfect segmentation.

See how to use the Segment Anything Model (SAM) within Encord in our documentation.
Validate Segmentation with Panoptic Quality Metrics

You can easily evaluate your segmentation model's panoptic mask quality with new metrics:

mSQ (mean Segmentation Quality)
mRQ (mean Recognition Quality)
mPQ (mean Panoptic Quality)

The platform calculates mSQ, mRQ, and mPQ for your predictions, labels, and dataset, so you can clearly see how well your model segments and where it needs improvement. To view them, navigate to Active, choose the panoptic model you want to evaluate under the Model Evaluation tab, and then, under Display, toggle the Panoptic Quality Metrics (still in beta) option to see the model's mSQ, mRQ, and mPQ scores.

Fast Rendering of Fully Bitmask-Segmented Images within Encord Active

The performance improvement in the Label Editor also carries over to how you view and load panoptic segmentations within Active.

Key Takeaways: Panoptic Segmentation Updates in Encord

Here's a recap of the key features and improvements within Encord that can improve your panoptic segmentation workflows across data and models:

Bitmask Lock: Prevents overlaps in segmentation. It guarantees the integrity of each label, enhancing the quality of the training data and, consequently, the accuracy of machine learning models. This is crucial for projects that require meticulous detail and precision.

SAM + Bitmask Lock + Brush: The Lock feature lets you apply Bitmasks to multiple objects within an image, reducing manual effort and significantly speeding up your annotation process. Together, the SAM integration, Lock for managing Bitmask overlaps, and the generic brush tool let you achieve precise, pixel-perfect labels with minimal effort.

Fast and Performant Rendering of Fully Bitmask-segmented Images and Videos: Encord now renders fully Bitmask-segmented images and videos at least 5x faster across the Annotate Label Editor and Active.
Panoptic Quality Model Evaluation Metrics: The Panoptic Quality Metrics—comprising mean Segmentation Quality (mSQ), mean Recognition Quality (mRQ), and mean Panoptic Quality (mPQ)—provide a comprehensive framework for evaluating the effectiveness of segmentation models.
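To make these scores concrete, here is a minimal sketch of the standard panoptic quality definition. A predicted segment and a ground-truth segment of the same class count as a true positive (TP) when their IoU exceeds 0.5; unmatched predictions are false positives (FP) and unmatched ground-truth segments are false negatives (FN). Per class, SQ is the mean IoU of the matched pairs, RQ = |TP| / (|TP| + 0.5|FP| + 0.5|FN|), and PQ = SQ x RQ; the m-prefixed scores average these over classes. Encord's exact computation (for example, aggregation across images or handling of void and crowd regions) is not described here, so this sketch assumes a single image, non-overlapping boolean NumPy masks grouped by class name, and no void labels. The function names are hypothetical.

```python
import numpy as np


def iou(a, b):
    """Intersection over union of two boolean masks."""
    union = np.logical_or(a, b).sum()
    if union == 0:
        return 0.0
    return float(np.logical_and(a, b).sum() / union)


def class_quality(gt_masks, pred_masks, threshold=0.5):
    """Return (PQ, SQ, RQ) for one class, or None if the class never appears.

    With non-overlapping masks and IoU > 0.5, each segment can match at most once.
    """
    matched_ious = []
    used_preds = set()
    for gt in gt_masks:
        for j, pred in enumerate(pred_masks):
            if j in used_preds:
                continue
            score = iou(gt, pred)
            if score > threshold:
                matched_ious.append(score)
                used_preds.add(j)
                break
    tp = len(matched_ious)
    fp = len(pred_masks) - tp  # predictions with no matching ground truth
    fn = len(gt_masks) - tp    # ground-truth segments the model missed
    if tp + fp + fn == 0:
        return None
    rq = tp / (tp + 0.5 * fp + 0.5 * fn)
    sq = sum(matched_ious) / tp if tp else 0.0
    return sq * rq, sq, rq


def mean_panoptic_quality(gt_by_class, pred_by_class):
    """Average per-class scores over all classes present -> (mPQ, mSQ, mRQ)."""
    scores = []
    for cls in set(gt_by_class) | set(pred_by_class):
        result = class_quality(gt_by_class.get(cls, []), pred_by_class.get(cls, []))
        if result is not None:
            scores.append(result)
    if not scores:
        return 0.0, 0.0, 0.0
    mpq, msq, mrq = (float(np.mean(values)) for values in zip(*scores))
    return mpq, msq, mrq


# Usage: "person" is found with IoU 4/6, "car" is missed entirely.
gt_person = np.zeros((4, 4), dtype=bool)
gt_person[:2, :2] = True      # 4 pixels
pred_person = np.zeros((4, 4), dtype=bool)
pred_person[:2, :3] = True    # 6 pixels, overlapping all 4 ground-truth pixels
gt_car = np.zeros((4, 4), dtype=bool)
gt_car[2:, 2:] = True
print(mean_panoptic_quality({"person": [gt_person], "car": [gt_car]},
                            {"person": [pred_person]}))
# approximately (0.333, 0.333, 0.5)
```

The example shows why the three numbers are reported together: the missed "car" drags down recognition (mRQ), while mSQ reflects only how well matched segments fit, and mPQ combines both.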

Mar 06 2024


Qwen-VL and Qwen-VL-Chat: Introduction to Alibaba’s AI Models