Optimal control systems

- 2. Electrical Engineering Textbook Series. Richard C. Dorf, Series Editor, University of California, Davis. Forthcoming and Published Titles: Applied Vector Analysis, Matiur Rahman and Isaac Mulolani; Continuous Signals and Systems with MATLAB, Taan ElAli and Mohammad A. Karim; Discrete Signals and Systems with MATLAB, Taan ElAli; Electromagnetics, Edward J. Rothwell and Michael J. Cloud; Optimal Control Systems, Desineni Subbaram Naidu.

- 3. OPTIMAL CONTROL SYSTEMS. Desineni Subbaram Naidu, Idaho State University, Pocatello, Idaho, USA. CRC Press, Boca Raton, London, New York, Washington, D.C.

- 4. Cover photo: Terminal phase (using fuel-optimal control) of the lunar landing of the Apollo 11 mission. Courtesy of NASA.

Library of Congress Cataloging-in-Publication Data: Naidu, D. S. (Desineni S.), 1940- Optimal control systems / by Desineni Subbaram Naidu. p. cm. (Electrical engineering textbook series). Includes bibliographical references and index. ISBN 0-8493-0892-5 (alk. paper). 1. Automatic control. 2. Control theory. 3. Mathematical optimization. I. Title. II. Series. 2002067415

This book contains information obtained from authentic and highly regarded sources. Reprinted material is quoted with permission, and sources are indicated. A wide variety of references are listed. Reasonable efforts have been made to publish reliable data and information, but the author and the publisher cannot assume responsibility for the validity of all materials or for the consequences of their use.

Neither this book nor any part may be reproduced or transmitted in any form or by any means, electronic or mechanical, including photocopying, microfilming, and recording, or by any information storage or retrieval system, without prior permission in writing from the publisher. The consent of CRC Press LLC does not extend to copying for general distribution, for promotion, for creating new works, or for resale. Specific permission must be obtained in writing from CRC Press LLC for such copying. Direct all inquiries to CRC Press LLC, 2000 N.W. Corporate Blvd., Boca Raton, Florida 33431.

Trademark Notice: Product or corporate names may be trademarks or registered trademarks, and are used only for identification and explanation, without intent to infringe.

Visit the CRC Press Web site at www.crcpress.com

© 2003 by CRC Press LLC. No claim to original U.S. Government works. International Standard Book Number 0-8493-0892-5. Library of Congress Card Number 2002067415. Printed in the United States of America 1 2 3 4 5 6 7 8 9 0. Printed on acid-free paper.

- 5. "Because the shape of the whole universe is most perfect and, in fact, designed by the wisest Creator, nothing in all of the world will occur in which no maximum or minimum rule is somehow shining forth." Leonhard Euler, 1744

- 6. Dedication. My deceased parents, who shaped my life: Desineni Rama Naidu and Desineni Subbamma; and my teacher, who shaped my education: Buggapati Audi Chetty.

- 7. Preface

Many systems, whether physical, chemical, or economic, can be modeled by mathematical relations such as deterministic and/or stochastic differential and/or difference equations. These systems change with time, or with some other independent variable, according to their dynamical relations. It is possible to steer such systems from one state to another by applying some type of external input or control. If this can be done at all, there may be different ways of doing the same task, and among them there may be one "best" way. This best way can be, for example, the minimum time to go from one state to another, or the maximum thrust developed by a rocket engine. The input given to the system corresponding to this best situation is called the "optimal" control, and the measure of the "best" way, or performance, is called the "performance index" or "cost function." Thus, we have an "optimal control system" when a system is controlled in an optimum way that satisfies a given performance index. The theory of optimal control systems enjoyed a flourishing period of nearly two decades after the dawn of so-called "modern" control theory around the 1960s. Interest in the theoretical and practical aspects of the subject has been sustained by its applications in such diverse fields as electrical power, aerospace, chemical plants, economics, medicine, biology, and ecology.

Aim and Scope

In this book we are concerned essentially with the control of physical systems that are "dynamic," and hence described by ordinary differential or difference equations, in contrast to "static" systems, which are characterized by algebraic equations. Further, our focus is on "deterministic" systems only. The development of optimal control theory in the sixties revolved around the "maximum principle" proposed by the Soviet mathematician L. S. Pontryagin and his colleagues, whose work was published in English in 1962. Further contributions are due to R. E. Kalman of the United States. Since then, many excellent books on optimal control theory, at varying levels of sophistication, have been published. This book is written with the student in mind and is intended to provide a simplified treatment of the subject with an appropriate dose of mathematics. Another feature of this book is that it assembles all the topics that can be covered in a one-semester class. A special feature is the presentation of the procedures as summary tables, each giving a statement of the problem and a step-by-step solution. Further, MATLAB© and SIMULINK©¹, including the Control System and Symbolic Math Toolboxes, have been incorporated into the book. The book is ideally suited for a one-semester, second-level graduate course in control systems and optimization.

Background and Audience

This is a second-level graduate textbook. The background required for using it is a first course on control systems, state space analysis, or linear systems theory. The student is advised to review the material in Appendices A and B at the end of the book. The book is aimed at graduate students in Electrical, Mechanical, Chemical, and Aerospace Engineering and in Applied Mathematics. It can also be used by professional scientists and engineers working in a variety of industries and research organizations.

Acknowledgments

This book has grown out of my lecture notes prepared over many years of teaching at the Indian Institute of Technology (IIT), Kharagpur, and Idaho State University (ISU), Pocatello, Idaho. As such, I am indebted to many of my teachers and students. In recent years at ISU, there are many people whom I would like to thank for their encouragement and cooperation. First of all, I would like to thank the late Dean Hary Charyulu for his encouragement of graduate work and research, which kept me "alive" in the area of optimal control. I would also like to mention a special person, Kevin Moore, whose encouragement and cooperation made my stay at ISU a very pleasant and scholarly productive one during 1990-98.
During the last few years, Dean Kunze and Associate Dean Stuffie have been of great help in providing the right atmosphere for teaching and research work.

¹MATLAB and SIMULINK are registered trademarks of The MathWorks, Inc., Natick, MA, USA.

- 9. Next, my students over the years have been my best critics in providing many helpful suggestions. Among them, special mention must be made of Martin Murillo, Yoshiko Imura, and Keith Fisher, who made several suggestions on my manuscript. In particular, Craig Rieger (of the Idaho National Engineering and Environmental Laboratory, INEEL) deserves special mention for his infinite patience in writing and testing MATLAB© programs to obtain analytical solutions to matrix Riccati differential and difference equations. The camera-ready copy of this book was prepared by the author using LaTeX with PCTeX32² Version 4.0. The figures were drawn using CorelDRAW³ and exported into the LaTeX document. Several people at the publishing company, CRC Press, deserve mention, especially Nora Konopka, Acquisition Editor, Electrical Engineering, for her interest, understanding, and patience in seeing this book to completion. Thanks are also due to Michael Buso, Michelle Reyes, Helena Redshaw, and Judith Simon Kamin. I would like to make special mention of Sean Davey, who helped me with many issues regarding LaTeX. Corrections and suggestions are welcome via email to naiduds@isu.edu.

Finally, it is my pleasant duty to thank my wife, Sita, and my daughters, Radhika and Kiranmai, who have been a great source of encouragement and cooperation throughout my academic life.

Desineni Subbaram Naidu, Pocatello, Idaho, June 2002

²PCTeX is a registered trademark of Personal TeX, Inc., Mill Valley, CA.

³CorelDRAW is a registered trademark of Corel Corporation or Corel Corporation Limited.

- 10. Acknowledgments

The permissions given by the following publishers are hereby acknowledged:

1. Prentice Hall for D. E. Kirk, Optimal Control Theory: An Introduction, Prentice Hall, Englewood Cliffs, NJ, 1970;
2. John Wiley for F. L. Lewis, Optimal Control, John Wiley & Sons, Inc., New York, NY, 1986;
3. McGraw-Hill for M. Athans and P. L. Falb, Optimal Control: An Introduction to the Theory and Its Applications, McGraw-Hill Book Company, New York, NY, 1966; and
4. Springer-Verlag for H. H. Goldstine, A History of the Calculus of Variations, Springer-Verlag, New York, NY, 1980.

- 11. Author's Biography

Desineni "Subbaram" Naidu received his B.E. degree in Electrical Engineering from Sri Venkateswara University, Tirupati, India, and his M.Tech. and Ph.D. degrees in Control Systems Engineering from the Indian Institute of Technology (IIT), Kharagpur, India. He held various positions with the Department of Electrical Engineering at IIT. Dr. Naidu was a recipient of a Senior National Research Council (NRC) Associateship of the National Academy of Sciences, Washington, DC, tenable at NASA Langley Research Center, Hampton, Virginia, during 1985-87 and at the U.S. Air Force Research Laboratory (AFRL) at Wright-Patterson Air Force Base (WPAFB), Ohio, during 1998-99. During 1987-90, he was an adjunct faculty member in the Department of Electrical and Computer Engineering at Old Dominion University, Norfolk, Virginia. Since August 1990, Dr. Naidu has been a professor at Idaho State University. At present he is Director of the Measurement and Control Engineering Research Center; Coordinator, Electrical Engineering program; and Associate Dean of Graduate Studies in the College of Engineering, Idaho State University, Pocatello, Idaho.

Dr. Naidu has over 150 publications, including a research monograph, Singular Perturbation Analysis of Discrete Control Systems, Lecture Notes in Mathematics, 1985; a book, Singular Perturbation Methodology in Control Systems, IEE Control Engineering Series, 1988; and a research monograph, Aeroassisted Orbital Transfer: Guidance and Control Strategies, Lecture Notes in Control and Information Sciences, 1994. Dr. Naidu is (or has been) a member of the Editorial Boards of the IEEE Transactions on Automatic Control (1993-99), the International Journal of Robust and Nonlinear Control (1996-present), and the International Journal of Control-Theory and Advanced Technology (C-TAT) (1992-1996). He is also a member of the Editorial Advisory Board of Mechatronics: The Science of Intelligent Machines, an International Journal (1992-present). Professor Naidu is an elected Fellow of the Institute of Electrical and Electronics Engineers (IEEE), a Fellow of the World Innovation Foundation (WIF), an Associate Fellow of the American Institute of Aeronautics and Astronautics (AIAA), and a member of several other organizations such as SIAM and ASEE. Dr. Naidu received the Idaho State University Outstanding Researcher Award for 1993-94 and 1994-95 and the Distinguished Researcher Award for 1994-95. Professor Naidu's biography is listed (multiple years) in Who's Who among America's Teachers, the Silver Anniversary 25th Edition of Who's Who in the West, Who's Who in Technology, and The International Directory of Distinguished Leadership.

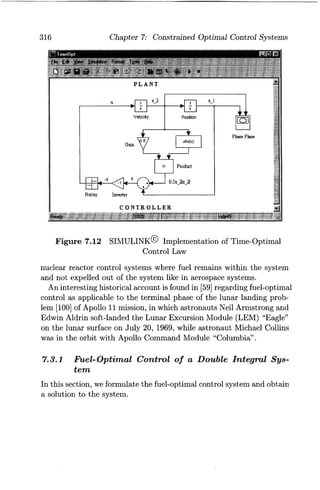

- 13. Contents
  - 1 Introduction
  - 1.1 Classical and Modern Control
  - 1.2 Optimization
  - 1.3 Optimal Control
  - 1.3.1 Plant
  - 1.3.2 Performance Index
  - 1.3.3 Constraints
  - 1.3.4 Formal Statement of Optimal Control System
  - 1.4 Historical Tour
  - 1.4.1 Calculus of Variations
  - 1.4.2 Optimal Control Theory
  - 1.5 About This Book
  - 1.6 Chapter Overview
  - 1.7 Problems
  - 2 Calculus of Variations and Optimal Control
  - 2.1 Basic Concepts
  - 2.1.1 Function and Functional
  - 2.1.2 Increment
  - 2.1.3 Differential and Variation
  - 2.2 Optimum of a Function and a Functional
  - 2.3 The Basic Variational Problem
  - 2.3.1 Fixed-End Time and Fixed-End State System
  - 2.3.2 Discussion on Euler-Lagrange Equation
  - 2.3.3 Different Cases for Euler-Lagrange Equation
  - 2.4 The Second Variation
  - 2.5 Extrema of Functions with Conditions
  - 2.5.1 Direct Method
  - 2.5.2 Lagrange Multiplier Method
  - 2.6 Extrema of Functionals with Conditions
  - 2.7 Variational Approach to Optimal Control Systems

- 14. Contents (continued)
  - 2.7.1 Terminal Cost Problem
  - 2.7.2 Different Types of Systems
  - 2.7.3 Sufficient Condition
  - 2.7.4 Summary of Pontryagin Procedure
  - 2.8 Summary of Variational Approach
  - 2.8.1 Stage I: Optimization of a Functional
  - 2.8.2 Stage II: Optimization of a Functional with Condition
  - 2.8.3 Stage III: Optimal Control System with Lagrangian Formalism
  - 2.8.4 Stage IV: Optimal Control System with Hamiltonian Formalism: Pontryagin Principle
  - 2.8.5 Salient Features
  - 2.9 Problems
  - 3 Linear Quadratic Optimal Control Systems I
  - 3.1 Problem Formulation
  - 3.2 Finite-Time Linear Quadratic Regulator
  - 3.2.1 Symmetric Property of the Riccati Coefficient Matrix
  - 3.2.2 Optimal Control
  - 3.2.3 Optimal Performance Index
  - 3.2.4 Finite-Time Linear Quadratic Regulator: Time-Varying Case: Summary
  - 3.2.5 Salient Features
  - 3.2.6 LQR System for General Performance Index
  - 3.3 Analytical Solution to the Matrix Differential Riccati Equation
  - 3.3.1 MATLAB© Implementation of Analytical Solution to Matrix DRE
  - 3.4 Infinite-Time LQR System I
  - 3.4.1 Infinite-Time Linear Quadratic Regulator: Time-Varying Case: Summary
  - 3.5 Infinite-Time LQR System II
  - 3.5.1 Meaningful Interpretation of Riccati Coefficient
  - 3.5.2 Analytical Solution of the Algebraic Riccati Equation
  - 3.5.3 Infinite-Interval Regulator System: Time-Invariant Case: Summary
  - 3.5.4 Stability Issues of Time-Invariant Regulator

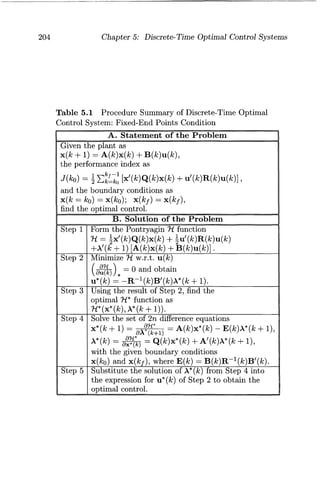

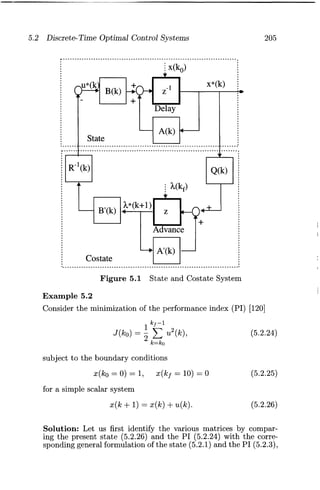

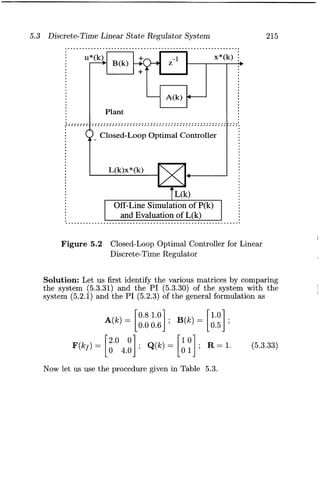

- 15. Contents (continued)
  - 3.5.5 Equivalence of Open-Loop and Closed-Loop Optimal Controls
  - 3.6 Notes and Discussion
  - 3.7 Problems
  - 4 Linear Quadratic Optimal Control Systems II
  - 4.1 Linear Quadratic Tracking System: Finite-Time Case
  - 4.1.1 Linear Quadratic Tracking System: Summary
  - 4.1.2 Salient Features of Tracking System
  - 4.2 LQT System: Infinite-Time Case
  - 4.3 Fixed-End-Point Regulator System
  - 4.4 LQR with a Specified Degree of Stability
  - 4.4.1 Regulator System with Prescribed Degree of Stability: Summary
  - 4.5 Frequency-Domain Interpretation
  - 4.5.1 Gain Margin and Phase Margin
  - 4.6 Problems
  - 5 Discrete-Time Optimal Control Systems
  - 5.1 Variational Calculus for Discrete-Time Systems
  - 5.1.1 Extremization of a Functional
  - 5.1.2 Functional with Terminal Cost
  - 5.2 Discrete-Time Optimal Control Systems
  - 5.2.1 Fixed-Final State and Open-Loop Optimal Control
  - 5.2.2 Free-Final State and Open-Loop Optimal Control
  - 5.3 Discrete-Time Linear State Regulator System
  - 5.3.1 Closed-Loop Optimal Control: Matrix Difference Riccati Equation
  - 5.3.2 Optimal Cost Function
  - 5.4 Steady-State Regulator System
  - 5.4.1 Analytical Solution to the Riccati Equation
  - 5.5 Discrete-Time Linear Quadratic Tracking System
  - 5.6 Frequency-Domain Interpretation
  - 5.7 Problems

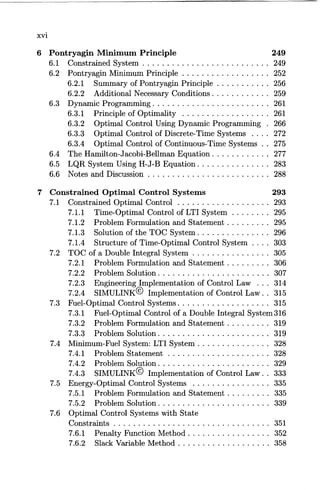

- 16. Contents (continued)
  - 6 Pontryagin Minimum Principle
  - 6.1 Constrained System
  - 6.2 Pontryagin Minimum Principle
  - 6.2.1 Summary of Pontryagin Principle
  - 6.2.2 Additional Necessary Conditions
  - 6.3 Dynamic Programming
  - 6.3.1 Principle of Optimality
  - 6.3.2 Optimal Control Using Dynamic Programming
  - 6.3.3 Optimal Control of Discrete-Time Systems
  - 6.3.4 Optimal Control of Continuous-Time Systems
  - 6.4 The Hamilton-Jacobi-Bellman Equation
  - 6.5 LQR System Using H-J-B Equation
  - 6.6 Notes and Discussion
  - 7 Constrained Optimal Control Systems
  - 7.1 Constrained Optimal Control
  - 7.1.1 Time-Optimal Control of LTI System
  - 7.1.2 Problem Formulation and Statement
  - 7.1.3 Solution of the TOC System
  - 7.1.4 Structure of Time-Optimal Control System
  - 7.2 TOC of a Double Integral System
  - 7.2.1 Problem Formulation and Statement
  - 7.2.2 Problem Solution
  - 7.2.3 Engineering Implementation of Control Law
  - 7.2.4 SIMULINK© Implementation of Control Law
  - 7.3 Fuel-Optimal Control Systems
  - 7.3.1 Fuel-Optimal Control of a Double Integral System
  - 7.3.2 Problem Formulation and Statement
  - 7.3.3 Problem Solution
  - 7.4 Minimum-Fuel System: LTI System
  - 7.4.1 Problem Statement
  - 7.4.2 Problem Solution
  - 7.4.3 SIMULINK© Implementation of Control Law
  - 7.5 Energy-Optimal Control Systems
  - 7.5.1 Problem Formulation and Statement
  - 7.5.2 Problem Solution
  - 7.6 Optimal Control Systems with State Constraints
  - 7.6.1 Penalty Function Method
  - 7.6.2 Slack Variable Method

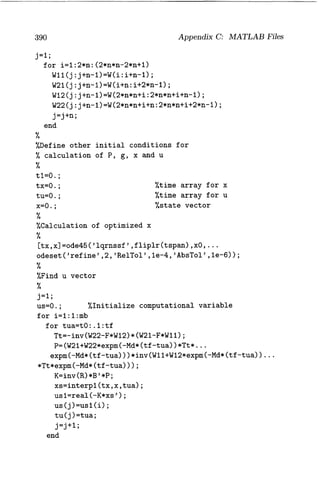

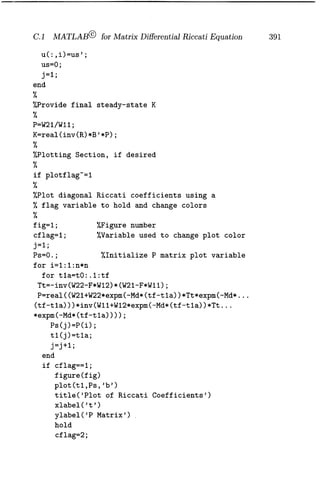

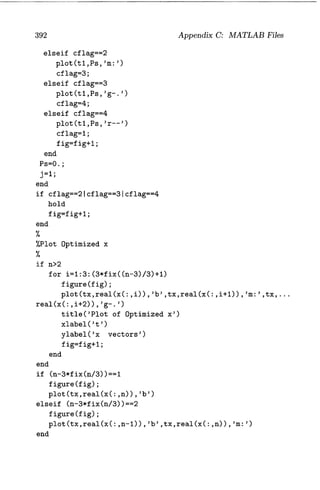

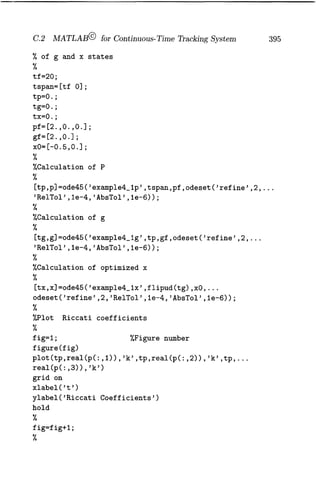

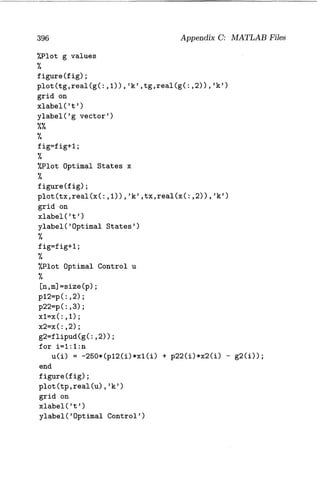

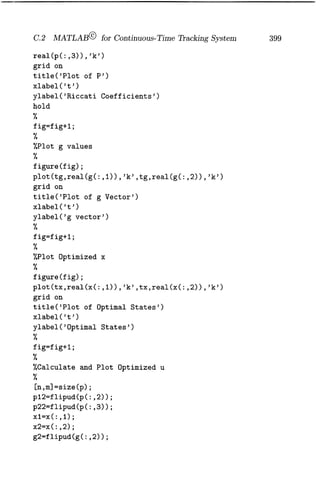

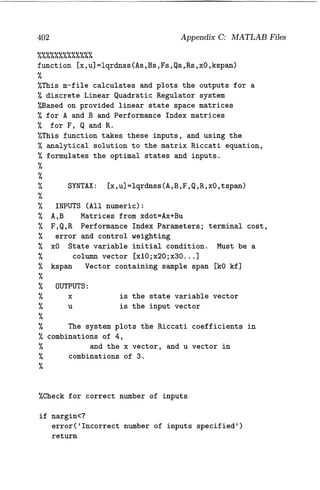

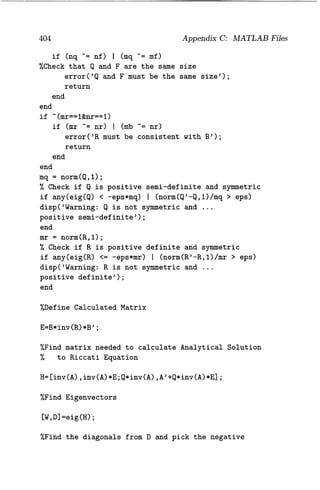

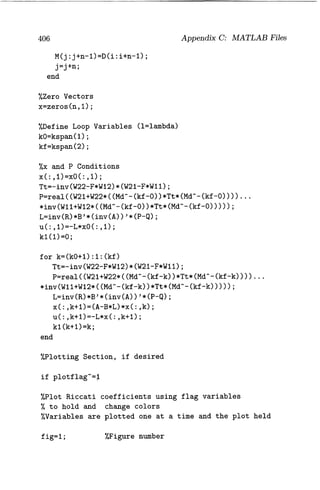

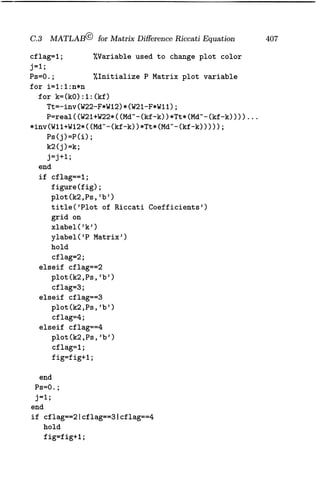

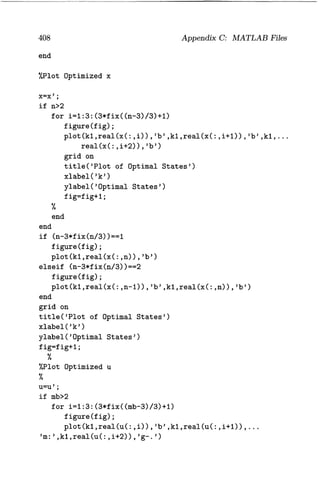

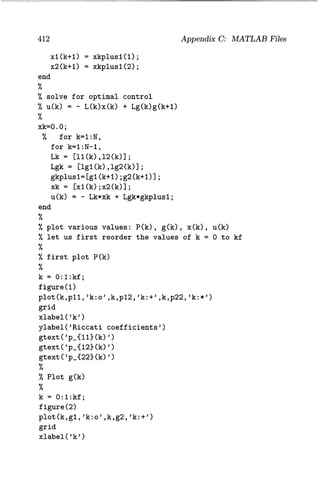

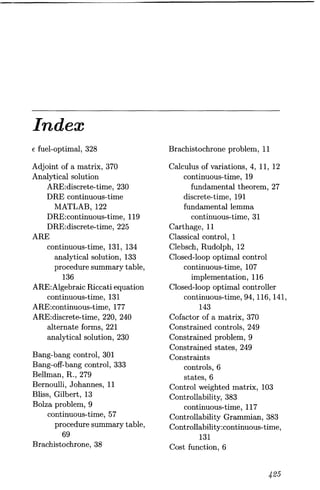

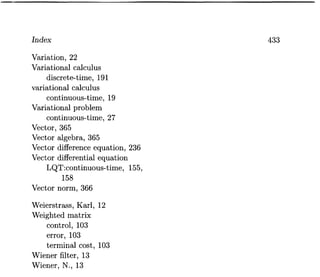

- 17. Contents (continued)
  - 7.7 Problems
  - Appendix A: Vectors and Matrices
  - A.1 Vectors
  - A.2 Matrices
  - A.3 Quadratic Forms and Definiteness
  - Appendix B: State Space Analysis
  - B.1 State Space Form for Continuous-Time Systems
  - B.2 Linear Matrix Equations
  - B.3 State Space Form for Discrete-Time Systems
  - B.4 Controllability and Observability
  - B.5 Stabilizability, Reachability and Detectability
  - Appendix C: MATLAB Files
  - C.1 MATLAB© for Matrix Differential Riccati Equation
  - C.1.1 MATLAB File lqrnss.m
  - C.1.2 MATLAB File lqrnssf.m
  - C.2 MATLAB© for Continuous-Time Tracking System
  - C.2.1 MATLAB File for Example 4.1 (example4_1.m)
  - C.2.2 MATLAB File for Example 4.1 (example4_1p.m)
  - C.2.3 MATLAB File for Example 4.1 (example4_1g.m)
  - C.2.4 MATLAB File for Example 4.1 (example4_1x.m)
  - C.2.5 MATLAB File for Example 4.2 (example4_2.m)
  - C.2.6 MATLAB File for Example 4.2 (example4_2p.m)
  - C.2.7 MATLAB File for Example 4.2 (example4_2g.m)
  - C.2.8 MATLAB File for Example 4.2 (example4_2x.m)
  - C.3 MATLAB© for Matrix Difference Riccati Equation
  - C.3.1 MATLAB File lqrdnss.m
  - C.4 MATLAB© for Discrete-Time Tracking System
  - References
  - Index

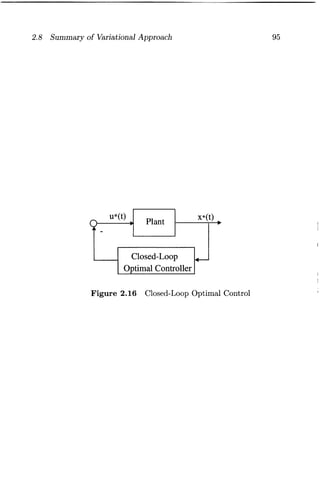

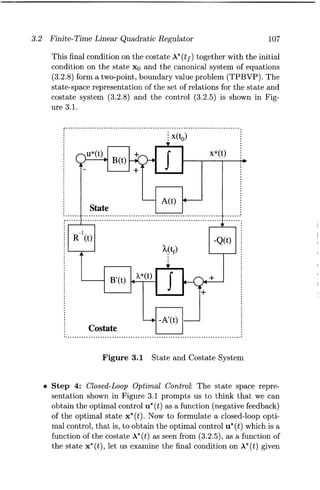

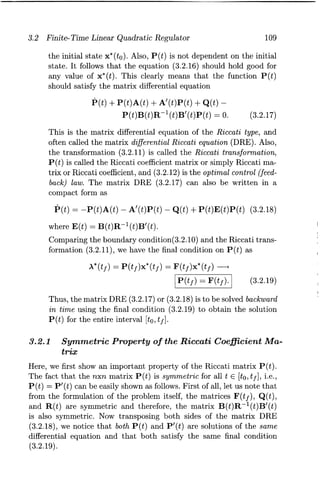

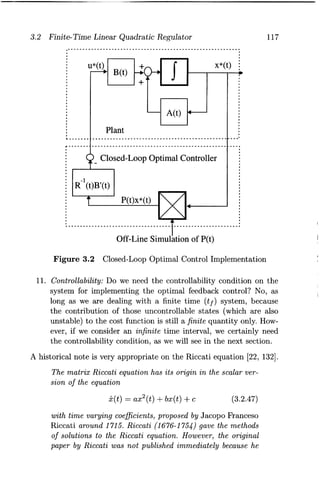

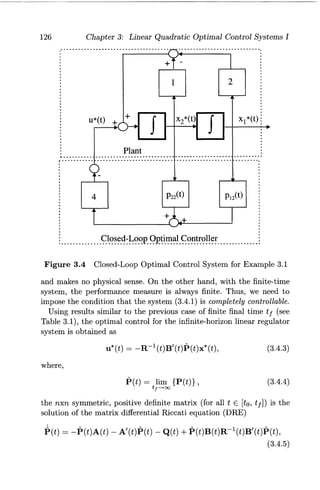

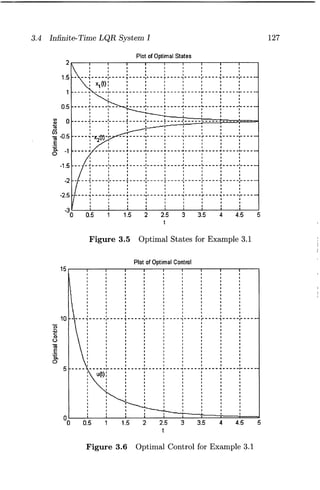

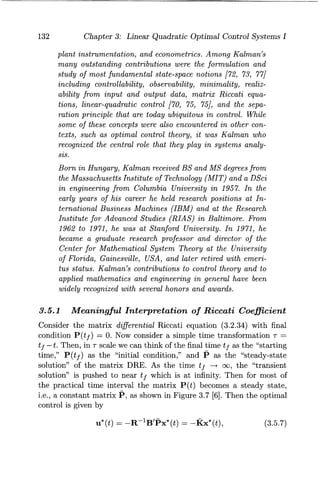

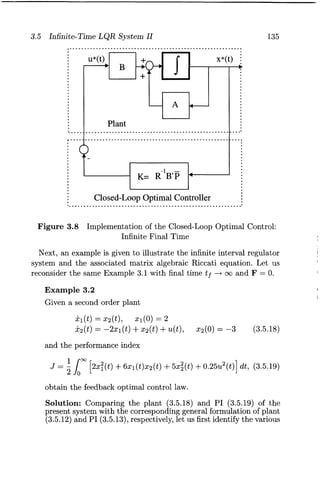

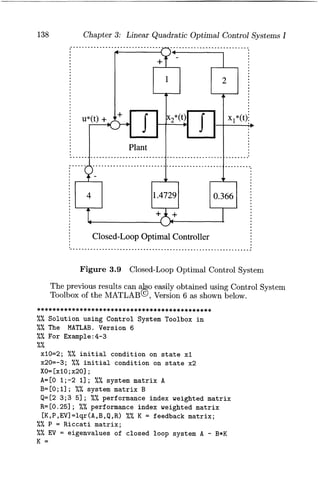

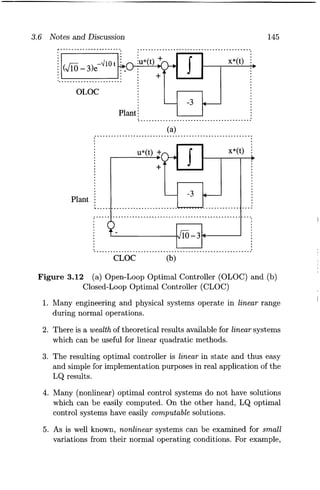

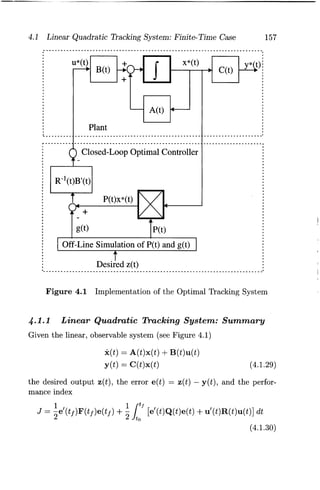

- 19. List of Figures
  - 1.1 Classical Control Configuration
  - 1.2 Modern Control Configuration
  - 1.3 Components of a Modern Control System
  - 1.4 Overview of Optimization
  - 1.5 Optimal Control Problem
  - 2.1 Increment Δf, Differential df, and Derivative ḟ of a Function f(t)
  - 2.2 Increment ΔJ and the First Variation δJ of the Functional J
  - 2.3 (a) Minimum and (b) Maximum of a Function f(t)
  - 2.4 Fixed-End Time and Fixed-End State System
  - 2.5 A Nonzero g(t) and an Arbitrary δx(t)
  - 2.6 Arc Length
  - 2.7 Free-Final Time and Free-Final State System
  - 2.8 Final-Point Condition with a Moving Boundary B(t)
  - 2.9 Different Types of Systems: (a) Fixed-Final Time and Fixed-Final State System, (b) Free-Final Time and Fixed-Final State System, (c) Fixed-Final Time and Free-Final State System, (d) Free-Final Time and Free-Final State System
  - 2.10 Optimal Controller for Example 2.12
  - 2.11 Optimal Control and States for Example 2.12
  - 2.12 Optimal Control and States for Example 2.13
  - 2.13 Optimal Control and States for Example 2.14
  - 2.14 Optimal Control and States for Example 2.15
  - 2.15 Open-Loop Optimal Control
  - 2.16 Closed-Loop Optimal Control
  - 3.1 State and Costate System
  - 3.2 Closed-Loop Optimal Control Implementation

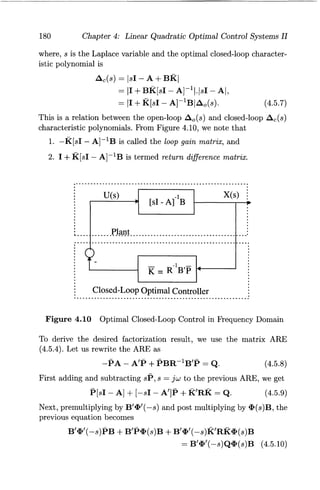

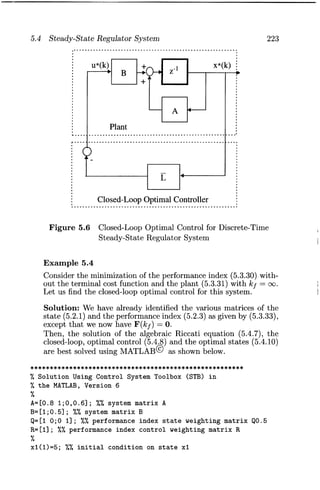

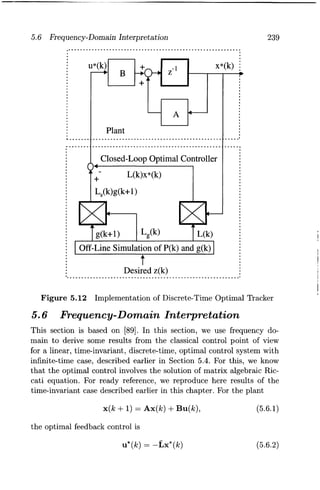

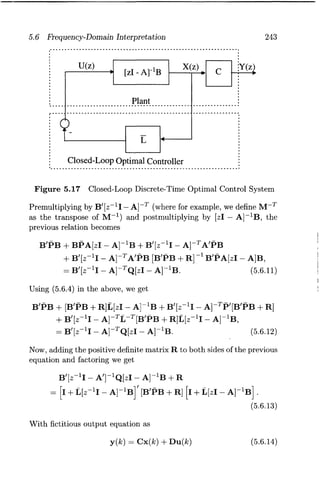

- 20. List of Figures (continued)
  - 3.3 Riccati Coefficients for Example 3.1
  - 3.4 Closed-Loop Optimal Control System for Example 3.1
  - 3.5 Optimal States for Example 3.1
  - 3.6 Optimal Control for Example 3.1
  - 3.7 Interpretation of the Constant Matrix P
  - 3.8 Implementation of the Closed-Loop Optimal Control: Infinite Final Time
  - 3.9 Closed-Loop Optimal Control System
  - 3.10 Optimal States for Example 3.2
  - 3.11 Optimal Control for Example 3.2
  - 3.12 (a) Open-Loop Optimal Controller (OLOC) and (b) Closed-Loop Optimal Controller (CLOC)
  - 4.1 Implementation of the Optimal Tracking System
  - 4.2 Riccati Coefficients for Example 4.1
  - 4.3 Coefficients g₁(t) and g₂(t) for Example 4.1
  - 4.4 Optimal States for Example 4.1
  - 4.5 Optimal Control for Example 4.1
  - 4.6 Riccati Coefficients for Example 4.2
  - 4.7 Coefficients g₁(t) and g₂(t) for Example 4.2
  - 4.8 Optimal Control and States for Example 4.2
  - 4.9 Optimal Control and States for Example 4.2
  - 4.10 Optimal Closed-Loop Control in Frequency Domain
  - 4.11 Closed-Loop Optimal Control System with Unity Feedback
  - 4.12 Nyquist Plot of Go(jω)
  - 4.13 Intersection of Unit Circles Centered at Origin and -1 + j0
  - 5.1 State and Costate System
  - 5.2 Closed-Loop Optimal Controller for Linear Discrete-Time Regulator
  - 5.3 Riccati Coefficients for Example 5.3
  - 5.4 Optimal Control and States for Example 5.3
  - 5.5 Optimal Control and States for Example 5.3
  - 5.6 Closed-Loop Optimal Control for Discrete-Time Steady-State Regulator System
  - 5.7 Implementation of Optimal Control for Example 5.4
  - 5.8 Implementation of Optimal Control for Example 5.4
  - 5.9 Riccati Coefficients for Example 5.5

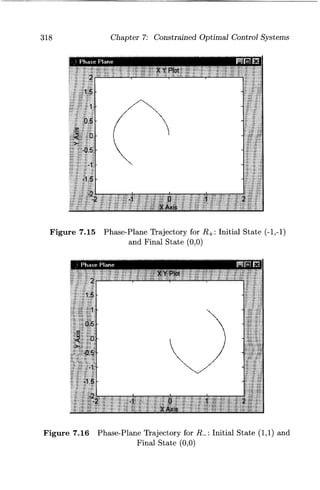

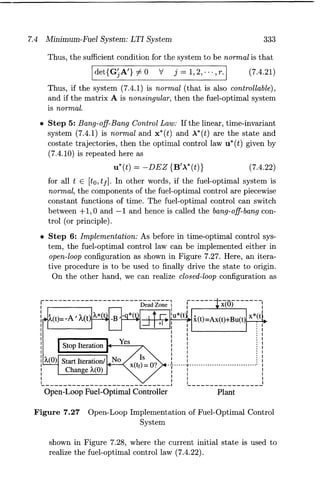

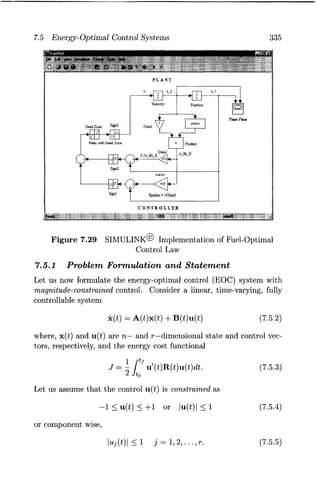

- 21. List of Figures (continued)
  - 5.10 Optimal States for Example 5.5
  - 5.11 Optimal Control for Example 5.5
  - 5.12 Implementation of Discrete-Time Optimal Tracker
  - 5.13 Riccati Coefficients for Example 5.6
  - 5.14 Coefficients g₁(t) and g₂(t) for Example 5.6
  - 5.15 Optimal States for Example 5.6
  - 5.16 Optimal Control for Example 5.6
  - 5.17 Closed-Loop Discrete-Time Optimal Control System
  - 6.1 (a) An Optimal Control Function Constrained by a Boundary (b) A Control Variation for Which -δu(t) Is Not Admissible
  - 6.2 Illustration of Constrained (Admissible) Controls
  - 6.3 Optimal Path from A to B
  - 6.4 A Multistage Decision Process
  - 6.5 A Multistage Decision Process: Backward Solution
  - 6.6 A Multistage Decision Process: Forward Solution
  - 6.7 Dynamic Programming Framework of Optimal State Feedback Control
  - 6.8 Optimal Path from A to B
  - 7.1 Signum Function
  - 7.2 Time-Optimal Control
  - 7.3 Normal Time-Optimal Control System
  - 7.4 Singular Time-Optimal Control System
  - 7.5 Open-Loop Structure for Time-Optimal Control System
  - 7.6 Closed-Loop Structure for Time-Optimal Control System
  - 7.7 Possible Costates and the Corresponding Controls
  - 7.8 Phase Plane Trajectories for u = +1 (dashed lines) and u = -1 (dotted lines)
  - 7.9 Switch Curve for Double Integral Time-Optimal Control System
  - 7.10 Various Trajectories Generated by Four Possible Control Sequences
  - 7.11 Closed-Loop Implementation of Time-Optimal Control Law
  - 7.12 SIMULINK© Implementation of Time-Optimal Control Law
  - 7.13 Phase-Plane Trajectory for γ+: Initial State (2,-2) and Final State (0,0)

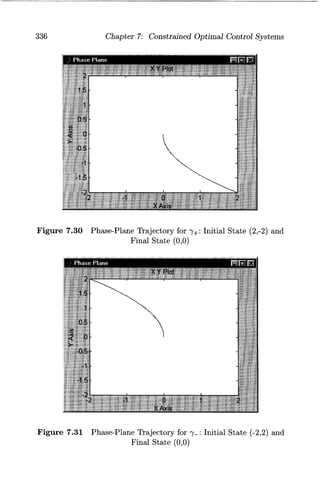

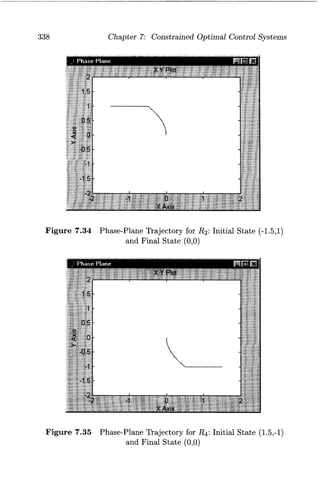

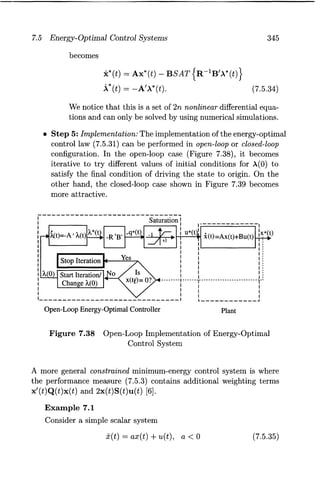

- 22. List of Figures (continued)
  - 7.14 Phase-Plane Trajectory for γ-: Initial State (-2,2) and Final State (0,0)
  - 7.15 Phase-Plane Trajectory for R+: Initial State (-1,-1) and Final State (0,0)
  - 7.16 Phase-Plane Trajectory for R-: Initial State (1,1) and Final State (0,0)
  - 7.17 Relations Between λ₂(t) and |u*(t)| + u*(t)λ₂(t)
  - 7.18 Dead-Zone Function
  - 7.19 Fuel-Optimal Control
  - 7.20 Switching Curve for a Double Integral Fuel-Optimal Control System
  - 7.21 Phase-Plane Trajectories for u(t) = 0
  - 7.22 Fuel-Optimal Control Sequences
  - 7.23 ε-Fuel-Optimal Control
  - 7.24 Optimal Control as Dead-Zone Function
  - 7.25 Normal Fuel-Optimal Control System
  - 7.26 Singular Fuel-Optimal Control System
  - 7.27 Open-Loop Implementation of Fuel-Optimal Control System
  - 7.28 Closed-Loop Implementation of Fuel-Optimal Control System
  - 7.29 SIMULINK© Implementation of Fuel-Optimal Control Law
  - 7.30 Phase-Plane Trajectory for γ+: Initial State (2,-2) and Final State (0,0)
  - 7.31 Phase-Plane Trajectory for γ-: Initial State (-2,2) and Final State (0,0)
  - 7.32 Phase-Plane Trajectory for R1: Initial State (1,1) and Final State (0,0)
  - 7.33 Phase-Plane Trajectory for R3: Initial State (-1,-1) and Final State (0,0)
  - 7.34 Phase-Plane Trajectory for R2: Initial State (-1.5,1) and Final State (0,0)
  - 7.35 Phase-Plane Trajectory for R4: Initial State (1.5,-1) and Final State (0,0)
  - 7.36 Saturation Function
  - 7.37 Energy-Optimal Control
  - 7.38 Open-Loop Implementation of Energy-Optimal Control System

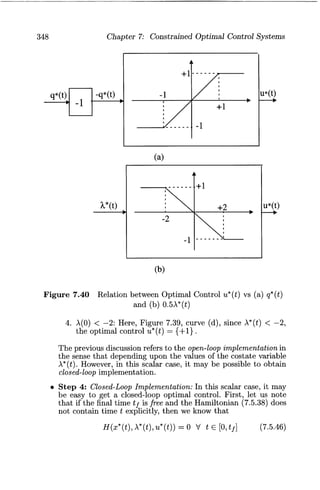

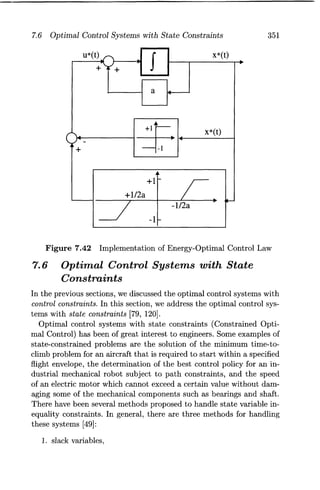

- 23. List of Figures (continued)
  - 7.39 Closed-Loop Implementation of Energy-Optimal Control System
  - 7.40 Relation between Optimal Control u*(t) vs (a) q*(t) and (b) 0.5λ*(t)
  - 7.41 Possible Solutions of Optimal Costate λ*(t)
  - 7.42 Implementation of Energy-Optimal Control Law
  - 7.43 Relation between Optimal Control u*(t) and Optimal Costate λ₂(t)

List of Tables

- 2.1 Procedure Summary of Pontryagin Principle for Bolza Problem
- 3.1 Procedure Summary of Finite-Time Linear Quadratic Regulator System: Time-Varying Case
- 3.2 Procedure Summary of Infinite-Time Linear Quadratic Regulator System: Time-Varying Case
- 3.3 Procedure Summary of Infinite-Interval Linear Quadratic Regulator System: Time-Invariant Case
- 4.1 Procedure Summary of Linear Quadratic Tracking System
- 4.2 Procedure Summary of Regulator System with Prescribed Degree of Stability
- 5.1 Procedure Summary of Discrete-Time Optimal Control System: Fixed-End Points Condition
- 5.2 Procedure Summary for Discrete-Time Optimal Control System: Free-Final Point Condition
- 5.3 Procedure Summary of Discrete-Time, Linear Quadratic Regulator System
- 5.4 Procedure Summary of Discrete-Time, Linear Quadratic Regulator System: Steady-State Condition
- 5.5 Procedure Summary of Discrete-Time Linear Quadratic Tracking System
- 6.1 Summary of Pontryagin Minimum Principle
- 6.2 Computation of Cost during the Last Stage k = 2
- 6.3 Computation of Cost during the Stage k = 1,0
- 6.4 Procedure Summary of Hamilton-Jacobi-Bellman (HJB) Approach

- 7.1 Procedure Summary of Optimal Control Systems with State Constraints

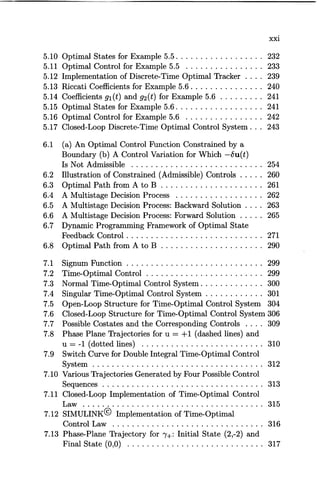

Chapter 1 Introduction

In this first chapter, we introduce the ideas behind optimization and optimal control and give a brief history of the calculus of variations and optimal control. We also present a brief summary of the chapter contents.

1.1 Classical and Modern Control

Classical (conventional) control theory deals with single-input, single-output (SISO) systems. It rests mainly on Laplace transform theory and on the use of that theory to represent systems in block diagram form.

Figure 1.1 Classical Control Configuration (reference input R(s), error signal E(s), compensator G_c(s), control input U(s), plant G_p(s), output Y(s), feedback H(s))

From Figure 1.1, we see that

$$\frac{Y(s)}{R(s)} = \frac{G(s)}{1 + G(s)H(s)} \tag{1.1.1}$$

where $s$ is the Laplace variable and we used

$$G(s) = G_c(s)\,G_p(s). \tag{1.1.2}$$

Note that

1. the input $u(t)$ to the plant is determined by the error $e(t)$ and the compensator, and
2. not all the variables are readily available for feedback; in most cases, only one output variable is available.

Modern control theory deals with multiple-input, multiple-output (MIMO) systems. It is based on a state variable representation, that is, a set of first-order differential (or difference) equations. Here, the system (plant) is characterized by state variables, for example in linear, time-invariant form as

$$\dot{x}(t) = A x(t) + B u(t) \tag{1.1.3}$$

$$y(t) = C x(t) + D u(t) \tag{1.1.4}$$

where the dot denotes differentiation with respect to (w.r.t.) $t$. The vectors $x(t)$, $u(t)$, and $y(t)$ are the $n$-, $r$-, and $m$-dimensional state, control, and output vectors, respectively. The matrices are $A$, the $n\times n$ state matrix; $B$, the $n\times r$ input matrix; $C$, the $m\times n$ output matrix; and $D$, the $m\times r$ transfer (feedthrough) matrix. Similarly, a nonlinear system is characterized by

$$\dot{x}(t) = f(x(t), u(t), t) \tag{1.1.5}$$

$$y(t) = g(x(t), u(t), t). \tag{1.1.6}$$

Modern theory requires that all the state variables be fed back after suitable weighting. We see from Figure 1.2 that, in the modern control configuration,

1. the input $u(t)$ is determined by the controller (consisting of an error detector and a compensator), which is driven by the system states $x(t)$ and the reference signal $r(t)$,
2. all or most of the state variables are available for control, and
3. the approach depends on well-established matrix theory, which is amenable to large-scale computer simulation.
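The classical relations (1.1.1) and (1.1.2) can be evaluated numerically at any point $s$. The sketch below assumes a hypothetical loop with a proportional compensator $G_c(s) = 2$, a first-order plant $G_p(s) = 1/(s+1)$, and unity feedback $H(s) = 1$. For that loop, (1.1.1) reduces by hand to $2/(s+3)$. The helper name `closed_loop` is illustrative only.

```python
def closed_loop(gc, gp, h):
    """Return a function s -> Y(s)/R(s) for the classical loop of Figure 1.1.

    gc, gp, h are callables giving Gc(s), Gp(s), H(s) at a (complex) point s.
    """
    def t(s):
        g = gc(s) * gp(s)          # forward-path transfer function, (1.1.2)
        return g / (1 + g * h(s))  # closed-loop transfer function, (1.1.1)
    return t


# Hypothetical loop: proportional compensator, first-order plant, unity feedback.
T_cl = closed_loop(lambda s: 2.0, lambda s: 1.0 / (s + 1.0), lambda s: 1.0)
print(T_cl(0.0))  # DC gain, equal to 2/3 up to rounding
print(T_cl(1j))   # equal to 2/(3 + 1j) up to rounding
```

Representing each block as a callable keeps the sketch close to the block diagram. For polynomial or symbolic work, a dedicated control library would be a more natural choice.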

Figure 1.2 Modern Control Configuration (reference input r(t), controller C, control input u(t), plant P, state x(t), output y(t))

A state variable representation uniquely specifies the transfer function. A given transfer function, however, admits many state variable representations. The state variable representation is therefore a more complete description of a system.

Figure 1.3 shows the three components of a modern control system and their main contributors. The first stage of any control system theory is to obtain the system dynamics, that is, a model in terms of dynamical equations such as differential or difference equations. The system dynamics is largely based on the Lagrangian function. Next, the system is analyzed for its performance, mainly to determine its stability; Lyapunov's contributions to stability theory are well known here. Finally, if the system performance does not meet our specifications, we resort to design [25, 109]. In optimal control theory, the design is usually carried out with respect to a performance index. Concepts such as the Lagrange function [85] and the V function of Lyapunov [94] are old, but the techniques that use them are modern. The word modern, however, is relative to time, and what is modern today becomes dated after a few years. It is therefore more appropriate to use labels such as optimal control, nonlinear control, adaptive control, robust control, and so on.
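The claim that a state model fixes the transfer function, while one transfer function has many state models, can be checked numerically. For the model (1.1.3)-(1.1.4), the transfer function is given by the standard formula $G(s) = C(sI - A)^{-1}B + D$.

The sketch below makes several assumptions chosen only for illustration. It takes the hypothetical transfer function $1/((s+1)(s+2))$ and writes it in controllable canonical form. It then changes state variables with $\bar{x} = Tx$, using an arbitrarily chosen invertible $T$, and evaluates both realizations at the same points $s$. All helper names are illustrative. Everything is 2×2, so the inverse is written out by hand to stay within the standard library.

```python
def mat2_mul(X, Y):
    """Product X @ Y of two 2x2 matrices stored as nested lists."""
    return [[X[i][0] * Y[0][j] + X[i][1] * Y[1][j] for j in range(2)] for i in range(2)]


def mat2_inv(X):
    """Inverse of a 2x2 matrix via the adjugate formula."""
    det = X[0][0] * X[1][1] - X[0][1] * X[1][0]
    return [[X[1][1] / det, -X[0][1] / det],
            [-X[1][0] / det, X[0][0] / det]]


def transfer_function(A, B, C, D, s):
    """G(s) = C (sI - A)^{-1} B + D for a 2-state SISO model.

    B is the input column and C the output row, both stored as 2-element lists.
    """
    M = mat2_inv([[s - A[0][0], -A[0][1]], [-A[1][0], s - A[1][1]]])
    v = [M[0][0] * B[0] + M[0][1] * B[1], M[1][0] * B[0] + M[1][1] * B[1]]
    return C[0] * v[0] + C[1] * v[1] + D


# Realization 1: controllable canonical form of 1/(s^2 + 3s + 2).
A1 = [[0.0, 1.0], [-2.0, -3.0]]
B1 = [0.0, 1.0]
C1 = [1.0, 0.0]

# Realization 2: change of state variables x_bar = T x with an arbitrary invertible T.
T = [[1.0, 1.0], [0.0, 1.0]]
T_inv = mat2_inv(T)
A2 = mat2_mul(mat2_mul(T, A1), T_inv)                  # T A T^{-1}
B2 = [T[0][0] * B1[0] + T[0][1] * B1[1],
      T[1][0] * B1[0] + T[1][1] * B1[1]]               # T B
C2 = [C1[0] * T_inv[0][0] + C1[1] * T_inv[1][0],
      C1[0] * T_inv[0][1] + C1[1] * T_inv[1][1]]       # C T^{-1}

for s in (0.5, 1j, 2 + 3j):
    g1 = transfer_function(A1, B1, C1, 0.0, s)
    g2 = transfer_function(A2, B2, C2, 0.0, s)
    print(s, g1, g2)  # g1 and g2 agree up to floating-point rounding
```

The two state matrices differ ($A_2 = [[-2, 0], [-2, -1]]$ versus $A_1$), yet the printed transfer-function values coincide. This is the non-uniqueness described above.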

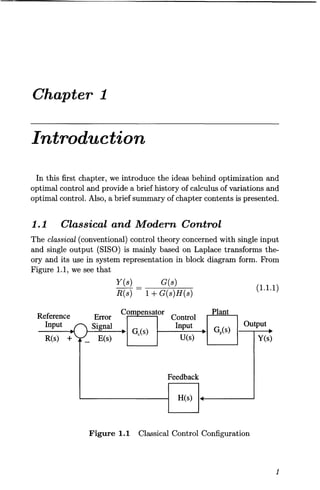

Figure 1.3 Components of a Modern Control System: system dynamics (modeling), state function of Lagrange (1788); system analysis (performance), V function of Lyapunov (1892); system synthesis (design), H function of Pontryagin (1956)

1.2 Optimization

Optimization is a very desirable feature in day-to-day life. We like to work and use our time in an optimum manner, use resources optimally, and so on. Optimization is a very general subject and can be viewed in different ways, depending on:

- the approach (algebraic or geometric),
- the interest (single or multiple),
- the nature of the signals (deterministic or stochastic), and
- the stage (single or multiple).

This is shown in Figure 1.4. The calculus of variations is only a small area within the broad field of optimization, yet it forms the basis of our study of optimal control systems. Further, optimization can be classified as static optimization and dynamic optimization.

1. Static optimization is concerned with controlling a plant under steady-state conditions, i.e., the system variables are not changing with respect to time. The plant is then described by algebraic equations. Techniques used are ordinary calculus, Lagrange multipliers, and linear and nonlinear programming.
2. Dynamic optimization is concerned with the optimal control of plants under dynamic conditions, i.e., the system variables are changing with respect to time, so time appears in the system description. The plant is then described by differential (or difference) equations. Techniques used are search techniques, dynamic programming, variational calculus (or calculus of variations), and the Pontryagin principle.

Figure 1.4 Overview of Optimization (labels include: algebraic approach; calculus and Lagrange multipliers; linear and nonlinear programming; functional analysis; multiple interest, game theory; stochastic; multiple stage, dynamic programming)

1.3 Optimal Control

The main objective of optimal control is to determine control signals that make a process (plant) satisfy some physical constraints while extremizing (maximizing or minimizing) a chosen performance criterion (performance index or cost function). Referring to Figure 1.2, we seek the optimal control $u^*(t)$ (* indicates the optimal condition). This control must drive the plant P from the initial state to the final state, subject to constraints on controls and states, while extremizing the given performance index $J$. The formulation of the optimal control problem requires

1. a mathematical description (or model) of the process to be controlled (generally in state variable form),
2. a specification of the performance index, and
3. a statement of the boundary conditions and of the physical constraints on the states and/or controls.

1.3.1 Plant

For the purpose of optimization, we describe a physical plant by a set of linear or nonlinear differential or difference equations. For example, a linear time-invariant system is described by the state and output relations (1.1.3) and (1.1.4), and a nonlinear system by (1.1.5) and (1.1.6).

1.3.2 Performance Index

Classical control design techniques have been applied successfully to linear, time-invariant, single-input, single-output (SISO) systems. Typical performance criteria fall into two groups. The first is the system time response to a step or ramp input, characterized by rise time, settling time, peak overshoot, and steady-state accuracy. The second is the frequency response of the system, characterized by gain margin, phase margin, and bandwidth.

In modern control theory, the optimal control problem is to find a control that causes the dynamical system to reach a target or follow a state variable (or trajectory) while extremizing a performance index. The performance index may take several forms, as described below.

1. Performance Index for Time-Optimal Control System: We try to transfer a system from an arbitrary initial state $x(t_0)$ to a specified final state $x(t_f)$ in minimum time. The corresponding performance index (PI) is
   $$J = \int_{t_0}^{t_f} dt = t_f - t_0 = t^*. \tag{1.3.1}$$
2. Performance Index for Fuel-Optimal Control System: Consider a spacecraft problem. Let $u(t)$ be the thrust of a rocket engine, and assume that the magnitude $|u(t)|$ of the thrust is proportional to the rate of fuel consumption. To minimize the total expenditure of fuel, we may formulate the performance index as
   $$J = \int_{t_0}^{t_f} |u(t)|\,dt. \tag{1.3.2}$$
   For several controls, we may write it as
   $$J = \int_{t_0}^{t_f} \sum_{i=1}^{m} R_i\,|u_i(t)|\,dt \tag{1.3.3}$$
   where $R_i$ is a weighting factor.
3. Performance Index for Minimum-Energy Control System: Consider $u_i(t)$ as the current in the $i$th loop of an electric network. Then $\sum_{i=1}^{m} u_i^2(t)\,r_i$, where $r_i$ is the resistance of the $i$th loop, is the total power, or total rate of energy expenditure, of the network. To minimize the total expended energy, we then have the performance criterion
   $$J = \int_{t_0}^{t_f} \sum_{i=1}^{m} u_i^2(t)\,r_i\,dt \tag{1.3.4}$$
   or, in general,
   $$J = \int_{t_0}^{t_f} u'(t)\,R\,u(t)\,dt \tag{1.3.5}$$

   where $R$ is a positive definite matrix, and the prime (') denotes transpose here and throughout this book (see Appendix A for more details on definite matrices). Similarly, we can minimize the integral of the squared error of a tracking system. We then have
   $$J = \int_{t_0}^{t_f} x'(t)\,Q\,x(t)\,dt \tag{1.3.6}$$
   where $x_d(t)$ is the desired value, $x_a(t)$ is the actual value, and $x(t) = x_a(t) - x_d(t)$ is the error. Here, $Q$ is a weighting matrix, which can be positive semidefinite.
4. Performance Index for Terminal Control System: In a terminal target problem, we want to minimize the error between the desired target position $x_d(t_f)$ and the actual target position $x_a(t_f)$ at the end of the maneuver, i.e., at the final time $t_f$. The terminal (final) error is $x(t_f) = x_a(t_f) - x_d(t_f)$. To account for both positive and negative values of the error, and to allow weighting factors, we structure the cost function as
   $$J = x'(t_f)\,F\,x(t_f) \tag{1.3.7}$$
   which is also called the terminal cost function. Here, $F$ is a positive semidefinite matrix.
5. Performance Index for General Optimal Control System: Combining the above formulations, we have a performance index in general form as
   $$J = x'(t_f)\,F\,x(t_f) + \int_{t_0}^{t_f} \left[ x'(t)\,Q\,x(t) + u'(t)\,R\,u(t) \right] dt \tag{1.3.8}$$
   or
   $$J = S(x(t_f), t_f) + \int_{t_0}^{t_f} V(x(t), u(t), t)\,dt \tag{1.3.9}$$
   where $R$ is a positive definite matrix, and $Q$ and $F$ are positive semidefinite matrices. Note that the matrices $Q$ and $R$ may be time varying. The performance index (1.3.8) is called quadratic because it is a quadratic form in the states and controls.
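To make these performance indices concrete, the sketch below evaluates (1.3.1), (1.3.2), (1.3.5), and the quadratic index (1.3.8) on trajectories sampled at discrete times. Several assumptions apply:

- The sampled signals $x(t) = [e^{-t}, -e^{-t}]'$ and $u(t) = -e^{-t}$ on $[0, 1]$ are hypothetical.
- The weights $Q$, $R$, and $F$ are hypothetical.
- Approximating the integrals with the trapezoidal rule is a simplifying choice, not a method prescribed by the text.

In an actual design, $x(t)$ would come from solving the plant equations for the chosen $u(t)$. The helpers `trapezoid` and `quad_form` are illustrative names.

```python
import math


def trapezoid(values, times):
    """Trapezoidal approximation of the integral of sampled values over sampled times."""
    return sum(0.5 * (values[k] + values[k + 1]) * (times[k + 1] - times[k])
               for k in range(len(times) - 1))


def quad_form(v, M):
    """Return the quadratic form v' M v for a vector v and a square matrix M (nested lists)."""
    n = len(v)
    return sum(v[i] * M[i][j] * v[j] for i in range(n) for j in range(n))


# Hypothetical sampled trajectory on [t0, tf] = [0, 1].
t0, tf, N = 0.0, 1.0, 1001
ts = [t0 + (tf - t0) * k / (N - 1) for k in range(N)]
xs = [[math.exp(-t), -math.exp(-t)] for t in ts]  # 2-dimensional state (illustrative)
us = [[-math.exp(-t)] for t in ts]                # single control input (illustrative)

Q = [[1.0, 0.0], [0.0, 0.0]]  # positive semidefinite state weight
R = [[2.0]]                   # positive definite control weight
F = [[1.0, 0.0], [0.0, 1.0]]  # positive semidefinite terminal weight

J_time = tf - t0                                         # (1.3.1)
J_fuel = trapezoid([abs(u[0]) for u in us], ts)          # (1.3.2)
J_energy = trapezoid([quad_form(u, R) for u in us], ts)  # (1.3.5)
J_quad = quad_form(xs[-1], F) + trapezoid(
    [quad_form(x, Q) + quad_form(u, R) for x, u in zip(xs, us)], ts)  # (1.3.8)

print(J_time, J_fuel, J_energy, J_quad)
```

For these signals the exact values follow by hand:

- $J_{time} = 1$
- $J_{fuel} = 1 - e^{-1}$
- $J_{energy} = 1 - e^{-2}$
- $J_{quad} = 2e^{-2} + 1.5(1 - e^{-2}) = 1.5 + 0.5e^{-2}$

These give a quick check on the quadrature.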

Problems in optimal control are classified by the structure of the performance index $J$ [67]:

- If the PI (1.3.9) contains only the terminal cost function $S(x(t_f), t_f)$, it is called the Mayer problem.
- If the PI (1.3.9) has only the integral cost term, it is called the Lagrange problem.
- If the PI contains both the terminal cost term and the integral cost term, as in (1.3.9), the problem is of the Bolza type.

Many other forms of cost functions exist, depending on our performance specifications. However, the performance indices above (with quadratic forms) lead to some very elegant results in optimal control systems.

1.3.3 Constraints

The control vector $u(t)$ and the state vector $x(t)$ are either unconstrained or constrained, depending upon the physical situation. The unconstrained problem is less involved and gives rise to some elegant results. In practice, physical considerations often constrain controls and states such as currents and voltages in an electrical circuit, the speed of a motor, or the thrust of a rocket, as

$$U^- \le u(t) \le U^+, \qquad X^- \le x(t) \le X^+ \tag{1.3.10}$$

where the superscripts $+$ and $-$ indicate the maximum and minimum values the variables can attain.

1.3.4 Formal Statement of Optimal Control System

Let us now state the optimal control problem formally, at the risk of repeating some of the previous equations. The optimal control problem is to find the optimal control $u^*(t)$ (* indicates the extremal or optimal value) which causes the linear time-invariant plant (system)

$$\dot{x}(t) = A x(t) + B u(t) \tag{1.3.11}$$

to give the trajectory $x^*(t)$ that optimizes or extremizes (minimizes or maximizes) a performance index

$$J = x'(t_f)\,F\,x(t_f) + \int_{t_0}^{t_f} \left[ x'(t)\,Q\,x(t) + u'(t)\,R\,u(t) \right] dt \tag{1.3.12}$$

or which causes the nonlinear system

$$\dot{x}(t) = f(x(t), u(t), t) \tag{1.3.13}$$

to give the state $x^*(t)$ that optimizes the general performance index

$$J = S(x(t_f), t_f) + \int_{t_0}^{t_f} V(x(t), u(t), t)\,dt \tag{1.3.14}$$

with some constraints on the control variables $u(t)$ and/or the state variables $x(t)$ given by (1.3.10). The final time $t_f$ may be fixed or free, and the final (target) state may be fully or partially fixed or free. The entire problem statement is also shown pictorially in Figure 1.5.

Figure 1.5 Optimal Control Problem (optimal control system composed of plant, cost function, and constraints; sketches of J versus u(t) with the optimal value J* at u*(t) for (a) minimum and (b) maximum)

Thus, we are basically interested in finding the control $u^*(t)$ which, when applied to the plant described by (1.3.11) or (1.3.13), gives an optimal performance index $J^*$ described by (1.3.12) or (1.3.14).

In this book, optimal control systems are studied in three stages.

1. In the first stage, we consider only the performance index of the form (1.3.14) and use the well-known theory of the calculus of variations to obtain optimal functions.
2. In the second stage, we bring in the plant (1.3.11) and address the problem of finding the optimal control $u^*(t)$ which drives the plant and at the same time optimizes the performance index (1.3.12). The same topics are then presented in the discrete-time domain.
3. Finally, the constraints on the controls and states (1.3.10) are considered along with the plant and the performance index to obtain the optimal control.

1.4 Historical Tour

The tour has two stages: first the development of the calculus of variations, and second, optimal control theory [134, 58, 99, 28]¹.

1.4.1 Calculus of Variations

According to a legend [88], the Tyrian princess Dido used a rope made of cowhide, laid out as a circular arc, to enclose the largest possible area on which to found Carthage. The story of the founding of Carthage is fictitious, but it probably inspired a new mathematical discipline: the calculus of variations and its extensions, such as optimal control theory.

The calculus of variations is the branch of mathematics that deals with finding a function which is an extremum (maximum or minimum) of a functional. A functional is loosely defined as a function of a function. The theory of finding maxima and minima of functions is quite old and can be traced back to the isoperimetric problems considered by Greek mathematicians such as Zenodorus (495-435 B.C.) and Pappus (c. 300 A.D.). Here, however, we start with the work of Bernoulli.

In 1696 Johannes (John) Bernoulli (1667-1748) posed the brachistochrone problem: finding the path of quickest descent between two points that do not lie on the same horizontal or vertical line. Galileo (1564-1642) had first considered this problem in 1638. It was solved by John Bernoulli, by his brother Jacob (1654-1705), by Gottfried Leibniz (1646-1716), and anonymously by Isaac Newton (1642-1727).

Leonhard Euler (1707-1783) joined John Bernoulli and made some remarkable contributions. These influenced Joseph-Louis Lagrange (1736-1813), who finally gave an elegant way of solving such problems by the method of (first) variations. This led Euler to coin the phrase calculus of variations. This necessary condition for extrema of a functional later became known as the Euler-Lagrange equation. Lagrange went on to treat variable end-point problems, introducing the multiplier method. That method, the Lagrange (or Euler-Lagrange) multiplier method, later became one of the most powerful tools in optimization.

Adrien-Marie Legendre (1752-1833) gave sufficient conditions for the extrema of functionals in 1786 by additionally considering the second variation. In 1836, Carl Gustav Jacob Jacobi (1804-1851) gave a more rigorous analysis of these sufficient conditions, later termed the Legendre-Jacobi condition.

At about the same time, Sir William Rowan Hamilton (1805-1865) did some remarkable work on mechanics. He showed that the motion of a particle in space, acted upon by various external forces, could be represented by a single function satisfying two first-order partial differential equations. In 1838 Jacobi raised some objections to this work and showed that only one partial differential equation is needed. This equation, called the Hamilton-Jacobi equation, later had a profound influence on the calculus of variations, dynamic programming, and optimal control, as well as on mechanics.

Karl Weierstrass (1815-1897) addressed the distinction between strong and weak extrema. He introduced the idea of the field of extremals and gave the Weierstrass condition, together with sufficient conditions for weak and strong extrema. Rudolph Clebsch (1833-1872) and Adolph Mayer went on to establish conditions for the more general class of problems.

¹The permission given by Springer-Verlag for H. H. Goldstine, A History of the Calculus of Variations, Springer-Verlag, New York, NY, 1980, is hereby acknowledged.
Clebsch formulated a problem in the calculus of variations by adjoining the constraint conditions in the form of differential equations, and he provided a condition based on the second variation. In 1868 Mayer reconsidered Clebsch's work and gave some elegant results for the general problem in the calculus of variations. Later, Mayer described the problem of Lagrange in detail in 1878, and the problem of Mayer in 1895.

In 1898, Adolf Kneser gave a new approach to the calculus of variations using the result of Karl Gauss (1777-1855) on geodesics. For variable end-point problems, he established the transversality condition, which includes orthogonality as a special case. Together with Oskar Bolza (1857-1942), he gave sufficiency proofs for these problems.

In 1900, David Hilbert (1862-1943) showed that the second variation is a quadratic functional with eigenvalues and eigenfunctions. Between 1908 and 1910, Gilbert Bliss (1876-1951) [23] and Max Mason studied the results of Kneser in depth. In 1913, Bolza formulated the problem of Bolza as a generalization of the problems of Lagrange and Mayer, and Bliss showed that these three problems are equivalent. Other notable contributions to the calculus of variations were made by E. J. McShane (1904-1989) [98], M. R. Hestenes [65], H. H. Goldstine, and others.

A large number of books have been written on the calculus of variations: Bliss (1946) [23], Cicala (1957) [37], Akhiezer (1962) [1], Elsgolts (1962) [47], Gelfand and Fomin (1963) [55], Dreyfus (1966) [45], Forray (1968) [50], Balakrishnan (1969) [8], Young (1969) [146], Elsgolts (1970) [46], Bolza (1973) [26], Smith (1974) [126], Weinstock (1974) [143], Krasnov et al. (1975) [81], Leitmann (1981) [88], Ewing (1985) [48], Kamien and Schwartz (1991) [78], Gregory and Lin (1992) [61], Sagan (1992) [118], Pinch (1993) [108], Wan (1994) [141], Giaquinta and Hildebrandt (1995) [56, 57], Troutman (1996) [136], and Milyutin and Osmolovskii (1998) [103].

1.4.2 Optimal Control Theory

The linear quadratic control problem has its origins in N. Wiener's celebrated work on mean-square filtering for weapon fire control during World War II (1940-45) [144, 145]. Wiener solved the problem of designing filters that minimize a mean-square-error criterion (performance measure) of the form

$$J = E\{e^2(t)\} \tag{1.4.1}$$

where $e(t)$ is the error and $E\{x\}$ represents the expected value of the random variable $x$. For a deterministic case, this error criterion is generalized to an integral quadratic term,

$$J = \int_{0}^{\infty} e'(t)\,Q\,e(t)\,dt \tag{1.4.2}$$

where $Q$ is some positive definite matrix.

R. Bellman in 1957 [12] introduced the technique of dynamic programming to solve discrete-time optimal control problems. However, the most important contribution to optimal control systems was made in 1956 [25] by L. S. Pontryagin (of the former Union of Soviet Socialist Republics (USSR)) and his associates. They developed his celebrated maximum principle, described in detail in their book [109]. See also the very interesting article on the "discovery of the Maximum Principle" by R. V. Gamkrelidze [52], one of the authors of the original book [109].

Around the same time in the United States, R. E. Kalman in 1960 [70] provided linear quadratic regulator (LQR) and linear quadratic Gaussian (LQG) theory for designing optimal feedback controls. He went on to develop optimal filtering and estimation theory, leading to his famous discrete Kalman filter [71] and, with Bucy, the continuous Kalman filter [76]. Kalman had a profound effect on optimal control theory. The Kalman filter is one of the most widely used techniques in applying control theory to real-world problems in a variety of fields.

At this point we must mention the matrix Riccati equation, which appears in all the Kalman filtering techniques and in many other fields. C. J. Riccati [114, 22] published his result on the solution of some types of nonlinear differential equations in 1724. He could not have known that the Riccati equation would become so famous more than two centuries later! Thus optimal control, rooted in the calculus of variations developed during the 17th and 18th centuries, was really born over 300 years ago [132]. For additional details about the historical perspectives on the calculus of variations and optimal control, the reader is referred to some excellent publications [58, 99, 28, 21, 132].

In so-called linear quadratic control, the term "linear" refers to the plant being linear. The term "quadratic" refers to a performance index involving the square (quadratic) of an error and/or a control. Originally, this problem was called the mean-square control problem, and the term "linear quadratic" did not appear in the literature until the late 1950s.
Broadly, classical control theory works in the frequency domain and deals with single-input, single-output (SISO) systems. Modern control theory works in the time domain and handles both SISO and multi-input, multi-output (MIMO) systems. Modern control, and hence optimal control, appeared very attractive, but it lacked a very important feature: robustness. That is, controllers designed with LQR theory failed to be robust to measurement noise, external disturbances, and unmodeled dynamics. Frequency domain techniques, on the other hand, offer robustness in a natural way through the ideas of gain margin and phase margin. Some researchers [115, 95], especially in the United Kingdom, therefore continued to develop frequency domain approaches to MIMO systems.

One important and relevant field developed around the 1980s is H∞-optimal control theory. In this framework, the work of the 1960s and 1970s is labeled H2-optimal control theory. G. Zames [148] laid the seeds of H∞-optimal control theory. He formulated the optimal H∞-sensitivity design problem for SISO systems and solved it using optimal Nevanlinna-Pick interpolation theory. An important publication in this field came from four active researchers, Doyle, Glover, Khargonekar, and Francis [44], who won the 1991 W. R. G. Baker Award for the best IEEE Transactions paper. There are many other works in the field of H∞ control [51, 96, 43, 128, 7, 60, 131, 150, 39, 34].

1.5 About This Book

This book on optimal control systems is based on the author's lecture notes for a graduate-level course on the subject. The author was most influenced by Athans and Falb [6], Schultz and Melsa [121], Sage [119], Kirk [79], Sage and White [120], Anderson and Moore [3], and Lewis and Syrmos [91], and the footprints of these works can be found in the present book.

A good number of books on optimal control were published during the era of the "glory of modern control" (Leitmann (1964) [87], Tou (1964) [135], Athans and Falb (1966) [6], Dreyfus (1966) [45], Lee and Markus (1967) [86], Petrov (1968) [106], Sage (1968) [119], Citron (1969) [38], Luenberger (1969) [93], Pierre (1969) [107], Pun (1969) [110], Young (1969) [146], Kirk (1970) [79], Boltyanskii [24], Kwakernaak and Sivan (1972) [84], Warga (1972) [142], Berkovitz (1974) [17], Bryson and Ho (1975) [30], Sage and White (1977) [120], Leitmann (1981) [88], Ryan (1982) [116]).

Interest has since been renewed, with a second wave of books published in recent years (Lewis (1986) [89], Stengel (1986) [127], Christensen et al. (1987) [36], Anderson and Moore (1990) [3], Hocking (1991) [66], Teo et al. (1991) [133], Gregory and Lin (1992) [61], Lewis (1992) [90], Pinch (1993) [108], Dorato et al. (1995) [42], Lewis and Syrmos (1995) [91], Saberi et al. (1995) [117], Sima (1996) [124], Siouris [125], Troutman (1996) [136], Bardi and Dolcetta (1997) [9], Vincent and Grantham (1997) [139], Milyutin and Osmolovskii (1998) [103], Bryson (1999) [29], Burl [32], Kolosov (1999) [80], Pytlak (1999) [111], Vinter (2000) [140], Zelikin (2000) [149], Betts (2001) [20], and Locatelli (2001) [92]).

Optimal control theory continues to find a wide variety of applications, ranging from traditional electrical power [36] to economics and management [16, 122, 78, 123].

1.6 Chapter Overview

This book is composed of seven chapters.

- Chapter 2 presents optimal control via the calculus of variations. We start with some basic definitions and a simple variational problem of extremizing a functional. We then bring in the plant as a conditional optimization problem and discuss various types of problems based on the boundary conditions. We briefly mention both the Lagrangian and Hamiltonian formalisms for optimization.
- Chapter 3 addresses the linear quadratic regulator (LQR) system. We discuss the closed-loop optimal control system and introduce the matrix Riccati differential and algebraic equations. We look at the analytical solution to the Riccati equations and develop a MATLAB® routine for it.
- Chapter 4 discusses tracking and other problems of linear quadratic optimal control, as well as the gain and phase margins of the LQR system.

Chapters 2 through 4 treat the optimal control of continuous-time systems.

- Chapter 5 presents the optimal control of discrete-time systems. We start with the basic calculus of variations and then touch upon all the topics discussed above for continuous-time systems.
- Chapter 6 briefly covers the Pontryagin principle and the associated topics of dynamic programming and Hamilton-Jacobi-Bellman results.
- Chapter 7 describes the optimal control of systems with control and state constraints. We cover control constraints leading to time-optimal, fuel-optimal, and energy-optimal control systems, and briefly discuss the state constraints problem.
Finally, Appendices A and B summarize results on matrices, vectors, matrix algebra, and state space, and Appendix C lists some of the MATLAB® files used in the book.

1.7 Problems

Problem 1.1 A D.C. motor speed control system is described by the second-order state equation

$$\begin{aligned}
\dot{x}_1(t) &= 25\,x_2(t)\\
\dot{x}_2(t) &= -400\,x_1(t) - 200\,x_2(t) + 400\,u(t)
\end{aligned}$$

where $x_1(t)$ is the speed of the motor and $x_2(t)$ is the current in the armature circuit. The control input $u(t)$ is the voltage input to an amplifier supplying the motor. Formulate a performance index and an optimal control problem to keep the speed constant at a particular value.

Problem 1.2 [83] In a liquid-level control system for a storage tank, the valves connecting a reservoir and the tank are controlled by a gear train driven by a D.C. motor and an electronic amplifier. The dynamics is described by the third-order system

$$\begin{aligned}
\dot{x}_1(t) &= -2\,x_1(t)\\
\dot{x}_2(t) &= x_3(t)\\
\dot{x}_3(t) &= -10\,x_3(t) + 9000\,u(t)
\end{aligned}$$

where:

- $x_1(t)$ is the height of the liquid in the tank,
- $x_2(t)$ is the angular position of the electric motor driving the valves that control the flow from the reservoir to the tank,
- $x_3(t)$ is the angular velocity of the motor, and
- $u(t)$ is the input to the electronic amplifier connected to the input of the motor.

Formulate an optimal control problem to keep the liquid level constant at a reference value, with the system acting only when the liquid level changes.

Problem 1.3 [35] In an inverted pendulum system, the pendulum must be kept upright on a cart. The linearized state equations are

$$\begin{aligned}
\dot{x}_1(t) &= x_2(t)\\
\dot{x}_2(t) &= -x_3(t) + 0.2\,u(t)\\
\dot{x}_3(t) &= x_4(t)\\
\dot{x}_4(t) &= 10\,x_3(t) - 0.2\,u(t)
\end{aligned}$$

where:

- $x_1(t)$ is the horizontal linear displacement of the cart,
- $x_2(t)$ is the linear velocity of the cart,
- $x_3(t)$ is the angular position of the pendulum measured from the vertical,
- $x_4(t)$ is the angular velocity of the pendulum, and
- $u(t)$ is the horizontal force applied to the cart.

Formulate a performance index to keep the pendulum in the vertical position with as little energy as possible.

Problem 1.4 [101] A mechanical system consists of two masses and two springs. One spring connects the two masses, and the other connects one of the masses to a fixed point. An input is applied to the mass that is not connected to the fixed point. The displacements ($x_1(t)$ and $x_2(t)$) and the corresponding velocities ($x_3(t)$ and $x_4(t)$) of the two masses give the fourth-order system

$$\begin{aligned}
\dot{x}_1(t) &= x_3(t)\\
\dot{x}_2(t) &= x_4(t)\\
\dot{x}_3(t) &= -4\,x_1(t) + 2\,x_2(t)\\
\dot{x}_4(t) &= x_1(t) - x_2(t) + u(t)
\end{aligned}$$

Formulate a performance index that minimizes both the errors in displacements and velocities and the control effort.

Problem 1.5 A simplified model of an automobile suspension system is described by

$$m\,\ddot{x}(t) + k\,x(t) = b\,u(t)$$

where $x(t)$ is the position, $u(t)$ is the input to the suspension system (an upward force), $m$ is the mass of the suspension system, and $k$ is the spring constant. Formulate the optimal control problem for minimum control energy and passenger comfort. Assume suitable values for all the constants.

Problem 1.6 [112] Consider a continuous stirred tank chemical reactor described by

$$\begin{aligned}
\dot{x}_1(t) &= -0.1\,x_1(t) - 0.12\,x_2(t)\\
\dot{x}_2(t) &= -0.3\,x_1(t) - 0.012\,x_2(t) - 0.07\,u(t)
\end{aligned}$$

where the normalized deviation state variables of the linearized model are the reaction variable $x_1(t)$ and the temperature $x_2(t)$, and the control variable $u(t)$ is the effective cooling rate coefficient. Formulate a suitable performance measure that minimizes both the deviation errors and the control effort.
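A useful first step in these problems is to write each plant in the form (1.1.3) and look at its open-loop eigenvalues before choosing weights. The sketch below is only a starting point and not a solution to any exercise. It takes the $A$ matrices of Problems 1.1 and 1.6 directly from the equations above. For a 2×2 matrix, the characteristic polynomial is $s^2 - \mathrm{tr}(A)\,s + \det(A)$.

- Problem 1.1: $\mathrm{tr}\,A = -200$ and $\det A = 10000$, so the polynomial is $(s + 100)^2$.
- Problem 1.6: $\det A = -0.0348 < 0$, so the two eigenvalues are real with opposite signs, meaning the open-loop reactor is unstable.

The helper `eig_2x2` is an illustrative name.

```python
import cmath


def eig_2x2(A):
    """Eigenvalues of a 2x2 matrix as roots of s^2 - tr(A) s + det(A) = 0."""
    tr = A[0][0] + A[1][1]
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    disc = cmath.sqrt(tr * tr - 4 * det)  # complex sqrt also covers oscillatory modes
    return (tr + disc) / 2, (tr - disc) / 2


# Problem 1.1 (D.C. motor): x1' = 25 x2, x2' = -400 x1 - 200 x2 + 400 u
A_motor = [[0.0, 25.0], [-400.0, -200.0]]
# Problem 1.6 (stirred tank reactor): x1' = -0.1 x1 - 0.12 x2, x2' = -0.3 x1 - 0.012 x2 - 0.07 u
A_reactor = [[-0.1, -0.12], [-0.3, -0.012]]

print(eig_2x2(A_motor))    # repeated eigenvalue at -100
print(eig_2x2(A_reactor))  # one eigenvalue with positive real part
```

The input matrices ($B = [0, 400]'$ for Problem 1.1 and $B = [0, -0.07]'$ for Problem 1.6) are not needed for the eigenvalues. They enter once a performance index is chosen and a control law is designed.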

Chapter 2 Calculus of Variations and Optimal Control

Calculus of variations (CoV), or variational calculus, deals with finding the optimum (maximum or minimum) value of a functional. Variational calculus, which originated around 1696, became an independent mathematical discipline after the fundamental discoveries of L. Euler (1707-1783), whom we can claim with good reason as the founder of the calculus of variations. In this chapter, we start with some basic definitions and a simple variational problem of extremizing a functional. We then incorporate the plant as a conditional optimization problem and discuss various types of problems based on the boundary conditions. We briefly mention both the Lagrangian and Hamiltonian formalisms for optimization. The student is advised to review the material in Appendices A and B at the end of the book. This chapter is motivated by [47, 79, 46, 143, 81, 48]¹.

¹The permission given by Prentice Hall for D. E. Kirk, Optimal Control Theory: An Introduction, Prentice Hall, Englewood Cliffs, NJ, 1970, is hereby acknowledged.

2.1 Basic Concepts

2.1.1 Function and Functional

We discuss some fundamental concepts associated with functionals alongside those of functions.

(a) Function: A variable $x$ is a function of a variable quantity $t$ (written as $x(t) = f(t)$) if to every value of $t$ over a certain range of $t$ there corresponds a value $x$; i.e., we have a correspondence: to a number $t$ there corresponds a number $x$. Note that here $t$ need not always be time; it can be any independent variable.

Example 2.1 Consider

$$x(t) = 2t^2 + 1. \tag{2.1.1}$$

For $t = 1$, $x = 3$; for $t = 2$, $x = 9$; and so on. Other functions are $x(t) = 2t$ and $x(t_1, t_2) = t_1^2 + t_2^2$.

Next we consider the definition of a functional based on that of a function.

(b) Functional: A variable quantity $J$ is a functional dependent on a function $f(x)$, written as $J = J(f(x))$, if to each function $f(x)$ there corresponds a value $J$; i.e., we have a correspondence: to the function $f(x)$ there corresponds a number $J$. A functional may also depend on several functions.

Example 2.2 Let $x(t) = 2t^2 + 1$. Then

$$J(x(t)) = \int_0^1 x(t)\,dt = \int_0^1 (2t^2 + 1)\,dt = \frac{2}{3} + 1 = \frac{5}{3} \tag{2.1.2}$$

is the area under the curve $x(t)$. If $v(t)$ is the velocity of a vehicle, then

$$J(v(t)) = \int_{t_0}^{t_f} v(t)\,dt \tag{2.1.3}$$

is the distance traversed by the vehicle. Thus, here $x(t)$ and $v(t)$ are functions of $t$, and $J$ is a functional of $x(t)$ or $v(t)$. Loosely speaking, a functional can be thought of as a "function of a function."

2.1.2 Increment

We consider here the increment of a function and of a functional.
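Before the increments are defined, the distinction drawn in Section 2.1.1 can be made concrete in code: a function maps a number to a number, while a functional takes a whole function as its argument and returns a number. The sketch below assumes composite Simpson's rule for the integral (exact, up to rounding, for the quadratic integrand of Example 2.2); the names `integrate` and `J` are illustrative.

```python
from typing import Callable


def integrate(g: Callable[[float], float], t0: float, tf: float, n: int = 100) -> float:
    """Composite Simpson's rule for the integral of g over [t0, tf] (n even)."""
    if n % 2:
        raise ValueError("n must be even for Simpson's rule")
    h = (tf - t0) / n
    total = g(t0) + g(tf)
    for k in range(1, n):
        total += (4 if k % 2 else 2) * g(t0 + k * h)
    return total * h / 3.0


def J(x: Callable[[float], float]) -> float:
    """The functional of Example 2.2: area under x(t) on [0, 1]."""
    return integrate(x, 0.0, 1.0)


def x(t: float) -> float:
    """The function of Examples 2.1 and 2.2."""
    return 2 * t ** 2 + 1


print(x(1.0), x(2.0))       # a function maps numbers to numbers
print(J(x))                 # a functional maps a function to a number (about 5/3)
print(J(lambda t: 2 * t))   # a different function gives a different number (about 1)
```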

(a) Increment of a Function: In order to consider optimal values of a function, we need the definition of an increment [47, 46, 79].

DEFINITION 2.1 The increment of the function $f$, denoted by $\Delta f$, is defined as

$$\Delta f \triangleq f(t + \Delta t) - f(t). \tag{2.1.4}$$

It is easy to see from the definition that $\Delta f$ depends on both the independent variable $t$ and the increment of the independent variable $\Delta t$; hence, strictly speaking, we need to write the increment of a function as $\Delta f(t, \Delta t)$.

Example 2.3 If

$$f(t) = (t_1 + t_2)^2, \tag{2.1.5}$$

find the increment of the function $f(t)$.

Solution: The increment $\Delta f$ becomes

$$\begin{aligned}
\Delta f &\triangleq f(t + \Delta t) - f(t)\\
&= (t_1 + \Delta t_1 + t_2 + \Delta t_2)^2 - (t_1 + t_2)^2\\
&= (t_1 + \Delta t_1)^2 + (t_2 + \Delta t_2)^2 + 2(t_1 + \Delta t_1)(t_2 + \Delta t_2) - (t_1^2 + t_2^2 + 2t_1t_2)\\
&= 2(t_1 + t_2)\Delta t_1 + 2(t_1 + t_2)\Delta t_2 + (\Delta t_1)^2 + (\Delta t_2)^2 + 2\Delta t_1\Delta t_2.
\end{aligned} \tag{2.1.6}$$

(b) Increment of a Functional: Now we are ready to define the increment of a functional.

DEFINITION 2.2 The increment of the functional $J$, denoted by $\Delta J$, is defined as

$$\Delta J \triangleq J(x(t) + \delta x(t)) - J(x(t)). \tag{2.1.7}$$

Here $\delta x(t)$ is called the variation of the function $x(t)$. Since the increment of a functional depends on both the function $x(t)$ and its variation $\delta x(t)$, strictly speaking, we need to write the increment as $\Delta J(x(t), \delta x(t))$.

Example 2.4 Find the increment of the functional

$$J = \int_{t_0}^{t_f} [2x^2(t) + 1]\,dt. \tag{2.1.8}$$

Solution: The increment of $J$ is given by

$$\begin{aligned}
\Delta J &\triangleq J(x(t) + \delta x(t)) - J(x(t))\\
&= \int_{t_0}^{t_f} [2(x(t) + \delta x(t))^2 + 1]\,dt - \int_{t_0}^{t_f} [2x^2(t) + 1]\,dt\\
&= \int_{t_0}^{t_f} [4x(t)\delta x(t) + 2(\delta x(t))^2]\,dt.
\end{aligned} \tag{2.1.9}$$

2.1.3 Differential and Variation

Here, we consider the differential of a function and the variation of a functional.

(a) Differential of a Function: Let us define the increment of the function $f$ at a point $t^*$ as

$$\Delta f \triangleq f(t^* + \Delta t) - f(t^*). \tag{2.1.10}$$

By expanding $f(t^* + \Delta t)$ in a Taylor series about $t^*$, we get

$$\Delta f = f(t^*) + \left(\frac{df}{dt}\right)_* \Delta t + \frac{1}{2!}\left(\frac{d^2 f}{dt^2}\right)_* (\Delta t)^2 + \cdots - f(t^*). \tag{2.1.11}$$

Neglecting the higher order terms in $\Delta t$,

$$\Delta f \approx \left(\frac{df}{dt}\right)_* \Delta t = \dot f(t^*)\Delta t = df. \tag{2.1.12}$$

Here, $df$ is called the differential of $f$ at the point $t^*$, and $\dot f(t^*)$ is the derivative or slope of $f$ at $t^*$. In other words, the differential $df$ is the first order approximation to the increment $\Delta f$. Figure 2.1 shows the relation between increment, differential and derivative.
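Definitions (2.1.4) and (2.1.12) are easy to check numerically. The sketch below, whose helper names are illustrative, evaluates the increment of Example 2.3 directly and compares it with the closed form (2.1.6); it then uses the function $f(t) = t^2 + 2t$ of Example 2.5 to show that the gap between the increment $\Delta f$ and the differential $df = \dot f(t^*)\Delta t$ behaves like $(\Delta t)^2$, which is what "first order approximation" means here.

```python
def increment(f, t, dt):
    """Increment (2.1.4): f(t + dt) - f(t), with t and dt given as tuples."""
    shifted = tuple(ti + dti for ti, dti in zip(t, dt))
    return f(*shifted) - f(*t)


def f_ex23(t1, t2):
    """Function of Example 2.3."""
    return (t1 + t2) ** 2


def increment_ex23(t1, t2, dt1, dt2):
    """Closed-form increment (2.1.6) of Example 2.3."""
    s = t1 + t2
    return 2 * s * dt1 + 2 * s * dt2 + dt1 ** 2 + dt2 ** 2 + 2 * dt1 * dt2


def f_ex25(t):
    """Function of Example 2.5."""
    return t ** 2 + 2 * t


def gap_ratio(t_star, dt):
    """(increment - differential) / dt**2 for f(t) = t^2 + 2t at t_star."""
    delta_f = increment(f_ex25, (t_star,), (dt,))
    df = 2 * (t_star + 1) * dt  # df = fdot(t*) dt with fdot(t) = 2(t + 1)
    return (delta_f - df) / dt ** 2


direct = increment(f_ex23, (1.0, 2.0), (0.1, 0.2))
print(direct, increment_ex23(1.0, 2.0, 0.1, 0.2))
for step in (0.1, 0.01, 0.001):
    # the neglected term is exactly (dt)^2, so the ratio stays near 1
    print(step, gap_ratio(1.0, step))
```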

[Figure 2.1: Increment $\Delta f$, Differential $df$, and Derivative $\dot f$ of a Function $f(t)$ (sketch of $f(t)$ versus $t$ marking $t^*$, $t^* + \Delta t$, $f(t^*)$ and $f(t^* + \Delta t)$)]

Example 2.5 Let $f(t) = t^2 + 2t$. Find the increment and the derivative of the function $f(t)$.

Solution: By definition, the increment $\Delta f$ is

$$\begin{aligned}
\Delta f &\triangleq f(t + \Delta t) - f(t)\\
&= (t + \Delta t)^2 + 2(t + \Delta t) - (t^2 + 2t)\\
&= 2t\Delta t + 2\Delta t + (\Delta t)^2\\
&\approx 2(t + 1)\Delta t \quad \text{(neglecting the higher order term } (\Delta t)^2\text{)}\\
&= \dot f(t)\Delta t.
\end{aligned}$$

Here,

$$\dot f(t) = 2(t + 1). \tag{2.1.13}$$

(b) Variation of a Functional: Consider the increment of a functional

$$\Delta J \triangleq J(x(t) + \delta x(t)) - J(x(t)). \tag{2.1.14}$$

Expanding $J(x(t) + \delta x(t))$ in a Taylor series, we get

$$\begin{aligned}
\Delta J &= J(x(t)) + \frac{\partial J}{\partial x}\delta x(t) + \frac{1}{2!}\frac{\partial^2 J}{\partial x^2}(\delta x(t))^2 + \cdots - J(x(t))\\
&= \frac{\partial J}{\partial x}\delta x(t) + \frac{1}{2!}\frac{\partial^2 J}{\partial x^2}(\delta x(t))^2 + \cdots\\
&= \delta J + \delta^2 J + \cdots,
\end{aligned} \tag{2.1.15}$$

where

$$\delta J = \frac{\partial J}{\partial x}\delta x(t) \quad \text{and} \quad \delta^2 J = \frac{1}{2!}\frac{\partial^2 J}{\partial x^2}(\delta x(t))^2 \tag{2.1.16}$$

are called the first variation (or simply the variation) and the second variation of the functional $J$, respectively. The variation $\delta J$ of a functional $J$ is the linear (or first order approximate) part (in $\delta x(t)$) of the increment $\Delta J$. Figure 2.2 shows the relation between the increment and the first variation of a functional.

[Figure 2.2: Increment $\Delta J$ and the First Variation $\delta J$ of the Functional $J$ (sketch of $J(x(t))$ versus $x(t)$ marking $x^*(t)$, $x^*(t) + \delta x(t)$, $J(x^*(t))$ and $J(x^*(t) + \delta x(t))$)]
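Expansion (2.1.15) can be seen concretely on the functional of Example 2.4, $J = \int_{t_0}^{t_f}[2x^2(t) + 1]\,dt$. Purely for illustration, the sketch assumes $t_0 = 0$, $t_f = 1$, a nominal function $x(t) = t$ and a constant variation $\delta x(t) = \varepsilon$. For these choices $\delta J = \int 4x\,\delta x\,dt = 2\varepsilon$ and $\delta^2 J = \int 2(\delta x)^2\,dt = 2\varepsilon^2$, so the increment should equal $2\varepsilon + 2\varepsilon^2$, with the linear part dominating as $\varepsilon \to 0$. The helper names are hypothetical.

```python
def simpson(g, t0=0.0, tf=1.0, n=100):
    """Composite Simpson's rule on [t0, tf] with an even number n of panels."""
    h = (tf - t0) / n
    total = g(t0) + g(tf)
    for k in range(1, n):
        total += (4 if k % 2 else 2) * g(t0 + k * h)
    return total * h / 3.0


def J(x):
    """Functional of Example 2.4 on the assumed interval [0, 1]."""
    return simpson(lambda t: 2 * x(t) ** 2 + 1)


def variation_parts(eps):
    """Return (increment, first variation, second variation) for dx(t) = eps."""
    def x(t):
        return t  # assumed nominal function

    def dx(t):
        return eps  # assumed constant variation

    increment = J(lambda t: x(t) + dx(t)) - J(x)   # definition (2.1.7)
    first = simpson(lambda t: 4 * x(t) * dx(t))    # part linear in dx
    second = simpson(lambda t: 2 * dx(t) ** 2)     # part quadratic in dx
    return increment, first, second


for eps in (0.1, 0.01, 0.001):
    inc, d1, d2 = variation_parts(eps)
    # for this quadratic integrand the expansion stops at second order,
    # so inc - d1 - d2 is only floating-point rounding
    print(eps, inc, d1, d2, inc - d1 - d2)
```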

Example 2.6 Given the functional

$$J(x(t)) = \int_{t_0}^{t_f} [2x^2(t) + 3x(t) + 4]\,dt, \tag{2.1.17}$$

evaluate the variation of the functional.

Solution: First, we form the increment and then extract the variation as the first order approximation. Thus

$$\begin{aligned}
\Delta J &\triangleq J(x(t) + \delta x(t)) - J(x(t))\\
&= \int_{t_0}^{t_f} \left[2(x(t) + \delta x(t))^2 + 3(x(t) + \delta x(t)) + 4 - (2x^2(t) + 3x(t) + 4)\right]dt\\
&= \int_{t_0}^{t_f} \left[4x(t)\delta x(t) + 2(\delta x(t))^2 + 3\delta x(t)\right]dt.
\end{aligned} \tag{2.1.18}$$

Considering only the first order terms, we get the (first) variation as

$$\delta J(x(t), \delta x(t)) = \int_{t_0}^{t_f} (4x(t) + 3)\,\delta x(t)\,dt. \tag{2.1.19}$$

2.2 Optimum of a Function and a Functional

We give some definitions for the optimum or extremum (maximum or minimum) of a function and a functional [47, 46, 79]. The variation plays the same role in determining the optimal value of a functional as the differential does in finding the extremal or optimal value of a function.

DEFINITION 2.3 Optimum of a Function: A function $f(t)$ is said to have a relative optimum at the point $t^*$ if there is a positive parameter $\epsilon$ such that for all points $t$ in a domain $\mathcal{D}$ that satisfy $|t - t^*| < \epsilon$, the increment of $f(t)$ has the same sign (positive or negative). In other words, if

$$\Delta f = f(t) - f(t^*) \ge 0, \tag{2.2.1}$$

then $f(t^*)$ is a relative local minimum. On the other hand, if

$$\Delta f = f(t) - f(t^*) \le 0, \tag{2.2.2}$$

then $f(t^*)$ is a relative local maximum. If the previous relations are valid for arbitrarily large $\epsilon$, then $f(t)$ is said to have a global absolute optimum at $t^*$. Figure 2.3 illustrates the (a) minimum and (b) maximum of a function.

[Figure 2.3: (a) Minimum and (b) Maximum of a Function $f(t)$]

It is well known that the necessary condition for the optimum of a function is that the (first) differential vanishes, i.e., $df = 0$. The sufficient condition

1. for a minimum is that the second differential is positive, i.e., $d^2 f > 0$, and
2. for a maximum is that the second differential is negative, i.e., $d^2 f < 0$.

If $d^2 f = 0$, this test is inconclusive; the stationary point may, for example, be an inflection point.

DEFINITION 2.4 Optimum of a Functional: A functional $J$ is said to have a relative optimum at $x^*$ if there is a positive $\epsilon$ such that for all functions $x$ in a domain $\Omega$ which satisfy $|x - x^*| < \epsilon$, the increment of $J$ has the same sign. In other words, if

$$\Delta J = J(x) - J(x^*) \ge 0, \tag{2.2.3}$$

then $J(x^*)$ is a relative minimum. On the other hand, if

$$\Delta J = J(x) - J(x^*) \le 0, \tag{2.2.4}$$

then $J(x^*)$ is a relative maximum. If the above relations are satisfied for arbitrarily large $\epsilon$, then $J(x^*)$ is a global absolute optimum.

Analogous to finding extremum or optimal values for functions, in variational problems concerning functionals the result is that the variation must be zero on an optimal curve. Let us now state the result in the form of a theorem, known as the fundamental theorem of the calculus of variations, the proof of which can be found in any book on the calculus of variations [47, 46, 79].

THEOREM 2.1 For $x^*(t)$ to be a candidate for an optimum, the (first) variation of $J$ must be zero on $x^*(t)$, i.e., $\delta J(x^*(t), \delta x(t)) = 0$ for all admissible values of $\delta x(t)$. This is a necessary condition. As a sufficient condition for a minimum, the second variation $\delta^2 J > 0$, and for a maximum, $\delta^2 J < 0$.

2.3 The Basic Variational Problem

2.3.1 Fixed-End Time and Fixed-End State System

We address a fixed-end time and fixed-end state problem, where both the initial time and state and the final time and state are fixed or given a priori. Let $x(t)$ be a scalar function with continuous first derivatives; the vector case can be dealt with similarly. The problem is to find the optimal function $x^*(t)$ for which the functional

$$J(x(t)) = \int_{t_0}^{t_f} V(x(t), \dot x(t), t)\,dt \tag{2.3.1}$$

has a relative optimum. It is assumed that the integrand $V$ has continuous first and second partial derivatives w.r.t. all its arguments; $t_0$ and $t_f$ are fixed (or given a priori), and the end points are fixed, i.e.,

$$x(t = t_0) = x_0; \quad x(t = t_f) = x_f. \tag{2.3.2}$$

We already know from Theorem 2.1 that the necessary condition for an optimum is that the variation of a functional vanishes. Hence, in our attempt to find the optimum of $x(t)$, we first define the increment for $J$, obtain its variation, and finally apply the fundamental theorem of the calculus of variations (Theorem 2.1). Thus, the various steps involved in finding the optimal solution to the fixed-end time and fixed-end state system are first listed and then discussed in detail.

• Step 1: Assumption of an Optimum
• Step 2: Variations and Increment
• Step 3: First Variation
• Step 4: Fundamental Theorem
• Step 5: Fundamental Lemma
• Step 6: Euler-Lagrange Equation

• Step 1: Assumption of an Optimum: Let us assume that $x^*(t)$ is the optimum attained for the function $x(t)$. Take some admissible function $x_a(t) = x^*(t) + \delta x(t)$ close to $x^*(t)$, where $\delta x(t)$ is the variation of $x^*(t)$, as shown in Figure 2.4. The function $x_a(t)$ should also satisfy the boundary conditions (2.3.2), and hence it is necessary that

$$\delta x(t_0) = \delta x(t_f) = 0. \tag{2.3.3}$$

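[Figure 2.4: Fixed-End Time and Fixed-End State System]

• Step 2: Variations and Increment: Let us first define the increment as

$$\begin{aligned}
\Delta J(x^*(t), \delta x(t)) &\triangleq J(x^*(t) + \delta x(t), \dot x^*(t) + \delta \dot x(t), t) - J(x^*(t), \dot x^*(t), t)\\
&= \int_{t_0}^{t_f} V(x^*(t) + \delta x(t), \dot x^*(t) + \delta \dot x(t), t)\,dt - \int_{t_0}^{t_f} V(x^*(t), \dot x^*(t), t)\,dt,
\end{aligned} \tag{2.3.4}$$

which by combining the integrals can be written as

$$\Delta J(x^*(t), \delta x(t)) = \int_{t_0}^{t_f} \left[V(x^*(t) + \delta x(t), \dot x^*(t) + \delta \dot x(t), t) - V(x^*(t), \dot x^*(t), t)\right]dt, \tag{2.3.5}$$

where

$$\dot x(t) = \frac{dx(t)}{dt} \quad \text{and} \quad \delta \dot x(t) = \frac{d}{dt}\{\delta x(t)\}. \tag{2.3.6}$$

Expanding $V$ in the increment (2.3.5) in a Taylor series about the point $x^*(t)$ and $\dot x^*(t)$, the increment $\Delta J$ becomes (note the cancelation of $V(x^*(t), \dot x^*(t), t)$)

$$\begin{aligned}
\Delta J = \Delta J(x^*(t), \delta x(t)) = \int_{t_0}^{t_f} \Bigg[&\frac{\partial V(x^*(t), \dot x^*(t), t)}{\partial x}\delta x(t) + \frac{\partial V(x^*(t), \dot x^*(t), t)}{\partial \dot x}\delta \dot x(t)\\
&+ \frac{1}{2!}\left\{\frac{\partial^2 V(\ldots)}{\partial x^2}(\delta x(t))^2 + \frac{\partial^2 V(\ldots)}{\partial \dot x^2}(\delta \dot x(t))^2 + 2\frac{\partial^2 V(\ldots)}{\partial x\,\partial \dot x}\delta x(t)\,\delta \dot x(t)\right\} + \cdots\Bigg]dt.
\end{aligned} \tag{2.3.7}$$

Here, the partial derivatives are w.r.t. $x(t)$ and $\dot x(t)$ at the optimal condition ($*$), and $*$ is omitted for simplicity.

• Step 3: First Variation: Now, we obtain the variation by retaining the terms that are linear in $\delta x(t)$ and $\delta \dot x(t)$ as

$$\delta J(x^*(t), \delta x(t)) = \int_{t_0}^{t_f} \left[\frac{\partial V(x^*(t), \dot x^*(t), t)}{\partial x}\delta x(t) + \frac{\partial V(x^*(t), \dot x^*(t), t)}{\partial \dot x}\delta \dot x(t)\right]dt. \tag{2.3.8}$$

To express the relation for the first variation (2.3.8) entirely in terms containing $\delta x(t)$ (since $\delta \dot x(t)$ is dependent on $\delta x(t)$), we integrate by parts the term involving $\delta \dot x(t)$ as (omitting the arguments in $V$ for simplicity)

$$\begin{aligned}
\int_{t_0}^{t_f} \left(\frac{\partial V}{\partial \dot x}\right)_* \delta \dot x(t)\,dt &= \int_{t_0}^{t_f} \left(\frac{\partial V}{\partial \dot x}\right)_* \frac{d}{dt}(\delta x(t))\,dt = \int_{t_0}^{t_f} \left(\frac{\partial V}{\partial \dot x}\right)_* d(\delta x(t))\\
&= \left[\left(\frac{\partial V}{\partial \dot x}\right)_* \delta x(t)\right]_{t_0}^{t_f} - \int_{t_0}^{t_f} \delta x(t)\frac{d}{dt}\left(\frac{\partial V}{\partial \dot x}\right)_* dt.
\end{aligned} \tag{2.3.9}$$

In the above, we used the well-known integration formula $\int u\,dv = uv - \int v\,du$, where $u = \partial V/\partial \dot x$ and $v = \delta x(t)$. Using (2.3.9), the relation (2.3.8) for the first variation becomes

$$\begin{aligned}
\delta J(x^*(t), \delta x(t)) &= \int_{t_0}^{t_f} \left(\frac{\partial V}{\partial x}\right)_* \delta x(t)\,dt + \left[\left(\frac{\partial V}{\partial \dot x}\right)_* \delta x(t)\right]_{t_0}^{t_f} - \int_{t_0}^{t_f} \frac{d}{dt}\left(\frac{\partial V}{\partial \dot x}\right)_* \delta x(t)\,dt\\
&= \int_{t_0}^{t_f} \left[\left(\frac{\partial V}{\partial x}\right)_* - \frac{d}{dt}\left(\frac{\partial V}{\partial \dot x}\right)_*\right]\delta x(t)\,dt + \left[\left(\frac{\partial V}{\partial \dot x}\right)_* \delta x(t)\right]_{t_0}^{t_f}.
\end{aligned} \tag{2.3.10}$$

Using the relation (2.3.3) for boundary variations in (2.3.10), we get

$$\delta J(x^*(t), \delta x(t)) = \int_{t_0}^{t_f} \left[\left(\frac{\partial V}{\partial x}\right)_* - \frac{d}{dt}\left(\frac{\partial V}{\partial \dot x}\right)_*\right]\delta x(t)\,dt. \tag{2.3.11}$$

• Step 4: Fundamental Theorem: We now apply the fundamental theorem of the calculus of variations (Theorem 2.1), i.e., the variation of $J$ must vanish for an optimum. That is, for the optimum $x^*(t)$ to exist, $\delta J(x^*(t), \delta x(t)) = 0$. Thus the relation (2.3.11) becomes

$$\int_{t_0}^{t_f} \left[\left(\frac{\partial V}{\partial x}\right)_* - \frac{d}{dt}\left(\frac{\partial V}{\partial \dot x}\right)_*\right]\delta x(t)\,dt = 0. \tag{2.3.12}$$

Note that the function $\delta x(t)$ must be zero at $t_0$ and $t_f$, but apart from this, it is completely arbitrary.

• Step 5: Fundamental Lemma: To simplify the condition obtained in equation (2.3.12), let us take advantage of the following lemma, called the fundamental lemma of the calculus of variations [47, 46, 79].

LEMMA 2.1 If, for a function $g(t)$ that is continuous in the interval $[t_0, t_f]$,

$$\int_{t_0}^{t_f} g(t)\,\delta x(t)\,dt = 0 \tag{2.3.13}$$

for every function $\delta x(t)$ that is continuous in the interval $[t_0, t_f]$ and vanishes at $t_0$ and $t_f$, then the function $g(t)$ must be zero everywhere throughout the interval $[t_0, t_f]$ (see Figure 2.5).

Proof: We prove this by contradiction. Let us assume that $g(t)$ is nonzero (positive or negative) during a short interval $[t_a, t_b]$. Since $\delta x(t)$ is arbitrary, let us select it to have the same sign as $g(t)$ inside $[t_a, t_b]$ and to be zero outside this interval. With this selection of $\delta x(t)$, the integrand $g(t)\delta x(t)$ is nonnegative everywhere and positive inside $(t_a, t_b)$, so the value of the integral in (2.3.13) will be nonzero. This contradicts the hypothesis that the integral (2.3.13) vanishes for every admissible $\delta x(t)$. Thus $g(t)$ must be identically zero everywhere during the entire interval $[t_0, t_f]$. Hence the lemma.

[Figure 2.5: A Nonzero $g(t)$ and an Arbitrary $\delta x(t)$]

• Step 6: Euler-Lagrange Equation: Applying the previous lemma to (2.3.12), a necessary condition for $x^*(t)$ to be an optimum of the functional $J$ given by (2.3.1) is

$$\left(\frac{\partial V(x^*(t), \dot x^*(t), t)}{\partial x}\right)_* - \frac{d}{dt}\left(\frac{\partial V(x^*(t), \dot x^*(t), t)}{\partial \dot x}\right)_* = 0 \tag{2.3.14}$$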
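Equation (2.3.14) can be checked numerically. The sketch below is an illustration, not the book's procedure: it assumes a hypothetical integrand $V = \dot x^2 + x^2$ with end conditions $x(0) = 0$, $x(1) = 1$, for which (2.3.14) gives $2x - 2\ddot x = 0$ and hence $x^*(t) = \sinh t/\sinh 1$. Partial and time derivatives are approximated by central differences and $J$ by Simpson's rule; the step sizes, the perturbation $0.1\sin(\pi t)$, and all function names are assumptions.

```python
import math


def partial(fn, args, i, h=1e-5):
    """Central-difference partial derivative of fn with respect to argument i."""
    plus, minus = list(args), list(args)
    plus[i] += h
    minus[i] -= h
    return (fn(*plus) - fn(*minus)) / (2 * h)


def el_residual(V, x, xdot, t, k=1e-4):
    """Left side of (2.3.14), dV/dx - d/dt(dV/dxdot), evaluated at time t."""
    dV_dx = partial(V, (x(t), xdot(t), t), 0)

    def p(s):  # dV/dxdot along the curve
        return partial(V, (x(s), xdot(s), s), 1)

    return dV_dx - (p(t + k) - p(t - k)) / (2 * k)


def cost(V, x, xdot, t0=0.0, tf=1.0, n=200):
    """Composite Simpson approximation of the functional (2.3.1)."""
    h = (tf - t0) / n

    def g(s):
        return V(x(s), xdot(s), s)

    total = g(t0) + g(tf)
    for m in range(1, n):
        total += (4 if m % 2 else 2) * g(t0 + m * h)
    return total * h / 3.0


def V(x, xd, t):
    """Hypothetical integrand V = xdot^2 + x^2."""
    return xd ** 2 + x ** 2


def x_star(t):
    """Extremal satisfying x'' = x, x(0) = 0, x(1) = 1."""
    return math.sinh(t) / math.sinh(1.0)


def xdot_star(t):
    return math.cosh(t) / math.sinh(1.0)


EPS = 0.1  # size of the assumed perturbation


def x_pert(t):
    """Admissible comparison curve: sin(pi t) vanishes at both ends, as (2.3.3) requires."""
    return x_star(t) + EPS * math.sin(math.pi * t)


def xdot_pert(t):
    return xdot_star(t) + EPS * math.pi * math.cos(math.pi * t)


print(el_residual(V, x_star, xdot_star, 0.5))            # close to 0
print(el_residual(V, lambda t: t, lambda t: 1.0, 0.5))   # straight line: equals 2t, not 0
print(cost(V, x_star, xdot_star), cost(V, x_pert, xdot_pert))
```

The residual vanishes along $x^*(t)$ but equals $2t$ along the straight line $x(t) = t$, and the perturbed curve yields a larger $J$. This is consistent with $x^*(t)$ being a minimum here, since this particular $V$ is convex in $x$ and $\dot x$.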