Journal Description

Econometrics

Econometrics is an international, peer-reviewed, open access journal on econometric modeling and forecasting, as well as new advances in econometrics theory, and is published quarterly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), EconLit, EconBiz, RePEc, and other databases.

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 29.6 days after submission; acceptance to publication is undertaken in 6.2 days (median values for papers published in this journal in the first half of 2024).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 1.1 (2023); 5-Year Impact Factor: 1.4 (2023)

Latest Articles

Estimating the Effects of Credit Constraints on Productivity of Peruvian Agriculture

Econometrics 2024, 12(4), 27; https://doi.org/10.3390/econometrics12040027 - 26 Sep 2024

Abstract

This paper proposes an estimator for endogenous switching regression models with fixed effects. The decision to switch from one regime to the other may depend on unobserved factors, which would cause the state, such as being credit constrained, to be endogenous. Our estimator allows for this endogenous selection and for conditional heteroscedasticity in the outcome equation. Applying our estimator to a dataset on agricultural productivity substantially changes the conclusions compared to earlier analysis of the same dataset. Intuitively, the reason that our estimate of the impact of switching between states is smaller than previously estimated is that we capture the selection issue: switching between being credit constrained and credit unconstrained may be endogenous to farm production. In particular, we find that being credit constrained has the substantial effect of reducing yield by 11%, but not the previously estimated very dramatic effect of reducing yield by 26%.

Open AccessArticle

Estimating Treatment Effects Using Observational Data and Experimental Data with Non-Overlapping Support

by

Kevin Han, Han Wu, Linjia Wu, Yu Shi and Canyao Liu

Econometrics 2024, 12(3), 26; https://doi.org/10.3390/econometrics12030026 - 20 Sep 2024

Abstract

When estimating treatment effects, the gold standard is to conduct a randomized experiment and then contrast outcomes associated with the treatment group and the control group. However, in many cases, randomized experiments are either conducted on a much smaller scale than the size of the target population or accompanied by ethical issues, and are thus hard to implement. Therefore, researchers usually rely on observational data to study causal connections. The downside is that the unconfoundedness assumption, which is the key to validating the use of observational data, is untestable and almost always violated. Hence, any conclusion drawn from observational data should be further analyzed with great care. Given the richness of observational data and the usefulness of experimental data, researchers hope to develop credible methods to combine the strengths of the two. In this paper, we consider a setting where the observational data contain the outcome of interest as well as a surrogate outcome, while the experimental data contain only the surrogate outcome. We propose an easy-to-implement estimator to estimate the average treatment effect of interest using both the observational data and the experimental data.

Open AccessArticle

Score-Driven Interactions for “Disease X” Using COVID and Non-COVID Mortality

by

Szabolcs Blazsek, William M. Dos Santos and Andreco S. Edwards

Econometrics 2024, 12(3), 25; https://doi.org/10.3390/econometrics12030025 - 4 Sep 2024

Abstract

The COVID-19 (coronavirus disease of 2019) pandemic is over; however, the probability of such a pandemic is about 2% in any year. There are international negotiations among almost 200 countries at the World Health Organization (WHO) concerning a global plan to deal with the next pandemic on the scale of COVID-19, known as “Disease X”. We develop a nonlinear panel quasi-vector autoregressive (PQVAR) model for the multivariate t-distribution with dynamic unobserved effects, which can be used for out-of-sample forecasts of causes of death counts in the United States (US) when a new global pandemic starts. We use panel data from the Centers for Disease Control and Prevention (CDC) for the cross section of all states of the United States (US) from March 2020 to September 2022 regarding all death counts of (i) COVID-19 deaths, (ii) deaths that medically may be related to COVID-19, and (iii) the remaining causes of death. We compare the t-PQVAR model with its special cases, the PVAR moving average (PVARMA), and PVAR. The t-PQVAR model provides robust evidence on dynamic interactions among (i), (ii), and (iii). The t-PQVAR model may be used for out-of-sample forecasting purposes at the outbreak of a future “Disease X” pandemic.

Open AccessArticle

Signs of Fluctuations in Energy Prices and Energy Stock-Market Volatility in Brazil and in the US

by

Gabriel Arquelau Pimenta Rodrigues, André Luiz Marques Serrano, Gabriela Mayumi Saiki, Matheus Noschang de Oliveira, Guilherme Fay Vergara, Pedro Augusto Giacomelli Fernandes, Vinícius Pereira Gonçalves and Clóvis Neumann

Econometrics 2024, 12(3), 24; https://doi.org/10.3390/econometrics12030024 - 23 Aug 2024

Abstract

Volatility reflects the degree of variation in a time series, and a measurement of the stock performance in the energy sector can help one understand the pattern of fluctuations within this industry, as well as the factors that influence it. One of these factors could be the COVID-19 pandemic, which led to extreme volatility within the stock market in several economic sectors. It is essential to understand this regime of volatility so that robust financial strategies can be adopted to handle it. This study used stock data from the Yahoo! Finance API and data from the energy-price database from the US Energy Information Administration to conduct a comparative analysis of the volatility in the energy sector in Brazil and in the United States, as well as of the energy prices in California. The volatility in these time series was modeled using GARCH. The stock volatility regimes, both before and after COVID-19, were identified with a Markov switching model; the spillover index between the energy markets in the USA and in Brazil was evaluated with the Diebold–Yilmaz index; and the causality between the energy stock price and the energy prices was measured with the Granger causality test. The findings of this study show that (i) the volatility regime introduced by COVID-19 is still prevalent in Brazil and in the USA, (ii) the changes in the energy market in the US affect the Brazilian market significantly more than the reverse, and (iii) there is a causality relationship between the energy stock markets and the energy prices in California. These results may assist in the achievement of effective regulation and economic planning, while also supporting better market interventions. Also, acknowledging the persistent COVID-19-induced volatility can help with developing strategies for future crisis resilience.
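As a reader's aid, the GARCH step mentioned in this abstract can be illustrated with a minimal sketch of the GARCH(1,1) conditional variance recursion. This is not the authors' code: the function name, the parameter values, and the choice to start the recursion at the unconditional variance are illustrative assumptions, and the returns are assumed to be already demeaned.

```python
def garch11_variance(returns, omega, alpha, beta):
    """Conditional variance path of a GARCH(1,1) model (illustrative sketch).

    Assumes mean-zero returns and alpha + beta < 1, so that the
    unconditional variance omega / (1 - alpha - beta) exists.
    """
    if alpha + beta >= 1:
        raise ValueError("alpha + beta must be below 1 for a finite unconditional variance")
    if not returns:
        return []
    # Assumption: initialise the recursion at the unconditional variance.
    sigma2 = [omega / (1.0 - alpha - beta)]
    # sigma2[t] = omega + alpha * r[t-1]^2 + beta * sigma2[t-1]
    for r in returns[:-1]:
        sigma2.append(omega + alpha * r * r + beta * sigma2[-1])
    return sigma2


if __name__ == "__main__":
    # Hypothetical demeaned returns, chosen only to show the recursion.
    path = garch11_variance([0.0, 0.1, -0.2], omega=0.01, alpha=0.1, beta=0.8)
    print(path)
```

In practice the parameters would be estimated by maximum likelihood rather than fixed by hand; the sketch only shows how a large past return feeds into the next period's variance.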

Open AccessArticle

Transient and Persistent Technical Efficiencies in Rice Farming: A Generalized True Random-Effects Model Approach

by

Phuc Trong Ho, Michael Burton, Atakelty Hailu and Chunbo Ma

Econometrics 2024, 12(3), 23; https://doi.org/10.3390/econometrics12030023 - 12 Aug 2024

Abstract

This study estimates transient and persistent technical efficiencies (TEs) using a generalized true random-effects (GTRE) model. We estimate the GTRE model using maximum likelihood and Bayesian estimation methods, then compare it to three simpler models nested within it to evaluate the robustness of our estimates. We use a panel data set of 945 observations collected from 344 rice farming households in Vietnam’s Mekong River Delta. The results indicate that the GTRE model is more appropriate than the restricted models for understanding heterogeneity and inefficiency in rice production. The mean estimate of overall technical efficiency is 0.71, with transient rather than persistent inefficiency being the dominant component. This suggests that rice farmers could increase output substantially and would benefit from policies that pay more attention to addressing short-term inefficiency issues.

Open AccessArticle

Is It Sufficient to Select the Optimal Class Number Based Only on Information Criteria in Fixed- and Random-Parameter Latent Class Discrete Choice Modeling Approaches?

by

Péter Czine, Péter Balogh, Zsanett Blága, Zoltán Szabó, Réka Szekeres, Stephane Hess and Béla Juhász

Econometrics 2024, 12(3), 22; https://doi.org/10.3390/econometrics12030022 - 8 Aug 2024

Abstract

Heterogeneity in preferences can be addressed through various discrete choice modeling approaches. The random-parameter latent class (RLC) approach offers a desirable alternative for analysts due to its advantageous properties of separating classes with different preferences and capturing the remaining heterogeneity within classes by including random parameters. For latent class specifications, however, more empirical evidence on the optimal number of classes to consider is needed in order to develop a more objective set of criteria. To investigate this question, we tested cases with different class numbers (for both fixed- and random-parameter latent class modeling) by analyzing data from a discrete choice experiment conducted in 2021, which examined preferences regarding COVID-19 vaccines. We compared models using commonly used indicators such as the Bayesian information criterion, and we also took into account, among others, a seemingly simple but often overlooked indicator: the ratio of significant parameter estimates. Based on our results, it is not sufficient to decide on the optimal number of classes in latent class modeling based only on information criteria. We considered aspects such as the ratio of significant parameter estimates (it may be interesting to examine this both between and within specifications to find out which model type and class number has the most balanced ratio); the validity of the coefficients obtained (focusing on whether the conclusions are consistent with our theoretical model); whether including random parameters is justified (finding a balance between the complexity of the model and its information content, i.e., examining when and to what extent the introduction of within-class heterogeneity is relevant); and the distributions of MRS calculations (since they often function as a direct measure of preferences, it is necessary to test how consistent the distributions of specifications with different class numbers are; if they are highly consistent, i.e., relatively stable in explaining consumer preferences, it is probably worth putting more emphasis on the aspects mentioned above when choosing a model). The results of this research raise further questions that should be addressed by further model testing in the future.

Open AccessArticle

Instrumental Variable Method for Regularized Estimation in Generalized Linear Measurement Error Models

by

Lin Xue and Liqun Wang

Econometrics 2024, 12(3), 21; https://doi.org/10.3390/econometrics12030021 - 12 Jul 2024

Abstract

Regularized regression methods have attracted much attention in the literature, mainly due to their application in high-dimensional variable selection problems. Most existing regularization methods assume that the predictors are directly observed and precisely measured. It is well known that in a low-dimensional regression model, if some covariates are measured with error, then the naive estimators that ignore the measurement error are biased and inconsistent. However, the impact of measurement error in regularized estimation procedures is not clear. For example, it is known that the ordinary least squares estimate of the regression coefficient in a linear model is attenuated towards zero and, on the other hand, the variance of the observed surrogate predictor is inflated. Therefore, it is unclear how the interaction of these two factors affects the selection outcome. To correct for the measurement error effects, some researchers assume that the measurement error covariance matrix is known or can be estimated using external data. In this paper, we propose the regularized instrumental variable method for generalized linear measurement error models. We show that the proposed approach yields a consistent variable selection procedure and root-n consistent parameter estimators. Extensive finite sample simulation studies show that the proposed method performs satisfactorily in both linear and generalized linear models. A real data example is provided to further demonstrate the usage of the method.
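The attenuation effect described in this abstract can be seen in a small simulation. The sketch below is not the authors' method (it involves neither regularization nor instrumental variables); it only illustrates the classical linear case, under assumed values: a true slope of 2, a standard normal predictor, and additive measurement error with unit variance. With these choices the naive slope should shrink by the factor var(x) / (var(x) + var(u)) = 0.5. All names and parameter values are hypothetical.

```python
import random


def ols_slope(x, y):
    """Slope of a simple least-squares regression of y on x (with intercept)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    return sxy / sxx


def simulate_attenuation(n=20000, beta=2.0, error_sd=1.0, seed=0):
    """Return (slope using the true predictor, slope using the noisy surrogate)."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(n)]            # true predictor
    y = [beta * xi + rng.gauss(0.0, 0.5) for xi in x]      # outcome
    w = [xi + rng.gauss(0.0, error_sd) for xi in x]        # surrogate measured with error
    return ols_slope(x, y), ols_slope(w, y)


if __name__ == "__main__":
    clean, naive = simulate_attenuation()
    print(f"slope with true predictor:  {clean:.3f}")
    print(f"slope with noisy surrogate: {naive:.3f}")
```

The surrogate `w` also has a larger variance than `x`, which is the second effect the abstract mentions; how the two effects interact under penalized selection is the open question the paper addresses.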

Open AccessArticle

Comparing Estimation Methods for the Power–Pareto Distribution

by

Frederico Caeiro and Mina Norouzirad

Econometrics 2024, 12(3), 20; https://doi.org/10.3390/econometrics12030020 - 11 Jul 2024

Abstract

Non-negative distributions are important tools in various fields. Given the importance of achieving a good fit, the literature offers hundreds of different models, from the very simple to the highly flexible. In this paper, we consider the power–Pareto model, which is defined by its quantile function. This distribution has three parameters, allowing the model to take different shapes, including symmetrical and left- and right-skewed. We provide different distributional characteristics and discuss parameter estimation. In addition to the already-known Maximum Likelihood and Least Squares of the logarithm of the order statistics estimation methods, we propose several additional methods. A simulation study and an application to two datasets are conducted to illustrate the performance of the estimation methods.

Open AccessArticle

Stochastic Debt Sustainability Analysis in Romania in the Context of the War in Ukraine

by

Gabriela Dobrotă and Alina Daniela Voda

Econometrics 2024, 12(3), 19; https://doi.org/10.3390/econometrics12030019 - 5 Jul 2024

Abstract

Public debt is determined by borrowings undertaken by a government to finance its short- or long-term financial needs and to ensure that macroeconomic objectives are met within budgetary constraints. In Romania, public debt has been on an upward trajectory, a trend that has been further exacerbated in recent years by the COVID-19 pandemic. Additionally, a significant non-economic event influencing Romania’s public debt is the war in Ukraine. To analyze this, a stochastic debt sustainability analysis was conducted, incorporating the unique characteristics of Romania’s emerging market into the research methodology. The projections focused on achieving satisfactory results by following two lines of research. The first direction involved developing four scenarios to assess the risks presented by macroeconomic shocks. Particular emphasis was placed on an unusual negative shock, specifically the war in Ukraine, with forecasts indicating that the debt-to-GDP ratio could reach 102% by 2026. However, if policymakers implement discretionary measures, this level could be contained below 88%. The second direction of research aimed to establish the maximum safe limit of public debt for Romania, which was determined to be 70%. This threshold would allow the emerging economy to manage a reasonable level of risk without requiring excessive fiscal efforts to maintain long-term stability.

Open AccessArticle

Investigation of Equilibrium in Oligopoly Markets with the Help of Tripled Fixed Points in Banach Spaces

by

Atanas Ilchev, Vanya Ivanova, Hristina Kulina, Polina Yaneva and Boyan Zlatanov

Econometrics 2024, 12(2), 18; https://doi.org/10.3390/econometrics12020018 - 17 Jun 2024

Abstract

In this study, we explore an oligopoly market for equilibrium and stability based on statistical data with the help of response functions rather than payoff maximization. To achieve this, we extend the concept of coupled fixed points to tripled fixed points. We propose a new model that leads to generalized tripled fixed points. We present a possible application of the generalized tripled fixed point model to the study of market equilibrium in an oligopolistic market dominated by three major competitors. The task of maximizing the payoff functions of the three players is modified by the concept of generalized tripled fixed points of response functions. The presented model for generalized tripled fixed points of response functions is equivalent to Cournot payoff maximization, provided that the market price function and the three players’ cost functions are differentiable. Furthermore, we demonstrate that the contractive condition corresponds to the second-order constraints in payoff maximization. Moreover, the model under consideration is stable in the sense that it ensures the stability of the consecutive production process, as opposed to the payoff maximization model, with which the market equilibrium may not be stable. A possible gap in the applications of the classical technique for maximization of the payoff functions is that the price function in the market may not be known, and any approximation of it may lead to the solution of a task different from the one generated by the market. We use empirical data from Bulgaria’s beer market to illustrate the created model. The statistical data give fair information on how the players react without knowing the price function, their cost function, or their aims towards a specific market. We present two models based on the real data and their approximations, respectively. The two models, although different, show similar behavior in terms of time and the stability of the market equilibrium. Thus, the notion of response functions and tripled fixed points seems to present a justified way of modeling market processes in oligopoly markets when investigating whether the market has reached equilibrium and whether this equilibrium is unique and stable in time.

Open AccessArticle

Modeling the Economic Impact of the COVID-19 Pandemic Using Dynamic Panel Models and Seemingly Unrelated Regressions

by

Ioannis D. Vrontos, John Galakis, Ekaterini Panopoulou and Spyridon D. Vrontos

Econometrics 2024, 12(2), 17; https://doi.org/10.3390/econometrics12020017 - 14 Jun 2024

Abstract

The importance of assessing and estimating the impact of the COVID-19 pandemic on financial markets and economic activity has attracted the interest of researchers and practitioners in recent years. The proposed study aims to explore the pandemic’s impact on the economic activity of six Euro area economies. A class of dynamic panel data models and their corresponding Seemingly Unrelated Regression (SUR) models are developed and applied to model the economic activity of six Eurozone countries. This class of models allows for common and country-specific covariates to affect the real growth, as well as for cross-sectional dependence in the error processes. Estimation and inference for this class of panel models are based on both Bayesian and classical techniques. Our findings reveal that significant heterogeneity exists among the different economies with respect to the explanatory/predictive factors. The impact of the COVID-19 pandemic varied across the Euro area economies under study. Nonetheless, the outbreak of the COVID-19 pandemic profoundly affected real economic activity across all regions and countries. As an exogenous shock of such magnitude, it caused a sharp increase in overall uncertainty that spread quickly across all sectors of the global economy.

Open AccessArticle

Predicting the Direction of NEPSE Index Movement with News Headlines Using Machine Learning

by

Keshab Raj Dahal, Ankrit Gupta and Nawa Raj Pokhrel

Econometrics 2024, 12(2), 16; https://doi.org/10.3390/econometrics12020016 - 11 Jun 2024

Abstract

Predicting stock market movement direction is a challenging task due to its fuzzy, chaotic, volatile, nonlinear, and complex nature. However, with advancements in artificial intelligence, abundant data availability, and improved computational capabilities, creating robust models capable of accurately predicting stock market movement is now feasible. This study aims to construct a predictive model using news headlines to predict stock market movement direction. It conducts a comparative analysis of five supervised classification machine learning algorithms—logistic regression (LR), support vector machine (SVM), random forest (RF), extreme gradient boosting (XGBoost), and artificial neural network (ANN)—to predict the next day’s movement direction of the close price of the Nepal Stock Exchange (NEPSE) index. Sentiment scores from news headlines are computed using the Valence Aware Dictionary for Sentiment Reasoning (VADER) and TextBlob sentiment analyzer. The models’ performance is evaluated based on sensitivity, specificity, accuracy, and the area under the receiver operating characteristic (ROC) curve (AUC). Experimental results reveal that all five models perform equally well when using sentiment scores from the TextBlob analyzer. Similarly, all models exhibit almost identical performance when using sentiment scores from the VADER analyzer, except for minor variations in AUC in SVM vs. LR and SVM vs. ANN. Moreover, models perform relatively better when using sentiment scores from the TextBlob analyzer compared to the VADER analyzer. These findings are further validated through statistical tests.

Open AccessArticle

Exponential Time Trends in a Fractional Integration Model

by

Guglielmo Maria Caporale and Luis Alberiko Gil-Alana

Econometrics 2024, 12(2), 15; https://doi.org/10.3390/econometrics12020015 - 31 May 2024

Abstract

This paper introduces a new modelling approach that incorporates nonlinear, exponential deterministic terms into a fractional integration framework. The proposed model is based on a specific test on fractional integration that is more general than the standard methods, which allow for only linear trends. Its limiting distribution is standard normal, and Monte Carlo simulations show that it performs well in finite samples. Three empirical examples confirm that the suggested specification captures the properties of the data adequately.

Open AccessArticle

Financial and Oil Market’s Co-Movements by a Regime-Switching Copula

by

Manel Soury

Econometrics 2024, 12(2), 14; https://doi.org/10.3390/econometrics12020014 - 24 May 2024

Abstract

Over the years, oil prices and financial stock markets have always had a complex relationship. This paper analyzes the interactions and co-movements between the oil market (WTI crude oil) and two major stock markets in Europe and the US (the Euro Stoxx 50 and the S&P 500) for the period from 1990 to 2023. For that, I use both the time-varying and the Markov copula models. The latter is an extension of the former, where the constant term of the dynamic dependence parameter is driven by a hidden two-state first-order Markov chain. It is also called the dynamic regime-switching (RS) copula model. To estimate the model, I use the inference function for margins (IFM) method together with Kim’s filter for the Markov switching process. The marginals of the returns are modeled by the GARCH and GAS models. Empirical results show that the RS copula model seems adequate to measure and evaluate the time-varying and non-linear dependence structure. Two persistent regimes of high and low dependency have been detected. There was a jump in the co-movements of both pairs during high regimes associated with instability and crises. In addition, the extreme dependence between crude oil and US/European stock markets is time-varying but also asymmetric, as indicated by the SJC copula. The correlation in the lower tail is higher than that in the upper. Hence, oil and stock returns are more closely linked and tend to co-move more in bullish periods than in bearish periods. Finally, the dependence between WTI crude oil and the S&P 500 stock index seems to be more affected by exogenous shocks and instability than that between the oil and European stock markets.

Open AccessArticle

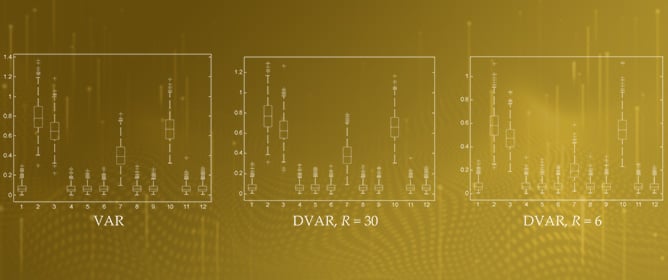

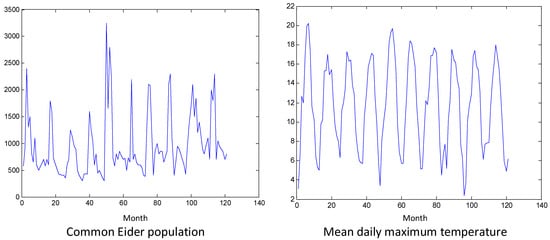

On the Validity of Granger Causality for Ecological Count Time Series

by

Konstantinos G. Papaspyropoulos and Dimitris Kugiumtzis

Econometrics 2024, 12(2), 13; https://doi.org/10.3390/econometrics12020013 - 9 May 2024

Abstract

Knowledge of causal relationships is fundamental for understanding the dynamic mechanisms of ecological systems. To detect such relationships from multivariate time series, Granger causality, an idea first developed in econometrics, has been formulated in terms of vector autoregressive (VAR) models. Granger causality for count time series, often seen in ecology, has rarely been explored, and this may be due to the difficulty in estimating autoregressive models on multivariate count time series. The present research investigates the appropriateness of VAR-based Granger causality for ecological count time series by conducting a simulation study using several systems of different numbers of variables and time series lengths. VAR-based Granger causality for count time series (DVAR) seems to be estimated efficiently even for two counts in long time series. For all the studied time series lengths, DVAR for more than eight counts matches the Granger causality effects obtained by VAR on the continuous-valued time series well. The positive results, also in two ecological time series, suggest the use of VAR-based Granger causality for assessing causal relationships in real-world count time series even with few distinct integer values or many zeros.

Open AccessArticle

Short-Term Hourly Ozone Concentration Forecasting Using Functional Data Approach

by

Ismail Shah, Naveed Gul, Sajid Ali and Hassan Houmani

Econometrics 2024, 12(2), 12; https://doi.org/10.3390/econometrics12020012 - 5 May 2024

Abstract

Air pollution, especially ground-level ozone, poses severe threats to human health and ecosystems. Accurate forecasting of ozone concentrations is essential for reducing its adverse effects. This study aims to use the functional time series approach to model ozone concentrations, a method less explored in the literature, and compare it with traditional time series and machine learning models. To this end, the ozone concentration hourly time series is first filtered for yearly seasonality using smoothing splines that lead us to the stochastic (residual) component. The stochastic component is modeled and forecast using a functional autoregressive model (FAR), where each daily ozone concentration profile is considered a single functional datum. For comparison purposes, different traditional and machine learning techniques, such as autoregressive integrated moving average (ARIMA), vector autoregressive (VAR), neural network autoregressive (NNAR), random forest (RF), and support vector machine (SVM), are also used to model and forecast the stochastic component. Once the forecast from the yearly seasonality component and stochastic component are obtained, both are added to obtain the final forecast. For empirical investigation, data consisting of hourly ozone measurements from Los Angeles from 2013 to 2017 are used, and one-day-ahead out-of-sample forecasts are obtained for a complete year. Based on the evaluation metrics, such as

Open Access Article

Stein-like Common Correlated Effects Estimation under Structural Breaks

by

Shahnaz Parsaeian

Econometrics 2024, 12(2), 11; https://doi.org/10.3390/econometrics12020011 - 18 Apr 2024

Abstract

This paper develops a Stein-like combined estimator for large heterogeneous panel data models under common structural breaks. The model allows for cross-sectional dependence through a general multifactor error structure. By utilizing the common correlated effects (CCE) estimation technique, we propose a Stein-like combined estimator of the CCE full-sample estimator (i.e., estimation using both the pre-break and post-break observations) and the CCE post-break estimator (i.e., estimation using only the post-break sample observations). The proposed Stein-like combined estimator benefits from exploiting the pre-break sample observations. We derive the optimal combination weight by minimizing the asymptotic risk. We show the superiority of the CCE Stein-like combined estimator over the CCE post-break estimator in terms of the asymptotic risk. Further, we establish the asymptotic properties of the CCE mean group Stein-like combined estimator. The finite sample performance of our proposed estimator is investigated using Monte Carlo experiments and an empirical application of predicting the output growth of industrialized countries.

Open Access Article

The Gini and Mean Log Deviation Indices of Multivariate Inequality of Opportunity

by

Marek Kapera and Martyna Kobus

Econometrics 2024, 12(2), 10; https://doi.org/10.3390/econometrics12020010 - 17 Apr 2024

Abstract

►▼

Show Figures

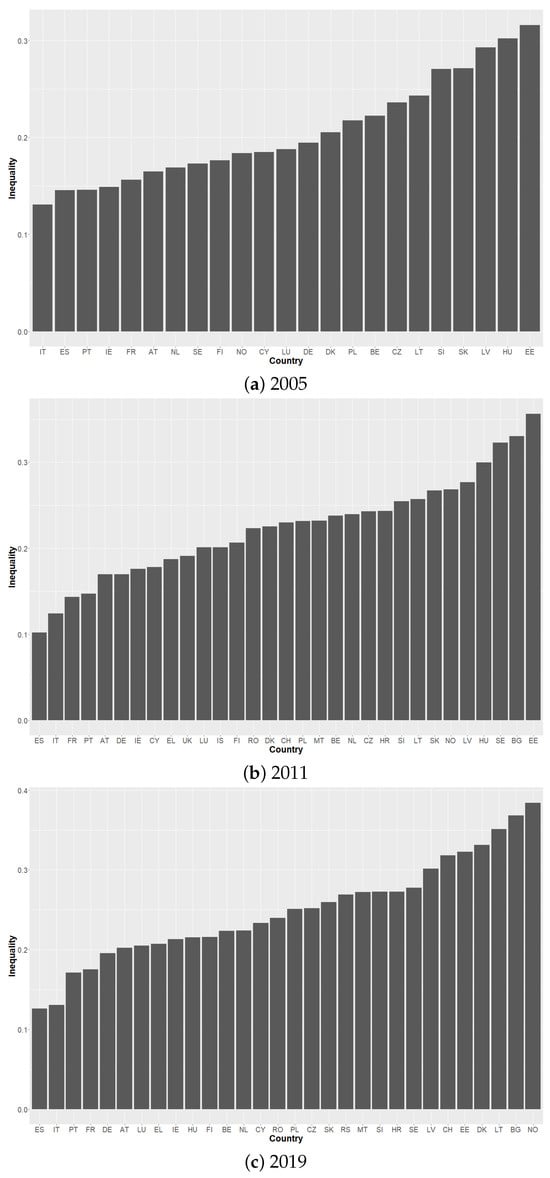

The most common approach to measuring inequality of opportunity in income is to apply the Gini inequality index or the Mean Log Deviation (MLD) index to a smoothed distribution (i.e., a distribution of type mean incomes). We show how this approach can be

[...] Read more.

The most common approach to measuring inequality of opportunity in income is to apply the Gini inequality index or the Mean Log Deviation (MLD) index to a smoothed distribution (i.e., a distribution of type mean incomes). We show how this approach can be naturally extended to include life outcomes other than income (e.g., health, education). We propose two measures: the Gini and MLD indices of multivariate inequality of opportunity. We show that they can be decomposed into the contribution of each outcome and the dependence of the outcomes. Using these measures, we calculate inequality of opportunity in health and income across European countries.
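
As a rough illustration of the univariate building blocks named in this abstract (not the authors' multivariate measures), the sketch below smooths an income vector by replacing each income with its type mean and then computes the Gini and MLD indices of the smoothed distribution. The function names and the sample data are hypothetical and chosen only for illustration.

```python
import numpy as np

def smooth_by_type(income, types):
    """Replace each income with the mean income of its type (assumed 1-D inputs)."""
    income = np.asarray(income, dtype=float)
    _, inv = np.unique(np.asarray(types), return_inverse=True)
    sums = np.bincount(inv, weights=income)   # total income per type
    counts = np.bincount(inv)                 # number of individuals per type
    return (sums / counts)[inv]

def gini(x):
    """Gini index via the rank formula on sorted, non-negative values."""
    x = np.sort(np.asarray(x, dtype=float))
    n = x.size
    ranks = np.arange(1, n + 1)
    return 2.0 * np.sum(ranks * x) / (n * np.sum(x)) - (n + 1.0) / n

def mld(x):
    """Mean Log Deviation: log of the mean minus the mean of the logs (x > 0)."""
    x = np.asarray(x, dtype=float)
    return np.log(x.mean()) - np.mean(np.log(x))

# Hypothetical data: four individuals in two types
smoothed = smooth_by_type([10, 20, 30, 40], ["a", "a", "b", "b"])
print(smoothed, gini(smoothed), mld(smoothed))
```

Both indices equal zero when every type has the same mean income, which is why they can be read as measures of between-type inequality in this setting.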

Open Access Article

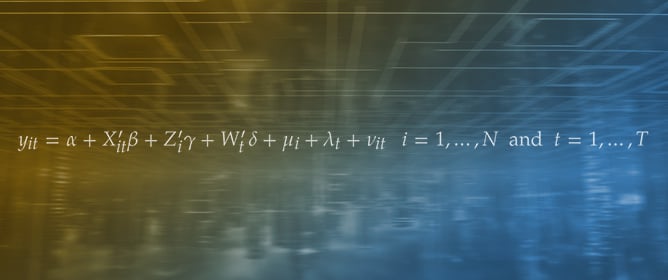

A Pretest Estimator for the Two-Way Error Component Model

by

Badi H. Baltagi, Georges Bresson and Jean-Michel Etienne

Econometrics 2024, 12(2), 9; https://doi.org/10.3390/econometrics12020009 - 16 Apr 2024

Abstract

►▼

Show Figures

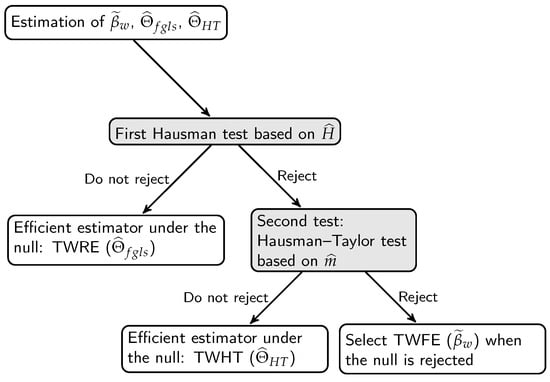

For a panel data linear regression model with both individual and time effects, empirical studies select the two-way random-effects (TWRE) estimator if the Hausman test based on the contrast between the two-way fixed-effects (TWFE) estimator and the TWRE estimator is not rejected. Alternatively,

[...] Read more.

For a panel data linear regression model with both individual and time effects, empirical studies select the two-way random-effects (TWRE) estimator if the Hausman test based on the contrast between the two-way fixed-effects (TWFE) estimator and the TWRE estimator does not reject the null hypothesis. Alternatively, they select the TWFE estimator in cases where this Hausman test rejects the null hypothesis. Not all the regressors may be correlated with these individual and time effects. The one-way Hausman-Taylor model has been generalized to the two-way error component model and allows some but not all regressors to be correlated with these individual and time effects. This paper proposes a pretest estimator for this two-way error component panel data regression model based on two Hausman tests. The first Hausman test is based upon the contrast between the TWFE and the TWRE estimators. The second Hausman test is based on the contrast between the two-way Hausman and Taylor (TWHT) estimator and the TWFE estimator. The Monte Carlo results show that this pretest estimator is always second best in MSE performance compared to the efficient estimator, whether the model is random-effects, fixed-effects or Hausman and Taylor. This paper generalizes the one-way pretest estimator to the two-way error component model.
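
To make the two-test logic concrete, here is a minimal sketch of a Hausman contrast statistic and a sequential selection rule. The abstract does not spell out the decision sequence, so the ordering used here (TWRE if the first test does not reject, otherwise TWHT if the second test does not reject, otherwise TWFE) is an assumption, as are the function names and the idea of passing critical values in by hand.

```python
import numpy as np

def hausman_statistic(b_fe, b_other, v_fe, v_other):
    """Quadratic form d' (V_fe - V_other)^+ d with d = b_fe - b_other."""
    d = np.asarray(b_fe, dtype=float) - np.asarray(b_other, dtype=float)
    v = np.asarray(v_fe, dtype=float) - np.asarray(v_other, dtype=float)
    # Pseudo-inverse guards against a singular variance difference
    return float(d @ np.linalg.pinv(v) @ d)

def pretest_choice(h_fe_vs_re, h_fe_vs_ht, crit_re, crit_ht):
    """Assumed sequential rule; critical values are supplied by the caller."""
    if h_fe_vs_re <= crit_re:
        return "TWRE"   # first test does not reject
    if h_fe_vs_ht <= crit_ht:
        return "TWHT"   # first rejects, second does not
    return "TWFE"       # both tests reject

# Hypothetical one-regressor example with a 5% chi-square(1) critical value
h1 = hausman_statistic([1.0], [0.5], [[0.5]], [[0.25]])
print(h1, pretest_choice(h1, 0.0, 3.841, 3.841))
```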

Open Access Article

Biases in the Maximum Simulated Likelihood Estimation of the Mixed Logit Model

by

Maksat Jumamyradov, Murat Munkin, William H. Greene and Benjamin M. Craig

Econometrics 2024, 12(2), 8; https://doi.org/10.3390/econometrics12020008 - 27 Mar 2024

Abstract

In a recent study, it was demonstrated that the maximum simulated likelihood (MSL) estimator produces significant biases when applied to the bivariate normal and bivariate Poisson-lognormal models. The study’s conclusion suggests that similar biases could be present in other models generated by correlated bivariate normal structures, which include several commonly used specifications of the mixed logit (MIXL) models. This paper conducts a simulation study analyzing the MSL estimation of the error components (EC) MIXL. We find that the MSL estimator produces significant biases in the estimated parameters. The problem becomes worse when the true value of the variance parameter is small and the correlation parameter is large in magnitude. In some cases, the biases in the estimated marginal effects are as large as 12% of the true values. These biases are largely invariant to increases in the number of Halton draws.
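
For readers unfamiliar with Halton draws, the following sketch generates a one-dimensional Halton sequence with the standard radical-inverse construction. It only illustrates the kind of quasi-random draws mentioned in the abstract; it is not the authors' simulation code, and the function names are hypothetical.

```python
def halton(index, base):
    """Radical inverse of a positive integer index in the given prime base."""
    result, fraction = 0.0, 1.0
    i = index
    while i > 0:
        fraction /= base
        result += fraction * (i % base)  # next digit of index in this base
        i //= base
    return result

def halton_sequence(n, base=2):
    """First n Halton points in (0, 1), starting from index 1."""
    return [halton(i, base) for i in range(1, n + 1)]

print(halton_sequence(4, base=2))  # [0.5, 0.25, 0.75, 0.125]
```

In simulated likelihood applications such points are typically mapped through an inverse normal CDF to obtain draws of the random components; that step is omitted here.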

Special Issues

Special Issue in

Econometrics

Advancements in Macroeconometric Modeling and Time Series Analysis

Guest Editor: Julien Chevallier

Deadline: 31 March 2025

Special Issue in

Econometrics

Innovations in Bayesian Econometrics: Theory, Techniques, and Economic Analysis

Guest Editor: Deborah Gefang

Deadline: 31 May 2025