Technological Educational Institute Of Crete

Department Of Applied Informatics and

Multimedia

Neural Networks Laboratory

Introduction To

Neural Networks

Prof. George Papadourakis,

Ph.D.

Part I

Introduction and

Architectures

Introduction To

Neural Networks

The development of neural networks dates back to the early 1940s. The field experienced an upsurge in popularity in the late 1980s, as a result of the discovery of new techniques and of general advances in computer hardware technology.

Some NNs are models of biological neural networks

and some are not, but historically, much of the

inspiration for the field of NNs came from the desire

to produce artificial systems capable of sophisticated,

perhaps intelligent, computations similar to those

that the human brain routinely performs, and thereby

possibly to enhance our understanding of the human

brain.

Most NNs have some sort of training rule. In other

words, NNs learn from examples (as children learn to

recognize dogs from examples of dogs) and exhibit

some capability for generalization beyond the

training data.

Neural computing must not be considered as a

competitor to conventional computing. Rather, it

should be seen as complementary as the most

successful neural solutions have been those which

operate in conjunction with existing, traditional

techniques.

Slide 2

Neural Network

Techniques

Computers have to be explicitly

programmed

Analyze the problem to be solved.

Write the code in a programming language.

Neural networks learn from

examples

No requirement of an explicit description of

the problem.

No need for a programmer.

The neural computer adapts itself during a

training period, based on examples of

similar problems even without a desired

solution to each problem. After sufficient

training the neural computer is able to relate

the problem data to the solutions, inputs to

outputs, and it is then able to offer a viable

solution to a brand new problem.

Able to generalize or to handle incomplete

data.

Slide 3

NNs vs Computers

Digital Computers

Deductive Reasoning.

We apply known rules

to input data to

produce output.

Computation is

centralized,

synchronous, and

serial.

Memory is packeted,

literally stored, and

location addressable.

Not fault tolerant. One

transistor goes and it

no longer works.

Exact.

Static connectivity.

Applicable if well

defined rules with

precise input data.

Neural Networks

Inductive Reasoning.

Given input and output

data (training

examples), we construct

the rules.

Computation is

collective,

asynchronous, and

parallel.

Memory is distributed,

internalized, short term

and content

addressable.

Fault tolerant,

redundancy, and sharing

of responsibilities.

Inexact.

Dynamic connectivity.

Applicable if rules are

unknown or

complicated, or if data

are noisy or partial.

Slide 4

Applications of

NNs

classification

In marketing: consumer spending pattern

classification

In defence: radar and sonar image classification

In agriculture & fishing: fruit and catch grading

In medicine: ultrasound and electrocardiogram image

classification, EEGs, medical diagnosis

recognition and identification

In general computing and telecommunications:

speech, vision and handwriting recognition

In finance: signature verification and bank note

verification

assessment

In engineering: product inspection monitoring and control

In defence: target tracking

In security: motion detection, surveillance image analysis

and fingerprint matching

forecasting and prediction

In finance: foreign exchange rate and stock market

forecasting

In agriculture: crop yield forecasting

In marketing: sales forecasting

In meteorology: weather prediction

Slide 5

What can you do

with an NN and

what not?

In principle, NNs can compute any

computable function, i.e., they can do

everything a normal digital computer can

do. Almost any mapping between vector

spaces can be approximated to arbitrary

precision by feedforward NNs.

In practice, NNs are especially useful for

classification and function approximation

problems usually when rules such as

those that might be used in an expert

system cannot easily be applied.

NNs are, at least today, difficult to apply

successfully to problems that concern

manipulation of symbols and memory.

And there are no methods for training

NNs that can magically create

information that is not contained in the

training data.

Slide 6

Who is concerned

with NNs?

Computer scientists want to find out about the

properties of non-symbolic information processing

with neural nets and about learning systems in

general.

Statisticians use neural nets as flexible, nonlinear

regression and classification models.

Engineers of many kinds exploit the capabilities of

neural networks in many areas, such as signal

processing and automatic control.

Cognitive scientists view neural networks as a

possible apparatus to describe models of thinking

and consciousness (High-level brain function).

Neuro-physiologists use neural networks to

describe and explore medium-level brain function

(e.g. memory, sensory system, motorics).

Physicists use neural networks to model

phenomena in statistical mechanics and for a lot

of other tasks.

Biologists use Neural Networks to interpret

nucleotide sequences.

Philosophers and some other people may also be

interested in Neural Networks for various reasons.

Slide 7

The Biological

Neuron

The brain is a collection of tens of billions of

interconnected neurons. Each neuron is a cell that

uses biochemical reactions to receive, process and

transmit information.

Each terminal button is connected to other neurons

across a small gap called a synapse.

A neuron's dendritic tree is connected to a

thousand neighbouring neurons. When one of

those neurons fires, a positive or negative charge is

received by one of the dendrites. The strengths of

all the received charges are added together

through the processes of spatial and temporal

summation.

Slide 8

The Key Elements

of Neural

Networks

Neural computing requires a number of

neurons, to be connected together into a

neural network. Neurons are arranged in

layers.

[Figure: a single neuron with inputs p1, p2, p3, weights w1, w2, w3, bias b and output a]

$a = f(p_1 w_1 + p_2 w_2 + p_3 w_3 + b) = f\left(\sum_i p_i w_i + b\right)$

Each neuron within the network is usually a

simple processing unit which takes one or

more inputs and produces an output. At each

neuron, every input has an associated weight

which modifies the strength of each input. The neuron simply adds together all the weighted inputs and the bias, and applies the activation function to calculate an output to be passed on.
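The single-neuron computation described above can be sketched in a few lines of Python. This is only an illustration: the names `neuron_output` and `logistic`, and the example input and weight values, are assumptions and do not come from the slides.

```python
import math

def logistic(n):
    """Logistic (sigmoid) activation, one common choice for f."""
    return 1.0 / (1.0 + math.exp(-n))

def neuron_output(inputs, weights, bias, activation):
    """Return a = f(sum_i p_i * w_i + b) for one neuron."""
    net = sum(p * w for p, w in zip(inputs, weights)) + bias
    return activation(net)

# Three inputs, three weights and a bias (hypothetical values).
a = neuron_output([1.0, 0.5, -1.0], [0.2, -0.4, 0.1], bias=0.1, activation=logistic)
print(a)
```

With these particular values the weighted sum plus bias is zero, so the logistic activation returns 0.5.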

Slide 9

Activation

functions

The activation function is generally nonlinear. Linear functions are limited because

the output is simply proportional to the input.
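A small sketch of why purely linear activations are limiting: two linear neurons in series compute exactly what one linear neuron could compute, so stacking them adds nothing. The function names and the weight values below are illustrative assumptions, not taken from the slides.

```python
import math

def step(n):
    """Hard-limit activation: 1 if n >= 0, else 0."""
    return 1.0 if n >= 0 else 0.0

def logistic(n):
    """Smooth, nonlinear activation with outputs in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-n))

def linear(n):
    """Linear activation: output proportional to the net input."""
    return n

def two_linear_neurons(p, w1=2.0, b1=1.0, w2=-3.0, b2=0.5):
    """Two linear neurons connected in series."""
    hidden = linear(w1 * p + b1)
    return linear(w2 * hidden + b2)

def one_linear_neuron(p, w=-6.0, b=-2.5):
    """A single linear neuron with w = w2*w1 and b = w2*b1 + b2."""
    return linear(w * p + b)

for p in (-2.0, 0.0, 0.5, 3.0):
    print(p, two_linear_neurons(p), one_linear_neuron(p))
```

Replacing `linear` in the hidden neuron with `logistic` or `step` breaks this equivalence, which is what lets multi-layer networks represent more than a single layer can.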

Slide 10

Training

methods

Supervised learning

In supervised training, both the inputs and the

outputs are provided. The network then

processes the inputs and compares its

resulting outputs against the desired outputs.

Errors are then propagated back through the

system, causing the system to adjust the

weights which control the network. This

process occurs over and over as the weights

are continually tweaked. The set of data which

enables the training is called the training set.

During the training of a network the same set

of data is processed many times as the

connection weights are ever refined.

Example architectures : Multilayer perceptrons

Unsupervised learning

In unsupervised training, the network is

provided with inputs but not with desired

outputs. The system itself must then decide

what features it will use to group the input

data. This is often referred to as self-organization or adaptation.

Example architectures : Kohonen, ART

Slide 11

Perceptrons

Neuron Model

The perceptron neuron produces a 1 if the net

input into the transfer function is equal to or

greater than 0, otherwise it produces a 0.
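The perceptron neuron described above can be sketched as follows. The hard-limit transfer function is the one stated on the slide; the training loop uses the classic perceptron learning rule, which the slide does not spell out and is included here as an assumption. The AND data set and the function names are hypothetical.

```python
def hardlim(n):
    """Perceptron transfer function: 1 if the net input is >= 0, else 0."""
    return 1 if n >= 0 else 0

def perceptron(p, w, b):
    """Output of a single perceptron neuron for input vector p."""
    return hardlim(sum(pi * wi for pi, wi in zip(p, w)) + b)

def train_perceptron(samples, epochs=20):
    """Classic perceptron rule (assumed): w += e * p, b += e, with e = target - output."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        errors = 0
        for p, t in samples:
            e = t - perceptron(p, w, b)
            if e != 0:
                errors += 1
                w = [wi + e * pi for wi, pi in zip(w, p)]
                b += e
        if errors == 0:  # every sample classified correctly
            break
    return w, b

# Logical AND is linearly separable, so a single perceptron can learn it.
AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(AND)
print(w, b)
```

The learned weights and bias define a straight-line decision boundary that separates the input (1, 1) from the other three.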

Architecture

Decision boundaries

Slide 12

Error Surface

[Figure: error contour and error surface, showing the sum squared error as a function of weight and bias]

Slide 13

Feedforward NNs

The basic structure of a feedforward Neural

Network

The learning rule modifies the weights according to

the input patterns that it is presented with. In a

sense, ANNs learn by example as do their biological

counterparts.

When the desired outputs are known we have

supervised learning or learning with a teacher.

Slide 14

An overview of

backpropagation

1. A set of examples for training the network is assembled. Each

case consists of a problem statement (which represents the

input into the network) and the corresponding solution (which

represents the desired output from the network).

2. The input data is entered into the network via the input layer.

3. Each neuron in the network processes the input data with the

resultant values steadily "percolating" through the network,

layer by layer, until a result is generated by the output layer.

4. The actual output of the network is compared to the expected output for that particular input. This results in an error value. The connection weights in the network are gradually adjusted, working backwards from the output layer, through the hidden layer, and to the input layer, until the correct output is produced. Fine tuning the weights in this way has the effect of teaching the network how to produce the correct output for a particular input, i.e. the network learns.
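The four steps above can be sketched for a tiny network with two inputs, two hidden neurons and one output. This is a minimal illustration, not the deck's own implementation: the sigmoid activation, the learning rate, the starting weights and the toy AND training set are all assumptions chosen so that the example trains quickly.

```python
import math

def sigmoid(n):
    return 1.0 / (1.0 + math.exp(-n))

def forward(x, W1, b1, W2, b2):
    """Steps 2-3: pass the input through the hidden layer to the output layer."""
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W1, b1)]
    y = sigmoid(sum(w * hi for w, hi in zip(W2, h)) + b2)
    return h, y

def sse(data, W1, b1, W2, b2):
    """Sum squared error over the whole training set."""
    return sum((t - forward(x, W1, b1, W2, b2)[1]) ** 2 for x, t in data)

def train(data, W1, b1, W2, b2, lr=0.5, epochs=2000):
    """Step 4: compare with the target and adjust weights from the output layer backwards."""
    for _ in range(epochs):
        for x, t in data:
            h, y = forward(x, W1, b1, W2, b2)
            delta_out = (t - y) * y * (1 - y)
            # Hidden deltas use the output weights as they were before this update.
            delta_hid = [delta_out * W2[j] * h[j] * (1 - h[j]) for j in range(len(h))]
            for j in range(len(h)):
                W2[j] += lr * delta_out * h[j]
                for i in range(len(x)):
                    W1[j][i] += lr * delta_hid[j] * x[i]
                b1[j] += lr * delta_hid[j]
            b2 += lr * delta_out
    return W1, b1, W2, b2

# Step 1: a training set of (input, desired output) pairs (toy AND problem).
data = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
W1, b1 = [[0.5, -0.4], [0.3, 0.8]], [0.1, -0.2]   # hypothetical starting weights
W2, b2 = [0.7, -0.6], 0.05

error_before = sse(data, W1, b1, W2, b2)
W1, b1, W2, b2 = train(data, W1, b1, W2, b2)
error_after = sse(data, W1, b1, W2, b2)
print(error_before, error_after)
```

Comparing the sum squared error before and after training shows the effect of the repeated weight adjustments.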

Slide 15

The Learning Rule

The delta rule is often utilized by the most

common class of ANNs called

backpropagational neural networks.

[Figure: network with input, output and desired output]

When a neural network is initially presented

with a pattern it makes a random guess as to

what it might be. It then sees how far its

answer was from the actual one and makes an

appropriate adjustment to its connection

weights.
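A minimal sketch of this guess-compare-adjust loop for a single linear neuron, using the delta rule in its usual form (weight change = learning rate x error x input). The target mapping t = 2p + 1, the learning rate and the function name are assumptions made for illustration.

```python
import random

def train_delta_rule(samples, lr=0.1, epochs=200, seed=0):
    """Delta rule for one linear neuron a = w*p + b."""
    rng = random.Random(seed)
    w, b = rng.uniform(-1, 1), rng.uniform(-1, 1)  # the initial "random guess"
    for _ in range(epochs):
        for p, t in samples:
            a = w * p + b          # the network's answer
            error = t - a          # how far it is from the desired output
            w += lr * error * p    # adjust the connection weight
            b += lr * error        # adjust the bias
    return w, b

# Hypothetical training set generated from t = 2p + 1.
samples = [(p, 2.0 * p + 1.0) for p in (-1.0, -0.5, 0.0, 0.5, 1.0)]
w, b = train_delta_rule(samples)
print(w, b)
```

Because the data are noise-free and the learning rate is small, the weight and bias settle close to the values that generated the data.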

Slide 16

The Insides of

Delta Rule

Backpropagation performs a gradient descent

within the solution's vector space towards a global

minimum.

The error surface itself is a hyperparaboloid but is

seldom smooth as is depicted in the graphic below.

Indeed, in most problems, the solution space is

quite irregular with numerous pits and hills which

may cause the network to settle down in a local

minimum which is not the best overall solution.
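The local-minimum problem can be illustrated with gradient descent on a one-dimensional error curve that has two valleys. The curve E(w) = w^4 - 3w^2 + w is a hypothetical stand-in for a real error surface, and the learning rate and step count are assumptions.

```python
def error(w):
    """Hypothetical error curve with a global and a local minimum."""
    return w ** 4 - 3 * w ** 2 + w

def gradient(w):
    """Derivative of the error curve."""
    return 4 * w ** 3 - 6 * w + 1

def descend(w, lr=0.01, steps=1000):
    """Plain gradient descent: repeatedly step against the gradient."""
    for _ in range(steps):
        w -= lr * gradient(w)
    return w

w_right = descend(2.0)    # starts on the right-hand slope
w_left = descend(-2.0)    # starts on the left-hand slope
print(w_right, error(w_right))
print(w_left, error(w_left))
```

Starting from w = 2.0 the descent settles in the shallower valley near w = 1.13, while starting from w = -2.0 it reaches the deeper one near w = -1.30, so the result depends on the starting weights.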

Slide 17

Early stopping

[Figure: error curves for the training data, validation data and test data]
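The slide only names the technique, so the following is a sketch of the usual procedure as an assumption: training continues while the validation error keeps improving and stops once it has failed to improve for a fixed number of epochs, keeping the weights from the best epoch. The function name, the `patience` parameter and the error values are hypothetical.

```python
def early_stopping_epoch(validation_errors, patience=3):
    """Return (best_epoch, best_error) under a simple patience-based stopping rule.

    validation_errors: validation error measured after each training epoch.
    patience: epochs without improvement tolerated before stopping.
    """
    best_epoch, best_error = 0, float("inf")
    for epoch, err in enumerate(validation_errors):
        if err < best_error:
            best_epoch, best_error = epoch, err
        elif epoch - best_epoch >= patience:
            break  # validation error has stopped improving: stop training
    return best_epoch, best_error

# Validation error falls, then rises as the network starts to overfit.
errors = [0.9, 0.7, 0.5, 0.45, 0.47, 0.5, 0.56, 0.4]
print(early_stopping_epoch(errors))
```

In this example training stops at epoch 6, so the later value 0.4 is never reached; the test data are held back and used only once, to estimate the performance of the network saved at the best epoch.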

Slide 18

Other architectures

Slide 19

Design

Considerations

What transfer function should be

used?

How many inputs does the

network need?

How many hidden layers does

the network need?

How many hidden neurons per

hidden layer?

How many outputs should the

network have?

There is no standard methodology for determining these values. Although there are some heuristics, the final values are determined by trial and error.

Slide 20

Time Delay NNs

A recurrent neural

network is one in

which the outputs

from the output

layer are fed back

to a set of input

units. This is in

contrast to feedforward networks,

where the outputs

are connected only

to the inputs of

units in

subsequent layers.

Neural networks of this kind are able to store

information about time, and therefore they are

particularly suitable for forecasting and control

applications: they have been used with considerable

success for predicting several types of time series.

Slide 21

TD NNs

applications

Adaptive Filter

Prediction example

Slide 22

Auto-associative

NNs

The auto-associative neural network is a special kind of MLP; in fact, it normally consists of two MLP networks connected "back to back". The other distinguishing feature of auto-associative networks is that they are trained with a target data set that is identical to the input data set.

In training, the network weights are adjusted until the

outputs match the inputs, and the values assigned to the

weights reflect the relationships between the various input

data elements. This property is useful in, for example, data

validation: when invalid data is presented to the trained

neural network, the learned relationships no longer hold and

it is unable to reproduce the correct output. Ideally, the

match between the actual and correct outputs would reflect

the closeness of the invalid data to valid values. Auto-associative neural networks are also used in data

compression applications.

Slide 23

Recurrent

Networks

Elman Networks

Hopfield

Slide 24

Self Organising

Maps (Kohonen)

The Self Organising Map or Kohonen network uses

unsupervised learning.

Kohonen networks have a single layer of units and,

during training, clusters of units become associated

with different classes (with statistically similar

properties) that are present in the training data.

The Kohonen network is useful in clustering

applications.

Slide 25

Normalization

Inputs must lie on a hypersphere of unit radius: $\sum_i x_i^2 = 1$

The classical method: $x_i' = \dfrac{x_i}{\sqrt{\sum_i x_i^2}}$

The dimension shrinks from n to n-1: (-2,1,3) and (-4,2,6) become the same.

Z-axis normalization (composite inputs): the n actual inputs, with $x_i \in [-1, 1]$, are scaled as $x_i' = f x_i$ with $f = 1/\sqrt{n}$, and 1 synthetic input $s = f \sqrt{n - \sum_i x_i^2}$ is added.

[Figure: n inputs + 1 synthetic input connected to m Kohonen neurons]
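The remark that (-2,1,3) and (-4,2,6) become the same under the classical method can be checked numerically. The sketch below also includes z-axis normalization in its commonly described form (scale factor 1/sqrt(n) plus one synthetic input); that form is an assumption here, since the slide's own equations are hard to read. Function names are hypothetical.

```python
import math

def classical_normalize(x):
    """Divide by the vector length so the result lies on the unit hypersphere.

    Fails for the all-zero vector, whose length is 0.
    """
    length = math.sqrt(sum(xi * xi for xi in x))
    return [xi / length for xi in x]

def z_axis_normalize(x):
    """Assumed z-axis form: scale inputs in [-1, 1] by f and append a synthetic input s."""
    n = len(x)
    f = 1.0 / math.sqrt(n)
    s = f * math.sqrt(n - sum(xi * xi for xi in x))
    return [f * xi for xi in x] + [s]

a = classical_normalize([-2, 1, 3])
b = classical_normalize([-4, 2, 6])
print(a, b)   # same direction, so the same normalized vector

za = z_axis_normalize([-0.2, 0.1, 0.3])
zb = z_axis_normalize([-0.4, 0.2, 0.6])
print(za, zb)  # unit length, but the two vectors stay distinct
```

The classical method discards the magnitude of the input, whereas the synthetic input keeps that information while still placing every vector on the unit hypersphere.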

Slide 26

Learning procedure

In the beginning the weights take random values.

For an input vector we declare the winning neuron.

Weights are changed in the winner's neighborhood.

Iterate until equilibrium.

Basic Math Relations

$w_c(t+1) = w_c(t) + h_{ci}(t)\,[x(t) - w_c(t)]$

$d_j = \sum_i (o_i - w_{ij})^2$

Slide 27

Neighborhood

kernel function

$h_{ci}(t) = \alpha(t)\,\exp\left(-\dfrac{\|r_c - r_i\|^2}{2\sigma^2(t)}\right), \qquad \alpha(t) = \dfrac{A}{B + t}$
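The learning procedure and the neighborhood kernel from the two slides above can be sketched for a one-dimensional chain of Kohonen neurons. This is a minimal illustration under stated assumptions: the Gaussian kernel with learning rate A/(B + t), the shrinking neighborhood width, all parameter values and the function names are hypothetical choices, not values taken from the slides.

```python
import math
import random

def winner(x, weights):
    """Index of the neuron whose weight vector is closest to x (squared distance)."""
    dists = [sum((xi - wi) ** 2 for xi, wi in zip(x, w)) for w in weights]
    return dists.index(min(dists))

def kernel(c, i, t, A=1.0, B=10.0, sigma0=2.0, tau=50.0):
    """Gaussian neighborhood kernel between winner c and neuron i at step t (assumed form)."""
    alpha = A / (B + t)                                # decaying learning rate
    sigma = max(sigma0 * math.exp(-t / tau), 0.1)      # shrinking width, floored
    return alpha * math.exp(-((c - i) ** 2) / (2 * sigma ** 2))

def train_som(data, m, steps, seed=0):
    """Random start, find the winner, update its neighborhood, repeat."""
    rng = random.Random(seed)
    n = len(data[0])
    weights = [[rng.random() for _ in range(n)] for _ in range(m)]
    for t in range(steps):
        x = data[t % len(data)]
        c = winner(x, weights)
        for i in range(m):
            h = kernel(c, i, t)
            weights[i] = [wi + h * (xi - wi) for wi, xi in zip(weights[i], x)]
    return weights

data = [[0.1, 0.1], [0.9, 0.9], [0.1, 0.9], [0.9, 0.1]]
weights = train_som(data, m=5, steps=200)
for w in weights:
    print(w)
```

Each update moves a neuron's weights a fraction of the way towards the current input, and that fraction is largest for the winner and falls off with distance along the chain.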

Slide 28

Self Organizing

Maps

Slide 29

Introduction To

Neural Networks

Prof. George Papadourakis,

Ph.D.

Part II

Application

Development

And Portfolio

Characteristics of

NNs

Learning from experience: Complex, difficult-to-solve problems, but with plenty of data that describe the problem

Generalizing from examples: Can interpolate from previous learning and give the correct response to unseen data

Rapid applications development: NNs are generic machines and quite independent from domain knowledge

Adaptability: Adapts to a changing environment, if properly designed

Computational efficiency: Although the training of a neural network demands a lot of computer power, a trained network demands almost nothing in recall mode

Non-linearity: Not based on linear assumptions about the real world

Slide 31

Neural Networks

Projects Are

Different

Projects are data driven: Therefore, there is a

need to collect and analyse data as part of the

design process and to train the neural network.

This task is often time-consuming and the effort,

resources and time required are frequently

underestimated

It is not usually possible to specify fully the

solution at the design stage: Therefore, it is

necessary to build prototypes and experiment

with them in order to resolve design issues. This

iterative development process can be difficult to

control

Performance, rather than speed of processing, is

the key issue: More attention must be paid to

performance issues during the requirements

analysis, design and test phases. Furthermore,

demonstrating that the performance meets the

requirements can be particularly difficult.

These issues affect the following areas :

Project planning

Project management

Project documentation

Slide 32

Project life cycle

Application Identification

Feasibility Study

Development and validation of prototype

Build Train and Test

Optimize prototype

Data Collection

Design Prototype

Validate prototype

Implement System

Validate System

Slide 33

NNs in real

problems

Rest of System

Raw data

Pre-processing

Feature vector

Input encode

Network inputs

Neural Network

Network outputs

Output decode

Decoded outputs

Post-processing

Rest of System

Slide 34

Pre-processing

Transform data to NN inputs

Selection of the most relevant

data and outlier removal

Minimizing network inputs

Applying a mathematical or

statistical function

Encoding textual data from a

database

Feature extraction

Principal components analysis

Waveform / Image analysis

Coding pre-processing data to

network inputs
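Three of the pre-processing steps listed above (outlier removal, applying a mathematical function, and encoding textual data) can be sketched as small helper functions. The function names, the two-standard-deviation threshold and the [-1, 1] target range are illustrative assumptions.

```python
def remove_outliers(values, k=2.0):
    """Drop values more than k standard deviations away from the mean."""
    mean = sum(values) / len(values)
    std = (sum((v - mean) ** 2 for v in values) / len(values)) ** 0.5
    return [v for v in values if std == 0 or abs(v - mean) <= k * std]

def scale_to_unit_range(values):
    """Linearly rescale values to the range [-1, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [2 * (v - lo) / (hi - lo) - 1 for v in values]

def one_hot(label, categories):
    """Encode a textual category as one network input per category."""
    return [1.0 if label == c else 0.0 for c in categories]

readings = [10, 11, 9, 10, 12, 95]          # 95 is an obvious outlier
clean = remove_outliers(readings)
print(clean)
print(scale_to_unit_range([0, 5, 10]))
print(one_hot("B", ["A", "B", "C"]))
```

Outliers are removed before scaling because a single extreme value would otherwise compress all the remaining inputs into a narrow part of the range.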

Slide 35

Fibre Optic

Image

Transmission

Transmitting image without the

distortion

In addition to transmitting data, fibre optics also offer a potential for transmitting images. Unfortunately, images transmitted over long-distance fibre optic cables are more susceptible to distortion due to noise.

A large Japanese telecommunications company decided to use neural computing to tackle this problem. Rather than trying to make the transmission line as perfect and noise-free as possible, they used a neural network at the receiving end to reconstruct the distorted image back into its original form.

Related Applications : Recognizing Images

from Noisy data

Speech recognition

Facial identification

Forensic data analysis

Battlefield scene analysis

Slide 36

TV Picture

Quality Control

Assessing picture quality

One of the main quality controls in television manufacture is a test of

picture quality when interference is present. Manufacturers have tried to

automate the tests, firstly by analysing the pictures for the different

factors that affect picture quality as seen by a customer, and then by

combining the different factors measured into an overall quality

assessment. Although the various factors can be measured accurately, it

has proved very difficult to combine them into a single measure of quality

because they interact in very complex ways.

Neural networks are well suited to problems where many factors combine

in ways that are difficult to analyse. ERA Technology Ltd, working for the

UK Radio Communications Agency, trained a neural network with the

results from a range of human assessments. A simple network proved

easy to train and achieved excellent results on new tests. The neural

network was also very fast and reported immediately.

The neural system is able to carry out the range of required testing far more quickly than a human assessor, and at far lower cost. This enables manufacturers to increase the sampling rate and achieve higher quality, as well as reducing the cost of their current level of quality control.

Related Applications : Signal Analysis

Testing equipment for electromagnetic compatibility (EMC)

Testing faulty equipment

Switching car radios between alternative transmitters

Slide 37

Adaptive Inverse

Control

NNs can be used in adaptive control applications.

The top block diagram shows the training of the

inverse model. Essentially, the neural network is

learning to recreate the input that created the

current output of the plant. Once properly trained,

the inverse model (which is another NN) can be used

to control the plant since it can create the necessary

control signals to create the desired system output.

Block diagram for neural network adaptive control

A computerized system for adaptive control

Slide 38

Chemical

Manufacture

Getting the right mix

In a chemical tank various catalysts are added to the base

ingredients at differing rates to speed up the chemical processes

required. Viscosity has to be controlled very carefully, since

inaccurate control leads to poor quality and hence costly wastage

The system was trained on data recorded from the production

line. Once trained, the neural network was found to be able to

predict accurately over the three-minute measurement delay of

the viscometer, thereby providing an immediate reading of the

viscosity in the reaction tank. This predicted viscosity will be used

by a manufacturing process computer to control the

polymerisation tank.

A more effective modelling tool

Speech recognition (signal analysis)

Environmental control

Power demand analysis

Slide 39

Stock Market

Prediction

Improving portfolio returns

A major Japanese securities company decided to use neural

computing in order to develop better prediction models. A neural

network was trained on 33 months' worth of historical data. This

data contained a variety of economic indicators such as turnover,

previous share values, interest rates and exchange rates. The

network was able to learn the complex relations between the

indicators and how they contribute to the overall prediction. Once

trained it was then in a position to make predictions based on "live"

economic indicators.

The neural network-based system is able to make faster and more

accurate predictions than before. It is also more flexible since it can be

retrained at any time in order to accommodate changes in stock

market trading conditions. Overall the system outperforms statistical

methods by 19%, which in the case of a 1 million portfolio

means a gain of 190,000. The system can therefore make a

considerable difference on returns.

Making predictions based on key indicators

Predicting gas and electricity supply and demand

Predicting sales and customer trends

Predicting the route of a projectile

Predicting crop yields

Slide 40

Oil Exploration

Getting the right signal

The vast quantities of seismic data involved are cluttered with noise and are highly dependent on the location being investigated. Classical statistical analysis techniques lose their effectiveness when the data is noisy and comes from an environment not previously encountered. Even a small improvement in correctly identifying first break signals could result in a considerable return on investment.

A neural network was trained on a set of traces selected from a representative set of seismic records, each of which had their first break signals highlighted by an expert.

The neural network achieves better than 95% accuracy, easily outperforming existing manual and computer-based methods. As well as being more accurate, the system also achieves an 88% improvement in the time taken to identify first break signals. Considerable cost savings have been made as a result.

Analysing signals buried in background noise

Defence radar and sonar analysis

Medical scanner analysis

Radio astronomy signal analysis

Slide 41

Automated

Industrial

Inspection

Making better pizza

The design of an industrial inspection system is specific to a particular

task and product, such as examining a particular kind of pizza. If the

system was required to examine a different kind of pizza then it would

need to be completely re-engineered. These systems also require stable

operating environments, with fixed lighting conditions and precise

component alignment on the conveyer belt.

A neural network was trained by personnel in the Quality Assurance

Department to recognise different variations of the item being inspected.

Once trained, the network was then able to identify deviant or defective

items.

If requirements change, for example the need to identify a different kind

of ingredient in a pizza or the need to handle a totally new type of pizza

altogether, the neural network is simply retrained. There is no need to

perform a costly system re-engineering exercise. Costs are therefore

saved in system maintenance and production line down time.

Automatic inspection of components

Inspecting paintwork on cars

Checking bottles for cracks

Checking printed circuit boards for surface defects

Slide 42

A Brief

Introduction To

Neural Networks

Prof. George Papadourakis, Ph.D.

Part III

Neural Networks

Hardware

Hardware vs

Software

Implementing your Neural Network in special hardware can

entail a substantial investment of your time and money:

the cost of the hardware

cost of the software to execute on the hardware

time and effort to climb the learning curve to master the use of

the hardware and software.

Before making this investment, you would like to be sure it

is worth it.

A scan of applications in a typical NNW conference

proceedings will show that many, if not most, use

feedforward networks with 10-100 inputs, 10-100 hidden

units, and 1-10 output units.

A forward pass through networks of this size will run in

milliseconds on a Pentium.

Training may take overnight but if only done once or

occasionally, this is not usually a problem.

Most applications involve a number of steps, many not

NNW related, that cannot be made parallel. So Amdahl's

law limits the overall speedup from your special hardware.
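Amdahl's law can be made concrete with a short calculation. If a fraction P of the total run time can be accelerated by a factor S, the overall speedup is 1 / ((1 - P) + P / S). The function name and the 40% and 100x figures below are hypothetical values chosen for illustration.

```python
def amdahl_speedup(accelerated_fraction, hardware_speedup):
    """Overall speedup when only part of the run time benefits from the hardware."""
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if hardware_speedup <= 0:
        raise ValueError("hardware_speedup must be positive")
    serial = 1.0 - accelerated_fraction
    return 1.0 / (serial + accelerated_fraction / hardware_speedup)

# Suppose the neural network accounts for 40% of the run time and
# special hardware makes that part 100 times faster.
print(amdahl_speedup(0.4, 100))
```

In this example the whole application runs only about 1.66 times faster, and no accelerator, however fast, could push it beyond 1 / 0.6, roughly 1.67 times.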

Intel x86 series chips and other von Neumann processors have grown rapidly in speed, plus one can take advantage of the huge amount of readily available software.

One quickly begins to see why the business of Neural

Network hardware has not boomed the way some in the

field expected back in the 1980s.

Slide 44

Applications

of Hardware NNWs

While not yet as successful as NNWs in

software, there are in fact hardware NNWs

hard at work in the real world. For example:

OCR (Optical Character Recognition)

Adaptive Solutions high volume form and

image capture systems.

Ligature Ltd. OCR-on-a-Chip

Voice Recognition

Sensory Inc. RSC Microcontrollers and ASSP

speech recognition specific chips.

Traffic Monitoring

Nestor TrafficVision Systems

High Energy Physics

Online data filter at H1 electron-proton collider

experiment in Hamburg using Adaptive

Solutions CNAPS boards.

However, most NNW applications today are

still run with conventional software

simulation on PCs and workstations with no

special hardware add-ons.

Slide 45

NNets in VLSI

Neural networks are parallel devices, but they are usually implemented on traditional von Neumann architectures. Hardware implementations of NNs also exist. Such hardware includes digital and analog hardware chips, PC accelerator boards, and multi-board neurocomputers.

Digital

Slice Architectures

Multi-processor Chips

Radial Basis Functions

Other Digital Designs

Analog

Hybrid

Optical hardware

Slide 46

NNW Features

Neural Network architecture(s)

Programmable or hardwired

network(s)

On-chip learning or chip-in-the-loop

training

Low, medium or high number of

parallel processing elements (PEs)

Maximum network size.

Can chips be chained together to

increase network size?

Bits of precision (estimate for

analog)

Transfer function on-chip or off-chip, e.g. in a lookup table (LUT).

Accumulator size in bits.

Expensive or cheap
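The lookup-table option in the feature list above can be illustrated in software. The following is a minimal sketch under stated assumptions: a logistic sigmoid, 256 table entries, an input range of [-8, 8] and 8-bit outputs are all choices made here for illustration, not properties of any particular chip, and the names `build_sigmoid_lut` and `lut_activation` are hypothetical.

```python
import math


def build_sigmoid_lut(n_entries=256, x_min=-8.0, x_max=8.0, out_bits=8):
    """Tabulate a logistic sigmoid as unsigned integers of width `out_bits`."""
    out_max = (1 << out_bits) - 1
    step = (x_max - x_min) / (n_entries - 1)
    return [
        round(out_max / (1.0 + math.exp(-(x_min + i * step))))
        for i in range(n_entries)
    ]


def lut_activation(lut, x, x_min=-8.0, x_max=8.0):
    """Clamp x to the table range and return the nearest table entry."""
    x = min(max(x, x_min), x_max)
    index = round((x - x_min) / (x_max - x_min) * (len(lut) - 1))
    return lut[index]


lut = build_sigmoid_lut()
print(lut_activation(lut, -100.0), lut_activation(lut, 0.0), lut_activation(lut, 100.0))
```

The design trade-off is the one the list hints at: a table replaces an expensive exponential with a single memory read, at the cost of quantizing both the input (table size) and the output (bits of precision).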

Slide 47

NeuroComputers

Neurocomputers are defined here as

standalone systems with elaborate

hardware and software.

Examples:

Siemens Synapse 1 Neurocomputer:

Uses 8 of the MA-16 systolic array chips.

It resides in its own cabinet and communicates via

ethernet to a host workstation.

Peak performance of 3.2 billion multiplications (16-bit x

16-bit) and additions (48-bit) per sec. at 25MHz clock

rate.

Adaptive Solutions - CNAPServer

VME System

VME boards in a custom cabinet run

from a UNIX host via an ethernet link.

Boards come with 1 to 4 chips, and up

to two boards can be combined to give a total of 512

PEs.

Software includes a C-language

library, assembler, compiler, and a

package of NN algorithms.
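The peak figures quoted for the two neurocomputers above can be sanity-checked with simple arithmetic. This is a back-of-envelope sketch: the per-chip values it derives are inferred from the quoted totals, not stated in the slides, and the accumulator headroom estimate assumes signed two's-complement arithmetic.

```python
def ops_per_chip_per_cycle(peak_ops_per_sec, n_chips, clock_hz):
    """Multiply-add operations each chip must complete per clock to reach the peak."""
    return peak_ops_per_sec / (n_chips * clock_hz)


def pes_per_chip(total_pes, n_boards, chips_per_board):
    """Processing elements per chip implied by a system total."""
    return total_pes // (n_boards * chips_per_board)


# Synapse 1: 3.2 billion multiply-adds per second, 8 MA-16 chips, 25 MHz clock.
synapse1_per_chip = ops_per_chip_per_cycle(3.2e9, 8, 25e6)

# CNAPServer: 512 PEs from two boards of up to 4 chips each.
cnaps_pes = pes_per_chip(512, 2, 4)

# Worst-case 16-bit x 16-bit signed product is (-2**15) * (-2**15) = 2**30.
# A signed 48-bit accumulator holds values up to 2**47 - 1, so at least
# 2**16 such products can be summed before overflow becomes possible.
worst_product = (-2**15) * (-2**15)
safe_sum = 2**16 * worst_product

print(synapse1_per_chip, cnaps_pes, safe_sum <= 2**47 - 1)
```

The result of 16 operations per chip per cycle is consistent with the "16" in the MA-16 name, and the wide accumulator explains why 48-bit additions are paired with 16-bit multiplications.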

Slide 48

Analog & Hybrid

NNW Chips

Analog advantages:

Exploit physical properties to do network operations, thereby obtaining high speed and densities.

A common output line, for example, can sum current outputs from synapses to sum the neuron inputs.

Analog disadvantages:

Design can be very difficult because of the need to compensate for variations in manufacturing, in temperature, etc.

Analog weight storage is complicated, especially if non-volatility is required.

Weight*input must be linear over a wide range.

Hybrids combine digital and analog

technology to attempt to get the best of

both. Variations include:

Internal processing analog for speed but

weights set digitally, e.g. capacitors refreshed

periodically with DACs.

Pulse networks use rate or widths of pulses to

emulate amplitude of I/O and weights.
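The pulse-network idea above, where a pulse rate stands in for an amplitude, can be sketched in a few lines. This is an illustrative software model only, assuming values normalized to [0, 1] and a fixed observation window; the names `encode_rate` and `decode_rate` are hypothetical and no specific chip works exactly this way.

```python
def encode_rate(value, window=16):
    """Encode a value in [0, 1] as an evenly spread pulse train of `window` slots."""
    if not 0.0 <= value <= 1.0:
        raise ValueError("value must be in [0, 1]")
    n_pulses = round(value * window)
    train, acc = [], 0
    for _ in range(window):
        # Accumulate the target rate; emit a pulse each time a full slot is filled.
        acc += n_pulses
        if acc >= window:
            acc -= window
            train.append(1)
        else:
            train.append(0)
    return train


def decode_rate(train):
    """Recover the amplitude as the fraction of slots that contain a pulse."""
    return sum(train) / len(train)


train = encode_rate(0.3, window=16)
print(train, decode_rate(train))
```

The sketch makes the precision trade-off visible: with a window of N slots the amplitude is resolved to steps of 1/N, so more bits of precision require a longer observation time.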

Slide 49

NNW Accelerator

Cards

Another approach to dealing with the PC is to work with it in

partnership.

Accelerator cards reside in the expansion slots and are used

to speed up the NNW computations.

Cheaper than NeuroComputers.

Usually based on NNW chips but some just use fast digital

signal processors (DSPs) that do very fast multiply-accumulate

operations.

Examples:

IBM ZISC ISA and PCI Cards:

ZISC implements an RBF architecture with RCE learning (more ZISC discussion later).

ISA card holds up to 16 ZISC036 chips, giving 576 prototype neurons.

PCI card holds up to 19 chips for 684 prototypes.

PCI card can process 165,000 patterns/sec, where patterns are vectors of 64 8-bit elements.

California Scientific CNAPS accelerators:

Runs with CalSci's popular BrainMaker NNW software.

With either 4 or 8 chips (16 PEs/chip) to give 64 or 128 total PEs.

Up to 2.27 GCPS. See their Benchmarks.

Speeds can vary depending on transfer speeds of particular machines.

Hardware and software included.

DataFactory NeuroLution PCI Card:

contains up to four SAND/1 neurochips.

Cascadable SAND neurochips use a systolic architecture to do fast 4x4 matrix

multiplies and accumulates.

Four parallel 16-bit multipliers and eight 40-bit adders execute in one clock cycle.

The clock rate is 50 MHz.

With 4 chips, peak performance of the board is 800 MCPS.

Used with the NeuroLution Manager and Connect scripting language.

Feedforward neural networks with a maximum of 512 input neurons and three

hidden layers.

The activation function of the neurons can be programmed in a lookup table.

Kohonen feature maps and radial basis function networks also implemented.
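The card figures quoted above hang together arithmetically, which a short check makes explicit. This is a sketch under assumptions: the neurons-per-chip value is inferred from the quoted totals, and the SAND estimate assumes one connection per multiplier per clock cycle, which the slides do not state outright.

```python
def neurons_per_chip(total_neurons, n_chips):
    """Prototype neurons per chip implied by a card total (must divide evenly)."""
    per_chip, remainder = divmod(total_neurons, n_chips)
    if remainder:
        raise ValueError("total is not a whole multiple of the chip count")
    return per_chip


def sand_board_mcps(n_chips, multipliers_per_chip=4, clock_mhz=50):
    """Peak mega-connections per second, assuming one connection per multiplier per clock."""
    return n_chips * multipliers_per_chip * clock_mhz


def pattern_bytes_per_sec(patterns_per_sec=165_000, elements=64, bytes_per_element=1):
    """Input data rate implied by the ZISC PCI card's quoted pattern throughput."""
    return patterns_per_sec * elements * bytes_per_element


print(neurons_per_chip(576, 16), neurons_per_chip(684, 19))  # ZISC ISA and PCI cards
print(sand_board_mcps(4))                                    # NeuroLution with 4 SAND/1 chips
print(pattern_bytes_per_sec())                               # bytes of pattern data per second
```

Both ZISC cards imply the same number of neurons per chip, and four multipliers at 50 MHz on each of four chips reproduces the 800 MCPS board figure.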

Slide 50

OCNNs in VLSI

An optimization cellular neural network (OCNN) can be

implemented in VLSI. The OCNN concept is founded on the

concept of the cellular neural network (CNN), which is a

recursive neural network that comprises a

multidimensional array of mainly identical artificial

neural cells, wherein

1. Each cell is a dynamic subsystem with continuous state variables

2. Each cell is connected to only the few other cells that lie within a specified radius

A Typical n-by-m

Rectangular Cellular Neural

Network contains cells that

are connected to their nearest

neighbors only.

A "Smart" Optoelectronic Image

Sensor could include an OCNN

sandwiched between a planar array of

optical receivers and a planar array of

optical transmitters, along with circuitry

that would implement a programmable

synaptic-weight matrix memory. This

combination of optics and electronics

would afford fast processing of sensory

information within the sensor package.
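The local connectivity that defines a cellular neural network, as described on this slide, can be sketched by enumerating the neighbors of one cell in an n-by-m grid. This is a minimal illustration: the slide does not say how the radius is measured, so the square (Chebyshev) neighborhood used here is an assumption, and the function name `cnn_neighbors` is hypothetical.

```python
def cnn_neighbors(row, col, n_rows, n_cols, radius=1):
    """Cells within Chebyshev distance `radius` of (row, col), excluding the cell itself.

    Neighborhoods are clipped at the grid boundary, so edge and corner cells
    have fewer connections than interior cells.
    """
    return [
        (r, c)
        for r in range(max(0, row - radius), min(n_rows, row + radius + 1))
        for c in range(max(0, col - radius), min(n_cols, col + radius + 1))
        if (r, c) != (row, col)
    ]


# A 4-by-5 grid with nearest-neighbor connections (radius 1).
print(len(cnn_neighbors(1, 2, 4, 5)))  # interior cell
print(len(cnn_neighbors(0, 0, 4, 5)))  # corner cell
```

Because every cell has at most (2r + 1)^2 - 1 connections regardless of grid size, the wiring per cell stays constant as the array grows, which is what makes this architecture attractive for VLSI layout.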

Slide 51

You might also like

- Python Data Cleaning Cookbook: Prepare your data for analysis with pandas, NumPy, Matplotlib, scikit-learn, and OpenAIFrom EverandPython Data Cleaning Cookbook: Prepare your data for analysis with pandas, NumPy, Matplotlib, scikit-learn, and OpenAINo ratings yet

- Gaddis Starting Out With C++ 8th Solution of Check PointsDocument31 pagesGaddis Starting Out With C++ 8th Solution of Check Pointsعبد للهNo ratings yet

- Jakub M. Tomczak - Deep Generative Modeling-Springer (2022)Document210 pagesJakub M. Tomczak - Deep Generative Modeling-Springer (2022)Federico Molina MagneNo ratings yet

- Gen AIGame TheoryDocument9 pagesGen AIGame Theorysalma.chrichi2001No ratings yet

- Ai Course File FinalDocument155 pagesAi Course File Finalsanthi sNo ratings yet

- Abstract On The Artificial IntelegenceDocument15 pagesAbstract On The Artificial IntelegenceSreekanth NaiduNo ratings yet

- Generative Adversarial Networks Review 1-06-08-1.editDocument24 pagesGenerative Adversarial Networks Review 1-06-08-1.editgijare6787No ratings yet

- 0ven Toaster Grill Recipe BookDocument32 pages0ven Toaster Grill Recipe Booksurya50% (2)

- Fast Manual Instructions For Economic DVRV1.1Document8 pagesFast Manual Instructions For Economic DVRV1.1Jo Nat100% (1)

- Applicable Artificial Intelligence: Introduction To Neural NetworksDocument36 pagesApplicable Artificial Intelligence: Introduction To Neural Networksmuhammed suhailNo ratings yet

- Neural Network and Fuzzy LogicDocument46 pagesNeural Network and Fuzzy Logicdoc. safe eeNo ratings yet

- Neural NetworkDocument58 pagesNeural Networkarshia saeedNo ratings yet

- Linking Information Systems To The Business PlanDocument10 pagesLinking Information Systems To The Business Planermiyas tesfayeNo ratings yet

- Artificial IntelligenceDocument22 pagesArtificial IntelligenceZain DiyarNo ratings yet

- GANpptDocument34 pagesGANpptSreejith PBNo ratings yet

- The Ultimate Things About IoTDocument100 pagesThe Ultimate Things About IoTMazlan AbbasNo ratings yet

- Cognite ComputingDocument14 pagesCognite ComputingGlauco Torres100% (1)

- Andrew Treadway - Software Engineering For Data Scientists (MEAP v2) - Manning Publications (2023)Document213 pagesAndrew Treadway - Software Engineering For Data Scientists (MEAP v2) - Manning Publications (2023)camilocano258100% (1)

- Artificial - Intelligence - Master Program - SlimupDocument25 pagesArtificial - Intelligence - Master Program - Slimupswapnil suryawanshiNo ratings yet

- AI in Network enDocument159 pagesAI in Network enRodrigo Luis100% (1)

- Machine LearningDocument20 pagesMachine LearningvigneshsrinivasankklNo ratings yet

- DeepLearning NetworkingDocument64 pagesDeepLearning NetworkingAnirudh M KNo ratings yet

- Egomotion Estimation Using Visual OdometryDocument40 pagesEgomotion Estimation Using Visual OdometryRuta DesaiNo ratings yet

- Deep Learning FullDocument25 pagesDeep Learning Fullsandy milk minNo ratings yet

- Machine Learning Operations MLOps Overview Definition and ArchitectureDocument14 pagesMachine Learning Operations MLOps Overview Definition and ArchitectureSerhiy YehressNo ratings yet

- Lang ChainDocument8 pagesLang ChainGokul AakashNo ratings yet

- Fake News DetectionDocument14 pagesFake News DetectionAdarsh LeninNo ratings yet

- Study Material BTech IT VIII Sem Subject Deep Learning Deep Learning Btech IT VIII SemDocument30 pagesStudy Material BTech IT VIII Sem Subject Deep Learning Deep Learning Btech IT VIII SemEducation VietCoNo ratings yet

- Goals of Machine Learning in Artificial IntelligenceDocument3 pagesGoals of Machine Learning in Artificial Intelligencefatima rahimNo ratings yet

- 2020 Packt - Hands On Natural Language Processing With PyTorch 1.xDocument277 pages2020 Packt - Hands On Natural Language Processing With PyTorch 1.xMiguel Angel Pardave Barzola100% (1)

- IoT IT InfrastructureDocument101 pagesIoT IT InfrastructureJoki RoyNo ratings yet

- Coursera - Introduction To Ai NotesDocument4 pagesCoursera - Introduction To Ai Notesgeralineealeia100% (1)

- Artificial Neural NetworkDocument46 pagesArtificial Neural Networkmanish9890No ratings yet

- A Beginner's Guide To Natural Language Processing - IBM DeveloperDocument9 pagesA Beginner's Guide To Natural Language Processing - IBM DeveloperAisha GurungNo ratings yet

- UntitledDocument201 pagesUntitledDEVARAKONDA SRIVATSAVNo ratings yet

- 166 190410245 SiddhantSahooDocument10 pages166 190410245 SiddhantSahooSiddhant SahooNo ratings yet

- Artificial Intelligence-An Introduction: Department of Computer Science & EngineeringDocument17 pagesArtificial Intelligence-An Introduction: Department of Computer Science & EngineeringAravali GFNo ratings yet

- Movidius Neural Computer StickDocument33 pagesMovidius Neural Computer StickmaximinNo ratings yet

- Jetson NanoDocument349 pagesJetson NanoDilip TiloniaNo ratings yet

- The Ultimate Guide To ML For Embedded SystemsDocument22 pagesThe Ultimate Guide To ML For Embedded SystemssusanNo ratings yet

- Big Data - MidsemDocument526 pagesBig Data - MidsemRachana PanditNo ratings yet

- Tensor Processing UnitDocument23 pagesTensor Processing UnitAHMAD ALI50% (2)

- Stanford Internet of Things Short CourseDocument4 pagesStanford Internet of Things Short Coursesusan100% (1)

- Artificial Intelligence and Machine Learning in BusinessDocument5 pagesArtificial Intelligence and Machine Learning in BusinessresearchparksNo ratings yet

- Industrial Revolution of The 21st CenturyDocument74 pagesIndustrial Revolution of The 21st CenturyAbcvdgtyio Sos RsvgNo ratings yet

- Introduction To Deep LearningDocument34 pagesIntroduction To Deep LearningShivam KumarNo ratings yet

- Lecture Notes 1 - Introduction To SMEsDocument7 pagesLecture Notes 1 - Introduction To SMEsAaron Aivan TingNo ratings yet

- Exploring The Security Risks of Using Large Language ModelsDocument15 pagesExploring The Security Risks of Using Large Language Modelspatrickbackup0nn3No ratings yet

- Artificial Intelligence Approaches Tools and Appli...Document179 pagesArtificial Intelligence Approaches Tools and Appli...oucrkqnthiysznpksgNo ratings yet

- Gen Ai Roadmap - v5Document3 pagesGen Ai Roadmap - v5abhijeet_mishra8926No ratings yet

- Advances in Communication Cloud and Big DataDocument178 pagesAdvances in Communication Cloud and Big DataNeo Peter KimNo ratings yet

- Artificial Intelligence (AI)Document11 pagesArtificial Intelligence (AI)Pritom GhoshNo ratings yet

- Artificial Intelligence AIDocument21 pagesArtificial Intelligence AIDheeraj Ssv BuntyNo ratings yet

- A Beginner's Guide To Large Language ModelsDocument10 pagesA Beginner's Guide To Large Language ModelsMarcelo NevesNo ratings yet

- Roadmap To AIDocument23 pagesRoadmap To AICOD PIRATESNo ratings yet

- 02 - Lecture Note - TensorFlow OpsDocument21 pages02 - Lecture Note - TensorFlow OpsRoberto PereiraNo ratings yet

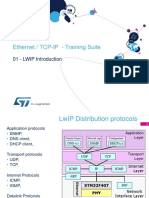

- Ethernet / TCP-IP - Training Suite: 01 - LWIP IntroductionDocument56 pagesEthernet / TCP-IP - Training Suite: 01 - LWIP IntroductionTuan AnhNo ratings yet

- Concurrent and Real-Time Programming in Java: © Andy Wellings, 2004Document35 pagesConcurrent and Real-Time Programming in Java: © Andy Wellings, 2004maximaximoNo ratings yet

- Machine LearningDocument48 pagesMachine LearningsaiafNo ratings yet

- Elon Musk SpeechDocument4 pagesElon Musk SpeechAme Roxan AwidNo ratings yet

- Introduction To Neural NetworksDocument51 pagesIntroduction To Neural NetworksAayush PatidarNo ratings yet

- Introduction To Neural NetworksDocument51 pagesIntroduction To Neural NetworkssavisuNo ratings yet

- Linux Security On HP Servers: Security Enhanced Linux: Technical IntroductionDocument13 pagesLinux Security On HP Servers: Security Enhanced Linux: Technical IntroductionsuryaNo ratings yet

- 2-Kohonen Self-Organizing Maps (Som) : Patterns Associated With That ClusterDocument11 pages2-Kohonen Self-Organizing Maps (Som) : Patterns Associated With That ClustersuryaNo ratings yet

- Machine Learning in Advanced PythonDocument7 pagesMachine Learning in Advanced PythonsuryaNo ratings yet

- Neural Control2 PDFDocument5 pagesNeural Control2 PDFsuryaNo ratings yet

- Productflyer - 978 3 540 34437 7Document1 pageProductflyer - 978 3 540 34437 7suryaNo ratings yet

- Neural Networks For Control Systems: G. Linear and Nonlinear ProgrammingDocument3 pagesNeural Networks For Control Systems: G. Linear and Nonlinear ProgrammingsuryaNo ratings yet

- A Comprehensive Study of Artificial Neural NetworksDocument7 pagesA Comprehensive Study of Artificial Neural NetworkssuryaNo ratings yet

- Neural Networks: Robert Kozma, Steven Bressler, Leonid Perlovsky, Ganesh Kumar VenayagamoorthyDocument2 pagesNeural Networks: Robert Kozma, Steven Bressler, Leonid Perlovsky, Ganesh Kumar VenayagamoorthysuryaNo ratings yet

- (Ksat - Ii) : CLASS - L..K.GDocument25 pages(Ksat - Ii) : CLASS - L..K.Gsurya100% (1)

- Research of Data Mining Based On Neural Networks: Xianjun NiDocument4 pagesResearch of Data Mining Based On Neural Networks: Xianjun NisuryaNo ratings yet

- Sistem Informasi Administrasi Pelayanan Kesehatan Pada Puskesmas Kaligondang PurbalinggaDocument12 pagesSistem Informasi Administrasi Pelayanan Kesehatan Pada Puskesmas Kaligondang PurbalinggaAlfitrah NurjayaNo ratings yet

- Converting Simplex Databases To MultiplexDocument2 pagesConverting Simplex Databases To MultiplexGrant BlairNo ratings yet

- Test Bank For Using MIS, 11th Edition, Kroenke, Randall J. Boyle Download PDF Full ChapterDocument54 pagesTest Bank For Using MIS, 11th Edition, Kroenke, Randall J. Boyle Download PDF Full Chapterbntzmmaki100% (13)

- Smart IvrDocument10 pagesSmart IvrManij MaharjanNo ratings yet

- MT6592 Android ScatterDocument6 pagesMT6592 Android Scattera5636385100% (1)

- Overview of Telecommunications DECEMBER 2021 enDocument25 pagesOverview of Telecommunications DECEMBER 2021 enRaquel RennoNo ratings yet

- DAQmx M SeriesDocument424 pagesDAQmx M SeriesCal SargentNo ratings yet

- Internet: - The Term Internet Has Been Coined From Two Terms, I.e.Document11 pagesInternet: - The Term Internet Has Been Coined From Two Terms, I.e.Jesse JhangraNo ratings yet

- Final Exam Data Structures and AlgorithmsDocument2 pagesFinal Exam Data Structures and AlgorithmsjackblackNo ratings yet

- LG ThinQ Home (Smart Home Solutions)Document35 pagesLG ThinQ Home (Smart Home Solutions)Mohamed TahaNo ratings yet

- Pros and Cons of WebsiteDocument9 pagesPros and Cons of WebsiteDianne GloriaNo ratings yet

- Assignments v0.4Document9 pagesAssignments v0.4Viet DinhvanNo ratings yet

- Tuning SQL Queries For Better Performance in Management Information Systems Using Large Set of DataDocument10 pagesTuning SQL Queries For Better Performance in Management Information Systems Using Large Set of DataBang TrinhNo ratings yet

- Ch-3 Data Representation1Document10 pagesCh-3 Data Representation1Tasebe GetachewNo ratings yet

- TLauncher Setup LogDocument5 pagesTLauncher Setup Logmelissa teresa bautista uwuNo ratings yet

- Ocaml 4.11 RefmanDocument822 pagesOcaml 4.11 RefmanHenrique JesusNo ratings yet

- Selectors, Specificity, and The CascadeDocument85 pagesSelectors, Specificity, and The CascadeSancte ArchangeleNo ratings yet

- 32 SPD - eRAN12.1 - LTE FDD Network Design Technical Training-20170315-A-1.0Document112 pages32 SPD - eRAN12.1 - LTE FDD Network Design Technical Training-20170315-A-1.0Juan Ulises CapellanNo ratings yet

- A Comparison of Current Graph Database ModelsDocument7 pagesA Comparison of Current Graph Database ModelssheltonmaNo ratings yet

- NPSSDocument2 pagesNPSSN InbasagaranNo ratings yet

- Python and Machine Learning Intern: Aam Aadmi PartyDocument10 pagesPython and Machine Learning Intern: Aam Aadmi PartyVaibhav MisraNo ratings yet

- Workforce Pro Wf-C579R: Replaceable Ink Pack SystemDocument2 pagesWorkforce Pro Wf-C579R: Replaceable Ink Pack SystemAhmed Farid GalyNo ratings yet

- Introduction To QEMUDocument73 pagesIntroduction To QEMUsantydNo ratings yet

- Motorola SL2KDocument4 pagesMotorola SL2KrezkyfpNo ratings yet

- KVMRT SSP Elevated & Systems WCT Holdings Berhad Search Export To Excel Project: KVMRT SSP Elevated & SystemsDocument2 pagesKVMRT SSP Elevated & Systems WCT Holdings Berhad Search Export To Excel Project: KVMRT SSP Elevated & Systemsblackflag1No ratings yet

- D-Viewcam Design Tool Release Notes: ContentDocument3 pagesD-Viewcam Design Tool Release Notes: ContentKhryzt DiazNo ratings yet

- Factorial C ProgramDocument7 pagesFactorial C Programbalaji1986No ratings yet

- TCS Exam SDLM AnswersDocument3 pagesTCS Exam SDLM AnswersNalini RayNo ratings yet

- Bizhub C458-C558-C658 Brochure LRDocument4 pagesBizhub C458-C558-C658 Brochure LRMohammad Farooq Khan Jehan Zeb KhanNo ratings yet