Abstract

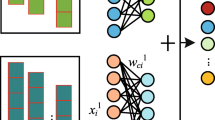

There are two main views on how to interpret the high efficiency of Convolutional Neural Networks (CNNs) in image classification: shape bias and texture bias. Which view holds is critical to the causality and reliability of CNN models in real applications. In this work, we explore what CNNs rely on and try to reconcile the two contradictory hypotheses through a multi-view image representation. First, we assume that an image is generated from an object shape representation, an object texture representation, and background information. Second, we segment and recombine the object shape, texture, and image background using two losses: an image reconstruction loss and a feature discrepancy loss. Finally, the classification loss combines the shape, texture, and background contributions, weighted by the multi-view features. Comprehensive experiments on real-world datasets show that, first, CNNs generally do not have an inherent texture or shape bias; the bias changes with the internal bias of the data. Second, CNNs learn knowledge in a lazy way, i.e., high-level knowledge is learned only if low-level knowledge does not satisfy the task requirements. Our findings may benefit the interpretability of CNNs and provide insight into more robust designs.
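The abstract gives no formulas, so the following is only a minimal sketch of one way a classification loss could combine shape, texture, and background contributions with learned view weights, plus a reconstruction term. Every name here (`combine_views`, `view_scores`, `lam_rec`) is hypothetical, the softmax weighting and mean-squared reconstruction error are assumptions, and the feature discrepancy loss is left out because the abstract does not define it.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, label):
    """Negative log-probability of the true class."""
    return -math.log(softmax(logits)[label])


def combine_views(view_logits, view_scores):
    """Weight per-view class logits by softmax(view_scores) and sum them.

    view_logits: one list of class logits per view
                 (assumed order: shape, texture, background).
    view_scores: one unnormalised score per view (assumed to be learned).
    """
    weights = softmax(view_scores)
    n_classes = len(view_logits[0])
    combined = [
        sum(w * logits[c] for w, logits in zip(weights, view_logits))
        for c in range(n_classes)
    ]
    return combined, weights


def mse(a, b):
    """Mean squared error, used here as an assumed reconstruction loss."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def total_loss(view_logits, view_scores, label, image, reconstruction, lam_rec=1.0):
    """Classification loss on the combined logits plus a weighted reconstruction term."""
    combined, weights = combine_views(view_logits, view_scores)
    loss = cross_entropy(combined, label) + lam_rec * mse(image, reconstruction)
    return loss, weights


# Toy usage: two classes, three views, a two-pixel "image".
view_logits = [[2.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
loss, weights = total_loss(
    view_logits, [0.0, 0.0, 0.0], label=0,
    image=[0.0, 1.0], reconstruction=[0.0, 1.0],
)
```

Under this reading, the normalised `weights` can be inspected after training as a rough indication of how much each view contributes to the prediction, which is the kind of comparison between shape and texture that the abstract describes.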

This paper is supported by the National Key Research and Development Program of China under grants No. 2018YFB0204403, No. 2017YFB1401202, and No. 2018YFB1003500.

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Kong, L., Wang, J., Huang, Z., Xiao, J. (2021). A Competition of Shape and Texture Bias by Multi-view Image Representation. In: Ma, H., et al. Pattern Recognition and Computer Vision. PRCV 2021. Lecture Notes in Computer Science, vol 13022. Springer, Cham. https://doi.org/10.1007/978-3-030-88013-2_12

DOI: https://doi.org/10.1007/978-3-030-88013-2_12

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-88012-5

Online ISBN: 978-3-030-88013-2

eBook Packages: Computer Science, Computer Science (R0)