Abstract

When viewing ambiguous stimuli, people tend to perceive some interpretations more frequently than others. Such perceptual biases impose various types of constraints on visual perception and, accordingly, have been assumed to serve distinct adaptive functions. Here we demonstrate the interaction of two functionally distinct biases in bistable biological motion perception: the viewing-from-above (VFA) bias, which regulates perception based on the statistics of the environment, and the facing-the-viewer (FTV) bias, which has the potential to reduce costly errors resulting from perceptual inference. When compatible, the two biases reinforced each other, enhancing the bias strength and inducing fewer perceptual reversals than when they were in conflict. In the conflicting condition, by contrast, the biases competed with each other, with the dominant percept varying with visual cues that modulate the two biases separately in opposite directions. Crucially, the way the two biases interact does not depend on the dominant bias at the individual level and cannot be accounted for by a single bias alone. These findings provide compelling evidence that humans robustly integrate biases with different adaptive functions in visual perception. It may be evolutionarily advantageous to dynamically reweight diverse biases in the sensory context to resolve perceptual ambiguity.

Introduction

When confronted with an ambiguous stimulus, the human brain can rapidly achieve an unambiguous interpretation, which is usually accompanied by biases [1,2]. This perceptual inference process is of great adaptive value, as it ensures the efficiency of perception and helps us overcome the processing limitations of the sensory system. Less tangibly, but perhaps more importantly, the biases that come with the inference process may also serve essential adaptive functions [3,4]. These biases impose constraints on perception by systematically enhancing our sensitivity to certain kinds of information. In particular, they are based on specific assumptions that arise from at least two sources, according to a theoretical framework for understanding the evolution of cognitive biases [4].

The first set of biases is derived from prior knowledge about the environment. This sort of knowledge, represented as prior probability distributions in our brain as proposed by the Bayesian theory of human perception [5,6,7], biases perception toward interpretations that match the statistics of the environment [8,9,10,11]. Consistent with this idea, people immediately perceive an ambiguous shape with shading at its lower part as a bump, assuming that the source of illumination is from above [12,13]. In the same vein, they tend to experience ambiguous lines [14], surfaces [15], Necker cubes [16,17,18] or biological motions [19] as viewed from above at first glance. This tendency, known as the viewing-from-above (VFA) bias, reflects the prior knowledge that objects most probably sit on surfaces below eye level.

Another category of perceptual biases is based on assumptions concerning the consequences of human behaviors. According to the error management theory, a bias leading to less costly errors will evolve [20,21]. Therefore, if missing a signal would cost more than a false alarm, a bias toward such a signal can arise. This can partially explain the enhanced sensitivity to information that is potentially dangerous [22,23] as well as the asymmetry in the perception of approaching and receding stimuli [24,25,26,27]. For example, people are more likely to perceive ambiguous moving patterns as approaching motion rather than receding motion [28]. Similarly, they perceive an ambiguous human walker as facing the viewer more frequently than as facing away from the viewer [29,30,31,32], exhibiting the facing-the-viewer (FTV) bias, and have superior sensitivity to FTV walkers [33,34,35].

The two types of perceptual biases, namely, the "statistics-based biases" and the "error management biases", represent different facets of the perceptual inference process. Yet it remains unclear whether our brain combines such discrepant biases to make sense of the sensory world. When two biases from different categories coexist, and each of the biases alone can enable us to resolve the ambiguity in the sensory input, does the brain rely on one bias while suppressing the other, or allow the two biases to interact? Moreover, considering that perception hinges not only on biases but also on the sensory information, the question arises whether visual cues that alter the ambiguity of the stimulus could modulate the interaction of the biases.

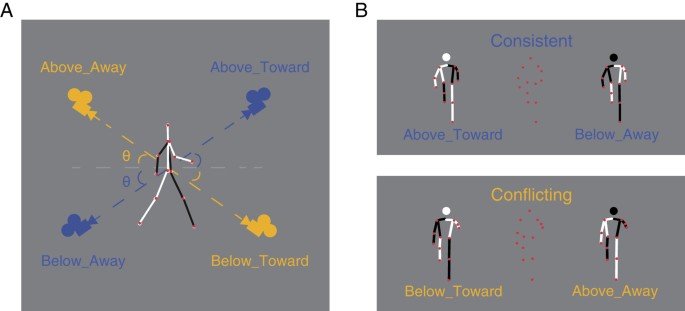

To address these issues, we examined the FTV bias and the VFA bias, given that they belong to the two aforementioned categories, respectively, and can arise within a single scenario, i.e., biological motion perception. We adopted orthographically projected point-light-walker (PLW) stimuli [36] and manipulated the angular deviation from the horizontal plane (Fig. 1A). Without explicit depth cues, these stimuli were ambiguous in terms of orientation-in-depth (toward or away from the viewer) and viewpoint (from above or below). We generated two kinds of ambiguous PLW stimuli, each having two possible interpretations (Fig. 1B). One stimulus could be perceived as viewed from above and walking toward the viewer (Above_Toward) or viewed from below and walking away from the viewer (Below_Away). We defined it as the consistent condition, in which the two percepts were either congruent (VFA+ FTV+) or incongruent (VFA− FTV−) with both biases. The other stimulus could be perceived as viewed from above while walking away from the viewer (Above_Away) or viewed from below while walking toward the viewer (Below_Toward). We defined it as the conflicting condition, in which one percept was compatible with the VFA bias and opposed to the FTV bias (VFA+ FTV−) while the other was consistent with the FTV bias but against the VFA bias (FTV+ VFA−).

Figure 1. The lines connecting the dots (displayed for illustration only) illustrate the depth information: white lines represent the body parts closer to the viewer, and black lines represent parts that are farther in depth. (A) Viewpoints of the two possible percepts for the consistent condition, indicated by blue cameras, and for the conflicting condition, indicated by orange cameras. θ represents the angle of deviation from the horizontal plane, which ranges from 45° to 5° and can be considered as either the angle of elevation or the angle of depression for each stimulus, depending on which percept is referred to. (B) Orthographically projected PLW stimuli (center) and their corresponding percepts (left and right).

If the brain simultaneously takes into account both the VFA and the FTV assumptions, rather than relying on a single one, as we expected, the overall strength of bias should be modulated by the interaction mode. In particular, the bias should be stronger in the consistent than in the conflicting condition. Moreover, we predicted that the two biases might compete with each other when they were in conflict, and that the competition would depend on visual cues that modulate the ambiguity of the stimulus. To test these predictions, we varied the camera angle between 45° and 5° with respect to the horizontal plane using the orthographic projection method (Fig. 1A). This angular cue was unlikely to directly benefit the resolution of ambiguity, as it did not provide any information about the viewpoint (above/below) or walking direction (toward/away). However, it could modulate the weights of the two biases in the perceptual inference process by amplifying the ambiguity related to the VFA assumption (at relatively large angles) or the FTV assumption (at relatively small angles).

Results

Experiment 1

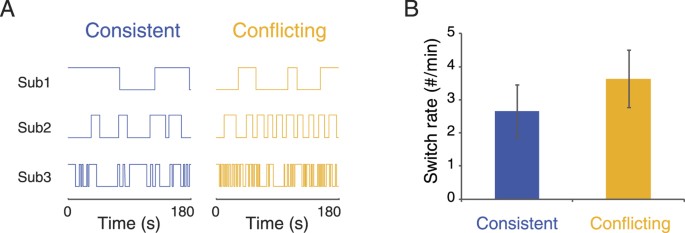

We first set up a pilot experiment to check whether observers could experience the two possible interpretations of the PLW stimuli with a large angle of elevation/depression (45°), because no previous studies have examined the perception of such stimuli. Another aim of this experiment was to explore whether the interaction modes (consistent and conflicting) of the biases could influence the dynamics of perceptual reversals. Observers were asked to watch the PLW stimuli and report the switches of perception in the consistent condition and the conflicting condition, respectively. As shown in Fig. 2A, observers did experience reversals of perception in both conditions during the 3 min trials, although there were large individual variations in switch rates. More importantly, fewer perceptual reversals were observed in the consistent condition than in the conflicting condition (Fig. 2B; t(7) = −2.84, p = 0.03), suggesting that the consistency between the two biases enhances the stability of bistable perception.

Figure 2. (A) Perceptual reversals as a function of time in the consistent and the conflicting conditions. The panels from top to bottom display sample trials from three observers with slow, medium and fast switch rates, respectively. (B) Mean switch rates for the consistent and the conflicting conditions. Error bars denote ± SEM.

Experiment 2

We further investigated the interaction of the two biases and the potential influence of camera angle cue when the angular deviation from the horizontal plane was relatively large (35°, 20°), in Experiment 2a, and relatively small (15°, 10°, 5°), in Experiment 2b.

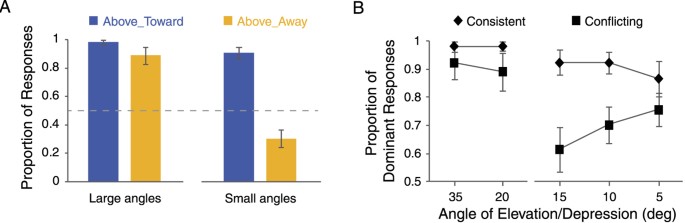

In Experiment 2a, the mean proportion of Above_Toward responses (VFA+ FTV+) in the consistent condition and the mean proportion of Above_Away responses (VFA+ FTV−) in the conflicting condition were both significantly higher than 50% (Fig. 3A, left; one-sample t-test: Consistent: t(9) = 27.64, p < 0.001; Conflicting: t(9) = 6.69, p < 0.001), suggesting that the VFA bias dominates perception when the angle of elevation/depression is large. Furthermore, we used the proportion of the dominant percept for each experimental condition, i.e., the percept whose proportion was larger than 0.5 (Fig. 3B, left; Above_Toward for the consistent condition vs. Above_Away for the conflicting condition), as a measure of the strength of bias, and submitted the data to a 2 (Consistent vs. Conflicting) × 2 (35° vs. 20°) repeated measures ANOVA. Neither the main effects of the two factors (interaction mode: F(1, 9) = 1.28, p = 0.29; angular deviation: F(1, 9) = 1.79, p = 0.21) nor their interaction (F(1, 9) = 1.71, p = 0.22; Fig. 3B, left) was significant, indicating that a large angular deviation from the horizontal plane prevents the VFA assumption from interacting with the FTV assumption.

Figure 3. (A) Mean proportions of Above_Toward responses in the consistent condition and Above_Away responses in the conflicting condition for Experiment 2a (large angles) and 2b (small angles). (B) Mean proportions of the dominant responses in the consistent and the conflicting conditions, respectively, at each angular deviation. Error bars denote ± SEM.
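The bias-strength measure used in Experiment 2 (the proportion of the dominant percept among valid responses) can be sketched as follows. This is a minimal illustration, not the authors' analysis script: the response format, the function name `dominant_percept` and the example data are assumptions, and the function is applied to a single list of responses, whereas the paper identifies the dominant percept per experimental condition.

```python
from collections import Counter

# The two admissible interpretations of each stimulus type, as described in the text.
VALID_PERCEPTS = {
    "consistent": {"Above_Toward", "Below_Away"},
    "conflicting": {"Above_Away", "Below_Toward"},
}


def dominant_percept(responses, condition):
    """Return (percept, proportion) for the more frequent valid percept.

    `responses` is a list of percept labels, one per trial (hypothetical format).
    Trials whose label matches neither interpretation are dropped first.
    """
    valid = [r for r in responses if r in VALID_PERCEPTS[condition]]
    if not valid:
        raise ValueError("no valid trials for this condition")
    percept, count = Counter(valid).most_common(1)[0]
    return percept, count / len(valid)


# Made-up responses for one observer in one conflicting-condition cell (30 trials).
example = ["Above_Away"] * 18 + ["Below_Toward"] * 9 + ["Above_Toward"] * 3
print(dominant_percept(example, "conflicting"))
```

In this made-up cell, three trials are discarded because "Above_Toward" is not a possible interpretation of the conflicting stimulus, and the proportion is computed over the remaining 27 trials.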

In Experiment 2b, the mean proportion of Above_Toward responses in the consistent condition was still significantly higher than 50% (t(9) = 9.54, p < 0.001; Fig. 3A, right). In the conflicting condition, however, the proportion of Above_Away responses became significantly lower than 50% (t(9) = 3.00, p = 0.02; Fig. 3A, right), suggesting that the VFA assumption gives way to the FTV assumption. A two-way repeated measures ANOVA on the proportion of the dominant percept (Fig. 3B, right; Above_Toward for the consistent condition vs. Below_Toward for the conflicting condition) revealed a significant main effect of interaction mode (F(1, 9) = 9.23, p = 0.01) and a significant interaction between interaction mode and angular deviation (F(2, 18) = 5.64, p = 0.01; Fig. 3B, right). Specifically, the strength of bias increased as the angular deviation became smaller (15° vs. 10°: t(9) = −2.43, p = 0.04; 10° vs. 5°: t(9) = −1.1, p = 0.30; Fig. 3B, right), and only in the conflicting condition.
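The comparisons against 50% reported above are one-sample t-tests on per-observer response proportions. The sketch below computes only the t statistic and its degrees of freedom with the Python standard library; the p-value additionally requires the Student t distribution (available, for example, through SciPy's `scipy.stats.ttest_1samp`). The function name and the data are illustrative assumptions, not the study's values.

```python
import math
import statistics


def one_sample_t(values, mu0=0.5):
    """t statistic and degrees of freedom for H0: mean(values) == mu0."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    mean = statistics.fmean(values)
    sem = statistics.stdev(values) / math.sqrt(n)  # sample SD (n - 1 denominator)
    return (mean - mu0) / sem, n - 1


# Hypothetical per-observer proportions of Above_Away responses (not the study's data).
proportions = [0.42, 0.35, 0.51, 0.30, 0.46, 0.38, 0.44, 0.29, 0.40, 0.47]
t, df = one_sample_t(proportions)
print(f"t({df}) = {t:.2f}")
```

With ten observers the test has nine degrees of freedom, which matches the t(9) values reported for Experiment 2.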

Taken together, the results of Experiment 2a and 2b provide clear evidence for the interaction of the VFA and the FTV biases. On the one hand, the general strength of bias was stronger in the consistent condition than in the conflicting condition, especially when the angular deviation was small, suggesting that the brain combines the two biases to constrain perception. On the other hand, the dominant percept reversed between large and small angles in the conflicting condition, indicating that rivalry occurs between the two biases.

Experiment 3

Experiment 2 revealed the role of sensory information in modulating the rivalry of biases, by demonstrating the differences between the large angle and small angle conditions. To further elucidate the influence of camera angle on the interaction of the VFA and the FTV biases, we conducted Experiment 3, in which we systematically varied the angle of deviation from the horizontal plane (25°, 20°, 15°, 10°, and 5°) as a within-subjects factor.

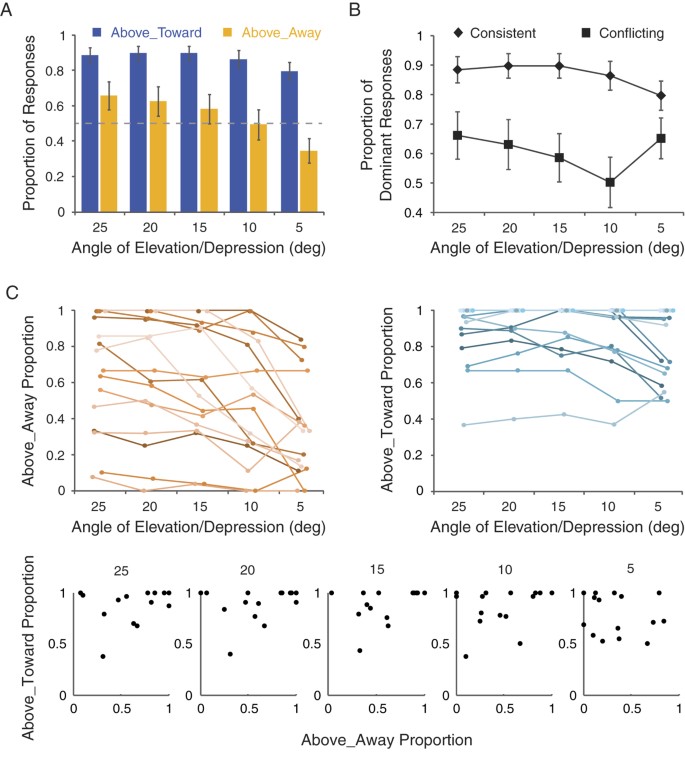

The proportion of Above_Toward responses in the consistent condition was again higher than 50% for all deviation angles (p < 0.001; Fig. 4A). By contrast, the proportion of Above_Away responses in the conflicting condition was marginally higher than 50% only at 25° (t(15) = 2.01, p = 0.06), with the pattern reversed when the angle of deviation decreased to 5° (t(15) = 2.19, p = 0.04), which was consistent with the findings from Experiment 2. Moreover, a 2 (interaction mode) × 5 (angular deviation) repeated measures ANOVA on the proportion of the dominant percept (Fig. 4B) showed significant main effects of interaction mode (F(1, 15) = 17.38, p = 0.001) and angular deviation (F(4, 60) = 20.89, p < 0.001), and a significant interaction between the two factors (F(4, 60) = 5.94, p < 0.001). Specifically, the weakest bias occurred at 10°, and the bias became stronger as the angle of deviation became either larger (15° vs. 10°: t(15) = 2.52, p = 0.02; 20° vs. 10°: t(15) = 3.31, p < 0.01; 25° vs. 10°: t(15) = 4.12, p < 0.001) or smaller (5° vs. 10°: t(15) = 2.94, p = 0.01), although in those two situations perception was biased in opposite directions. This pattern was observed only in the conflicting condition, suggesting that angular deviation from the horizontal plane modulates the rivalry between the two biases.

Figure 4. (A) Mean proportions of Above_Toward responses in the consistent condition and Above_Away responses in the conflicting condition as a function of angular deviation. (B) Mean proportions of the dominant responses along 5 angles of deviation in the consistent and the conflicting conditions. (C) Proportions of Above_Away responses in the conflicting conditions (upper-left panel) and proportions of Above_Toward responses in the consistent conditions (upper-right panel) for individual observers, as well as the correlation between them at each camera angle (lower panels). Error bars denote ± SEM.

Interestingly, further examination of individual data revealed large individual differences regarding the dominant percept (Fig. 4C). Some observers consistently manifested a preference for the VFA assumption over the FTV assumption (VFA-dominated), while others exhibited the opposite pattern (FTV-dominated) or a relatively neutral pattern (no apparent preference). Despite such dramatic differences, almost all observers showed a gradual decrease in the proportion of Above_Away responses as the angle of deviation decreased in the conflicting condition, whereas the proportion of Above_Toward responses was relatively stable across different angles. In addition, it is noteworthy that there was no significant correlation between the VFA percepts in the two conditions (i.e., "Above_Away" responses in the conflicting condition and "Above_Toward" responses in the consistent condition) at any camera angle (Fig. 4C, lower panels; 25°: r = 0.31, p = 0.24; 20°: r = 0.27, p = 0.31; 15°: r = 0.27, p = 0.31; 10°: r = 0.29, p = 0.27; 5°: r = −0.14, p = 0.60). These results suggest that the observations from the current study are unlikely to reflect an effect of a single bias, because a single-bias-dominant effect would lead to strong correlations between the two conditions in terms of the VFA percept or the FTV percept.
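The across-observer correlation reported above is a Pearson correlation between two per-observer proportions at a given camera angle. A minimal sketch is given below; the function name `pearson_r` and the numbers are made up for illustration and are not the study's data.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical proportions for six observers at one camera angle.
above_away_conflicting = [0.15, 0.80, 0.45, 0.30, 0.65, 0.50]
above_toward_consistent = [0.90, 0.85, 0.95, 0.70, 0.88, 0.92]
print(round(pearson_r(above_away_conflicting, above_toward_consistent), 2))
```

If a single bias drove responses in both conditions, observers with a high proportion of Above_Away responses would also tend to show a high proportion of Above_Toward responses, and the coefficient would be strongly positive.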

To sum up, results of Experiment 3 demonstrate that angular deviation modulates the relative weight of the two biases independent of the weight of any single bias at the individual level, which highlights a mechanism underlying visual perception for dynamically reweighting biases with different functions according to the sensory input.

Discussion

The current study investigated the interaction of the VFA bias and the FTV bias, two perceptual biases that have been assumed to serve distinct adaptive functions, in bistable visual perception. We found that when compatible, the two biases produced a robust bias effect, with the strength of bias being stronger (Experiment 2 and Experiment 3) and the perception being more stable (Experiment 1) relative to when the two biases were in conflict. On the other hand, in the conflicting condition, the two biases competed with each other, with their relative strength modulated by the angular deviation from the horizontal plane (Experiment 2 and 3). These findings provide compelling evidence that the human brain spontaneously integrates biases driven by different evolutionary forces to resolve visual ambiguity, and suggest that the interaction of the biases can be modulated by the sensory cues.

These results provide valuable clues about how biases with distinct adaptive goals constrain perception in situations with different degrees of complexity. In a relatively simple situation where a possible percept can meet multiple adaptive goals (e.g., the consistent condition), such percept is undoubtedly the best and simplest solution. Nevertheless, in a situation where no such perfect solution exists (e.g., the conflicting condition), the brain may evaluate the nature of the biases as well as information provided by the sensory input to resolve the ambiguity.

Specifically, we found that the interaction of the biases hinged on the camera angle cue only when the two biases were in conflict: at the group level, the VFA bias won the competition at relatively large angles, while the FTV bias gained dominance at relatively small angles. This observation can be accounted for by the functional distinction between the two biases. From an evolutionary perspective, the VFA bias probably serves to constrain perceptual inference by applying prior knowledge about the physical environment [8,9,10,11], whereas the FTV bias may exist to reduce the likelihood that one would miss the potential threat linked with an approaching creature [37,38,39,40,41,42,43]. This difference allows the weights of the two biases to change in opposite directions with the camera angle cue. In particular, the larger the angular deviation was, the more imperative solving the ambiguity regarding the viewpoint (from above or from below) became, and the greater the weight given to the VFA bias. Conversely, smaller deviation angles indicated a higher likelihood that an approaching walker would interact with the viewer, thereby making it more necessary to deal with the ambiguity regarding orientation-in-depth and requiring the brain to give more priority to the FTV bias. This twofold effect may give rise to the reweighting of the two biases we observed at the group level. Crucially, this pattern was consistent across observers, no matter which bias dominated perception at the individual level, and no significant correlation was observed between the two conditions in terms of the VFA percept (or the FTV percept), further excluding the possibility that the competition of the two biases is governed by any single bias. Rather, our results support the existence of a mechanism underlying perceptual inference for adaptively reweighting diverse heuristic assumptions in accordance with the sensory input.

The finding that the weight of a bias varies with the visual cue is consistent with the Bayesian theory of perception [5,6,7]. In a computational model that has been proposed to account for the interaction of visual constraints, the relative weight of two competing priors that are both based on environmental statistics may change as a function of the reliability of their corresponding visual cues [15]. It is plausible to assume that the interaction of the VFA bias and the FTV bias also follows the rules of Bayesian inference, with the general bias strength predicted by visual cues that can modulate both biases simultaneously, such as the camera angle. However, to incorporate this assumption, the current theoretical and computational frameworks for the interaction of prior constraints need to be extended to include biases that arise from sources beyond the environmental statistics, such as the FTV bias or the biases concerning the asymmetry in the perception of potentially threatening and neutral stimuli [24,25,26,27,37,38,39,40]. One major challenge posed by these "error management biases" is that they may have more complex sources than the "statistics-based priors". For example, several studies argue for the important role of physical cues, such as surface form or local motion, in the FTV bias [44,45,46]. In addition, our recent study reveals a significant and specific contribution of genetic factors to the individual differences in the FTV bias [47]. Future studies should consider the potential factors contributing to the FTV bias or other "error management biases", and illuminate how these factors modulate the interaction between these biases and the "statistics-based biases" using psychophysical and modeling methods.
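To make the idea of angle-dependent reweighting concrete, the toy sketch below combines two biases in log-odds units. It is purely illustrative and is neither the authors' model nor the model of ref. 15: the linear weight functions, the parameter values (hand-picked so that the two biases cancel near 10°, where the weakest bias was observed) and all names are assumptions.

```python
import math

MAX_ANGLE = 45.0  # largest camera angle used in the study (degrees)


def bias_weights(angle_deg, a_vfa=3.5, b_ftv=1.0):
    """Toy weights in log-odds units (assumed): VFA grows, FTV shrinks with angle."""
    frac = angle_deg / MAX_ANGLE
    return a_vfa * frac, b_ftv * (1.0 - frac)


def p_above(angle_deg, condition):
    """Probability of the viewed-from-above percept under the toy model."""
    if condition not in ("consistent", "conflicting"):
        raise ValueError("condition must be 'consistent' or 'conflicting'")
    w_vfa, w_ftv = bias_weights(angle_deg)
    # Consistent: the 'above' percept also faces the viewer, so the biases add.
    # Conflicting: the 'above' percept faces away, so the FTV bias opposes it.
    sign = 1.0 if condition == "consistent" else -1.0
    log_odds = w_vfa + sign * w_ftv
    return 1.0 / (1.0 + math.exp(-log_odds))


def bias_strength(angle_deg, condition):
    """Proportion of the dominant percept, whichever percept that is."""
    p = p_above(angle_deg, condition)
    return max(p, 1.0 - p)


for angle in (25, 20, 15, 10, 5):
    print(angle,
          round(p_above(angle, "consistent"), 2),
          round(p_above(angle, "conflicting"), 2))
```

Under these assumed weights the toy model reproduces the qualitative pattern only: the viewed-from-above percept always dominates in the consistent condition, while in the conflicting condition dominance switches from the viewed-from-above percept at larger angles to the facing-the-viewer percept at smaller angles, and the bias is never stronger than in the consistent condition.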

In conclusion, the current study demonstrates the interaction of two biases shaped by distinct adaptive forces (i.e., the VFA bias and the FTV bias) in bistable visual perception. The two biases cooperate to strengthen the percept compatible with both biases, but compete for dominance in a stimulus-sensitive manner when in conflict. These findings shed new light on how the human brain combines biases serving various adaptive functions to gain optimal perception, and emphasize the need to understand the functioning and interaction of perceptual biases in the sensory context.

Methods

Participants

The study was carried out in accordance with procedures and protocols approved by the institutional review board of the Institute of Psychology, Chinese Academy of Sciences. A total of 47 (23 female) undergraduate and graduate students participated in the study, 8 for Experiment 1, 10 for Experiment 2a, 13 for Experiment 2b, and 16 for Experiment 3. Three participants from Experiment 2b were excluded from data analysis due to low response accuracy (see details in Data Analysis). All participants had normal or corrected-to-normal vision, and were naïve to the purpose of the experiments. They gave written informed consent and were paid for their participation.

Stimuli

Stimuli were generated and displayed using MATLAB together with the Psychophysics Toolbox extensions [48,49]. The point-light walker (PLW) consists of 13 white dots placed on the major joints and the head of a human walker, and can be perceived as facing either toward or away from the observer [50]. We rotated the walkers with respect to the horizontal plane (by 45° in Experiment 1; by 35° and 20° in Experiment 2a; by 15°, 10° and 5° in Experiment 2b; and by 25°, 20°, 15°, 10° and 5° in Experiment 3) to change the angle of deviation from horizontal. Note that for each stimulus, the angle of deviation could be considered as either the angle of elevation (for one possible percept) or the angle of depression (for the other percept), resulting in ambiguity regarding the observer's viewpoint (from above or from below). By this means, we generated two kinds of ambiguous PLW stimuli belonging to the consistent and the conflicting experimental conditions, respectively (Fig. 1).
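The source of the ambiguity can be illustrated with a few lines of geometry. Under orthographic projection, a set of points seen from a camera raised by θ produces exactly the same image as its depth-mirrored counterpart seen from a camera lowered by θ. The sketch below is a Python illustration, not the authors' MATLAB code; the coordinate convention, the function names and the three example points are assumptions.

```python
import math


def project(points, elevation_deg):
    """Orthographic image of 3D points seen from a camera raised by elevation_deg.

    Assumed convention: x to the right, y up, z toward the viewer. Raising the
    camera is modelled as rotating the points about the x-axis; the orthographic
    projection then simply discards depth.
    """
    t = math.radians(elevation_deg)
    return [(x, y * math.cos(t) - z * math.sin(t)) for x, y, z in points]


def mirror_depth(points):
    """Flip the points in depth (near becomes far), as for a walker facing away."""
    return [(x, y, -z) for x, y, z in points]


# Three made-up "joints" of a walker facing the viewer (arbitrary units).
walker = [(0.0, 1.7, 0.0), (0.2, 1.0, 0.3), (-0.2, 0.1, -0.25)]
theta = 20.0

seen_from_above = project(walker, theta)                 # e.g. Above_Toward
seen_from_below = project(mirror_depth(walker), -theta)  # e.g. Below_Away

for a, b in zip(seen_from_above, seen_from_below):
    print(a, b)
```

The two lists of image coordinates coincide, so the display itself carries no information that distinguishes the two interpretations. Starting instead from a walker facing away gives the Above_Away and Below_Toward pair of the conflicting condition.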

Procedure

Participants sat in front of a 19-inch cathode ray tube display (1280 × 1024, 60 Hz) at a viewing distance of 60 cm. Each trial began with a PLW stimulus displayed with a white fixation cross at the center of the screen on a gray background. The stimuli (ranging from 5.7 to 6.7 degrees in height, depending on the angular deviation from the horizontal plane) were presented in one of three horizontal directions: directly toward the viewer (0°), 45° left and 45° right.
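For readers who want to relate the reported visual angles to physical size, the standard conversion is size = 2 · d · tan(θ/2). The helper below is a small sketch with an assumed function name; it uses only the viewing distance and angles stated above.

```python
import math


def size_from_visual_angle(angle_deg, distance_cm):
    """Physical extent (cm) that subtends angle_deg at a viewing distance of distance_cm."""
    return 2.0 * distance_cm * math.tan(math.radians(angle_deg) / 2.0)


for deg in (5.7, 6.7):
    print(f"{deg} deg at 60 cm -> {size_from_visual_angle(deg, 60.0):.2f} cm")
```

At 60 cm the stimuli were therefore roughly 6 to 7 cm tall on the screen. Converting to pixels would additionally require the physical size of the visible display area, which the text does not report.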

In Experiment 1, the PLW stimulus was displayed for 3 min in each trial. Observers were asked to report the walking direction of the PLW whenever it switched between facing toward and facing away from the viewer by pressing the corresponding keys. Before the formal test, observers received practice trials to become familiar with all the stimuli. The formal test consisted of 6 trials in total, with an equal number of trials in the consistent and the conflicting conditions. The order of conditions was counterbalanced across observers. To reduce the potential influence of the previous trial, observers were required to take a compulsory break of 1 min after each trial.
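A switch rate can be derived from the key-press times of a 3 min trial along the following lines. This is only a sketch under stated assumptions: the input format and function name are hypothetical, and the handling of brief percepts (those under two seconds are excluded, as described under Data analysis) is simplified to not counting the reversal that starts such a percept. Merging the neighbouring percepts instead would be another reasonable reading.

```python
def switch_rate(switch_times_s, trial_duration_s=180.0, min_duration_s=2.0):
    """Perceptual reversals per minute after dropping short-lived percepts.

    switch_times_s: sorted key-press times (seconds from trial onset), one per
    reported reversal. Each percept lasts from its key press to the next one,
    or to the end of the trial. Assumption: a reversal that starts a percept
    shorter than min_duration_s is not counted.
    """
    boundaries = list(switch_times_s) + [trial_duration_s]
    stable = sum(
        1
        for start, end in zip(boundaries, boundaries[1:])
        if end - start >= min_duration_s
    )
    return stable / (trial_duration_s / 60.0)


# Hypothetical key-press times for one trial (seconds).
presses = [12.0, 30.5, 31.2, 75.0, 140.0]
print(switch_rate(presses))
```

In this made-up trial the percept that begins at 30.5 s lasts only 0.7 s and is ignored, leaving four counted reversals over three minutes.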

In Experiments 2 and 3, the PLW stimulus was displayed for 1000 ms in each trial. Observers were required to judge (1) the walking direction of the PLW (e.g., toward the viewer and to the observer's left) and (2) the viewpoint with respect to the horizontal plane (viewing from above or from below) successively by pressing the corresponding keys. There were 120 trials in Experiment 2a, 180 trials in Experiment 2b and 300 trials in Experiment 3, with 30 trials for each experimental condition (consistent or conflicting condition combined with each angular deviation from the horizontal plane). Within each experiment, all trials were run in random order. The observers received a practice session before the formal test, and took compulsory breaks every 20 trials during the experiment.
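The trial counts follow directly from the design: 2 interaction modes × the number of camera angles × 30 trials per cell. The sketch below builds such a randomised trial list. The function name and dictionary keys are hypothetical, and the equal split of each cell across the three horizontal walking directions is an assumption, since the text does not state how directions were distributed.

```python
import itertools
import random


def build_trials(angles_deg, reps_per_cell=30, directions=(-45, 0, 45), seed=None):
    """Randomised trial list: interaction mode x camera angle, reps_per_cell each.

    Assumption: the horizontal walking directions are balanced within each cell,
    so reps_per_cell must be divisible by the number of directions.
    """
    if reps_per_cell % len(directions):
        raise ValueError("reps_per_cell must be divisible by len(directions)")
    per_direction = reps_per_cell // len(directions)
    trials = [
        {"mode": mode, "angle": angle, "direction": direction}
        for mode, angle, direction in itertools.product(
            ("consistent", "conflicting"), angles_deg, directions
        )
        for _ in range(per_direction)
    ]
    random.Random(seed).shuffle(trials)  # all trials run in random order
    return trials


designs = {"2a": (35, 20), "2b": (15, 10, 5), "3": (25, 20, 15, 10, 5)}
for name, angles in designs.items():
    print(name, len(build_trials(angles, seed=0)))
```

The resulting list lengths match the 120, 180 and 300 trials reported for Experiments 2a, 2b and 3.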

Data analysis

For Experiment 1, percepts lasting less than two seconds were considered unstable and excluded from further analysis. For Experiments 2 and 3, we included only the correct trials, i.e., trials with responses matching either of the two possible interpretations of the ambiguous stimulus. In Experiment 2b, three participants were excluded from analysis because their mean accuracy fell below the chance level, which was computed from a binomial distribution with a probability threshold of 0.95. For all experiments, data from the three horizontal directions were combined for formal analyses, since preliminary analyses revealed no significant main effect of horizontal direction.
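One way to read the binomial exclusion criterion is as the smallest number of correct trials that a purely guessing observer would reach or stay below with probability 0.95. The sketch below computes that count. The text does not state the guessing probability, so the value of 0.5 used in the example is an assumption, as are the function names.

```python
import math


def binomial_cdf(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p)."""
    return sum(math.comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))


def chance_criterion(n_trials, p_chance, level=0.95):
    """Smallest count k with P(X <= k) >= level for X ~ Binomial(n_trials, p_chance)."""
    cumulative = 0.0
    for k in range(n_trials + 1):
        cumulative += (
            math.comb(n_trials, k) * p_chance**k * (1 - p_chance) ** (n_trials - k)
        )
        if cumulative >= level:
            return k
    return n_trials


# 180 trials as in Experiment 2b; p_chance = 0.5 is an assumed guessing rate.
k = chance_criterion(180, 0.5)
print(k, k / 180)
```

Under this reading, an observer whose number of correct trials does not exceed the returned count cannot be distinguished from guessing at the 0.95 level.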

Additional Information

How to cite this article: Zhang, X. et al. The interaction of perceptual biases in bistable perception. Sci. Rep. 7, 42018; doi: 10.1038/srep42018 (2017).

Publisher's note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

References

1. Long, G. M. & Toppino, T. C. Enduring interest in perceptual ambiguity: alternating views of reversible figures. Psychol. Bull. 130, 748–768 (2004).

2. Sterzer, P., Kleinschmidt, A. & Rees, G. The neural bases of multistable perception. Trends Cogn. Sci. 13, 310–318 (2009).

3. Haselton, M. G. et al. Adaptive Rationality: An Evolutionary Perspective on Cognitive Bias. Soc. Cognition 27, 733–763 (2009).

4. Haselton, M. G., Nettle, D. & Murray, D. The Evolution of Cognitive Bias In The Handbook of Evolutionary Psychology (ed. D. M. Buss) 968–987 (John Wiley & Sons, 2015).

5. Mamassian, P., Landy, M. S. & Maloney, L. T. Bayesian Modelling of Visual Perception In Probabilistic models of the brain: Perception and neural function (eds Rao, R. P. N., Olshausen, B. A. & Lewicki, M. S.) 13–35 (MIT Press, 2002).

6. Kersten, D., Mamassian, P. & Yuille, A. Object perception as Bayesian inference. Annu. Rev. Psychol. 55, 271–304 (2004).

7. Knill, D. C. & Pouget, A. The Bayesian brain: the role of uncertainty in neural coding and computation. Trends Neurosci. 27, 712–719 (2004).

8. Barlow, H. The exploitation of regularities in the environment by the brain. Behav. Brain Sci. 24, 652–671 (2001).

9. Weiss, Y., Simoncelli, E. P. & Adelson, E. H. Motion illusions as optimal percepts. Nat. Neurosci. 5, 598–604 (2002).

10. Jazayeri, M. & Movshon, J. A. Optimal representation of sensory information by neural populations. Nat. Neurosci. 9, 690–696 (2006).

11. Girshick, A. R., Landy, M. S. & Simoncelli, E. P. Cardinal rules: visual orientation perception reflects knowledge of environmental statistics. Nat. Neurosci. 14, 926–932 (2011).

12. Ramachandran, V. S. Perception of shape from shading. Nature 331, 163–166 (1988).

13. Mamassian, P. & Goutcher, R. Prior knowledge on the illumination position. Cognition 81, B1–B9 (2001).

14. Mamassian, P. & Landy, M. S. Observer biases in the 3D interpretation of line drawings. Vision Res. 38, 2817–2832 (1998).

15. Mamassian, P. & Landy, M. S. Interaction of visual prior constraints. Vision Res. 41, 2653–2668 (2001).

16. Necker, L. A. Observations on some remarkable optical phaenomena seen in Switzerland; and on an optical phaenomenon which occurs on viewing a figure of a crystal or geometrical solid. Lond. Edinburgh Phil. Magazine J. Sci. 1, 329–337 (1832).

17. Washburn, M. F., Mallay, H. & Naylor, A. The influence of the size of an outline cube on the fluctuations of its perspective. Am. J. Psychol. 43, 484–489 (1931).

18. Dobbins, A. C. & Grossmann, J. K. Asymmetries in perception of 3D orientation. PLoS ONE 5, e9553 (2010).

19. Troje, N. F. & McAdam, M. The viewing-from-above bias and the silhouette illusion. I-Perception 1, 143–148 (2010).

20. Haselton, M. G. & Buss, D. M. Error management theory: a new perspective on biases in cross-sex mind reading. J. Pers. Soc. Psychol. 78, 81–91 (2000).

21. Johnson, D. D., Blumstein, D. T., Fowler, J. H. & Haselton, M. G. The evolution of error: error management, cognitive constraints, and adaptive decision-making biases. Trends Ecol. Evol. 28, 474–481 (2013).

22. Witt, J. K. & Sugovic, M. Spiders appear to move faster than non-threatening objects regardless of one's ability to block them. Acta Psychol. 143, 284–291 (2013).

23. Fessler, D. M. T., Holbrook, C. & Snyder, J. K. Weapons Make the Man (Larger): Formidability Is Represented as Size and Strength in Humans. PLoS ONE 7, e32751 (2012).

24. Neuhoff, J. G. An adaptive bias in the perception of looming auditory motion. Ecol. Psychol. 13, 87–110 (2001).

25. Shirai, N. & Yamaguchi, M. K. Asymmetry in the perception of motion-in-depth. Vision Res. 44, 1003–1011 (2004).

26. Imura, T., Shirai, N., Tomonaga, M., Yamaguchi, M. K. & Yagi, A. Asymmetry in the perception of motion in depth induced by moving cast shadows. J. Vis. 8, 10 (2008).

27. Cappe, C., Thut, G., Romei, V. & Murray, M. M. Selective integration of auditory-visual looming cues by humans. Neuropsychologia 47, 1045–1052 (2009).

28. Lewis, C. F. & McBeath, M. K. Bias to experience approaching motion in a three-dimensional virtual environment. Perception 33, 259–276 (2004).

29. Vanrie, J., Dekeyser, M. & Verfaillie, K. Bistability and biasing effects in the perception of ambiguous point-light walkers. Perception 33, 547–560 (2004).

30. Vanrie, J. & Verfaillie, K. Perceiving depth in point-light actions. Percept. Psychophys. 68, 601–612 (2006).

31. Schouten, B. & Verfaillie, K. Determining the point of subjective ambiguity of ambiguous biological-motion figures with perspective cues. Behav. Res. Methods 42, 161–167 (2010).

32. Vanrie, J. & Verfaillie, K. On the depth reversibility of point-light actions. Vis. Cogn. 19, 1158–1190 (2011).

33. Doi, H. & Shinohara, K. Bodily movement of approach is detected faster than that of receding. Psychon. Bull. Rev. 19, 858–863 (2012).

34. Wang, Y. & Jiang, Y. Integration of 3D structure from disparity into biological motion perception independent of depth awareness. PLoS ONE 9, e89238 (2014).

35. Sweeny, T. D., Haroz, S. & Whitney, D. Reference repulsion in the categorical perception of biological motion. Vision Res. 64, 26–34 (2012).

36. Johansson, G. Visual-Perception of Biological Motion and a Model for Its Analysis. Percept. Psychophys. 14, 201–211 (1973).

37. Brooks, A. et al. Correlated changes in perceptions of the gender and orientation of ambiguous biological motion figures. Curr. Biol. 18, R728–R729 (2008).

38. Schouten, B., Troje, N. F., Brooks, A., van der Zwan, R. & Verfaillie, K. The facing bias in biological motion perception: Effects of stimulus gender and observer sex. Atten. Percept. Psychophys. 72, 1256–1260 (2010).

39. Schouten, B., Davila, A. & Verfaillie, K. Further explorations of the facing bias in biological motion perception: perspective cues, observer sex, and response times. PLoS ONE 8, e56978 (2013).

40. Troje, N. F. & Chang, D. H. F. Shape-Independent Processing of Biological Motion In People watching: Social, Perceptual, and Neurophysiological Studies of Body Perception (eds K. Johnson & M. Shiffrar) 82–100 (Oxford University Press, 2013).

41. Heenan, A. & Troje, N. F. Both Physical Exercise and Progressive Muscle Relaxation Reduce the Facing-the-Viewer Bias in Biological Motion Perception. PLoS ONE 9, e99902 (2014).

42. Heenan, A. et al. Assessing threat responses towards the symptoms and diagnosis of schizophrenia using visual perceptual biases. Schizophr. Res. 159, 238–242 (2014).

43. Heenan, A. & Troje, N. F. The relationship between social anxiety and the perception of depth-ambiguous biological motion stimuli is mediated by inhibitory ability. Acta Psychol. 157, 93–100 (2015).

44. Schouten, B., Troje, N. & Verfaillie, K. The facing bias in biological motion perception: Structure, kinematics, and body parts. Atten. Percept. Psychophys. 73, 130–143 (2011).

45. de Lussanet, M. & Lappe, M. Depth perception from point-light biological motion displays. J. Vis. 12, 14 (2012).

46. Weech, S., McAdam, M., Kenny, S. & Troje, N. F. What causes the facing-the-viewer bias in biological motion? J. Vis. 14, 10 (2014).

47. Wang, Y., Wang, L., Xu, Q., Liu, D. & Jiang, Y. Domain-Specific Genetic Influence on Visual-Ambiguity Resolution. Psychol. Sci. 25, 1600–1607 (2014).

48. Pelli, D. G. The VideoToolbox software for visual psychophysics: Transforming numbers into movies. Spat. Vis. 10, 437–442 (1997).

49. Brainard, D. H. The psychophysics toolbox. Spat. Vis. 10, 433–436 (1997).

50. Vanrie, J. & Verfaillie, K. Perception of biological motion: a stimulus set of human point-light actions. Behav. Res. Methods Instrum. Comput. 36, 625–629 (2004).

Acknowledgements

This research was supported by grants from National Natural Science Foundation of China (No. 31100733, No. 31525011) and the Strategic Priority Research Program and the Key Research Program of Frontier Sciences of the Chinese Academy of Sciences (No. XDB02010003, No. QYZDB-SSW-SMC030).

Author information

Contributions

X.Z. and Y.W. conceived the idea; X.Z. designed the experiments and collected and analyzed the data under the supervision of Y.W. and Y.J.; X.Z. and Q.X. drafted the manuscript; Y.W. and Y.J. provided critical revisions.

Ethics declarations

Competing interests

The authors declare no competing financial interests.

Rights and permissions

This work is licensed under a Creative Commons Attribution 4.0 International License. The images or other third party material in this article are included in the article's Creative Commons license, unless indicated otherwise in the credit line; if the material is not included under the Creative Commons license, users will need to obtain permission from the license holder to reproduce the material. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/

About this article

Cite this article

Zhang, X., Xu, Q., Jiang, Y. et al. The interaction of perceptual biases in bistable perception. Sci Rep 7, 42018 (2017). https://doi.org/10.1038/srep42018

DOI: https://doi.org/10.1038/srep42018