Big data in Private Banking

- 1. 1 © Jerome Kehrli @ niceideas.ch Big Data in Private Banking Opportunities and how to get them ?

- 2. 2 Agenda: 1. What is Big Data ? 2. Opportunities in Wealth Management ? (2.1 Real time performance and risk metrics, 2.2 Portfolio optimization and simulation, 2.3 Leveraging customer data) 3. How to get there ?

- 3. 3 1. What is Big Data ?

- 4. 5 An evolution of society (Source: http://www.businessinsider.fr/us/vatican-square-2005-and-2013-2013-3)

- 5. 6 The era of power

- 6. 7 Today: in 2018, over 4 billion people are connected and share data continuously and everywhere. Mobile First !!!

- 7. 8 Tomorrow « The Internet of Things is the next Big Thing » The Economist

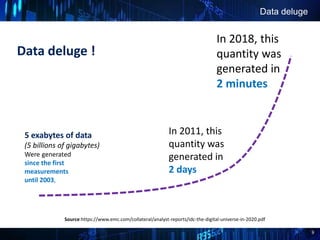

- 8. 9 Data deluge ! 5 exabytes of data (5 billion gigabytes) were generated from the first measurements until 2003. In 2011, this quantity was generated in 2 days. In 2018, this quantity was generated in 2 minutes. Source: https://www.emc.com/collateral/analyst-reports/idc-the-digital-universe-in-2020.pdf

- 9. 10 All the time !

- 10. 11 Everywhere !

- 11. 12 1 minute on Internet

- 12. 13 Technical capability evolution: for the last 40 years, IT component capabilities have grown exponentially. CPU, RAM, disk … all follow Moore's law! Source: http://radar.oreilly.com/2011/08/building-data-startups.html

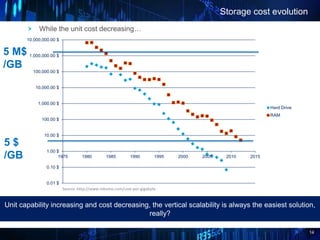

- 13. 14 Storage cost evolution: unit capability keeps increasing while unit cost keeps decreasing, so vertical scalability is always the easiest solution, really? [Chart: cost per gigabyte of hard drive and RAM, 1975 to 2015, logarithmic scale from 0.01 $ to 10,000,000.00 $; annotations: 5 M$/GB and 5 $/GB.] Source: http://www.mkomo.com/cost-per-gigabyte

- 14. 15 Disk throughput evolution. Issue: throughput always grows more slowly than capacity. How can we read and write more and more data through a pipe that keeps getting narrower relative to the volume? Gain: x100 000. Capacity gain: x10 000 in 10 years. Throughput gain: x50 in 10 years.
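
The gap between the two gains can be made concrete with a little arithmetic. The sketch below is illustrative only: it uses the x10 000 capacity gain and x50 throughput gain quoted on the slide, and the function names are hypothetical.

```python
import math

# Gains over roughly ten years, as quoted on the slide.
CAPACITY_GAIN = 10_000
THROUGHPUT_GAIN = 50


def full_scan_slowdown(capacity_gain: float, throughput_gain: float) -> float:
    """Factor by which the time to read a whole disk grows."""
    return capacity_gain / throughput_gain


def parallel_disks_needed(capacity_gain: float, throughput_gain: float) -> int:
    """Number of disks to read in parallel to keep the full-scan time unchanged."""
    return math.ceil(capacity_gain / throughput_gain)


slowdown = full_scan_slowdown(CAPACITY_GAIN, THROUGHPUT_GAIN)
print(f"A full scan takes about {slowdown:.0f}x longer")  # prints 200x
```

Under these figures, reading everything a single disk can hold takes about 200 times longer than it did ten years earlier, which is one way to motivate spreading the data over many disks read in parallel.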

- 15. 16 Origins of Big Data : the web giants !

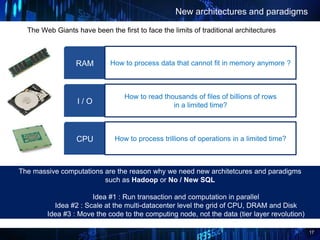

- 16. 17 New architectures and paradigms
  - The Web Giants were the first to face the limits of traditional architectures.
  - Massive computations are the reason why we need new architectures and paradigms such as Hadoop or No / New SQL.
  - RAM: how to process data that cannot fit in memory anymore ?
  - I/O: how to read thousands of files of billions of rows in a limited time ?
  - CPU: how to process trillions of operations in a limited time ?
  - Idea #1: run transactions and computations in parallel
  - Idea #2: scale the grid of CPU, DRAM and disk at the multi-datacenter level
  - Idea #3: move the code to the computing node, not the data (tier layer revolution)
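
Idea #3 can be sketched in a few lines. This is a toy, single-process illustration and not how Hadoop or any specific engine is implemented: the partitions, the thread pool standing in for a cluster, and the function names are all assumptions made for the example.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical partitions: each list stands for the rows stored on one node.
PARTITIONS = [
    [120.0, 80.5, 42.0],
    [300.0, 15.25],
    [7.75, 64.5, 1000.0, 3.0],
]


def local_aggregate(rows):
    """Runs next to the data on one node; returns a tiny (sum, count) pair."""
    return sum(rows), len(rows)


def global_mean(partitions):
    """Send the function to every partition, then combine the small results."""
    with ThreadPoolExecutor(max_workers=len(partitions)) as pool:
        partials = list(pool.map(local_aggregate, partitions))
    total = sum(partial_sum for partial_sum, _ in partials)
    count = sum(partial_count for _, partial_count in partials)
    return total / count


print(global_mean(PARTITIONS))
```

Only the `(sum, count)` pairs travel back to the caller, so the amount of data moved stays constant per partition however many rows each node holds.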

- 17. 18 So what is big data? Big Data goes beyond any formal definition. It is at once a technology evolution anticipated by the big consulting companies and a business opportunity ! Big Data: more and different data, evolution of data science, more computing capacity, new technologies and architectures.

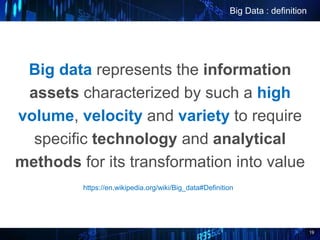

- 18. 19 Big Data : definition. "Big data represents the information assets characterized by such a high volume, velocity and variety to require specific technology and analytical methods for its transformation into value." https://en.wikipedia.org/wiki/Big_data#Definition

- 20. 21 Existing Architectures Limits
  - IO Limit: applications are heavily storage oriented. Over 10 TB, « classical » architectures require huge software and hardware adaptations.
  - CPU Limit: applications are heavily computation oriented. Over 10 threads per CPU core, sequential programming reaches its limits (IO).
  - TPS Limit (Transactions Per Second): applications are heavily high-throughput oriented (transactional applications / operational information systems). Over 1 000 transactions / second, « classical » architectures require huge software and hardware adaptations.
  - EPS Limit (Events Per Second): applications are heavily ultra low-latency oriented (event flow applications). Over 1 000 events / second, « classical » architectures require huge software and hardware adaptations.

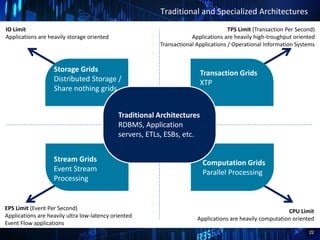

- 21. 22 Traditional and Specialized Architectures
  - Traditional Architectures: RDBMS, application servers, ETLs, ESBs, etc.
  - IO Limit (applications are heavily storage oriented): Storage Grids, i.e. distributed storage / shared-nothing grids
  - CPU Limit (applications are heavily computation oriented): Computation Grids, i.e. parallel processing
  - TPS Limit (Transactions Per Second; high-throughput oriented transactional applications / operational information systems): Transaction Grids (XTP)
  - EPS Limit (Events Per Second; ultra low-latency oriented event flow applications): Stream Grids (Event Stream Processing)

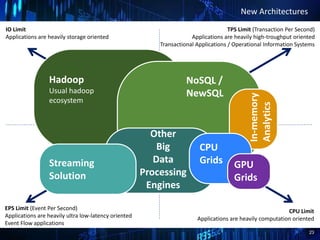

- 22. 23 New Architectures. Traditional Architectures: RDBMS, application servers, ETLs, ESBs, etc. Limits: IO Limit (storage oriented applications), CPU Limit (computation oriented applications), TPS Limit (Transactions Per Second; high-throughput oriented transactional applications / operational information systems), EPS Limit (Events Per Second; ultra low-latency oriented event flow applications). New architectures: Hadoop (usual Hadoop ecosystem), NoSQL / NewSQL, In-memory Analytics, Other Big Data Processing Engines, Streaming Solution, CPU Grids, GPU Grids.

- 23. 24 Some examples. Limits: IO Limit (storage oriented applications), CPU Limit (computation oriented applications), TPS Limit (Transactions Per Second; high-throughput oriented transactional applications / operational information systems), EPS Limit (Events Per Second; ultra low-latency oriented event flow applications). Products: RabbitMQ, ZeroMQ, Apache Kafka, Quartet ActivePivot, Sqoop, Exalytics, HDFS, Exadata, EMC, Teradata, SQLFire, GigaSpaces, HBase, Cassandra, MongoDB, CouchDB, Voldemort, Hana, Redis, MapR, Esper, Hama, Igraph, Spark / Spark Streaming, Hive, Pig.

- 24. 25 2. Opportunities in Wealth Management ?

- 25. 26 Big Data in Wealth Management: state of the art use cases
  - Investment Research: data discovery / market research; development of investment ideas; testing of investment strategies; portfolio management; trading
  - Risk Management: aggregation of position data; position monitoring; risk dashboards; portfolio management
  - Customer knowledge: unified / consolidated customer view; customer profiling and analysis; Know Your Customer / external data; analysis of unstructured data; client knowledge; investment advisory
  - Compliance and Monitoring: pre / post-trade; fraud detection and prevention; Anti-Money Laundering; communication channels monitoring

- 26. 27 Focus on some use cases
  - Real-time performance and risk metrics: intra-day positions and trades; risk dashboards; solvency ratios for credit approval; fraud prevention / Anti-Money Laundering
  - Portfolio optimization and simulation: What if we had taken this or that investment decision ? What about this investment strategy ? Large scale portfolio optimization
  - Leveraging customer data: customer profiling / classification; personalized investment advice; better / deeper fraud detection; marketing campaigns

- 27. 28 2.1 Real time performance and risk metrics

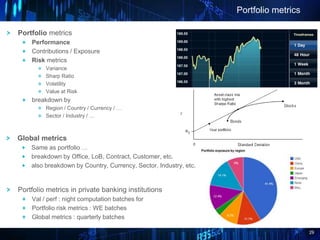

- 28. 29 Portfolio metrics
  - Portfolio metrics: performance; contributions / exposure; risk metrics (variance, Sharpe ratio, volatility, Value at Risk); breakdown by region / country / currency / … and by sector / industry / …
  - Global metrics: same as portfolio, with breakdown by office, LoB, contract, customer, etc. and also by country, currency, sector, industry, etc.
  - Portfolio metrics in private banking institutions: valuation / performance in nightly computation batches; portfolio risk metrics in weekend batches; global metrics in quarterly batches
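
As a rough illustration of the risk metrics listed above, here is a minimal sketch using only the Python standard library. The conventions are assumptions chosen for the example (252 trading days per year, sample standard deviation, historical rather than parametric VaR); a real PMS may use different ones.

```python
import math
import statistics


def volatility(returns, periods_per_year=252):
    """Annualised sample standard deviation of periodic returns."""
    return statistics.stdev(returns) * math.sqrt(periods_per_year)


def sharpe_ratio(returns, risk_free_rate=0.0, periods_per_year=252):
    """Annualised mean excess return divided by annualised volatility."""
    per_period_rf = risk_free_rate / periods_per_year
    excess = [r - per_period_rf for r in returns]
    annual_excess = statistics.mean(excess) * periods_per_year
    return annual_excess / volatility(returns, periods_per_year)


def historical_var(returns, confidence=0.95):
    """Loss threshold read from the empirical return distribution."""
    ordered = sorted(returns)
    # round() guards against float noise such as 0.9999999999999998
    index = int(round((1 - confidence) * len(ordered), 9))
    return -ordered[min(index, len(ordered) - 1)]


# Hypothetical daily returns of one portfolio.
DAILY_RETURNS = [0.01, -0.02, 0.005, 0.015, -0.01, 0.0, 0.02, -0.03, 0.01, 0.005]
print(volatility(DAILY_RETURNS), sharpe_ratio(DAILY_RETURNS), historical_var(DAILY_RETURNS, 0.9))
```

Each function only needs the return series of one portfolio, so the same code can be applied per region, currency, office or customer simply by grouping the returns first.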

- 29. 30 Make it real-time … The disastrous global financial crisis put a spotlight on the need for rapid feedback after market events. Banks are trying to obtain an array of risk metrics closer to real time, released multiple times during the day: reduce time to result as much as possible; take intraday positions and trades into account; get immediate feedback on intraday market events; use the latest quotes and other metrics.

- 30. 31 The good ol’way
  1. Night / weekend / quarterly computation batches: missing intraday positions / live quotes; far from real time
  2. Intraday calculation within the Operational IS: everything in the RDBMS; load / reload / compute / re-compute again; heavy load on the Operational IS / slow computation; very long response time (several minutes) / crash …
  3. Efficient off-Operational IS computation (rare …): distributed cache (JBoss Cache), with reload time / huge operating costs; computing grids (Teradata, …), with huge licensing cost

- 31. 32 The Big Data Way
  - Commodity hardware: reduce TCO; scale out
  - Open-source software stack: no licensing cost; ease of operation (initiated by the Web giants)
  - From Pull to Push: computing portfolio or global performance or VaR in real time is difficult; processing market events and updating metrics in real time is straightforward
  - We have the technology
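
The "from pull to push" idea can be illustrated with a minimal sketch: rather than recomputing a portfolio value on demand from all positions and quotes, each incoming quote adjusts the running value. The class and method names below are hypothetical, and a real deployment would sit behind a streaming engine rather than a plain Python object.

```python
class LivePortfolio:
    """Keeps the market value current by applying each quote as it arrives."""

    def __init__(self, positions, initial_quotes):
        self.positions = dict(positions)    # instrument -> quantity held
        self.quotes = dict(initial_quotes)  # instrument -> last known price
        # One full computation at start-up, then only increments.
        self.value = sum(
            quantity * self.quotes[instrument]
            for instrument, quantity in self.positions.items()
        )

    def on_quote(self, instrument, price):
        """Push handler: adjust the value by the price change on one position."""
        quantity = self.positions.get(instrument, 0)
        previous = self.quotes.get(instrument, price)
        self.value += quantity * (price - previous)
        self.quotes[instrument] = price
        return self.value


portfolio = LivePortfolio({"ABC": 100, "XYZ": 50}, {"ABC": 10.0, "XYZ": 20.0})
print(portfolio.on_quote("ABC", 10.5))  # 2050.0
print(portfolio.on_quote("XYZ", 19.0))  # 2000.0
```

Each event costs a constant amount of work, whatever the size of the portfolio, which is why updating on push scales where recomputing on pull does not.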

- 32. 33 2.2 Portfolio optimization and simulation

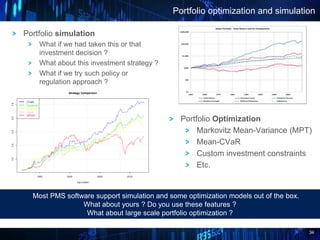

- 33. 34 Portfolio optimization and simulation. Portfolio optimization: Markowitz Mean-Variance (MPT), Mean-CVaR, custom investment constraints, etc. Portfolio simulation: What if we had taken this or that investment decision ? What about this investment strategy ? What if we try such a policy or regulation approach ? Most PMS software supports simulation and some optimization models out of the box. What about yours ? Do you use these features ? What about large scale portfolio optimization ?
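
For reference, the Markowitz mean-variance problem mentioned above is commonly written as follows. The notation is an assumption introduced here and does not come from the slides: $w$ is the vector of portfolio weights, $\Sigma$ the covariance matrix of asset returns, $\mu$ the vector of expected returns, $r^{*}$ the target return, and $\ell, u$ optional bounds standing in for custom investment constraints.

```latex
\begin{aligned}
\min_{w} \quad & w^{\top} \Sigma \, w \\
\text{subject to} \quad & w^{\top} \mu = r^{*}, \\
& \mathbf{1}^{\top} w = 1, \\
& \ell \le w \le u .
\end{aligned}
```

Solving this problem for a range of target returns $r^{*}$ traces out the efficient frontier.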

- 34. 35 Portfolio optim. – backtesting and stress testing. Portfolio backtesting / stress testing o Backtest over past periods o This is Markowitz o Test optimization parameters o Stress-test over market events o A lot of computations … Stress-testing and back-testing are a little less common … What do you have ? What about large scale backtesting ?
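
A backtest and a stress test can be sketched very compactly. The example below is a simplification under stated assumptions: the portfolio is rebalanced to fixed weights at the start of every period, the history and the shock are made-up numbers, and the function names are hypothetical.

```python
def backtest(period_returns, weights):
    """Compound a portfolio rebalanced to `weights` at the start of each period.

    period_returns: list of dicts mapping instrument -> return over that period.
    Returns the final value of 1.0 unit of currency invested.
    """
    value = 1.0
    for returns in period_returns:
        value *= 1.0 + sum(w * returns[name] for name, w in weights.items())
    return value


def stress(period_returns, shock):
    """Append one hypothetical market event to the history."""
    return period_returns + [shock]


# Made-up history of two periods for two asset classes.
HISTORY = [
    {"equity": 0.10, "bond": 0.02},
    {"equity": -0.05, "bond": 0.01},
]

# Test several optimization parameters (here: candidate weights).
for weights in ({"equity": 0.5, "bond": 0.5}, {"equity": 0.2, "bond": 0.8}):
    base = backtest(HISTORY, weights)
    shocked = backtest(stress(HISTORY, {"equity": -0.30, "bond": 0.0}), weights)
    print(weights, round(base, 4), round(shocked, 4))
```

Every combination of parameters, period and stress scenario is an independent computation, which is what makes large scale backtesting a natural fit for distributed processing.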

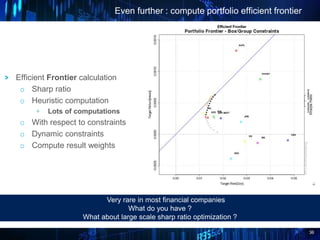

- 35. 36 Even further : compute portfolio efficient frontier. Efficient Frontier calculation o Sharpe ratio o Heuristic computation + Lots of computations o With respect to constraints o Dynamic constraints o Compute result weights. Very rare in most financial companies. What do you have ? What about large scale Sharpe ratio optimization ?
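
One very simple heuristic of the kind alluded to above is a random search over feasible weights. This is a sketch under assumptions, not the method used by any particular product: the expected returns, covariance matrix, maximum-weight constraint and function names are all invented for the example.

```python
import math
import random


def sharpe(weights, mean_returns, covariance, risk_free=0.0):
    """Sharpe ratio of a weight vector given expected returns and covariance."""
    expected = sum(w * m for w, m in zip(weights, mean_returns))
    variance = sum(
        wi * wj * covariance[i][j]
        for i, wi in enumerate(weights)
        for j, wj in enumerate(weights)
    )
    return (expected - risk_free) / math.sqrt(variance)


def random_search(mean_returns, covariance, max_weight=0.6, trials=5_000, seed=42):
    """Sample long-only weight vectors and keep the best feasible Sharpe ratio."""
    rng = random.Random(seed)
    n = len(mean_returns)
    best = [1.0 / n] * n  # equal weights; feasible when max_weight >= 1/n
    best_score = sharpe(best, mean_returns, covariance)
    for _ in range(trials):
        raw = [rng.random() for _ in range(n)]
        total = sum(raw)
        candidate = [x / total for x in raw]
        if max(candidate) > max_weight:
            continue  # violates the custom constraint
        score = sharpe(candidate, mean_returns, covariance)
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score


# Made-up inputs for three assets.
MEANS = [0.08, 0.05, 0.03]
COVARIANCE = [
    [0.04, 0.006, 0.0],
    [0.006, 0.01, 0.001],
    [0.0, 0.001, 0.0025],
]
best_weights, best_sharpe = random_search(MEANS, COVARIANCE)
print(best_weights, best_sharpe)
```

The trials are independent of one another, so the search can be split across many workers and the best result kept, which is where the "lots of computations" become a distribution problem rather than a modelling problem.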

- 36. 37 The good ol’way
  1. Excel (quantitative analysts): use an extract from the Operational IS … loaded in Excel; simulations / rebalancings are run from Excel; slow / ill-suited / buggy
  2. Calculation program (quantitative analysts): use an extract from the Operational IS … loaded in Matlab; simulations / rebalancings are run from Matlab; steep learning curve; poor user interface
  3. Proprietary / specialized software (Reuters, BB, …): to be integrated with the Operational IS; tricky / risky; expensive … inflexible

- 37. 38 The Big Data Way
  - Findings …
    - Quantitative research tooling is sub-optimal in most private banking institutions
    - Some needs are more or less covered
    - Yet we are far from a large scale and systemic approach to portfolio optimization and back-testing
  - Increase computing capacities with eXtreme parallelization / distribution: large scale distributed systems; move the computing code to the data
  - Reduce TCO: open source software stacks (Hadoop, Cassandra, Infinispan, Python SciKit, R, …); commodity hardware

- 38. 39 2.3 Leveraging customer data

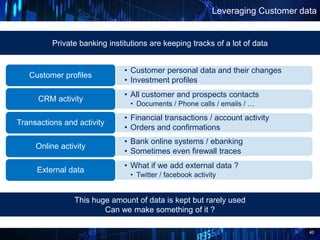

- 39. 40 Leveraging Customer data
  - Private banking institutions keep track of a lot of data:
    - Customer profiles: customer personal data and their changes; investment profiles
    - CRM activity: all customer and prospect contacts; documents / phone calls / emails / …
    - Transactions and activity: financial transactions / account activity; orders and confirmations
    - Online activity: bank online systems / e-banking; sometimes even firewall traces
    - External data: What if we add external data ? Twitter / Facebook activity
  - This huge amount of data is kept but rarely used
  - Can we make something of it ?

- 40. 41 Customer Data : new opportunities ? Opportunities can be examined from two perspectives: Big Data as a « cost killer » or an enhancer; Big Data as a way to « widen the field of possibilities ».

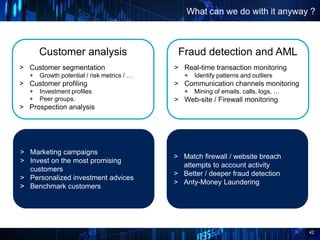

- 41. 42 What can we do with it anyway ?
  - Fraud detection and AML
    - Real-time transaction monitoring: identify patterns and outliers
    - Communication channels monitoring: mining of emails, calls, logs, …
    - Web-site / firewall monitoring
    - Outcomes: match firewall / website breach attempts to account activity; better / deeper fraud detection; Anti-Money Laundering
  - Customer analysis
    - Customer segmentation: growth potential / risk metrics / …
    - Customer profiling: investment profiles; peer groups
    - Prospecting analysis
    - Outcomes: marketing campaigns; invest in the most promising customers; personalized investment advice; benchmark customers
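
To make "identify patterns and outliers" concrete, here is a minimal sketch of one classic technique, the modified z-score based on the median absolute deviation. It is only an illustration: the amounts are invented, the function name is hypothetical, and production fraud detection relies on far richer features and models.

```python
import statistics


def flag_outliers(amounts, threshold=3.5):
    """Return the amounts whose modified z-score exceeds `threshold`.

    The modified z-score uses the median and the median absolute deviation
    (MAD), so a single extreme transaction does not distort the baseline.
    """
    median = statistics.median(amounts)
    mad = statistics.median([abs(a - median) for a in amounts])
    if mad == 0:
        return []  # no spread at all: nothing can be scored
    return [a for a in amounts if abs(0.6745 * (a - median) / mad) > threshold]


# Invented card transactions for one customer.
TRANSACTIONS = [120, 95, 130, 110, 105, 9800, 115]
print(flag_outliers(TRANSACTIONS))  # [9800]
```

Because the score depends only on one customer's own history, the same function can be evaluated independently per customer or per peer group as transactions stream in.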

- 42. 43 What can we do with it anyway ? (cont’d)
  - Online banking software
    - Advanced search on transactions: multi-criteria (date, name of shop, revenues, full-text search)
    - Annotate transactions, shops, compare, get advice, etc.
    - Long term logs of all transactions
    - Outcomes: User Experience Revolution; Marketing / Digital Experience; User-engineering
  - Know Your Customer
    - Customer 360 view …: everything with contacts, transactions, performance, risk metrics, …
    - Customer identification program: matching with Web and social networks
    - Outcomes: fast access to all customer data; unified view of the customer; real-time construction / display

- 43. 44 The Big Data Way
  - Manipulating, consolidating and mining all the data related to customer and/or user activities from heterogeneous sources is difficult
  - Some initiatives already exist in most institutions. However, they are most of the time limited to small and specific sets of data, or implemented on expensive technologies such as Teradata
  - Manipulate and consolidate very large volumes of data efficiently: HDFS and other No/NewSQL storages; highly distributed computing
  - Reduce TCO: open source software stacks (Hadoop, Cassandra, Infinispan, Python SciKit-Learn, R, …); commodity hardware

- 44. 45 3. How to get there ?

- 45. 46 Take Away
  - Improve Analytics System: reduce TCO / cost killer !
  - Extend the field of possibilities: consider use cases so far reserved to investment banking institutions; large scale portfolio optimization, simulation, rebalancing, back-testing, etc.; real-time metrics
  - Widen the field of possibilities: User Experience Revolution; Marketing / Digital Experience; User-engineering; Customer 360 view; KYC; greater depth of analysis

- 46. 47 Architecture: Big Data Deployment In Private Banking Institutions
  - Data acquisition
    - Live Market Data: instruments; quotes; index values
    - Historical Market Data: instruments; quotes; index values
    - External Data: Web API; Web searches; social networks (PULL mode)
    - Operational Data: transactions; account activity; mails / calls; logs; PMS (portfolios / positions); trades; accounts / structure; reference data
  - Collection and Analysis
    - InfiniSpan (DataGrid)
    - Storm (Real-time)
    - Cassandra (DB)
    - Hadoop / HDFS / HBase (data storage and analysis)
    - Expert System (portfolio simulation, optimization, etc.)
  - Restitution
    - Tulip / Hive / Pig (querying / data viz / results reporting)
    - Portfolios: simulation; optimization; rebalancing; backtesting; stress testing
    - Real-time and batch metrics: portfolio performance; risk metrics; real-time dashboards
    - Analysis: customer knowledge; fraud detection
  - Data flow labels: Live Mkt. Data; Market Data; Accounting; Reference; PMS Data; (Unstructured Data); Account Act.; Transactions

- 47. 48 How to get there ?
  - Steps: 1. Identify your potential use cases 2. Prioritize use cases with business experts / representatives 3. Identify technological leads / opportunities 4. Implement Proofs of Concept
  - Incremental / iterative way:
    - Import data within the target technology: one-time extract; scheduled extractors
    - Discover data and technology: Pig / Hive; DataViz; Storm, Infinispan
    - Automate / analyze data: new analysis; reporting; automation

Editor's Notes

- An evolving society. Yesterday, in 2008, we were amazed by the first smartphones. Today they have almost become a part of ourselves; we cannot go without them anymore. We look at our smartphone 150 times a day. Is it the biggest invention of the decade ? Likely, but of the previous decade, not the current one. Today: always connected / interconnected people. Tomorrow: the Internet of Things. The Internet of Things (IoT) refers to uniquely identifiable objects and their interconnection on the internet, as well as their automatic exchange of information with third party services.

- Consumerization: new information technologies emerge first in the consumer market and then spread into businesses. This is a change from the previous situation, where companies used to have better servers / desktops / applications / ... than those employees could buy at home. Now new solutions emerge every month and companies can't keep up. New trend: employees are hired with their devices and their applications. BYOD trend: employees are more comfortable and more efficient with their own devices. There is as much power in an iPad today as in a Cray a few years back. This consumerization can be found in infrastructures too and is an enabler for the consumer market. A direct consequence of consumerization: employees use a mix of professional and personal tools (Office Suite, Gmail, Google+, Twitter, Facebook, Dropbox, Evernote, ...). Nowadays several companies (private banks) still block access to these tools for their employees. Tomorrow, that won’t be possible anymore. People are used to being connected all the time, with highly efficient devices on highly responsive services, everywhere and for all kinds of uses.

- Global sales of PCs never really exploded. On the other hand, global sales of smartphones and tablets are exploding. Global mobile traffic went from 1% in 2009 to 4% in 2010 and 12% in 2012. Today it reaches 30%. In India, the wired telecommunication infrastructure could never be developed as it has been in Europe or in the US. There, mobile traffic already exceeded desktop traffic in 2012. In 2015, over 3 billion people will be connected all the time, everywhere and for all kinds of uses.

- 3 billion people connected in 2015. But let’s consider something else: the Internet of Things (IoT). The Internet of Things is the coming big thing ! Gartner: 26 billion devices on the Internet of Things by 2020. ABI Research: 30 billion devices will be wirelessly connected to the Internet of Things by 2020. Cars, watches, fridges, cameras, whole houses. Internet of Everything: “Cisco defines the Internet of Everything (IoE) as bringing together people, process, data, and things to make networked connections more relevant and valuable than ever before, turning information into actions that create new capabilities, richer experiences, and unprecedented economic...” The Internet of Everything is the coming evolution from the interconnection of people and objects, all the time, everywhere and for all kinds of uses.

- From the time we started estimating and measuring the amount of produced data until 2003, 5 exabytes (5 billion gigabytes) were produced. In 2011, that quantity was generated in 2 days (think of Facebook, Twitter, Google search logs, financial transaction logs, etc.). In 2014, this quantity is generated in 10 minutes. Not only do we generate more and more data, we also have the means and the technology to analyze, exploit and mine it and extract meaningful business insights. The data generated by the company’s own systems can be a very interesting source of information regarding customer behaviours, profiles, trends, desires, etc. But so can external data: Facebook, Twitter logs, etc. Twitter story: the Uber car transportation system in Paris. A driver refused to carry a customer because the customer was gay. That customer tweeted his misadventure. The driver was excluded by Uber only a few hours later. Instead of harming Uber’s reputation, the story rather gave it credit. This is just one example of how a company can gain a significant advantage by monitoring social network feeds.

- Data is produced absolutely everywhere ! Satellites are an interesting example to underline this « everywhere » aspect. BUT think of: Facebook / Twitter / LinkedIn -> on the internet; financial markets and transactions -> in financial institutions and on market places; cash dispensers / payment card transactions -> everywhere in the world. Or even think of: plane and train traffic -> electronically monitored -> monitoring data is published. Satellites are in space, but data is produced even underground: the NYC, London and Paris subways -> electronically monitored -> data is published as well. Data is produced absolutely everywhere and all the time.

- For a long time, the increasing volume of data to be handled was not an issue. The volume of data rises and the number of users rises, but the processing abilities rise as well, sometimes even more (see Moore's law above). This model held for a very long time. Costs go down, computing capacities rise, and one simply needs to buy a new machine to absorb the load increase. This is especially true in the mainframe world: there wasn’t even any need to make the architecture of the systems (COBOL, etc.) evolve for 30 years. Even outside the mainframe world, the architecture patterns and styles we use in the operational IS world haven’t really evolved for the last 15 years, despite new technologies such as Web, Web 2.0, Java, etc. (of course I’m just speaking about architecture and styles). The analytical systems architecture hasn’t evolved for the last 20 years. So everything’s fine ? No ! As we’ll see, at least two problems emerged relatively recently.

- 1st concern: the throughput. We are able to store more and more data, no problem. Yet we are less and less able to manipulate this data efficiently. Specifically, fetching all the data onto a computation machine to process it is becoming more and more difficult.

- The revolution came from the web giants. They had to find technical answers to business challenges like: GGL: how to index the whole web and keep the response time to any query below one second, and how to keep the search free for the user ? LINK: how to understand how millions of users use their website ? AMZ: how to build a product recommendation engine for millions of customers, on millions of products ? EBAY: how to search eBay ads, even with misspellings ?

- One challenge: how to handle the massive computation needs / massive amount of data ? -> New architectures and paradigms are required. 3 ideas …

- 4 classes of grid architecture

- This is an overview of what is currently investigated in financial companies regarding big data technologies and private banking use cases.
  - Investment research: various applications, all oriented towards trading, portfolio simulation, market research or development / testing of investment strategies / ideas.
  - Customer knowledge: covers everything around the customer base and CRM analysis, such as Know Your Customer (KYC) concerns, customer profiling, customer analysis, and customer documents (emails / calls / contracts) analysis.
  - Risk management: use cases are mostly oriented towards computing risk metrics and consolidated metrics more efficiently: quicker / real time, real-time monitoring, providing consolidated risk dashboards.
  - Compliance and monitoring: use cases essentially cover various fraud detection issues and compliance assertions: pre / post trade compliance verifications, communications monitoring, AML.

- We have seen the playing fields available to us. Here is now a focus on 4 specific cases. TODO: the expected gains (typically ROI). TODO: the architecture slides in the appendix.

- Why the web giants, and the opening of the solution to the outside world: Google search engine, commodities. Software: somewhat overwhelmed by the situation, a follower.

- No real-time / intraday values, and computations are simply not possible. Intraday computations are implemented in the operational IS. Some put everything in memory -> huge cluster required / reload time issue.

- Data is the new Black Gold. A problem in several businesses today: lack of business insight => difficulty making sound decisions / following the pace of today’s market. Big Data: a tremendous opportunity to drill down and tap into these critical insights, hence the comparison with crude oil => we’ll try to prove this statement in the following presentation. As an introduction -> raising awareness of: the data problem; the dimensions of data (all the time / everywhere); the new emerging patterns around data in Information Systems.

- 1. Big Data as cost killer or enhancer -> make the things we are already doing either cheaper or better. Various opportunities of this kind can be found simply by asking ourselves “what compromises have we made at a functional level within the Information System due to limitations of the underlying technology ?” One example: archiving. Several banks remove the oldest account activity trails or the oldest financial transactions from the Operational Information System and store them in archive databases. This is required to reduce the size of the operational database and keep it efficient. What if that no longer made sense and information from 20 years ago were still available in the live database ? 2. Big Data as a way to widen the field of possibilities. This time we ask ourselves “what functional stake / requirement / idea did we give up on because of limitations of the underlying technology ?” We’ll see some examples soon…

- Premium market development !