Hive paris

- 1. Szehon Ho 右岸的代碼 Meetup Paris, France July 2017

- 2. Silicon Valley (2007-2016) Informatica (Metadata, Data Integration) Cloudera (Big Data Software) Open Source Software (2014-2016) Apache Hive Committer/ PMC Hive on Spark Criteo in Paris (2016- now) Biggest Hadoop Cluster in Europe Analytic Data Storage Team: Hive

- 3. Big Data Technologies Storage (Hadoop File System) Processing (Hadoop MapReduce) User Interface (Hive) New Technologies (Spark, Hive on Spark) Other (Open Source in Big Data)

- 4. Hadoop File System (HDFS) Each File replicated 3 times for backup Each File divided across many blocks, on different data nodes Reading one file is in parallel (can read from many nodes) Files are recoverable even if a few nodes fail Namenode knows where all the files are stored

- 5. Execution: Map Reduce (MR) Mappers (Many processes read HDFS file/files in parallel) Shuffle (Mappers trade output to other nodes in parallel) Reduce (More processes write final output in parallel) Java Application (JAR) provides mapper and reducer

- 6. Interface for accessing Hadoop FileSystem and MapReduce One of the most common ways to access (easier than writing a jar) Reuses concepts that have been around for decades: Relational Database (tables) and SQL (standard query language) Allow analysts to easily move from traditional data systems to big data world SQL Hadoop MapReduce : Compile, optimize, execute job, monitor job, result

- 7. Ad Servers Vertica Business Intelligence Hive MapReduce Catalog Databases Kafka Sqoop Data Sources Ingestion Data Pipelines Machine Learning HDFS

- 8. • SQL: INSERT INTO TABLE pv_users SELECT pv.pageid, u.age FROM page_view pv JOIN user u ON (pv.userid = u.userid); pageid userid time 1 111 9:08:01 2 111 9:08:13 1 222 9:08:14 userid age gender 111 25 female 222 32 male pageid age 1 25 2 25 1 32 X = page_view user pv_users

- 9. key value 111 <pv,1> 111 <pv,2> 222 <pv,1> key value 111 <u,25 > 222 <u,32 > pageid userid time 1 111 9:08:01 2 111 9:08:13 1 222 9:08:14 userid age gender 111 25 female 222 32 male page_view user Map key value 111 <pv,1> 111 <pv,2> 111 <u,25> key value 222 <pv,1> 222 <u,32 > Sort§ Reduce Shuffle

- 10. But Multi-Stage Queries spawn multiple jobs Takes a long time, intermediate data written to disk Select a.name, b.name, c.name From a join b on (a.id = b.id) join c on (a.id = c.id) join d on (a.id = d.id) Maps Reducers Hive Query Maps Reducers Maps Reducers HDFS

- 11. New Execution: Spark Replaces MapReduce: Abstract out HDFS Files into RDD (Resilient Distributed Dataset) RDD can be transformed as many times, kept in memory until needed. Better for jobs of many iterations

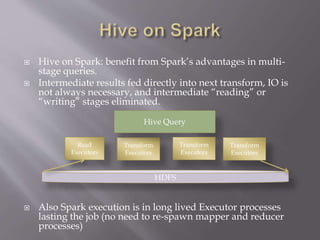

- 12. Hive on Spark: benefit from Spark’s advantages in multi- stage queries. Intermediate results fed directly into next transform, IO is not always necessary, and intermediate “reading” or “writing” stages eliminated. Also Spark execution is in long lived Executor processes lasting the job (no need to re-spawn mapper and reducer processes) Read Executors Transform Executors Hive Query Transform Executors Transform Executors HDFS

- 13. Spark has its own challenges Newer, less tuning information than MapReduce Requires more memory More tuning options How many executors How many Transform tasks each executor runs How much memory to give to each executor Mappers and Reducers relatively less intensive in memory due to simplicity (reads one row, process, write to disk)

- 14. Hadoop came from Google Papers Hadoop developed at Yahoo, contributed by big companies: Twitter, Facebook, LinkedIn, NTT,… Hive developed at Facebook, contributed by the big companies: Twitter, Yahoo, LinkedIn, Msft, Netflix,... Hadoop Vendors: Cloudera and Hortonworks sell software/support of Hadoop and stack such as Hive and have become biggest contributors Spark developed by UC Berkeley, now fastest growing project

- 15. Innovates very rapidly, tens or hundreds of contributors working at the same time. Some politics between companies (forks, preferences to support some other components). Particularly successful in infrastructure, platform software. Every big company needs it It is usually not worth it to build it yourself as the main business value is built on top of it

- 16. Thank you