Microsoft SQL server 2017 Level 300 technical deck

- 1. SQL Server 2017 Everything Built In—Technical Overview

- 2. United platform for the modern service provider CUSTOMER DATACENTER SERVICE PROVIDER MICROSOFT AZURE CONSISTENT PLATFORM Enterprise-grade Global reach, scale, and security to meet business demands Hybrid cloud Consistent platform across multiple environments and clouds People-focused Expands technical skill sets to the cloud for new innovation Microsoft vision for a new era

- 3. End-to-end mobile BI on any device Choice of platform and language Most secure over the last 7 years 0 20 40 60 80 100 120 140 160 180 200 Vulnerabilities(2010-2016) A fraction of the cost Self-serviceBIperuser Only commercial DB with AI built-in Microsoft Tableau Oracle $120 $480 $2,230 Industry-leading performance 1/10 Most consistent data platform #1 OLTP performance #1 DW performance #1 price/performance T-SQL Java C/C++ C#/VB.NET PHP Node.js Python Ruby R R and Python + in-memory at massive scale Native T-SQL scoring S Q L S E R V E R 2 0 1 7 I N D U S T R Y - L E A D I N G P E R F O R M A N C E A N D S E C U R I T Y N O W O N L I N U X A N D D O C K E R Private cloud Public cloud In-memory across all workloads 1/10th the cost of Oracle

- 4. SQL Server 2017 Meeting you where you are It’s the same SQL Server Database Engine that has many features and services available for all your applications—regardless of your operational ecosystem. Linux Any data Any application Anywhere Choice of platform T-SQL Java C/C++ C#/VB.NET PHP Node.js Python Ruby 1010 0101 0010 { }

- 5. How we develop SQL • Cloud-first but not cloud-only • Use SQL Database to improve core SQL Server features and cadence • Many interesting and compelling on-premises cloud scenarios SQL Server and APS Azure SQL Virtual Machines Azure SQL Database Azure SQL Data Warehouse

- 6. A consistent experience from SQL Server on-premises to Microsoft Azure IaaS and PaaS • On-premises, private cloud, and public cloud • SQL Server local (Windows and Linux), VMs (Windows and Linux), containers, and SQL Database • Common development, management, and identity tools including Active Directory, Visual Studio, Hyper-V, and System Center • Scalability, availability, security, identity, backup and restore, and replication • Many data sources • Reporting, integration, processing, and analytics All supported in the hybrid cloud Consistency and integration

- 8. Database Engine new features Linux/Docker support • RHEL, Ubuntu, SLES, and Docker Adaptive query processing • Faster queries just by upgrading • Interleaved execution • Batch-mode memory grant feedback • Batch-mode adaptive joins

- 9. Database Engine new features Graph • Store relationships using nodes/edges • Analyze interconnected data using node/edge query syntax SELECT r.name FROM Person AS p, likes AS l1, Person AS p2, likes AS l2, Restaurant AS r WHERE MATCH(p-(l1)->p2-(l2)->r) AND p.name = 'Chris' Automatic tuning • Automatic plan correction—identify, and optionally fix, problematic query execution plans causing query performance problems • Automatic index management—make index recommendations (Azure SQL Database only)

- 10. Database Engine new features Enhanced performance for natively compiled T-SQL modules • OPENJSON, FOR JSON, JSON • CROSS APPLY operations • Computed columns New string functions • TRIM, CONCAT_WS, TRANSLATE, and STRING_AGG with support for WITHIN GROUP (ORDER BY) Bulk import now supports CSV format and Azure Blob storage as file source

- 11. Database Engine new features Native scoring with T-SQL PREDICT Resumable online index rebuild • Pause/resume online index rebuilds Clusterless read-scale availability groups • Unlimited, geo-distributed, linear read scaling P S1 S2 S3 S4

- 12. Integration Services new features Integration Services scale out • Distribute SSIS package execution more easily across multiple workers, and manage executions and workers from a single master computer Integration Services on Linux • Run SSIS packages on Linux computers • Currently some limitations Connectivity improvements • Connect to the OData feeds of Microsoft Dynamics AX Online and Microsoft Dynamics CRM Online with the updated OData components

- 13. Analysis Services new features 1400 Compatibility level for tabular models Object level security for tabular models Get data enhancements • New data sources, parity with Power BI Desktop and Excel 2016 • Modern experience for tabular models Enhanced ragged hierarchy support • New Hide Members property to hide blank members in ragged hierarchies Detail Rows • Custom row set contributing to a measure value • Drillthrough action in more detail than the aggregated level in tabular models

- 14. Reporting Services new features Comments • Comments are now available for reports, to add perspective and collaborate with others—you can also include attachments with comments Broader DAX support • With Report Builder and SQL Server Data Tools, you create native DAX queries against supported tabular data models by dragging desired fields to the query designers Standalone installer • SSRS is no longer distributed through SQL Server setup • Power BI Report Server

- 15. Machine Learning Services new features Python support • Python and R scripts are now supported • Revoscalepy—Pythonic equivalent of RevoScaleR—parallel algorithms for data processing with a rich API MicrosoftML • Package of machine learning algorithms and transforms (with Python bindings), as well as pretrained models for image extraction or sentiment analysis

- 17. Multiple data types Heterogeneous environments Different development languages On-premises, cloud, and hybrid environments enterprise DB market runs on Linux 36% Evolution of SQL Server HDInsight on Linux R Server on Linux Linux in Azure SQL Server drivers and connectivity Visual Studio Code extension for SQL Server T-SQL Java C/C++ C#/VB.NET PHP Node.js Python Ruby 1010 0101 0010 { } 20K+applications for private preview

- 18. Power of the SQL Server Database Engine on the platform of your choice Linux distributions: RedHat Enterprise Linux (RHEL), Ubuntu, and SUSE Linux Enterprise Server (SLES) Docker: Windows and Linux containers Windows Server/Windows 10 Linux Linux/Windows container Windows

- 19. Buying a SQL Server license gives you the option to use it on Windows Server, Linux, or Docker. Regardless of where you run it— VM, Docker, physical, cloud, on- premises—the licensing model is the same; available features depend on which edition of SQL Server you use. LICENSE Same license, new choice

- 21. Supported platforms Platform Supported version(s) Supported file system(s) Red Hat Enterprise Linux 7.3 XFS or EXT4 SUSE Linux Enterprise Server v12 SP2 EXT4 Ubuntu 16.04 EXT4 Docker Engine (on Windows, Mac, or Linux) 1.8+ N/A System requirements for SQL Server on Linux

- 22. Cross-system architecture SQL Platform Abstraction Layer (SQLPAL) RDBMS AS IS RS Windows Linux Windows Host Extension. Linux Host Extension SQL Platform Abstraction Layer (SQLPAL) Host extension mapping to OS system calls (IO, memory, CPU scheduling) Win32-like APIsSQL OS API SQL OS v2 System resource and latency sensitive code paths Everything else

- 23. Installing SQL Server on Linux Add the SQL Server repository to your package manager Install the mssql-server package Run mssql-conf setup to configure SA password and edition Configure the firewall to allow remote connections (optional) SQL Server on Linux overview page sudo curl -o /etc/yum.repos.d/mssql-server.repo https://packages.microsoft.com/config/rhel/7/mssql-server-2017.repo sudo yum update sudo yum install -y mssql-server sudo /opt/mssql/bin/mssql-conf setup sudo firewall-cmd --zone=public --add-port=1433/tcp --permanent sudo firewall-cmd --reload

- 24. What’s installed? SQL Server runtime and associated libraries: /opt/mssql/bin/ /opt/mssql/lib/ /opt/mssql/ Data and log files for SQL Server databases: /var/opt/mssql/data/ /var/opt/mssql/log/ /var/opt/mssql/

- 25. Tools and programmability • Windows-based SQL Server tools—like SSMS, SSDT, and Profiler—work when connected to SQL Server on Linux • All existing drivers and frameworks supported • Third-party tools continue to work • Native command-line tools—sqlcmd, bcp • Visual Studio Code mssql extension

- 26. Client connectivity Language Platform More Details C# Windows, Linux, macOS Microsoft ADO.NET for SQL Server Java Windows, Linux, macOS Microsoft JDBC Driver for SQL Server PHP Windows, Linux, macOS PHP SQL Driver for SQL Server Node.js Windows, Linux, macOS Node.js Driver for SQL Server Python Windows, Linux, macOS Python SQL Driver Ruby Windows, Linux, macOS Ruby Driver for SQL Server C++ Windows, Linux, macOS Microsoft ODBC Driver for SQL Server SQL Server client drivers are available for many programming languages, including:

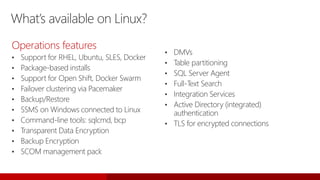

- 27. What’s available on Linux? Operations features • Support for RHEL, Ubuntu, SLES, Docker • Package-based installs • Support for Open Shift, Docker Swarm • Failover clustering via Pacemaker • Backup/Restore • SSMS on Windows connected to Linux • Command-line tools: sqlcmd, bcp • Transparent Data Encryption • Backup Encryption • SCOM management pack • DMVs • Table partitioning • SQL Server Agent • Full-Text Search • Integration Services • Active Directory (integrated) authentication • TLS for encrypted connections

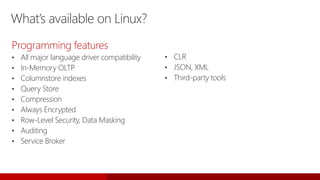

- 28. What’s available on Linux? Programming features • All major language driver compatibility • In-Memory OLTP • Columnstore indexes • Query Store • Compression • Always Encrypted • Row-Level Security, Data Masking • Auditing • Service Broker • CLR • JSON, XML • Third-party tools

- 29. Features not currently supported on Linux

- 31. In-Memory OLTP

- 32. In-Memory Online Transaction Processing (OLTP) In-Memory OLTP is the premier technology available in SQL Server and Azure SQL Database for optimizing performance of transaction processing, data ingestion, data load, and transient data scenarios. Memory-optimized tables outperform traditional disk-based tables, leading to more responsive transactional applications. Memory-optimized tables also improve throughput and reduce latency for transaction processing, and can help improve performance of transient data scenarios such as temp tables and ETL.

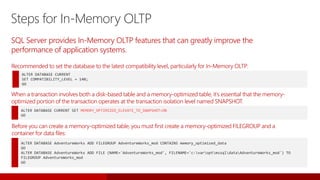

- 33. SQL Server provides In-Memory OLTP features that can greatly improve the performance of application systems. Steps for In-Memory OLTP ALTER DATABASE CURRENT SET COMPATIBILITY_LEVEL = 140; GO Recommended to set the database to the latest compatibility level, particularly for In-Memory OLTP: ALTER DATABASE CURRENT SET MEMORY_OPTIMIZED_ELEVATE_TO_SNAPSHOT=ON GO When a transaction involves both a disk-based table and a memory-optimized table, it’s essential that the memory- optimized portion of the transaction operates at the transaction isolation level named SNAPSHOT. ALTER DATABASE AdventureWorks ADD FILEGROUP AdventureWorks_mod CONTAINS memory_optimized_data GO ALTER DATABASE AdventureWorks ADD FILE (NAME='AdventureWorks_mod', FILENAME='c:varoptmssqldataAdventureWorks_mod') TO FILEGROUP AdventureWorks_mod GO Before you can create a memory-optimized table, you must first create a memory-optimized FILEGROUP and a container for data files:

- 34. Memory-optimized tables In short, memory-optimized tables are stored in main memory as opposed to on disk. Memory-optimized tables are fully durable by default; data is persisted to disk in the background. Memory-optimized tables can be accessed with T-SQL, but are accessed more efficiently with natively compiled stored procedures.

- 35. Memory-optimized tables The primary store for memory-optimized tables is main memory; unlike disk-based tables, data does not need to be read in to memory buffers from disk. CREATE TABLE dbo.ShoppingCart ( ShoppingCartId INT IDENTITY(1,1) PRIMARY KEY NONCLUSTERED, UserId INT NOT NULL INDEX ix_UserId NONCLUSTERED HASH WITH (BUCKET_COUNT=1000000), CreatedDate DATETIME2 NOT NULL, TotalPrice MONEY ) WITH (MEMORY_OPTIMIZED=ON) GO To create a memory-optimized table, use the MEMORY_OPTIMIZED = ON clause INSERT dbo.ShoppingCart VALUES (8798, SYSDATETIME(), NULL) INSERT dbo.ShoppingCart VALUES (23, SYSDATETIME(), 45.4) INSERT dbo.ShoppingCart VALUES (80, SYSDATETIME(), NULL) INSERT dbo.ShoppingCart VALUES (342, SYSDATETIME(), 65.4) Insert records into the table

- 36. Natively compiled stored procedures Natively compiled stored procedures are Transact-SQL stored procedures that are compiled to native code and can access memory-optimized tables. For information on creating natively complied stored procedures, see: https://docs.microsoft.com/en-us/sql/relational-databases/in-memory-oltp/creating-natively-compiled-stored-procedures Natively compiled stored procedures implement a subset of T-SQL. For more information, see: https://docs.microsoft.com/en-us/sql/relational-databases/in-memory-oltp/supported-features-for-natively-compiled-t-sql-modules This allows for efficient execution of the queries and business logic in the stored procedure. Native compilation enables faster data access and more efficient query execution than interpreted (traditional) Transact-SQL.

- 37. ALTER TABLE Sales.SalesOrderDetail ALTER INDEX PK_SalesOrderID REBUILD WITH (BUCKET_COUNT=100000000) T-SQL surface area: New {LEFT|RIGHT} OUTER JOIN Disjunction (OR, NOT) UNION [ALL] SELECT DISTINCT Subqueries (EXISTS, IN, scalar) Better T-SQL coverage, including: • Full collations support in native modules • Query surface area improvements • Nested stored procedures (EXECUTE) • Natively compiled scalar user-defined functions • Query Store support Other improvements: • Full schema change support: add/alter/drop column/constraint • Increased size allowed for durable tables • ALTER TABLE support • Multiple Active Results Sets (MARS) support In-Memory OLTP enhancements (SQL Server 2016)

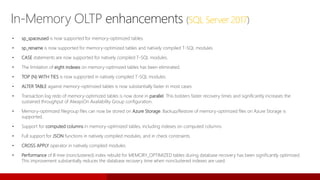

- 38. In-Memory OLTP enhancements (SQL Server 2017) • sp_spaceused is now supported for memory-optimized tables. • sp_rename is now supported for memory-optimized tables and natively compiled T-SQL modules. • CASE statements are now supported for natively compiled T-SQL modules. • The limitation of eight indexes on memory-optimized tables has been eliminated. • TOP (N) WITH TIES is now supported in natively compiled T-SQL modules. • ALTER TABLE against memory-optimized tables is now substantially faster in most cases. • Transaction log redo of memory-optimized tables is now done in parallel. This bolsters faster recovery times and significantly increases the sustained throughput of AlwaysOn Availability Group configuration. • Memory-optimized filegroup files can now be stored on Azure Storage. Backup/Restore of memory-optimized files on Azure Storage is supported. • Support for computed columns in memory-optimized tables, including indexes on computed columns. • Full support for JSON functions in natively compiled modules, and in check constraints. • CROSS APPLY operator in natively compiled modules. • Performance of B-tree (nonclustered) index rebuild for MEMORY_OPTIMIZED tables during database recovery has been significantly optimized. This improvement substantially reduces the database recovery time when nonclustered indexes are used.

- 40. Real-time analytics/HTAP SQL Server’s support for columnstore and In-Memory allows you to generate analytics in real time, direct from your transactional databases. This pattern is called Hybrid Transactional and Analytical Processing (HTAP), because it combines OLTP and OLAP in one database. • Analytics can be performed on operational data with minimal overhead • Improving the timeliness of analytics adds significant business value

- 41. Traditional operational/analytics architecture Key issues • Complex implementation • Requires two servers (capital expenditures and operational expenditures) • Data latency in analytics • High demand—requires real-time analytics IIS Server BI analysts

- 42. Minimizing data latency for analytics Challenges • Analytics queries are resource intensive and can cause blocking • Minimizing impact on operational workloads • Sub-optimal execution of analytics on relational schema Benefits • No data latency • No ETL • No separate data warehouse BI analysts IIS Server

- 43. Real-time analytics/HTAP The ability to run analytics queries concurrently with operational workloads using the same schema. Goals: • Minimal impact on operational workloads with concurrent analytics • Performance analytics for operational schema Not a replacement for: • Extreme analytics performance queries that are possible only using customized schemas (for example, Star/Snowflake) and preaggregated cubes • Data coming from nonrelational sources • Data coming from multiple relational sources requiring integrated analytics

- 45. HTAP with columnstore index Key points • Create an updateable NCCI for analytics queries • Drop all other indexes that were created for analytics • No application changes • Columnstore index is maintained just like any other index • Query optimizer will choose columnstore index where needed B-tree index Delta row groups Nonclustered columnstore index (NCCI)

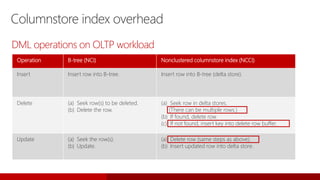

- 46. Columnstore index overhead Operation B-tree (NCI) Nonclustered columnstore index (NCCI) Insert Insert row into B-tree. Insert row into B-tree (delta store). Delete (a) Seek row(s) to be deleted. (b) Delete the row. (a) Seek row in delta stores. (There can be multiple rows.) (b) If found, delete row. (c) If not found, insert key into delete row buffer. Update (a) Seek the row(s). (b) Update. (a) Delete row (same steps as above). (b) Insert updated row into delta store. DML operations on OLTP workload

- 47. Minimizing columnstore overhead Key points • Create a columnstore only on cold data by using filtered predicate to minimize maintenance • Analytics query accesses both columnstore and “hot” data transparently • Example: • Order management application: create nonclustered columnstore index where order_status = “SHIPPED” B-tree index Nonclustered columnstore index—filtered index B-tree index Delta row groups

- 48. Using Availability Groups instead of data warehouses Key points • Mission critical operational workloads typically configured for high availability using Always On Availability Groups • You can offload analytics to readable secondary replicaSecondary replica Secondary replica Secondary replica Primary replica Always On Availability Group

- 50. Columnstore on In-Memory tables No explicit delta row group • Rows (tail) not in columnstore stay in In-Memory OLTP table • No columnstore index overhead when operating on tail • Background task migrates rows from tail to columnstore in chunks of 1 million rows • Deleted Rows Table (DRT)—tracks deleted rows • Columnstore data fully resident in memory • Persisted together with operational data • No application changes required In-Memory OLTP table Deleted rows table Tail Nonclustered index Hash index Columnstore index

- 51. Operational analytics: Columnstore overhead Operation Hash or range index HK-CCI Insert Insert row into HK. Insert row into HK. Delete (a) Seek the row(s) to be deleted. (b) Delete the row. (a) Seek the row(s) to be deleted. (b) Delete the row in HK. (c) If the row is in the TAIL, then return. If not, insert <colstore-RID> into DRT. Update (a) Seek the row(s) to be updated. (b) Update (delete/insert). (a) Seek the row(s) to be updated. (b) Update (delete/insert) in HK. (c) If the row is in the TAIL, then return. If not, insert <colstore-RID> into DRT. DML operations on In-Memory OLTP

- 52. Minimizing columnstore index overhead In-Memory OLTP table Updateable CCI TailDRT Hash index Syntax: Create nonclustered columnstore index <name> on <table> (<columns>) with (compression_delay = 30) Key points • Delta rowgroup is only compressed after compression_delay duration • Minimizes/eliminates index fragmentation

- 54. Mission critical availability • Provides unified, simplified solution • Streamlines deployment, management, and monitoring • Reuses existing investments • Offers SAN/DAS environments • Allows use of HA hardware resources • Supports fast, transparent failover • Detects failures reliably • Handles multiple failures at once

- 55. Always On • Failover on SQL Server instance level • Shared storage (SAN/SMB) • Failover can take minutes based on load • Multi-node clustering • Passive secondary nodes • Failover on database level • Direct attached storage • Failover takes seconds • Multiple secondaries • Active secondaries Failover cluster instances for servers Availability Groups for groups of databases

- 56. Cluster nodeCluster node Failover cluster instances Server failover Shared storage Multi-node clustering Passive secondary nodes Failover in minutes Windows and Linux failover clusters are supported SQL Server 2017 Shared storage SQL Server 2017SQL Server failover cluster instance

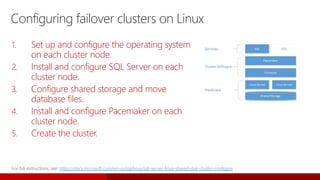

- 57. Configuring failover clusters on Linux 1. Set up and configure the operating system on each cluster node. 2. Install and configure SQL Server on each cluster node. 3. Configure shared storage and move database files. 4. Install and configure Pacemaker on each cluster node. 5. Create the cluster. https://docs.microsoft.com/en-us/sql/linux/sql-server-linux-shared-disk-cluster-configure

- 58. Always On Availability Groups Availability Groups: High availability and disaster recovery solution where one or several databases failover together. SQL Server 2017 supports one primary, and up to eight secondaries, for a total of nine replicas. Secondaries can be enabled as read-only replicas, which can be load balanced. Storage Storage Failover cluster Failover cluster Azure region Azure region

- 59. High availability and disaster recovery • Resilience against guest and OS level failures • Planned and unplanned events • Minimum downtime for patching and upgrades • Minutes RTO Simple HADR VM failure • Protection against accidental or malicious data corruption • DR protection • Minutes to hours RTO Backup/restore • Instance level protection • Automatic failure detection and failover • Seconds to minutes RTO • Resilience against OS and SQL Server failures Standard HADR Failover cluster • AG with two replicas • Replaces Database Mirroring Basic Availability Groups • Warm standbys for DR Log shipping • Database level protection • Seconds RTO • No data loss • Recover from unplanned outage • No downtime for planned maintenance • Offload read/backup workload to active secondaries • Failover to geographically distributed secondary site Availability Groups Mission critical HADR

- 60. Availability Groups and failover clustering (Windows) Always On: Failover Cluster Instances and Availability Groups work together to ensure data is accessible despite failures Windows Server Failover Clustering (WSFC) cluster Network Subnet Network Subnet Storage Node NodeNodeNodeNode SQL Server Instance SQL Server Instance SQL Server Instance AlwaysOn SQL Server Failover Cluster Instance Secondary Replica Secondary Replica Always On Availability Group Instance Network Name WSFC Configuration WSFC Configuration WSFC Configuration WSFC Configuration WSFC Configuration Instance Network Name Instance Network Name Instance Network Name Availability Group Listener Virtual Network Name Storage Storage Shared Storage Secondary Replica Primary Replica

- 61. Network Subnet Network Subnet Storage Node NodeNodeNodeNode SQL Server Instance SQL Server Instance SQL Server Instance AlwaysOn SQL Server Failover Cluster Instance Instance Network Name Pacemaker Configuration Pacemaker Configuration Pacemaker Configuration Pacemaker Configuration Pacemaker Configuration Instance Network Name Instance Network Name Instance Network Name Storage Storage Shared Storage Availability Groups and failover clustering (Linux) Always On: Failover Cluster Instances and Availability Groups work together to ensure data is accessible despite failures Pacemaker Cluster Pacemaker cluster virtual IP DNS name (manual registration) Secondary Replica Secondary Replica Always On Availability Group Secondary Replica Primary Replica

- 62. Always On cross-platform capabilities Mission critical availability on any platform • Always On Availability Groups for Linux NEW* and Windows for HA and DR • Flexibility for HA architectures NEW* • Ultimate HA with OS-level redundancy and failover • Load balancing of readable secondaries •High Availability •Offload backups •Scale BI reporting •Enables testing •Enables migrations

- 63. Greater scalability • Load balancing readable secondaries • Increased number of automatic failover targets • Log transport performance • Distributed Availability Groups Improved manageability • DTC support • Database-level health monitoring • Group Managed Service Account • Domain-independent Availability Groups • Basic HA in Standard Edition AG_Listener New York (Primary) Asynchronous data movement Synchronous data movement Unified HA solution Enhanced Always On Availability Groups (SQL Server 2016) Hong Kong (Secondary) New Jersey (Secondary)

- 64. Guarantee commits on synchronous secondary replicas Use REQUIRED_COPIES_TO_COMMIT with CREATE AVAILABILITY GROUP or ALTER AVAILABILITY GROUP. When REQUIRED_COPIES_TO_COMMIT is set to a value higher than 0, transactions at the primary replica databases will wait until the transaction is committed on the specified number of synchronous secondary replica database transaction logs. If enough synchronous secondary replicas are not online, write transactions to primary replicas will stop until communication with sufficient secondary replicas resumes. Enhanced Always On Availability Groups (SQL Server 2017) AG_Listener New York (Primary) Asynchronous data movement Synchronous data movement Unified HA solution Hong Kong (Secondary) New Jersey (Secondary)

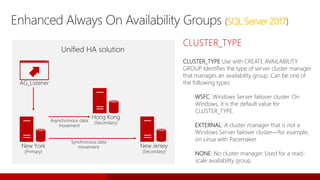

- 65. CLUSTER_TYPE CLUSTER_TYPE Use with CREATE AVAILABILITY GROUP. Identifies the type of server cluster manager that manages an availability group. Can be one of the following types: WSFC: Windows Server failover cluster. On Windows, it is the default value for CLUSTER_TYPE. EXTERNAL: A cluster manager that is not a Windows Server failover cluster—for example, on Linux with Pacemaker. NONE: No cluster manager. Used for a read- scale availability group. Enhanced Always On Availability Groups (SQL Server 2017) AG_Listener New York (Primary) Asynchronous data movement Synchronous data movement Unified HA solution Hong Kong (Secondary) New Jersey (Secondary)

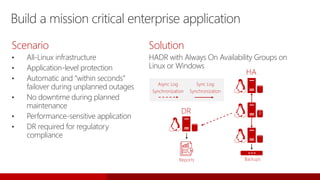

- 66. DR Build a mission critical enterprise application Scenario • All-Linux infrastructure • Application-level protection • Automatic and “within seconds” failover during unplanned outages • No downtime during planned maintenance • Performance-sensitive application • DR required for regulatory compliance Solution HADR with Always On Availability Groups on Linux or Windows HA P BackupsReports Sync Log Synchronization Async Log Synchronization

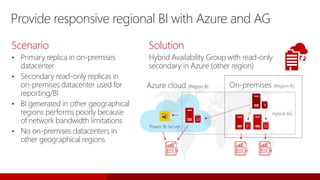

- 67. Provide responsive regional BI with Azure and AG Scenario • Primary replica in on-premises datacenter • Secondary read-only replicas in on-premises datacenter used for reporting/BI • BI generated in other geographical regions performs poorly because of network bandwidth limitations • No on-premises datacenters in other geographical regions Solution Hybrid Availability Group with read-only secondary in Azure (other region) P S1 S3 S2 Hybrid AG

- 68. Scale/DR with Distributed Availability Groups Scenario • Availability Group must span multiple datacenters • Not possible to add all servers to a single WSFC (datacenter networks/inter-domain trust) • Secondary datacenter provides DR • Geographically distributed read-only replicas required Solution Distributed Always On Availability Groups on Linux or Windows Async Log Synchronization

- 69. Migration/testing Scenarios • ISV solution built on SQL Server on Windows • Linux Certification • Enterprise moving to an all-Linux infrastructure • Rigorous business requirements • Seamless migration Solution Minimum downtime and HA for cross- platform migrations with Distributed Availability Groups Migration/testing

- 70. Improve read concurrency with read-scale Availability Groups Scenario • SaaS app (website) • Catalog database with high volume of concurrent read-only transactions • Bottlenecks on Availability Groups primary due to read workloads • Increased response time • HA/DR elements of Availability Groups not required Solution Read-scale Availability Groups • No cluster required • Both Linux and Windows P S1 S2 S3 S4

- 71. Automatic tuning

- 72. Automatic tuning Automatic plan correction identifies problematic plans and fixes SQL plan performance problems: Adapt Verify Learn

- 73. Automatic plan choice detection sys.dm_db_tuning_recommendations

- 74. Automatic plan correction sys.dm_db_tuning_recommendations by enabling the AUTOMATIC_TUNING database property: ALTER DATABASE current SET AUTOMATIC_TUNING ( FORCE_LAST_GOOD_PLAN = ON );

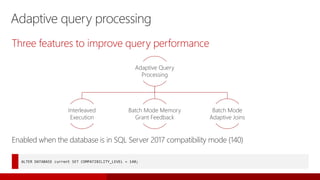

- 76. Adaptive query processing Three features to improve query performance Enabled when the database is in SQL Server 2017 compatibility mode (140) ALTER DATABASE current SET COMPATIBILITY_LEVEL = 140; Adaptive Query Processing Interleaved Execution Batch Mode Memory Grant Feedback Batch Mode Adaptive Joins

- 77. Query processing and cardinality estimation When estimates are accurate (enough), we make informed decisions around order of operations and physical algorithm selection CE uses a combination of statistical techniques and assumptions During optimization, the cardinality estimation (CE) process is responsible for estimating the number of rows processed at each step in an execution plan

- 78. Common reasons for incorrect cardinality estimates Missing statistics Stale statistics Inadequate statistics sample rate Bad parameter sniffing scenarios Out-of-model query constructs • For example, MSTVFs, table variables, XQuery Assumptions not aligned with data being queried • For example, independence versus correlation

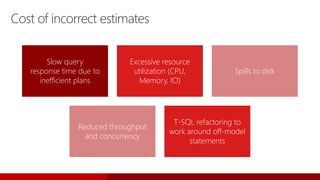

- 79. Cost of incorrect estimates Slow query response time due to inefficient plans Excessive resource utilization (CPU, Memory, IO) Spills to disk Reduced throughput and concurrency T-SQL refactoring to work around off-model statements

- 80. Interleaved execution Pre 2017 2017+ 100 rows guessed for MSTVFs MSTVF identified 500,000 rows assumed Performance issues if skewed Execute MSTVF Good performance Problem: Multi-statement table valued functions (MSTVFs) are treated as a black box by QP and we use a fixed optimization guess. Interleaved execution will materialize row counts for MSTVFs. Downstream operations will benefit from the corrected MSTVF cardinality estimate. Optimize Execute Optimize Execute Optimize Execute…

- 81. Batch mode memory grant feedback Problem: Queries can spill to disk or take too much memory, based on poor cardinality estimates. Memory grant feedback (MGF) will adjust memory grants based on execution feedback. MGF will remove spills and improve concurrency for repeating queries.

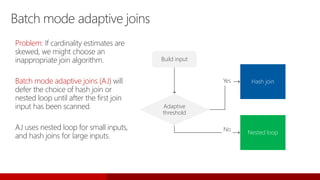

- 82. Batch mode adaptive joins Problem: If cardinality estimates are skewed, we might choose an inappropriate join algorithm. Batch mode adaptive joins (AJ) will defer the choice of hash join or nested loop until after the first join input has been scanned. AJ uses nested loop for small inputs, and hash joins for large inputs. Build input Adaptive threshold Hash join Nested loop Yes No

- 83. About interleaved execution Expected performance improvements? Benefits workloads with skews and downstream operations 0 20 40 60 80 100 120 140 160 A B Original Exec Time (sec) Interleaved Execution Time (sec)

- 84. About interleaved execution • Minimal, because MSTVFs are always materializedExpected overhead? • First execution cached will be used by consecutive executionsCached plan considerations • Contains interleaved execution candidates • Is interleaved executed Plan attributes • Execution status, CE update, disabled reasonXevents

- 85. Interleaved execution candidates SELECT statements 140 compatibility level MSTVF not used on the inside of a CROSS APPLY Not using plan forcing Not using USE HINT with DISABLE_PARAMETER_SNIFFING (or TF 4136)

- 86. About batch mode memory grant feedback • Benefits workloads with spills or overages Expected performance improvements? Before After

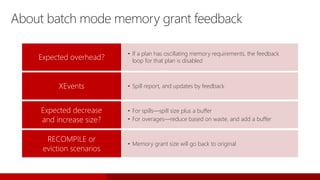

- 87. About batch mode memory grant feedback • If a plan has oscillating memory requirements, the feedback loop for that plan is disabledExpected overhead? • Spill report, and updates by feedbackXEvents • For spills—spill size plus a buffer • For overages—reduce based on waste, and add a buffer Expected decrease and increase size? • Memory grant size will go back to original RECOMPILE or eviction scenarios

- 88. About batch mode adaptive join Expected performance benefit? • Performance gains occur for workloads where, prior to adaptive joins being available, the optimizer chooses the wrong join type due to incorrect cardinality estimates. 0 2 4 6 8 10 12 14 16 18 Customer Invoice Transactions Retail Top Products Report Adaptive Join Test Results Adaptive join enabled (seconds) Adaptive join disabled (seconds)

- 89. About batch mode adaptive join Queries involving columnstore indexes can dynamically switch between nested loop join and hash join operators at execution time:

- 90. About batch mode adaptive join • Memory is granted even for a nested loop scenario—if nested loop is always optimal, there is a greater overheadExpected overhead? • Adaptive threshold rows, estimated and actual join typePlan attributes • Adaptive join skippedXEvents • Single compiled plan can accommodate low and high row scenariosCached plan considerations

- 91. About batch mode adaptive join Eligible statements • The join is eligible to be executed both by an indexed nested loop join or a hash join physical algorithm. • The hash join uses batch mode—either through the presence of a columnstore index in the query overall or a columnstore indexed table being referenced directly by the join. • The generated alternative solutions of the nested loop join and hash join should have the same first child (outer reference).

- 92. Adaptive join threshold 1 50,000 Cost Rows Adaptive Join Threshold Hash Join Nested Loop Join

- 93. Query Store

- 94. Problems with query performance Fixing query plan choice regressions is difficult Query plan cache is not well suited for performance troubleshooting. Long time to detect the issue (TTD) Which query is slow? Why is it slow? What was the previous plan? Long time to mitigate (TTM) Can I modify the query? How to use plan guide? Temporary performance issues Website is down DB upgraded Database is not working Impossible to predict root cause Regression caused by new bits

- 95. The solution: Query Store Dedicated store for query workload performance data • Captures the history of plans for each query • Captures the performance of each plan over time • Persists the data to disk (works across restarts, upgrades, and recompiles) Significantly reduces TTD/TTM • Find regressions and other issues in seconds • Allows you to force previous plans from history DBA is now in control

- 96. Durability latency controlled by DB option DATA_FLUSH_INTERNAL_SECONDS Compile Execute Plan store Runtime stats Query Store schema Query Store architecture • Collects query texts (plus all relevant properties) • Stores all plan choices and performance metrics • Works across restarts/upgrade /recompiles • Dramatically lowers the bar for performance troubleshooting • New views • Intuitive and easy plan forcing

- 97. Query Store write architecture Query StoreQuery Execution Internal tables Query and plan store Runtime stats store Query execute stats Compile Execute async Query text and plan

- 98. Query Store read architecture • Views merge in-memory and on-disk content • Users always see “latest” data Query Store views Query StoreQuery Execution Internal tables Query and plan store Runtime stats store Query execute stats Compile Execute async Query text and plan

- 99. Keeping stability while upgrading to SQL Server 2017 Install bits keep existing compatibility level Run Query Store (create a baseline) Move to compatibility level 140 Fix regressions with plan forcing SQL Server 2017 Query Optimizer (QO) enhancements tied to database compatibility level

- 100. Monitoring performance by using the Query Store The Query Store feature provides DBAs with insight on query plan choice and performance

- 101. Working with Query Store /* (1) Turn ON Query Store */ ALTER DATABASE MyDB SET QUERY_STORE = ON; /* (2) Review current Query Store parameters */ SELECT * FROM sys.database_query_store_options /* (3) Set new parameter values */ ALTER DATABASE MyDB SET QUERY_STORE ( OPERATION_MODE = READ_WRITE, CLEANUP_POLICY = ( STALE_QUERY_THRESHOLD_DAYS = 30 ), DATA_FLUSH_INTERVAL_SECONDS = 3000, MAX_SIZE_MB = 500, INTERVAL_LENGTH_MINUTES = 15 ); /* (4) Clear all Query Store data */ ALTER DATABASE MyDB SET QUERY_STORE CLEAR; /* (5) Turn OFF Query Store */ ALTER DATABASE MyDB SET QUERY_STORE = OFF; /* (6) Performance analysis using Query Store views*/ SELECT q.query_id, qt.query_text_id, qt.query_sql_text, SUM(rs.count_executions) AS total_execution_count FROM sys.query_store_query_text qt JOIN sys.query_store_query q ON qt.query_text_id = q.query_text_id JOIN sys.query_store_plan p ON q.query_id = p.query_id JOIN sys.query_store_runtime_stats rs ON p.plan_id = rs.plan_id GROUP BY q.query_id, qt.query_text_id, qt.query_sql_text ORDER BY total_execution_count DESC /* (7) Force plan for a given query */ exec sp_query_store_force_plan 12 /*@query_id*/, 14 /*@plan_id*/ DB-level feature exposed through T-SQL extensions • ALTER DATABASE • Catalog views (settings, compile, and runtime stats) • Stored procedures (plan forcing, query/plan/stats cleanup)

- 102. Query Store enhancements (SQL Server 2017) • Query Store now tracks wait stats summary information. Tracking wait stats categories per query in Query Store enables the next level of performance troubleshooting experience. It provides even more insight into the workload performance and its bottlenecks while preserving the key Query Store advantages.

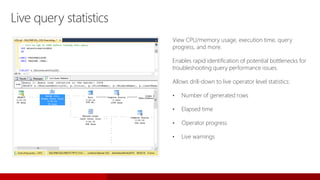

- 103. Live query statistics View CPU/memory usage, execution time, query progress, and more. Enables rapid identification of potential bottlenecks for troubleshooting query performance issues. Allows drill-down to live operator level statistics: • Number of generated rows • Elapsed time • Operator progress • Live warnings

- 104. Summary: Query Store Capability • Query Store helps customers quickly find and fix query performance issues • Query Store is a “flight data recorder” for database workloads Benefits • Greatly simplifies query performance troubleshooting • Provides performance stability across SQL Server upgrades • Allows deeper insight into workload performance

- 105. Resource Governor

- 106. Resource Governor Resource Governor enables you to specify limits on the amount of CPU, physical IO, and memory that incoming application requests to the Database Engine can use. With Resource Governor, you can: • Provide multitenancy and resource isolation on single instances of SQL Server that serve multiple client workloads. • Provide predictable performance and support SLAs for workload tenants in a multiworkload and multiuser environment. • Isolate and limit runaway queries or throttle IO resources for operations such as DBCC CHECKDB that can saturate the IO subsystem and negatively affect other workloads. • Add fine-grained resource tracking for resource usage chargebacks and to provide predictable billing to consumers of the server resources.

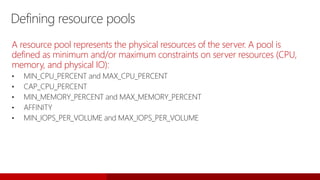

- 108. Defining resource pools A resource pool represents the physical resources of the server. A pool is defined as minimum and/or maximum constraints on server resources (CPU, memory, and physical IO): • MIN_CPU_PERCENT and MAX_CPU_PERCENT • CAP_CPU_PERCENT • MIN_MEMORY_PERCENT and MAX_MEMORY_PERCENT • AFFINITY • MIN_IOPS_PER_VOLUME and MAX_IOPS_PER_VOLUME

- 110. Columnstore

- 111. Data stored as columns SQL Server performance features: Columnstore Columnstore A technology for storing, retrieving, and managing data by using a columnar data format called a columnstore. You can use columnstore indexes for real-time analytics on your operational workload. Key benefits Provides a very high level of data compression, typically 10x, to reduce your data warehouse storage cost significantly. Indexing on a column with repeated values vastly improves performance for analytics. Improved performance: • More data fits in memory • Batch-mode execution

- 112. Columnstore: Clustered vs. nonclustered indexes In SQL Server, rowstore refers to a table where the underlying data storage format is a heap, clustered index, or memory-optimized table. Data that is logically organized as a table with rows and columns, and then physically stored in a row- wise data format. Rowstore Data that is logically organized as a table with rows and columns, and physically stored in a column- wise data format. Columnstore

- 113. Columnstore: Clustered vs. nonclustered indexes A secondary index on the standard table (rowstore). Nonclustered index The primary storage for the entire table. Clustered index Both columnstore indexes offer high compression (10x) and improved query performance. Nonclustered indexes enable a standard OLTP workload on the underlying rowstore, and a separate simultaneous analytical workload on the columnstore—with negligible impact to performance (Real-Time Operational Analytics).

- 114. Steps to creating a columnstore (NCCI) Add a columnstore index to the table by executing the T-SQL SELECT ProductID, SUM(UnitPrice) SumUnitPrice, AVG(UnitPrice) AvgUnitPrice, SUM(OrderQty) SumOrderQty, AVG(OrderQty) AvgOrderQty FROM Sales.SalesOrderDetail GROUP BY ProductID ORDER BY ProductID Execute the query that should use the columnstore index to scan the table SELECT * FROM sys.indexes WHERE name = 'IX_SalesOrderDetail_ColumnStore' GO SELECT * FROM sys.dm_db_index_usage_stats WHERE database_id = DB_ID('AdventureWorks') AND object_id = OBJECT_ID('AdventureWorks.Sales.SalesOrderDetail'); Verify that the columnstore index was used by looking up its object_id and confirming that it appears in the usage stats for the table CREATE NONCLUSTERED COLUMNSTORE INDEX [IX_SalesOrderDetail_ColumnStore] ON Sales.SalesOrderDetail (UnitPrice, OrderQty, ProductID) GO

- 115. Columnstore index enhancements (SQL Server 2016) Improvements SQL Server 2016 Clustered columnstore index • Master copy of the data (10x compression) • Additional B-tree indexes for efficient equality, short-range searches, and PK/FK constraints • Locking granularity at row level using NCI index path • DDL: ALTER, REBUILD, REORGANIZE Updatable nonclustered index • Updatable • Ability to mix OLTP and analytics workload • Ability to create filtered NCCI • Partitioning supported Equality and short-range queries • Optimizer can choose NCI on column C1; index points directly to rowgroup • No full index scan • Covering NCI index String predicate pushdown • Apply filter on dictionary entries • Find rows that refer to dictionary entries that qualify (R1) • Find rows not eligible for this optimization (R2) • Scan returns (R1 + R2) rows • Filter node applies string predicate on (R2) • Row returned by Filter node = (R1 + R2)

- 116. Columnstore index enhancements (SQL Server 2017) • Clustered columnstore indexes now support LOB columns (nvarchar(max), varchar(max), varbinary(max)) • Online nonclustered columnstore index build and rebuild support added

- 117. PolyBase

- 118. Interest in big data spurs customer demand Adoption of big data technologies like Hadoop Increase in number and variety of data sources that generate large quantities of data Realization that data is “too valuable” to delete Dramatic decline in hardware cost, especially storage $

- 119. Query relational and non-relational data, on-premises and in Azure T-SQL query Apps SQL Server Hadoop PolyBase Query relational and non-relational data with T-SQL

- 120. PolyBase view • Execute T-SQL queries against relational data in SQL Server and semi-structured data in Hadoop or Azure Blob storage • Use existing T-SQL skills and BI tools to gain insights from different data stores PolyBase in SQL Server 2017

- 121. PolyBase use cases

- 122. PolyBase components • PolyBase Engine Service • PolyBase Data Movement Service (with HDFS Bridge) • External table constructs • MR pushdown computation support Head Node SQL 2017 PolyBase Engine PolyBase DMS

- 123. PolyBase architecture PolyBase T-SQL queries submitted here PolyBase queries can only refer to tables and/or external tables here PolyBase Group Head Node Compute Nodes SQL 2017 PolyBase Engine PolyBase DMS SQL 2017 PolyBase DMS SQL 2017 PolyBase DMS SQL 2017 PolyBase DMS Hadoop Cluster Namenode Datanode Datanode Datanode Datanode File System AB 01 01 01 01 File System File System File System

- 124. Supported big data sources Hortonworks HDP 1.3 on Linux/Windows Server Hortonworks HDP 2.0-2.3 on Windows Server Hortonworks HDP 2.0-2.6 on Windows Server Cloudera CDH 4.3 on Linux Cloudera CDH 5.1-5.12 on Linux Azure Blob storage What happens behind the scenes? Loading the right client jars to connect to Hadoop distribution -- different numbers map to various Hadoop flavors -- example: value 4 stands for HDP 2.x on Linux, value 5 for HDP 2.x on Windows, value 6 for CHD 5.x on Linux Supported big data sources

- 125. After setup • Compute nodes are used for scale-out query processing on external tables in HDFS • Tables on compute nodes cannot be referenced by queries submitted to head node • Number of compute nodes can be dynamically adjusted by DBA • Hadoop clusters can be shared among multiple PolyBase groups PolyBase Group Head Node Compute Nodes SQL 2017 PolyBase Engine PolyBase DMS SQL 2017 PolyBase DMS SQL 2017 PolyBase DMS SQL 2017 PolyBase DMS Hadoop Cluster Namenode Datanode Datanode Datanode Datanode File System AB 01 01 01 01 File System File System File System

- 126. CREATE EXTERNAL DATA SOURCE HadoopCluster WITH( TYPE = HADOOP, LOCATION = 'hdfs://10.14.0.4:8020' ); CREATE EXTERNAL FILE FORMAT CommaSeparatedFormat WITH( FORMAT_TYPE = DELIMITEDTEXT, FORMAT_OPTIONS (FIELD_TERMINATOR = ',', USE_TYPE_DEFAULT = TRUE) ); CREATE EXTERNAL TABLE [dbo].[SensorData]( vin varchar(255), speed int, fuel int, odometer int, city varchar(255), datatimestamp varchar(255) ) WITH( LOCATION = '/apps/hive/warehouse/sensordata', DATA_SOURCE = HadoopCluster, FILE_FORMAT = CommaSeparatedFormat ); • Create an external data source • Create an external file format • Create an external table for unstructured data Creating Polybase objects

- 127. SELECT [vin], [speed], [datetimestamp] FROM dbo.SensorData SELECT [make], [model], [modelYear], [speed], [datetimestamp] FROM dbo.AutomobileData LEFT JOIN dbo.SensorData ON dbo.AutomobileData.[vin] = dbo.SensorData.[vin] Query external data table as SQL data • Data returned as defined in external data table Join SQL data with external data • Join data between internal and external table • All TSQL commands supported • PolyBase will optimize between SQL-side query and pushdown to MapReduce Polybase queries

- 129. Resumable online indexing With resumable online index rebuild you can resume a paused index rebuild operation from where the rebuild operation was paused, rather than having to restart the operation at the beginning. In addition, this feature rebuilds indexes using only a small amount of log space. • Resume an index rebuild operation after an index rebuild failure, such as following a database failover or after running out of disk space. There’s no need to restart the operation from the beginning. This can save a significant amount of time when rebuilding indexes for large tables. • Pause an ongoing index rebuild operation and resume it later—for example, to temporarily free up system resources to execute a high priority. Instead of aborting the index rebuild process, you can pause the index rebuild operation, and resume it later without losing prior progress. • Rebuild large indexes without using a lot of log space and have a long-running transaction that blocks other maintenance activities. This helps log truncation and avoids out-of-log errors that are possible for long-running index rebuild operations.

- 130. Using resumable online index rebuild Start a resumable online index rebuild ALTER INDEX test_idx on test_table REBUILD WITH (ONLINE=ON, RESUMABLE=ON) ; Pause a resumable online index rebuild ALTER INDEX test_idx on test_table PAUSE ; Resume a paused online index rebuild ALTER INDEX test_idx on test_table RESUME ; Abort a resumable online index rebuild (which is running or paused) ALTER INDEX test_idx on test_table ABORT ; View metadata about resumable online index operations SELECT * FROM sys.index_resumable_operations ;

- 131. Partitioning

- 132. Partitioning SQL Server supports partitioning of tables and indexes. In partitioning, a logical table (or index) is split into two or more physical partitions, each containing a portion of the data. Allocation of data to (and retrieval from) the partitions is managed automatically by the Database Engine, based on a partition function and partition scheme that you define. Partitioning can enhance the performance and manageability of large data sets, enabling you to work with a subset of the data.

- 133. Steps to create a partitioned table Create a partition function -- Creates a partition scheme called myRangePS1 that applies myRangePF1 to four database filegroups CREATE PARTITION SCHEME myRangePS1 AS PARTITION myRangePF1 TO (test1fg, test2fg, test3fg, test4fg) ; GO Create a partition scheme (assumes four filegroups, test1fg to test4fg) -- Creates a partitioned table called PartitionTable that uses myRangePS1 to partition col1 CREATE TABLE PartitionTable (col1 int PRIMARY KEY, col2 char(10)) ON myRangePS1 (col1) ; GO Create a partitioned table based on the partition scheme -- Creates a partition function called myRangePF1 that will partition a table into four partitions CREATE PARTITION FUNCTION myRangePF1 (int) AS RANGE LEFT FOR VALUES (1, 100, 1000) ; GO New data added to the table will be assigned to a partition, based on the values provided for col1.

- 134. Manage large tables with table partitioning Scenario • Log data table grows by millions of rows a day • Removing old data (for regulatory compliance) exceeds maintenance window Solution • Partition the table with a partition function based on date (day or month) • New data loaded into the current active partition • Historic data can be removed by clearing down partitions

- 135. Security

- 136. SQL Protect data Encryption at rest Transparent Data Encryption Backup Encryption Cell-Level Encryption Encryption in transit Transport Layer Security (SSL/TLS) Encryption in use (client) Always Encrypted Control access Database access SQL Authentication Active Directory Authentication Granular Permissions Application access Row-Level Security Dynamic Data Masking Security Monitor access Tracking activities Fine-Grained Audit

- 137. SQL Server 2017 and GDPR compliance Control access to personal data • Authentication • Authorization • Dynamic Data Masking • Row-Level Security Safeguarding data • Transparent Data Encryption • Transport Layer Security (TLS) • Always Encrypted • SQL Server Audit

- 138. Always Encrypted

- 139. Always Encrypted Always Encrypted allows clients to encrypt sensitive data inside client applications, and never reveal the encryption keys to the Database Engine. As a result, Always Encrypted provides a separation between those who own the data (and can view it) and those who manage the data (but should have no access). Always Encrypted makes encryption transparent to applications. An Always Encrypted-enabled driver installed on the client computer achieves this by automatically encrypting and decrypting sensitive data in the client application. The driver encrypts the data in sensitive columns before passing the data to the Database Engine, and automatically rewrites queries so that the semantics to the application are preserved. Similarly, the driver transparently decrypts data that is stored in encrypted database columns, and contained in query results.

- 140. Protect your data at rest and in motion (without impacting database performance) Always Encrypted Query TrustedApps SELECT Name FROM Patients WHERE SSN=@SSN @SSN='198-33-0987' Result Set SELECT Name FROM Patients WHERE SSN=@SSN @SSN=0x7ff654ae6d Column Encryption Key Enhanced ADO.NET Library Column Master Key Client side ciphertext Name 243-24-9812 SSN Country Denny Usher 198-33-0987 USA Alicia Hodge 123-82-1095 USA Philip Wegner USA dbo.Patients SQL Server dbo.Patients Philip Wegner Name SSN USA Denny Usher 0x7ff654ae6d USA Alicia Hodge 0x8fj754ea2c USA 0x7fg655se2e Country Philip Wegner Name 0x7fg655se2e SSN USA Country Denny Usher 0x7ff654ae6d USA Alicia Hodge 0x8fj754ea2c USA dbo.Patients Result Set Denny Usher Name 0x7ff654ae6d SSN USA Country 198-33-0987

- 141. Row-Level Security

- 142. Row-Level Security Row-Level Security (RLS) enables customers to control access to rows in a database table based on the characteristics of the user executing a query (for example, in a group membership or execution context). • The database system applies the access restrictions every time a tier attempts to access data • This makes the security system more reliable and robust by reducing the surface area of your security system • RLS works with a predicate (condition) which, when true, allows access to appropriate rows • Can be either a filter or block predicate • A filter predicate “filters out” rows from a query—the filter is transparent, and the end user is unaware of any filtering • A block predicate prevents unauthorized action, and will throw an exception if the action cannot be performed

- 143. Configure Row-Level Security 1. Create user accounts to test Row-Level Security GRANT SELECT ON Sales.SalesOrderHeader TO Manager; GRANT SELECT ON Sales.SalesOrderHeader TO SalesPerson280; 2. Grant read access to users on a required table CREATE SCHEMA Security; GO CREATE FUNCTION Security.fn_securitypredicate(@SalesPersonID AS int) RETURNS TABLE WITH SCHEMABINDING AS RETURN SELECT 1 AS fn_securitypredicate_result WHERE ('SalesPerson' + CAST(@SalesPersonId as VARCHAR(16)) = USER_NAME()) OR (USER_NAME() = 'Manager'); 3. Create a new schema and inline table-valued function USE AdventureWorks2014; GO CREATE USER Manager WITHOUT LOGIN; CREATE USER SalesPerson280 WITHOUT LOGIN; CREATE SECURITY POLICY SalesFilter ADD FILTER PREDICATE Security.fn_securitypredicate(SalesPersonID) ON Sales.SalesOrderHeader, ADD BLOCK PREDICATE Security.fn_securitypredicate(SalesPersonID) ON Sales.SalesOrderHeader WITH (STATE = ON); 4. Create a security policy, adding the function as both a filter and block predicate on the table 5. Execute the query to the required table so that each user sees the result (can also alter the security policy to disable)

- 144. Dynamic Data Masking

- 145. • Configuration made easy in the new Azure portal • Policy-driven at the table and column level, for a defined set of users • Data masking applied in real time to query results based on policy • Multiple masking functions available (for example, full, partial) for various sensitive data categories (credit card numbers, SSN, and so on) SQL Database SQL Server 2017 Table.CreditCardNo 4465-6571-7868-5796 4468-7746-3848-1978 4484-5434-6858-6550 Real-time data masking, partial masking Dynamic Data Masking Prevent the abuse of sensitive data by hiding it from users

- 146. Dynamic Data Masking walkthrough ALTER TABLE [Employee] ALTER COLUMN [SocialSecurityNumber] ADD MASKED WITH (FUNCTION = ‘SSN()’) ALTER TABLE [Employee] ALTER COLUMN [Email] ADD MASKED WITH (FUNCTION = ‘EMAIL()’) ALTER TABLE [Employee] ALTER COLUMN [Salary] ADD MASKED WITH (FUNCTION = ‘RANDOM(1,20000)’) GRANT UNMASK to admin1 1. Security officer defines Dynamic Data Masking policy in T-SQL over sensitive data in Employee table. 2. Application user selects from Employee table. 3. Dynamic Data Masking policy obfuscates the sensitive data in the query results. SELECT [Name], [SocialSecurityNumber], [Email], [Salary] FROM [Employee]

- 147. Configure Dynamic Data Masking Use an ALTER TABLE statement to add a masking function to the required column in the table CREATE USER TestUser WITHOUT LOGIN; GRANT SELECT ON Person.EmailAddress TO TestUser; Create a new user with SELECT permission on the table, and then execute a query to view masked data Verify that the masking function changes the required column with a masked field USE AdventureWorks2014; GO ALTER TABLE Person.EmailAddress ALTER COLUMN EmailAddress ADD MASKED WITH (FUNCTION = 'email()'); EXECUTE AS USER = 'TestUser'; SELECT EmailAddressID, EmailAddress FROM Person.EmailAddress; REVERT;

- 148. Auditing

- 149. SQL Server 2017 Auditing • SQL Server Audit is the primary auditing tool in SQL Server • Track and log server-level events in addition to individual database events • SQL Server Audit uses Extended Events to help create and run audit-related events • SQL Server Audit includes several audit components: SQL Server Audit: This container holds a single audit specification for either server- or database-level audits. You define multiple server audits to run simultaneously. SQL Server Audit specifications: This tracks server-level audits and invokes the necessary extended events as defined by the user. You can define only one server audit per audit (container). SQL Server Database Audit specifications: This object also comes under the server audit. User-defined database-level events are tracked and logged. Predefined templates help you define a database audit.

- 150. Unfortunately it cannot be done at column level as of yet Permissions required: ALTER ANY SERVER AUDIT CONTROL SERVER SQL Server Audit The server audit is the parent component of a SQL Server audit and can contain both: Server audit specifications Database audit specifications It resides in the master database, and is used to define where the audit information will be stored, the file rollover policy, the queue delay and how SQL Server should react in case auditing is not possible. The following server audit configuration is required: The server audit name The action to take • Continue and ignore the log issue • Shut down the server • Fail the operation The audit destination

- 151. Database Audit Specification Unfortunately this cannot yet be done at column level Permissions required: ALTER ANY DATABASE AUDIT SPECIFICATION. ALTER or CONTROL (permission for the database to which you would like to add the audit) This is at the database level. Using more granular auditing can minimize the performance impact on your server. This is done by using a Database Audit Specification that is only available in Enterprise Edition. Using the Database Audit Specification, auditing can be performed at object or user level. • The Database Audit Specification name (optional—default name will be assigned) • The server audit that the specification must be linked to • The Audit Action type. There are both: Audit Actions Audit Action groups (which may be selected, INSERTED and UPDATED or DELETED) • The object name of the object to be audited when an Audit Action has been selected • The schema of the selected object • The principal name. To audit all users, use the keyword “public” in this field

- 152. TODO: BI placeholder

- 153. Advanced analytics

- 155. In-Database analytics with SQL Server In SQL Server 2016, Microsoft launched two server platforms for integrating the popular open source R language with business applications: • SQL Server R Services (In-Database), for integration with SQL Server • Microsoft R Server, for enterprise-level R deployments on Windows and Linux servers In SQL Server 2017, the name has been changed to reflect support for the popular Python language: • SQL Server Machine Learning Services (In-Database) supports both R and Python for in- database analytics • Microsoft Machine Learning Server supports R and Python deployments on Windows servers—expansion to other supported platforms is planned for late 2017

- 156. Capability • Extensible in-database analytics, integrated with R, exposed through T-SQL • Centralized enterprise library for analytic models Benefits SQL Server Analytical engines Integrate with R/Python Data management layer Relational data Use T-SQL interface Stream data in-memory Analytics library Share and collaborate Manage and deploy R Data scientists Business analysts Publish algorithms, interact directly with data Analyze through T-SQL, tools, and vetted algorithms DBAs Manage storage and analytics together Machine Learning Services

- 157. Enhanced Machine Learning Services (SQL Server 2017) • Python support • Microsoft Machine Learning package included • Process multiple related models in parallel with the rxExecBy function • Create a shared script library with R script package management • Native scoring with T-SQL PREDICT • In-place upgrade of R components

- 158. Setup and configuration SQL Server 2017 setup Install Machine Learning Services (In-Database) Consent to install Microsoft R Open/Python Optional: Install R packages on SQL Server 2017 machine Database configuration Enable R language extension in database Configure path for RRO runtime in database Grant EXECUTE EXTERNAL SCRIPT permission to users CREATE EXTERNAL EXTENSION [R] USING SYSTEM LAUNCHER WITH (RUNTIME_PATH = 'c:revolutionbin‘) GRANT EXECUTE SCRIPT ON EXTERNAL EXTENSION::R TO DataScientistsRole; /* User-defined role / users */

- 159. Management and monitoring ML runtime usage Resource governance via resource pool Monitoring via DMVs Troubleshooting via XEvents/ DMVs CREATE RESOURCE POOL ML_runtimes FOR EXTERNAL EXTENSION WITH MAX_CPU_PERCENT = 20, MAX_MEMORY_PERCENT = 10; select * from sys.dm_resource_governor_resouce_pools where name = ‘ML_runtimes';

- 160. External script usage from SQL Server Original R script: IrisPredict <- function(data, model){ library(e1071) predicted_species <- predict(model, data) return(predicted_species) } library(RODBC) conn <- odbcConnect("MySqlAzure", uid = myUser, pwd = myPassword); Iris_data <-sqlFetch(conn, "Iris_Data"); Iris_model <-sqlQuery(conn, "select model from my_iris_model"); IrisPredict (Iris_data, model); Calling R script from SQL Server: /* Input table schema */ create table Iris_Data (name varchar(100), length int, width int); /* Model table schema */ create table my_iris_model (model varbinary(max)); declare @iris_model varbinary(max) = (select model from my_iris_model); exec sp_execute_external_script @language = 'R' , @script = ' IrisPredict <- function(data, model){ library(e1071) predicted_species <- predict(model, data) return(predicted_species) } IrisPredict(input_data_1, model); ' , @parallel = default , @input_data_1 = N'select * from Iris_Data' , @params = N'@model varbinary(max)' , @model = @iris_model with result sets ((name varchar(100), length int, width int , species varchar(30))); • Values highlighted in yellow are SQL queries embedded in the original R script • Values highlighted in aqua are R variables that bind to SQL variables by name

- 161. The SQL extensibility architecture launchpad.exe sp_execute_external_script sqlservr.exe Named pipe SQLOS XEvent MSSQLSERVER Service MSSQLLAUNCHPAD Service (one per SQL Server instance) What and how to launch “launcher” Bxlserver.exe sqlsatellite.dll Bxlserver.exe sqlsatellite.dll Windows Satellite Process sqlsatellite.dll Run query

- 162. SQL Server Machine Learning Services is scalable More efficient than standalone clients • Data does not all have to fit in memory • Reduced data transmission over the network Most R Open (and Python R) functions are single threaded • ScaleR and RevoScalePy APIs in scripts support multi-threaded processing on the SQL Server computer We can stream data in parallel and batches from SQL Server to/from script Use the power of SQL Server and ML to develop, train, and operationalize • SQL Server compute context (remote compute context) • T-SQL queries • Memory-optimized tables • Columnstore indexes • Data compression • Parallel query execution • Stored procedures

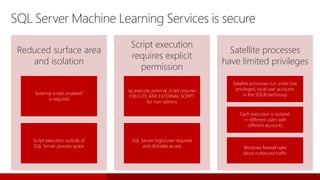

- 163. SQL Server Machine Learning Services is secure Reduced surface area and isolation “external scripts enabled” is required Script execution outside of SQL Server process space Script execution requires explicit permission sp_execute_external_script requires EXECUTE ANY EXTERNAL SCRIPT for non-admins SQL Server login/user required and db/table access Satellite processes have limited privileges Satellite processes run under low privileged, local user accounts in the SQLRUserGroup Each execution is isolated — different users with different accounts Windows firewall rules block outbound traffic

- 164. MicrosoftML package MicrosoftML is a package for Microsoft R Server, Microsoft R Client, and SQL Server Machine Learning Services that adds state-of-the-art data transforms, machine learning algorithms, and pretrained models to Microsoft R functionality. • Data transforms helps you to compose, in a pipeline, a custom set of transforms that are applied to your data before training or testing. The primary purpose of these transforms is to allow you to featurize your data. • Machine learning algorithms enable you to tackle common machine learning tasks such as classification, regression and anomaly detection. You run these high-performance functions locally on Windows or Linux machines or on Azure HDInsight (Hadoop/Spark) clusters. • Pretrained models for sentiment analysis and image featurization can also be installed and deployed with the MicrosoftML package.

- 165. Hybrid cloud

- 166. Back up to Azure

- 167. Managed backup • Granular control of the backup schedule • Local staging support for faster recovery and resiliency to transient network issues • Support for system databases • Support for simple recovery mode Back up to Azure block blobs • Cost savings on storage • Significantly improved restore performance • More granular control over Azure Storage Azure Storage snapshot backup • Fastest method for creating backups and running restores • SQL Server database files on Azure Blob storage Back up to Azure

- 168. Managed backup Support for system databases Support for databases in simple recovery mode Using backup to block blobs: more granular control Allows customized backup schedules: full backup and log backup 180

- 169. Customized scheduling EXEC Managed_Backup.sp_backup_config_schedule @database_name = 'testDB' ,@scheduling_option= 'Custom' ,@full_backup_freq_type = 'weekly' ,@days_of_week = 'Saturday' ,@backup_begin_time = '11:00' ,@backup_duration = '02:00' ,@log_backup_freq = '00:05' EXEC msdb.managed_backup.sp_backup_config_basic @database_name= 'testDB', @enable_backup=1, @container_url='https://storage account name.blob.core.windows.net/container name', @retention_days=30 Step 1: Run the scheduling SP to configure custom scheduling: Step 2: Run the basic SP to configure managed backup:

- 170. Back up to Azure block blobs • Two times cheaper storage • Backup striping and faster restore • Maximum backup size is 12 TB-plus • Granular access and unified credential story (SAS URLs) • Support for all existing backup/restore features (except append) CREATE CREDENTIAL [https://<account>.blob.core.windows.net/<container>] WITH IDENTITY = 'Shared Access Signature', SECRET = 'sig=mw3K6dpwV%2BWUPj8L4Dq3cyNxCI‘ BACKUP DATABASE database TO URL = N'https://<account>.blob.core.windows.net/<container>/<blob1>', URL = N'https://<account>.blob.core.windows.net/<container>/<blob2>'

- 171. Back up to Azure with file snapshots BACKUP DATABASE database TO URL = N'https://<account>.blob.core.windows.net/<container>/<backupfileblob1>' WITH FILE_SNAPSHOT Instance MDF Database MDF LDFLDF BAK

- 172. Back up to Azure with file snapshots • Available to users whose database files are located in Azure Storage • Copies database using a virtual snapshot within Azure Storage • Database data does not move between storage system and server instance, removing IO bottleneck • Uses only a fraction of the space that a traditional backup would consume • Very fast

- 173. Point-in-time restore with file snapshots Traditional backup • Multiple backup types • Complex point-in-time restore process Back up to Azure with file snapshots • Full backup only once • Point-in-time only needs two adjacent backups Full Log Log Log Diff Log Log Log Diff Log Log Log Full . . . . . Log Log Log Log Log Log Log Log Log Log Log

- 174. SQL Server on Azure VM

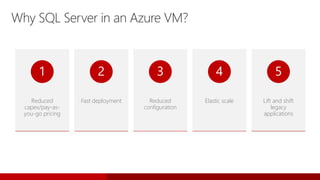

- 175. Why SQL Server in an Azure VM? Reduced capex/pay-as- you-go pricing 1 Fast deployment 2 Reduced configuration 3 Elastic scale 4 Lift and shift legacy applications 5

- 176. Microsoft gallery images • SQL Server 2008 R2 / 2012 / 2014 / 2016 / 2017 • SQL Server Web / Standard / Enterprise / Developer / Express Editions • Windows Server 2008 R2 / 2012 R2 / 2016 • Linux RHEL / Ubuntu SQL licensing • Based on SQL Server edition and core count (VM Sizes) • Pay-per-minute Bring your own license • Move an existing license to Azure through BYOL images Commissioned in ~10 minutes Connect via RDP, ADO .Net, OLEDB, JDBC, PHP, and so on Manage via Azure portal, SSMS, Powershell, CLI, System Center, and so on SQL Server in Azure VM—deploying

- 177. Azure VM sizes • Recommended for SQL Server production workloads • Local SSD storage • Premium storage • Portal optimizes VM for SQL Server workloads DSv3, DSv2 , DS & FS Series VM • Premium performance • Local SSD storage • Premium storage • Intel® Xeon® processor E5 v3 family GS, Ls-series VM https://docs.microsoft.com/en-us/azure/virtual-machines/windows/sizes https://docs.microsoft.com/en-us/azure/virtual-machines/linux/sizes

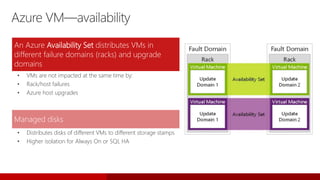

- 178. Azure VM—availability An Azure Availability Set distributes VMs in different failure domains (racks) and upgrade domains • VMs are not impacted at the same time by: • Rack/host failures • Azure host upgrades Managed disks • Distributes disks of different VMs to different storage stamps • Higher isolation for Always On or SQL HA

- 179. Azure VM—availability SLAs Single-VM SLA: 99.9% (<43 minutes downtime p/month) • 99.46% single VMs achieve 99.999% (<26 seconds downtime p/month) Multi-VM SLA: 99.95% (<22 minutes downtime p/month) • 99.99% of multi-VM deployments achieve 99.999% • Includes: • Planned downtime due to host OS patching • Unplanned downtime due to physical failures Doesn’t include servicing of guest OS or software inside (for example, SQL) SQL Server multi-VM deployments need Always On • If VM becomes unavailable, fail over to another (~15s) • Detects SQL instance failures (for example, service down or hung)

- 180. Azure VM—storage Each disk has three copies in Azure Storage An extent is replicated three times across different fault and upgrade domains With random selection for where to place replicas for fast MTTR Remote storage connected over high-speed network Quorum-write Checksum all stored data Verified on every client read Scrubbed every few days Automated disk verification and decommissioning Rereplicate on disk/node/rack failure or checksum mismatch

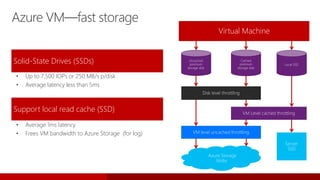

- 181. Solid-State Drives (SSDs) • Up to 7,500 IOPs or 250 MB/s p/disk • Average latency less than 5ms Support local read cache (SSD) • Average 1ms latency • Frees VM bandwidth to Azure Storage (for log) Uncached premium storage disk Cached premium storage disk Local SSD Virtual Machine

- 182. Physical security Infrastructure security Many certifications SQL security • Datacenters monitored constantly • Microsoft Ops and Support personnel don’t have access to customer storage • Virtual Networks—deployments are isolated in their own private networks • Storage—Encrypted Storage and authenticated via strong keys • ISO 27001/27002, SOC 1/SSAE 16/ISAE 3402 and SOC 2, • Cloud Security Alliance CCM, FISMA, HIPAA, EU model clauses, FIPS 140-2 • Auto patching • Encryption of databases and backups • Encryption of connections • Authentication: Windows/SQL • Row-Level Security and Always Encrypted (SQL Server 2016) SQL Azure VM—many layers of security

- 183. Azure VM—connectivity Over the internet Over secure site-to-site tunnel • On public connection • Dedicated connection (Express Route)—recommended Apps transparently connect to primary via listener Listeners are supported through Azure Load Balancer • Internal (VNET) or External (internet) • Hybrid (Vnet to Vnet) On-premises Virtual Network

- 184. Stretch Database

- 185. Ever-growing data, ever-shrinking IT What to do? • Expand server and storage • Move data elsewhere • Delete • Massive tables (hundreds of millions/billions of rows, TBs in size) • Users want/need to retain data indefinitely • Cold data infrequently accessed but must be online • Datacenter consolidation • Maintenance challenges • Business SLAs at risk

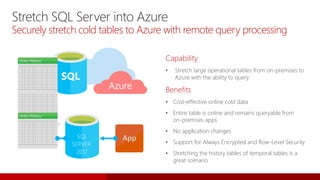

- 186. Capability • Stretch large operational tables from on-premises to Azure with the ability to query Benefits • Cost-effective online cold data • Entire table is online and remains queryable from on-premises apps • No application changes • Support for Always Encrypted and Row-Level Security • Stretching the history tables of temporal tables is a great scenario Stretch SQL Server into Azure Securely stretch cold tables to Azure with remote query processing SQL SERVER 2017 Azure

- 187. Stretch Database architecture How it works • Creates a secure linked server definition in the on-premises SQL Server • Targets remote endpoint with linked server definition • Provisions remote resources and begins to migrate eligible data, if migration is enabled • Queries against tables run against both local database and remote endpoint Remote endpoint Remote data Azure Internetboundary Linked servers Local database Local data Eligible data

- 188. -- Enable local server EXEC sp_configure 'remote data archive' , '1'; RECONFIGURE; -- Provide administrator credential to connect to -- Azure SQL Database CREATE CREDENTIAL <server_address> WITH IDENTITY = <administrator_user_name>, SECRET = <administrator_password> -- Alter database for remote data archive ALTER DATABASE <database name> SET REMOTE_DATA_ARCHIVE = ON (SERVER = server name); GO -- Alter table for remote data archive ALTER TABLE <table name> ENABLE REMOTE_DATA_ARCHIVE WITH ( MIGRATION_STATE = ON ); GO; High-level steps • Configure local server for remote data archive • Create a credential with administrator permission • Alter specific database for remote data archive • Create a filter predicate (optional) to select rows to migrate • Alter table to enable Stretch for a table • Stretch Wizard in SQL Server Management Studio makes all this easy (does not currently support creating filter predicates) Typical workflow to enable Stretch Database Hybrid solutions

- 189. Queries continue working • Business applications continue working without disruption • DBA scripts and tools work as before (all controls still held in local SQL Server) • Developers continue building or enhancing applications with existing tools and methods

- 190. Advanced security features supported • Data in motion always via secure channels (TLS 1.1/1.2) • Always Encrypted supported if enabled by user (encryption key remains on-premises) • Row-Level Security and auditing supported

- 191. Backup and restore benefits • DBAs only back up/restore local SQL Server hot data • StretchDB ensures remote data is transactionally consistent with local data • Upon completion of local restore, SQL Server reconciles with remote data using metadata operation, not data copy • Time of restore for remote not dependent on size of data

- 192. Current limitations that block stretching a table • Tables with more than 1,023 columns or more than 998 indexes cannot be stretched • FileTables or FILESTREAM data not supported • Replicated tables, memory-optimized tables • CLR data types (including geometry, geography, hierarchyid and CLR user-defined types) • Column types (COLUMN_SET, computed columns) • Constraints (default and check constraints) • Foreign key constraints that reference the table in a parent-child relationship—you can stretch the child table (for example Order_Detail) • Full text indexes • XML indexes • Spatial indexes • Indexed views that reference the table

- 194. Graph processing

- 195. A graph is a collection of nodes and edges Undirected graph Directed graph Weighted graph Property graph What is a graph? Node Person Person

- 196. Typical scenarios for graph databases A Hierarchical or interconnected data, entities with multiple parents. Analyze interconnected data, materialize new information from existing facts. Identify connections that are not obvious. Complex many-to-many relationships. Organically grow connections as the business evolves.

- 197. Introducing SQL Server Graph • A collection of node and edge tables in the database • Language Extensions • DDL Extensions—create node and edge tables • DML Extensions—SELECT - T-SQL MATCH clause to support pattern matching and traversals; DELETE, UPDATE, and INSERT support graph tables • Graph support is integrated into the SQL Server ecosystem Database Contains Graph isCollectionOf Node table has Properties Edge table may or may not have Properties Node Table(s) Edges connect Nodes Edge Table(s)

- 198. DDL Extensions • Create node and edge tables • Properties associated with nodes and edges CREATE TABLE Product (ID INTEGER PRIMARY KEY, name VARCHAR(100)) AS NODE; CREATE TABLE Supplier (ID INTEGER PRIMARY KEY, name VARCHAR(100)) AS NODE; CREATE TABLE hasInventory AS EDGE; CREATE TABLE located_at(address varchar(100)) AS EDGE;

- 199. DML Extensions Multihop navigation and join-free pattern matching using the MATCH predicate: SELECT Prod.name as ProductName, Sup.name as SupplierName FROM Product Prod, Supplier Sup, hasInventory hasIn, located_at supp_loc, Customer Cus, located_at cust_loc, orders, location loc WHERE MATCH( cus-(orders)->Prod<-(hasIn)-Sup AND cus-(cust_loc)->location<-(supp_loc)-Sup ) ;

- 200. Spatial

- 201. Spatial Spatial data represents information about the physical location and shape of geometric objects. These objects can be point locations, or lines, or more complex objects such as countries, roads, or lakes. SQL Server supports two spatial data types: the geometry data type and the geography data type. • The geometry type represents data in a Euclidean (flat) coordinate system. • The geography type represents data in a round-earth coordinate system.

- 202. Spatial functionality • Simple and compound spatial data types supported • Import and export spatial data to industry-standard formats (Open Geospatial Consortium WKT and WKB) • Functions to query the properties of, the behaviours of, and the relationships between, spatial data instances • Spatial columns can be indexed to improve query performance