Street Jibe Evaluation

- 1. A Conversation about Program Evaluation: Why, How and When? Uzo Anucha, Applied Social Welfare Research and Evaluation Group, School of Social Work, York University

- 2. What is Program Evaluation? Program evaluation means taking a systematic approach to asking and answering questions about a program. Program evaluation is not an assessment of individual staff performance. The purpose is to gain an overall understanding of the functioning of a program. Program evaluation is not an audit: evaluation does not focus on compliance with laws and regulations. Program evaluation is not research. It is a pragmatic way to learn about a program.

- 3. What is Program Evaluation? Program evaluation is not one method. It can involve a range of techniques for gathering information to answer questions about a program. Most programs already collect a lot of information that can be used for evaluation. Data collection for program evaluation can be incorporated in the ongoing record keeping of the program.

- 4. Definition of Program Evaluation: “Program evaluation is a collection of methods, skills and sensitivities necessary to determine whether a human service is needed and likely to be used, whether it is sufficiently intensive to meet the unmet needs identified, whether the service is offered as planned, and whether the human service actually does help people in need at reasonable cost without undesirable side effects” (Posavac & Carey, 2003, p. 2)

- 5. Why Evaluate? Verify that resources are devoted to meeting unmet needs. Verify that planned programs do provide services. Examine the results. Determine which services produce the best results. Select the programs that offer the most needed types of services.

- 6. Why Evaluate? Provide information needed to maintain and improve quality. Watch for unplanned side effects. Create program documentation. Help to better allocate program resources. Assist staff in program development and improvement.

- 7. Evaluation can: increase our knowledge base; guide decision making (policymakers, administrators, practitioners, funders, general public, clients); demonstrate accountability; assure that client objectives are being achieved.

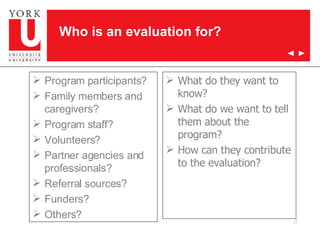

- 8. Who is an evaluation for?

- 9. Who is an evaluation for? What do they want to know? What do we want to tell them about the program? How can they contribute to the evaluation? Program participants? Family members and caregivers? Program staff? Volunteers? Partner agencies and professionals? Referral sources? Funders? Others?

- 10. Being Smart About Evaluation: Every evaluation happens in a political context. Find out what it is. Clarify your role in the evaluation. Let people know what you can and cannot do. Be fair and impartial. Consider what is a reasonable and feasible evaluation for the particular program.

- 11. Challenging Attitudes toward Program Evaluation: expectations of slam-bang effects; assessing program quality is unprofessional; evaluation might inhibit innovation; program will be terminated; information will be misused; qualitative understanding might be lost; evaluation drains resources; loss of program control; evaluation has little impact.

- 12. Types of evaluations: needs assessment; evaluability assessment; process evaluation; outcome evaluation; efficiency evaluation (cost evaluation).

- 13. Needs Assessment/Community Capacity Mapping (prerequisite to program planning and development): What is the community profile? What are the particular unmet needs of a target population? What forms of service are likely to be attractive to the population? Are existing services known or acceptable to potential clients? What barriers prevent clients from accessing existing services?

- 14. Evaluability Assessment (prerequisite to formal evaluation): Are program goals articulated and measurable? Is the program model definable (flow diagram)? Are the goals and activities logically linked? Is there sufficient rigour and resources to merit evaluation?

- 15. Process Evaluation (verify program implementation): Is the program attracting a sufficient number of clients? Are clients representative of the target population? How much contact do staff actually have with clients? Does the workload of staff match that planned? Are there differences in effort among staff?
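
The workload questions above can be explored with very simple record keeping. The following is a minimal sketch, assuming a contact log of (staff member, hours) pairs and a table of planned hours; the names `contact_log` and `planned_hours` and all figures are hypothetical and do not come from the presentation.

```python
from collections import defaultdict

# Hypothetical contact log: (staff member, hours of direct client contact).
contact_log = [
    ("staff_a", 1.5),
    ("staff_b", 0.5),
    ("staff_a", 2.0),
    ("staff_c", 1.0),
    ("staff_b", 1.0),
]

# Hypothetical planned contact hours per staff member for the same period.
planned_hours = {"staff_a": 3.0, "staff_b": 3.0, "staff_c": 3.0}


def contact_hours_by_staff(log):
    """Sum the recorded contact hours for each staff member."""
    totals = defaultdict(float)
    for staff, hours in log:
        totals[staff] += hours
    return dict(totals)


def workload_gap(actual, planned):
    """Return actual minus planned hours; negative means less contact than planned."""
    return {staff: actual.get(staff, 0.0) - hours for staff, hours in planned.items()}


actual_hours = contact_hours_by_staff(contact_log)
gaps = workload_gap(actual_hours, planned_hours)

for staff in sorted(gaps):
    print(f"{staff}: actual={actual_hours.get(staff, 0.0):.1f}h, gap={gaps[staff]:+.1f}h")
```

A gap table like this only flags where actual contact differs from the plan; interpreting why it differs still needs the interviews and document review that a process evaluation involves.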

- 16. Outcome Evaluation (describe program effects): Is the desired outcome observed? Are program participants better off than non-participants? Is there evidence that the program caused the observed changes? Is there support for the theoretical foundations underpinning the program? Is there evidence that the program could be implemented successfully elsewhere?

- 17. Evaluation of Efficiency (effectiveness relative to cost): Are funds spent for intended purposes? Are program outcomes achieved at a reasonable cost? Can dollar values be assigned to the outcomes? Is the outcome achieved greater than that of other programs of similar cost?
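
One simple way to compare programs on cost is the cost per successful outcome. The sketch below assumes each program can report a total cost and a count of clients who achieved the desired outcome; the `ProgramCost` class and all figures are hypothetical illustrations, not data from the presentation.

```python
from dataclasses import dataclass


@dataclass
class ProgramCost:
    name: str
    total_cost: float         # total dollars spent on the program (hypothetical)
    successful_outcomes: int  # clients who achieved the desired outcome (hypothetical)


def cost_per_outcome(program: ProgramCost) -> float:
    """Return dollars spent per successful outcome."""
    if program.successful_outcomes <= 0:
        raise ValueError("cost per outcome is undefined with no successful outcomes")
    return program.total_cost / program.successful_outcomes


programs = [
    ProgramCost("Program A", total_cost=120_000.0, successful_outcomes=60),
    ProgramCost("Program B", total_cost=90_000.0, successful_outcomes=30),
]

# Cost per successful outcome for each program, and the lowest-cost program.
ratios = {p.name: cost_per_outcome(p) for p in programs}
cheapest = min(ratios, key=ratios.get)

for name, ratio in ratios.items():
    print(f"{name}: ${ratio:,.0f} per successful outcome")
print(f"Lowest cost per outcome: {cheapest}")
```

The comparison is only meaningful when the programs serve similar clients and define a "successful outcome" in the same way.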

- 19. Process Evaluation: Sometimes referred to as “formative evaluation”. Looks at the approach to client service delivery, i.e. day-to-day operations. Two major elements: 1) how a program’s services are delivered to clients (what workers do, including frequency and intensity; client characteristics; satisfaction); 2) administrative mechanisms to support these services (qualifications; structures; hours; support services; supervision; training).

- 20. Process Evaluation: Can occur concurrently with outcome evaluation. Need to establish common program language. Purpose of process evaluation: improve service; generate knowledge; estimate cost efficiency. May be an essential component of organizational accreditation.

- 21. Steps in Process Evaluation: Deciding what questions to ask (background; client profile; staff profile; nature, amount and duration of service provided; nature of interventions; administrative supports; satisfaction of key stakeholders; efficiency?). Developing data collection instruments (ease of use; consistency with program operation and objectives; user input).

- 22. Steps in Process Evaluation: Developing a data collection monitoring system (unit of analysis; number of units to include, e.g. sampling; when and how to collect data). Scoring and analyzing data (categorize by client groups, interventions, program; display graphically). Developing a feedback system (clients, workers, supervisors, administrators). Disseminating and communicating results.

- 23. Sources of Process Evaluation Data: funder/agency/program documents (e.g. model; rationale; funding agreement); key informant interviews with service delivery and administrative personnel or key collateral agencies (e.g. referral source); service utilization statistics; Management Information Systems (M.I.S.); surveys/interviews with consumers (e.g. client satisfaction).

- 25. Outcome Evaluation: Outcomes are benefits or changes for individuals or populations during or after participating in program activities. Outcomes may relate to behavior, skills, knowledge, attitudes, values, condition, or other attributes. They are what participants know, think, or can do; or how they behave; or what their condition is, that is different following the program. Outcome evaluation helps us to demonstrate the nature of change that took place.

- 26. Outcome Evaluation: Outcome evaluation tests hypotheses about how we believe that clients will change after a period of time in our program. Evaluation findings are specific to a specific group of clients experiencing the specific condition of one specific program over a specific time frame at a specific time.

- 27. For example: in a program that counsels families on financial management, outputs (what the service produces) include the number of financial planning sessions and the number of families seen. The desired outcomes (the changes sought in participants' behavior or status) can include their developing and living within a budget, making monthly additions to a savings account, and having increased financial stability.

- 28. Uses of Outcome Evaluation: improving program services to clients; generating knowledge for the profession; estimating costs; demonstrating the nature of change (evaluation of program objectives, e.g. what we expect clients to achieve); guiding major program decisions and program activities.

- 29. Program-Level Evaluations: Program-level evaluations vary on a continuum and are fundamentally made up of three levels: exploratory, descriptive and explanatory.

- 30. The Continuum: from qualitative to quantitative; from exploratory through descriptive to explanatory.

- 31. Exploratory Outcome Evaluation Designs: Questions here include: Did the participants meet a criterion (e.g. treated vs. untreated)? Did the participants improve (e.g. appropriate direction)? Did the participants improve enough (e.g. statistical vs. meaningful difference)? Is there a relation between change and service intensity and participant characteristics?
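
The exploratory questions above can be answered with basic descriptive statistics. The following is a minimal sketch, assuming pre and post scores and a session count are recorded for each participant; the data, the cut-off score of 14 and all variable names are hypothetical. The Pearson correlation is written out by hand so the example has no dependencies beyond the standard library.

```python
from math import sqrt
from statistics import mean


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length with at least 2 values")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined when a sequence has no variation")
    return cov / (sx * sy)


# Hypothetical data for five participants.
sessions = [2, 4, 6, 8, 10]       # service intensity: sessions attended
pre = [10, 12, 11, 9, 10]         # score before the program
post = [11, 14, 15, 14, 17]       # score after the program
CRITERION = 14                    # hypothetical cut-off for "met the criterion"

# Did participants improve, and by how much on average?
change_scores = [after - before for before, after in zip(pre, post)]
mean_change = mean(change_scores)

# What share of participants met the criterion after the program?
share_meeting_criterion = sum(score >= CRITERION for score in post) / len(post)

# Is change related to service intensity?
r_intensity = pearson_r(sessions, change_scores)

print(f"mean change: {mean_change:.1f}")
print(f"share meeting criterion: {share_meeting_criterion:.0%}")
print(f"correlation of sessions with change: {r_intensity:.2f}")
```

A correlation of this kind shows only that change covaries with service intensity; without a comparison or control group it cannot rule out other explanations for the change.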

- 32. Exploratory Designs: one-group posttest only; multi-group posttest only; longitudinal case study; longitudinal survey.

- 33. Strengths of Exploratory Designs: less intrusive and inexpensive; assess the usefulness and feasibility of further evaluations; can correlate improvement with other variables.

- 34. Descriptive Designs: To show that something causes something else, it is necessary to demonstrate: 1) that the cause precedes the supposed effect in time, e.g. that an intervention precedes the change; 2) that the cause covaries with the effect, i.e. the change covaries with the intervention (the more the intervention, the more the change); 3) that no viable explanation of the effect can be found except for the assumed cause, e.g. there can be no other explanation for the change except the intervention. Both 1 and 2 can be achieved with exploratory designs, but not 3.

- 35. Descriptive Designs: randomized one-group posttest only; randomized cross-sectional and longitudinal survey; one-group pretest-posttest; comparison group posttest only; comparison group pretest-posttest; interrupted time series.
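
As an illustration of the comparison group pretest-posttest design, the sketch below compares the average gain of a program group with that of a comparison group. All scores and names are hypothetical, and the difference in mean gains is only one simple way such data might be summarized.

```python
from statistics import mean


def mean_gain(pre_scores, post_scores):
    """Average posttest-minus-pretest gain for one group."""
    if len(pre_scores) != len(post_scores):
        raise ValueError("each participant needs both a pretest and a posttest score")
    return mean(post - pre for pre, post in zip(pre_scores, post_scores))


# Hypothetical scores; the groups are NOT randomly assigned in this design.
program_pre = [10, 12, 11, 9]
program_post = [14, 15, 15, 12]
comparison_pre = [10, 11, 12, 9]
comparison_post = [11, 12, 12, 10]

program_gain = mean_gain(program_pre, program_post)
comparison_gain = mean_gain(comparison_pre, comparison_post)

# How much more the program group gained than the comparison group.
gain_difference = program_gain - comparison_gain

print(f"program gain: {program_gain}, comparison gain: {comparison_gain}")
print(f"difference in gains: {gain_difference}")
```

Because the groups are not formed randomly, a larger gain in the program group may still reflect pre-existing differences between the groups rather than the program itself.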

- 36. Explanatory Designs: The defining characteristic is observation of people randomly assigned to either a program or control condition. Considered much better at addressing threats to internal validity. Program group vs. control group: if groups are formed randomly there is no reason to believe they differ in rate of maturation; no self-selection into groups; groups did not begin at different levels.
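
Random assignment itself is easy to carry out. The following is a minimal sketch, assuming a list of participant identifiers and an even split into two groups; the function name `randomly_assign`, the identifiers and the seed are hypothetical.

```python
import random


def randomly_assign(participant_ids, seed=None):
    """Shuffle participants and split them into program and control groups."""
    rng = random.Random(seed)       # a seed makes the assignment reproducible for auditing
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2       # with an odd count, the control group gets the extra person
    return {
        "program": sorted(shuffled[:half]),
        "control": sorted(shuffled[half:]),
    }


# Hypothetical participant identifiers P01..P20.
participants = [f"P{i:02d}" for i in range(1, 21)]
groups = randomly_assign(participants, seed=42)

print("program:", groups["program"])
print("control:", groups["control"])
```

Because chance alone decides who lands in each group, participants cannot self-select into the program, which is the property the slide above relies on.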

- 37. Explanatory Designs: classical experimental; Solomon four-group; randomized posttest-only control group.

- 38. Explanatory Designs: Strengths/limitations: counter threats to internal validity; allow interpretations of causation; expensive and difficult to implement; frequently meet resistance from practitioners who already know what is best. Suggested times to use: when a new program is introduced; when stakes are high; when there is controversy over efficacy; when policy change is desired; when program demand is high.

- 39. Internal Validity (causality): history; maturation; testing; instrumentation error; statistical regression; differential selection; mortality; reactive effects; interaction effects; relations between experimental and control groups (e.g. rivalry).

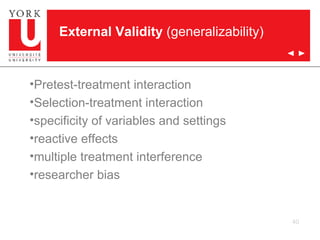

- 40. External Validity (generalizability): pretest-treatment interaction; selection-treatment interaction; specificity of variables and settings; reactive effects; multiple treatment interference; researcher bias.

- 41. Steps in Outcome Evaluation: Step 1: Operationalizing program objectives. Step 2: Selecting the measurements and stating the outcomes (psychometrics, administration, measurement burden). Step 3: Designing a monitoring system: sampling (number of clients to include, minimum 30 per group of interest; sampling strategies; missing data).
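
The minimum of 30 clients per group of interest can be checked before any analysis is run. The sketch below assumes one group label per client with usable data; the labels, counts and the function name `undersized_groups` are hypothetical.

```python
from collections import Counter

MIN_PER_GROUP = 30  # minimum per group of interest, as stated on the slide


def undersized_groups(group_labels, minimum=MIN_PER_GROUP):
    """Return the groups (and their sizes) that fall below the minimum sample size."""
    counts = Counter(group_labels)
    return {group: n for group, n in counts.items() if n < minimum}


# Hypothetical group labels, one per client with complete data.
labels = ["youth"] * 45 + ["adult"] * 30 + ["senior"] * 12

too_small = undersized_groups(labels)
for group, n in too_small.items():
    print(f"{group}: only {n} clients, below the minimum of {MIN_PER_GROUP}")
```

Clients with missing data should be excluded before counting, since they do not contribute to the comparison for their group.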

- 42. Steps in Outcome Evaluation: Step 3 (cont’d): Designing a monitoring system. Deciding when and how data will be collected depends on the question we are trying to answer: the degree to which the program is achieving its objectives (e.g. how much change); differences between program participants and non-participants; the question of causality; the longevity of client changes. Deciding how data will be collected: by telephone, mail or in person.

- 43. Steps in Outcome Evaluation: Step 4: Analysing and displaying data. Can also present outcome data according to subgroups by using demographics. Most useful when data can be aggregated and summarized to provide an overview of client outcomes. Step 5: Developing a feedback system. Step 6: Disseminating results.
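
Presenting outcome data by subgroup amounts to grouping client records on a demographic field and summarizing each group. The following is a minimal sketch, assuming each record holds a demographic label plus pre and post scores; the field names and values are hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical client records with one demographic field and two scores.
records = [
    {"age_group": "youth", "pre": 10, "post": 14},
    {"age_group": "youth", "pre": 12, "post": 15},
    {"age_group": "adult", "pre": 11, "post": 12},
    {"age_group": "adult", "pre": 9, "post": 12},
]


def mean_change_by(rows, key):
    """Average post-minus-pre change for each value of a demographic field."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row[key]].append(row["post"] - row["pre"])
    return {group: mean(changes) for group, changes in grouped.items()}


summary = mean_change_by(records, "age_group")
for group, change in summary.items():
    print(f"{group}: mean change {change}")
```

The same function works for any other demographic field present in the records, for example a hypothetical `"gender"` or `"referral_source"` key.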

- 44. Ready, Set, Go? Some things to consider…

- 45. Important to consider: Internal or external evaluators? Scope of evaluation? Boundary; size; duration; complexity; clarity and time span of program objectives; innovativeness.

- 46. Sources of Data for Evaluation: intended beneficiaries of the program (program participants; artifacts; community indexes); providers of service (program staff; program records); observers (expert observers; trained observers; significant others; evaluation staff).

- 47. Good Assessment Procedures: Multiple sources: triangulation and corroborating evidence. Multiple variables: a focus on a single variable can corrupt evaluation; different variables are affected by different sources of error. Non-reactive measures: measures which do not themselves affect respondents. Important variables: politically, conceptually and methodologically important variables.

- 48. Good Assessment Procedures: Valid measures: the instrument measures what it is supposed to measure (face, criterion, construct); the more focused on objective behaviour, the more likely to be valid. Reliable measures: consistent measure of construct (stable, e.g. test-retest; recognizable, e.g. inter-rater; homogeneity, e.g. split-half). Sensitivity to change: able to detect small changes; pre-test scores should be scrutinized.
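
Two of the reliability checks named above can be computed directly from instrument scores. The sketch below assumes a small set of respondents; all scores are hypothetical. Test-retest reliability is taken as the Pearson correlation between two administrations, and split-half reliability correlates the totals of odd-numbered and even-numbered items and then applies the standard Spearman-Brown correction, 2r / (1 + r), a technique the slide does not name but which is commonly paired with split-half estimates.

```python
from math import sqrt
from statistics import mean


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length with at least 2 values")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined when a sequence has no variation")
    return cov / (sx * sy)


def split_half_reliability(item_scores):
    """Split-half reliability with the Spearman-Brown correction.

    item_scores holds one row per respondent, each row a list of item scores.
    """
    first_half = [sum(row[0::2]) for row in item_scores]   # items 1, 3, 5, ...
    second_half = [sum(row[1::2]) for row in item_scores]  # items 2, 4, 6, ...
    r_half = pearson_r(first_half, second_half)
    return 2 * r_half / (1 + r_half)


# Hypothetical total scores for five respondents at two points in time.
time_1 = [10, 12, 14, 16, 18]
time_2 = [11, 12, 15, 15, 19]
test_retest = pearson_r(time_1, time_2)

# Hypothetical item-level scores: five respondents, four items each.
items = [
    [1, 2, 1, 2],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [4, 4, 5, 4],
    [5, 5, 4, 5],
]
split_half = split_half_reliability(items)

print(f"test-retest reliability: {test_retest:.2f}")
print(f"split-half reliability (Spearman-Brown): {split_half:.2f}")
```

With real instruments these estimates need far more than five respondents to be trustworthy; the tiny data set here only keeps the arithmetic easy to follow.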

- 49. Good Assessment Procedures: Cost-effectiveness: length, ease and cost of production and distribution. Grounded in existing research and experiential relevance: use within the literature; published psychometric data and population norms; pre-tested with the relevant population.

- 50. Ideal Program Evaluation Characteristics: counter threats to internal/external validity; established time ordering (intervention precedes effect); intervention is manipulated (administered to at least one group); relations between intervention and program must be established; design must control for rival hypotheses; one control group must be used; random assignment.

- 51. Planning an Evaluation: Identify the program and stakeholders. Identify information needs of the evaluation. Examine the literature. Determine the methodology (sample, design, data collection, procedures, analysis, time lines, budget). Prepare a written proposal.

- 52. Preparing for an Evaluation: Obtain a complete program description (newsletters, annual reports, etc.). Identify and meet with stakeholders: program director, staff, funders/program sponsors and clients/program participants. Identify the information needs of the evaluation: Who wants an evaluation? What should the evaluation focus on? Why is an evaluation needed? When is an evaluation wanted? What resources are needed? What is the evaluability of the program?

- 53. Things to Consider: Planning an evaluation follows similar steps to the conduct of more basic research, with some additional considerations. More effort needs to be expended in engaging and negotiating with stakeholder groups (e.g. interviews, or a research study steering committee). There needs to be a keener awareness of the social/political context of the evaluation (e.g. differing and competing interests).

- 54. Things to Consider: Greater effort needs to be expended in becoming familiar with and articulating program evaluation criteria, including goals, objectives and implementation of model and theory. The choice of measurement needs to be grounded in a detailed understanding of program goals, objectives and service delivery, in addition to the qualities of ideal assessment measures (e.g. reliability and validity). All stages of planning must take into consideration practical limitations (e.g. time, budget, resources).

- 55. Program Evaluation Exercise: Consider a social service setting with which you are familiar and illustrate how program evaluation activities could be applied to it. What needs to be done if you were to contemplate evaluating this program? Who are the different stakeholders who should be involved in an evaluation and how? What are some evaluation questions that could be asked and what methods could one use? What are some criteria of program success that can be easily measured but miss the central point of the program (measurable but irrelevant)? What are some measurable and relevant criteria? What else needs to be considered?