Can We Automate Predictive Analytics

- 1. CAN WE AUTOMATE PREDICTIVE ANALYTICS? Thomas W. Dinsmore • Open Data Science Conference, Boston 2015 • @opendatasci

- 2. Can we automate predictive analytics? • Buzz about automation • Degrees of automation • Some history • Where we are today • The last mile • The impact of automation

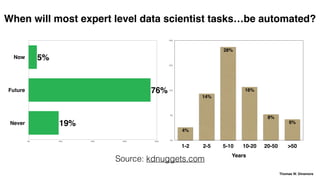

- 5. When will most expert level data scientist tasks… be automated? • Now: 19% • Future: 76% • Never: 5% • Future responses by years until automation: 1-2: 6% • 2-5: 8% • 5-10: 16% • 10-20: 28% • 20-50: 14% • >50: 4% • Source: kdnuggets.com

- 6. “We are convinced the machine can do better than human anesthesiologists” – Mark Ansermino, Director of Pediatric Anesthesia, University of British Columbia

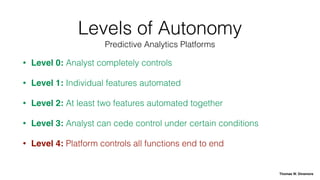

- 14. Levels of Autonomy • Level 0: Driver completely controls • Level 1: Individual controls automated • Level 2: At least two controls automated together • Level 3: Driver can cede control under certain conditions • Level 4: Vehicle controls all functions for the entire trip • Source: National Highway Traffic Safety Administration

- 15. 1995: Unica PRW • Optimized neural network specification • 1998: branded as Model One • Automated model selection • Now called IBM PredictiveInsight (Enterprise Marketing Management)

- 16. Late 1990s: MarketSwitch • “Fire your SAS programmers!” • “Russian rocket scientists” • Bought by Experian • Automation replaced by services

- 17. Late 1990s: KXEN • Structural risk minimization for model selection • Original release: rudimentary UI • Repositioned as an easy-to-use tool for marketers • SAP purchased it for $40 million in 2013
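Slide 17 credits KXEN with structural risk minimization (SRM) for model selection. The slide does not say how KXEN implemented it, so the sketch below uses the textbook SRM criterion instead. It ranks nested model classes by training error plus Vapnik's VC confidence term and keeps the class with the smallest bound. The candidate names, training errors, and VC dimensions are hypothetical.

```python
import math


def vc_confidence(vc_dim: int, n: int, eta: float = 0.05) -> float:
    """Vapnik's VC confidence term for a model class of VC dimension vc_dim
    trained on n samples; the risk bound holds with probability 1 - eta."""
    return math.sqrt((vc_dim * (math.log(2 * n / vc_dim) + 1) - math.log(eta / 4)) / n)


def srm_select(candidates: list, n: int, eta: float = 0.05) -> dict:
    """Structural risk minimization: pick the class with the smallest
    guaranteed risk = training error + VC confidence."""
    return min(
        candidates,
        key=lambda c: c["train_error"] + vc_confidence(c["vc_dim"], n, eta),
    )


# Hypothetical nested model classes: richer classes fit the training data
# better but carry a larger complexity penalty.
candidates = [
    {"name": "linear", "train_error": 0.20, "vc_dim": 11},
    {"name": "quadratic", "train_error": 0.15, "vc_dim": 66},
    {"name": "cubic", "train_error": 0.10, "vc_dim": 286},
]

print(srm_select(candidates, n=10_000)["name"])     # linear
print(srm_select(candidates, n=1_000_000)["name"])  # cubic
```

The training errors are held fixed here for illustration. At 10,000 samples the complexity penalty dominates and the simplest class wins. At 1,000,000 samples the penalty shrinks enough for the cubic class to win.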

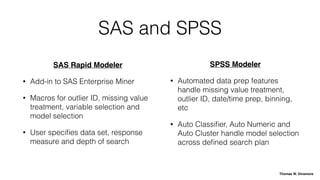

- 18. SAS and SPSS • SAS Rapid Modeler: add-in to SAS Enterprise Miner • Macros for outlier ID, missing value treatment, variable selection and model selection • User specifies data set, response measure and depth of search • SPSS Modeler: automated data prep features handle missing value treatment, outlier ID, date/time prep, binning, etc. • Auto Classifier, Auto Numeric and Auto Cluster handle model selection across a defined search plan
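The automated preparation steps on slide 18 (outlier ID, missing value treatment) can be sketched for a single numeric column as follows. This is not how SAS Rapid Modeler or SPSS Modeler work internally. The sketch assumes Tukey's 1.5 × IQR fences for outliers and median imputation, and the function name `auto_prep` is hypothetical. Outliers are flagged rather than dropped. A missing-value indicator is kept so a model can still learn from missingness.

```python
import statistics


def auto_prep(values, k=1.5):
    """Hypothetical one-column prep: flag outliers with Tukey's IQR fences,
    impute missing values (None) with the median, keep a missing indicator."""
    observed = [v for v in values if v is not None]
    q1, _, q3 = statistics.quantiles(observed, n=4)  # quartiles of observed values
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    median = statistics.median(observed)

    imputed = [median if v is None else v for v in values]
    missing_flag = [v is None for v in values]
    # Flag, do not remove: anomalies are kept for investigation.
    outlier_flag = [v is not None and not (low <= v <= high) for v in values]
    return imputed, missing_flag, outlier_flag


imputed, missing, outliers = auto_prep([10, 12, 11, None, 13, 95, 12])
print(imputed)   # [10, 12, 11, 12.0, 13, 95, 12]
print(outliers)  # [False, False, False, False, False, True, False]
```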

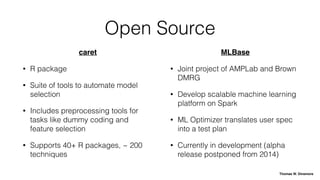

- 19. Open Source • caret: R package • Suite of tools to automate model selection • Includes preprocessing tools for tasks like dummy coding and feature selection • Supports 40+ R packages, ~200 techniques • MLBase: joint project of AMPLab and Brown DMRG • Developing a scalable machine learning platform on Spark • ML Optimizer translates a user specification into a test plan • Currently in development (alpha release postponed from 2014)
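The core idea behind caret is resampling-based model selection over a set of candidate techniques. The dependency-free Python sketch below illustrates that idea. It is not caret's API; in R, that role is played by `train()` with `trainControl()`. The three candidate models, the interleaved 5-fold split, and the synthetic data are assumptions chosen to keep the example small.

```python
import statistics


def fit_mean(xs, ys):
    mu = statistics.fmean(ys)  # baseline: always predict the training mean
    return lambda x: mu


def fit_line(xs, ys):
    # Ordinary least squares for y = a + b * x
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return lambda x: my + b * (x - mx)


def fit_knn(xs, ys, k=3):
    def predict(x):
        nearest = sorted(zip(xs, ys), key=lambda p: abs(p[0] - x))[:k]
        return statistics.fmean(y for _, y in nearest)
    return predict


def cv_mse(fit, xs, ys, folds=5):
    """Mean squared error over k interleaved folds (point i goes to fold i % folds)."""
    errors = []
    for f in range(folds):
        train = [(x, y) for i, (x, y) in enumerate(zip(xs, ys)) if i % folds != f]
        held_out = [(x, y) for i, (x, y) in enumerate(zip(xs, ys)) if i % folds == f]
        model = fit([x for x, _ in train], [y for _, y in train])
        errors.extend((model(x) - y) ** 2 for x, y in held_out)
    return statistics.fmean(errors)


def select_model(search_plan, xs, ys):
    """Score every candidate by cross-validation and return the best name."""
    scores = {name: cv_mse(fit, xs, ys) for name, fit in search_plan.items()}
    return min(scores, key=scores.get), scores


# Synthetic data: a straight line plus small alternating noise.
xs = list(range(20))
ys = [2 * x + 1 + (0.5 if x % 2 else -0.5) for x in xs]
best, scores = select_model({"mean": fit_mean, "line": fit_line, "knn": fit_knn}, xs, ys)
print(best)  # line
```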

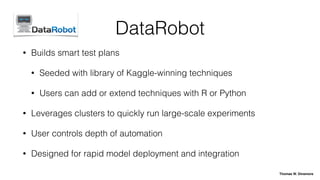

- 21. DataRobot • Builds smart test plans • Seeded with a library of Kaggle-winning techniques • Users can add or extend techniques with R or Python • Leverages clusters to quickly run large-scale experiments • User controls depth of automation • Designed for rapid model deployment and integration

- 22. Levels of Autonomy: Predictive Analytics Platforms • Level 0: Analyst completely controls • Level 1: Individual features automated • Level 2: At least two features automated together • Level 3: Analyst can cede control under certain conditions • Level 4: Platform controls all functions end to end

- 23. Level 4 Automated Analytics • Model Scoring: predictive models developed offline; models uploaded through PMML; scoring built into an automated process • Unsupervised Learning: anomaly detection; social networks; topic modeling or taste profiles for personalization
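Slide 23 describes models that are developed offline, uploaded through PMML, and scored inside an automated process. Below is a minimal sketch of the scoring side, assuming a linear PMML `RegressionModel`. It reads only the `RegressionTable` intercept and its `NumericPredictor` and `CategoricalPredictor` coefficients, so it is nowhere near a full PMML engine. The field names and coefficients are hypothetical. The document is also trimmed: a real export also carries `DataDictionary` and `MiningSchema`.

```python
import xml.etree.ElementTree as ET

# Hypothetical, trimmed PMML export of a linear regression model.
PMML_TEXT = """<PMML xmlns="http://www.dmg.org/PMML-4_2" version="4.2">
  <RegressionModel functionName="regression">
    <RegressionTable intercept="1.5">
      <NumericPredictor name="tenure" exponent="1" coefficient="0.2"/>
      <CategoricalPredictor name="plan" value="premium" coefficient="3.0"/>
    </RegressionTable>
  </RegressionModel>
</PMML>"""


def load_regression_table(pmml_text):
    """Read the intercept and predictor terms from the first RegressionTable."""
    root = ET.fromstring(pmml_text)
    table = root.find(".//{*}RegressionTable")  # {*} matches any namespace (Python 3.8+)
    numeric = [
        (p.get("name"), float(p.get("exponent", "1")), float(p.get("coefficient")))
        for p in table.findall("{*}NumericPredictor")
    ]
    categorical = [
        (p.get("name"), p.get("value"), float(p.get("coefficient")))
        for p in table.findall("{*}CategoricalPredictor")
    ]
    return float(table.get("intercept")), numeric, categorical


def score(model, record):
    """Linear score: intercept + sum(coef * x**exponent) + matching category terms."""
    intercept, numeric, categorical = model
    y = intercept
    y += sum(coef * record[name] ** exp for name, exp, coef in numeric)
    y += sum(coef for name, value, coef in categorical if record.get(name) == value)
    return y


model = load_regression_table(PMML_TEXT)
for record in [{"tenure": 10, "plan": "premium"}, {"tenure": 5, "plan": "basic"}]:
    print(score(model, record))  # 6.5, then 2.5
```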

- 24. “Data science is 1% science and 99% data.”

- 25. Data sources are complex and diverse

- 26. Enterprise data:

- 27. It’s still a mess.

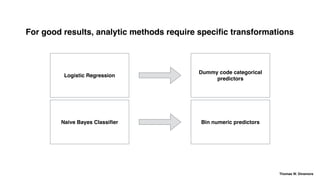

- 28. For good results, analytic methods require specific transformations • Logistic Regression: dummy code categorical predictors • Naive Bayes Classifier: bin numeric predictors
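The two transformations on slide 28 can be sketched in a few lines of plain Python, using hypothetical function names. `dummy_code` drops the first level as a reference category, so the dummies are not collinear with a logistic regression intercept. `equal_width_bins` uses equal-width bins, which is one of several reasonable binning schemes for a Naive Bayes classifier; equal-frequency bins are another.

```python
def dummy_code(values):
    """One 0/1 column per level, dropping the first (sorted) level as the reference."""
    levels = sorted(set(values))
    return {f"is_{level}": [int(v == level) for v in values] for level in levels[1:]}


def equal_width_bins(values, n_bins):
    """Map each numeric value to a bin index 0..n_bins-1 over [min, max]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0] * len(values)  # constant column: everything in one bin
    width = (hi - lo) / n_bins
    # Clamp so the maximum lands in the last bin rather than a bin of its own.
    return [min(int((v - lo) / width), n_bins - 1) for v in values]


print(dummy_code(["red", "green", "blue", "green"]))
# {'is_green': [0, 1, 0, 1], 'is_red': [1, 0, 0, 0]}
print(equal_width_bins([0, 2.5, 5, 7.5, 10], n_bins=4))
# [0, 1, 2, 3, 3]
```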

- 29. We can pre-build data source connections

- 30. Work with data “as is” • Conventional Wisdom: for good results, make the data perfect, e.g., find and remove anomalies and replace missing data; this consumes time, but is worth it • The Right Way: investigate and act on anomalies, but do not remove them; use techniques that can handle missing data; your predictive model has to work with dirty data, and so should you
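Naive Bayes is one technique that handles missing data without imputation. Each feature contributes an independent likelihood term, so a missing feature can simply be left out of the product. The slide does not name a specific technique, so this categorical Naive Bayes with Laplace smoothing is one illustrative choice. The data and feature meanings are hypothetical, and `None` marks a missing value.

```python
import math
from collections import Counter, defaultdict


def train_nb(rows, labels):
    """Count class frequencies and per-class value frequencies, skipping missing values."""
    class_counts = Counter(labels)
    value_counts = defaultdict(Counter)  # (feature index, class) -> value counts
    levels = defaultdict(set)            # feature index -> observed values
    for row, label in zip(rows, labels):
        for j, v in enumerate(row):
            if v is not None:
                value_counts[(j, label)][v] += 1
                levels[j].add(v)
    return class_counts, value_counts, levels


def predict_nb(model, row, alpha=1.0):
    """Return the class with the highest log posterior; missing features add no evidence."""
    class_counts, value_counts, levels = model
    total = sum(class_counts.values())
    best, best_score = None, -math.inf
    for label, n_label in class_counts.items():
        score = math.log(n_label / total)  # log prior
        for j, v in enumerate(row):
            if v is None:
                continue  # skip the missing feature instead of imputing it
            counts = value_counts[(j, label)]
            # Laplace-smoothed likelihood P(feature j = v | label)
            score += math.log((counts[v] + alpha) / (sum(counts.values()) + alpha * len(levels[j])))
        if score > best_score:
            best, best_score = label, score
    return best


# Hypothetical records: (plan, region), with None for missing values.
rows = [("premium", "east"), ("premium", None), ("basic", "west"),
        ("basic", "west"), (None, "east"), ("premium", "east")]
labels = ["stay", "stay", "churn", "churn", "stay", "stay"]
model = train_nb(rows, labels)
print(predict_nb(model, ("basic", None)))  # churn
print(predict_nb(model, (None, "east")))   # stay
```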

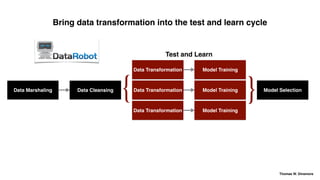

- 31. Bring data transformation into the test and learn cycle • The Conventional Wisdom: Data Marshaling → Data Cleansing → Data Transformation → Test and Learn { Model Training, Model Training, Model Training } → Model Selection

- 32. Bring data transformation into the test and learn cycle • Data Marshaling → Data Cleansing → Test and Learn { Data Transformation + Model Training, Data Transformation + Model Training, Data Transformation + Model Training } → Model Selection
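Slides 31 and 32 move data transformation out of the one-time prep stage and into the test and learn cycle. Each candidate transformation is then judged by how well the resulting model validates. The minimal sketch below rests on simple assumptions. It uses a single model type (a least-squares line), three hypothetical candidate transformations of one predictor, and a fixed holdout of every fourth row. The synthetic data has a logarithmic true relationship.

```python
import math
import statistics

# Candidate transformations, evaluated inside the loop rather than fixed up front.
TRANSFORMS = {"raw": lambda x: x, "log1p": math.log1p, "sqrt": math.sqrt}


def fit_line(zs, ys):
    """Ordinary least squares line through (z, y)."""
    mz, my = statistics.fmean(zs), statistics.fmean(ys)
    b = sum((z - mz) * (y - my) for z, y in zip(zs, ys)) / sum((z - mz) ** 2 for z in zs)
    return lambda z: my + b * (z - mz)


def holdout_mse(transform, xs, ys, every=4):
    """Train on the transformed predictor, validate on every `every`-th row."""
    train = [(x, y) for i, (x, y) in enumerate(zip(xs, ys)) if i % every != 0]
    valid = [(x, y) for i, (x, y) in enumerate(zip(xs, ys)) if i % every == 0]
    model = fit_line([transform(x) for x, _ in train], [y for _, y in train])
    return statistics.fmean((model(transform(x)) - y) ** 2 for x, y in valid)


def select_transformation(xs, ys):
    """Score each transformation + model pair on the holdout and keep the best."""
    scores = {name: holdout_mse(t, xs, ys) for name, t in TRANSFORMS.items()}
    return min(scores, key=scores.get), scores


xs = list(range(1, 41))
ys = [3 * math.log1p(x) + (0.05 if i % 2 else -0.05) for i, x in enumerate(xs)]
best, scores = select_transformation(xs, ys)
print(best)  # log1p
```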

- 33. “The doctor will see you now.”

- 34. How often are results of your analytics used? • Response options: Always, Most of the time, Sometimes, Rarely, Never • Reported shares: 1%, 5%, 28%, 50%, 16% • Source: 2013 Rexer Data Miners Survey

- 35. Why your analysis isn’t used • You do not understand the client’s business problem • You do not understand the deployment environment • The client does not understand your work

- 36. Automation lets data scientists spend more time collaborating, less time crunching • Diagram: time spent on four activities, shown “from this” and “to this”: wrangle the data, define the problem, explain your work, develop models

- 37. Can we automate predictive analytics? • Buzz about automation • Degrees of automation • Some history • Where we are today • The last mile • The impact of automation • Conclusions: we already have, almost • The last mile is a steep challenge • Automation will not replace data scientists; it will make them more effective

- 39. Thank You • Thomas W. Dinsmore • The Big Analytics Blog: www.thomaswdinsmore.com • Email: thomaswdinsmore@gmail.com • @thomaswdinsmore
