XS Japan 2008 Networking English

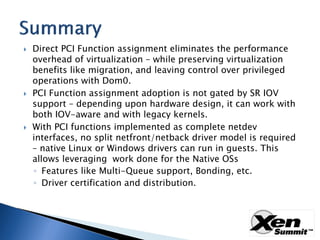

- 2. Direct PCI Function assignment eliminates the performance overhead of virtualization, while preserving virtualization benefits such as migration and leaving control over privileged operations with Dom0.
  - PCI Function assignment adoption is not gated by SR-IOV support: depending on the hardware design, it can work with both IOV-aware and legacy kernels.
  - With PCI functions implemented as complete netdev interfaces, no split netfront/netback driver model is required; native Linux or Windows drivers can run in guests.
  - This allows leveraging the work already done for native OSs:
    - Features such as multi-queue support, bonding, etc.
    - Driver certification and distribution.

- 3. Performance, performance, performance.
  - The CPU overhead of virtualizing 10GbE can be 4x-6x, depending on the networking workload.
  - Running benchmarks at 10GbE line rate in a guest is possible with multi-queue solutions, but at the expense of driver complexity and additional CPU overhead.
  - Use a single "native" driver in the guest, regardless of which hypervisor is deployed (or none):
    - The approach offers a number of certification and distribution benefits, as well as the rich feature set of a native stack.
    - The driver "sees" the same resources.
  - The incumbent solutions (emulation and para-virtualization) are profiled further in the backup slides.

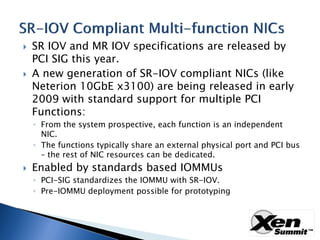

- 4. The SR-IOV and MR-IOV specifications were released by the PCI-SIG this year.
  - A new generation of SR-IOV-compliant NICs (such as the Neterion 10GbE X3100) is due for release in early 2009, with standard support for multiple PCI functions:
    - From the system perspective, each function is an independent NIC.
    - The functions typically share an external physical port and the PCI bus; the rest of the NIC resources can be dedicated.
  - Enabled by standards-based IOMMUs:
    - PCI-SIG standardizes the IOMMU with SR-IOV.
    - Pre-IOMMU deployment is possible for prototyping.

- 5. Multi-port GbE NICs:
  - Issues range from physical port and cable limitations to bandwidth limitations.
  - 10GbE NICs with SR-IOV VFs implemented as a subset of a NIC rather than as a complete NIC, for example with VFs implemented as Level 1 or Level 2 queue pairs:
    - A queue pair is a good way to scale across multiple CPU cores within a single OS image, but not across multiple OS images.
  - A more detailed analysis is included in the backup slides.

- 6. This presentation is not a call to add support for multi-function NICs to Xen,
  - because the support is already there.
  - Xen and the various guest OSs (GOSs) already have almost everything they need to support multi-function NICs:
    - Xen has PCI Function delegation.
    - Xen has migration.
    - GOSs support bonding/teaming drivers.
    - GOSs support PCI device insertion/removal.
  - An example of configuring a multi-function X3100 10GbE NIC for direct access and migration is included in the backup slides.

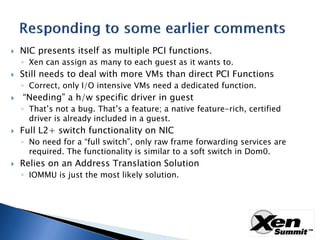

- 7. The NIC presents itself as multiple PCI functions.
  - Xen can assign as many of them to each guest as it wants.
  - Still needs to deal with more VMs than there are direct PCI Functions.
    - Correct: only I/O-intensive VMs need a dedicated function.
  - "Needing" a hardware-specific driver in the guest.
    - That's not a bug, it's a feature: a native, feature-rich, certified driver is already included in the guest.
  - Full L2+ switch functionality on the NIC.
    - No "full switch" is needed; only raw frame-forwarding services are required. The functionality is similar to a soft switch in Dom0.
  - Relies on an address translation solution.
    - An IOMMU is just the most likely solution.

- 8. Act as a configured switch under privileged Dom0 control.
  - Forwarding may be statically controlled, not learned.
  - Direct incoming frames to the correct PCI Function.
  - Provide internal VNIC-to-VNIC frame forwarding.
  - Provide VNIC-to-external-port forwarding:
    - Can prevent forged source addresses.
    - Can enforce VLAN membership.
  - Device domain participation is optional, but Dom0 can control things it already knows (VLAN participation, etc.).
  - More details are included in the backup slides.
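The forwarding behaviour listed above can be sketched as a small table keyed by (VLAN, destination MAC) that only privileged code fills in. This is a minimal Python model under our own assumptions, not the X3100's actual logic: the class, method and port names are hypothetical, and broadcast/multicast flooding is deliberately left out.

```python
from typing import Dict, Optional, Tuple, Union

EXTERNAL = "external"  # hypothetical label for the shared physical port

Port = Union[int, str]  # a PCI function number, or EXTERNAL


class StaticSwitch:
    """Unicast forwarding for a multi-function NIC, configured rather than learned."""

    def __init__(self) -> None:
        # (vlan, mac) -> PCI function that owns that address on that VLAN.
        self._table: Dict[Tuple[int, str], int] = {}

    def configure(self, vlan: int, mac: str, function: int) -> None:
        """Privileged (Dom0) operation: bind a VNIC address to a PCI function."""
        self._table[(vlan, mac.lower())] = function

    def forward(self, src: Port, vlan: int, dst_mac: str) -> Optional[Port]:
        """Return the egress port for a unicast frame, or None to drop it."""
        owner = self._table.get((vlan, dst_mac.lower()))
        if src == EXTERNAL:
            # Incoming frame: deliver only to the function that owns the address.
            return owner
        if owner is not None:
            # Internal VNIC-to-VNIC forwarding never touches the wire.
            return owner
        # Anything else sent by a VNIC goes out through the external port.
        return EXTERNAL
```

Because entries change only through `configure`, frames sent by a guest cannot alter where traffic is delivered, which is the point of "statically controlled, not learned".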

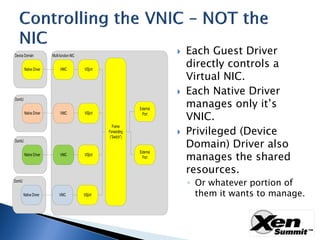

- 9. Each guest driver directly controls a Virtual NIC (VNIC).
  - Each native driver manages only its own VNIC.
  - The privileged (device domain) driver also manages the shared resources,
    - or whatever portion of them it wants to manage.

- 10. Some of the resources supported by current Neterion X3100 10GbE drivers:
  - VF MAC addresses
  - VF VLAN membership
  - VF promiscuous mode
  - Bandwidth allocation between VFs
  - Device-wide errors
  - Link statistics
  - Link speed and aggregation modes

- 11. Migration Support using Guest OS Services

- 12. Frontend/backend already provides excellent migration support,
  - but it requires a hypervisor-specific frontend driver.
  - Because it is the only universally supported solution, it plays a critical role in enabling migration.

- 14. Frontend/backend is kept in place and is always available.
  - Direct assignment is used for the most important guests:
    - Each multi-function device has a limit on how many guests it can directly support.
  - The native driver talks directly to the NIC through its own PCI function, if enabled.
  - The bonding driver uses frontend/backend if the direct NIC is not available.
  - More details on migration are included in the backup slides.
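Since each device can directly serve only a limited number of guests, some policy has to decide which guests get a function. A minimal sketch, assuming a hypothetical numeric priority per guest and a list of free PCI function addresses: every guest keeps its frontend/backend path, and the plan only records which guests also get a direct function.

```python
from typing import Dict, List, Optional, Tuple


def plan_direct_assignment(
    guests: List[Tuple[str, int]], free_functions: List[str]
) -> Dict[str, Optional[str]]:
    """Map each guest to a PCI function address, or None for frontend/backend only.

    guests: (name, priority) pairs; a higher priority wins a direct function.
    free_functions: PCI addresses still available on the multi-function NIC.
    """
    # sorted() is stable even with reverse=True, so equal priorities keep input order.
    ranked = sorted(guests, key=lambda g: g[1], reverse=True)
    plan: Dict[str, Optional[str]] = {}
    for rank, (name, _priority) in enumerate(ranked):
        plan[name] = free_functions[rank] if rank < len(free_functions) else None
    return plan
```

For example, with two free functions and three guests, the two highest-priority guests get direct functions and the third stays on netfront alone.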

- 15. Diagram: a server blade chassis with a mezzanine (Mezz) adapter on each blade, connected through an internal Ethernet switch fabric module to external Ethernet. Issues called out:
  - 360Gb/sec adapter aggregate bandwidth, with serious congestion issues.
  - Interoperability concerns.
  - No link-layer flow control.
  - 40-80Gb/sec "egress" bandwidth.
  - Unclear management responsibility: Ethernet switches inside the server domain (server management vs. internal Ethernet "fabric" management).
  - Extra heat on each blade.
  - Non-hot-swappable I/O.

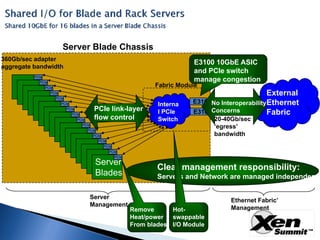

- 16. Diagram: the same server blade chassis with PCIe on each blade, connected through a PCIe switch fabric module to E3100 10GbE ASICs and external Ethernet. Benefits called out:
  - The E3100 10GbE ASIC and PCIe switch manage congestion for the 360Gb/sec adapter aggregate bandwidth.
  - No interoperability concerns.
  - Link-layer flow control.
  - 20-40Gb/sec "egress" bandwidth.
  - Clear management responsibility: servers and network are managed independently (server management vs. Ethernet "fabric" management).
  - Heat/power removed from the blades.
  - Hot-swappable I/O module.


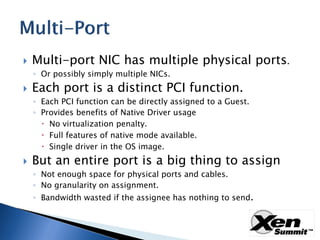

- 19. A multi-port NIC has multiple physical ports,
  - or is possibly simply multiple NICs.
  - Each port is a distinct PCI function:
    - Each PCI function can be directly assigned to a guest.
    - This provides the benefits of native driver usage: no virtualization penalty, the full features of native mode, and a single driver in the OS image.
  - But an entire port is a big thing to assign:
    - Not enough space for physical ports and cables.
    - No granularity of assignment.
    - Bandwidth is wasted if the assignee has nothing to send.

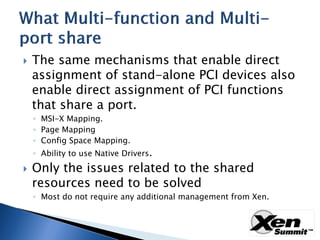

- 20. The same mechanisms that enable direct assignment of stand-alone PCI devices also enable direct assignment of PCI functions that share a port:
  - MSI-X mapping.
  - Page mapping.
  - Config space mapping.
  - Ability to use native drivers.
  - Only the issues related to the shared resources need to be solved:
    - Most do not require any additional management from Xen.

- 21. A multi-queue NIC provides multiple independent queues within a PCI function.
  - The native OS can use multiple queues itself:
    - CPU affinity, QoS, Ethernet priorities.
  - The device domain (DomD) can use guest-specific queues:
    - But this is not true device assignment.
    - The backend must validate/translate each request (WQE/transmit descriptor).
    - It does not enable the vendor's native driver, which already knows how to use multiple queues.
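To see why queue sharing through the backend is not true device assignment, consider the work the backend must repeat for every transmit descriptor a guest posts. The sketch below is illustrative only: it assumes 4 KiB pages and a hypothetical per-guest `p2m` dictionary from guest frame numbers to machine frame numbers, rather than Xen's real grant-table interface. With a directly assigned function, this translation is left to the IOMMU instead.

```python
from typing import Dict, List, Tuple

PAGE_SIZE = 4096  # assumed page size


def translate_tx_descriptor(
    gpa: int, length: int, p2m: Dict[int, int]
) -> List[Tuple[int, int]]:
    """Validate a guest buffer and return (machine_address, length) segments.

    Raises PermissionError if any page of the buffer was not granted to the guest.
    """
    if length <= 0:
        raise ValueError("descriptor length must be positive")
    segments: List[Tuple[int, int]] = []
    addr, remaining = gpa, length
    while remaining > 0:
        gfn, offset = divmod(addr, PAGE_SIZE)
        if gfn not in p2m:
            raise PermissionError(f"guest frame {gfn:#x} is not granted")
        # A buffer may straddle pages whose machine frames are not contiguous.
        chunk = min(remaining, PAGE_SIZE - offset)
        segments.append((p2m[gfn] * PAGE_SIZE + offset, chunk))
        addr += chunk
        remaining -= chunk
    return segments
```

Every one of these lookups and checks sits on the data path of each frame, which is the overhead the slide attributes to the backend.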

- 22. Limited-functionality queues can be set up as "Queue Pairs" in the guest (or even in guest applications).
  - Creation, deletion, and control are still managed through the device domain.
  - Hardware-specific code is required:
    - In both the guest and the device domain.
  - Single-threaded control means entangled control:
    - Control-plane work done for a guest must be separated out before the guest can be migrated.

- 23. Multi-function NICs present each external port as though it were a multi-queue NIC,
  - but for each of multiple PCI functions.
  - Each PCI function is, in effect, virtual, but that term is reserved.
  - Each PCI function can be directly assigned:
    - Allowing native drivers to be used as-is.
    - Allowing support of a large number of guests with a compact number of ports.
    - Enabling the multi-queue support already present in the guest OS.

- 24. Diagram: RDMA-style NIC. Components shown: DomU applications and frontends; backends, bridging, and the native driver in the device domain; "verbs" interfaces; a VNIC and queue pairs on the NIC; packet input routing and output mixing onto the external port.

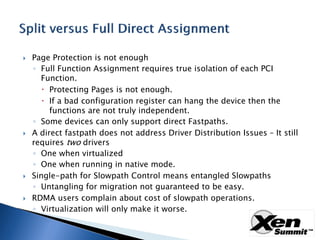

- 25. Page protection is not enough.
  - Full function assignment requires true isolation of each PCI function; protecting pages is not enough.
    - If a bad configuration register can hang the device, then the functions are not truly independent.
    - Some devices can only support direct fastpaths.
  - A direct fastpath does not address driver distribution issues; it still requires two drivers:
    - One when virtualized.
    - One when running in native mode.
  - A single path for slowpath control means entangled slowpaths:
    - Untangling them for migration is not guaranteed to be easy.
  - RDMA users complain about the cost of slowpath operations:
    - Virtualization will only make it worse.

- 26. Availability: is the driver installed in the guest OS image?
  - Efficiency: does the driver interface efficiently with the NIC?
  - Migration: can guests using this driver be migrated?
  - Flexibility: can new services be supported?

- 27. Emulated NICs:
  - Availability: excellent; the NICs to be emulated are selected for their widespread deployment.
  - Performance: terrible.
  - Migration: not a problem.
  - Flexibility: none. You're emulating a 20th-century NIC.

- 28. Frontend/backend (para-virtualized) drivers:
  - Availability: good, but there is a lag in which frontend has made it into the OS distribution.
  - Performance: tolerable.
  - Migration: not a problem.
  - Flexibility: new features require extensive collaboration.

- 29. Native drivers on directly assigned functions:
  - Availability: excellent. The same driver is used whether running natively or under any hypervisor, and NIC vendors already deal with OS distributions.
  - Performance: same as native.
  - Migration: not really a problem; details to follow.
  - Flexibility: same as native.

- 30. Multi-queue is a valuable feature,
  - but it does not really compensate for being a single-PCI-function device.
  - Multi-function NICs are multi-queue NICs,
    - but each queue is owned by a specific PCI function.
    - It operates within the function-specific I/O map, allowing the GOS to communicate guest physical addresses (GPAs) directly to the NIC.
  - Each PCI function has its own:
    - MSI-X.
    - PCI config space.
    - Function Level Reset.
    - Statistics.

- 31. No performance penalty:
  - The GOS driver interacts with the device the same way it would without virtualization.
  - There is zero penalty to the host; multi-function NICs offload the cost of sharing.
  - Frontend/backend solutions always cost more:
    - Address translation has a non-zero cost; copying costs even more.
    - A latency penalty is unavoidable: an extra step cannot take zero time.
  - Can support ANY service supported by the native OS,
    - because the native OS driver sees the same resources.

- 32. This is not a bug. It is a feature.
  - There already is a device-specific driver in the guest OS image.
  - The vendor worked very hard to get it there,
    - and to get it tested and certified.
    - There is already a distribution chain, which customers strongly prefer.
    - It already integrates hardware capabilities with native OS requirements.

- 33. "Soft switches" have traditionally performed switch-like services within the device domain,
  - but generally have not been fully compliant 802.1Q bridges.
    - For example, most do not enable Spanning Tree.
    - And in fact that is generally a good thing: they have no real information to contribute to topology discovery and would just slow things down.
  - The Dom0 soft switch will still be part of the solution:
    - It must support VMs not given direct assignments.
    - Some coordination between the two "switches" is desirable.

- 34. At a minimum, these services act as a configured switch under privileged control.
  - Forwarding may be statically controlled, not learned.
  - Direct incoming frames to the correct PCI function.
  - Provide internal VNIC-to-VNIC frame forwarding.
  - Provide VNIC-to-external-port forwarding:
    - Some form of traffic shaping is probably required.
    - Must prevent forged source addresses.
    - Must enforce VLAN membership.
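The slide leaves the form of traffic shaping open. One common choice is a per-VNIC token bucket; the sketch below uses that swapped-in technique, which the slides do not specify, with rate and burst parameters chosen purely for illustration and the current time passed in explicitly so the behaviour is deterministic.

```python
class TokenBucket:
    """Per-VNIC egress shaper: `rate` bytes per second, bursts up to `burst` bytes."""

    def __init__(self, rate: float, burst: float) -> None:
        self.rate = rate
        self.burst = burst
        self.tokens = burst  # start full, so an idle VNIC may burst immediately
        self.last = 0.0      # time of the previous decision, in seconds

    def allow(self, frame_len: int, now: float) -> bool:
        """Return True if the frame may be sent at time `now`, consuming tokens."""
        elapsed = max(0.0, now - self.last)
        # Refill for the elapsed time, never beyond the burst size.
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate)
        self.last = now
        if frame_len <= self.tokens:
            self.tokens -= frame_len
            return True
        return False  # the caller would queue or drop the frame
```

A bucket per PCI function keeps one busy guest from monopolizing the shared external port while still letting short bursts through.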

- 35. Virtual links cannot be monitored,
  - so we need to know where frames really came from.
  - The multi-function NIC (MF-NIC) MUST prevent spoofing.
  - Validating the local MAC address is enough:
    - IP and DNS can be checked using existing tools, given a validated MAC address.
  - Only privileged entities can grant a PCI function permission to use a given MAC address.
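A minimal sketch of the egress check described here, assuming a hypothetical per-function grant table that only privileged code populates: a frame leaves a VNIC only if its source MAC is the one granted to that function and its VLAN is one the function belongs to. IP- and DNS-level checks are left to existing tools, as the slide says.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, Set


@dataclass
class Grant:
    mac: str
    vlans: Set[int] = field(default_factory=set)


class EgressFilter:
    """Anti-spoofing and VLAN enforcement for frames sent by PCI functions."""

    def __init__(self) -> None:
        self._grants: Dict[int, Grant] = {}

    def grant(self, function: int, mac: str, vlans: Iterable[int]) -> None:
        """Privileged operation: allow `function` to use `mac` on `vlans`."""
        self._grants[function] = Grant(mac.lower(), set(vlans))

    def permit(self, function: int, src_mac: str, vlan: int) -> bool:
        """True only if the frame's source MAC and VLAN match the function's grant."""
        g = self._grants.get(function)
        return g is not None and src_mac.lower() == g.mac and vlan in g.vlans
```

A function with no grant sends nothing, so the default is closed until a privileged entity acts.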

- 36. Manufacturer:
  - Each directly assigned Ethernet function is a valid Ethernet device:
    - It has a manufacturer-assigned, globally unique MAC address.
    - No dynamic action is required to enable use of this address.
  - Zero-configuration conventions for VLANs:
    - Membership in the default VLAN (usually VLAN 1) is typically enabled even without network management.

- 37. Both network management and Xen already assign MAC addresses and VLAN memberships to Ethernet ports.
  - Xen can perform this function itself, or enable network management daemons in a designated domain to control the multi-function NIC's "switch ports".
  - In either case, "port configuration" is a privileged operation through the PCI function driver.

- 38. Neither the hypervisor nor Dom0 needs to be involved:
  - The shared device and its PCI function drivers can implement their own solution.
  - The device already knows how to talk to each VF driver, and which VFs are active.
  - Dom0 can control things it already knows:
    - MAC addresses of VNIC ports.
    - VLAN membership.
  - But Dom0 does not need to deal with new device-specific controls:
    - All required logic can be implemented in device-specific drivers and/or network management daemons.

- 39. A device domain bridge is created for each VLAN:
  - Each does simple bridging within a VLAN (802.1D).
  - A script (vif-bridge) is run whenever a VIF is added for a DomU:
    - It controls which bridge the VIF is attached to.
    - It may specify the MAC address; the default is random within a reserved OUI.
  - The device domain's OS may provide other filtering/controls,
    - such as netfilter.
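For the default MAC address, the VIFs listed in the backup slides all use the 00:16:3e prefix. A minimal sketch of drawing a random address inside that OUI, with a caller-supplied random generator so results are repeatable; the function name is hypothetical, and this is not the code Xen's tools actually use.

```python
import random

XEN_OUI = (0x00, 0x16, 0x3E)  # prefix of the vif MACs shown by `xm network-list`


def random_vif_mac(rng: random.Random) -> str:
    """Return a MAC address in the Xen OUI with three random low-order bytes."""
    octets = list(XEN_OUI) + [rng.randrange(256) for _ in range(3)]
    return ":".join(f"{b:02x}" for b in octets)
```

Random addresses can collide, so a real tool would also check the result against the VIFs that already exist; that check is omitted here.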

- 40. Many methods are possible:
  - As though there were an 802.1Q bridge per external port.
  - Static defaults applied, making it an unmanaged switch:
    - All VNIC MAC addresses are manufacturer-supplied.
  - Privileged operations via the native driver:
    - Enabled for DomD or stand-alone native drivers.
  - Combinations of the above.
  - The existing vif-bridge script could easily configure the virtual switch port (vsport) matching the VNIC for a directly assigned VIF:
    - It already has the MAC address and any VLAN ID.
    - Suggested naming convention: use the PCI function number to name the backend instance. This simplifies pairing with the direct device.
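The suggested naming convention can be made concrete by deriving the backend instance's handle from the function number in the PCI address. The `vif<domid>.<handle>` form follows the usual Linux naming for Xen backend interfaces; using the PCI function number as the handle is this slide's proposal, and the helper names below are hypothetical.

```python
import re
from typing import Tuple

# domain:bus:device.function, e.g. 0000:05:00.1 (device is 5 bits, function 3 bits)
_BDF = re.compile(r"^([0-9a-f]{4}):([0-9a-f]{2}):([01][0-9a-f])\.([0-7])$", re.I)


def parse_bdf(bdf: str) -> Tuple[int, int, int, int]:
    """Split a PCI address into (domain, bus, device, function)."""
    m = _BDF.match(bdf)
    if m is None:
        raise ValueError(f"not a PCI address: {bdf!r}")
    domain, bus, device, function = (int(g, 16) for g in m.groups())
    return domain, bus, device, function


def backend_name(domid: int, bdf: str) -> str:
    """Name the paired backend after the directly assigned function's number."""
    return f"vif{domid}.{parse_bdf(bdf)[3]}"
```

For the function exported to domain 1 in the backup slides, `backend_name(1, "0000:05:00.1")` gives `vif1.1`. A malformed address such as `0000:05.00.1` is rejected rather than silently mapped.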

- 41. A multi-function NIC is unlikely to support all netfilter rules fully in hardware.
  - When considering direct assignment:
    - Determine which netfilter rules are implemented by the multi-function NIC's frame forwarding services.
    - Determine whether the remaining netfilter rules can be trusted to DomU.
    - If there are filters that the hardware cannot implement and that cannot be trusted to DomU, then don't do the direct assignment.
  - Direct assignment complements frontend/backend; it is not a replacement.
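The three-step test reduces to a simple predicate. A minimal sketch, assuming each netfilter rule has been reduced to an opaque label and that the sets of hardware-enforceable and DomU-trustable rules are already known; the rule labels in any example are hypothetical.

```python
from typing import Iterable, List, Set


def blocking_rules(
    rules: Iterable[str], in_hardware: Set[str], trusted_to_domu: Set[str]
) -> List[str]:
    """Rules that neither the NIC's forwarding services nor DomU can be relied on to enforce."""
    return [r for r in rules if r not in in_hardware and r not in trusted_to_domu]


def direct_assignment_ok(
    rules: Iterable[str], in_hardware: Set[str], trusted_to_domu: Set[str]
) -> bool:
    """Assign directly only if every rule is covered; otherwise stay on frontend/backend."""
    return not blocking_rules(rules, in_hardware, trusted_to_domu)
```

Returning the blocking rules, not just a yes/no answer, tells the administrator exactly which filters keep a guest on frontend/backend.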

- 42. Same-to-same migration only requires checkpoint/restore of any device state via the VF driver.
  - Once state is checkpointed in VM memory, the hypervisor knows how to migrate the VM.
  - Many services do not require migration:
    - Each VM implements one instance of a distributed service, and persistence is a shared responsibility.
    - Most web servers fall into this category.
  - The GOS probably already provides failover between dissimilar devices through bonding drivers.

- 43. Directly assigned devices can be migrated using existing services:
  - GOS device bonding/failover:
    - Including netfront/netback in the team enables migration between platforms with different hardware.
  - GOS support for PCI device insertion/removal:
    - Including checkpointing of any stateful data in host memory.

- 44. The GOS already knows how to fail over between two NICs.
  - Same-to-same migration requires the same GOS services, whether failing over between two physical NICs or as a result of VM migration.
  - Xen already provides a universally available NIC via para-virtualization,
    - so a same-to-same migration is always possible.

- 45. Requirement: the device must be able to checkpoint any per-client stateful image in the client's memory space.
  - The device is told when to checkpoint any guest-specific stateful information into the guest memory image.
  - Migrating the guest's checkpointed memory image is a known problem that is already solved.
  - The device driver on the new host is told to restore from the checkpointed memory image:
    - The checkpointed image should be free of any absolute (non-VF-relative) references.
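The "no absolute references" requirement is what lets the image be restored against a different VF mapping on the new host. A minimal sketch, assuming a hypothetical VF state that holds one absolute register address and one ring index: checkpointing stores the register as an offset from the VF's base, and restoring rebases it. Real device state would be larger and device-specific.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class VfState:
    bar_base: int       # where this VF's registers are mapped on the current host
    doorbell_addr: int  # absolute address of a doorbell register (host-specific)
    tx_head: int        # transmit ring index, already VF-relative


def checkpoint(state: VfState) -> Dict[str, int]:
    """Keep only VF-relative values so the image is valid on any host."""
    return {
        "doorbell_offset": state.doorbell_addr - state.bar_base,
        "tx_head": state.tx_head,
    }


def restore(image: Dict[str, int], new_bar_base: int) -> VfState:
    """Rebuild the state against the VF's mapping on the destination host."""
    return VfState(
        bar_base=new_bar_base,
        doorbell_addr=new_bar_base + image["doorbell_offset"],
        tx_head=image["tx_head"],
    )
```

Had the image stored `doorbell_addr` itself, the restored driver would write to a register address that is meaningless on the destination host.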

- 46. Not all platforms have the same direct-access NICs, but same-to-same migration can be used anyway.
  - Method A: post-migration makes right.
    - Just do a same-to-same migration anyway.
    - It will work. Of course, because the actual device is missing on the new platform, the reactivated instance will fail, invoking the existing device failover logic within the guest.
    - Possible enhancement: provide a PCI device removal event immediately on migration.

- 47. Method B: migrate same-to-same via netfront.
  - Fail the directly assigned device.
  - The GOS will fail over to the frontend device.
  - Migrate same-to-same to the new target platform, which can always support netfront.
  - Enable the appropriate directly assigned device on the new platform.
  - The GOS is informed of the newly inserted PCI function.
  - The GOS will fail over to the preferred device as though it were being restored to service.
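Method B relies only on the guest's active-backup failover. The toy model below, with hypothetical class and function names, walks the steps using the interface names from the backup slides (eth1 is the direct function on the source host, eth2 the netfront backup, eth3 the direct function on the destination) and records which slave carries traffic after each step. It models bonding behaviour in the abstract and does not drive a real bond.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Bond:
    """Toy active-backup bond: the primary carries traffic whenever it is enslaved."""

    slaves: List[str] = field(default_factory=list)
    primary: Optional[str] = None

    @property
    def active(self) -> Optional[str]:
        if self.primary in self.slaves:
            return self.primary
        return self.slaves[0] if self.slaves else None


def method_b(
    bond: Bond, direct_src: str, frontend: str, direct_dst: str
) -> List[Tuple[str, Optional[str]]]:
    """Run Method B and return (step, active slave) pairs."""
    if frontend not in bond.slaves:
        raise RuntimeError("enslave the netfront backup before failing the direct device")
    trace: List[Tuple[str, Optional[str]]] = []
    bond.slaves.remove(direct_src)  # fail the directly assigned device
    trace.append(("fail direct", bond.active))
    # Same-to-same live migration happens here; only netfront exists on both hosts.
    trace.append(("migrate", bond.active))
    bond.slaves.append(direct_dst)  # new function hot-plugged on the target
    bond.primary = direct_dst       # and preferred once enslaved
    trace.append(("attach direct", bond.active))
    return trace
```

Starting from `Bond(slaves=["eth1", "eth2"], primary="eth1")`, the trace shows traffic on eth2 through the migration and back on a direct function (eth3) afterwards.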

- 48. View of the X3100 functions in Dom0:
  - `lspci -d 17d5:5833`
    - `05:00.0 Ethernet controller: Neterion Inc.: Unknown device 5833 (rev 01)`
    - `--`
    - `05:00.7 Ethernet controller: Neterion Inc.: Unknown device 5833 (rev 01)`
  - Export a function to DomU so that the vxge driver can be loaded there:
    - `echo -n 0000:05:00.1 > /sys/bus/pci/drivers/vxge/unbind`
    - `echo -n 0000:05:00.1 > /sys/bus/pci/drivers/pciback/new_slot`
    - `echo -n 0000:05:00.1 > /sys/bus/pci/drivers/pciback/bind`
    - `xm pci-attach 1 0000:05:00.1`
  - Configure a bonding interface in DomU with the active-backup policy and ARP link monitoring, so that the delegated interface (say eth1) can be enslaved to it:
    - `modprobe bonding mode=1 arp_interval=100 arp_ip_target=17.10.10.1`
    - `ifconfig bond0 17.10.10.2 up`
    - `echo +eth1 > /sys/class/net/bond0/bonding/slaves`
    - `echo eth1 > /sys/class/net/bond0/bonding/primary`

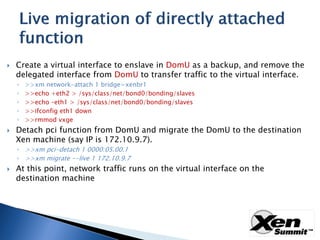

- 49. Create a virtual interface to enslave in DomU as a backup, then remove the delegated interface from the bond so that traffic moves to the virtual interface:
  - `xm network-attach 1 bridge=xenbr1`
  - `echo +eth2 > /sys/class/net/bond0/bonding/slaves`
  - `echo -eth1 > /sys/class/net/bond0/bonding/slaves`
  - `ifconfig eth1 down`
  - `rmmod vxge`
  - Detach the PCI function from DomU and migrate the DomU to the destination Xen machine (say its IP is 172.10.9.7):
    - `xm pci-detach 1 0000:05:00.1`
    - `xm migrate --live 1 172.10.9.7`
  - At this point, network traffic runs over the virtual interface on the destination machine.

- 50. Delegate a function on the destination machine:
  - `xm pci-attach 1 0000:02:00.1`
  - Enslave the direct interface:
    - `echo +eth3 > /sys/class/net/bond0/bonding/slaves`
    - `echo eth3 > /sys/class/net/bond0/bonding/primary`
    - `echo -eth2 > /sys/class/net/bond0/bonding/slaves`
  - Remove the virtual backup from DomU. `xm network-list 1` shows:

    | Idx | BE | MAC Addr. | handle | state | evt-ch | tx-/rx-ring-ref | BE-path |
    |-----|----|-----------|--------|-------|--------|-----------------|---------|
    | 0 | 0 | 00:16:3e:45:de:53 | 0 | 4 | 8 | 768/769 | /local/domain/0/backend/vif/1/0 |
    | 2 | 0 | 00:16:3e:61:91:0a | 2 | 4 | 9 | 1281/1280 | /local/domain/0/backend/vif/1/2 |

  - `xm network-detach 1 2`
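Picking the right `Idx` for `xm network-detach` by eye is easy with two VIFs but error-prone with more. A minimal helper, assuming the `xm network-list` column layout in the slide above (Idx first, MAC address third); the function name is hypothetical. Inside DomU, the backup interface's MAC can be read from `/sys/class/net/eth2/address` and passed in.

```python
from typing import Optional


def vif_index_for_mac(network_list_output: str, mac: str) -> Optional[int]:
    """Return the Idx of the VIF whose MAC matches, or None if there is none."""
    for line in network_list_output.splitlines():
        fields = line.split()
        # Data rows start with a numeric Idx; the header row starts with "Idx".
        if len(fields) >= 3 and fields[0].isdigit() and fields[2].lower() == mac.lower():
            return int(fields[0])
    return None
```

Matching on the MAC rather than on row order keeps the detach step correct even when VIF indices are not contiguous, as with the 0 and 2 shown in the slide.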