Abstract

This study investigates different instructional designs to promote students’ collective cognitive responsibility for Knowledge Building in blended university courses. Using an iterative, design-based research methodology with reference to the conjecture mapping technique, the blended learning design of an undergraduate educational psychology course was refined over three years in three design iterations. The iterations differed substantially in the embodiment of the Concurrent Embedded and Transformative Assessment (CETA) Knowledge Building principle that engaged students in knowledge assessments and strategy assessments of their community’s work. The design of the knowledge assessment involved face-to-face small group and whole class discussions in all three iterations. In the first and second iterations, students also worked online by writing individual reflections and contributing to a community portfolio. The design of the strategy assessment changed in each iteration. In the first iteration, the students’ strategy assessment took place in face-to-face discussions; in the second iteration, students contributed to an online community portfolio; and in the third iteration, the strategy assessment took place in an online community portfolio and face-to-face discussions before beginning the course and in the online community portfolio in the middle of the course. Collective cognitive responsibility was analyzed in terms of productive and informative participation, interdependence between participants, and self-regulation skills. The results show that the second iteration’s design was most effective for fostering the students’ collective cognitive responsibility, showing an increase in productive participation and self-regulation skills in the first part of the course and also an increase in the interdependence of participants during the course. Some implications concerning the relationship between the implementation of the CETA principle and Knowledge Building are identified for future directions of inquiry and for the design of blended learning environments.

1 Introduction

In recent years, technology-enhanced learning has become a common experience for many students in higher education (Dunn & Kennedy, 2019). Developments in educational technology have made university and college experiences more engaging and interactive than ever before (Nguyen et al., 2018). Key trends accelerating technology adoption in higher education include use of blended learning designs, redesigning learning spaces, a growing focus on measuring learning, and advancing cultures of innovation (Alexander et al., 2019). The focus on assessment reflects the growth of analytics and visualization software available to trace students’ behaviour in online and blended courses. The use of learning analytics, or the “measurement, collection, analysis and reporting of data about learners and their contexts, for the purposes of understanding and optimising learning and the environments in which it occurs” (Siemens, 2011), offers much promise to inform teaching, learning, and instructional design of online and blended learning courses.

Blended learning (BL) is a form of technology-enhanced learning that embraces the traditional values of classroom teaching and integrates best practices of online learning (Garrison & Vaughan, 2012). BL is defined variously in the literature and is studied with different foci. For example, Yang et al. (2021) described BL as an “instructional design structure that facilitates the benefits of technology, paired with face-to-face instruction, to address the variance in student learning” and discussed the possibility of using BL to enable personalized learning in K-12 education. They identified Garrison et al.’s (2000) Community of Inquiry (COI) framework, with its three interactive elements (cognitive presence, social presence, and teacher presence), as a comprehensive one to guide BL research in various educational settings. Alamri et al. (2020) operationalized Garrison and Kanuka’s (2004) definition of BL as “the thoughtful integration of classroom face-to-face learning experiences with online learning experiences” (p. 96) in their review of the literature on technology models that support the emerging trend of personalization in BL in higher education. Other BL researchers such as Anthony et al. (2019) and Anthony et al. (2020a) defined BL as “a mode of learning that integrates face-to-face (F2F) and online learning” that is increasingly popular and in demand in higher education. Anthony et al. (2019) surveyed students, teachers, and management to develop predictive models to assist university policy makers in decision making for BL adoption. Anthony et al. (2020a) investigated students’ behavioral intentions toward BL deployment in Malaysia, and found that attitude, subjective norm, perceived behavioral control, and self-efficacy influence students’ intention to accept BL. During the COVID-19 pandemic, Fabbri et al. (2021) transformed a period of high uncertainty into an opportunity for pedagogical development and innovation. They conducted an embedded mixed-method case study investigating BL model designs in two educational science programs at the University of Siena in Italy. Through quantitative analysis of Moodle Learning Management System (LMS) data, observations of synchronous teaching, and both quantitative and qualitative analyses of structured interviews with the faculty, they concluded that a mix of F2F and online instructional formats is the best solution for accelerating these students’ learning processes.

To transform educational practice and develop twenty-first century capabilities such as collaboration, problem solving, critical thinking, communication, and self-regulated learning needed for advancing cultures of innovation and knowledge creation (Scardamalia et al., 2012), instructional or learning designers and teachers can complement traditional instructional design principles with evidence from the information and learning sciences (Fujita, 2020). As Daniela (2019) points out, the concept of SMART Pedagogy forefronts the need to supplement teacher competence with “predictive analytical competence” to evaluate the outcomes of technologies used to enhance learning.

Repeatedly, learning designers have asserted that both teachers and students have new roles to fulfil in online learning environments, where students become more self-directed learners who embrace active and collaborative learning (Cesareni et al., 2016; Conrad & Donaldson, 2011; Harasim, 2012; Neelen & Kirschner, 2020). Video lectures and self-paced online modules certainly have a place in delivering course content and flexible access; however, advances in technologies now afford us an opportunity to transform teaching and learning practices towards more participatory and community learning (cf. Lee, 2020). Learning sciences researchers have discussed this shift in terms of the metaphors of learning as “acquisition” and learning as “participation” (Sfard, 1998). “Knowledge creation” may be added as a third metaphor of learning to meet the needs of students who must be prepared for their future in the knowledge age, where collaborating to create new knowledge is the cultural norm (Chen & Hong, 2016; Paavola & Hakkarainen, 2005). To examine learning designs in which the norm is innovation and creating new knowledge (Chen & Hong, 2016; Paavola & Hakkarainen, 2005), we turn to Knowledge Building theory, pedagogy, and technology from the Learning Sciences (Scardamalia & Bereiter, 2014).

According to Gutiérrez-Braojos et al. (2018), “Knowledge Building is a SMART pedagogy that encourages students to take collective responsibility for knowledge advancement; Knowledge Forum technology is designed to support them in this work” (p. 213). The term “knowledge building” is commonly used to refer to “constructivist learning” in educational contexts, and “knowledge capital” in business contexts (Scardamalia & Bereiter, 2010). In this paper we refer to Scardamalia and Bereiter’s Knowledge Building (KB) as a theory, pedagogy and technology based on 30 years of empirical research and design experimentation (Chen & Hong, 2016). KB is not specific to higher education, online learning, or BL; it is focused on the creation of knowledge as a social product, what scientists, scholars, and employees of highly innovative companies produce (Zhang et al., 2009). KB is a kind of inquiry-based learning focused on theory building, where deep learning occurs as a side-product of knowledge-building activities. Thus, KB differs from other constructivist learning activities, such as project-based learning, problem-based learning, or case-based learning, by emphasizing social knowledge creation rather than individual learning (Bereiter, 2002) or individual knowledge construction (Chen & Hong, 2016).

KB is guided by a set of 12 principles or socio-cognitive and technological dynamics that work together in a complex system to organise a community focused on creating new knowledge, called a Knowledge Building Community (KBC), as summarized in Table 1.

Both the KBC and the COI (Garrison et al., 2000) are social constructivist theoretical frameworks and student-centred approaches to guide asynchronous, text-based, online discussion among participants in a collaborative community (Cesareni et al., 2016). However, KB has a technology, Knowledge Forum (KF), specially designed to support KB (Scardamalia, 2004). KF is available in both client and web versions. It offers virtual spaces for students and their teacher(s) in a KBC to contribute notes (messages) that represent ideas that are organized into separate views (folders). A KBC is typically a classroom or course, but it is not restricted to those boundaries. Students advance the frontiers of their community knowledge by continuously reading notes, writing notes, and producing multimedia to contribute and improve ideas (Chen et al., 2015).

Analytic tools built into KF enable users to monitor various participation and collaboration patterns nearly instantaneously and permit teachers to provide just-in-time formative feedback to ongoing processes in the KBC (Teplovs, 2010; Teplovs & Scardamalia, 2007). With the support of KF’s analytic tools, teachers can make evidence-based pedagogical decisions to promote knowledge-building activity and collaborative learning among students (Vatrapu et al., 2011). In addition to the analytic tools in KF, previous research exploring instructional designs to support KBCs has employed students’ assessments of their own collaborative knowledge building by using KB principles and e-portfolios in high school classrooms (Lee et al., 2006; van Aalst & Chan, 2007), but less is known about students’ assessments of knowledge and assessments of strategies that they employ in higher education blended course settings.

As assessment of individual contributions to KB activities and collective knowledge advances play a central role in the KBC, it could be useful to use both quantitative and qualitative methods to explore these aspects in blended course settings in higher education. Therefore, the problem of inquiry addressed in the present study is how to design instruction in the online learning environment to promote students’ collective cognitive responsibility for Knowledge Building in blended higher education courses. This study focuses on the use of internal assessments, exploring different instructional designs, or embodiments guided by the Concurrent Embedded and Transformative Assessment (CETA) KB principle, to focus on students’ knowledge and strategy assessments of their work.

In the following section, the KB construct of collective cognitive responsibility is first introduced. Then, its three relevant dimensions (participation, interdependence between participants, and self-regulation skills) are discussed. Finally, the CETA principle is presented as an operational reference framework within which to implement different forms of activities to promote the students’ collective cognitive responsibility in terms of participation, interdependence between participants, and self-regulation skills.

2 Collective Cognitive Responsibility

In a KBC classroom, all students take responsibility for goals that the teacher usually assumes, in order to deepen their own individual learning and to create knowledge for the learning community. In other words, students are invited to exercise collective cognitive responsibility for generating and advancing ideas to create new knowledge of value for their own community (Scardamalia, 2002; Zhang et al., 2009). To do so, students review and assess the state of knowledge that “live in the world” to generate and continually improve promising ideas (Bereiter & Scardamalia, 1993). Students provide and receive constructive criticism, share and synthesize multiple perspectives, anticipate and identify challenges, solve problems, and collectively define knowledge goals that emerge from the process in which the group members are engaged (Zhang et al., 2009).

In the present study we consider students’ participation, interdependence between participants, and an improvement of self-regulation skills as indicators of collective cognitive responsibility. In the following sub-sections, we will review the research along these dimensions in more depth.

2.1 Students' Participation

The literature highlights students' participation as an important element in the design of successful post-secondary online courses. A persistent and widespread problem in asynchronous, text-based online discussions is the low number of student contributions, and teachers must design instruction to promote the students' participation (Hewitt, 2005). Beaudoin (2003), for instance, suggested that a high level of interaction and participation is desirable in online courses, but also noted that participation cannot easily be correlated with learning. However, a study by Alstete and Beutell (2004) reported that the strongest indicator of student performance in online classes was discussion board usage, where the number of “hits” or clicks on messages was positively and significantly related to overall course performance. Hewitt (2005) also stated that online interaction supports learning by exposing students to others’ ideas and provides them with an opportunity to articulate their own ideas and receive peer feedback. Hrastinski (2009) theorized that online learner participation is a complex process of taking part and maintaining relations with others, supported by both physical and psychological tools and engagement in various learning activities, but stressed that participation is not synonymous with simply talking or writing.

In addition, students’ involvement and participation in online courses need to be supported by carefully structured learning activities and appropriate teacher facilitation (Fischer et al., 2013; Sansone et al., 2016). Hew et al. (2010) reviewed 50 empirical studies to identify factors contributing to limited student participation (few or no postings, low-level knowledge construction), and reported that the use of grades as an incentive, requiring a minimum number of postings, and teacher facilitation all pose problems. They suggested that using students as facilitators may be an alternative solution to promote higher levels of participation in online discussion.

From a KB perspective, a nuanced understanding of participation is needed to understand whether collective cognitive responsibility is taking place in a community. As Zhang et al. (2009) highlight, to advance knowledge in a dynamic community, team members need to work on emergent problems and goals. As the agenda evolves, participant contributions continually alter the problem space. When students view their collaboration as supporting the KB model, they are more likely to participate online and employ a deep approach to learning (Chan & Chan, 2011).

2.2 Interdependence Between Participants

In addition to student participation, interdependence between participants is another indicator needed to analyse collective cognitive responsibility distributed between the members of the community. Zhang et al. (2009) explain that all members of a KBC, not only the teacher but also all the students, need to understand and monitor advances in the community. When students contribute their ideas, they must read the relevant work by their peers and post notes building on those ideas, instead of ignoring them. Interdependence between participants in KB can be shown by exploring the relationship among the students’ writing and reading activities in KF. Interdependence between community members prevents uneven participation, where some members are more engaged in writing notes and other members are more engaged in reading notes. In fact, inquiry can only be considered as a common enterprise if each community member understands the relevance of reading others’ notes and writes contributions that synthesize and advance previous ideas. In online learning environments, when students write contributions without reading other people’s texts, a self-referential situation is created, where each author remains encapsulated within their own ideas.

Alternatively, when community members read others’ contributions without writing contributions themselves, they engage in passive participation typical of “lurking”. The term “lurking” casts a pejorative shadow on people who do not actively post in an online community (Preece et al., 2004). If there is little or no posting in response to a message in an online community, lurking is a problem. Indeed, no one wants to be part of a conversation in which nobody responds to them. These aspects become clearer if we distinguish "informative participation" (based on reading activity), oriented to taking information from other people, from "productive participation" (based on writing activity), oriented to sharing information with other people (Cacciamani, 2017). In other words, from a KB perspective, when students write notes in KF, they are creating conceptual artefacts (containing ideas, questions, new information, etc.); when students read notes in KF, they are interacting with these artefacts. Online communities cannot survive if most of their members engage only in informative participation, because people are not interested in being members of silent communities. In these situations, facilitators must encourage both kinds of participation, as shown by a strong relationship between writing and reading activity. In KF, students' productive participation and informative participation may be analysed through learning analytics, using activity logs and a built-in analytic tool that computes the number of notes written and notes read for each student. Thus, KF makes it possible to analyse quantitatively the relationship between writing and reading activity for each student. The correlation between the notes that students have written and the notes that they have read in KF can indicate interdependence between community members. As stated previously, this kind of interdependence can be considered as an indicator of collective cognitive responsibility.
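As an illustration of this indicator, the relationship between notes written and notes read can be sketched as a simple correlation over per-student counts. The sketch below is only a minimal example under stated assumptions: the student labels and counts are hypothetical (they are not data from this study), the function name `pearson_r` is our own, and the Pearson coefficient is assumed here for simplicity; a rank-based coefficient may be preferable for skewed count data.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length (at least 2 values)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-products and sums of squared deviations from the means
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical per-student counts, as might be exported from an activity log
# (illustrative values only, not data from the study).
activity = {
    "student_a": {"written": 4, "read": 30},
    "student_b": {"written": 9, "read": 55},
    "student_c": {"written": 1, "read": 12},
    "student_d": {"written": 6, "read": 41},
    "student_e": {"written": 3, "read": 48},
}

written = [counts["written"] for counts in activity.values()]
read = [counts["read"] for counts in activity.values()]

r = pearson_r(written, read)
print(f"Correlation between notes written and notes read: r = {r:.2f}")
```

A coefficient close to 1 would suggest that students who write more notes also read more of their peers' notes, whereas a coefficient near 0 would be consistent with the uneven pattern described above, in which some members mainly write and others mainly read.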

2.3 Self-Regulation Skills

Another factor to be considered in the analysis of students' collective cognitive responsibility in the KBC is students’ metacognitive skills, particularly the self-regulative ones. In the BL literature, Anthony et al. (2020b) highlight the flexibility of the learning environment and students’ ability to manage their own learning pace among the factors influencing adoption of BL. Van Laer and Elen (2018) suggest the value of integrating features that support students’ self-regulation in BL environments.

Zimmerman (1998, 2000) states that the process of self-regulation is cyclical and involves three phases. The first phase, forethought, occurs when a learner prepares for performance, setting a goal and defining a strategy to attain it. The second phase, performance/volitional control, involves attention and action focused on implementing the strategy. The final phase, self-reflection, occurs when a learner reviews their own performance, making any necessary adjustments to the strategy implemented. From this theoretical perspective, learners who are highly self-regulating tend to set proximal goals, monitor progress toward those goals, and adapt their strategy as needed when progress is insufficient. Thus, reflection and assessment of strategies play a crucial role in theoretical models of self-regulation.

Self-regulation skills are important for successfully participating in an online course. Self-regulation skills and participation in an online course can have a positive reciprocal influence. On one hand, online students are involved in a new metacognitive challenge that requires them to change the strategies that they usually use to study in traditional courses. This change is necessary for online students to be able to process the plurality of information and to share their ideas with the other participants (Mayer et al., 2020; Narciss et al., 2007). Thus, metacognitive reflections on the strategy of work and self-regulative skills can support student participation in online interactions for knowledge building (e.g. Cacciamani et al., 2012).

To teach students to become self-regulated learners, individuals are usually taught targeted strategies to regulate their cognition, motivation, or behaviour to achieve relatively short-term learning goals (Zimmerman, 1990). On the other hand, when KBC members collaborate towards achieving long-term goals to solve problems of understanding and create knowledge (Chen & Hong, 2016), they may be able to enhance self-regulation skills that are important for successful online learning. Promisingly, several studies have shown that the acquisition of self-regulation skills can be developed through collaborative interaction among peers. When group members co-regulate their activity, this may support the acquisition and refinement of self-regulation skills (e.g. Di Donato, 2013). In online courses, if employed in conjunction with appropriate strategies, technologies can support and encourage informal conversation, dialogue, collaborative content generation and the sharing of knowledge, thereby opening access to a vast array of representations and ideas (McLoughlin & Lee, 2010). Many social software tools afford greater agency to the learner by allowing learner autonomy and engagement in global communities where ideas are exchanged and students assume active roles and create knowledge (Ashton & Newman, 2006; Lee et al., 2008).

These experiences that are made possible by social software tools are active, collaborative, process based, anchored in and driven by learners’ interests, and therefore have the potential to cultivate self-regulated, independent learning (McLoughlin & Lee, 2010). Learners expect features of learning analytics to support their planning and organization of learning processes (Schumacher & Ifenthaler, 2018). Thus, participation in online learning spaces with learning analytics can enhance the development of self-regulative skills (e.g. De Marco & Albanese, 2009; Schumacher & Ifenthaler, 2018).

Therefore, an important consideration in online and blended course design is to understand how to design instruction that encourages students to engage in metacognitive reflections about the activity in which they are involved. Collective cognitive responsibility in KB activity requires students to monitor not only their own but also other students’ progress towards the shared goal of creating new knowledge for the community. Therefore, students’ metacognitive reflections on their activity, and the relationship between the level of metacognitive reflection and participation in KF, can serve as indicators of students' effort to improve their self-regulation skills and, in doing so, their participation in KB.

3 The Concurrent Embedded and Transformative Assessment Principle

An important instructional strategy to promote engagement in online and blended courses is to involve students in assessment activities, aligned not only with individual learning outcomes, but also consistent with the collaborative KB process. Evidence from empirical research shows that both self and peer assessments can help students to reflect on their own knowledge and take part in collaborative learning (Lan et al., 2012; Van Aalst & Chan, 2007).

From the KB perspective, one of the 12 KB principles (Scardamalia, 2002) is Concurrent, Embedded and Transformative Assessment (CETA), which highlights the involvement of students in assessment activities (See Table 1). The CETA principle emphasizes that students need to engage in a continuous evaluation process to identify problems to solve as the work proceeds, focusing on the assessment of the knowledge created by the community, and the assessment of strategies used to create the knowledge. Responsibility for these kinds of assessments traditionally rests with the teacher, but it may be possible to foster student involvement in assessment activities by creating a common space in the online environment where the students also assess the knowledge built by the community and share their metacognitive reflections on the strategy assessment of their work.

Previous CETA research focused on the design and development of a visualization tool for assessment of knowledge-building activity (Teplovs, 2008; Teplovs et al., 2007). Designs of visual analytics can support teachers’ formative assessment of student learning and teacher decision making in twenty-first century classrooms (Vatrapu et al., 2011). These studies have investigated the iterative design of the technological environment to support CETA. To date, relatively few studies have explored the enactment of the KBC model in higher education contexts (e.g. Cacciamani et al., 2012; Cesareni et al., 2016; Chiu & Fujita, 2014). More specifically, there are no studies that examine the iterative design of instruction involving CETA. Thus, the problem of inquiry is how to design instruction in the online learning environment guided by the CETA principle to promote students’ collective cognitive responsibility for KB. To address this problem, this study investigated the following research questions:

What design of instructional intervention involving the CETA principle in a blended course would best promote collective cognitive responsibility for Knowledge Building in terms of:

- An increase in students’ writing and reading activities for informative and productive participation?
- An interdependence between participants as indicated in the relationship between writing and reading activities for each participant?
- An improvement in the self-regulation skills expressed in the level of metacognitive reflection about the strategy of work, and in the relationship between metacognitive level and informative and productive participation?

4 Method

4.1 Participants

Participants were undergraduate students in a blended course in educational psychology at the University of Valle d’Aosta. This course was offered over three academic years, where each year represents an iteration of the design experiment. The participants were first-year students in the Faculty of Science of Education, and second-year students in the Faculty of Science for Primary School. The enrollments in each program for each of the three academic years are shown in Table 2.

4.2 Course Organization

The course was organized into a set of learning modules, each of which addressed a specific topic in educational psychology (e.g. theories of learning, motivation, collaborative learning). In the first and third iterations, the course was organized into four modules, whereas in the second year, the course was organized into five modules. Only students in the Faculty of the Science of Education program completed the last module. Thus, the present study focuses only on the first three modules of the course that all students completed. Each module began with a face-to-face meeting in which the teacher introduced the content and set the conditions for an online discussion in a Knowledge Forum (KF) view that unfolded over a period of two weeks. In all three modules, students were asked to reflect metacognitively on their knowledge-building discussion participation.

4.3 Course Designs Implemented Over the Three Iterations

This study uses a Design-Based Research (DBR) methodology for carrying out iterative, situated, and theory-based design experiments of instructional interventions (Collins et al., 2004; The DBR Collective, 2003). It examines three different blended course designs with interventions to support the CETA principle for the same educational psychology course offered in three different academic years. Each year represents a design iteration implementing a different set of knowledge assessment and strategy assessment activities for students based on the findings from the data of the previous iteration.

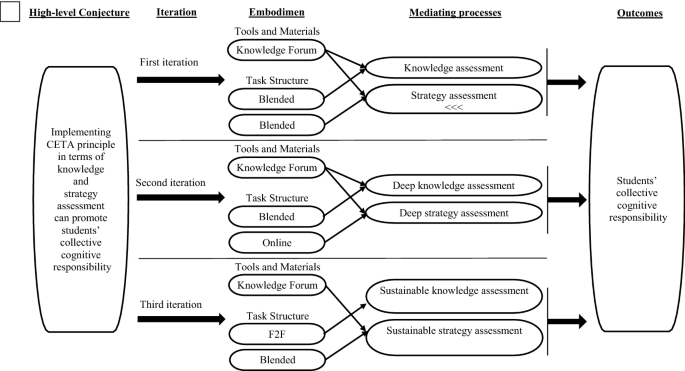

To conceptualize the different design iterations, we used the conjecture mapping technique, “a means of specifying theoretically salient features of a learning environment design and mapping out how they are predicted to work together to produce desired outcomes” (Sandoval, 2014, p. 2). The mapping begins with an assumption that a learning environment embodies some high-level conjecture about how to support the kind of learning that will be promoted in that context. In the present study, the high-level conjecture is based on the CETA Knowledge Building principle and hypothesizes that the involvement of students in knowledge and strategy assessments can promote their collective cognitive responsibility in a blended learning higher education course. The embodiment of this high-level conjecture becomes reified in the specific features of the learning environment design. Conjectures can be embodied within four kinds of elements of learning environments: tools and materials, task structures, participant structures, and discursive practices. In the present study, the design elements that we focus on are the tool, Knowledge Forum (KF), and the task structures for knowledge and strategy assessments delivered in the face-to-face (F2F), Online, and Blended (online and F2F interactions) modes. The design changes over the iterations are summarized in Table 3.

In conjecture mapping, the embodiment of the high-level conjecture is expected to generate certain mediating processes, which in our study are represented by knowledge and strategy assessments observable in the students’ online written notes in KF. These mediating processes are intended to produce the desired outcome, which in the present study is collective cognitive responsibility, operationalized as described in Section 2. The conjecture mapping of the three iterations is represented in Fig. 1.

The three iterations of the present study are described in detail below.

4.3.1 First Iteration: Blended-Blended Design for Knowledge and Strategy Assessment

In the first iteration, both knowledge and strategy assessments were implemented in a blended way at the end of each module, in three phases. In the first phase, each student had 15 min to post a note in KF that assessed two aspects:

- Knowledge assessment: identifying, from each student’s point of view, the more relevant ideas in the knowledge that was built in their course KBC; and
- Strategy assessment: describing the strategy that each student used to carry out the online part of the course and identifying its strengths and weaknesses.

In the second phase, students worked in small groups for 15 min. First, they read the online discussion notes in KF containing knowledge and strategy assessments written by their peers. Then, they engaged in F2F small group discussion to identify the more relevant ideas and the most important issues that needed clarification, and to share reflections about their strategies.

In the third phase, students discussed the knowledge assessment ideas and issues from small group discussions with the teacher, in a whole-class debate that took about 15 min. A subsequent discussion took place for another 15 min to share reflections on the strategies used by the entire community.

At the end of the course, students also participated in an online discussion that required them to reflect metacognitively on their own knowledge-building activity during the course. The content analysis of the student notes contributed in KF showed that eight (61.5%; see Note 1) of the 13 respondents identified the difficulty in organizing their study time to participate in the online activities. In addition, three students (23%) of 13 indicated this as a problem (two of them also indicated the lack of time as a problem). Furthermore, six students (46%) of 13 expressed some difficulties in the online communication modality (only two students did not mention the lack of time or the study time allotment). Three students did not post any messages. The prevalence of comments about the difficulties in the organization of study time could indicate that the time allotted (15 min + 15 min in the same class meeting) for metacognitive reflection on strategies was not enough to help students use their study time effectively. Thus, for the second iteration of the study, we introduced an online portfolio at the end of each module to stimulate deeper knowledge and strategy assessments, giving students three days to reflect and write rather than attempting to condense all of the assessments into a single 60-min class meeting.

4.3.2 Second Iteration: Blended-Online Design for Deeper Knowledge and Strategy Assessments

In the second iteration, both knowledge and strategy assessments were adapted to address the limitations of the previous iteration. Changes in the embodiment were designed to promote deeper knowledge and strategy assessment: the knowledge assessment was organized in a blended way, and the strategy assessment was completely online. At the end of each module, a community portfolio in a “view,” an online community space in KF, was introduced to provide students with more time to reflect than a 60-min class. In the online portfolio, each student was asked to assess the community knowledge and to describe the strategies that they had used. Students were asked to answer the following two questions over a period of three days for their knowledge and strategy assessment:

1. What are the two most interesting ideas that emerged from the discussion in this module?

2. What strategies did you use and what strengths and critical points did they reveal?

As in the previous iteration, the knowledge assessment in the second iteration used a blended approach organized into three phases. In the first phase, students wrote notes in the community portfolio in KF. In the second phase, students engaged in F2F small group discussion with their peers to identify the two most interesting ideas. In the third phase, any issues students had identified were clarified with the teacher for about 15 min during a F2F plenary discussion.

However, a challenge emerged for both the knowledge and strategy assessments during the second iteration. The students saw the compilation of a community portfolio over three days at the end of each module as a time-consuming, laborious activity. In the metacognitive reflection at the end of the course, eight students (36%) of 22 indicated some difficulties in the structure of the study time. In addition, ten students (45%) of 22 identified the lack of time as a problem (only one of these students also referred to the organization of study time). Furthermore, two students (9%) spoke of some difficulties in communicating in the asynchronous, text-based online learning environment. Finally, four students (18%) of 22 did not indicate any difficulty concerning the course features, but they noted technical problems with their computers. Therefore, we determined that the main problem that the students faced was the lack of time for knowledge and strategy assessments.

Accordingly, in the third iteration of the design experiment, we aimed to create more sustainable knowledge and strategy assessments. The community portfolio for knowledge assessment was discontinued, and the portfolio was used only for strategy assessment during a face-to-face meeting before the first module and at the end of the second one.

4.3.3 Third Iteration: F2F-Blended Design for Sustainable Knowledge and Strategy Assessments

In the third iteration of the design experiment, based on the findings of analyses in the previous iteration, changes were designed to make the knowledge and strategy assessment more sustainable. Instead of using the community portfolio, the knowledge assessment took place in two phases in a F2F meeting at the end of each module. Each phase took about 15 min. The first phase of the knowledge assessment consisted of small group F2F discussions, in which students were asked to highlight the more relevant ideas and the most important issues that emerged. A second phase followed, where the main issues that emerged were clarified with the teacher in a whole class discussion.

To address the constraints on the strategy assessment in the previous iteration, the strategy assessment in the third iteration was embodied using a blended approach, in two phases. The first phase took place at the beginning of the course, before the first module. A community portfolio was used during a class meeting to capture students’ preliminary reflections on the study strategies that they usually use at the university. Students were given 15 min to post a note in the community portfolio in KF responding to the following question from the teacher: “Which strategy do you typically use to study at the university?”.

After the students contributed their study strategies to the community portfolio, in an F2F whole class discussion with the teacher, students analysed the different strategies that they were currently using.

The second phase of the strategy assessment in the third iteration occurred after the second module. A community portfolio was organized during a face-to-face meeting. The aim of the portfolio was to carry out the strategy assessment at the mid-course mark. Each student was asked to describe, in 15 min, their own study strategy during the first part of the course, highlighting the strengths and weaknesses. To stimulate the metacognitive reflection, the teacher asked students to describe and assess their learning strategy by responding to the following question: “What strategy are you using to study for the online learning part of the course? (Please list the actions of the strategy). A point of strength for this strategy is … A critical point for this strategy is …”.

In the final metacognitive reflections, only two students (18%) of 11 showed some difficulties in the organization of study time, and none indicated a lack of time as a problem. Only two students (18%) expressed difficulties in the communication modality in the online environment, and seven (64%) of 11 did not mention any difficulties.

4.4 Data Analysis

To investigate the effects of each embodiment of the CETA principle, the data from the first three modules of each course were analysed. The Analytic Toolkit (ATK; Burtis, 1998) built into KF provides summary statistics on activities in a KF database. The following two statistics were used to analyse the students’ observable interactions in producing written notes in KF and in reading these notes: the number of notes written by each participant (productive participation), and the percentage of notes read in the view, from which we derived the number of notes read (informative participation) by each user.
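
As an illustration of how the number of notes read can be derived from the percentage reported for a view, a minimal sketch follows. It is not the ATK export format: the function name `notes_read_count`, the student identifiers, and all numbers are hypothetical.

```python
def notes_read_count(percent_read: float, notes_in_view: int) -> int:
    """Convert the percentage of notes read in a view into a note count."""
    return round(percent_read / 100 * notes_in_view)


# Hypothetical data for one module: student -> (notes written, % of the view's notes read)
module_stats = {"s01": (6, 37.5), "s02": (3, 80.0), "s03": (0, 10.0)}
notes_in_view = 40  # hypothetical total number of notes in the module view

# Productive participation = notes written; informative participation = notes read
participation = {
    student: {"written": written, "read": notes_read_count(pct, notes_in_view)}
    for student, (written, pct) in module_stats.items()
}

for student, counts in participation.items():
    print(student, counts)
```

Rounding to the nearest whole note is an assumption of this sketch; the percentage and the size of the view determine the count up to that rounding.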

For each design iteration or academic year, t-tests on both numbers of notes read and the numbers of notes written by each student were used to examine the differences in students’ levels of participation between the modules.
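
The module-to-module comparison can be sketched as follows. The sketch assumes a paired (dependent-samples) t-test, since the same students are measured in both modules and the reported degrees of freedom equal the number of students minus one; the function name `paired_t` and the data are hypothetical. Differences are taken as earlier module minus later module, so a positive t indicates a decrease, which appears to match the sign convention of the reported results.

```python
import math
import statistics


def paired_t(earlier, later):
    """Return (t statistic, degrees of freedom) for a paired t-test."""
    diffs = [e - l for e, l in zip(earlier, later)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    # Standard error of the mean difference (sample standard deviation, n - 1)
    se = statistics.stdev(diffs) / math.sqrt(n)
    return mean_diff / se, n - 1


# Hypothetical notes written by the same five students in two modules
module1_written = [10, 12, 9, 14, 11]
module2_written = [8, 11, 7, 10, 9]

t_stat, df = paired_t(module1_written, module2_written)
print(f"t({df}) = {t_stat:.2f}")
```

The p-value is then read from the t distribution with the returned degrees of freedom; that step is left to a statistics package.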

In each module, Spearman’s Rho was used to calculate correlations between the notes written and the notes read by each participant to analyse the interdependence between reading and writing activities. The comparison among the correlations of the different modules of each course was managed through Steiger’s (1980) test of the difference between two dependent correlations with no variable in common, following the procedure indicated by Lee and Preacher (2013).
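
The two steps above can be sketched in plain Python. This is an illustrative reading, not the authors' code or the Lee and Preacher calculator: all function names and sample values are hypothetical, and `steiger_z` follows the pooled form of Steiger's (1980) statistic as it is commonly presented. Here j and k stand for notes written and notes read in one module, and h and m for notes written and notes read in the other module, for the same students.

```python
import math


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # sorted positions i..j share one rank
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def spearman_rho(x, y):
    """Spearman's rho as the Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def steiger_z(r_jk, r_hm, r_jh, r_jm, r_kh, r_km, n):
    """z for the difference between dependent correlations r_jk and r_hm
    that share no variable; the other four are the cross-correlations."""
    r_bar = (r_jk + r_hm) / 2  # pooled estimate of the two compared correlations
    psi = 0.5 * (
        (r_jh - r_bar * r_kh) * (r_km - r_kh * r_bar)
        + (r_jm - r_jh * r_bar) * (r_kh - r_bar * r_jh)
        + (r_jh - r_jm * r_bar) * (r_km - r_bar * r_jm)
        + (r_jm - r_bar * r_km) * (r_kh - r_km * r_bar)
    )
    s_bar = psi / (1 - r_bar ** 2) ** 2
    z_diff = math.atanh(r_jk) - math.atanh(r_hm)  # Fisher z transforms
    return z_diff * math.sqrt(n - 3) / math.sqrt(2 - 2 * s_bar)


# Hypothetical notes written and read by five students in one module
written = [1, 2, 3, 4, 5]
read = [2, 1, 4, 3, 5]
rho = spearman_rho(written, read)
print(round(rho, 2))
```

When all four cross-correlations are zero, the statistic reduces to the usual Fisher z test for two independent correlations, which is a convenient sanity check on the implementation.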

To analyse the level of metacognitive reflection in the strategy assessment, the following coding scheme based on the participant structure, or how students were expected to participate in the task, was created:

Level 0: absence of reflection.

Level 1: reflection but not metacognitive on the strategy used.

Level 2: metacognitive reflection focused only on the strategy description.

Level 3: metacognitive reflection focused on the description and on the analysis of the strengths and weaknesses of the strategy.

Level 4: metacognitive reflection focused on the description, analysis of strengths and weaknesses and possible changes to the strategy.

This coding scheme was used to analyse the first and second strategy assessments (called Time 1 and Time 2, respectively; see Note 2), as we were interested in examining the improvement in the levels of metacognitive reflection over time, and the relationship between the level of metacognitive reflection and participation in each iteration. Two independent raters applied the coding scheme to students’ notes containing their strategy assessment. The raters reached an inter-rater agreement of 82.6% and a Cohen’s kappa of 0.733, which are considered acceptable values in the literature (Landis & Koch, 1977). The controversial cases were discussed until the raters reached complete agreement.
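
For readers unfamiliar with the two agreement indices, the following sketch shows how percentage agreement and Cohen's kappa are computed from two raters' codes on the 0 to 4 scale. The ratings are invented for illustration and are not the study data; the function name `agreement_and_kappa` is hypothetical.

```python
from collections import Counter


def agreement_and_kappa(rater_a, rater_b):
    """Return (observed agreement, Cohen's kappa) for two lists of codes."""
    n = len(rater_a)
    # Proportion of notes on which both raters assigned the same level
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal distribution
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_chance = sum(counts_a[level] * counts_b[level] for level in counts_a) / n ** 2
    kappa = (p_observed - p_chance) / (1 - p_chance)
    return p_observed, kappa


# Hypothetical reflection levels (0 to 4) assigned to eight notes
rater_a = [0, 1, 2, 2, 3, 4, 3, 1]
rater_b = [0, 1, 2, 3, 3, 4, 3, 2]

p_observed, kappa = agreement_and_kappa(rater_a, rater_b)
print(f"agreement = {p_observed:.1%}, kappa = {kappa:.2f}")
```

Kappa is lower than raw agreement because it discounts the matches that two raters would produce by chance given how often each uses every level.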

In each iteration, we used the Wilcoxon signed rank test to compare the level of metacognitive reflections at Time 1 and 2 to see if there was improvement in metacognitive reflection over time. The relationship between the metacognitive level of reflection, informative participation, and productive participation was investigated through a correlation analysis with Spearman’s Rho, considering metacognitive reflection levels as an ordinal variable.
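
The Time 1 versus Time 2 comparison can be sketched as below. This is a simplified illustration with invented data: zero differences are dropped, tied absolute differences receive average ranks, and the z value uses the normal approximation with a tie correction, which is only rough for small samples. The function name `wilcoxon_signed_rank` is hypothetical, and the sketch assumes at least one non-zero difference.

```python
import math
from collections import Counter


def wilcoxon_signed_rank(time1, time2):
    """Return (W+, W-, z) for paired ordinal scores; zero differences dropped."""
    diffs = [b - a for a, b in zip(time1, time2) if b != a]
    n = len(diffs)
    abs_sorted = sorted(abs(d) for d in diffs)
    tie_counts = Counter(abs_sorted)
    # Average rank for each distinct absolute difference
    rank_of = {
        value: abs_sorted.index(value) + 1 + (count - 1) / 2
        for value, count in tie_counts.items()
    }
    w_plus = sum(rank_of[abs(d)] for d in diffs if d > 0)
    w_minus = sum(rank_of[abs(d)] for d in diffs if d < 0)
    mean_w = n * (n + 1) / 4
    tie_term = sum(c ** 3 - c for c in tie_counts.values()) / 48
    var_w = n * (n + 1) * (2 * n + 1) / 24 - tie_term
    z = (w_plus - mean_w) / math.sqrt(var_w)
    return w_plus, w_minus, z


# Hypothetical reflection levels (0 to 4) for eight students
time1_levels = [2, 3, 1, 2, 3, 2, 4, 1]
time2_levels = [3, 3, 2, 4, 2, 3, 4, 3]

w_plus, w_minus, z = wilcoxon_signed_rank(time1_levels, time2_levels)
print(w_plus, w_minus, round(z, 2))
```

With a five-level scale, ties are frequent, so an exact or permutation-based p-value from a statistics package is preferable to the approximation when the number of students is small.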

5 Results

5.1 Students’ Participation

The average numbers of notes read and notes written by students in each module for the different designs of CETA principle embodiments are shown in Tables 4 and 5.

In the first design iteration, the results showed a statistically significant decrease in notes read from Module 1 to Module 2 (t(15) = 2.88, p < 0.05), and from Module 2 to Module 3 (t(15) = 3.20, p < 0.01), and also in comparing Module 1 and Module 3 (t(15) = 5.84, p < 0.001). For written notes, there was a statistically significant decrease from Module 1 to Module 2 (t(15) = 2.35, p < 0.05), but not from Module 2 to Module 3. The decrease between Module 1 and Module 3 is also statistically significant (t(15) = 3.44, p < 0.01).

In the second design iteration, the results highlighted a different trend compared to the first iteration. In fact, as Tables 4 and 5 reveal, both the number of notes written and the number of notes read increase from Module 1 to Module 2 and decrease from Module 2 to Module 3. However, with regard to the number of notes read, the difference is statistically significant only between Module 2 and Module 3 (t(25) = 5.78, p < 0.01), and the number of notes read in Module 3 is significantly lower than in Module 1 (t(25) = 2.63, p < 0.05). For the number of written notes, these differences are significant both between Module 1 and Module 2 (t(25) = −2.21, p < 0.05) and between Module 2 and Module 3 (t(25) = 2.43, p < 0.05), while the difference between the first and third module is not significant.

In the third design iteration, the results showed the same pattern as the second iteration in the number of notes read both from Module 1 to Module 2 (t(13) = −4.60, p < 0.01) and from Module 2 to Module 3 (t(13) = 3.23, p < 0.01). However, no significant differences in the number of notes read were detected between Module 1 and Module 3.

5.2 Interdependence Among Participants

Correlation between reading and writing activities by individual participants in each module was used to measure interdependence among community members for each design iteration. The results are shown in Table 6.

Table 6 indicates that the only statistically significant correlation is found in Module 3 in the second iteration. The table also shows an increase in the correlations in the second iteration, as well as in the third iteration. In addition, the comparison of each pair of correlation coefficients of the modules in each course using Steiger’s test shows a statistically significant difference only in the second iteration, between Module 1 and Module 3 (z = −1.70, p < 0.05).

5.3 Self-Regulation Skills

The relationship between students’ metacognitive reflections on their activity and their participation was used as an indicator of the students’ effort to improve their self-regulation skills and, consequently, their participation in KB. Results for coding the metacognitive levels of reflection in strategy assessment are shown in Table 7.

As shown in Table 7, in each iteration very few students failed to share reflections about their strategy of work (level 0). Using the Wilcoxon signed rank test, we did not find statistically significant differences between metacognitive reflections at Time 1 and Time 2 in any design iteration.

Regarding the correlation between metacognitive levels of reflection and informative participation, we found only a negative correlation in the first iteration (Blended-Blended design) between Time 1 and number of notes read in Module 2 (ρ = −0.51, p < 0.05). Results concerning correlation between metacognitive levels of reflection and productive participation are shown in Table 8.

As shown in Table 8, we found statistically significant correlations between metacognitive level of reflection and productive participation only in the first design iteration, Module 2, Time 2 (ρ = 0.70, p < 0.01), and in the second iteration, Module 1, Time 1 (ρ = 0.45, p < 0.05) and Module 2, Time 1 (ρ = 0.39, p < 0.05). We did not find any correlation in the third iteration.

6 Discussion

The aim of the present study was to evaluate three different design iterations of instructional intervention or embodiments of the CETA principle to promote students’ collective cognitive responsibility for knowledge building in blended higher education courses. Collective cognitive responsibility was analysed through four indicators: 1. participation; 2. interdependence among participants; 3. level of metacognitive reflection on the strategy of work; and 4. relationship between participation and metacognitive reflections. We summarise the “lessons learned” from the different implementation cycles of our design-based research study below.

The first iteration of the CETA principle embodiment (Blended-Blended) was designed to elicit knowledge assessment and strategy assessment as mediating processes. This iteration focused on blended interactions at the end of each module. It used the following sequence of activities: individual reflection in KF, F2F discussion in small groups of students, and F2F discussion in a plenary session with the teacher. Analyses reveal that this design did not seem to support students’ participation in the online part of the activity over time, as demonstrated by the decrease in the number of notes both read and written from Module 1 to Module 3. Moreover, the absence of correlation between the number of notes written and read revealed a lack of interdependence among community members. These results suggest that the students took an individualistic approach to their work, where writing notes and reading others’ notes were disjointed activities. This individualistic approach persisted throughout the online course.

In addition, the level of metacognitive reflections remained the same at Time 1 and Time 2 in the first iteration. In Module 2, the level of metacognitive reflections at Time 1 correlates negatively with the number of notes read, while at Time 2 it correlates positively with the number of notes written. The more students went into depth in analysing their strategy at Time 1, the less they read their colleagues’ notes, and the more they wrote in the next module. In other words, the level of productive participation is connected to the level of analysis of the strategies the students used. The fact that the number of notes written in Module 2 correlates with the level of reflective analysis at Time 2, which took place after productive participation, but not at Time 1, which took place before, seems to indicate that students relied on their productive participation to carry out their reflections on the strategies adopted. This is confirmed by the absence of correlation between the number of notes written in Module 3 and the reflective analysis level at Time 2. Furthermore, the findings suggest that students preferred to focus on the change of their strategy of work individually. Overall, these results seem to indicate the embodiment of an individualistic, rather than collective, cognitive responsibility toward the knowledge building activity in the online course, where each student is mainly focused on his/her ideas and strategies.

The second iteration of the CETA principle embodiment (Blended-Online) involved a community portfolio in KF at the end of each module, where students shared knowledge assessment and metacognitive reflections over three days. Unlike the first iteration, which shows a constant decrease in the number of notes both read and written, this embodiment seems to support the students’ online participation. In fact, in contrast to the previous implementation, results show that both the number of notes read and written increase from Module 1 to Module 2, and this increase is significant for the notes written. The results also show that both the number of notes read and written significantly decreased in Module 3. Additionally, we find an increased correlation between writing and reading (indicating interdependence among community members) that became statistically significant in the last module. The correlation in Module 3 is also higher than in Module 1 at a statistically significant level. It appears that students changed their strategy from Module 1 to Module 3, moving from an individualistic approach to a more collaborative approach.

Additionally, in the second iteration, we find a correlation between notes written in Module 1 and the level of metacognitive reflection at Time 1. This finding could indicate, as in the previous iteration, that students relied on their productive participation to carry out their reflections on the strategies adopted. Moreover, we also find a correlation between the number of notes written in Module 2 and the level of metacognitive reflection at Time 1. However, this effect was not replicated with the metacognitive reflection at Time 2. A possible explanation of this lack of correlation is that students relied on their reflections on strategies to increase their productive participation, first expanding the number (Module 2) and then improving the quality (Module 3) of their contributions. To verify this hypothesis, however, an analysis of the content of the written notes, not contemplated in the present research, would have been necessary. The change from an individualistic to a more collaborative approach, made evident in Module 3, could be favoured by the students’ written activity and metacognitive reflection. Thus, a progressive development of collective cognitive responsibility toward knowledge building can be considered in this course.

In the third design iteration (F2F-Blended), the embodiment of the CETA principle in the design entailed F2F discussions at the end of each module intended to promote a more sustainable knowledge assessment, and included a preliminary metacognitive reflection in KF on the students’ usual study strategies at the university. In addition, at the end of Module 2, a community portfolio in KF was provided to promote a more sustainable strategy assessment. The students were given time during their F2F class meeting to write a KF note sharing their metacognitive reflections about their strategy of work. The results in the last iteration of the study show that the number of both notes read and written increase from Module 1 to Module 2 and decrease from Module 2 to Module 3. However, the differences between the modules in the third iteration are significant in the number of notes read, but not significant in the number of notes written. Furthermore, we do not find statistically significant correlations between the number of notes read and written, or between metacognitive reflection and notes read or written.

Overall, the results indicate that the second design iteration of the CETA principle seems to be most effective in promoting students’ collective cognitive responsibility. This iteration features a shared community portfolio that was used over a period of three days at the end of each module to promote deeper knowledge and strategy assessments. The longer period for creating and sharing knowledge and strategy assessments in KF, compared to the other iterations (15 min), can enhance, through a self-reflective activity, the change from individualistic cognitive responsibility toward collective cognitive responsibility.

Interestingly, we notice that two different phenomena occur in two different parts of this course design. First, we see an increase of productive participation from Module 1 to Module 2. This increase happens after the metacognitive strategy assessment at Time 1. We also see a correlation between notes written in Module 1 and metacognitive reflection at Time 1, and a correlation between the level of metacognitive reflections at Time 1 and notes written in Module 2.

Second, we find that interdependence among the participants increases over the three modules, becoming significant in Module 3. These results can be explained by the positive reciprocal influence between participation and self-regulative skills. Productive participation in knowledge-building activity, and the systematic shared practice of knowledge and strategy assessments in the community portfolio at the end of each module, can enhance the development of self-regulated skills (e.g., De Marco & Albanese, 2009). Self-regulated skills and participation can promote the progressive increase of interdependence among the participants. This interdependence highlights that in knowledge building, the more the students created their own artifacts (wrote notes), the more they engaged in interacting with other students’ artifacts (read others’ notes), and vice versa.

Our results are consistent with other studies of self-assessment, where students make judgments about their own learning achievement. Self-assessment is beneficial as it increases the role students take as active participants and allows them to become more aware of the quality of their own work and exercise responsibility for their own learning (Lan et al., 2012). In support of this claim, other studies state that the presence of a space for reflection on the metacognitive strategies in an online course encourages the development of online discussions and, consequently, of students’ participation (e.g., Cacciamani et al., 2012; Cesareni et al., 2008).

7 Conclusions

Knowledge Building is a SMART pedagogy that promotes collective cognitive responsibility in blended course learning environments. The results of our study suggest that the Blended-Online embodiment of the CETA principle, oriented to promote deep knowledge and strategy assessment, enhances interdependence between community members and the relationship between participation and metacognitive reflections. In this instructional design, the strategy assessment at the individual level operates in a shared, community learning environment over a period of three days. Our interpretation is that these features can facilitate, through a deeper self-reflective activity, the students’ development toward the desired outcome of taking on more collective cognitive responsibility from their initial approach of individualistic cognitive responsibility.

The innovative contributions of the present work are at two levels. The first contribution is an operationalization of collective cognitive responsibility for Knowledge Building in terms of participation, interdependence among community members, level of metacognitive reflection on strategies of work and relationship between participation and level of metacognitive reflection. The second contribution is the identification of the best implementation of the CETA principle to promote students’ collective cognitive responsibility.

In terms of implications, the results suggest that learning designers and teachers design BL courses in higher education with online portfolios, in which students conduct deep knowledge and strategy assessments at the end of each module. Thus, the students can participate in a joint effort to improve the community knowledge and identify the strengths and weaknesses of their strategy of work and introduce changes to overcome the limits identified.

Another relevant implication concerns the use of learning analytics to monitor students’ assumption of collective cognitive responsibility for knowledge building during the course through the dimensions identified in this paper (participation, interdependence between participants, and self-regulation skills). It could be possible to develop a feedback system for students, for example in the form of a student-facing dashboard, on the dimensions considered.

However, the present study also has some limits. The main methodological approach used was a quantitative analysis of student notes concerning the knowledge created. We recognize that this kind of analysis needs to be enriched by a qualitative analysis of students’ notes, in order to analyse how different models of the CETA principle embodiments support a different quality of knowledge created, and also to verify whether the decreasing number of student notes written could be due to the more complex content of the notes. To analyse the qualitative content of notes, it could be useful to employ coding schemes that examine epistemic complexity and indicate students’ efforts to produce not only descriptions of the material world, but also theoretical explanations and articulations of hidden mechanisms, which are central in science and also in understanding scientific concepts (e.g., Hakkarainen, 2002).

Moreover, it is important to detect the changes that students decide to introduce in their strategy of work to face the challenges of a knowledge-building activity in an online environment. In this case, content analysis could be used to relate the changes described in the students’ metacognitive reflections to their participation. The analysis of the contents of the notes written by the students would allow us to investigate the qualitative changes in their productive participation. More specifically, it could also be useful to investigate the interdependence between the students qualitatively, identifying how the content of one community member’s note, read by another member of the community, is taken up by the latter in their notes.

In future studies investigating embodiments of the CETA principle, it could be useful to implement a portfolio before the beginning of the course, as in the third iteration (F2F-Blended model), and a portfolio at the end of each module, as in the second iteration (Blended-Online model). Using the portfolio at the beginning of a course could enhance students’ awareness of their individualistic approach to the KB activity and represent a first step towards developing collective cognitive responsibility. Likewise, using a portfolio at the end of each module could support students in changing their strategy and monitoring this evolution. It is possible that using a tool like the portfolio can make the collaborative culture of work in the KBC explicit to the students and help to check the sense of community among participants (Balboni et al., 2018; Cacciamani et al., 2019; Perrucci et al., 2012). It could be important to verify whether the community dimension favouring collaboration has really become part of the members’ experience. When students are involved in exploring a new, more collaborative strategy, or when the online discussion forum becomes messy from a higher level of participation, they could experience a sense of losing their independence. In this regard, it is possible to sustain participation by introducing roles into online activity. Roles, in fact, can work as a dynamic system in the process of knowledge building by creating interdependence among participants and organizing a division of work in the community (Cesareni et al., 2016; Strijbos & Weinberger, 2010). These are only some directions of possible inquiry. More research investigating these issues, as they relate to SMART pedagogies, is necessary to understand how to implement new solutions for the problems identified.

Notes

1. The sum of the percentages is higher than 100% because some students indicated more than one problem.

2. Time 1 corresponds to the metacognitive reflection after Module 1 for the first and second iterations and before Module 1 for the third iteration; Time 2 corresponds to the metacognitive reflection after Module 2 for all the iterations.

References

Alamri, H. A., Watson, S., & Watson, W. (2020). Learning technology models that support personalization within blended learning environments in higher education. TechTrends, 65(1), 62–78. https://doi.org/10.1007/s11528-020-00530-3

Alexander, B., Ashford-Rowe, K., Barajas-Murphy, N., Dobbin, G., Knott, J., McCormack, M., Pomerantz, J., Seilhamer, R., & Weber, N. (2019). NMC horizon report: 2019 higher education edition. CO: EDUCAUSE.

Alstete, J., & Beutell, N. (2004). Performance indicators in online distance learning courses: A study of management education. Quality Assurance in Education, 12(1), 6–14. https://doi.org/10.1108/09684880410517397

Anthony, B., Jr., Kamaludin, A., Romli, A., Raffei, A. F. M., Phon, D. N. A. E., Abdullah, A., Ming, G. L., Shukor, N. A., Nordin, M. S., & Baba, S. (2019). Exploring the role of blended learning for teaching and learning effectiveness in institutions of higher learning: An empirical investigation. Education and Information Technologies, 24(6), 3433–3466. https://doi.org/10.1007/s10639-019-09941-z

Anthony, B., Jr., Kamaludin, A., Romli, A., Raffei, A. F., Phon, D. N. A. E., Abdullah, A., Shukor, N. A., Nordin, M. S., & Baba, S. (2020a). The International Journal of Information and Learning Technology, 37(4), 179–196. https://doi.org/10.1108/ijilt-02-2020-0013

Anthony, B., Jr., Kamaludin, A., Romli, A., Raffei, A. F. M., Phon, D. N. A. E., Abdullah, A., & Ming, G. L. (2020b). Blended learning adoption and implementation in higher education: A theoretical and systematic review. Technology, Knowledge and Learning. https://doi.org/10.1007/s10758-020-09477-z

Ashton, J., & Newman, L. (2006). An unfinished symphony: 21st century teacher education using knowledge-creating heutagogies. British Journal of Educational Technology, 37(6), 825–884.

Balboni, G., Perrucci, V., Cacciamani, S., & Zumbo, B. D. (2018). Development of a scale of sense of community in university online courses. Distance Education, 39(3), 317–333. https://doi.org/10.1080/01587919.2018.1476843

Beaudoin, M. (2003). Learning or lurking? Tracking the invisible online student. The Internet and Higher Education, 5, 147–155. https://doi.org/10.1016/S1096-7516(02)00086-6

Bereiter, C. (2002). Education and mind in the knowledge age. Erlbaum.

Bereiter, C., & Scardamalia, M. (1993). Surpassing ourselves: An inquiry into the nature and implications of expertise. Open Court.

Burtis, J. (1998). Analytic toolkit for knowledge forum. Centre for Applied Cognitive Science: The Ontario Institute for Studies in Education/University of Toronto.

Cacciamani, S. (2017). Experiential learning and knowledge building in higher education: An application of the progressive design method. Journal of E-Learning and Knowledge Society, 13(1), 27–38.

Cacciamani, S., Cesareni, D., Perrucci, V., Balboni, G., & Khanlari, A. (2019). Effects of social tutor on sense of community in online university courses. British Journal of Educational Technology, 50(4), 1171–1784. https://doi.org/10.1111/bjet.12656

Cacciamani, S., Cesareni, D., Martini, F., Ferrini, T., & Fujita, N. (2012). Influence of participation, facilitator styles, and metacognitive reflection on knowledge building in online university courses. Computers and Education, 58(3), 874–884. https://doi.org/10.1016/j.compedu.2011.10.019

Cesareni, D., Cacciamani, S., & Fujita, N. (2016). Role taking and knowledge building in a blended university course. International Journal of Computer-Supported Collaborative Learning, 11(1), 9–39. https://doi.org/10.1007/s11412-015-9224-0

Cesareni, D., Albanese, O., Cacciamani, S., Castelli, S., De Marco, B., Fiorilli, C., Luciani, M., Mancini, I., Martini, F., & Vanin, L. (2008). Tutorship style and knowledge building in an online community: cognitive and metacognitive aspects. In B. M. Varisco (Ed.), Psychological pedagogical and sociological models for learning and assessment in virtual communities (pp. 13–56). Polimetrica.

Chan, C. K. K., & Chan, Y. Y. (2011). Students’ views of collaboration and online participation in knowledge forum. Computers and Education, 57(1), 1445–1457. https://doi.org/10.1016/j.compedu.2010.09.003

Chen, B., & Hong, H.-Y. (2016). Schools as knowledge-building organizations: Thirty years of design research. Educational Psychologist, 51(2), 266–288. https://doi.org/10.1080/00461520.2016.1175306

Chen, B., Ma, L., Matsuzawa, Y., & Scardamalia, M. (2015). The development of productive vocabulary in Knowledge Building: A longitudinal study. In O. Lindwall, P. Häkkinen, T. Koschman, P. Tchounikine, & S. Ludvigsen. (Eds.), Exploring the material conditions of learning: The computer supported collaborative learning (CSCL) conference 2015, Volume 1 (pp. 443–450). Gothenburg, Sweden: International Society of the Learning Sciences.

Chiu, M. M., & Fujita, N. (2014). Statistical discourse analysis: A method for modeling online discussion processes. Journal of Learning Analytics, 1(3), 61–83. https://doi.org/10.18608/jla.2014.13.5

Collins, A., Joseph, D., & Bielaczyc, K. (2004). Design research: Theoretical and methodological issues. The Journal of the Learning Sciences, 13(1), 15–42. https://doi.org/10.1207/s15327809jls1301_2

Conrad, R.-M., & Donaldson, J. A. (2011). Engaging the online learner: Activities and resources for creative instruction. Jossey-Bass.

De Marco, B., & Albanese, O. (2009). Le competenze autoregolative dell’attività di studio in comunità virtuali. Qwerty-Open and Interdisciplinary Journal of Technology, Culture and Education, 4(2), 123–139.

Di Donato, N. C. (2013). Effective self-and co-regulation in collaborative learning groups: An analysis of how students regulate problem solving of authentic interdisciplinary tasks. Instructional Science, 41(1), 25–47. https://doi.org/10.1007/s11251-012-9206-9

Dunn, T. J., & Kennedy, M. (2019). Technology enhanced learning in higher education: Motivations, engagement, and academic achievement. Computers and Education, 137, 104–113. https://doi.org/10.1016/j.compedu.2019.04.004

Fabbri, L., Giampaolo, M., & Capaccioli, M. (2021). Blended learning and transformative processes: A model for didactic development and innovation. In D. Burgos, P. Ducange, P. Limone, L. Perla, P. Picero, & C. M. Stracke (Eds.), Bridges and mediation in higher distance education (pp. 214–225). Springer International Publishing. https://doi.org/10.1007/978-3-030-67435-9

Fischer, F., Kollar, I., Stegmann, K., & Wecker, C. (2013). Toward a script theory of guidance in computer-supported collaborative learning. Educational Psychologist, 48(1), 56–66.

Fujita, N. (2020). Transforming online teaching and learning: towards learning design informed by the information science and learning sciences. Information and Learning Sciences. https://doi.org/10.1108/ILS-04-2020-0124

Garrison, D. R., & Kanuka, H. (2004). Blended learning: Uncovering the transformative potential in higher education. The Internet and Higher Education, 7, 95–105. https://doi.org/10.1016/j.iheduc.2004.02.001

Garrison, D. R., Anderson, T., & Archer, W. (2000). Critical inquiry in a text-based environment: Computer conferencing in higher education. The Internet and Higher Education, 2(2–3), 87–105. https://doi.org/10.1016/S1096-7516(00)00016-6

Gutiérrez-Braojos, C., Montejo-Gámez, J., Ma, L., Chen, B., Muñoz de Escalona-Fernández, M., Scardamalia, M., & Bereiter, C. (2018). Exploring collective cognitive responsibility through the emergence and flow of forms of engagement in a knowledge building community. In L. Daniela (Ed.), Didactics of smart pedagogy (pp. 213–232). Cham: Springer. https://doi.org/10.1007/978-3-030-01551-0_11

Harasim, L. (2012). Learning theory and online technologies. Routledge.

Hew, K. F., Cheung, W. S., & Ng, C. S. L. (2010). Student contribution in asynchronous online discussion: A review of the research and empirical exploration. Instructional Science, 38, 571–606. https://doi.org/10.1007/s11251-008-9087-0

Hewitt, J. (2005). Toward an understanding of how threads die in asynchronous computer conferences. The Journal of the Learning Sciences, 14(4), 567–589. https://doi.org/10.1207/s15327809jls1404_4

Hrastinski, S. (2009). A theory of online learning as online participation. Computers and Education, 52(1), 78–82. https://doi.org/10.1016/j.compedu.2008.06.009

Lan, Y. F., Lin, P. C., & Hung, C. L. (2012). An approach to encouraging and evaluating learner’s knowledge contribution in web-based collaborative learning. Journal of Educational Computing Research, 47(2), 107–135. https://doi.org/10.2190/EC.47.2.a

Landis, J. R., & Koch, G. G. (1977). The measurement of observer agreement for categorical data. Biometrics, 33, 159–174.

Lee, C. Y. (2020). How to improve the effectiveness of blended learning of pharmacology and pharmacotherapy? A case study in pharmacy program. Technology, Knowledge and Learning, 25(4), 977–988. https://doi.org/10.1007/s10758-020-09447-5

Lee, E. Y. C., Chan, C. K. K., & Van Aalst, J. (2006). Students assessing their own collaborative knowledge building. International Journal of Computer-Supported Collaborative Learning, 1(1), 57–87. https://doi.org/10.1007/s11412-006-6844-4

Lee, M. J. W., McLoughlin, C., & Chan, A. (2008). Talk the talk: Learner-generated podcasts as catalysts for knowledge creation. British Journal of Educational Technology, 39(3), 501–521. https://doi.org/10.1111/j.1467-8535.2007.00746.x

Lee, I. A., & Preacher, K. J. (2013, September). Calculation for the test of the difference between two dependent correlations with no variable in common [Computer software]. Retrieved from http://quantpsy.org.

Mayer, R. E., Fiorella, L., & Stull, A. (2020). Five ways to increase the effectiveness of instructional video. Educational Technology Research and Development, 68(2020), 837–852. https://doi.org/10.1007/s11423-020-09749-6

McLoughlin, C., & Lee, M. J. (2010). Personalised and self-regulated learning in the Web 2.0 era: International exemplars of innovative pedagogy using social software. Australasian Journal of Educational Technology, 26(1), 28–43.

Narciss, S., Proske, A., & Koerndle, H. (2007). Promoting self-regulated learning in web-based learning environments. Computers in Human Behavior, 23(3), 1126–1144. https://doi.org/10.1016/j.chb.2006.10.006

Nguyen, N., Muilu, T., Dirin, A., & Alamäki, A. (2018). An interactive and augmented learning concept for orientation week in education. International Journal of Educational Technology in Higher Education. https://doi.org/10.1186/s41239-018-0118-x

Paavola, S., & Hakkarainen, K. (2005). The knowledge creation metaphor: An emergent epistemological approach to learning. Science and Education, 14, 535–557. https://doi.org/10.1007/s11191-004-5157-0

Perrucci, V., Coscarelli, A., Balboni, G., & Cacciamani, S. (2012). Preliminary validation of the scale of sense of community in online course. World Journal on Educational Technology, 4(2), 126–136.

Preece, J., Nonnecke, B., & Andrews, D. (2004). The top five reasons for lurking: Improving community experience for everyone. Computers in Human Behavior, 20, 201–223. https://doi.org/10.1016/j.chb.2003.10.015

Sandoval, W. (2014). Conjecture mapping: An approach to systematic educational design research. Journal of the Learning Sciences, 23(1), 18–36. https://doi.org/10.1080/10508406.2013.778204

Sansone, N., Ligorio, M. B., & Buglass, S. L. (2016). Peer e-tutoring: Effects on students’ participation and interaction style in online courses. Innovations in Education and Teaching International. https://doi.org/10.1080/14703297.2016.1190296

Scardamalia, M. (2002). Collective cognitive responsibility for the advancement of knowledge. In B. Smith (Ed.), Liberal education in a knowledge society (pp. 67–98). Open Court.

Scardamalia, M. (2004). CSILE/Knowledge Forum®. In Education and technology: An encyclopedia (pp. 183-192). Santa Barbara: ABC-CLIO.

Scardamalia, M., & Bereiter, C. (2014). Knowledge building and knowledge creation. In K. Sawyer (Ed.), Cambridge handbook of the learning sciences (pp. 397–417). Cambridge University Press.

Scardamalia, M., & Bereiter, C. (2010). A brief history of knowledge building. Canadian Journal of Learning and Technology. https://doi.org/10.21432/T2859M

Scardamalia, M., Bransford, J., Kozma, B., & Quellmalz, E. (2012). New assessments and environments for knowledge building. In P. Griffin, B. McGaw, & E. Care (Eds.), Assessment and teaching of 21st century skills (pp. 231–300). Springer. https://doi.org/10.1007/978-94-007-2324-5_5

Schumacher, C., & Ifenthaler, D. (2018). Features students really expect from learning analytics. Computers in Human Behavior, 78, 397–407. https://doi.org/10.1016/j.chb.2017.06.030

Sfard, A. (1998). On two metaphors for learning and the dangers of choosing just one. Educational Researcher, 27, 4–13. https://doi.org/10.3102/0013189X027002004

Siemens, G. (2011). In 1st International Conference on Learning Analytics and Knowledge, Banff, Alberta, February 27-March 1, 2011. Retrieved from https://tekri.athabascau.ca/analytics/.

Steiger, J. H. (1980). Tests for comparing elements of a correlation matrix. Psychological Bulletin, 87, 245–251. https://doi.org/10.1037/0033-2909.87.2.245

Strijbos, J. W., & Weinberger, A. (2010). Emerging and scripted roles in computer-supported collaborative learning. Computers in Human Behavior, 26, 491–494. https://doi.org/10.1016/j.chb.2009.08.006

Teplovs, C. (2008). The knowledge space visualizer: a tool for visualizing online discourse. In Paper presented at the common framework for CSCL interaction analysis workshop, international conference of the learning sciences. Utrecht: NL.

Teplovs, C. (2010). Visualization of knowledge spaces to enable concurrent, embedded and transformative input to knowledge building processes. Unpublished doctoral dissertation, University of Toronto, Toronto, ON. Retrieved from http://hdl.handle.net/1807/24893.

Teplovs, C., & Scardamalia, M. (2007). Visualizations for knowledge building assessment. Paper presented at the Institute for Knowledge Innovation and Technology Summer Institute 2007. Retrieved from https://ikit.org/SummerInstitute2007/Highlights/SI2007_papers/48_Teplovs.pdf.

Teplovs, C., Donoahue, Z., Scardamalia, M., & Philip, D. (2007). Tools for concurrent, embedded, and transformative assessment of knowledge building processes and progress. In C. A. Chinn, G. Erkens, & S. Puntambekar (Eds.), Proceedings of the 8th international conference on computer-supported collaborative learning (CSCL’ 07) (pp. 721–723). International Society of the Learning Sciences.

The Design-Based Research Collective. (2003). Design-based research: An emerging paradigm for educational inquiry. Educational Researcher, 32(1), 5–8. https://doi.org/10.3102/0013189X032001005

Van Aalst, J., & Chan, C. K. K. (2007). Student-directed assessment of knowledge building using electronic portfolios. The Journal of the Learning Sciences, 16(2), 175–220. https://doi.org/10.1080/10508400701193697

Van Laer, S., & Elen, J. (2018). Adults’ self-regulatory behaviour profiles in blended learning environments and their implications for design. Technology, Knowledge and Learning, 25(3), 509–539. https://doi.org/10.1007/s10758-017-9351-y

Vatrapu, R., Teplovs, C., Fujita, N., & Bull, S. (2011). Toward visual analytics for teachers’ dynamic diagnostic pedagogical decision-making. In LAK’11 proceedings of the 1st international conference on learning analytics and knowledge (pp.93–98). Banff, AB: ACM. https://doi.org/10.1145/2090116.2090129

Yang, S., Carter, R. A., Zhang, L., & Hunt, T. (2021). Emanant themes of blended learning in K-12 educational environments: Lessons from the every student succeeds Act. Computers and Education. https://doi.org/10.1016/j.compedu.2020.104116

Zhang, J., Scardamalia, M., Reeve, R., & Messina, R. (2009). Designs for collective cognitive responsibility in knowledge-building communities. The Journal of the Learning Sciences, 18(1), 7–44. https://doi.org/10.1080/10508400802581676

Zimmerman, B. J. (1990). Self-regulated learning and academic achievement: An overview. Educational Psychologist, 25(1), 3–17. https://doi.org/10.1207/s15326985ep2501_2

Zimmerman, B. J. (1998). Developing self-fulfilling cycles of academic regulation: An analysis of exemplary instructional models. In D. H. Schunk & B. J. Zimmerman (Eds.), Self-regulated learning: From teaching to self-reflective practice (pp. 1–19). Guilford Press.

Zimmerman, B. J. (2000). Attaining self-regulation: A social cognitive perspective. In M. Boekaerts, P. R. Pintrich, & M. Zeidner (Eds.), Handbook of self-regulation (pp. 13–39). Academic Press.
