Long Le

Speculative RAG: Enhancing Retrieval Augmented Generation through Drafting

Jul 11, 2024

Abstract: Retrieval augmented generation (RAG) combines the generative abilities of large language models (LLMs) with external knowledge sources to provide more accurate and up-to-date responses. Recent RAG advancements focus on improving retrieval outcomes through iterative LLM refinement or self-critique capabilities acquired through additional instruction tuning of LLMs. In this work, we introduce Speculative RAG - a framework that leverages a larger generalist LM to efficiently verify multiple RAG drafts produced in parallel by a smaller, distilled specialist LM. Each draft is generated from a distinct subset of retrieved documents, offering diverse perspectives on the evidence while reducing input token counts per draft. This approach enhances comprehension of each subset and mitigates potential position bias over long context. Our method accelerates RAG by delegating drafting to the smaller specialist LM, with the larger generalist LM performing a single verification pass over the drafts. Extensive experiments demonstrate that Speculative RAG achieves state-of-the-art performance with reduced latency on TriviaQA, MuSiQue, PubHealth, and ARC-Challenge benchmarks. It notably enhances accuracy by up to 12.97% while reducing latency by 51% compared to conventional RAG systems on PubHealth.
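The draft-then-verify flow described in this abstract can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: `partition_documents`, `speculative_rag`, `toy_drafter`, and `toy_verifier` are hypothetical names, the round-robin split is an assumed stand-in for however the paper forms its document subsets, and the two toy callables stand in for the specialist and generalist LMs. The verifier is called once per draft here for simplicity, whereas the abstract describes a single verification pass over the drafts.

```python
from typing import Callable, List, Sequence, Tuple

Drafter = Callable[[str, List[str]], str]   # stands in for the smaller specialist LM
Verifier = Callable[[str, str], float]      # stands in for the larger generalist LM


def partition_documents(docs: Sequence[str], num_subsets: int) -> List[List[str]]:
    """Round-robin split (an assumption) so each draft sees a distinct, smaller subset."""
    subsets: List[List[str]] = [[] for _ in range(num_subsets)]
    for i, doc in enumerate(docs):
        subsets[i % num_subsets].append(doc)
    return [s for s in subsets if s]  # drop empty subsets


def speculative_rag(question: str, docs: Sequence[str], drafter: Drafter,
                    verifier: Verifier, num_subsets: int = 2) -> Tuple[str, float]:
    """Draft one answer per document subset, then keep the highest-scoring draft."""
    subsets = partition_documents(docs, num_subsets)
    drafts = [drafter(question, subset) for subset in subsets]  # independent, so parallelizable
    scores = [verifier(question, draft) for draft in drafts]
    best = max(range(len(drafts)), key=scores.__getitem__)
    return drafts[best], scores[best]


# Toy stand-ins so the sketch runs without any model.
def toy_drafter(question: str, subset: List[str]) -> str:
    return subset[0]  # "answer" with the first document in the subset


def toy_verifier(question: str, draft: str) -> float:
    # Score a draft by word overlap with the question.
    return float(len(set(question.lower().split()) & set(draft.lower().split())))


docs = [
    "Paris is the capital of France",
    "Berlin is in Germany",
    "France borders Spain",
    "Cats are mammals",
]
answer, score = speculative_rag("capital of France", docs, toy_drafter, toy_verifier)
print(answer, score)
```

Because each draft depends only on its own subset, the drafting loop is the natural place to parallelize, which is where the latency reduction claimed in the abstract would come from.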

Distributed Continual Learning

May 23, 2024

Abstract: This work studies the intersection of continual and federated learning, in which independent agents face unique tasks in their environments and incrementally develop and share knowledge. We introduce a mathematical framework capturing the essential aspects of distributed continual learning, including agent model and statistical heterogeneity, continual distribution shift, network topology, and communication constraints. Operating on the thesis that distributed continual learning enhances individual agent performance over single-agent learning, we identify three modes of information exchange: data instances, full model parameters, and modular (partial) model parameters. We develop algorithms for each sharing mode and conduct extensive empirical investigations across various datasets, topology structures, and communication limits. Our findings reveal three key insights: sharing parameters is more efficient than sharing data as tasks become more complex; modular parameter sharing yields the best performance while minimizing communication costs; and combining sharing modes can cumulatively improve performance.

Supervised Parameter Estimation of Neuron Populations from Multiple Firing Events

Oct 02, 2022

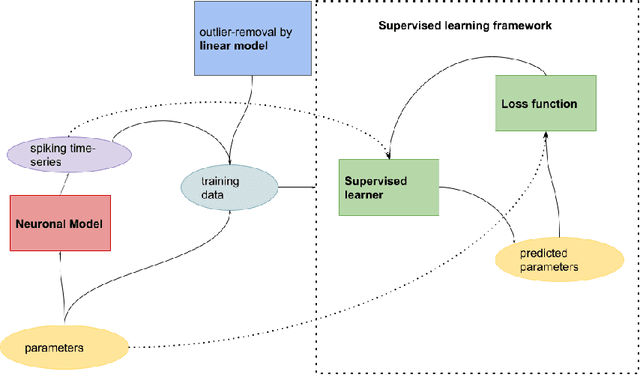

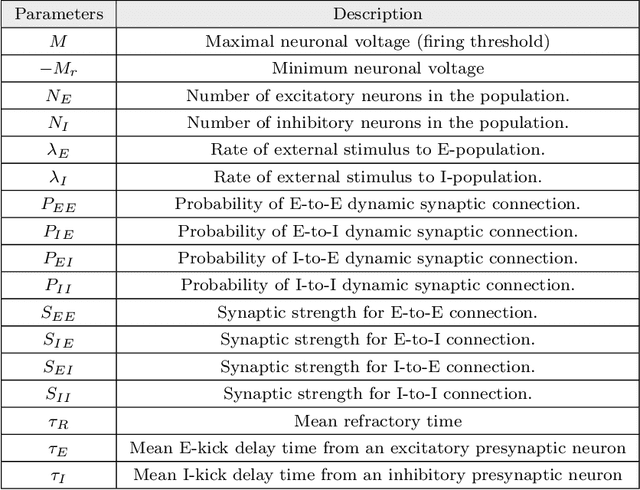

Abstract: The firing dynamics of biological neurons in mathematical models is often determined by the model's parameters, representing the neurons' underlying properties. The parameter estimation problem seeks to recover those parameters of a single neuron or a neuron population from their responses to external stimuli and interactions between themselves. Most common methods for tackling this problem in the literature use some mechanistic models in conjunction with either a simulation-based or solution-based optimization scheme. In this paper, we study an automatic approach of learning the parameters of neuron populations from a training set consisting of pairs of spiking series and parameter labels via supervised learning. Unlike previous work, this automatic learning does not require additional simulations at inference time nor expert knowledge in deriving an analytical solution or in constructing some approximate models. We simulate many neuronal populations with different parameter settings using a stochastic neuron model. Using that data, we train a variety of supervised machine learning models, including convolutional and deep neural networks, random forest, and support vector regression. We then compare their performance against classical approaches including a genetic search, Bayesian sequential estimation, and a random walk approximate model. The supervised models almost always outperform the classical methods in parameter estimation and spike reconstruction errors, and computation expense. Convolutional neural network, in particular, is the best among all models across all metrics. The supervised models can also generalize to out-of-distribution data to a certain extent.
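The simulate-then-regress idea in this abstract can be illustrated with a deliberately small sketch. Everything here is an assumption made for illustration: the "neuron" is a Bernoulli spiker with a single firing-probability parameter rather than the paper's stochastic neuron model, the learner is a closed-form one-variable linear regression rather than a CNN, random forest, or SVR, and the names `simulate_spikes`, `fit_line`, and `estimate_rate` are hypothetical.

```python
import random
from typing import List, Sequence, Tuple


def simulate_spikes(rate: float, steps: int, rng: random.Random) -> List[int]:
    """Toy stochastic neuron: fires independently with probability `rate` at each step."""
    return [1 if rng.random() < rate else 0 for _ in range(steps)]


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


rng = random.Random(0)  # fixed seed so the run is repeatable

# Training set: (spike series, parameter label) pairs from many simulated neurons.
train_rates = [rng.uniform(0.05, 0.5) for _ in range(200)]
train_features = [sum(simulate_spikes(r, 500, rng)) / 500 for r in train_rates]
slope, intercept = fit_line(train_features, train_rates)


def estimate_rate(spikes: Sequence[int]) -> float:
    """Inference needs only the fitted model; no further simulation is run."""
    return slope * (sum(spikes) / len(spikes)) + intercept


true_rate = 0.3
estimate = estimate_rate(simulate_spikes(true_rate, 2000, rng))
print(f"true rate {true_rate}, estimated {estimate:.3f}")
```

The point of the sketch is the division of labor: simulation cost is paid once when building the training set, and estimating parameters for a new recording is a single forward evaluation of the fitted model.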
